This article uses TensorFlow to build a basic fully connected network and trains and tests it on the MNIST dataset.

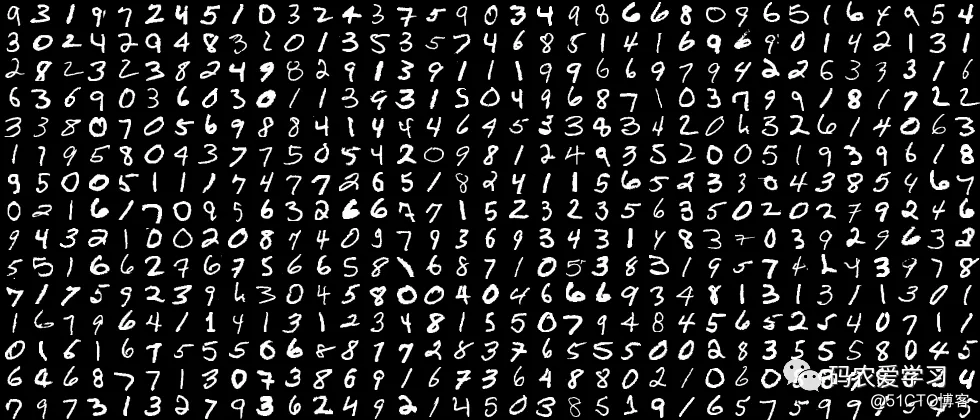

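The MNIST dataset

The MNIST dataset contains 70,000 handwritten digit images in white on a black background: 55,000 for training, 5,000 for validation, and 10,000 for testing.

Each image is 28×28 pixels. A pure black pixel has the value 0 and a pure white pixel has the value 1.

Each label is a one-dimensional array of length 10. The index of each element corresponds to a digit, and the element's value is the probability that the image shows that digit.

Before an MNIST image is fed to the neural network, it must be flattened into a one-dimensional array of length 784, which serves as the network's input features.

For example:

A handwritten digit image becomes a one-dimensional array of length 784, [0. 0. 0. 0. 0.231 0.235 0.459 … 0.219 0. 0. 0. 0.], which is fed to the network.

The label for this image is [0. 0. 0. 0. 0. 0. 1. 0. 0. 0.]. The element at index 6 is 1, meaning the probability of digit 6 is 100%, so the image is recognized as 6.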
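The flattening and one-hot encoding described above can be sketched in plain Python. This is a minimal illustration rather than how input_data does it internally; the helper names flatten and one_hot and the sample image are assumptions made for the example.

```python
def flatten(image):
    """Turn a 2-D grid of pixel rows into a single 1-D list."""
    return [pixel for row in image for pixel in row]

def one_hot(digit, num_classes=10):
    """Label vector with 1.0 at index `digit` and 0.0 elsewhere."""
    return [1.0 if i == digit else 0.0 for i in range(num_classes)]

# Hypothetical all-black 28x28 image with one white pixel at row 5, column 7.
image = [[0.0] * 28 for _ in range(28)]
image[5][7] = 1.0

features = flatten(image)
label = one_hot(6)

print(len(features))          # 28 * 28 = 784 input features
print(features.index(1.0))    # row 5, column 7 lands at 5 * 28 + 7 = 147
print(label.index(1.0))       # the digit is the index of the 1.0 entry: 6
```

Reading the digit back from a label is just a search for the index of the largest entry, which is what tf.argmax() does later in the accuracy computation.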

The read_data_sets() function in the input_data module (shipped with TensorFlow 1.x) loads the MNIST dataset:

from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets("./data/", one_hot=True)

Output:

Extracting ./data/train-images-idx3-ubyte.gz

Extracting ./data/train-labels-idx1-ubyte.gz

Extracting ./data/t10k-images-idx3-ubyte.gz

Extracting ./data/t10k-labels-idx1-ubyte.gz

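read_data_sets() takes two arguments here: the first is the path where the dataset is stored, and the second controls how the labels are encoded.

When the second argument is True, the labels are stored as one-hot vectors.

When read_data_sets() runs, it checks whether the dataset already exists at the given path and downloads it automatically if not. It then splits the MNIST dataset into a training set (train), a validation set (validation), and a test set (test).

Structure of the MNIST dataset

In TensorFlow, the following expressions return the number of samples in each subset:

① Number of samples in the training set

print("train data size:",mnist.train.num_examples)

Output:

train data size: 55000

② Number of samples in the validation set

print("validation data size:",mnist.validation.num_examples)

Output:

validation data size: 5000

③ Number of samples in the test set

print("test data size:",mnist.test.num_examples)

Output:

test data size: 10000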

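Dataset labels

For example:

To view the label of image 0 in the MNIST training set, use:

mnist.train.labels[0]

Output:

array([0., 0., 0., 0., 0., 0., 0., 1., 0., 0.])

Pixel values of MNIST images

For example:

To view the pixel values of image 0 in the MNIST training set, use:

mnist.train.images[0]

Output: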

array([0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

... (middle portion omitted)

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0.34901962, 0.9843138 , 0.9450981 ,

0.3372549 , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0.01960784,

0.8078432 , 0.96470594, 0.6156863 , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0.01568628, 0.45882356, 0.27058825,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. ], dtype=float32)

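Feeding data into the neural network

For example:

BATCH_SIZE = 200

xs,ys = mnist.train.next_batch(BATCH_SIZE)

print("xs shape:",xs.shape)

print("ys shape:",ys.shape)

Output:

xs shape: (200, 784)

ys shape: (200, 10)

Here, mnist.train.next_batch() takes one argument, BATCH_SIZE. It draws BATCH_SIZE random samples from the training set and assigns their pixel values and labels to xs and ys respectively.

In this example BATCH_SIZE is 200, so each call assigns the pixel values and labels of 200 samples to xs and ys. The shape of xs is therefore (200, 784) and the shape of ys is (200, 10).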
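The idea of drawing a random mini-batch can be sketched in plain Python. This is a simplified stand-in, not the library's implementation (the real next_batch() shuffles the training set and walks through it epoch by epoch); the helper below and its toy data are assumptions made for the example.

```python
import random

def next_batch(images, labels, batch_size, rng=random):
    """Hypothetical helper: draw batch_size distinct samples at random."""
    indices = rng.sample(range(len(images)), batch_size)
    xs = [images[i] for i in indices]
    ys = [labels[i] for i in indices]
    return xs, ys

# Toy stand-in for the training set: 10 "images" of 4 pixels each.
# Image i is filled with the value i so it can be traced back to its label.
images = [[float(i)] * 4 for i in range(10)]
labels = [i % 2 for i in range(10)]

xs, ys = next_batch(images, labels, batch_size=3, rng=random.Random(0))
print(len(xs), len(xs[0]), len(ys))   # batch size, features per image, batch size
```

The key property is that each row of xs stays paired with the matching entry of ys, which is also why xs and ys share their first dimension in the shapes printed above.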

TensorFlow model-building basics

Common functions used for MNIST handwritten digit recognition

① tf.get_collection("") retrieves all variables from the named collection and returns them as a list.

② tf.add() adds its arguments element-wise.

For example:

import tensorflow as tf

x=tf.constant([[1,2],[1,2]])
y=tf.constant([[1,1],[1,2]])
z=tf.add(x,y)

with tf.Session( ) as sess:
    print(sess.run(z))

Output:

[[2 3]
 [2 4]]

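③ tf.cast(x,dtype) converts x to the specified data type.

For example:

import numpy as np

A = tf.convert_to_tensor(np.array([[1,1,2,4], [3,4,8,5]]))
print(A.dtype)

b = tf.cast(A, tf.float32)
print(b.dtype)

Output:

<dtype: 'int32'>
<dtype: 'float32'>

The output shows that matrix A was converted from an integer type to 32-bit floating point. (On platforms where NumPy's default integer is 64-bit, the first line reads int64.)

④ tf.equal() compares two matrices or vectors element by element, returning True where the corresponding elements are equal and False where they differ.

For example:

A = [[1,3,4,5,6]]
B = [[1,3,4,3,2]]

with tf.Session( ) as sess:
    print(sess.run(tf.equal(A, B)))

Output:

[[ True True True False False]]

In A and B, elements 1, 2, and 3 are equal while elements 4 and 5 differ, so the first three entries of the output are True and the last two are False.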

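⑤ tf.reduce_mean(x,axis) computes the mean of a matrix or tensor along the specified dimension.

- If the second argument is omitted, the mean is taken over all elements.
- If the second argument is 0, the mean is taken along the first dimension, that is, over each column.
- If the second argument is 1, the mean is taken along the second dimension, that is, over each row.

For example:

x = [[1., 1.],
     [2., 2.]]

with tf.Session() as sess:
    print(sess.run(tf.reduce_mean(x)))
    print(sess.run(tf.reduce_mean(x,0)))
    print(sess.run(tf.reduce_mean(x,1)))

Output:

1.5
[1.5 1.5]
[1. 2.]

⑥ tf.argmax(x,axis) returns the index of the largest value of x along the dimension axis.

For example:

In tf.argmax([1,0,0],0), axis is 0 and x is [1,0,0]. The call returns the index of the largest value along the first (and only) dimension of x, which is 0. For a two-dimensional tensor such as a batch of predictions with shape [batch_size,10], axis 1 is used instead to take the largest value within each row.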
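The axis conventions of tf.reduce_mean() and tf.argmax() can be mirrored in plain Python, which may make the "per column" versus "per row" distinction easier to see. This is an illustrative sketch only; the helpers mean and argmax are assumptions of the example, not TensorFlow functions.

```python
x = [[1.0, 1.0],
     [2.0, 2.0]]

def mean(values):
    values = list(values)
    return sum(values) / len(values)

def argmax(values):
    """Index of the first largest element."""
    return max(range(len(values)), key=lambda i: values[i])

mean_all = mean(v for row in x for v in row)    # no axis: every element
mean_axis0 = [mean(col) for col in zip(*x)]     # axis 0: one mean per column
mean_axis1 = [mean(row) for row in x]           # axis 1: one mean per row

print(mean_all, mean_axis0, mean_axis1)

# 1-D input: a single index. 2-D input with "axis 1": one index per row.
print(argmax([1, 0, 0]))
print([argmax(row) for row in [[0.1, 0.9], [0.8, 0.2]]])
```

Reducing along axis 0 collapses the rows and leaves one value per column; reducing along axis 1 collapses the columns and leaves one value per row.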

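⑦ os.path.join() joins its string arguments according to the platform's path conventions.

For example:

import os

os.path.join('/hello/','good/boy/','doiido')

Output:

'/hello/good/boy/doiido'

⑧ The string method split() splits a string on the given separator and returns the pieces as a list.

For example:

'./model/mnist_model-1001'.split('/')[-1].split('-')[-1]

This example performs two splits:

- The first splits on / and takes the element at index -1 of the resulting list, that is, the last element, 'mnist_model-1001'.
- The second splits on - and again takes the last element, so the expression returns the string '1001'.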
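The two-step split above is how the training step is later recovered from a checkpoint path. A small variation, sketched under the assumption that the path always ends in name-step, wraps this in a function and returns an integer; the helper name checkpoint_step is hypothetical.

```python
import os

def checkpoint_step(checkpoint_path):
    """Hypothetical helper: return the step suffix of a 'name-step' checkpoint path."""
    file_name = os.path.basename(checkpoint_path)   # e.g. 'mnist_model-1001'
    step_text = file_name.rsplit('-', 1)[-1]        # split once, from the right
    return int(step_text)

# Build the same kind of path the saver writes: directory + model name + '-' + step.
path = os.path.join('./model/', 'mnist_model') + '-1001'
print(path)
print(checkpoint_step(path))
```

Splitting from the right with rsplit('-', 1) keeps the helper working even if the model name itself contains a hyphen.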

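⑨ tf.Graph().as_default() makes the newly created graph the default graph and returns a context manager.

It is normally used with the with keyword to rebuild an already defined neural network inside a computation graph.

For example:

with tf.Graph().as_default() as g: adds the nodes defined inside the with block to the computation graph g.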

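Saving the neural network model

During backpropagation, the model is usually saved once every fixed number of steps, which produces three files:

- a .meta file holding the current graph structure
- an .index file holding the current parameter names
- a .data file holding the current parameter values

In TensorFlow this is written as:

saver = tf.train.Saver()

with tf.Session() as sess:
    for i in range(STEPS):
        if i % SAVE_INTERVAL == 0:  # SAVE_INTERVAL: placeholder for the number of steps between saves
            saver.save(sess, os.path.join(MODEL_SAVE_PATH,MODEL_NAME), global_step=global_step)

Here tf.train.Saver() instantiates the saver object.

The code above saves all of the model's parameters and related information to the specified path once every fixed number of steps, and appends the current training step to the checkpoint file name.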

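Loading the neural network model

To test the network, the trained model must be loaded. In TensorFlow this is written as:

with tf.Session() as sess:
    ckpt = tf.train.get_checkpoint_state(MODEL_SAVE_PATH)  # directory the checkpoints were saved to
    if ckpt and ckpt.model_checkpoint_path:
        saver.restore(sess, ckpt.model_checkpoint_path)

The saved model is loaded inside the with block: if a checkpoint state and a saved model exist at the given path, the saved model is restored into the current session.

Loading the moving averages of the model parameters

If the model used moving averages when it was saved, the moving average of each parameter is stored in the checkpoint files. The averages are loaded by instantiating a saver that maps them back onto the parameters. In TensorFlow this is written as:

ema = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY)  # the moving-average decay rate
ema_restore = ema.variables_to_restore()
saver = tf.train.Saver(ema_restore)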
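What variables_to_restore() hands to the saver is, in essence, a name map: each checkpoint entry name is paired with the graph variable it should fill. The sketch below imitates that idea with plain strings. It assumes the default averaging name "ExponentialMovingAverage" from TensorFlow 1.x, and the variable names are made up for the example.

```python
EMA_SUFFIX = "ExponentialMovingAverage"  # assumed default name of the averages in TensorFlow 1.x

def variables_to_restore(trainable_names, other_names):
    """Sketch: map each checkpoint entry name to the graph variable it fills."""
    name_map = {}
    for name in trainable_names:
        # Trainable variables are filled from their saved moving average.
        name_map[name + "/" + EMA_SUFFIX] = name
    for name in other_names:
        # Variables without an average are restored from their own entry.
        name_map[name] = name
    return name_map

# Hypothetical variable names; real names depend on how the graph was built.
mapping = variables_to_restore(["Variable", "Variable_1"], ["global_step"])
for checkpoint_name, variable_name in sorted(mapping.items()):
    print(checkpoint_name, "->", variable_name)
```

With such a map, restoring a checkpoint writes the averaged value into each weight, so the test graph does not need any extra averaging code of its own.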

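Evaluating model accuracy

A network is usually evaluated by computing its recognition accuracy on a set of data. In TensorFlow this is written as:

correct_prediction = tf.equal(tf.argmax(y, 1),tf.argmax(y_, 1))

accuracy = tf.reduce_mean(tf.cast(correct_prediction,tf.float32))

- y: the model's predictions on one set of data (batch_size samples). Its shape is [batch_size,10], and each row is the recognition result for one image.
- tf.argmax(): takes the index of the largest element in each image's vector, producing a one-dimensional array of length batch_size.
- tf.equal(): compares the predicted indices with the true label indices element by element, returning True where they match and False where they do not.
- tf.cast(): converts the boolean values to floating point; tf.reduce_mean() then averages them, giving the model's accuracy on this set of data.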
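The four steps in the list above can be traced by hand on a tiny batch. The following plain-Python sketch mirrors them one by one; the scores and labels are invented for illustration, and four classes are used instead of ten to keep the example short.

```python
def argmax(values):
    """Index of the first largest element."""
    return max(range(len(values)), key=lambda i: values[i])

# Hypothetical network outputs y (3 images, 4 classes) and one-hot labels y_.
y = [[0.1, 2.3, 0.4, 0.0],
     [1.9, 0.2, 0.3, 0.1],
     [0.2, 0.1, 0.3, 1.5]]
y_ = [[0, 1, 0, 0],
      [0, 0, 1, 0],
      [0, 0, 0, 1]]

predicted = [argmax(row) for row in y]                      # like tf.argmax(y, 1)
actual = [argmax(row) for row in y_]                        # like tf.argmax(y_, 1)
correct = [p == a for p, a in zip(predicted, actual)]       # like tf.equal
accuracy = sum(float(c) for c in correct) / len(correct)    # like tf.cast + tf.reduce_mean

print(predicted, actual, correct, accuracy)
```

Two of the three predictions match their labels, so the accuracy is 2/3. Averaging the 0/1 values is what turns a list of per-image hits into a single accuracy figure.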

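Network model analysis

A neural network consists of a forward propagation process and a backpropagation process.

This section also covers the regularization, exponentially decaying learning rate, and moving average settings used during backpropagation, as well as the test module.

Forward propagation (forward.py)

The forward propagation process builds the network. Its structure is:

def forward(x, regularizer):
    w=
    b=
    y=
    return y

def get_weight(shape, regularizer):

def get_bias(shape):

Forward propagation defines the network's weights w and biases b, and the structure of the network from input to output.

The function get_weight() sets up a weight w, taking its shape and a regularization coefficient that also acts as the flag for whether to regularize.

Similarly, the function get_bias() sets up a bias b.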

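Backpropagation (backward.py)

The backpropagation process trains the network parameters. Its structure is:

def backward( mnist ):
    x = tf.placeholder(dtype,shape)
    y_ = tf.placeholder(dtype,shape)
    # define the forward propagation
    y = forward()
    global_step =
    loss =
    train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step)
    # instantiate the saver object
    saver = tf.train.Saver()
    with tf.Session() as sess:
        # initialize all model parameters
        tf.initialize_all_variables().run()
        # train the model
        for i in range(STEPS):
            sess.run(train_step, feed_dict={x: , y_: })
            if i % SAVE_INTERVAL == 0:  # SAVE_INTERVAL: placeholder for the number of steps between saves
                print
                saver.save( )

In the backpropagation process:

- tf.placeholder(dtype, shape): creates placeholders for the training samples x and the sample labels y_. The dtype argument is the data type and the shape argument is the shape of the data.
- y: the output of the forward propagation function forward().
- loss: the loss function, usually the cross entropy (or mean squared error) between the predictions and the labels plus the regularization loss.
- train_step: applies an optimization algorithm to the model parameters. Common optimizers are GradientDescentOptimizer, AdamOptimizer, and MomentumOptimizer; the code above uses GradientDescentOptimizer.

After the saver object is instantiated:

- tf.initialize_all_variables().run(): initializes all model parameters. (tf.global_variables_initializer() is the newer name for this call and is what mnist_backward.py uses.)
- sess.run(): runs the training and optimization step, saving the model once every fixed number of steps.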

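Setting up regularization, the exponentially decaying learning rate, and moving averages

① Regularization

When a regularization coefficient is passed to the forward propagation code in forward.py (that is, regularizer is not None), a regularization term must be added to the loss function when the parameters are optimized during backpropagation.

The structure is as follows.

First, add the following to the forward propagation code in forward.py:

if regularizer != None:
    tf.add_to_collection('losses',tf.contrib.layers.l2_regularizer(regularizer)(w))

Next, add the following to the backpropagation code in backward.py:

ce = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y,labels=tf.argmax(y_, 1))
cem = tf.reduce_mean(ce)
loss = cem + tf.add_n(tf.get_collection('losses'))

- tf.nn.sparse_softmax_cross_entropy_with_logits(): applies softmax to the logits and computes the cross entropy in a single call. It expects class indices as labels, which is why the one-hot y_ is passed through tf.argmax().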
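To make the composition of the loss concrete, the sketch below recomputes it in plain Python: a softmax cross entropy per sample, its mean over the batch, and an L2 penalty on the weights. The penalty uses the scale * sum(w^2) / 2 form that tf.contrib.layers.l2_regularizer computes; the logits, labels, and weight matrix are made-up values.

```python
import math

def softmax_cross_entropy(logits, label_index):
    """Cross entropy of softmax(logits) against a single class index."""
    top = max(logits)
    shifted = [v - top for v in logits]               # shift for numerical stability
    log_sum = math.log(sum(math.exp(v) for v in shifted))
    return log_sum - shifted[label_index]

def l2_penalty(weights, scale):
    """scale * sum(w^2) / 2 over every entry of a weight matrix."""
    return scale * sum(w * w for row in weights for w in row) / 2.0

# Hypothetical logits for two samples, their class indices, and one weight matrix.
logits = [[2.0, 1.0, 0.1], [0.5, 2.5, 0.3]]
labels = [0, 1]
w = [[0.1, -0.2], [0.3, 0.4]]

ce = [softmax_cross_entropy(row, k) for row, k in zip(logits, labels)]
cem = sum(ce) / len(ce)                     # like tf.reduce_mean(ce)
loss = cem + l2_penalty(w, scale=0.0001)    # like cem + tf.add_n(losses)
print(cem, loss)
```

With a coefficient as small as 0.0001 the penalty only nudges the loss, but because it grows with the squared weights it steadily discourages large weight values during training.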

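② Exponentially decaying learning rate

Training with an exponentially decaying learning rate lets the model converge quickly toward a good solution early on while keeping it from fluctuating too much late in training.

To use it, add the following to the backpropagation code in backward.py:

learning_rate = tf.train.exponential_decay(LEARNING_RATE_BASE,global_step,LEARNING_RATE_STEP, LEARNING_RATE_DECAY,staircase=True)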
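The schedule behind tf.train.exponential_decay() is the formula base_rate * decay_rate ^ (global_step / decay_steps), with the exponent rounded down to a whole number when staircase=True. A plain-Python sketch of that formula, using illustrative settings rather than the ones from this article, looks like this:

```python
def exponential_decay(base_rate, global_step, decay_steps, decay_rate, staircase=False):
    """base_rate * decay_rate ** (global_step / decay_steps)."""
    exponent = global_step / decay_steps
    if staircase:
        # Integer division: the rate drops only at whole multiples of decay_steps.
        exponent = global_step // decay_steps
    return base_rate * decay_rate ** exponent

# Hypothetical settings: start at 0.1 and multiply by 0.99 every 100 steps.
for step in (0, 50, 100, 250):
    print(step, exponential_decay(0.1, step, 100, 0.99, staircase=True))
```

With staircase=True the rate stays flat within each block of decay_steps steps and drops in discrete jumps; without it, the rate shrinks a little at every step.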

③ Moving average

Introducing moving averages during training makes the model more robust on test data.

Add the following to the backpropagation code in backward.py:

ema = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY,global_step)
ema_op = ema.apply(tf.trainable_variables())

with tf.control_dependencies([train_step, ema_op]):
    train_op = tf.no_op(name='train')
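The update that ExponentialMovingAverage applies to each parameter can be written out in a few lines. The sketch below follows the documented rule shadow = decay * shadow + (1 - decay) * value, where passing a step counter replaces decay with min(decay, (1 + num_updates) / (10 + num_updates)). The parameter values are invented, and the exact step value TensorFlow sees at each update depends on op ordering, so treat the numbers as illustrative.

```python
def ema_update(shadow, value, decay, num_updates=None):
    """One moving-average step: shadow = d * shadow + (1 - d) * value."""
    if num_updates is not None:
        # With a step counter, a smaller decay is used early in training
        # so the average can catch up with the parameter quickly.
        decay = min(decay, (1.0 + num_updates) / (10.0 + num_updates))
    return decay * shadow + (1.0 - decay) * value

# Hypothetical parameter that jumps from 0 to 1 and then stays there.
shadow = 0.0
for step in range(5):
    shadow = ema_update(shadow, 1.0, decay=0.99, num_updates=step)
    print(step, shadow)
```

Without the step counter, a decay of 0.99 would move the average only 1% of the way toward the new value per step; the step-dependent decay lets it track the parameter closely at first and smooth more heavily later.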

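Testing (test.py)

Once the model has been trained, it can be run on the test dataset to check the network's performance. The structure is as follows.

First, define the model test function test():

def test( mnist ):
    with tf.Graph( ).as_default( ) as g:
        # placeholders for x and y_
        x = tf.placeholder(dtype, shape)
        y_ = tf.placeholder(dtype, shape)
        # forward propagation gives the prediction y
        y = mnist_forward.forward(x, None )
        # instantiate a saver that restores the moving averages
        ema = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY)
        ema_restore = ema.variables_to_restore()
        saver = tf.train.Saver(ema_restore)
        # compute the accuracy
        correct_prediction = tf.equal(tf.argmax(y,1),tf.argmax(y_,1))
        accuracy = tf.reduce_mean(tf.cast( correct_prediction,tf.float32))
        while True:
            with tf.Session() as sess:
                # look up the trained model
                ckpt = tf.train.get_checkpoint_state(MODEL_SAVE_PATH)
                # if a checkpoint exists, restore it
                if ckpt and ckpt.model_checkpoint_path:
                    # restore the session
                    saver.restore(sess, ckpt.model_checkpoint_path)
                    # recover the training step from the checkpoint file name
                    global_step = ckpt.model_checkpoint_path.split('/')[-1].split('-')[-1]
                    # compute the accuracy on the test data and its labels
                    accuracy_score = sess.run(accuracy, feed_dict={x: mnist.test.images, y_: mnist.test.labels})
                    # report the result
                    print("After %s training step(s), test accuracy = %g" % (global_step, accuracy_score))
                # if no model was found
                else:
                    print('No checkpoint file found')  # report that the model does not exist
                    return

Next, define the main() function:

def main():
    # load the test dataset
    mnist = input_data.read_data_sets("./data/", one_hot=True)
    # call the test function test() defined above
    test(mnist)

if __name__ == '__main__':
    main()

The accuracy obtained by predicting on the test data indicates how well the trained model performs.

If the accuracy is low, the model itself may need improvement, or the training set may be too small, which leads to overfitting.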

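Building and testing the network model

The handwritten MNIST recognition task is split across three module files:

- the forward propagation file describing the network structure (mnist_forward.py)
- the backpropagation file describing how the network parameters are optimized (mnist_backward.py)
- the test file that checks the model's accuracy (mnist_test.py)

Forward propagation file (mnist_forward.py)

The forward propagation code defines the number of input nodes, hidden-layer nodes, and output nodes, the weights w and biases b, and the network architecture from input to output.

The forward propagation for the MNIST handwritten digit recognition task is implemented as follows: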

import tensorflow as tf

INPUT_NODE = 784

OUTPUT_NODE = 10

LAYER1_NODE = 500

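def get_weight(shape, regularizer):
    # weights drawn from a truncated normal distribution
    w = tf.Variable(tf.truncated_normal(shape,stddev=0.1))
    # add this weight's L2 loss to the 'losses' collection when regularization is on
    if regularizer != None: tf.add_to_collection('losses', tf.contrib.layers.l2_regularizer(regularizer)(w))
    return w

def get_bias(shape):
    # biases start at zero
    b = tf.Variable(tf.zeros(shape))
    return b

def forward(x, regularizer):
    # hidden layer: x * w1 + b1 followed by ReLU
    w1 = get_weight([INPUT_NODE, LAYER1_NODE], regularizer)
    b1 = get_bias([LAYER1_NODE])
    y1 = tf.nn.relu(tf.matmul(x, w1) + b1)

    # output layer: raw scores, no activation
    w2 = get_weight([LAYER1_NODE, OUTPUT_NODE], regularizer)
    b2 = get_bias([OUTPUT_NODE])
    y = tf.matmul(y1, w2) + b2
    return y

As the code above shows, the forward propagation defines the following network:

- Input nodes: 784 (the number of pixels in each input image)
- Hidden-layer nodes: 500
- Output nodes: 10 (a ten-way classification over the digits 0-9)
- w1: the weights from the input layer to the hidden layer, with shape [784,500]
- w2: the weights from the hidden layer to the output layer, with shape [500,10]
  (the weights are drawn from a truncated normal distribution and regularized, with each weight's regularization loss added to the total loss)
- b1: the bias from the input layer to the hidden layer, a one-dimensional array of length 500
- b2: the bias from the hidden layer to the output layer, a one-dimensional array of length 10; both biases are initialized to all zeros
- y1: the hidden-layer output, obtained in the first layer by multiplying the input x by w1, adding b1, and applying the relu function
- y: the output, obtained in the second layer by multiplying the hidden-layer output y1 by w2 and adding b2
  (the output y is later passed through softmax to form a probability distribution, so it is not passed through relu)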
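The two-layer computation can be followed by hand on a much smaller network. The sketch below is a plain-Python stand-in for the TensorFlow code, using a hypothetical 3-2-2 network with hand-picked weights in place of the 784-500-10 one.

```python
def matvec(x, w, b):
    """Row vector x times matrix w plus bias b."""
    return [sum(xi * w[i][j] for i, xi in enumerate(x)) + b[j]
            for j in range(len(b))]

def relu(values):
    return [max(0.0, v) for v in values]

def forward(x, w1, b1, w2, b2):
    y1 = relu(matvec(x, w1, b1))    # hidden layer
    return matvec(y1, w2, b2)       # output layer: raw scores, no activation

# Hypothetical 3-2-2 network standing in for the 784-500-10 one.
w1 = [[1.0, -1.0], [0.5, 0.5], [-1.0, 2.0]]
b1 = [0.0, 0.0]
w2 = [[1.0, 0.0], [0.0, 1.0]]
b2 = [0.5, -0.5]

y = forward([1.0, 2.0, 3.0], w1, b1, w2, b2)
print(y)
```

The hidden pre-activations are [-1.0, 6.0]; relu clips the negative one to 0, and the output layer then produces [0.5, 5.5]. The final scores are left unbounded because softmax is applied later, inside the loss.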

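Backpropagation file (mnist_backward.py)

Backpropagation trains the network on the training dataset, optimizing the model parameters by reducing the value of the loss function so that the resulting model is accurate and generalizes well.

The backpropagation for the MNIST handwritten digit recognition task is implemented as follows: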

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

import mnist_forward

import os

BATCH_SIZE = 200

LEARNING_RATE_BASE = 0.1

LEARNING_RATE_DECAY = 0.99

REGULARIZER = 0.0001

STEPS = 50000  # total training steps; use a small value such as 500 for a quick trial run

MOVING_AVERAGE_DECAY = 0.99

MODEL_SAVE_PATH="./model/"

MODEL_NAME="mnist_model"

def backward(mnist):
    # placeholders for the input images and their one-hot labels
    x = tf.placeholder(tf.float32, [None, mnist_forward.INPUT_NODE])
    y_ = tf.placeholder(tf.float32, [None, mnist_forward.OUTPUT_NODE])
    y = mnist_forward.forward(x, REGULARIZER)
    global_step = tf.Variable(0, trainable=False)

    # loss: mean softmax cross entropy plus the L2 regularization terms
    ce = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y, labels=tf.argmax(y_, 1))
    cem = tf.reduce_mean(ce)
    loss = cem + tf.add_n(tf.get_collection('losses'))

    # the learning rate decays once per pass over the training set
    learning_rate = tf.train.exponential_decay(
        LEARNING_RATE_BASE,
        global_step,
        mnist.train.num_examples / BATCH_SIZE,
        LEARNING_RATE_DECAY,
        staircase=True)

    train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step)

    # update the moving averages together with each training step
    ema = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)
    ema_op = ema.apply(tf.trainable_variables())
    with tf.control_dependencies([train_step, ema_op]):
        train_op = tf.no_op(name='train')

    saver = tf.train.Saver()

    with tf.Session() as sess:
        init_op = tf.global_variables_initializer()
        sess.run(init_op)

        for i in range(STEPS):
            xs, ys = mnist.train.next_batch(BATCH_SIZE)
            _, loss_value, step = sess.run([train_op, loss, global_step], feed_dict={x: xs, y_: ys})
            # every 1000 steps, report the loss and save a checkpoint
            if i % 1000 == 0:
                print("After %d training step(s), loss on training batch is %g." % (step, loss_value))
                saver.save(sess, os.path.join(MODEL_SAVE_PATH, MODEL_NAME), global_step=global_step)

def main():
    mnist = input_data.read_data_sets("./data/", one_hot=True)
    backward(mnist)

if __name__ == '__main__':
    main()

Output:

Extracting ./data/train-images-idx3-ubyte.gz

Extracting ./data/train-labels-idx1-ubyte.gz

Extracting ./data/t10k-images-idx3-ubyte.gz

Extracting ./data/t10k-labels-idx1-ubyte.gz

After 1 training step(s), loss on training batch is 3.47547.

After 1001 training step(s), loss on training batch is 0.283958.

After 2001 training step(s), loss on training batch is 0.304716.

After 3001 training step(s), loss on training batch is 0.266811.

... (omitted)

After 47001 training step(s), loss on training batch is 0.128592.

After 48001 training step(s), loss on training batch is 0.125534.

After 49001 training step(s), loss on training batch is 0.123577.

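As the code above shows, the backpropagation file:

- imports tensorflow, input_data, the forward propagation module mnist_forward, and the os module
- defines the number of images fed to the network per step, the initial learning rate, the learning rate decay rate, the regularization coefficient, the number of training steps, the moving average decay rate, and the model save path and name
- in the backpropagation function backward():
  - reads in mnist and uses placeholder to create placeholders for the training data x and the labels y_
  - calls the forward() function from mnist_forward with regularization enabled to compute the prediction y on the training data
  - initializes the step counter global_step and marks it as non-trainable
  - builds the loss function loss, which includes the regularization loss of all weights, and sets the exponentially decaying learning rate learning_rate
  - optimizes the model with gradient descent to reduce the loss, and defines the moving averages of the parameters
  - in the with block:
    - initializes all parameters
    - feeds batch_size (that is, 200) training samples and their labels per step, iterating for STEPS steps
    - prints the loss every 1000 steps and saves the current session to the specified path
- in the main function main(), loads the training dataset from the specified path and calls backward() to train the model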
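A bit of arithmetic on the constants explains the step numbers in the training log (1, 1001, 2001, …, 49001). The sketch below assumes the full-length run of 50000 steps that the log reflects and a training split of 55000 images; it only reproduces the bookkeeping, not the training itself.

```python
TRAIN_EXAMPLES = 55000   # size of the MNIST training split
BATCH_SIZE = 200
STEPS = 50000            # assumed: the full-length run whose log is shown above
LOG_EVERY = 1000

steps_per_epoch = TRAIN_EXAMPLES // BATCH_SIZE   # also the learning-rate decay interval
epochs = STEPS / steps_per_epoch

# minimize() increments global_step once per iteration, so after loop index i
# the counter reads i + 1.
logged_steps = [i + 1 for i in range(STEPS) if i % LOG_EVERY == 0]

print(steps_per_epoch, round(epochs, 1))
print(logged_steps[:3], logged_steps[-1], len(logged_steps))
```

One pass over the training set takes 275 steps, so the run covers roughly 182 passes, and a checkpoint is written 50 times, the last one at step 49001. That is why the test script later reports its accuracy "After 49001 training step(s)".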

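Test file (mnist_test.py)

After training, the test set is fed to the model to check the network's accuracy and generalization.

Note that the test set and the training set are independent of each other.

The test process for the MNIST handwritten digit recognition task is implemented as follows: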

#coding:utf-8

import time

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

import mnist_forward

import mnist_backward

TEST_INTERVAL_SECS = 5

def test(mnist):
    with tf.Graph().as_default() as g:
        x = tf.placeholder(tf.float32, [None, mnist_forward.INPUT_NODE])
        y_ = tf.placeholder(tf.float32, [None, mnist_forward.OUTPUT_NODE])
        y = mnist_forward.forward(x, None)

        # saver that loads each parameter from its moving average
        ema = tf.train.ExponentialMovingAverage(mnist_backward.MOVING_AVERAGE_DECAY)
        ema_restore = ema.variables_to_restore()
        saver = tf.train.Saver(ema_restore)

        correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

        while True:
            with tf.Session() as sess:
                ckpt = tf.train.get_checkpoint_state(mnist_backward.MODEL_SAVE_PATH)
                if ckpt and ckpt.model_checkpoint_path:
                    saver.restore(sess, ckpt.model_checkpoint_path)
                    # the training step is the suffix of the checkpoint file name
                    global_step = ckpt.model_checkpoint_path.split('/')[-1].split('-')[-1]
                    accuracy_score = sess.run(accuracy, feed_dict={x: mnist.test.images, y_: mnist.test.labels})
                    print("After %s training step(s), test accuracy = %g" % (global_step, accuracy_score))
                    # stop after one evaluation; remove this return to re-test every TEST_INTERVAL_SECS seconds
                    return
                else:
                    print('No checkpoint file found')
                    return
            time.sleep(TEST_INTERVAL_SECS)

def main():
    mnist = input_data.read_data_sets("./data/", one_hot=True)
    test(mnist)

if __name__ == '__main__':
    main()

Output:

Extracting ./data/train-images-idx3-ubyte.gz

Extracting ./data/train-labels-idx1-ubyte.gz

Extracting ./data/t10k-images-idx3-ubyte.gz

Extracting ./data/t10k-labels-idx1-ubyte.gz

After 49001 training step(s), test accuracy = 0.98

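In the code above:

- the time module, tensorflow, input_data, the forward propagation module mnist_forward, and the backpropagation module mnist_backward are imported
- the polling interval of the program is set to 5 seconds
- the test function test() is defined, which reads in the mnist dataset and:
  - uses tf.Graph() to rebuild the previously defined computation graph
  - uses placeholder to create placeholders for the input data x and the labels y_
  - calls the forward() function from mnist_forward to compute the prediction y on the input data
  - instantiates a saver that restores moving averages, so that when the session is loaded every model parameter is assigned its moving average, which makes the model more stable
  - computes the model's accuracy on the test set
  - in the with block, looks up the checkpoint at the specified path:
    - if a model exists, loads it into the current session, measures the accuracy on the test dataset, and prints the accuracy for the current training step
    - if no model exists, prints a message saying so and test() returns
- the main function main() loads the test dataset from the specified path and calls test() to measure the model's accuracy on the test set

The run above shows a final accuracy of about 98% on the test set. Model training (mnist_backward.py) and model testing (mnist_test.py) can be run at the same time, which makes the trend easier to see: as the number of training steps grows, the loss keeps falling and the accuracy on the test set keeps rising, indicating good generalization.

Reference: 人工智能实践:Tensorflow笔记 (Artificial Intelligence Practice: TensorFlow Notes)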