Project repository: https://github.com/easzlab/kubeasz

#: Set up Harbor first

#: Install docker with a script
root@k8s-harbor1:~# vim docker_install.sh
#!/bin/bash
sudo apt-get update
sudo apt-get -y install apt-transport-https ca-certificates curl software-properties-common
curl -fsSL http://mirrors.aliyun.com/docker-ce/linux/ubuntu/gpg | sudo apt-key add -
sudo add-apt-repository "deb [arch=amd64] http://mirrors.aliyun.com/docker-ce/linux/ubuntu $(lsb_release -cs) stable"
sudo apt-get -y update
sudo apt install -y docker-ce=5:18.09.9~3-0~ubuntu-bionic docker-ce-cli=5:18.09.9~3-0~ubuntu-bionic
root@k8s-harbor1:~# bash docker_install.sh

#: Configure the registry mirror (accelerator)
root@k8s-harbor1:~# sudo mkdir -p /etc/docker
root@k8s-harbor1:~# sudo tee /etc/docker/daemon.json <<-'EOF'
> {
> "registry-mirrors": ["https://5zw40ihv.mirror.aliyuncs.com"]
> }
> EOF
{
"registry-mirrors": ["https://5zw40ihv.mirror.aliyuncs.com"]
}
root@k8s-harbor1:~# sudo systemctl daemon-reload
root@k8s-harbor1:~# sudo systemctl restart docker

#: Install docker-compose
root@k8s-harbor1:~# apt install -y docker-compose

#: Download the Harbor package, extract it, and create a symlink
root@k8s-harbor1:/usr/local/src# ls
harbor-offline-installer-v1.7.5.tgz
root@k8s-harbor1:/usr/local/src# tar xf harbor-offline-installer-v1.7.5.tgz
root@k8s-harbor1:/usr/local/src# ln -sv /usr/local/src/harbor /usr/local/harbor

#: Prepare the certificate that the Harbor configuration refers to
root@k8s-harbor1:/usr/local/harbor# mkdir /usr/local/src/harbor/certs    #: directory that holds the certificate
root@k8s-harbor1:/usr/local/harbor# cd /usr/local/src/harbor/certs
root@k8s-harbor1:/usr/local/src/harbor/certs# openssl genrsa -out /usr/local/src/harbor/certs/harbor-ca.key 2048    #: generate the private key
root@k8s-harbor1:/usr/local/src/harbor/certs# openssl req -x509 -new -nodes -key /usr/local/src/harbor/certs/harbor-ca.key -subj "/CN=harbor.magedu.net" -days 7120 -out /usr/local/src/harbor/certs/harbor-ca.crt
#: This generates the self-signed certificate. Change the domain as needed; the CN must be identical to the hostname in the Harbor configuration.
#: On Ubuntu the command fails with the following error:
Can't load /root/.rnd into RNG
139879360623040:error:2406F079:random number generator:RAND_load_file:Cannot open file:../crypto/rand/randfile.c:88:Filename=/root/.rnd
#: Create the file named in the message, then run the openssl req command again
root@k8s-harbor1:/usr/local/src/harbor/certs# touch /root/.rnd

#: Edit the Harbor configuration file
root@k8s-harbor1:/usr/local/src/harbor/certs# cd /usr/local/harbor
root@k8s-harbor1:/usr/local/harbor# vim harbor.cfg
hostname = harbor.magedu.net
ui_url_protocol = https    #: https is required here
ssl_cert = /usr/local/src/harbor/certs/harbor-ca.crt
ssl_cert_key = /usr/local/src/harbor/certs/harbor-ca.key    #: the certificate and key generated in the previous step
harbor_admin_password = 123456    #: Harbor login password

#: Install Harbor
root@k8s-harbor1:/usr/local/harbor# ./install.sh

#: Test
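The certificate CN and the `hostname` value in `harbor.cfg` have to agree, and a mismatch is easy to miss. The following is a minimal sketch of a pre-install check. The function names `subj_cn`, `cfg_value`, and `cn_matches_hostname` are hypothetical helpers written for this illustration and are not part of Harbor.

```shell
#!/bin/bash
# Hypothetical helpers (not part of Harbor): compare the CN passed to
# `openssl req -subj` with the hostname configured in harbor.cfg.

# Print the CN component of a subject string such as "/CN=harbor.magedu.net".
subj_cn() {
    local subj="$1" part
    local IFS='/'
    for part in $subj; do
        case "$part" in
            CN=*) printf '%s\n' "${part#CN=}"; return 0 ;;
        esac
    done
    return 1
}

# Print the value of a "key = value" line from a harbor.cfg-style file.
cfg_value() {
    local file="$1" key="$2"
    sed -n "s/^[[:space:]]*${key}[[:space:]]*=[[:space:]]*\(.*[^[:space:]]\)[[:space:]]*\$/\1/p" "$file" | head -n 1
}

# Succeed only when the subject has a CN and it equals the configured hostname.
cn_matches_hostname() {
    local subj="$1" cfg="$2" cn
    cn=$(subj_cn "$subj") || return 1
    [ "$cn" = "$(cfg_value "$cfg" hostname)" ]
}
```

A possible use before running `./install.sh`: `cn_matches_hostname "/CN=harbor.magedu.net" /usr/local/harbor/harbor.cfg || echo "CN and hostname differ"`.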

#: Configure master1 to push and pull images

#: Install docker with the script first
root@k8s-master1:~# bash docker_install.sh

#: Create the certificate directory. Its name must be identical to the name used to reach Harbor, otherwise images cannot be pushed or pulled
root@k8s-master1:~# mkdir /etc/docker/certs.d/harbor.magedu.net -p

#: Copy Harbor's certificate (public key) to the server that will push images
root@k8s-harbor1:~# scp /usr/local/src/harbor/certs/harbor-ca.crt 192.168.5.101:/etc/docker/certs.d/harbor.magedu.net/

#: Restart docker
root@k8s-master1:~# systemctl restart docker

#: Configure name resolution
root@k8s-master1:~# vim /etc/hosts
192.168.5.103 harbor.magedu.net

#: Test the login
root@k8s-master1:~# docker login harbor.magedu.net

#: In the Harbor web UI, create a project and make it public

#: Pull a small image, retag it, and push it as a test
root@k8s-master1:~# docker pull alpine
root@k8s-master1:~# docker tag 961769676411 harbor.magedu.net/linux37/alpine:v1
root@k8s-master1:~# docker push harbor.magedu.net/linux37/alpine:v1

#: Configure master2 to push and pull images

#: Install docker on master2 with the script
root@k8s-master2:~# bash docker_install.sh

#: Only master2 pushes images, so copy the login credentials and the certificate to it by hand
root@k8s-master1:~# scp -r /root/.docker 192.168.5.102:/root

#: Use a script to copy master1's public key to master2, the etcd hosts, and the node hosts for passwordless login
root@k8s-master1:~# vim scp.sh
#!/bin/bash
IP="
192.168.5.101
192.168.5.102
192.168.5.104
192.168.5.105
192.168.5.106
192.168.5.107
192.168.5.108
192.168.5.109
"
for node in ${IP};do
    sshpass -p centos ssh-copy-id ${node} -o StrictHostKeyChecking=no
    if [ $? -eq 0 ];then
        echo "${node} key copied"
    else
        echo "${node} key copy failed"
    fi
done

#: Install the sshpass command
root@k8s-master1:~# apt install sshpass

#: Generate a key pair on master1
root@k8s-master1:~# ssh-keygen

#: Run the script
root@k8s-master1:~# bash scp.sh

#: Edit the script again so that it copies the certificate, the hosts file, and the resource-limit and kernel settings to every host
root@k8s-master1:~# vim scp.sh
#!/bin/bash
IP="
192.168.5.102
192.168.5.104
192.168.5.105
192.168.5.106
192.168.5.107
192.168.5.108
192.168.5.109
"
for node in ${IP};do
#    sshpass -p centos ssh-copy-id ${node} -o StrictHostKeyChecking=no
#    if [ $? -eq 0 ];then
#        echo "${node} key copied"
#    else
#        echo "${node} key copy failed"
#    fi
    scp docker_install.sh ${node}:/root
    scp -r /etc/docker/certs.d ${node}:/etc/docker
    scp /etc/hosts ${node}:/etc/
    scp /etc/security/limits.conf ${node}:/etc/security/limits.conf
    scp /etc/sysctl.conf ${node}:/etc/sysctl.conf
    ssh ${node} "reboot"
    echo "${node} reboot issued"
done
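The distribution script reboots each host and prints a success message whether or not the copies worked. One possible tightening is sketched here, under the assumption that a failed copy should skip the reboot. `RUN`, `FILES`, `push_node`, and `push_all` are hypothetical names introduced for this sketch; setting `RUN=echo` prints the commands instead of running them.

```shell
#!/bin/bash
# Sketch of a stricter distribution loop (hypothetical helper names).
# RUN is a dry-run switch: RUN=echo prints each command instead of executing it.
RUN="${RUN:-}"

# Files pushed to the same path on every host, taken from the original scp.sh.
FILES="/etc/hosts /etc/security/limits.conf /etc/sysctl.conf"

# Copy every file to one host; reboot it only if all copies succeeded.
push_node() {
    local node="$1" f
    for f in $FILES; do
        $RUN scp "$f" "${node}:${f}" || return 1
    done
    # ssh may exit non-zero when the connection drops during the reboot,
    # so its status is deliberately ignored.
    $RUN ssh "$node" reboot || true
}

# Process all hosts given as arguments; return non-zero if any host failed.
push_all() {
    local node failed=""
    for node in "$@"; do
        if push_node "$node"; then
            echo "${node}: files copied, reboot issued"
        else
            echo "${node}: copy failed, not rebooted" >&2
            failed="${failed} ${node}"
        fi
    done
    [ -z "$failed" ]
}
```

A dry run such as `RUN=echo; push_all 192.168.5.102 192.168.5.104` lists the `scp` and `ssh` commands without touching any host.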

#: Tune kernel parameters and resource limits

root@k8s-master1:~# vim /etc/sysctl.conf

net.ipv4.ip_nonlocal_bind = 1

net.ipv4.ip_forward = 1

net.ipv4.tcp_tw_reuse = 0

net.ipv4.tcp_timestamps = 0

net.ipv4.tcp_tw_recycle = 0

root@k8s-master1:~# vim /etc/security/limits.conf

* soft core unlimited

* hard core unlimited

* soft nproc 1000000

* hard nproc 1000000

* soft nofile 1000000

* hard nofile 1000000

* soft memlock 32000

* hard memlock 32000

* soft msgqueue 8192000

* hard msgqueue 8192000

#: Reboot master1 itself

root@k8s-master1:~# reboot
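One caveat on the sysctl list: `net.ipv4.tcp_tw_recycle` was removed in Linux 4.12, so on newer kernels `sysctl -p` reports an error for that line. The sketch below filters a settings list down to the keys the running kernel exposes. `PROC_SYS`, `sysctl_path`, and `filter_supported` are hypothetical names for this illustration, and the dot-to-slash mapping assumes keys without dots inside a path component.

```shell
#!/bin/bash
# Hypothetical helpers: keep only sysctl settings whose key exists on this kernel.
# PROC_SYS can be overridden to exercise the check against a test directory.
PROC_SYS="${PROC_SYS:-/proc/sys}"

# Map a key to its file, e.g. net.ipv4.ip_forward -> /proc/sys/net/ipv4/ip_forward.
sysctl_path() {
    printf '%s/%s\n' "$PROC_SYS" "$(printf '%s' "$1" | tr . /)"
}

# Read "key = value" lines on stdin. Supported lines are printed unchanged;
# unsupported keys are reported on stderr and dropped.
filter_supported() {
    local line key
    while IFS= read -r line; do
        case "$line" in
            ''|'#'*) continue ;;   # skip blank lines and comments
        esac
        key=$(printf '%s' "${line%%=*}" | tr -d '[:space:]')
        if [ -e "$(sysctl_path "$key")" ]; then
            printf '%s\n' "$line"
        else
            echo "skipping unsupported key: ${key}" >&2
        fi
    done
}
```

For example, `filter_supported < /etc/sysctl.conf` prints only the lines that the current kernel can apply.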

#: Configure haproxy + keepalived

#: Install haproxy and keepalived
root@k8s-ha1:~# apt install -y haproxy keepalived

#: Configure keepalived
root@k8s-ha1:~# find / -name 'keepalived.conf*'
root@k8s-ha1:~# cp /usr/share/doc/keepalived/samples/keepalived.conf.vrrp /etc/keepalived/keepalived.conf
root@k8s-ha1:~# vim /etc/keepalived/keepalived.conf
virtual_ipaddress {
    192.168.5.248 dev eth0 label eth0:0
}

#: Configure haproxy
root@k8s-ha1:~# vim /etc/haproxy/haproxy.cfg
listen k8s-api-6443
    bind 192.168.5.248:6443
    mode tcp
    server 192.168.5.101 192.168.5.101:6443 check fall 3 rise 3 inter 3s
    server 192.168.5.102 192.168.5.102:6443 check fall 3 rise 3 inter 3s

#: Restart the services
root@k8s-ha1:~# systemctl restart haproxy
root@k8s-ha1:~# systemctl restart keepalived

#: Enable them at boot
root@k8s-ha1:~# systemctl enable haproxy
root@k8s-ha1:~# systemctl enable keepalived

#: Configure the second load balancer the same way, then test
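When masters are added later, the `listen` block needs one more `server` line per master. As a sketch, the block can be generated from the VIP, the port, and the list of master IPs. `render_listen` is a hypothetical helper; the health-check options are copied from the configuration above.

```shell
#!/bin/bash
# Hypothetical helper: print a haproxy "listen" block for the API server VIP.
# Usage: render_listen <vip> <port> <master-ip>...
render_listen() {
    local vip="$1" port="$2" ip
    shift 2
    printf 'listen k8s-api-%s\n' "$port"
    printf '    bind %s:%s\n' "$vip" "$port"
    printf '    mode tcp\n'
    for ip in "$@"; do
        # One backend per master, named after its IP as in the original config.
        printf '    server %s %s:%s check fall 3 rise 3 inter 3s\n' "$ip" "$ip" "$port"
    done
}
```

Running `render_listen 192.168.5.248 6443 192.168.5.101 192.168.5.102` prints a block equivalent to the one edited by hand here.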

#: Configure ansible on master1

#: Install ansible
root@k8s-master1:/etc/ansible# apt install -y ansible

#: Clone the project. We use 0.6.1: https://github.com/easzlab/kubeasz/tree/0.6.1
root@k8s-master1:/etc/ansible# cd /opt/
root@k8s-master1:/opt# git clone -b 0.6.1 https://github.com/easzlab/kubeasz.git

#: Move the files that ansible installed by default out of the way, then copy everything from the clone into the ansible configuration directory
root@k8s-master1:/opt# mv /etc/ansible/* /tmp    #: if nothing else lives there, the files can simply be deleted
root@k8s-master1:/opt# cp -rf kubeasz/* /etc/ansible/

#: If your version needs different start-up parameters, edit the templates in this directory
root@k8s-master1:/etc/ansible/roles/kube-master/templates# cd /etc/ansible/roles/kube-master/templates/
root@k8s-master1:/etc/ansible/roles/kube-master/templates# ls
aggregator-proxy-csr.json.j2  kube-apiserver.service.j2       kube-controller-manager.service.j2  kube-scheduler.service.j2
basic-auth.csv.j2             kube-apiserver-v1.8.service.j2  kubernetes-csr.json.j2

#: Choose the deployment mode, single master or multiple masters. We use multiple masters
root@k8s-master1:/etc/ansible# cd /etc/ansible/
root@k8s-master1:/etc/ansible# ll example/
total 40
drwxr-xr-x  2 root root 4096 Oct  6 13:42 ./
drwxr-xr-x 10 root root 4096 Oct  6 13:42 ../
-rw-r--r--  1 root root 2207 Oct  6 13:42 hosts.allinone.example
-rw-r--r--  1 root root 2241 Oct  6 13:42 hosts.allinone.example.en
-rw-r--r--  1 root root 2397 Oct  6 13:42 hosts.cloud.example
-rw-r--r--  1 root root 2325 Oct  6 13:42 hosts.cloud.example.en
-rw-r--r--  1 root root 2667 Oct  6 13:42 hosts.m-masters.example       #: multi-master deployment, Chinese version
-rw-r--r--  1 root root 2626 Oct  6 13:42 hosts.m-masters.example.en    #: multi-master deployment, English version
-rw-r--r--  1 root root 2226 Oct  6 13:42 hosts.s-master.example
-rw-r--r--  1 root root 2258 Oct  6 13:42 hosts.s-master.example.en

#: Because we deploy multiple masters, copy the multi-master example file into the ansible directory as hosts
root@k8s-master1:/etc/ansible# cp example/hosts.m-masters.example ./hosts

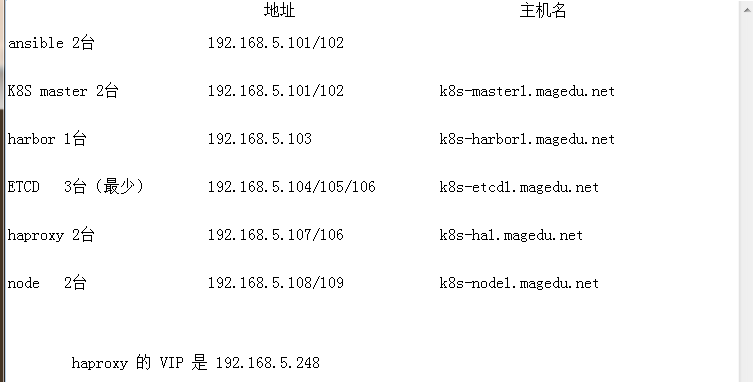

#: Deploy k8s with ansible

#: Configure according to the official documentation: https://github.com/easzlab/kubeasz/blob/0.6.1/docs/setup/00-planning_and_overall_intro.md

#: Refresh the apt package index
root@k8s-master1:/etc/ansible# apt-get update

#: Install python2.7
root@k8s-master1:/etc/ansible# apt-get install python2.7

#: Create the symlink
root@k8s-master2:~# ln -s /usr/bin/python2.7 /usr/bin/python

#: Install Python and create the symlink on the node and etcd hosts as well

#: Download the Kubernetes binaries and extract them into /etc/ansible/bin
root@k8s-master1:/usr/local/src# tar xf k8s.1-13-5.tar.gz
root@k8s-master1:/usr/local/src# ls
bin  k8s.1-13-5.tar.gz
root@k8s-master1:/usr/local/src# mv bin/* /etc/ansible/bin/

#: Test: the binary must print the expected version
root@k8s-master1:/etc/ansible/bin# ./kube-apiserver --version
Kubernetes v1.13.5

#: Leave the directory and adjust hosts to your environment
root@k8s-master1:/etc/ansible/bin# cd ..
root@k8s-master1:/etc/ansible# vim hosts    #: the example file for the chosen deployment mode, renamed
[deploy]
192.168.5.101 NTP_ENABLED=no    #: IP of this machine

# Provide NODE_NAME for the etcd cluster as below. The cluster must have an odd number of members (1, 3, 5, 7, ...)
[etcd]
192.168.5.104 NODE_NAME=etcd1
192.168.5.105 NODE_NAME=etcd2
192.168.5.106 NODE_NAME=etcd3

[new-etcd] # reserved group, used when adding etcd nodes later
#192.168.1.x NODE_NAME=etcdx

[kube-master]
192.168.5.101

[new-master] # reserved group, used when adding master nodes later
192.168.5.102    #: left here on purpose, to test adding a master later

[kube-node]
192.168.5.108

[new-node] # reserved group, used when adding node hosts later
192.168.5.109

K8S_VER="v1.13"    #: pay attention to the version number
MASTER_IP="192.168.5.248"    #: the VIP address
KUBE_APISERVER="https://{{ MASTER_IP }}:6443"    #: note the port is 6443
CLUSTER_NETWORK="calico"    #: we use the calico network plugin
SERVICE_CIDR="10.20.0.0/16"    #: service network; must not conflict with the internal network
CLUSTER_CIDR="172.31.0.0/16"    #: network assigned to containers (pods)
CLUSTER_KUBERNETES_SVC_IP="10.20.0.1"    #: first address of the service network defined above
CLUSTER_DNS_SVC_IP="10.20.254.254"    #: DNS service address; we take one from the end of the service network
CLUSTER_DNS_DOMAIN="linux37.local."    #: DNS domain
BASIC_AUTH_USER="admin"
BASIC_AUTH_PASS="123456"    #: cluster password
bin_dir="/usr/bin"    #: binaries normally go in this directory; otherwise paths must be adjusted when running commands
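The address variables in `hosts` depend on each other: `CLUSTER_KUBERNETES_SVC_IP` is the first address of `SERVICE_CIDR`, and `CLUSTER_DNS_SVC_IP` has to fall inside it. A rough sketch of checking this with shell arithmetic follows. The helpers `ip_to_int`, `int_to_ip`, `in_cidr`, and `first_host` are hypothetical, handle IPv4 only, and assume octets are written without leading zeros.

```shell
#!/bin/bash
# Hypothetical IPv4 helpers for sanity-checking the CIDR variables in hosts.

# Convert dotted-quad notation to a 32-bit integer.
ip_to_int() {
    local a b c d
    IFS=. read -r a b c d <<EOF
$1
EOF
    echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Convert a 32-bit integer back to dotted-quad notation.
int_to_ip() {
    echo "$(( ($1 >> 24) & 255 )).$(( ($1 >> 16) & 255 )).$(( ($1 >> 8) & 255 )).$(( $1 & 255 ))"
}

# Succeed when address $1 lies inside network $2 (written as a.b.c.d/bits).
in_cidr() {
    local ip="$1" net="${2%/*}" bits="${2#*/}" mask
    mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
    [ $(( $(ip_to_int "$ip") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
}

# Print the first usable address of a network (network address + 1).
first_host() {
    local net="${1%/*}" bits="${1#*/}" mask
    mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
    int_to_ip $(( ( $(ip_to_int "$net") & mask ) + 1 ))
}
```

With the values used here, `first_host 10.20.0.0/16` yields the address set as `CLUSTER_KUBERNETES_SVC_IP`, and `in_cidr 10.20.254.254 10.20.0.0/16` succeeds for the DNS address.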

#: Test that ansible can reach every host

root@k8s-master1:/etc/ansible# ansible all -m ping

#: Install step by step, following the official documentation
root@k8s-master1:/etc/ansible# ansible-playbook 01.prepare.yml

#: Before running step 02: to use a different etcd version, download the newer release and extract it
root@k8s-master1:/opt# tar xf etcd-v3.3.15-linux-amd64.tar.gz
#: Enter the extracted directory and test the binary
root@k8s-master1:/opt/etcd-v3.3.15-linux-amd64# ./etcd --version
#: Then move the executables into the ansible bin directory
root@k8s-master1:/opt/etcd-v3.3.15-linux-amd64# mv etcd* /etc/ansible/bin/

#: Deploy step 02
root@k8s-master1:/etc/ansible# ansible-playbook 02.etcd.yml

#: On any etcd server, run the following commands to verify the etcd service (every endpoint must report "successfully")
root@k8s-etcd1:~# export NODE_IPS="192.168.5.104 192.168.5.105 192.168.5.106"
root@k8s-etcd1:~# for ip in ${NODE_IPS}; do ETCDCTL_API=3 /usr/bin/etcdctl --endpoints=https://${ip}:2379 --cacert=/etc/kubernetes/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem endpoint health;done
https://192.168.5.104:2379 is healthy: successfully committed proposal: took = 10.453066ms
https://192.168.5.105:2379 is healthy: successfully committed proposal: took = 11.483075ms
https://192.168.5.106:2379 is healthy: successfully committed proposal: took = 11.542092ms

#: docker is already installed, so step 03 is skipped

#: Deploy step 04
root@k8s-master1:/etc/ansible# ansible-playbook 04.kube-master.yml

#: From any other host, check that port 6443 on the VIP is reachable
root@k8s-harbor1:~# telnet 192.168.5.248 6443

#: kubectl get node now works on master1; check that the status is Ready
root@k8s-master1:/etc/ansible# kubectl get node
NAME            STATUS                     ROLES    AGE    VERSION
192.168.5.101   Ready,SchedulingDisabled   master   2m9s   v1.13.5

#: Deploy step 05 (join the node hosts to the master)
root@k8s-master1:/etc/ansible# ansible-playbook 05.kube-node.yml
TASK [kube-node : 开启kubelet 服务] *****************************************************************************************************
fatal: [192.168.5.108]: FAILED! => {"changed": true, "cmd": "systemctl daemon-reload && systemctl restart kubelet", "delta": "0:00:00.249926", "end": "2019-10-06 15:40:48.272879", "msg": "non-zero return code", "rc": 5, "start": "2019-10-06 15:40:48.022953", "stderr": "Failed to restart kubelet.service: Unit docker.service not found.", "stderr_lines": ["Failed to restart kubelet.service: Unit docker.service not found."], "stdout": "", "stdout_lines": []}
#: The task (its name means "start the kubelet service") fails because docker is not installed on the node. Install docker on both node1 and node2 now
root@k8s-node1:~# bash docker_install.sh

#: Run the playbook again
root@k8s-master1:/etc/ansible# ansible-playbook 05.kube-node.yml

#: Check
root@k8s-master1:/etc/ansible# kubectl get node
NAME            STATUS                     ROLES    AGE   VERSION
192.168.5.101   Ready,SchedulingDisabled   master   18m   v1.13.5
192.168.5.108   Ready                      node     17s   v1.13.5

#: Prepare step 06 (network plugin)
#: The images have to be prepared first. Which ones are needed depends on the calico version being installed (ours is 3.4; see the version defined in task/default/main.yml)
root@k8s-master1:/etc/ansible# vim roles/calico/templates/calico-v3.4.yaml.j2
#: Search for "image" in this file to find the images that must be downloaded
#: Then look up the latest calico 3.4 release on GitHub and download it

#: kubelet also needs an image
root@k8s-master1:/etc/ansible# vim roles/calico/templates/calico-v3.4.yaml.j2
--pod-infra-container-image={{ SANDBOX_IMAGE }} \
#: The image is given through a variable, so search for where it is defined
root@k8s-master1:/etc/ansible# grep -R "pod-infra-container-image" ./
root@k8s-master1:/etc/ansible# grep -R "mirrorgooglecontainers" ./
./roles/kube-node/defaults/main.yml:SANDBOX_IMAGE: "mirrorgooglecontainers/pause-amd64:3.1"
#: That shows where it is defined; open the file
root@k8s-master1:/etc/ansible# vim ./roles/kube-node/defaults/main.yml
SANDBOX_IMAGE: "mirrorgooglecontainers/pause-amd64:3.1"

#: On any host, pull this image, retag it, and push it to Harbor
root@k8s-node1:~# docker pull mirrorgooglecontainers/pause-amd64:3.1
root@k8s-node1:~# docker tag mirrorgooglecontainers/pause-amd64:3.1 harbor.magedu.net/linux37/pause-amd64:3.1
root@k8s-node1:~# docker push harbor.magedu.net/linux37/pause-amd64:3.1

#: On the master, change the image address
root@k8s-master1:/etc/ansible# vim ./roles/kube-node/defaults/main.yml
SANDBOX_IMAGE: "harbor.magedu.net/linux37/pause-amd64:3.1"

#: Then run step 05 again
root@k8s-master1:/etc/ansible# ansible-playbook 05.kube-node.yml

#: Check on the node
root@k8s-node1:~# ps aux |grep kubelet
--pod-infra-container-image=harbor.magedu.net/linux37/pause-amd64:3.1

#: Change it on the master as well
root@k8s-master1:/etc/ansible# vim /etc/systemd/system/kubelet.service
--pod-infra-container-image=harbor.magedu.net/linux37/pause-amd64:3.1 \
--max-pods=110 \    #: raise this in production; it is the maximum number of pods the kubelet on this host will run

#: Then restart
root@k8s-master1:/etc/ansible# systemctl daemon-reload
root@k8s-master1:/etc/ansible# systemctl restart kubelet

#: Check
root@k8s-master1:/etc/ansible# kubectl get nodes

#: Continue with the network images
#: Upload the downloaded calico package to the server and extract it; the archive contains three images
root@k8s-master1:/opt# tar xf release-v3.4.4_\(1\).tgz
root@k8s-master1:/opt# cd release-v3.4.4/
root@k8s-master1:/opt/release-v3.4.4# cd images/

#: Load the cni image first, retag it, and push it to Harbor
root@k8s-master1:/opt/release-v3.4.4/images# docker load -i calico-cni.tar
root@k8s-master1:/opt/release-v3.4.4/images# docker tag f5e5bae3eb87 harbor.magedu.net/linux37/calico-cni:v3.4.4
root@k8s-master1:/opt/release-v3.4.4/images# docker push harbor.magedu.net/linux37/calico-cni:v3.4.4
#: Then change the image address
root@k8s-master1:/etc/ansible# vim roles/calico/templates/calico-v3.4.yaml.j2
- name: install-cni
  image: harbor.magedu.net/linux37/calico-cni:v3.4.4

#: Load the node image, retag it, and push it to Harbor
root@k8s-master1:/opt/release-v3.4.4/images# docker load -i calico-node.tar
root@k8s-master1:/opt/release-v3.4.4/images# docker tag a8dbf15bbd6f harbor.magedu.net/linux37/calico-node:v3.4.4
root@k8s-master1:/opt/release-v3.4.4/images# docker push harbor.magedu.net/linux37/calico-node:v3.4.4
#: Then change the image address
root@k8s-master1:/etc/ansible# vim roles/calico/templates/calico-v3.4.yaml.j2
- name: calico-node
  image: harbor.magedu.net/linux37/calico-node:v3.4.4

#: Load the kube-controllers image, retag it, and push it to Harbor
root@k8s-master1:/opt/release-v3.4.4/images# docker load -i calico-kube-controllers.tar
root@k8s-master1:/opt/release-v3.4.4/images# docker tag 0030ff291350 harbor.magedu.net/linux37/calico-kube-controllers:v3.4.4
root@k8s-master1:/opt/release-v3.4.4/images# docker push harbor.magedu.net/linux37/calico-kube-controllers:v3.4.4
#: Then change the image address
root@k8s-master1:/etc/ansible# vim roles/calico/templates/calico-v3.4.yaml.j2
containers:
- name: calico-kube-controllers
  image: harbor.magedu.net/linux37/calico-kube-controllers:v3.4.4

#: Deploy step 06
root@k8s-master1:/etc/ansible# ansible-playbook 06.network.yml

#: Check
root@k8s-master1:/etc/ansible# calicoctl node status
Calico process is running.

IPv4 BGP status
+---------------+-------------------+-------+----------+-------------+
| PEER ADDRESS  | PEER TYPE         | STATE | SINCE    | INFO        |
+---------------+-------------------+-------+----------+-------------+
| 192.168.5.108 | node-to-node mesh | up    | 08:57:09 | Established |
+---------------+-------------------+-------+----------+-------------+
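The pull, retag, and push sequence is repeated for each image, so it can be generated rather than typed. This sketch lists the `image:` references in a manifest and prints the docker commands that mirror one image into Harbor. `harbor_ref`, `list_images`, and `mirror_commands` are hypothetical helpers, and the commands are only printed, not executed.

```shell
#!/bin/bash
# Hypothetical helpers for mirroring images into a private Harbor project.

# Map an image reference to <registry>/<project>/<name>:<tag>,
# keeping only the last path component of the source reference.
harbor_ref() {
    local image="$1" registry="$2" project="$3"
    printf '%s/%s/%s\n' "$registry" "$project" "${image##*/}"
}

# Read a manifest on stdin and print the unique values of its "image:" lines.
list_images() {
    sed -n 's/^[[:space:]]*\(- \)\{0,1\}image:[[:space:]]*//p' | sort -u
}

# Print (not run) the docker commands that mirror one image.
# Usage: mirror_commands <source-image> <registry> <project>
mirror_commands() {
    local src="$1" dst
    dst=$(harbor_ref "$src" "$2" "$3")
    printf 'docker pull %s\n' "$src"
    printf 'docker tag %s %s\n' "$src" "$dst"
    printf 'docker push %s\n' "$dst"
}
```

For instance, `mirror_commands mirrorgooglecontainers/pause-amd64:3.1 harbor.magedu.net linux37` prints the three commands used for the pause image in this section. Piping the output to `sh` would run them.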

#: Add a node and a master

#: First list the node to add in the configuration file
root@k8s-master1:/etc/ansible# vim hosts
[new-node] # reserved group, used when adding node hosts later
192.168.5.109

#: Run the add-node playbook
root@k8s-master1:/etc/ansible# ansible-playbook 20.addnode.yml

#: Check
root@k8s-master1:/etc/ansible# kubectl get node
NAME            STATUS                     ROLES    AGE   VERSION
192.168.5.101   Ready,SchedulingDisabled   master   93m   v1.13.5
192.168.5.108   Ready                      node     75m   v1.13.5
192.168.5.109   Ready                      node     62s   v1.13.5

#: The docker version that the playbook installs does not match ours, so replace its binaries with the local ones
root@k8s-master1:/etc/ansible# docker version
Client:
 Version:           18.09.9
 API version:       1.39
 Go version:        go1.11.13
 Git commit:        039a7df9ba
 Built:             Wed Sep 4 16:57:28 2019
 OS/Arch:           linux/amd64
 Experimental:      false

Server: Docker Engine - Community
 Engine:
  Version:          18.09.9
  API version:      1.39 (minimum version 1.12)
  Go version:       go1.11.13
  Git commit:       039a7df
  Built:            Wed Sep 4 16:19:38 2019
  OS/Arch:          linux/amd64
  Experimental:     false
root@k8s-master1:/etc/ansible# cp /usr/bin/docker* /etc/ansible/bin/
root@k8s-master1:/etc/ansible# cp /usr/bin/containerd* /etc/ansible/bin/

#: Adding the node again fails because it has already been added, so remove its existing entry from the configuration file and run the playbook again
root@k8s-master1:/etc/ansible# vim hosts
[new-node] # reserved group, used when adding node hosts later
192.168.5.109

#: Run it again
root@k8s-master1:/etc/ansible# ansible-playbook 20.addnode.yml

#: Check
root@k8s-master1:/etc/ansible# kubectl get nodes

#: Check on the new node
root@k8s-node2:~# calicoctl node status
Calico process is running.

IPv4 BGP status
+---------------+-------------------+-------+----------+-------------+
| PEER ADDRESS  | PEER TYPE         | STATE | SINCE    | INFO        |
+---------------+-------------------+-------+----------+-------------+
| 192.168.5.101 | node-to-node mesh | up    | 09:13:07 | Established |
| 192.168.5.108 | node-to-node mesh | up    | 09:13:07 | Established |
+---------------+-------------------+-------+----------+-------------+
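After each add-node run the `kubectl get node` table is read by eye. A small filter can flag hosts that are not Ready instead. This is a sketch only: `not_ready_nodes` is a hypothetical helper that assumes the default column layout shown in this section, with STATUS as the second column.

```shell
#!/bin/bash
# Hypothetical helper: read `kubectl get node` output on stdin and print the
# names of nodes whose STATUS is not "Ready" (optionally followed by ",...").
not_ready_nodes() {
    # NR > 1 skips the header row; $1 is NAME and $2 is STATUS.
    awk 'NR > 1 && $2 !~ /^Ready(,|$)/ { print $1 }'
}
```

Typical use is `kubectl get node | not_ready_nodes`. An empty result means every listed node reports Ready, including masters shown as `Ready,SchedulingDisabled`.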

#: Add a master

#: List the master to add in the configuration file
root@k8s-master1:/etc/ansible# vim hosts
[new-master] # reserved group, used when adding master nodes later
192.168.5.102

#: Comment out the lb play
root@k8s-master1:/etc/ansible# vim 21.addmaster.yml
# reconfigure and restart the haproxy service
#- hosts: lb
#  roles:
#  - lb

#: Add the master
root@k8s-master1:/etc/ansible# ansible-playbook 21.addmaster.yml

#: Check
root@k8s-master1:/etc/ansible# kubectl get node
NAME            STATUS                     ROLES    AGE     VERSION
192.168.5.101   Ready,SchedulingDisabled   master   113m    v1.13.5
192.168.5.102   Ready,SchedulingDisabled   master   5m58s   v1.13.5
192.168.5.108   Ready                      node     95m     v1.13.5
192.168.5.109   Ready                      node     20m     v1.13.5

#: Check on a node (the INFO column must read Established for every peer)
root@k8s-node1:~# calicoctl node status
Calico process is running.

IPv4 BGP status
+---------------+-------------------+-------+----------+-------------+
| PEER ADDRESS  | PEER TYPE         | STATE | SINCE    | INFO        |
+---------------+-------------------+-------+----------+-------------+
| 192.168.5.101 | node-to-node mesh | up    | 08:57:10 | Established |
| 192.168.5.109 | node-to-node mesh | up    | 09:13:08 | Established |
| 192.168.5.102 | node-to-node mesh | up    | 09:22:28 | Established |
+---------------+-------------------+-------+----------+-------------+

#: Run a few containers as a test
root@k8s-master1:/etc/ansible# kubectl run net-test --image=alpine --replicas=4 sleep 36000
root@k8s-master1:/etc/ansible# kubectl get pod
NAME                        READY   STATUS    RESTARTS   AGE
net-test-7d5ddd7497-9zmfs   1/1     Running   0          62s
net-test-7d5ddd7497-l2b28   1/1     Running   0          62s
net-test-7d5ddd7497-strk6   1/1     Running   0          62s
net-test-7d5ddd7497-vwsh7   1/1     Running   0          62s

#: Show the pod addresses
root@k8s-master1:/etc/ansible# kubectl get pod -o wide
NAME                        READY   STATUS    RESTARTS   AGE    IP              NODE            NOMINATED NODE   READINESS GATES
net-test-7d5ddd7497-9zmfs   1/1     Running   0          112s   172.31.58.65    192.168.5.108   <none>           <none>
net-test-7d5ddd7497-l2b28   1/1     Running   0          112s   172.31.58.66    192.168.5.108   <none>           <none>
net-test-7d5ddd7497-strk6   1/1     Running   0          112s   172.31.13.129   192.168.5.109   <none>           <none>
net-test-7d5ddd7497-vwsh7   1/1     Running   0          112s   172.31.13.130   192.168.5.109   <none>           <none>

#: Enter a container and test connectivity to a pod on the other node and to an external address
root@k8s-master1:/etc/ansible# kubectl exec -it net-test-7d5ddd7497-9zmfs sh
/ # ping 172.31.13.129
PING 172.31.13.129 (172.31.13.129): 56 data bytes
64 bytes from 172.31.13.129: seq=0 ttl=62 time=2.312 ms
^C
--- 172.31.13.129 ping statistics ---
1 packets transmitted, 1 packets received, 0% packet loss
round-trip min/avg/max = 2.312/2.312/2.312 ms
/ # ping 223.6.6.6
PING 223.6.6.6 (223.6.6.6): 56 data bytes
64 bytes from 223.6.6.6: seq=0 ttl=127 time=41.006 ms
^C
--- 223.6.6.6 ping statistics ---
1 packets transmitted, 1 packets received, 0% packet loss
round-trip min/avg/max = 41.006/41.006/41.006 ms
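The requirement that every BGP peer reads Established can also be checked mechanically. The sketch below parses the table printed by `calicoctl node status` and lists peers in any other state. `unestablished_peers` is a hypothetical helper and relies on the pipe-delimited layout shown in this section.

```shell
#!/bin/bash
# Hypothetical helper: read `calicoctl node status` output on stdin and print
# the address of every peer whose INFO column is not "Established".
unestablished_peers() {
    awk -F'|' '
        # Data rows start with "|" and carry an IPv4 address in the first column.
        /^\|/ && $2 ~ /[0-9]+\.[0-9]+\.[0-9]+\.[0-9]+/ {
            gsub(/ /, "", $2)          # strip padding around the peer address
            gsub(/^ +| +$/, "", $6)    # strip padding around the INFO column
            if ($6 != "Established") print $2
        }'
}
```

It would be used as `calicoctl node status | unestablished_peers`. No output means all peers are established.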