OS: CentOS 7.6.1801

master: 192.168.60.115

slave1: 192.168.60.102

slave2: 192.168.60.101

Make sure swap is fully disabled on every node (every swap size should read 0).
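The notes do not show how swap was turned off. A minimal sketch, assuming swap is configured through an fstab-style file: `comment_out_swap` is a hypothetical helper name, and it takes the file path as an argument so it can be tried on a copy first. By default the kubelet refuses to start while swap is active, which is why this step matters.

```shell
# Hypothetical helper: comment out every active swap entry in an fstab-style file.
# A backup of the original is kept next to it with a .bak suffix.
comment_out_swap() {
  local fstab="$1"
  sed -i.bak -E '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$fstab"
}

# Typical use on a node, as root (not executed here):
#   swapoff -a                      # turn swap off for the running system
#   comment_out_swap /etc/fstab     # keep it off after a reboot
#   free -m                         # the Swap line should now show 0
```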

On all nodes:

[root@k8s-master ~]# cat <<EOF > /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

[root@k8s-master ~]# sysctl --system

[root@k8s-master ~]# yum install -y yum-utils device-mapper-persistent-data lvm2

[root@k8s-master ~]# yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

[root@k8s-master ~]# yum install docker-ce-18.06.3.ce-3.el7
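The notes do not show the services being started; presumably that happened off-screen. A minimal sketch of that step, to be run on every node once the packages are installed (kubelet comes from the Kubernetes repository configured further down). `enable_node_services` and the `RUN` variable are hypothetical names: with `RUN=echo` the function only prints the commands instead of executing them.

```shell
# Hypothetical helper: enable and start Docker, and enable the kubelet.
# Set RUN=echo for a dry run that only prints the commands.
enable_node_services() {
  ${RUN:-} systemctl enable docker
  ${RUN:-} systemctl start docker
  # The kubelet only needs to be enabled; kubeadm init/join starts it.
  ${RUN:-} systemctl enable kubelet
}

# Dry run:   RUN=echo enable_node_services
# Real run:  enable_node_services        (as root)
```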

Add the Aliyun mirror repository for Kubernetes:

[root@k8s-master ~]# cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

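Install kubelet, kubeadm, and kubectl:

[root@k8s-master ~]# yum install -y kubelet kubeadm kubectl # installs the default version (1.18.4 here); asking for a different version gave the error below. kubeadm later pulls kube-proxy and other images, so keep the three packages on the same version. The default is used for now.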

Pinning an older version fails, because kubelet-1.13.3 requires kubernetes-cni = 0.6.0 exactly while the command asks for kubernetes-cni-0.7.5:

[root@k8s-master ~]# yum install -y kubelet-1.13.3 kubeadm-1.13.3 kubectl-1.13.3 kubernetes-cni-0.7.5

Error: Package: kubelet-1.13.3-0.x86_64 (kubernetes)

Requires: kubernetes-cni = 0.6.0

Available: kubernetes-cni-0.3.0.1-0.07a8a2.x86_64 (kubernetes)

kubernetes-cni = 0.3.0.1-0.07a8a2

Available: kubernetes-cni-0.5.1-0.x86_64 (kubernetes)

kubernetes-cni = 0.5.1-0

Available: kubernetes-cni-0.5.1-1.x86_64 (kubernetes)

kubernetes-cni = 0.5.1-1

Available: kubernetes-cni-0.6.0-0.x86_64 (kubernetes)

kubernetes-cni = 0.6.0-0

Available: kubernetes-cni-0.7.5-0.x86_64 (kubernetes)

kubernetes-cni = 0.7.5-0

Error: Package: kubeadm-1.13.3-0.x86_64 (kubernetes)

Requires: kubernetes-cni >= 0.6.0

Available: kubernetes-cni-0.3.0.1-0.07a8a2.x86_64 (kubernetes)

kubernetes-cni = 0.3.0.1-0.07a8a2

Available: kubernetes-cni-0.5.1-0.x86_64 (kubernetes)

kubernetes-cni = 0.5.1-0

Available: kubernetes-cni-0.5.1-1.x86_64 (kubernetes)

kubernetes-cni = 0.5.1-1

Available: kubernetes-cni-0.6.0-0.x86_64 (kubernetes)

kubernetes-cni = 0.6.0-0

Available: kubernetes-cni-0.7.5-0.x86_64 (kubernetes)

kubernetes-cni = 0.7.5-0

You could try using --skip-broken to work around the problem

You could try running: rpm -Va --nofiles --nodigest

Setting the Kubernetes version to 1.18.3 also works (1.18.4 was not available at the time of writing).
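Since the three packages should stay on the same version, one way to avoid mismatches is to build the package list from a single version string. This is only a sketch: `k8s_packages` is a hypothetical helper, and 1.18.3 is simply the version mentioned in these notes.

```shell
# Hypothetical helper: print the yum package specs for one Kubernetes version,
# so kubelet, kubeadm and kubectl cannot drift apart.
k8s_packages() {
  local version="$1"
  printf 'kubelet-%s kubeadm-%s kubectl-%s\n' "$version" "$version" "$version"
}

# See which versions the repository offers (not executed here):
#   yum list --showduplicates kubeadm
# Install one pinned version on every node:
#   yum install -y $(k8s_packages 1.18.3)
```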

On the master node:

[root@k8s-master ~]# kubeadm init --image-repository=registry.aliyuncs.com/google_containers --pod-network-cidr=172.17.0.0/16 --kubernetes-version=v1.18.3

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.60.115:6443 --token 67tkfz.u343ok0fmwhaawop \

--discovery-token-ca-cert-hash sha256:534f975a09ddc465d8f319e5d15cc5ef49a797726dd4e1feba4d1635b9a1e0e0

Run the kubeadm join command shown above on each worker node to add it to the cluster.
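Bootstrap tokens expire (after 24 hours by default), so the join command printed by kubeadm init stops working for nodes added later. A minimal sketch for printing a fresh one on the master; `fresh_join_command` is a hypothetical wrapper name, while `kubeadm token create --print-join-command` is the underlying kubeadm subcommand.

```shell
# Hypothetical wrapper: print a new "kubeadm join ..." line on the master.
# Fails with a short message when kubeadm is not installed on this machine.
fresh_join_command() {
  if ! command -v kubeadm >/dev/null 2>&1; then
    echo "kubeadm not found in PATH" >&2
    return 1
  fi
  kubeadm token create --print-join-command
}

# On the master, as root:
#   fresh_join_command
# Then paste the printed line into a root shell on the new worker node.
```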

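After the worker nodes have joined:

On the master node:

The error below appears because the kubeconfig file has not been created yet: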

[root@k8s-master ~]# kubectl get nodes

The connection to the server localhost:8080 was refused - did you specify the right host or port?

Create the kubeconfig file:

[root@k8s-master ~]# mkdir -p $HOME/.kube

[root@k8s-master ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@k8s-master ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config
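Because everything here runs as root, the copy step can also be replaced by pointing kubectl straight at the admin kubeconfig, which kubeadm init itself suggests as an alternative for the root user. The setting only lasts for the current shell session.

```shell
# Alternative for the root user: use the admin kubeconfig in place.
# Add this line to ~/.bash_profile to make it persistent (optional).
export KUBECONFIG=/etc/kubernetes/admin.conf
```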

All nodes show NotReady because no network plugin has been installed yet.

The kubelet log (journalctl -f -u kubelet) confirms this with the error below:

Jun 19 16:34:07 k8s-master kubelet[9304]: E0619 16:34:07.402431 9304 kubelet.go:2187] Container runtime network not ready: NetworkReady=false reason:NetworkPluginNotReady message:docker: network plugin is not ready: cni config uninitialized

[root@k8s-master ~]# kubectl get nodes

NAME                   STATUS     ROLES    AGE     VERSION

k8s-master             NotReady   master   2m57s   v1.18.4

k8s-slave1.novalocal   NotReady   <none>   109s    v1.18.4

k8s-slave2.novalocal   NotReady   <none>   118s    v1.18.4

Install the network plugin (flannel):

[root@k8s-master ~]# kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

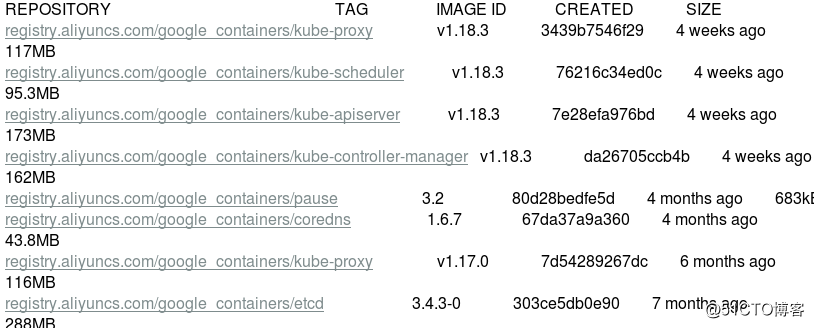
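One thing worth checking: kubeadm init above was given --pod-network-cidr=172.17.0.0/16, while the flannel manifest carries its own pod network in net-conf.json (10.244.0.0/16 in the versions I am aware of). If the two differ, a common approach is to download the manifest and align the value before applying it. A sketch under that assumption; `set_flannel_network` is a hypothetical helper, not part of flannel.

```shell
# Hypothetical helper: rewrite the "Network" value in a downloaded
# kube-flannel.yml so it matches the CIDR passed to kubeadm init.
# A backup of the original file is kept with a .bak suffix.
set_flannel_network() {
  local manifest="$1" cidr="$2"
  sed -i.bak -E "s#\"Network\":[[:space:]]*\"[^\"]+\"#\"Network\": \"${cidr}\"#" "$manifest"
}

# Typical use on the master (not executed here):
#   curl -fsSLO https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml
#   set_flannel_network kube-flannel.yml 172.17.0.0/16
#   kubectl apply -f kube-flannel.yml
```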

Check the images:

[root@k8s-master ~]# docker images

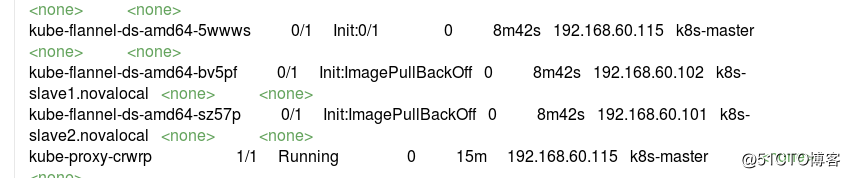

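Check all the Kubernetes system components. If an image failed to pull (usually a network problem), delete the failed image and pull it again.

You can also pull the image manually with docker pull. As long as the flannel version is the same on every node, the pods move to Running on their own.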

[root@k8s-master ~]# kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

[root@k8s-master ~]# kubectl get pods -o wide -n kube-system

[root@k8s-master ~]# kubectl get nodes

NAME                   STATUS   ROLES    AGE   VERSION

k8s-master             Ready    master   83m   v1.18.4

k8s-slave1.novalocal   Ready    <none>   82m   v1.18.4

k8s-slave2.novalocal   Ready    <none>   82m   v1.18.4
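To check the result from a script rather than by eye, the STATUS column of the output above can be filtered. A small sketch; `not_ready_nodes` is a hypothetical helper that reads `kubectl get nodes` output on standard input.

```shell
# Hypothetical helper: print the names of nodes whose STATUS is not exactly "Ready".
# Note: a cordoned node ("Ready,SchedulingDisabled") is also listed.
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Typical use on the master (not executed here):
#   kubectl get nodes | not_ready_nodes
# Empty output means every node reports Ready.
```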