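Preface

1. Short videos are now part of everyday life, but they can easily infringe on other people's portrait rights, so many videos mosaic the faces of uninvolved people in post-production. This post implements automatic face mosaicking on top of yolov5-based face detection.

2. Development environment: Windows 10, RTX 3080 GPU, CUDA 10.2, cuDNN 7.1, OpenCV 4.5, NCNN, and Visual Studio 2019 as the IDE.

I. Face Detection

1. The first and most important step is to find out whether the current image contains any faces at all, which is the task of face detection. Many open-source face detection algorithms and models exist, and OpenCV itself ships with face detectors, but to keep up with the video frame rate this post uses the lighter and faster yolov5.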
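Before inference, `detection()` letterboxes each frame: the longer side is scaled to `target_size` (640), both sides are padded up to a multiple of 32, and detections are later mapped back by undoing the padding and the scale. The standalone sketch below reproduces that arithmetic so it can be checked without OpenCV or ncnn; `Letterbox`, `make_letterbox`, `to_original_x` and `to_original_y` are hypothetical names introduced only for illustration.

```cpp
#include <cassert>

// Hypothetical helper mirroring the letterbox math in YoloFace::detection().
struct Letterbox
{
    float scale;    // resize factor applied to the original frame
    int w, h;       // resized size, before padding
    int wpad, hpad; // total padding so that each side becomes a multiple of 32
};

Letterbox make_letterbox(int img_w, int img_h, int target_size = 640)
{
    assert(img_w > 0 && img_h > 0);
    Letterbox lb{};
    if (img_w > img_h)
    {
        // landscape: the width becomes target_size
        lb.scale = (float)target_size / img_w;
        lb.w = target_size;
        lb.h = (int)(img_h * lb.scale);
    }
    else
    {
        // portrait or square: the height becomes target_size
        lb.scale = (float)target_size / img_h;
        lb.h = target_size;
        lb.w = (int)(img_w * lb.scale);
    }
    lb.wpad = (lb.w + 31) / 32 * 32 - lb.w;
    lb.hpad = (lb.h + 31) / 32 * 32 - lb.h;
    return lb;
}

// Map a coordinate in the padded network input back to the original frame:
// remove the left/top padding, then undo the resize.
float to_original_x(const Letterbox& lb, float x) { return (x - lb.wpad / 2) / lb.scale; }
float to_original_y(const Letterbox& lb, float y) { return (y - lb.hpad / 2) / lb.scale; }
```

For a 1920x1080 frame this gives a 640x360 resize padded to 640x384, i.e. 12 gray (114) rows above and 12 below, and a point at y = 192 in the network input maps back to roughly y = 540 in the original frame.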

2. Implementation

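```cpp
// yoloface.h
#ifndef YOLOFACE_H
#define YOLOFACE_H

#include <string>
#include <vector>

#include <opencv2/opencv.hpp>
#include <ncnn/net.h>

// One detected face
struct Object
{
    cv::Rect rect;                // bounding box in original-image coordinates
    int label = 0;                // class index (a single "face" class here)
    float prob = 0.f;             // confidence
    std::vector<cv::Point2f> pts; // 5 facial landmarks
    float live = 0.f;             // not used in this post
};

class YoloFace
{
public:
    YoloFace();

    // Loads <model>.param and <model>.bin; returns 0 on success, -1 on failure
    int loadModel(std::string model, bool use_gpu = true);

    // bgr: 8-bit, 3-channel BGR frame as delivered by cv::VideoCapture / cv::imread
    int detection(const cv::Mat& bgr, std::vector<Object>& objects, float prob_threshold = 0.25f, float nms_threshold = 0.45f);

    // Draws boxes, scores and landmarks on a copy of bgr, written to cv_dst
    int drawFace(cv::Mat& bgr, cv::Mat& cv_dst, std::vector<Object>& objects);

private:
    ncnn::Net face_net;
    int target_size = 640;
    const float norm_vals[3] = { 1 / 255.f, 1 / 255.f, 1 / 255.f };
    int image_w;
    int image_h;
    int in_w;
    int in_h;
};

#endif // YOLOFACE_H
```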

```cpp
// yoloface.cpp
#include "yoloface.h"

#include <algorithm>
#include <cfloat>
#include <cmath>
#include <cstdio>

#include <opencv2/core/core.hpp>
#include <opencv2/imgproc/imgproc.hpp>

static inline float intersection_area(const Object& a, const Object& b)
{
    cv::Rect_<float> inter = a.rect & b.rect;
    return inter.area();
}

// Sort proposals by confidence, highest first (quicksort; the two halves recurse in parallel with OpenMP)
static void qsort_descent_inplace(std::vector<Object>& faceobjects, int left, int right)
{
    int i = left;
    int j = right;
    float p = faceobjects[(left + right) / 2].prob;

    while (i <= j)
    {
        while (faceobjects[i].prob > p)
            i++;
        while (faceobjects[j].prob < p)
            j--;
        if (i <= j)
        {
            std::swap(faceobjects[i], faceobjects[j]);
            i++;
            j--;
        }
    }

#pragma omp parallel sections
    {
#pragma omp section
        {
            if (left < j) qsort_descent_inplace(faceobjects, left, j);
        }
#pragma omp section
        {
            if (i < right) qsort_descent_inplace(faceobjects, i, right);
        }
    }
}

static void qsort_descent_inplace(std::vector<Object>& faceobjects)
{
    if (faceobjects.empty())
        return;
    qsort_descent_inplace(faceobjects, 0, (int)faceobjects.size() - 1);
}

// Greedy NMS over proposals already sorted by confidence; returns indices of the kept boxes
static void nms_sorted_bboxes(const std::vector<Object>& faceobjects, std::vector<int>& picked, float nms_threshold)
{
    picked.clear();
    const int n = (int)faceobjects.size();

    std::vector<float> areas(n);
    for (int i = 0; i < n; i++)
        areas[i] = faceobjects[i].rect.area();

    for (int i = 0; i < n; i++)
    {
        const Object& a = faceobjects[i];
        int keep = 1;
        for (int j = 0; j < (int)picked.size(); j++)
        {
            const Object& b = faceobjects[picked[j]];
            // intersection over union
            float inter_area = intersection_area(a, b);
            float union_area = areas[i] + areas[picked[j]] - inter_area;
            if (inter_area / union_area > nms_threshold)
                keep = 0;
        }
        if (keep)
            picked.push_back(i);
    }
}

static inline float sigmoid(float x)
{
    return 1.f / (1.f + std::exp(-x));
}

// Decode one output head. Each row of feat_blob holds
// [x, y, w, h, objectness, 10 landmark values (5 points), class scores...]
static void generate_proposals(const ncnn::Mat& anchors, int stride, const ncnn::Mat& in_pad, const ncnn::Mat& feat_blob, float prob_threshold, std::vector<Object>& objects)
{
    const int num_grid = feat_blob.h;
    int num_grid_x;
    int num_grid_y;
    if (in_pad.w > in_pad.h)
    {
        num_grid_x = in_pad.w / stride;
        num_grid_y = num_grid / num_grid_x;
    }
    else
    {
        num_grid_y = in_pad.h / stride;
        num_grid_x = num_grid / num_grid_y;
    }

    const int num_class = feat_blob.w - 5 - 10;
    const int num_anchors = anchors.w / 2;

    for (int q = 0; q < num_anchors; q++)
    {
        const float anchor_w = anchors[q * 2];
        const float anchor_h = anchors[q * 2 + 1];
        const ncnn::Mat feat = feat_blob.channel(q);

        for (int i = 0; i < num_grid_y; i++)
        {
            for (int j = 0; j < num_grid_x; j++)
            {
                const float* featptr = feat.row(i * num_grid_x + j);

                // find class index with max class score
                int class_index = 0;
                float class_score = -FLT_MAX;
                for (int k = 0; k < num_class; k++)
                {
                    float score = featptr[5 + 10 + k];
                    if (score > class_score)
                    {
                        class_index = k;
                        class_score = score;
                    }
                }

                // single-class (face) model: only the objectness score is used
                float box_score = featptr[4];
                float confidence = sigmoid(box_score); // * sigmoid(class_score);

                if (confidence >= prob_threshold)
                {
                    // yolov5/models/yolo.py Detect forward
                    // y = x[i].sigmoid()
                    // y[..., 0:2] = (y[..., 0:2] * 2. - 0.5 + self.grid[i].to(x[i].device)) * self.stride[i] # xy
                    // y[..., 2:4] = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i] # wh
                    float dx = sigmoid(featptr[0]);
                    float dy = sigmoid(featptr[1]);
                    float dw = sigmoid(featptr[2]);
                    float dh = sigmoid(featptr[3]);

                    float pb_cx = (dx * 2.f - 0.5f + j) * stride;
                    float pb_cy = (dy * 2.f - 0.5f + i) * stride;
                    float pb_w = std::pow(dw * 2.f, 2.f) * anchor_w;
                    float pb_h = std::pow(dh * 2.f, 2.f) * anchor_h;

                    float x0 = pb_cx - pb_w * 0.5f;
                    float y0 = pb_cy - pb_h * 0.5f;
                    float x1 = pb_cx + pb_w * 0.5f;
                    float y1 = pb_cy + pb_h * 0.5f;

                    Object obj;
                    obj.rect.x = x0;
                    obj.rect.y = y0;
                    obj.rect.width = x1 - x0;
                    obj.rect.height = y1 - y0;
                    obj.label = class_index;
                    obj.prob = confidence;

                    // 5 landmarks: raw offset * anchor size + grid cell origin
                    for (int l = 0; l < 5; l++)
                    {
                        float x = featptr[2 * l + 5] * anchor_w + j * stride;
                        float y = featptr[2 * l + 1 + 5] * anchor_h + i * stride;
                        obj.pts.push_back(cv::Point2f(x, y));
                    }
                    objects.push_back(obj);
                }
            }
        }
    }
}

YoloFace::YoloFace()
{
}

int YoloFace::loadModel(std::string model, bool use_gpu)
{
    bool has_gpu = false;
    face_net.clear();
    face_net.opt = ncnn::Option();

#if NCNN_VULKAN
    ncnn::create_gpu_instance();
    has_gpu = ncnn::get_gpu_count() > 0;
#endif

    // run on the GPU (Vulkan) only if one is available and requested
    face_net.opt.use_vulkan_compute = has_gpu && use_gpu;

    // ncnn returns 0 on success
    if (face_net.load_param((model + ".param").c_str()) != 0)
        return -1;
    if (face_net.load_model((model + ".bin").c_str()) != 0)
        return -1;
    return 0;
}

int YoloFace::detection(const cv::Mat& bgr, std::vector<Object>& objects, float prob_threshold, float nms_threshold)
{
    int img_w = bgr.cols;
    int img_h = bgr.rows;

    // letterbox: scale the longer side to target_size, then pad to a multiple of 32
    int w = img_w;
    int h = img_h;
    float scale = 1.f;
    if (w > h)
    {
        scale = (float)target_size / w;
        w = target_size;
        h = (int)(h * scale);
    }
    else
    {
        scale = (float)target_size / h;
        h = target_size;
        w = (int)(w * scale);
    }

    // OpenCV frames are BGR; convert to RGB while resizing
    ncnn::Mat in = ncnn::Mat::from_pixels_resize(bgr.data, ncnn::Mat::PIXEL_BGR2RGB, img_w, img_h, w, h);

    int wpad = (w + 31) / 32 * 32 - w;
    int hpad = (h + 31) / 32 * 32 - h;
    ncnn::Mat in_pad;
    ncnn::copy_make_border(in, in_pad, hpad / 2, hpad - hpad / 2, wpad / 2, wpad - wpad / 2, ncnn::BORDER_CONSTANT, 114.f);

    // scale pixel values to [0, 1]
    in_pad.substract_mean_normalize(0, norm_vals);

    ncnn::Extractor ex = face_net.create_extractor();
    ex.input("data", in_pad);

    // Output blob names depend on the exported .param file;
    // anchors must match the config the model was trained with (yolov5/models/yolov5s.yaml)
    struct Head
    {
        const char* blob;
        int stride;
        float anchors[6];
    };
    const Head heads[3] = {
        { "981", 8, { 4.f, 5.f, 8.f, 10.f, 13.f, 16.f } },
        { "983", 16, { 23.f, 29.f, 43.f, 55.f, 73.f, 105.f } },
        { "985", 32, { 146.f, 217.f, 231.f, 300.f, 335.f, 433.f } },
    };

    std::vector<Object> proposals;
    for (const Head& head : heads)
    {
        ncnn::Mat out;
        ex.extract(head.blob, out);

        ncnn::Mat anchors(6);
        for (int k = 0; k < 6; k++)
            anchors[k] = head.anchors[k];

        std::vector<Object> head_objects;
        generate_proposals(anchors, head.stride, in_pad, out, prob_threshold, head_objects);
        proposals.insert(proposals.end(), head_objects.begin(), head_objects.end());
    }

    // sort all proposals by score from highest to lowest
    qsort_descent_inplace(proposals);

    // apply nms with nms_threshold
    std::vector<int> picked;
    nms_sorted_bboxes(proposals, picked, nms_threshold);

    int count = (int)picked.size();
    objects.resize(count);
    for (int i = 0; i < count; i++)
    {
        objects[i] = proposals[picked[i]];

        // undo padding and scaling to get coordinates in the original image
        float x0 = (objects[i].rect.x - (wpad / 2)) / scale;
        float y0 = (objects[i].rect.y - (hpad / 2)) / scale;
        float x1 = (objects[i].rect.x + objects[i].rect.width - (wpad / 2)) / scale;
        float y1 = (objects[i].rect.y + objects[i].rect.height - (hpad / 2)) / scale;

        for (size_t j = 0; j < objects[i].pts.size(); j++)
        {
            float ptx = (objects[i].pts[j].x - (wpad / 2)) / scale;
            float pty = (objects[i].pts[j].y - (hpad / 2)) / scale;
            objects[i].pts[j] = cv::Point2f(ptx, pty);
        }

        // clip to the image
        x0 = std::max(std::min(x0, (float)(img_w - 1)), 0.f);
        y0 = std::max(std::min(y0, (float)(img_h - 1)), 0.f);
        x1 = std::max(std::min(x1, (float)(img_w - 1)), 0.f);
        y1 = std::max(std::min(y1, (float)(img_h - 1)), 0.f);

        objects[i].rect.x = x0;
        objects[i].rect.y = y0;
        objects[i].rect.width = x1 - x0;
        objects[i].rect.height = y1 - y0;
    }
    return 0;
}

int YoloFace::drawFace(cv::Mat& bgr, cv::Mat& cv_dst, std::vector<Object>& objects)
{
    cv_dst = bgr.clone();

    // BGR color palette, cycled per face
    static const unsigned char colors[19][3] = {
        { 54, 67, 244 }, { 99, 30, 233 }, { 176, 39, 156 }, { 183, 58, 103 },
        { 181, 81, 63 }, { 243, 150, 33 }, { 244, 169, 3 }, { 212, 188, 0 },
        { 136, 150, 0 }, { 80, 175, 76 }, { 74, 195, 139 }, { 57, 220, 205 },
        { 59, 235, 255 }, { 7, 193, 255 }, { 0, 152, 255 }, { 34, 87, 255 },
        { 72, 85, 121 }, { 158, 158, 158 }, { 139, 125, 96 }
    };

    int color_index = 0;
    for (size_t i = 0; i < objects.size(); i++)
    {
        Object& obj = objects[i];
        const unsigned char* color = colors[color_index % 19];
        color_index++;
        cv::Scalar cc(color[0], color[1], color[2]);

        cv::rectangle(cv_dst, obj.rect, cc, 2);

        // score label above the box, kept inside the image
        char text[256];
        snprintf(text, sizeof(text), "Face = %.1f%%", obj.prob * 100);
        int baseLine = 0;
        cv::Size label_size = cv::getTextSize(text, cv::FONT_HERSHEY_SIMPLEX, 0.5, 1, &baseLine);
        int x = obj.rect.x;
        int y = obj.rect.y - label_size.height - baseLine;
        if (y < 0)
            y = 0;
        if (x + label_size.width > bgr.cols)
            x = bgr.cols - label_size.width;

        cv::rectangle(cv_dst, cv::Rect(cv::Point(x, y), cv::Size(label_size.width, label_size.height + baseLine)), cc, -1);
        cv::Scalar textcc = (color[0] + color[1] + color[2] >= 381) ? cv::Scalar(0, 0, 0) : cv::Scalar(255, 255, 255);
        cv::putText(cv_dst, text, cv::Point(x, y + label_size.height), cv::FONT_HERSHEY_SIMPLEX, 0.5, textcc, 1);

        // landmarks
        for (size_t j = 0; j < obj.pts.size(); j++)
            cv::circle(cv_dst, obj.pts[j], 2, cv::Scalar(0, 255, 0), -1);
    }
    return 0;
}
```
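The two post-processing stages, box decoding in `generate_proposals()` and greedy NMS in `nms_sorted_bboxes()`, are easier to check in isolation. The sketch below re-implements both with plain floats instead of `cv::Rect` and `ncnn::Mat`, so it compiles without OpenCV or ncnn; `Box`, `decode_cell`, `iou` and `nms` are hypothetical names, and `std::sort` stands in for the hand-written quicksort. Unlike the integer `cv::Rect` code above, it keeps sub-pixel coordinates.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <vector>

// Hypothetical plain box type standing in for cv::Rect.
struct Box
{
    float x0, y0, x1, y1; // corners in network-input pixels
    float prob;           // confidence
};

static float sigmoidf(float x) { return 1.f / (1.f + std::exp(-x)); }

// Decode one grid cell (row i, column j) with the YOLOv5 formula quoted in generate_proposals():
//   cx = (2*sigmoid(tx) - 0.5 + j) * stride,  w = (2*sigmoid(tw))^2 * anchor_w
// raw = {tx, ty, tw, th, objectness} as raw network outputs.
Box decode_cell(const float raw[5], int i, int j, int stride, float anchor_w, float anchor_h)
{
    assert(stride > 0);
    float cx = (sigmoidf(raw[0]) * 2.f - 0.5f + j) * stride;
    float cy = (sigmoidf(raw[1]) * 2.f - 0.5f + i) * stride;
    float w = std::pow(sigmoidf(raw[2]) * 2.f, 2.f) * anchor_w;
    float h = std::pow(sigmoidf(raw[3]) * 2.f, 2.f) * anchor_h;
    return { cx - w * 0.5f, cy - h * 0.5f, cx + w * 0.5f, cy + h * 0.5f, sigmoidf(raw[4]) };
}

// Intersection over union of two axis-aligned boxes.
float iou(const Box& a, const Box& b)
{
    float iw = std::max(0.f, std::min(a.x1, b.x1) - std::max(a.x0, b.x0));
    float ih = std::max(0.f, std::min(a.y1, b.y1) - std::max(a.y0, b.y0));
    float inter = iw * ih;
    float uni = (a.x1 - a.x0) * (a.y1 - a.y0) + (b.x1 - b.x0) * (b.y1 - b.y0) - inter;
    return uni > 0.f ? inter / uni : 0.f;
}

// Greedy NMS: visit boxes from highest to lowest confidence and keep a box
// only if its IoU with every box kept so far is at most thresh.
std::vector<Box> nms(std::vector<Box> boxes, float thresh)
{
    std::sort(boxes.begin(), boxes.end(), [](const Box& a, const Box& b) { return a.prob > b.prob; });
    std::vector<Box> kept;
    for (const Box& b : boxes)
    {
        bool keep = true;
        for (const Box& k : kept)
        {
            if (iou(b, k) > thresh)
            {
                keep = false;
                break;
            }
        }
        if (keep)
            kept.push_back(b);
    }
    return kept;
}
```

With all-zero logits every sigmoid is 0.5, so the top-left cell of the stride-8 head decodes to a box centered at (4, 4) with exactly the first anchor's size (4 x 5) and confidence 0.5.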

2.人脸检测效果

二、人脸打码

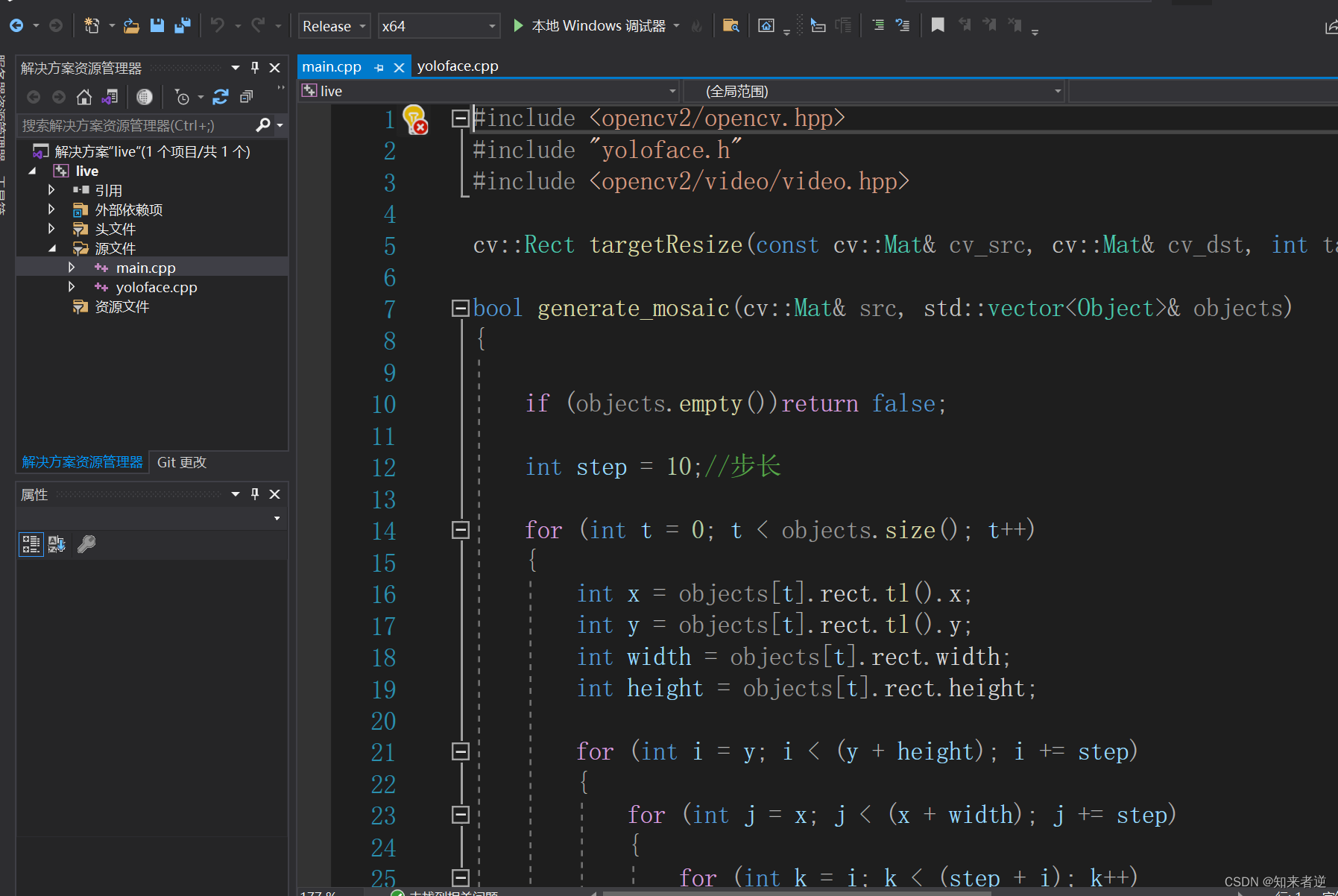

代码:

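```cpp
// main.cpp
#include <algorithm>
#include <vector>

#include <opencv2/opencv.hpp>

#include "yoloface.h"

cv::Rect targetResize(const cv::Mat& cv_src, cv::Mat& cv_dst, int target_w, int target_h);

// Pixelate every detected face in place (src must be 8-bit, 3-channel):
// each step x step cell is filled with the color of its top-left pixel.
bool generate_mosaic(cv::Mat& src, std::vector<Object>& objects)
{
    if (objects.empty()) return false;

    const int step = 10; // mosaic cell size in pixels
    for (size_t t = 0; t < objects.size(); t++)
    {
        // keep the face box inside the image so every pixel access stays in bounds
        cv::Rect roi = objects[t].rect & cv::Rect(0, 0, src.cols, src.rows);
        int x_end = roi.x + roi.width;
        int y_end = roi.y + roi.height;

        for (int i = roi.y; i < y_end; i += step)
        {
            for (int j = roi.x; j < x_end; j += step)
            {
                const cv::Vec3b color = src.at<cv::Vec3b>(i, j);
                // cells on the right/bottom edge may be smaller than step
                for (int k = i; k < std::min(i + step, y_end); k++)
                    for (int m = j; m < std::min(j + step, x_end); m++)
                        src.at<cv::Vec3b>(k, m) = color;
            }
        }
    }
    return true;
}

int main(void)
{
    YoloFace yolo_face;
    // Load the face detection model (models/face/face_lite.param and .bin)
    if (yolo_face.loadModel("models/face/face_lite") != 0)
        return -1;

    cv::VideoCapture cap;
    // Open a camera or an input video file
    //cap.open(0);
    cap.open("222.mp4");
    if (!cap.isOpened())
        return 0;

    cv::Mat cv_src;
    while (true)
    {
        cap >> cv_src;
        if (cv_src.empty())
            break;

        std::vector<Object> objects;

        // Optional border padding (letterbox), meant to improve accuracy; disabled here
        //cv::Mat cv_target;
        //cv::Rect rect = targetResize(cv_src, cv_target, target_w, target_h);

        // Face detection
        yolo_face.detection(cv_src, objects);

        // Mosaic a copy so that cv_dst is never empty when shown
        cv::Mat cv_dst = cv_src.clone();
        generate_mosaic(cv_dst, objects);
        //yolo_face.drawFace(cv_src, cv_dst, objects);

        cv::namedWindow("face", cv::WINDOW_NORMAL);
        cv::imshow("face", cv_dst);
        if (cv::waitKey(20) == 27) // Esc quits
            break;
    }

    cap.release();
    return 0;
}

// Letterbox: fit cv_src into target_w x target_h, pad with gray (114) to exactly that size,
// and return where the resized image sits inside cv_dst.
cv::Rect targetResize(const cv::Mat& cv_src, cv::Mat& cv_dst, int target_w, int target_h)
{
    // the smaller ratio guarantees the resized image fits in both directions
    float s = std::min(float(target_w) / cv_src.cols, float(target_h) / cv_src.rows);
    int w = static_cast<int>(cv_src.cols * s);
    int h = static_cast<int>(cv_src.rows * s);
    int w_p = (target_w - w) / 2;
    int h_p = (target_h - h) / 2;

    cv::Mat cv_size;
    cv::resize(cv_src, cv_size, cv::Size(w, h));
    // the right/bottom borders take the remainder, so odd differences still give the exact target size;
    // Scalar(114, 114, 114) fills all three channels (a bare 114.f would fill only the first)
    cv::copyMakeBorder(cv_size, cv_dst, h_p, target_h - h - h_p, w_p, target_w - w - w_p,
                       cv::BORDER_CONSTANT, cv::Scalar(114, 114, 114));
    return cv::Rect(w_p, h_p, cv_size.cols, cv_size.rows);
}
```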
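`generate_mosaic()` operates on a `cv::Mat`; the same block-pixelation idea on a plain interleaved 8-bit buffer can be sketched without OpenCV. `Image` and `pixelate` are hypothetical names used only for this sketch, which assumes 3 interleaved channels in row-major order, like a `CV_8UC3` frame.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical minimal image: 8-bit, 3 interleaved channels, row-major.
struct Image
{
    int width, height;
    std::vector<std::uint8_t> data; // width * height * 3 bytes

    std::uint8_t* px(int x, int y) { return &data[(static_cast<std::size_t>(y) * width + x) * 3]; }
};

// Pixelate the rectangle (x, y, w, h): every step x step cell takes the color of its
// top-left pixel. The rectangle is clipped to the image first, and edge cells may be smaller.
void pixelate(Image& img, int x, int y, int w, int h, int step)
{
    assert(step > 0);
    int x0 = std::max(x, 0), y0 = std::max(y, 0);
    int x1 = std::min(x + w, img.width), y1 = std::min(y + h, img.height);
    for (int cy = y0; cy < y1; cy += step)
    {
        for (int cx = x0; cx < x1; cx += step)
        {
            const std::uint8_t* src = img.px(cx, cy);
            const std::uint8_t color[3] = { src[0], src[1], src[2] };
            for (int yy = cy; yy < std::min(cy + step, y1); yy++)
                for (int xx = cx; xx < std::min(cx + step, x1); xx++)
                    std::copy(color, color + 3, img.px(xx, yy));
        }
    }
}
```

A larger `step` produces coarser blocks; `generate_mosaic()` above uses 10 pixels regardless of face size.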

Mosaic result:

III. Source Code

1. Source code: https://mp.csdn.net/mp_download/manage/download/UpDetailed

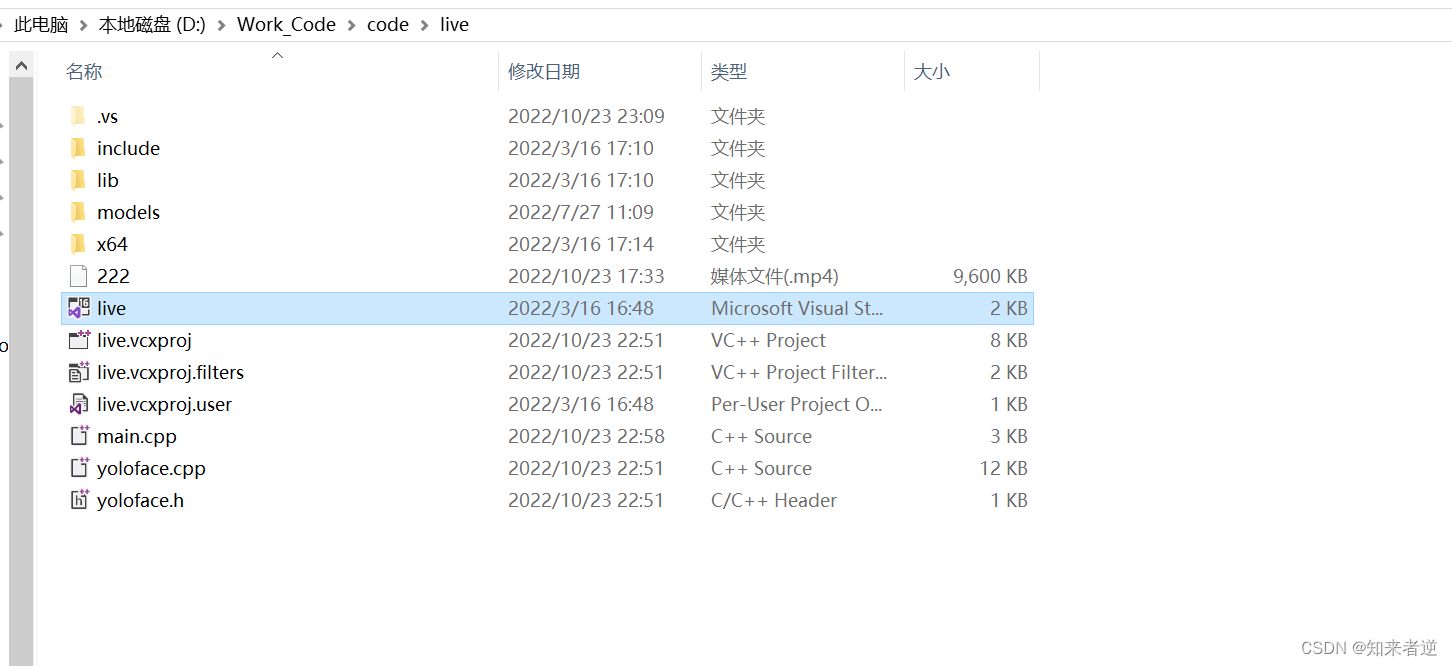

2.源码配置方法

1.文件目录

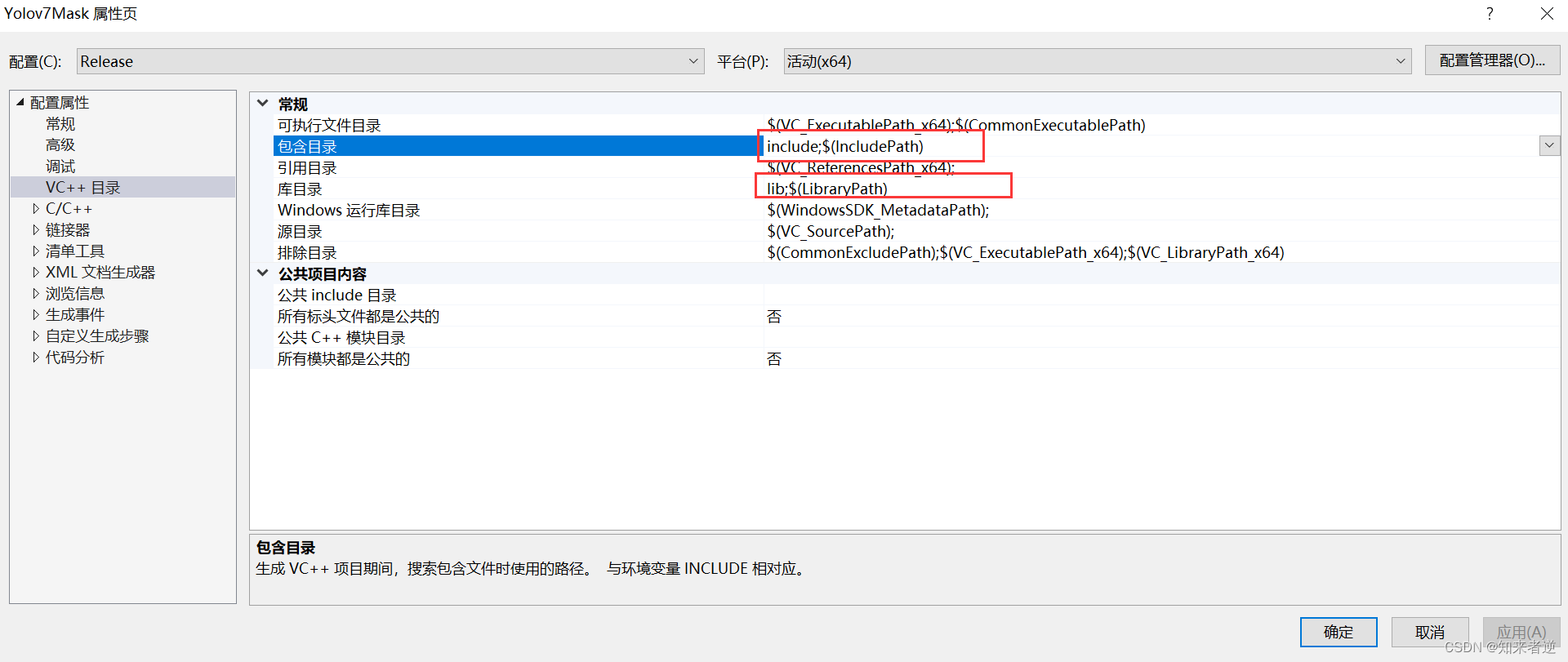

2.配置include和lib路径

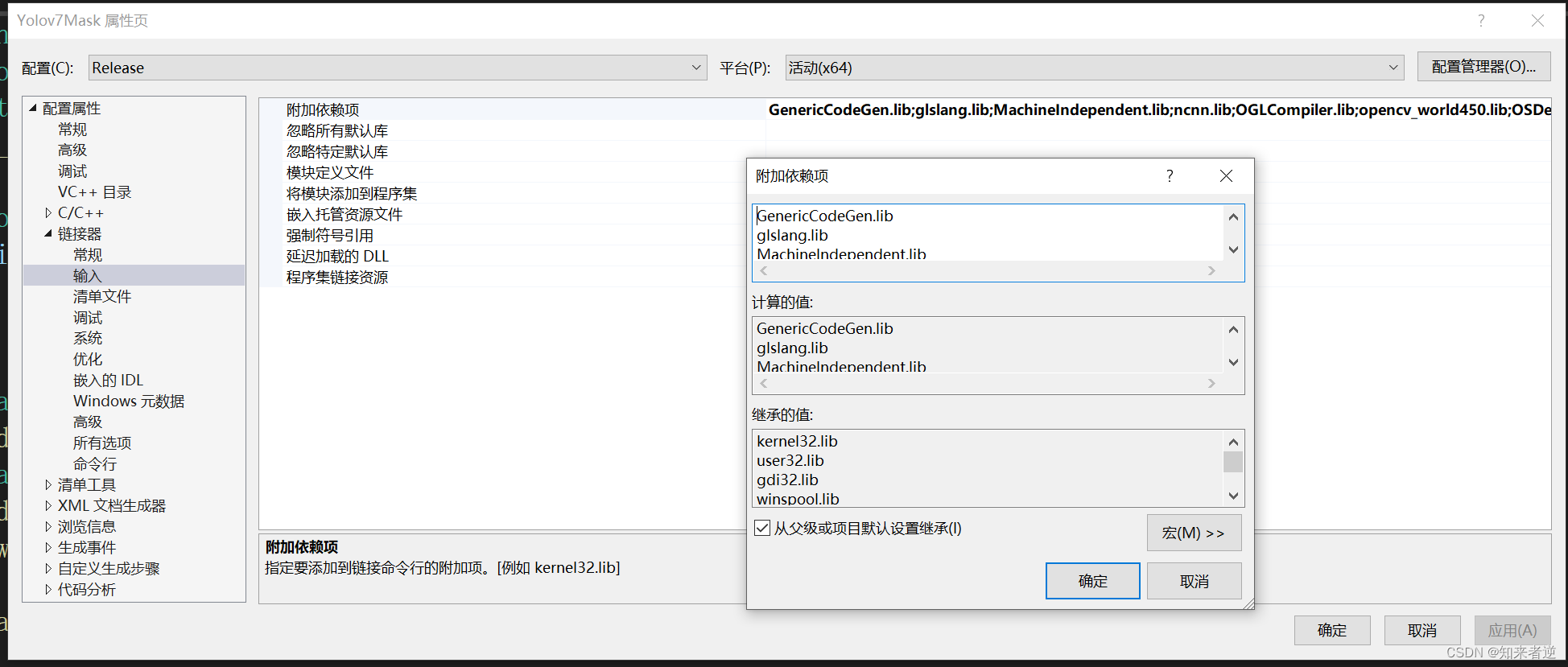

3.添加lib名,就是源码目录里面所有点lib后缀的名称。

4.IDE的配置