PyTorch Lightning is a framework that emerged in response to the various problems we run into when writing training code in plain PyTorch.

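A defining feature of PyTorch Lightning is that it treats the model and the system as separate things. A system defines how a group of models interact with one another, as in a GAN (generator and discriminator networks), Seq2Seq (encoder and decoder networks), or BERT. Sometimes the problem involves only a single model, such as UNet or ResNet; in that case the system can be a generic one that describes how the model is used and can be reused across many other projects.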

Under PyTorch Lightning, each network carries its own definition of how it is trained, how it is tested, which optimizer it uses, and so on.
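To make the model/system distinction concrete, here is a minimal sketch in plain PyTorch, without Lightning. The class name `AutoencoderSystem`, the layer sizes, and the choice of an autoencoder are illustrative assumptions, not part of the tutorial; the method names only mimic the hooks Lightning uses.

```python
import torch
from torch import nn


class AutoencoderSystem(nn.Module):
    """Hypothetical 'system': two models plus the rules for how they interact."""

    def __init__(self, encoder: nn.Module, decoder: nn.Module):
        super().__init__()
        self.encoder = encoder
        self.decoder = decoder

    def training_step(self, batch):
        # The interaction lives here: encode, decode, compare with the input.
        x = batch
        reconstruction = self.decoder(self.encoder(x))
        return nn.functional.mse_loss(reconstruction, x)

    def configure_optimizers(self):
        # One optimizer over the parameters of both models.
        return torch.optim.Adam(self.parameters(), lr=1e-3)


# The models are interchangeable; the system only fixes how they are used.
system = AutoencoderSystem(nn.Linear(8, 2), nn.Linear(2, 8))
loss = system.training_step(torch.randn(4, 8))
```

Swapping in a different encoder or decoder leaves the system code untouched, which is the reuse the paragraph above describes.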

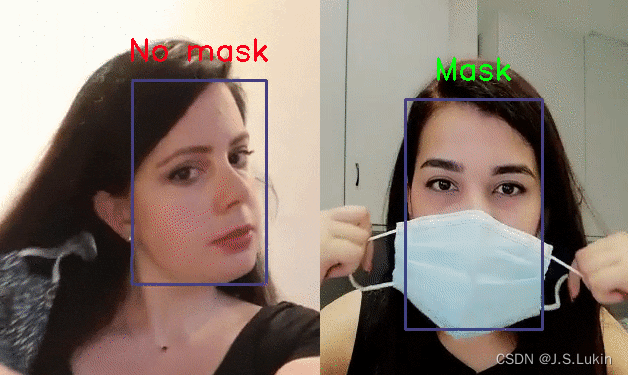

Face Mask Detector, a project from the Lightning community: https://towardsdatascience.com/how-i-built-a-face-mask-detector-for-covid-19-using-pytorch-lightning-67eb3752fd61

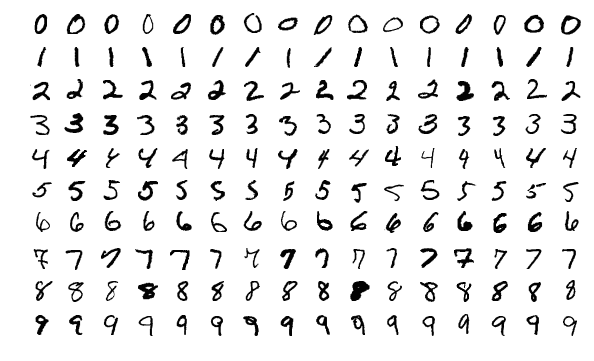

A PyTorch Lightning tutorial that uses handwritten-digit recognition as its running example: https://colab.research.google.com/drive/1Mowb4NzWlRCxzAFjOIJqUmmk_wAT-XP3

1. Installation

pip install pytorch-lightning

2. Network design

    import torch
    from torch import nn
    import pytorch_lightning as pl


    class LightningMNISTClassifier(pl.LightningModule):

        def __init__(self):
            super(LightningMNISTClassifier, self).__init__()

            # mnist images are (1, 28, 28) (channels, height, width)
            self.layer_1 = torch.nn.Linear(28 * 28, 128)
            self.layer_2 = torch.nn.Linear(128, 256)
            self.layer_3 = torch.nn.Linear(256, 10)

        def forward(self, x):
            batch_size, channels, height, width = x.size()

            # (b, 1, 28, 28) -> (b, 1*28*28)
            x = x.view(batch_size, -1)

            # layer 1
            x = self.layer_1(x)
            x = torch.relu(x)

            # layer 2
            x = self.layer_2(x)
            x = torch.relu(x)

            # layer 3
            x = self.layer_3(x)

            # log-probability distribution over labels
            x = torch.log_softmax(x, dim=1)

            return x

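As you can see, the plain PyTorch model and the PyTorch Lightning (PL) model are almost identical. Restoring trained weights also looks much the same in both: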

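    # restore with PyTorch (PATH points to a saved state_dict)
    pytorch_model = MNISTClassifier()
    pytorch_model.load_state_dict(torch.load(PATH))
    pytorch_model.eval()

    # restore with PyTorch Lightning (PATH points to a Lightning checkpoint)
    lightning_model = LightningMNISTClassifier.load_from_checkpoint(PATH)
    lightning_model.eval()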
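The plain-PyTorch half of that restore can be tried end to end without any trained checkpoint. The sketch below is only an illustration: `make_model` is a hypothetical `nn.Sequential` stand-in with the same layer sizes as the classifier above, the file name `weights.pt` is made up, and the weights are random rather than trained.

```python
import os
import tempfile

import torch
from torch import nn


def make_model() -> nn.Module:
    # Same layer sizes as the classifier above, written as a Sequential stand-in.
    return nn.Sequential(
        nn.Flatten(),
        nn.Linear(28 * 28, 128), nn.ReLU(),
        nn.Linear(128, 256), nn.ReLU(),
        nn.Linear(256, 10), nn.LogSoftmax(dim=1),
    )


original = make_model()
x = torch.randn(4, 1, 28, 28)  # dummy batch shaped like MNIST images

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "weights.pt")  # hypothetical file name
    torch.save(original.state_dict(), path)

    # A fresh instance has different random weights until the state is loaded.
    restored = make_model()
    restored.load_state_dict(torch.load(path))
    restored.eval()

with torch.no_grad():
    same = torch.allclose(original(x), restored(x))
```

After loading, both instances produce the same `(4, 10)` output for the same input, which is what "restoring" means here.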

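3. Data

Let's build three splits of the MNIST dataset: training, validation, and test.

In PyTorch, a dataset is wrapped in a DataLoader, which handles loading, shuffling, and batching. The steps are:

- Define the image transforms.
- Create the training, validation, and test datasets.
- Wrap each dataset in a DataLoader.

As follows: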

    import os

    from torch.utils.data import DataLoader, random_split
    from torchvision import transforms
    from torchvision.datasets import MNIST

    # ----------------
    # TRANSFORMS
    # ----------------
    # prepare transforms standard to MNIST
    transform = transforms.Compose([transforms.ToTensor(),
                                    transforms.Normalize((0.1307,), (0.3081,))])

    # ----------------
    # TRAINING, VAL DATA
    # ----------------
    # pass the transform so the loaders yield normalized tensors, not PIL images
    mnist_train = MNIST(os.getcwd(), train=True, download=True, transform=transform)

    # train (55,000 images), val split (5,000 images)
    mnist_train, mnist_val = random_split(mnist_train, [55000, 5000])

    # ----------------
    # TEST DATA
    # ----------------
    mnist_test = MNIST(os.getcwd(), train=False, download=True, transform=transform)

    # ----------------
    # DATALOADERS
    # ----------------
    # The dataloaders handle shuffling, batching, etc...
    mnist_train = DataLoader(mnist_train, batch_size=64)
    mnist_val = DataLoader(mnist_val, batch_size=64)
    mnist_test = DataLoader(mnist_test, batch_size=64)
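The split-and-batch mechanics can be checked without downloading MNIST. The following sketch uses a synthetic `TensorDataset` as a stand-in; the sizes (1,000 samples, a 900/100 split) are arbitrary assumptions chosen for illustration. Note that a `DataLoader` only shuffles when `shuffle=True` is passed.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset, random_split

# Synthetic stand-in for MNIST: 1,000 fake images with integer labels.
images = torch.randn(1000, 1, 28, 28)
labels = torch.randint(0, 10, (1000,))
dataset = TensorDataset(images, labels)

# The split sizes must add up to len(dataset).
train_set, val_set = random_split(dataset, [900, 100])

# shuffle=True reshuffles the training samples at the start of every epoch.
train_loader = DataLoader(train_set, batch_size=64, shuffle=True)
val_loader = DataLoader(val_set, batch_size=64)

# Each iteration yields one batch: a tensor of images and a tensor of labels.
x, y = next(iter(train_loader))
num_train_batches = len(train_loader)  # ceil(900 / 64)
num_val_batches = len(val_loader)      # ceil(100 / 64)
```

The last batch of each loader is smaller than 64 whenever the split size is not a multiple of the batch size, which is also the case for the 5,000-image validation split above.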

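In plain PyTorch, this data loading can happen anywhere in the training program. In PyTorch Lightning, the dataloaders can be used directly, or they can be grouped under a LightningDataModule through three methods:

    train_dataloader()
    val_dataloader()
    test_dataloader()

A fourth method is used for preparing and downloading the data:

    prepare_data()

Lightning takes this approach so that every model implemented with it follows the same structure, which makes the code much easier to read and keeps it well organized.

In other words, when you come across a project that uses Lightning, the code tells you exactly where data processing and downloading happen.

The PyTorch Lightning version: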

    import os

    import pytorch_lightning as pl
    from torch.utils.data import DataLoader, random_split
    from torchvision import transforms
    from torchvision.datasets import MNIST


    class MNISTDataModule(pl.LightningDataModule):

        def setup(self, stage=None):
            # prepare transforms standard to MNIST
            transform = transforms.Compose([transforms.ToTensor(),
                                            transforms.Normalize((0.1307,), (0.3081,))])

            # download (if needed) and load the datasets with the transforms applied
            mnist_train = MNIST(os.getcwd(), train=True, download=True, transform=transform)
            # keep the test set on self so test_dataloader() can reach it
            self.mnist_test = MNIST(os.getcwd(), train=False, download=True, transform=transform)

            # train (55,000 images), val split (5,000 images)
            self.mnist_train, self.mnist_val = random_split(mnist_train, [55000, 5000])

        def train_dataloader(self):
            return DataLoader(self.mnist_train, batch_size=64)

        def val_dataloader(self):
            return DataLoader(self.mnist_val, batch_size=64)

        def test_dataloader(self):
            return DataLoader(self.mnist_test, batch_size=64)

Optimizer

We use the Adam optimizer. The PyTorch code is:

    pytorch_model = MNISTClassifier()
    optimizer = torch.optim.Adam(pytorch_model.parameters(), lr=1e-3)

In Lightning, we pass self.parameters() rather than the parameters of a separate model object, because the LightningModule is itself the model.

    class LightningMNISTClassifier(pl.LightningModule):

        def configure_optimizers(self):
            optimizer = torch.optim.Adam(self.parameters(), lr=1e-3)
            return optimizer
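Why `self.parameters()` is enough: `pl.LightningModule` subclasses `torch.nn.Module`, so every layer assigned as an attribute is registered and its weights are returned by `parameters()`. The sketch below shows this with a plain `nn.Module`; the class name `TinyClassifier` and its layer sizes are hypothetical.

```python
import torch
from torch import nn


class TinyClassifier(nn.Module):  # hypothetical stand-in for a LightningModule
    def __init__(self):
        super().__init__()
        self.layer_1 = nn.Linear(4, 3)
        self.layer_2 = nn.Linear(3, 2)

    def configure_optimizers(self):
        # self.parameters() walks every registered submodule.
        return torch.optim.Adam(self.parameters(), lr=1e-3)


model = TinyClassifier()
names = [name for name, _ in model.named_parameters()]
optimizer = model.configure_optimizers()

# Count how many parameter tensors the optimizer will update.
n_optimized = sum(len(group["params"]) for group in optimizer.param_groups)
```

Both layers contribute a weight and a bias, so the optimizer ends up managing four tensors without the model ever being passed in from outside.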

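Loss

For n-way classification, we take the log of the softmax probabilities and compute the cross-entropy loss. Cross-entropy is the same as the negative log-likelihood applied to log_softmax outputs, which is the form we use here.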

    from torch.nn import functional as F

    def cross_entropy_loss(logits, labels):
        # `logits` are the log_softmax outputs returned by forward()
        return F.nll_loss(logits, labels)

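In PyTorch Lightning, we compute the loss with exactly the same code. The difference is that it can live anywhere in the file, for example as a method of the LightningModule.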

    from torch.nn import functional as F

    class LightningMNISTClassifier(pl.LightningModule):

        def cross_entropy_loss(self, logits, labels):
            return F.nll_loss(logits, labels)
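The claim that cross-entropy equals the negative log-likelihood of log_softmax outputs can be checked numerically. This is a small sketch on random data; the batch size of 5, the seed, and the variable names are arbitrary assumptions.

```python
import torch
from torch.nn import functional as F

torch.manual_seed(0)
raw_scores = torch.randn(5, 10)          # unnormalized outputs for 5 samples
labels = torch.randint(0, 10, (5,))

# Route used in the tutorial: log_softmax in forward(), then nll_loss.
log_probs = torch.log_softmax(raw_scores, dim=1)
nll = F.nll_loss(log_probs, labels)

# F.cross_entropy applies log_softmax and nll_loss in a single call.
ce = F.cross_entropy(raw_scores, labels)

# Manual version: minus the mean log-probability of the correct class.
manual = -log_probs[torch.arange(5), labels].mean()
```

All three values agree up to floating-point error. One practical consequence: if the model already applies log_softmax, use `nll_loss`; passing those outputs to `cross_entropy` would apply the normalization twice.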

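Training loop

We have now defined all the key ingredients of the neural network:

- a model (a 3-layer network)
- a dataset (MNIST)
- an optimizer (Adam)
- a loss function (cross-entropy loss)

Next, we implement a complete training routine, which does the following: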

- Iterate for many epochs over the dataset $D=\{(x_1,y_1),\dots,(x_n,y_n)\}$.
- In each epoch, iterate over the dataset in batches $b \in D$.
- Perform a forward pass: $\hat y = f(x)$.
- Compute the loss over the $C$ classes: $L = -\sum_{i=1}^{C} y_i \log(\hat y_i)$.
- Perform a backward pass to compute the gradient for every weight: $\nabla\omega_i = \frac{\partial L}{\partial \omega_i} \quad \forall \omega_i$.
- Apply these gradients to each weight, stepping against the gradient: $\omega_i = \omega_i - \alpha \nabla\omega_i$.

Schematically:

    num_epochs = 100
    for epoch in range(num_epochs):                 # (1)
        for batch in dataloader:                    # (2)
            x, y = batch
            logits = model(x)                       # (3)
            loss = cross_entropy_loss(logits, y)    # (4)
            loss.backward()                         # (5)
            optimizer.step()                        # (6)
            optimizer.zero_grad()                   # clear gradients before the next batch
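To see what `loss.backward()` and `optimizer.step()` do underneath, here is a minimal sketch that performs the weight update by hand with plain gradient descent instead of Adam. The data, the single weight matrix `w`, and the learning rate `alpha` are all made-up assumptions for illustration.

```python
import torch

torch.manual_seed(0)
x = torch.randn(16, 3)                 # 16 samples, 3 features
y = torch.randint(0, 2, (16,))         # 2 classes
w = torch.zeros(3, 2, requires_grad=True)
alpha = 0.1                            # learning rate

losses = []
for step in range(20):
    log_probs = torch.log_softmax(x @ w, dim=1)        # forward pass
    loss = -log_probs[torch.arange(16), y].mean()      # cross-entropy loss
    loss.backward()                                    # gradient of loss w.r.t. w
    with torch.no_grad():
        w -= alpha * w.grad                            # step against the gradient
    w.grad.zero_()                                     # gradients accumulate otherwise
    losses.append(loss.item())
```

With all-zero weights both classes start at probability 0.5, so the first loss is ln 2, and it shrinks as the updates proceed. An optimizer object such as Adam replaces the two lines inside and after `torch.no_grad()` with `optimizer.step()` and `optimizer.zero_grad()`.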

PyTorch Training loop

    import os

    import torch
    from torch import nn
    from torch.nn import functional as F
    from torch.utils.data import DataLoader, random_split
    from torchvision import transforms
    from torchvision.datasets import MNIST

    # -----------------
    # MODEL
    # -----------------
    # plain PyTorch model: subclasses nn.Module, not pl.LightningModule
    class MNISTClassifier(nn.Module):

        def __init__(self):
            super(MNISTClassifier, self).__init__()

            # mnist images are (1, 28, 28) (channels, height, width)
            self.layer_1 = torch.nn.Linear(28 * 28, 128)
            self.layer_2 = torch.nn.Linear(128, 256)
            self.layer_3 = torch.nn.Linear(256, 10)

        def forward(self, x):
            batch_size, channels, height, width = x.size()

            # (b, 1, 28, 28) -> (b, 1*28*28)
            x = x.view(batch_size, -1)

            # layer 1
            x = self.layer_1(x)
            x = torch.relu(x)

            # layer 2
            x = self.layer_2(x)
            x = torch.relu(x)

            # layer 3
            x = self.layer_3(x)

            # log-probability distribution over labels
            x = torch.log_softmax(x, dim=1)

            return x

    # ----------------
    # DATA
    # ----------------
    transform = transforms.Compose([transforms.ToTensor(), transforms.Normalize((0.1307,), (0.3081,))])
    mnist_train = MNIST(os.getcwd(), train=True, download=True, transform=transform)
    mnist_test = MNIST(os.getcwd(), train=False, download=True, transform=transform)

    # train (55,000 images), val split (5,000 images)
    mnist_train, mnist_val = random_split(mnist_train, [55000, 5000])

    # The dataloaders handle shuffling, batching, etc...
    mnist_train = DataLoader(mnist_train, batch_size=64)
    mnist_val = DataLoader(mnist_val, batch_size=64)
    mnist_test = DataLoader(mnist_test, batch_size=64)

    # ----------------
    # OPTIMIZER
    # ----------------
    pytorch_model = MNISTClassifier()
    optimizer = torch.optim.Adam(pytorch_model.parameters(), lr=1e-3)

    # ----------------
    # LOSS
    # ----------------
    def cross_entropy_loss(logits, labels):
        return F.nll_loss(logits, labels)

    # ----------------
    # TRAINING LOOP
    # ----------------
    num_epochs = 1
    for epoch in range(num_epochs):

        # TRAINING LOOP
        for train_batch in mnist_train:
            x, y = train_batch
            logits = pytorch_model(x)
            loss = cross_entropy_loss(logits, y)
            print('train loss: ', loss.item())

            loss.backward()
            optimizer.step()
            optimizer.zero_grad()

        # VALIDATION LOOP
        with torch.no_grad():
            val_loss = []
            for val_batch in mnist_val:
                x, y = val_batch
                logits = pytorch_model(x)
                val_loss.append(cross_entropy_loss(logits, y).item())

            val_loss = torch.mean(torch.tensor(val_loss))
            print('val_loss: ', val_loss.item())

PyTorch Lightning Training Loop

To do the same in Lightning, we pull the main parts of the training loop and the validation loop out into three methods:

- training_step
- validation_step
- validation_epoch_end (named validation_end in early Lightning releases)

The comments below mark which part of the PyTorch loop each method takes over:

    for epoch in range(num_epochs):

        # TRAINING LOOP
        for train_batch in mnist_train:
            x, y = train_batch                                      # training_step
            logits = pytorch_model(x)                               # training_step
            loss = cross_entropy_loss(logits, y)                    # training_step
            print('train loss: ', loss.item())                      # training_step

            loss.backward()
            optimizer.step()
            optimizer.zero_grad()

        # VALIDATION LOOP
        with torch.no_grad():
            val_loss = []
            for val_batch in mnist_val:
                x, y = val_batch                                    # validation_step
                logits = pytorch_model(x)                           # validation_step
                val_loss.append(cross_entropy_loss(logits, y).item())

            val_loss = torch.mean(torch.tensor(val_loss))           # validation_epoch_end
            print('val_loss: ', val_loss.item())

training_step contains what happens to a single batch inside the training loop.

    class LightningMNISTClassifier(pl.LightningModule):

        def training_step(self, batch, batch_idx):
            x, y = batch
            # we already defined forward and loss in the lightning module. We'll show the full code next
            logits = self.forward(x)
            loss = self.cross_entropy_loss(logits, y)
            self.log('train_loss', loss)
            return loss

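Because Lightning owns the loop, options such as 16-bit precision are switched on through a Trainer argument rather than extra code in the training step:

    trainer = pl.Trainer(precision=16)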

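validation_step performs the body of the validation loop. What we actually want, though, is the average loss over all batches of the validation loop. That is the job of validation_epoch_end, which receives a list (outputs) holding the validation_step output of every batch. When the value is logged with self.log inside validation_step, as in the module code below, Lightning accumulates it over the epoch and reports the mean, so the averaging does not have to be written by hand.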

    outputs = []
    for batch in validation_dataloader:
        loss = some_loss(batch)                  # validation_step
        outputs.append(loss)                     # validation_step

    # a Python list has no .mean(); stack the per-batch losses into one tensor first
    outputs = torch.stack(outputs).mean()        # validation_epoch_end

    class LightningMNISTClassifier(pl.LightningModule):

        def validation_step(self, batch, batch_idx):
            x, y = batch
            logits = self.forward(x)
            loss = self.cross_entropy_loss(logits, y)
            self.log('val_loss', loss)
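One subtlety when averaging per-batch losses by hand: with 5,000 validation images and a batch size of 64, the last batch holds only 8 images (5000 = 78 × 64 + 8), so the plain mean of the batch losses is not exactly the per-sample mean. The numbers below are invented purely to illustrate the difference.

```python
# Hypothetical per-batch results: (mean loss of the batch, batch size).
batches = [(0.50, 64), (0.40, 64), (1.00, 8)]

# Plain mean of the batch means: every batch counts equally.
mean_of_means = sum(loss for loss, _ in batches) / len(batches)

# Sample-weighted mean: every sample counts equally.
total_samples = sum(size for _, size in batches)
weighted_mean = sum(loss * size for loss, size in batches) / total_samples

print(round(mean_of_means, 4), round(weighted_mean, 4))  # prints 0.6333 0.4824
```

The small final batch pulls the unweighted average upward here. On real data the gap is usually tiny, but it is worth knowing which of the two a reported validation loss refers to.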

Finally, here is the complete LightningModule.

    import os

    import torch
    from torch import nn
    import pytorch_lightning as pl
    from torch.nn import functional as F
    from torch.utils.data import DataLoader, random_split
    from torchvision import transforms
    from torchvision.datasets import MNIST


    class LightningMNISTClassifier(pl.LightningModule):

        def __init__(self):
            super(LightningMNISTClassifier, self).__init__()

            # mnist images are (1, 28, 28) (channels, height, width)
            self.layer_1 = torch.nn.Linear(28 * 28, 128)
            self.layer_2 = torch.nn.Linear(128, 256)
            self.layer_3 = torch.nn.Linear(256, 10)

        def forward(self, x):
            batch_size, channels, height, width = x.size()

            # (b, 1, 28, 28) -> (b, 1*28*28)
            x = x.view(batch_size, -1)

            # layer 1 (b, 1*28*28) -> (b, 128)
            x = self.layer_1(x)
            x = torch.relu(x)

            # layer 2 (b, 128) -> (b, 256)
            x = self.layer_2(x)
            x = torch.relu(x)

            # layer 3 (b, 256) -> (b, 10)
            x = self.layer_3(x)

            # log-probability distribution over labels
            x = torch.log_softmax(x, dim=1)

            return x

        def cross_entropy_loss(self, logits, labels):
            return F.nll_loss(logits, labels)

        def training_step(self, batch, batch_idx):
            x, y = batch
            logits = self.forward(x)
            loss = self.cross_entropy_loss(logits, y)
            self.log('train_loss', loss)
            return loss

        def validation_step(self, batch, batch_idx):
            x, y = batch
            logits = self.forward(x)
            loss = self.cross_entropy_loss(logits, y)
            self.log('val_loss', loss)

        def configure_optimizers(self):
            optimizer = torch.optim.Adam(self.parameters(), lr=1e-3)
            return optimizer

    # train
    model = LightningMNISTClassifier()
    # the module defines no dataloaders itself, so pass the MNISTDataModule from the data section
    data_module = MNISTDataModule()
    trainer = pl.Trainer()
    trainer.fit(model, datamodule=data_module)

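A few observations:

- The structure is highly standardized.
- It is the same PyTorch code, only organized.
- The inner loop of the PyTorch training code becomes training_step. We no longer have to do anything about gradients ourselves, because Lightning handles that automatically.

      for train_batch in mnist_train:
          x, y = train_batch                        # training_step
          logits = pytorch_model(x)                 # training_step
          loss = cross_entropy_loss(logits, y)      # training_step
          print('train loss: ', loss.item())        # training_step

          loss.backward()                           # done by Lightning
          optimizer.step()                          # done by Lightning
          optimizer.zero_grad()                     # done by Lightning

- The inner loop of the PyTorch validation code becomes validation_step.

      outputs = []
      for batch in validation_dataloader:
          loss = some_loss(batch)      # validation_step
          outputs.append(loss)         # validation_step

- The validation_epoch_end step lets us compute metrics over the whole validation set. Again, we do not need to toggle gradients, freeze the model, or write any loops. Lightning does all of that for us.

      outputs = torch.stack(outputs).mean()    # validation_epoch_end
      validation_loss = outputs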

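For better portability and a cleaner structure, we can pull the model definition out of the LightningModule, which lets us pass in an arbitrary classifier.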

    import os

    import torch
    from torch import nn
    import pytorch_lightning as pl
    from torch.nn import functional as F
    from torch.utils.data import DataLoader, random_split
    from torchvision import transforms
    from torchvision.datasets import MNIST


    class Backbone(nn.Module):

        def __init__(self):
            super().__init__()

            # mnist images are (1, 28, 28) (channels, height, width)
            self.layer_1 = torch.nn.Linear(28 * 28, 128)
            self.layer_2 = torch.nn.Linear(128, 256)
            self.layer_3 = torch.nn.Linear(256, 10)

        def forward(self, x):
            batch_size, channels, height, width = x.size()

            # (b, 1, 28, 28) -> (b, 1*28*28)
            x = x.view(batch_size, -1)

            # layer 1 (b, 1*28*28) -> (b, 128)
            x = self.layer_1(x)
            x = torch.relu(x)

            # layer 2 (b, 128) -> (b, 256)
            x = self.layer_2(x)
            x = torch.relu(x)

            # layer 3 (b, 256) -> (b, 10), raw scores without log_softmax
            x = self.layer_3(x)

            return x


    class LightningClassifier(pl.LightningModule):

        def __init__(self, backbone):
            super().__init__()
            self.backbone = backbone

        def cross_entropy_loss(self, log_probs, labels):
            return F.nll_loss(log_probs, labels)

        def training_step(self, batch, batch_idx):
            x, y = batch
            logits = self.backbone(x)

            # log-probability distribution over labels (applied to the logits, not the input)
            log_probs = torch.log_softmax(logits, dim=1)

            loss = self.cross_entropy_loss(log_probs, y)
            self.log('train_loss', loss)
            return loss

        def validation_step(self, batch, batch_idx):
            x, y = batch
            logits = self.backbone(x)

            # log-probability distribution over labels
            log_probs = torch.log_softmax(logits, dim=1)

            loss = self.cross_entropy_loss(log_probs, y)
            self.log('val_loss', loss)

        def configure_optimizers(self):
            optimizer = torch.optim.Adam(self.parameters(), lr=1e-3)
            return optimizer

    # train
    model = Backbone()
    classifier = LightningClassifier(model)
    # the classifier defines no dataloaders itself, so pass the MNISTDataModule from the data section
    data_module = MNISTDataModule()
    trainer = pl.Trainer()
    trainer.fit(classifier, datamodule=data_module)
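What "an arbitrary classifier" means in practice: any `nn.Module` that maps a `(b, 1, 28, 28)` batch to `(b, 10)` raw scores can take the place of the backbone. The sketch below uses a hypothetical `ConvBackbone` and a free function `classification_loss` that performs the loss computation the classifier is meant to perform; both names are assumptions made for illustration, and Lightning itself is left out so the snippet runs on its own.

```python
import torch
from torch import nn
from torch.nn import functional as F


class ConvBackbone(nn.Module):  # hypothetical alternative to the MLP Backbone
    def __init__(self):
        super().__init__()
        self.conv = nn.Conv2d(1, 8, kernel_size=3, padding=1)
        self.head = nn.Linear(8 * 28 * 28, 10)

    def forward(self, x):
        x = torch.relu(self.conv(x))       # (b, 1, 28, 28) -> (b, 8, 28, 28)
        return self.head(x.flatten(1))     # (b, 8*28*28) -> (b, 10)


def classification_loss(backbone: nn.Module, x, y):
    # Raw scores -> log-probabilities -> negative log-likelihood.
    log_probs = torch.log_softmax(backbone(x), dim=1)
    return F.nll_loss(log_probs, y)


x = torch.randn(4, 1, 28, 28)              # dummy batch shaped like MNIST
y = torch.randint(0, 10, (4,))
backbone = ConvBackbone()
loss = classification_loss(backbone, x, y)
```

Since the loss code only relies on the input and output shapes, passing `ConvBackbone()` instead of `Backbone()` to `LightningClassifier` should require no other change.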