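This post records a problem in an Istio environment set up on a Mac: after four pods with an istio-proxy sidecar had been created, no further pods could be scheduled. The scheduler reported: FailedScheduling 0/1 nodes are available: 1 Insufficient cpu. preemption: 0/1 nodes are available: 1 No preemption victims found for incoming pod. The post walks through the analysis of the cause and the solution that was eventually found.

I. Problem background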

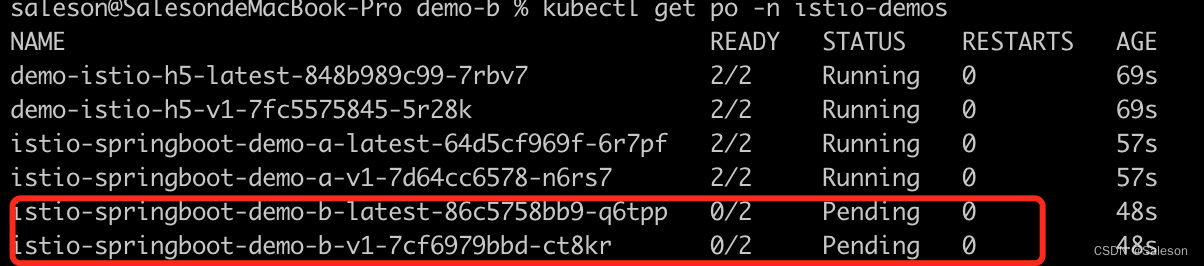

The newly created pod stays in the Pending state.

Use kubectl describe po to find out why:

kubectl describe po istio-springboot-demo-b-v1-7cf6979bbd-ct8kr -n istio-demos

The relevant part of the output:

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning FailedScheduling 3m default-scheduler 0/1 nodes are available: 1 Insufficient cpu. preemption: 0/1 nodes are available: 1 No preemption victims found for incoming pod.

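The Message makes it clear that the pod cannot be scheduled because the node does not have enough unreserved CPU. Why is that?

The following steps inspect the node.

First, list the nodes: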

saleson@SalesondeMacBook-Pro demo-b % kubectl get nodes

NAME STATUS ROLES AGE VERSION

docker-desktop Ready control-plane 21d v1.24.1

Then use the following command to see how much CPU and memory the pods on the node request:

kubectl describe node docker-desktop

Capacity:

cpu: 2

ephemeral-storage: 61255492Ki

hugepages-1Gi: 0

hugepages-2Mi: 0

memory: 8049416Ki

pods: 110

Allocatable:

cpu: 2

ephemeral-storage: 56453061334

hugepages-1Gi: 0

hugepages-2Mi: 0

memory: 7947016Ki

pods: 110

System Info:

Machine ID: 7679dc44-ba20-41de-afe7-6131f6cdfb01

System UUID: 25cc4672-0000-0000-aaf7-f9f722a48b3c

Boot ID: 72f1a678-b1e3-435a-a235-409592f5bf2b

Kernel Version: 5.10.104-linuxkit

OS Image: Docker Desktop

Operating System: linux

Architecture: amd64

Container Runtime Version: docker://20.10.17

Kubelet Version: v1.24.1

Kube-Proxy Version: v1.24.1

Non-terminated Pods: (20 in total)

Namespace Name CPU Requests CPU Limits Memory Requests Memory Limits Age

--------- ---- ------------ ---------- --------------- ------------- ---

istio-demos demo-istio-h5-latest-848b989c99-7rbv7 100m (5%) 2 (100%) 128Mi (1%) 1Gi (13%) 6m34s

istio-demos demo-istio-h5-v1-7fc5575845-5r28k 100m (5%) 2 (100%) 128Mi (1%) 1Gi (13%) 6m34s

istio-demos istio-springboot-demo-a-latest-64d5cf969f-6r7pf 100m (5%) 2 (100%) 128Mi (1%) 1Gi (13%) 6m22s

istio-demos istio-springboot-demo-a-v1-7d64cc6578-n6rs7 100m (5%) 2 (100%) 128Mi (1%) 1Gi (13%) 6m22s

istio-system grafana-6787c6fb46-jklbh 0 (0%) 0 (0%) 0 (0%) 0 (0%) 6d13h

istio-system istio-egressgateway-679565ff87-g8lq7 100m (5%) 2 (100%) 128Mi (1%) 1Gi (13%) 6d14h

istio-system istio-ingressgateway-b67d7948d-wfxgb 100m (5%) 2 (100%) 128Mi (1%) 1Gi (13%) 6d14h

istio-system istiod-645c8d8598-qmslz 500m (25%) 0 (0%) 2Gi (26%) 0 (0%) 6d14h

istio-system jaeger-5b994f64f4-gvcqt 10m (0%) 0 (0%) 0 (0%) 0 (0%) 6d13h

istio-system kiali-565bff499-bfrhr 0 (0%) 0 (0%) 0 (0%) 0 (0%) 6d13h

istio-system prometheus-74cd54db7f-flppp 0 (0%) 0 (0%) 0 (0%) 0 (0%) 6d13h

kube-system coredns-6d4b75cb6d-5rqbc 100m (5%) 0 (0%) 70Mi (0%) 170Mi (2%) 21d

kube-system coredns-6d4b75cb6d-wdh6b 100m (5%) 0 (0%) 70Mi (0%) 170Mi (2%) 6d14h

kube-system etcd-docker-desktop 100m (5%) 0 (0%) 100Mi (1%) 0 (0%) 21d

kube-system kube-apiserver-docker-desktop 250m (12%) 0 (0%) 0 (0%) 0 (0%) 21d

kube-system kube-controller-manager-docker-desktop 200m (10%) 0 (0%) 0 (0%) 0 (0%) 21d

kube-system kube-proxy-tnb9q 0 (0%) 0 (0%) 0 (0%) 0 (0%) 21d

kube-system kube-scheduler-docker-desktop 100m (5%) 0 (0%) 0 (0%) 0 (0%) 21d

kube-system storage-provisioner 0 (0%) 0 (0%) 0 (0%) 0 (0%) 21d

kube-system vpnkit-controller 0 (0%) 0 (0%) 0 (0%) 0 (0%) 21d

Allocated resources:

(Total limits may be over 100 percent, i.e., overcommitted.)

Resource Requests Limits

-------- -------- ------

cpu 1960m (98%) 12 (600%)

memory 3056Mi (39%) 6484Mi (83%)

ephemeral-storage 0 (0%) 0 (0%)

hugepages-1Gi 0 (0%) 0 (0%)

hugepages-2Mi 0 (0%) 0 (0%)

Events: <none>
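The arithmetic behind the scheduler's decision can be checked by hand. The sketch below sums the CPU Requests column of the Non-terminated Pods table and compares the remainder with a 100m request. The values are copied from the output above; the 100m figure for the incoming pod is an assumption based on the requests shown for the other demo pods.

```shell
#!/bin/sh
# CPU requests in millicores, one per row of the "Non-terminated Pods" table.
requests="100 100 100 100 0 100 100 500 10 0 0 100 100 100 250 200 0 100 0 0"
allocatable=2000      # Allocatable cpu: 2 cores = 2000m
incoming_request=100  # assumed request of the pod waiting to be scheduled

requested=0
for r in $requests; do
  requested=$((requested + r))
done
free=$((allocatable - requested))

echo "requested=${requested}m free=${free}m"
if [ "$free" -lt "$incoming_request" ]; then
  echo "a pod requesting ${incoming_request}m does not fit on this node"
fi
```

The scheduler works from requests, not from actual usage, so the node counts as full even if the containers are mostly idle.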

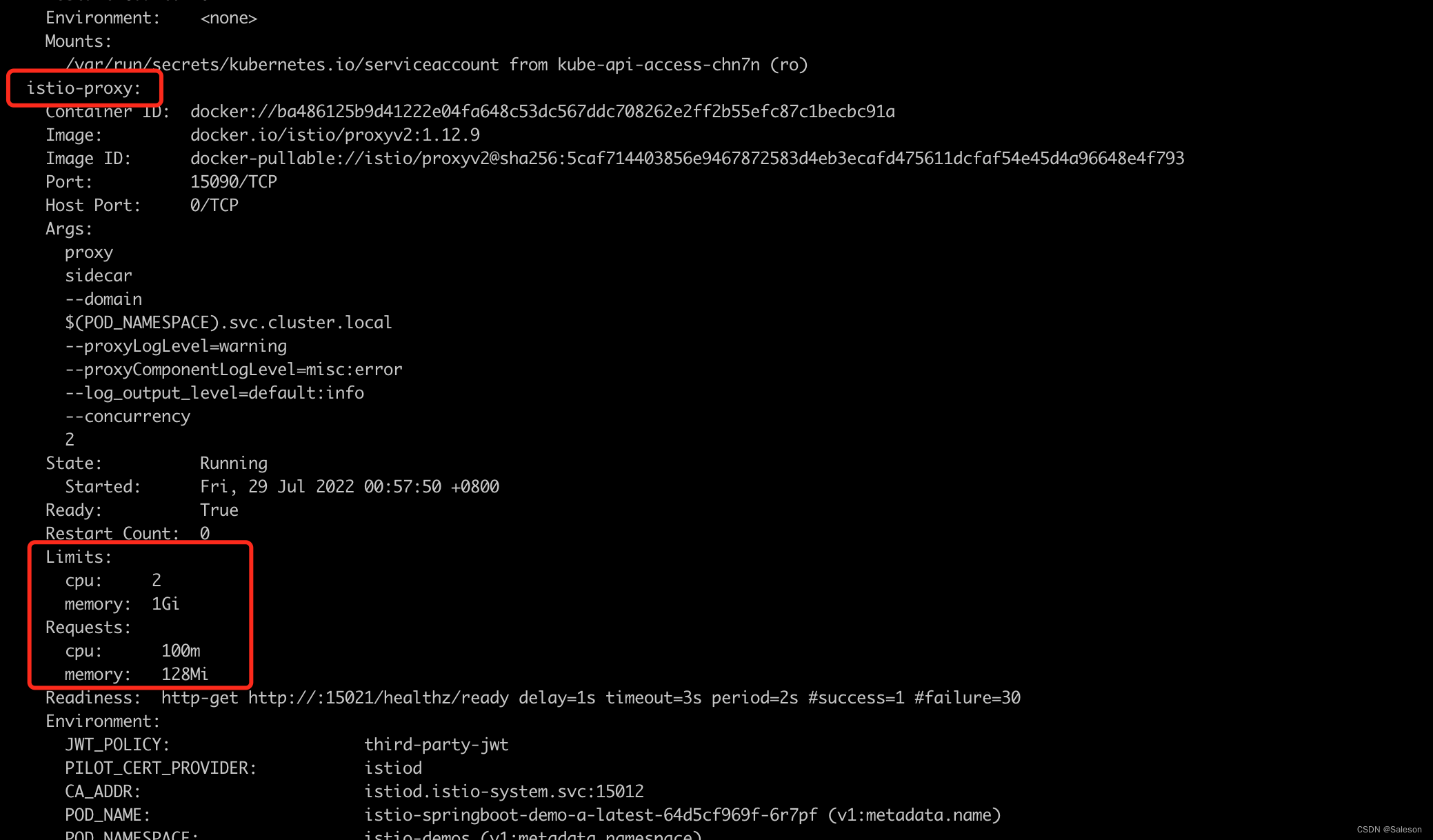

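The demo pods used for Istio testing all have CPU and memory requests and limits set, and CPU requests on the node already total 1960m (98%) of the 2 allocatable cores. Pick one of those pods and inspect it: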

kubectl describe po istio-springboot-demo-a-latest-64d5cf969f-6r7pf -n istio-demos

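This shows which container carries the requests and limits: Istio's proxy container (the sidecar), istio-proxy.

II. Adjusting Istio's resource settings for the sidecar

Locating the configuration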
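Instead of reading through the full describe output, the per-container requests can be printed directly. This is a minimal sketch; the function name show_requests is made up for illustration, and it assumes kubectl is already pointed at the docker-desktop cluster.

```shell
# show_requests POD NAMESPACE
# Prints one line per container: name, CPU request, memory request.
show_requests() {
  kubectl get pod "$1" -n "$2" \
    -o jsonpath='{range .spec.containers[*]}{.name}{"\t"}{.resources.requests.cpu}{"\t"}{.resources.requests.memory}{"\n"}{end}'
}

# Usage:
#   show_requests istio-springboot-demo-a-latest-64d5cf969f-6r7pf istio-demos
```

A container with no requests set prints empty columns, which makes the istio-proxy line easy to spot.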

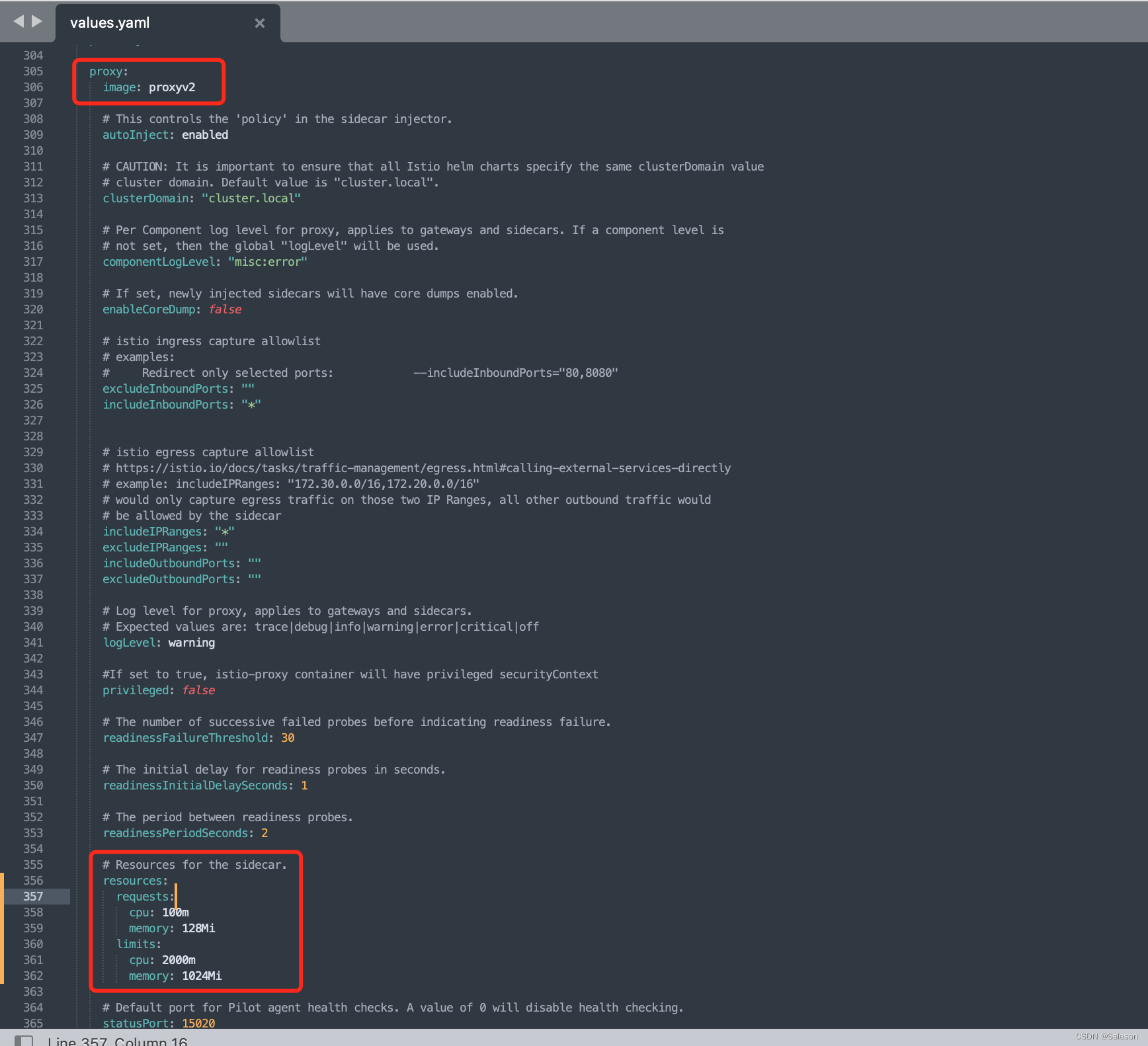

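The resource settings for the proxy were eventually found in the Istio installation package, in the file istio-1.12.9/manifests/charts/istio-control/istio-discovery/values.yaml

The resources-related parameters:

Adjusting at runtime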

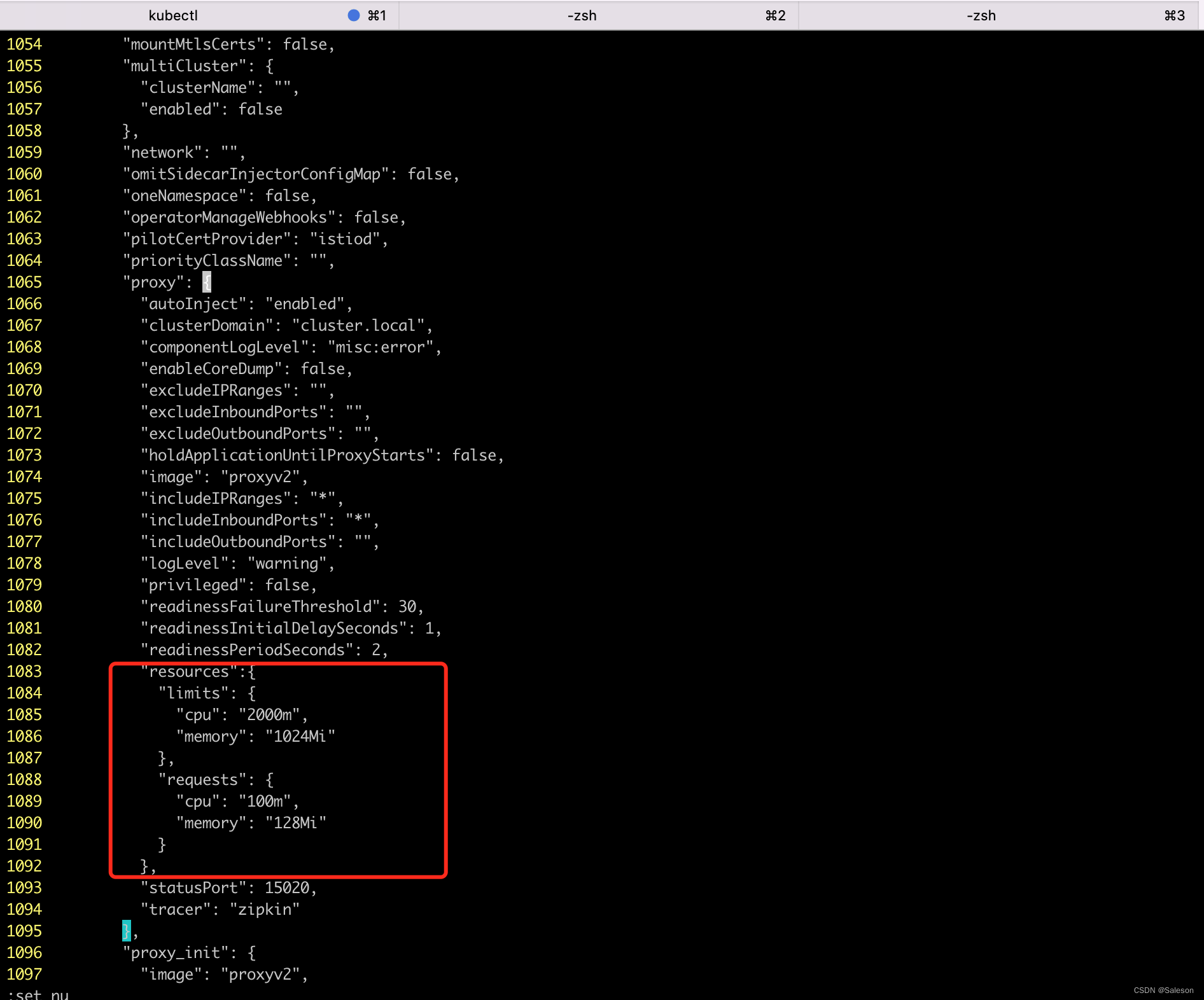

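Finding that file does not help with an Istio environment that is already running, however, because changes to the file are not applied to a running installation. After a long search, a Huawei article turned up: How do I adjust the resources requests of the istio-proxy container? (如何调整istio-proxy容器resources requests取值?)

The steps are recorded here.

Method 1: adjust all services in the mesh

This change does not touch istio-proxy containers that already exist; only istio-proxy containers created afterwards use the new values.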

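- Run the following command to edit the ConfigMap:

kubectl edit cm istio-sidecar-injector -n istio-system

The resource values can be resized, or removed altogether.

In this experiment they were removed.

- Restart the istio-sidecar-injector Pod in the istio-system namespace.

In this experiment the istio-sidecar-injector was not restarted as the article instructs, yet istio-proxy containers created afterwards still used the adjusted values.

That may simply be because this was an experiment; in an environment that is actually in use, it is still advisable to restart as the article describes.

- Restart the business service Pods. With multiple replicas, a rolling upgrade does not interrupt service.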
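Before editing, it can help to look at what the injector currently holds. The sketch below prints the values stored in the ConfigMap; it assumes the ConfigMap keeps them under a data key named values, which should be checked against the installed Istio version. The function name is made up for illustration.

```shell
# show_injector_values
# Prints the values document stored in the sidecar injector ConfigMap.
# Assumption: the ConfigMap has a data key called "values".
show_injector_values() {
  kubectl get cm istio-sidecar-injector -n istio-system \
    -o jsonpath='{.data.values}'
}

# Usage:
#   show_injector_values
```

If the key exists, the proxy's resources block is expected to appear in the printed document, and that is the part to resize or remove in kubectl edit.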

Method 2: adjust a single service in the mesh

- Edit the service's YAML.

Command format: kubectl edit deploy <nginx> -n <namespace>

This experiment runs in the istio-demos namespace. List the deployments in that namespace:

kubectl get deploy -n istio-demos

The output is:

saleson@SalesondeMacBook-Pro istio-discovery % kubectl get deploy -n istio-demos

NAME READY UP-TO-DATE AVAILABLE AGE

demo-istio-h5-latest 1/1 1 1 3h59m

demo-istio-h5-v1 1/1 1 1 3h59m

istio-springboot-demo-a-latest 1/1 1 1 3h58m

istio-springboot-demo-a-v1 1/1 1 1 3h58m

istio-springboot-demo-b-latest 1/1 1 1 27m

istio-springboot-demo-b-v1 1/1 1 1 27m

Use the following command to open istio-springboot-demo-b-v1 for editing:

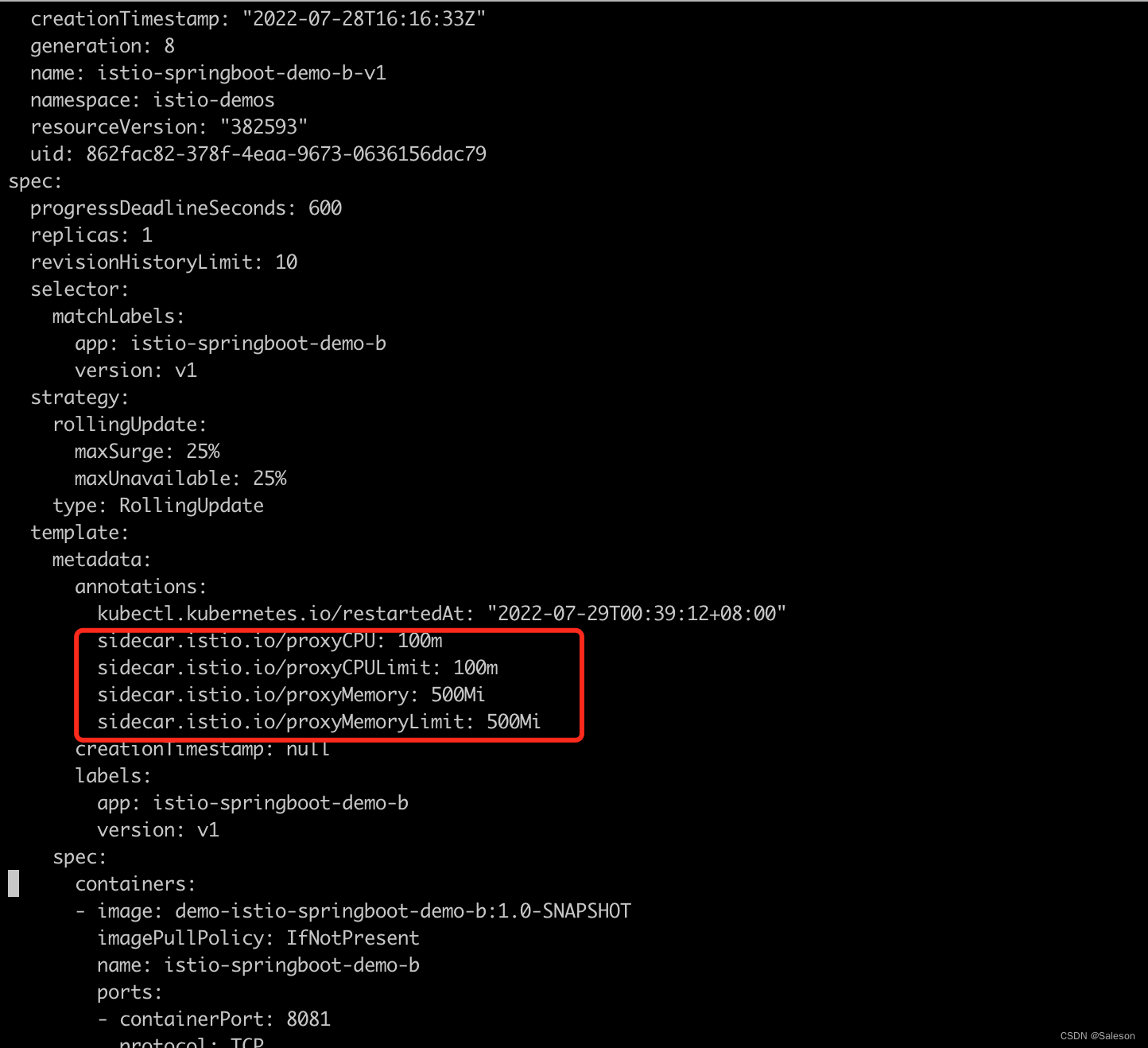

kubectl edit deploy istio-springboot-demo-b-v1 -n istio-demos

- Add the following under spec.template.metadata.annotations (the sizes are for reference only; substitute your own):

sidecar.istio.io/proxyCPU: 100m

sidecar.istio.io/proxyCPULimit: 100m

sidecar.istio.io/proxyMemory: 500Mi

sidecar.istio.io/proxyMemoryLimit: 500Mi

The result after saving the edit:

- After the change, roll the service so that it is not interrupted.

Run the following command to restart the deployment:

kubectl rollout restart deployment istio-springboot-demo-b-v1 -n istio-demos

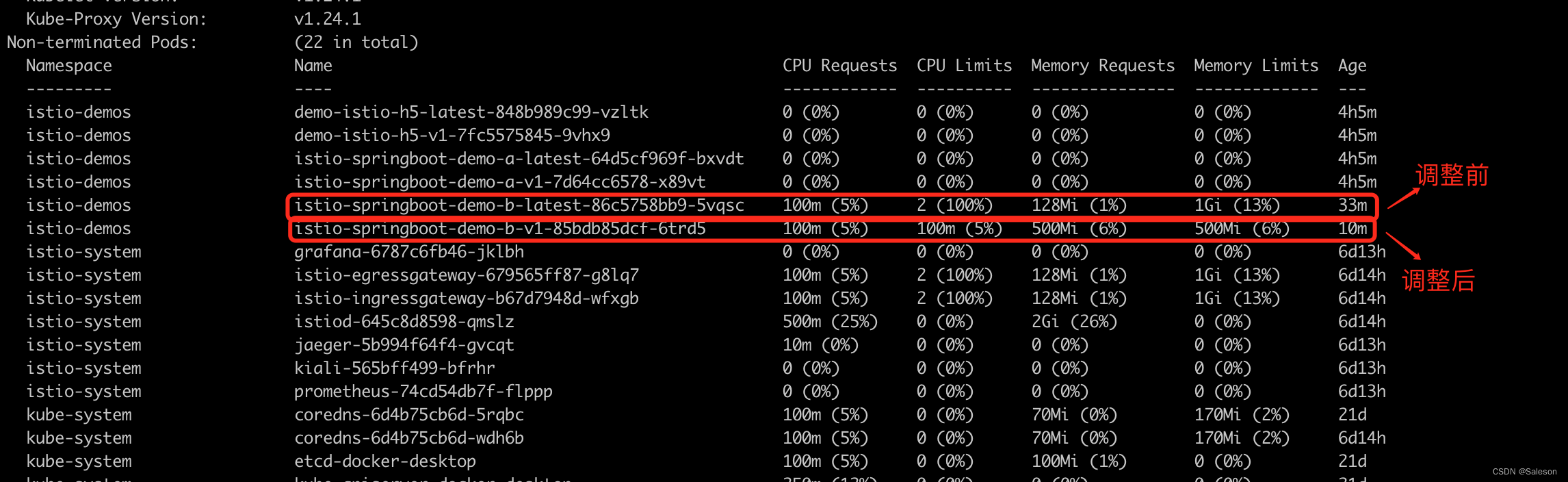

- Check the result.

Use the following command to view the requests and limits after the change:

kubectl describe node docker-desktop

III. Planning ahead
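The interactive edit in Method 2 can also be done non-interactively with kubectl patch, which is easier to repeat across several deployments. This is a sketch, not the article's procedure: the function name is made up, and it sets each limit equal to the corresponding request, as in the example values above.

```shell
# patch_sidecar_resources DEPLOYMENT NAMESPACE CPU MEMORY
# Adds the four sidecar resource annotations to the pod template
# using a JSON merge patch.
patch_sidecar_resources() {
  deploy=$1; ns=$2; cpu=$3; mem=$4
  patch=$(printf '{"spec":{"template":{"metadata":{"annotations":{"sidecar.istio.io/proxyCPU":"%s","sidecar.istio.io/proxyCPULimit":"%s","sidecar.istio.io/proxyMemory":"%s","sidecar.istio.io/proxyMemoryLimit":"%s"}}}}}' \
    "$cpu" "$cpu" "$mem" "$mem")
  kubectl patch deployment "$deploy" -n "$ns" --type merge -p "$patch"
}

# Usage:
#   patch_sidecar_resources istio-springboot-demo-b-v1 istio-demos 100m 500Mi
```

Because the patch changes the pod template, the Deployment should start a rolling update on its own; kubectl rollout status can be used to watch it finish.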

Adjust the proxy resource settings in istio-1.12.9/manifests/charts/istio-control/istio-discovery/values.yaml first, and then install istio-discovery.
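If the chart is installed with Helm, the same adjustment can be passed on the command line instead of editing the file. The sketch below rests on several assumptions that should be verified against the chart: that values.yaml exposes the proxy requests under global.proxy.resources, that the release is named istiod, and that the Istio base chart is already installed. The function name is made up for illustration.

```shell
# install_istiod CPU_REQUEST MEMORY_REQUEST
# Installs the istio-discovery chart with smaller sidecar requests.
# Assumption: the chart reads them from global.proxy.resources.requests.
install_istiod() {
  chart=istio-1.12.9/manifests/charts/istio-control/istio-discovery
  helm install istiod "$chart" -n istio-system \
    --set global.proxy.resources.requests.cpu="$1" \
    --set global.proxy.resources.requests.memory="$2"
}

# Usage:
#   install_istiod 50m 64Mi
```

On a 2-core Docker Desktop node, a smaller per-sidecar request leaves room for more demo pods before the scheduler runs out of CPU to reserve.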