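Kubernetes Overview

Quickly Deploying a k8s Cluster with kubeadm

Kubernetes High-Availability Cluster Binary Deployment (1): Host Preparation and Load Balancer Installation

Kubernetes High-Availability Cluster Binary Deployment (2): etcd Cluster Deployment

Kubernetes High-Availability Cluster Binary Deployment (3): Deploying the api-server

Kubernetes High-Availability Cluster Binary Deployment (4): Deploying kubectl, kube-controller-manager, and kube-scheduler

Kubernetes High-Availability Cluster Binary Deployment (5): kubelet, kube-proxy, Calico, and CoreDNS

Kubernetes High-Availability Cluster Binary Deployment (6): Adding Nodes to the Kubernetes Cluster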

1. Worker node deployment

1.1 Installing and configuring Docker

wget -O /etc/yum.repos.d/docker-ce.repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum -y install docker-ce

systemctl enable docker

systemctl start docker

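cat <<EOF | sudo tee /etc/docker/daemon.json
{
  "exec-opts": ["native.cgroupdriver=systemd"],
  "registry-mirrors": ["https://8i185852.mirror.aliyuncs.com"]
}
EOF

native.cgroupdriver must be configured. It sets Docker's cgroup driver to systemd, the same driver declared as cgroupDriver in kubelet.json; if this step is skipped, kubelet fails to start.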

systemctl restart docker
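The cgroup driver setting can be double-checked once Docker has been restarted. The following is a minimal sketch, assuming daemon.json is written as shown above; the helper name docker_uses_systemd_cgroup is made up for illustration and is not part of Docker or Kubernetes.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed only if the given daemon.json sets
# native.cgroupdriver=systemd, the driver that kubelet.json also declares.
docker_uses_systemd_cgroup() {
  local daemon_json="${1:-/etc/docker/daemon.json}"
  grep -q '"native.cgroupdriver=systemd"' "$daemon_json"
}

# Example (not executed here):
#   docker_uses_systemd_cgroup && echo "daemon.json OK"
#   docker info --format '{{.CgroupDriver}}'   # driver reported by the running daemon
```

Checking the file catches a missing setting early; asking the running daemon with docker info confirms that the restart actually picked it up.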

1.2 Deploying kubelet

Run the following on k8s-master1 (which acts as both control plane and data plane).

1.2.1 Creating kubelet-bootstrap.kubeconfig

BOOTSTRAP_TOKEN=$(awk -F "," '{print $1}' /etc/kubernetes/token.csv)

# 192.168.10.100 is the VIP (virtual IP)

kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.10.100:6443 --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl config set-credentials kubelet-bootstrap --token=${BOOTSTRAP_TOKEN} --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl config set-context default --cluster=kubernetes --user=kubelet-bootstrap --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl config use-context default --kubeconfig=kubelet-bootstrap.kubeconfig

# Create the cluster role bindings

kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=kubelet-bootstrap

kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl describe clusterrolebinding cluster-system-anonymous

kubectl describe clusterrolebinding kubelet-bootstrap

1.2.2 Creating the kubelet configuration file

[root@k8s-master1 k8s-work]# cat > kubelet.json << "EOF"
{
  "kind": "KubeletConfiguration",
  "apiVersion": "kubelet.config.k8s.io/v1beta1",
  "authentication": {
    "x509": {
      "clientCAFile": "/etc/kubernetes/ssl/ca.pem"
    },
    "webhook": {
      "enabled": true,
      "cacheTTL": "2m0s"
    },
    "anonymous": {
      "enabled": false
    }
  },
  "authorization": {
    "mode": "Webhook",
    "webhook": {
      "cacheAuthorizedTTL": "5m0s",
      "cacheUnauthorizedTTL": "30s"
    }
  },
  "address": "192.168.10.103",
  "port": 10250,
  "readOnlyPort": 10255,
  "cgroupDriver": "systemd",
  "hairpinMode": "promiscuous-bridge",
  "serializeImagePulls": false,
  "clusterDomain": "cluster.local.",
  "clusterDNS": ["10.96.0.2"]
}
EOF

The address field (192.168.10.103 here) is the IP address of the current host.

1.2.3 Creating the kubelet service unit file

cat > kubelet.service << "EOF"
[Unit]
Description=Kubernetes Kubelet
Documentation=https://github.com/kubernetes/kubernetes
After=docker.service
Requires=docker.service

[Service]
WorkingDirectory=/var/lib/kubelet
ExecStart=/usr/local/bin/kubelet \
  --bootstrap-kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig \
  --cert-dir=/etc/kubernetes/ssl \
  --kubeconfig=/etc/kubernetes/kubelet.kubeconfig \
  --config=/etc/kubernetes/kubelet.json \
  --network-plugin=cni \
  --rotate-certificates \
  --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.2 \
  --alsologtostderr=true \
  --logtostderr=false \
  --log-dir=/var/log/kubernetes \
  --v=2
Restart=on-failure
RestartSec=5

[Install]
WantedBy=multi-user.target
EOF

1.2.4 Syncing files to the cluster nodes

cp kubelet-bootstrap.kubeconfig /etc/kubernetes/

cp kubelet.json /etc/kubernetes/

cp kubelet.service /usr/lib/systemd/system/

for i in k8s-master2 k8s-master3 k8s-worker1;do scp kubelet-bootstrap.kubeconfig kubelet.json $i:/etc/kubernetes/;done

for i in k8s-master2 k8s-master3 k8s-worker1;do scp ca.pem $i:/etc/kubernetes/ssl/;done

for i in k8s-master2 k8s-master3 k8s-worker1;do scp kubelet.service $i:/usr/lib/systemd/system/;done

Note:

On each node, the address field in kubelet.json must be changed to that host's own IP address.

vim /etc/kubernetes/kubelet.json
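Editing the file by hand on every node is easy to get wrong, so the change can also be scripted. The sketch below assumes the "address" key sits on a single line as in the kubelet.json above and that GNU sed is available (as on CentOS); the function name set_kubelet_address is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: rewrite the "address" value of a kubelet.json in place.
# Only the quoted value after "address": is replaced; other keys are untouched.
set_kubelet_address() {
  local file="$1" ip="$2"
  sed -i -E "s/(\"address\"[[:space:]]*:[[:space:]]*\")[^\"]*(\")/\1${ip}\2/" "$file"
}

# Example (run on k8s-master2, whose IP is 192.168.10.104 in this series):
#   set_kubelet_address /etc/kubernetes/kubelet.json 192.168.10.104
```

Matching on the key name rather than on the old IP means the command gives the same result no matter which address the copied file started with.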

1.2.5 Creating directories and starting the service

Run on all worker nodes:

mkdir -p /var/lib/kubelet

mkdir -p /var/log/kubernetes

systemctl daemon-reload

systemctl enable --now kubelet

systemctl status kubelet

# kubectl get nodes
NAME          STATUS     ROLES    AGE   VERSION
k8s-master1   NotReady   <none>   12s   v1.21.10
k8s-master2   NotReady   <none>   19s   v1.21.10
k8s-master3   NotReady   <none>   19s   v1.21.10
k8s-worker1   NotReady   <none>   18s   v1.21.10

The nodes are NotReady because the network plugin has not been deployed yet.

# kubectl get csr
NAME        AGE     SIGNERNAME                                    REQUESTOR           CONDITION
csr-b949p   7m55s   kubernetes.io/kube-apiserver-client-kubelet   kubelet-bootstrap   Approved,Issued
csr-c9hs4   3m34s   kubernetes.io/kube-apiserver-client-kubelet   kubelet-bootstrap   Approved,Issued
csr-r8vhp   5m50s   kubernetes.io/kube-apiserver-client-kubelet   kubelet-bootstrap   Approved,Issued
csr-zb4sr   3m40s   kubernetes.io/kube-apiserver-client-kubelet   kubelet-bootstrap   Approved,Issued

Note:

Once the kubelet service is confirmed to have started, approve the bootstrap requests on the master.
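If any request is listed as Pending rather than Approved,Issued, it can be approved with kubectl certificate approve. The sketch below only picks out the pending names; it assumes the CONDITION column is the last column of the kubectl get csr output, and the function name pending_csrs is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get csr --no-headers` output on stdin
# and print the name of every request whose last column is exactly "Pending".
pending_csrs() {
  awk '$NF == "Pending" { print $1 }'
}

# Example (not executed here; xargs -r is the GNU "skip if empty" flag):
#   kubectl get csr --no-headers | pending_csrs | xargs -r kubectl certificate approve
```

Filtering first keeps the approve command from being called on requests that have already been issued.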

1.3 Deploying kube-proxy

1.3.1 Creating the kube-proxy certificate signing request file

[root@k8s-master1 k8s-work]# cat > kube-proxy-csr.json << "EOF"
{
  "CN": "system:kube-proxy",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Beijing",
      "L": "Beijing",
      "O": "kubemsb",
      "OU": "CN"
    }
  ]
}
EOF

1.3.2 Generating the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

# ls kube-proxy*

kube-proxy.csr kube-proxy-csr.json kube-proxy-key.pem kube-proxy.pem

1.3.3 Creating the kubeconfig file

# Set the cluster

kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.10.100:6443 --kubeconfig=kube-proxy.kubeconfig

# Set the credentials (client certificate)

kubectl config set-credentials kube-proxy --client-certificate=kube-proxy.pem --client-key=kube-proxy-key.pem --embed-certs=true --kubeconfig=kube-proxy.kubeconfig

# Set the context

kubectl config set-context default --cluster=kubernetes --user=kube-proxy --kubeconfig=kube-proxy.kubeconfig

# Switch to the context

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

1.3.4 Creating the service configuration file

cat > kube-proxy.yaml << "EOF"
apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 192.168.10.103 # IP address of this host
clientConnection:
  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig
clusterCIDR: 10.244.0.0/16 # Pod network; leave unchanged
healthzBindAddress: 192.168.10.103:10256 # IP address of this host
kind: KubeProxyConfiguration
metricsBindAddress: 192.168.10.103:10249 # IP address of this host
mode: "ipvs" # ipvs suits large clusters better than iptables
EOF
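The ipvs mode relies on the ip_vs kernel modules being loaded on each node. Whether the earlier host preparation already loaded them is an assumption here, so a quick check before starting kube-proxy may help. This is a minimal sketch; the function name ipvs_module_loaded is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if the ip_vs kernel module appears in the
# given modules file (defaults to /proc/modules, the source lsmod reads).
ipvs_module_loaded() {
  local modules_file="${1:-/proc/modules}"
  grep -q '^ip_vs ' "$modules_file"
}

# Example (not executed here):
#   ipvs_module_loaded || modprobe ip_vs
```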

1.3.5 Creating the service unit file

cat > kube-proxy.service << "EOF"
[Unit]
Description=Kubernetes Kube-Proxy Server
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
WorkingDirectory=/var/lib/kube-proxy
ExecStart=/usr/local/bin/kube-proxy \
  --config=/etc/kubernetes/kube-proxy.yaml \
  --alsologtostderr=true \
  --logtostderr=false \
  --log-dir=/var/log/kubernetes \
  --v=2
Restart=on-failure
RestartSec=5
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF

1.3.6 Syncing files to the cluster worker nodes

cp kube-proxy*.pem /etc/kubernetes/ssl/

cp kube-proxy.kubeconfig kube-proxy.yaml /etc/kubernetes/

cp kube-proxy.service /usr/lib/systemd/system/

for i in k8s-master2 k8s-master3 k8s-worker1;do scp kube-proxy.kubeconfig kube-proxy.yaml $i:/etc/kubernetes/;done

for i in k8s-master2 k8s-master3 k8s-worker1;do scp kube-proxy.service $i:/usr/lib/systemd/system/;done

Note:

On each node, change the IP addresses in kube-proxy.yaml to that host's own IP address.

vim /etc/kubernetes/kube-proxy.yaml

1.3.7 Starting the service

# Create the WorkingDirectory

mkdir -p /var/lib/kube-proxy

systemctl daemon-reload

systemctl enable --now kube-proxy

systemctl status kube-proxy

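2. Deploying the network component: Calico

2.1 Download

wget https://docs.projectcalico.org/v3.19/manifests/calico.yaml

2.2 Editing the manifest

vim calico.yaml

# Uncomment the following two lines (lines 3683 and 3684 of calico.yaml) and set the value to the Pod network

            - name: CALICO_IPV4POOL_CIDR
              value: "10.244.0.0/16" # Pod network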
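The same edit can be made without opening an editor. The sketch below assumes the downloaded manifest carries the two lines commented out as "# - name: CALICO_IPV4POOL_CIDR" followed by "#   value: "..."", and that GNU sed is in use; the function name set_calico_pool_cidr is hypothetical, so compare the result against the file before applying it.

```shell
#!/usr/bin/env bash
# Hypothetical helper: uncomment CALICO_IPV4POOL_CIDR in a calico.yaml and
# set its value to the given Pod CIDR. Removing "# " from both lines keeps
# the YAML indentation aligned with the neighbouring env entries.
set_calico_pool_cidr() {
  local file="$1" cidr="$2"
  sed -i -E \
    -e 's|^([[:space:]]*)# (- name: CALICO_IPV4POOL_CIDR)|\1\2|' \
    -e "/- name: CALICO_IPV4POOL_CIDR/{n;s|^([[:space:]]*)# (  value: )\"[^\"]*\"|\1\2\"${cidr}\"|;}" \
    "$file"
}

# Example (not executed here):
#   set_calico_pool_cidr calico.yaml 10.244.0.0/16
#   grep -A1 CALICO_IPV4POOL_CIDR calico.yaml
```

The CIDR passed in should be the same Pod network used for clusterCIDR in kube-proxy.yaml.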

2.3 Applying the manifest

kubectl apply -f calico.yaml

2.4 Verifying the result

[root@k8s-master1 k8s-work]# kubectl get pods -n kube-system
NAME                                       READY   STATUS              RESTARTS   AGE
calico-kube-controllers-7cc8dd57d9-dcwjv   0/1     ContainerCreating   0          94s
calico-node-2pmqz                          0/1     Init:0/3            0          94s
calico-node-9ms2r                          0/1     Init:0/3            0          94s
calico-node-tj5rt                          0/1     Init:0/3            0          94s
calico-node-wnjcv                          0/1     PodInitializing     0          94s

[root@k8s-master1 k8s-work]# kubectl get pods -n kube-system -o wide
NAME                                       READY   STATUS                  RESTARTS   AGE     IP               NODE          NOMINATED NODE   READINESS GATES
calico-kube-controllers-7cc8dd57d9-dcwjv   0/1     ContainerCreating       0          2m29s   <none>           k8s-master2   <none>           <none>
calico-node-2pmqz                          0/1     Init:0/3                0          2m29s   192.168.10.103   k8s-master1   <none>           <none>
calico-node-9ms2r                          0/1     Init:ImagePullBackOff   0          2m29s   192.168.10.105   k8s-master3   <none>           <none>
calico-node-tj5rt                          0/1     Init:0/3                0          2m29s   192.168.10.106   k8s-worker1   <none>           <none>
calico-node-wnjcv                          0/1     PodInitializing         0          2m29s   192.168.10.104   k8s-master2   <none>           <none>

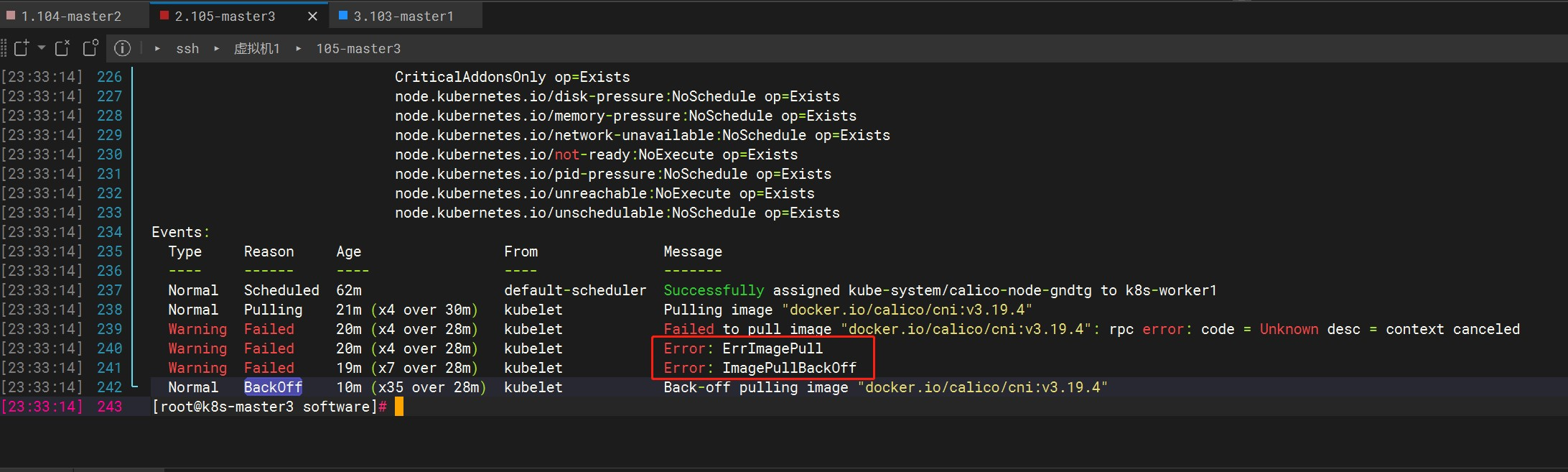

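If STATUS does not change for a long time, inspect the pod in detail with the following command:

kubectl describe pod calico-node-gndtg -n kube-system

If a pod stays in Init:ImagePullBackOff and is still not Running after a long wait, try downloading the image archive elsewhere and uploading it to the server, for example over FTP.

Find the required version at https://github.com/projectcalico/calico/releases?page=3, download it, and upload the matching images from its images directory to the server: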

docker load -i calico-pod2daemon-flexvol.tar

docker load -i calico-kube-controllers.tar

docker load -i calico-cni.tar

docker load -i calico-node.tar

docker images

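I have four worker nodes here. One of them pulled the images and reached Running normally after the command was run; the other three stayed in the pulling state for a long time and were finally fixed with the method above. In the end it came down to a network problem.

If a pod stays Pending, check whether the node has been tainted:

kubectl describe node k8s-master2 |grep Taint

# Remove the taint (key stands for the taint key reported by the previous command)

kubectl taint nodes k8s-master2 key:NoSchedule-

A taint has one of three effects:

NoSchedule: Pods are never scheduled onto the node

PreferNoSchedule: the scheduler tries to avoid the node, but Pods may still be scheduled onto it

NoExecute: Pods are not scheduled onto the node, and Pods already running on it are evicted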
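The taint to remove is written key[=value]:effect, and appending "-" to it tells kubectl to delete it. The sketch below pulls that string out of the describe output; it only handles the taint printed on the "Taints:" line itself (grep Taint shows only that line too), and the function name taint_from_describe is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl describe node` output on stdin and print
# the taint shown on the "Taints:" line; prints nothing when it is <none>.
taint_from_describe() {
  awk '$1 == "Taints:" && $2 != "<none>" { print $2 }'
}

# Example (not executed here):
#   t=$(kubectl describe node k8s-master2 | taint_from_describe)
#   [ -n "$t" ] && kubectl taint nodes k8s-master2 "${t}-"
```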

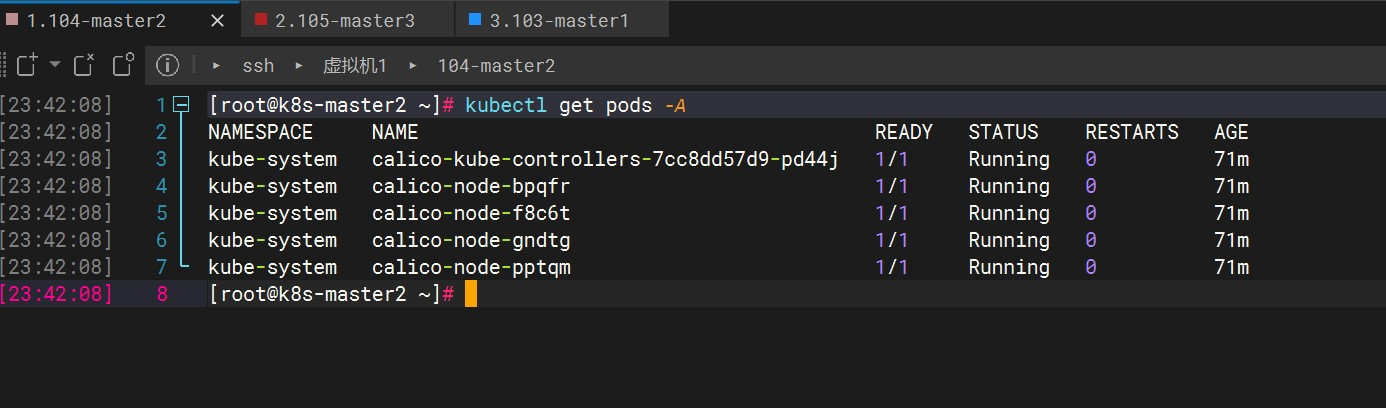

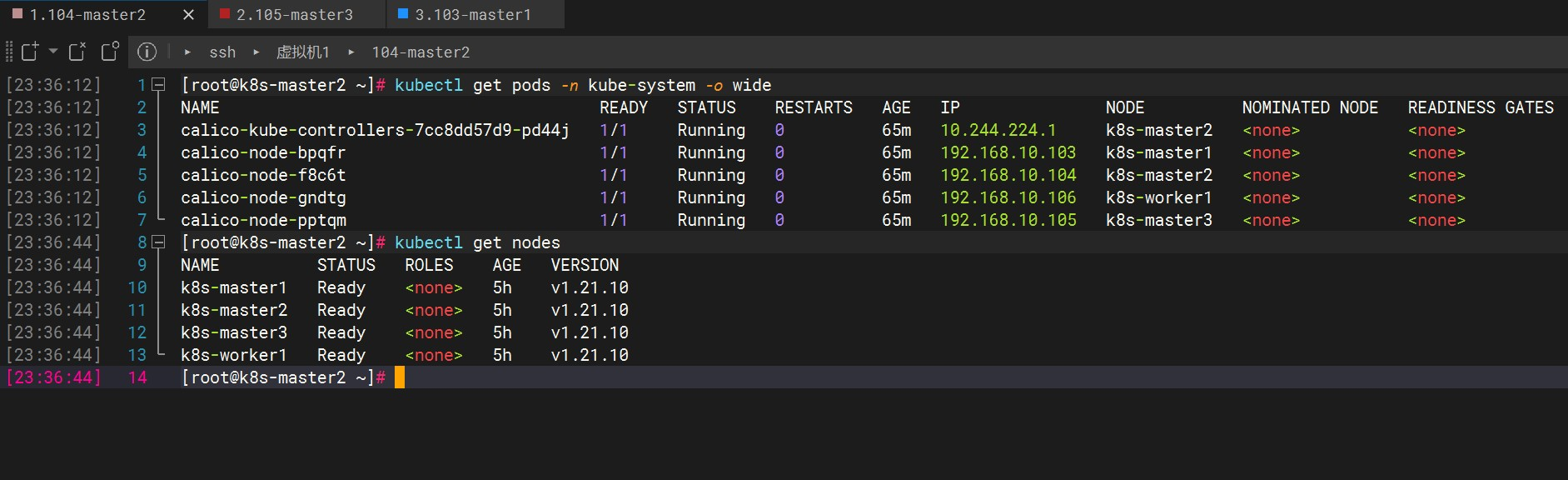

In the end everything became Ready:

# kubectl get pods -A
NAMESPACE     NAME                                       READY   STATUS    RESTARTS   AGE
kube-system   calico-kube-controllers-7cc8dd57d9-pd44j   1/1     Running   0          70m
kube-system   calico-node-bpqfr                          1/1     Running   0          70m
kube-system   calico-node-f8c6t                          1/1     Running   0          70m
kube-system   calico-node-gndtg                          1/1     Running   0          70m
kube-system   calico-node-pptqm                          1/1     Running   0          70m

# kubectl get nodes
NAME          STATUS   ROLES    AGE   VERSION
k8s-master1   Ready    <none>   5h    v1.21.10
k8s-master2   Ready    <none>   5h    v1.21.10
k8s-master3   Ready    <none>   5h    v1.21.10
k8s-worker1   Ready    <none>   5h    v1.21.10

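3. Deploying CoreDNS

CoreDNS provides name resolution between services inside k8s, for example when two services deployed in the cluster need to reach each other by name, or when a service inside the cluster needs to reach services on the internet.

Run the following in /data/k8s-work/ on k8s-master1: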

cat > coredns.yaml << "EOF"
apiVersion: v1
kind: ServiceAccount
metadata:
  name: coredns
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:coredns
rules:
  - apiGroups:
      - ""
    resources:
      - endpoints
      - services
      - pods
      - namespaces
    verbs:
      - list
      - watch
  - apiGroups:
      - discovery.k8s.io
    resources:
      - endpointslices
    verbs:
      - list
      - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:coredns
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:coredns
subjects:
  - kind: ServiceAccount
    name: coredns
    namespace: kube-system
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: coredns
  namespace: kube-system
data:
  Corefile: |
    .:53 {
        errors
        health {
          lameduck 5s
        }
        ready
        kubernetes cluster.local in-addr.arpa ip6.arpa {
          fallthrough in-addr.arpa ip6.arpa
        }
        prometheus :9153
        forward . /etc/resolv.conf {
          max_concurrent 1000
        }
        cache 30
        loop
        reload
        loadbalance
    }
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: coredns
  namespace: kube-system
  labels:
    k8s-app: kube-dns
    kubernetes.io/name: "CoreDNS"
spec:
  # replicas: not specified here:
  # 1. Default is 1.
  # 2. Will be tuned in real time if DNS horizontal auto-scaling is turned on.
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1
  selector:
    matchLabels:
      k8s-app: kube-dns
  template:
    metadata:
      labels:
        k8s-app: kube-dns
    spec:
      priorityClassName: system-cluster-critical
      serviceAccountName: coredns
      tolerations:
        - key: "CriticalAddonsOnly"
          operator: "Exists"
      nodeSelector:
        kubernetes.io/os: linux
      affinity:
        podAntiAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
            - weight: 100
              podAffinityTerm:
                labelSelector:
                  matchExpressions:
                    - key: k8s-app
                      operator: In
                      values: ["kube-dns"]
                topologyKey: kubernetes.io/hostname
      containers:
        - name: coredns
          image: coredns/coredns:1.8.4
          imagePullPolicy: IfNotPresent
          resources:
            limits:
              memory: 170Mi
            requests:
              cpu: 100m
              memory: 70Mi
          args: [ "-conf", "/etc/coredns/Corefile" ]
          volumeMounts:
            - name: config-volume
              mountPath: /etc/coredns
              readOnly: true
          ports:
            - containerPort: 53
              name: dns
              protocol: UDP
            - containerPort: 53
              name: dns-tcp
              protocol: TCP
            - containerPort: 9153
              name: metrics
              protocol: TCP
          securityContext:
            allowPrivilegeEscalation: false
            capabilities:
              add:
                - NET_BIND_SERVICE
              drop:
                - all
            readOnlyRootFilesystem: true
          livenessProbe:
            httpGet:
              path: /health
              port: 8080
              scheme: HTTP
            initialDelaySeconds: 60
            timeoutSeconds: 5
            successThreshold: 1
            failureThreshold: 5
          readinessProbe:
            httpGet:
              path: /ready
              port: 8181
              scheme: HTTP
      dnsPolicy: Default
      volumes:
        - name: config-volume
          configMap:
            name: coredns
            items:
              - key: Corefile
                path: Corefile
---
apiVersion: v1
kind: Service
metadata:
  name: kube-dns
  namespace: kube-system
  annotations:
    prometheus.io/port: "9153"
    prometheus.io/scrape: "true"
  labels:
    k8s-app: kube-dns
    kubernetes.io/cluster-service: "true"
    kubernetes.io/name: "CoreDNS"
spec:
  selector:
    k8s-app: kube-dns
  clusterIP: 10.96.0.2 # must match the clusterDNS IP set in kubelet.json
  ports:
    - name: dns
      port: 53
      protocol: UDP
    - name: dns-tcp
      port: 53
      protocol: TCP
    - name: metrics
      port: 9153
      protocol: TCP
EOF

kubectl apply -f coredns.yaml
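Because the Service clusterIP has to equal the clusterDNS address that kubelet hands to pods, a mismatch between the two files shows up only later as failed lookups. The sketch below compares them up front; it assumes clusterDNS is written on one line as in kubelet.json above, and the three function names are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for comparing the DNS address in both files.

# First IPv4 address inside the "clusterDNS" array of a kubelet.json.
kubelet_cluster_dns() {
  grep -o '"clusterDNS"[^]]*' "$1" | grep -oE '[0-9]+(\.[0-9]+){3}' | head -n 1
}

# IPv4 address of the first clusterIP field in a coredns.yaml.
coredns_cluster_ip() {
  grep -E '^[[:space:]]*clusterIP:' "$1" | grep -oE '[0-9]+(\.[0-9]+){3}' | head -n 1
}

# Succeed only if both addresses were found and are identical.
dns_ip_matches() {
  local kubelet_ip coredns_ip
  kubelet_ip=$(kubelet_cluster_dns "$1")
  coredns_ip=$(coredns_cluster_ip "$2")
  [ -n "$kubelet_ip" ] && [ "$kubelet_ip" = "$coredns_ip" ]
}

# Example (not executed here):
#   dns_ip_matches /etc/kubernetes/kubelet.json coredns.yaml || echo "DNS IP mismatch"
```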

# kubectl get pods -A
NAMESPACE     NAME                                       READY   STATUS    RESTARTS   AGE
kube-system   calico-kube-controllers-7cc8dd57d9-pd44j   1/1     Running   1          24h
kube-system   calico-node-bpqfr                          1/1     Running   1          24h
kube-system   calico-node-f8c6t                          1/1     Running   1          24h
kube-system   calico-node-gndtg                          1/1     Running   2          24h
kube-system   calico-node-pptqm                          1/1     Running   1          24h
kube-system   coredns-675db8b7cc-xlwsp                   1/1     Running   0          3m21s

# kubectl get pods -n kube-system -o wide
NAME                                       READY   STATUS    RESTARTS   AGE     IP               NODE          NOMINATED NODE   READINESS GATES
calico-kube-controllers-7cc8dd57d9-pd44j   1/1     Running   1          24h     10.244.224.2     k8s-master2   <none>           <none>
calico-node-bpqfr                          1/1     Running   1          24h     192.168.10.103   k8s-master1   <none>           <none>
calico-node-f8c6t                          1/1     Running   1          24h     192.168.10.104   k8s-master2   <none>           <none>
calico-node-gndtg                          1/1     Running   2          24h     192.168.10.106   k8s-worker1   <none>           <none>
calico-node-pptqm                          1/1     Running   1          24h     192.168.10.105   k8s-master3   <none>           <none>
coredns-675db8b7cc-xlwsp                   1/1     Running   0          3m47s   10.244.159.129   k8s-master1   <none>           <none>

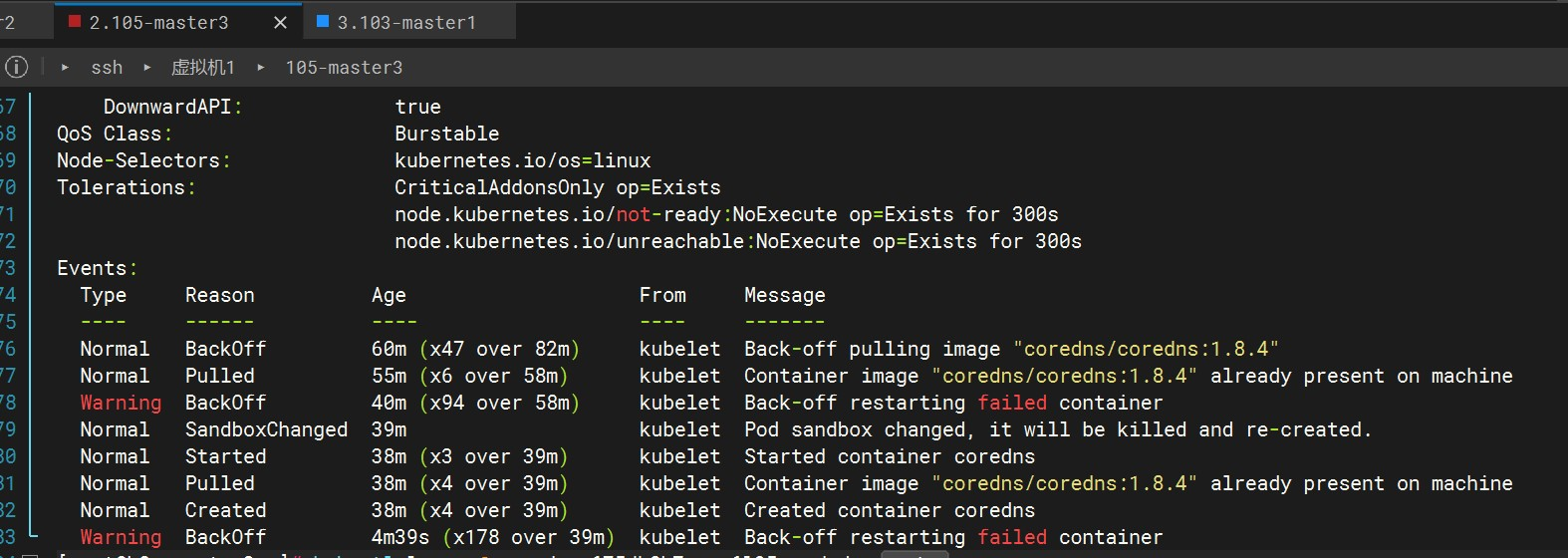

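As with Calico, if the pod stays in ImagePullBackOff and the cause turns out to be a problem pulling the image, try downloading the image locally and then uploading it to the server and loading it.

To get the image, search Docker Hub for the image and version you need, download it locally, and upload it to the server.

docker load -i coredns-coredns-1.8.4-.tar

docker images

# If the tag does not match the image name used in coredns.yaml, retag the image

docker tag <image-id> coredns/coredns:1.8.4

At this step the pod still did not start normally for me; the details can be inspected with:

kubectl describe pod coredns-675db8b7cc-q6l95 -n kube-system

After deleting the pod and re-creating the CoreDNS pod, it started normally.

# View the logs

kubectl logs -f coredns-675db8b7cc-q6l95 -n kube-system

# Delete and re-create the CoreDNS pod

kubectl delete pod coredns-675db8b7cc-q6l95 -n kube-system

kubectl apply -f coredns.yaml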

4. Deploying an application to verify the cluster

Create the pods on k8s-master1:

[root@k8s-master1 k8s-work]# cat > nginx.yaml << "EOF"
---
apiVersion: v1
kind: ReplicationController
metadata:
  name: nginx-web
spec:
  replicas: 2
  selector:
    name: nginx
  template:
    metadata:
      labels:
        name: nginx
    spec:
      containers:
        - name: nginx
          image: nginx:1.19.6
          ports:
            - containerPort: 80
---
apiVersion: v1
kind: Service # a Service lets the application be reached in different ways
metadata:
  name: nginx-service-nodeport
spec:
  ports:
    - port: 80
      targetPort: 80
      nodePort: 30001 # map port 80 of the application to port 30001 on the nodes
      protocol: TCP
  type: NodePort
  selector:
    name: nginx
EOF

kubectl apply -f nginx.yaml

# kubectl get pods -o wide
NAME              READY   STATUS    RESTARTS   AGE   IP              NODE          NOMINATED NODE   READINESS GATES
nginx-web-qzvw4   1/1     Running   0          58s   10.244.194.65   k8s-worker1   <none>           <none>
nginx-web-spw5t   1/1     Running   0          58s   10.244.224.1    k8s-master2   <none>           <none>

# kubectl get all
NAME                  READY   STATUS    RESTARTS   AGE
pod/nginx-web-jnbhx   1/1     Running   1          23h

NAME                              DESIRED   CURRENT   READY   AGE
replicationcontroller/nginx-web   1         1         1       2d

NAME                             TYPE        CLUSTER-IP    EXTERNAL-IP   PORT(S)        AGE
service/kubernetes               ClusterIP   10.96.0.1     <none>        443/TCP        3d6h
service/nginx-service-nodeport   NodePort    10.96.72.89   <none>        80:30001/TCP   2d

Check whether port 30001 is open:

ss -anput | grep ":30001"

The port is present on every worker node.

Access: http://192.168.10.103:30001, http://192.168.10.104:30001, http://192.168.10.105:30001, http://192.168.10.106:30001
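Instead of opening each address in a browser, the same check can be run from a shell. The sketch below only builds the URL list; the curl loop in the comment is one possible way to use it, and the function name nodeport_urls is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print one http URL per node IP for a NodePort service.
# Usage: nodeport_urls PORT IP [IP...]
nodeport_urls() {
  local port="$1"
  shift
  local ip
  for ip in "$@"; do
    printf 'http://%s:%s\n' "$ip" "$port"
  done
}

# Example (not executed here): print the HTTP status code returned by each node.
#   nodeport_urls 30001 192.168.10.103 192.168.10.104 192.168.10.105 192.168.10.106 |
#     while read -r url; do
#       curl -s -o /dev/null --max-time 5 -w "%{http_code} $url\n" "$url"
#     done
```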

# Check component status

kubectl get cs

# List pods

kubectl get pods