一. 创建环境

-

anaconda下新建一个环境

conda create -n yolo-sam python=3.8

-

激活新建的环境

conda activate yolo-sam

-

更换conda镜像源

conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/free/ conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main/ conda config --set show_channel_urls yes conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud/conda-forge/ conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud/msys2/ conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud/bioconda/ conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud/menpo/ conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud/pytorch/

-

安装pytorch

conda install pytorch==1.11.0 torchvision==0.12.0 torchaudio==0.11.0 cudatoolkit=11.3

-

下载官方代码,并解压

git clone [email protected]:facebookresearch/segment-anything.git

https://github.com/ultralytics/yolov5.git

-

进入下载好的yolov5-6.1文件夹,打开cmd,激活环境,输入一下代码安装yolov5必须的库

pip install -r requirements.txt -i https://mirrors.aliyun.com/pypi/simple/

-

进入下载好的segment-anything文件夹,打开cmd,激活安装好的环境,运行以下代码

pip install -e . -i https://mirrors.aliyun.com/pypi/simple/

-

安装所需python库

pip install opencv-python pycocotools matplotlib onnxruntime onnx flake8 isort black mypy -i https://mirrors.aliyun.com/pypi/simple/

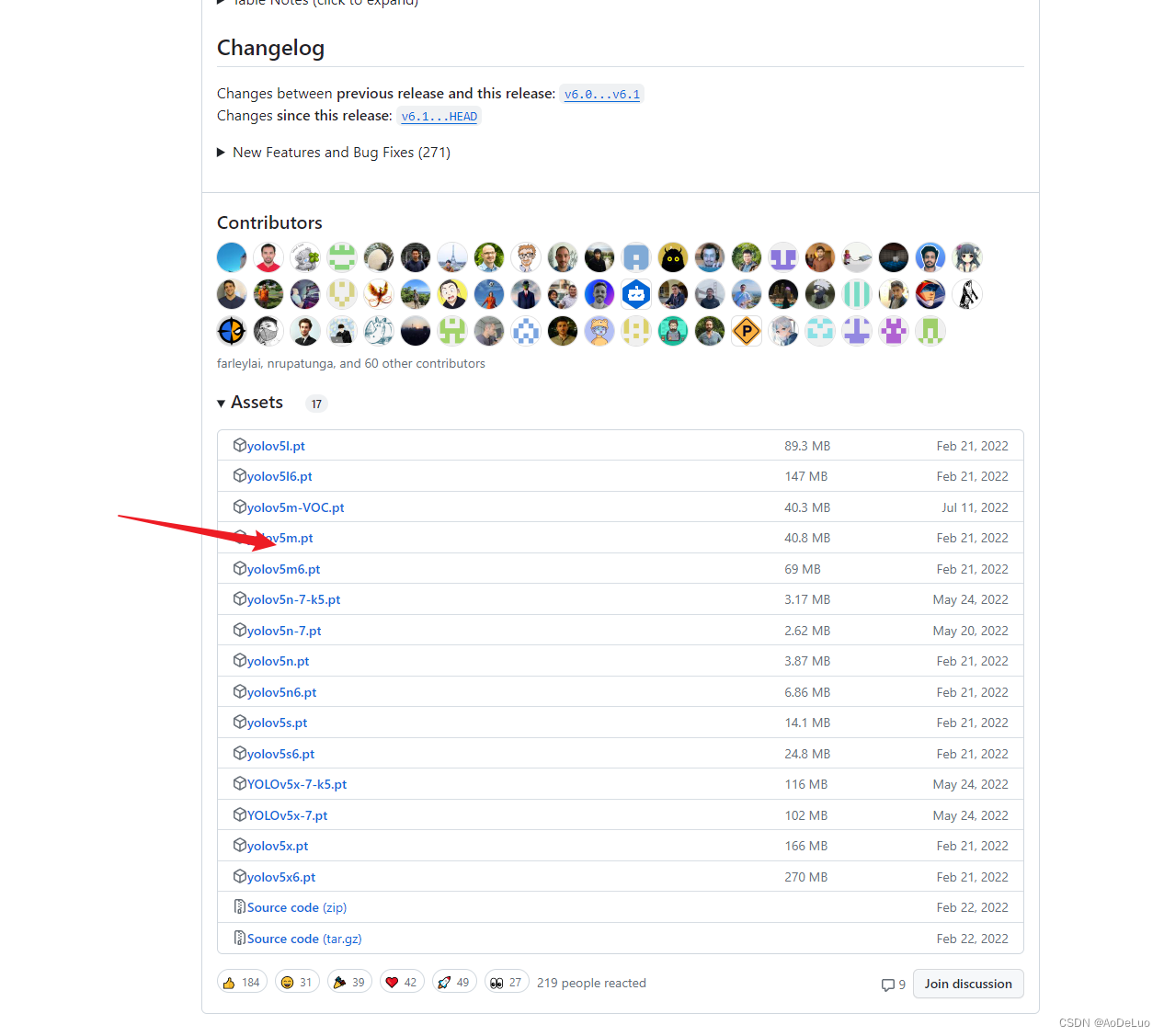

二. 下载模型文件

- 下载yolov5-6.1模型文件

下载地址 https://github.com/ultralytics/yolov5/releases

- 从官网下载sam模型

- 在yolov5项目文件中创建一个文件夹,名为weights,把下载的权重文件放进去

三. 编辑代码

- 将segment-anything项目下的文件夹segment_anything拷贝到yolov5-6.1项目文件夹下

- 在yolov5-6.1项目下创建一个新的python文件,输入如下代码:

import argparse import os import sys import numpy as np from pathlib import Path import cv2 import json import torch from segment_anything import sam_model_registry, SamPredictor from models.common import DetectMultiBackend from utils.datasets import LoadImages from utils.general import (LOGGER, check_img_size, check_requirements, increment_path, non_max_suppression, print_args, scale_coords, colorstr) from utils.plots import Annotator, colors from utils.torch_utils import select_device, time_sync def show_points(coords, labels, ax, marker_size=375): pos_points = coords[labels == 1] neg_points = coords[labels == 0] ax.scatter(pos_points[:, 0], pos_points[:, 1], color='green', marker='*', s=marker_size, edgecolor='white', linewidth=1.25) ax.scatter(neg_points[:, 0], neg_points[:, 1], color='red', marker='*', s=marker_size, edgecolor='white', linewidth=1.25) def show_box(box, img): x0, y0 = box[0], box[1] w, h = box[2] - box[0], box[3] - box[1] cv2.rectangle(img, (int(x0), int(y0)), (int(x0+w), int(y0+h)), (0, 255, 0), 1) def generate_json(masks, result, savePath): if len(masks) == 0: return num = 0 shapes = [] for mask in masks: mask = mask.cpu().numpy()[0] # 过滤面积比较小的物体 if np.count_nonzero(mask == 1) >= 625: # 创建labelme格式 tempData = { "label": "", "points": [], "group_id": None, "shape_type": "polygon", "flags": { } } tempData["label"] = str(result[num]) num = num + 1 # 找出物体轮廓 objImg = np.zeros((mask.shape[0], mask.shape[1]), np.uint8) objImg[mask] = 255 contours, hierarchy = cv2.findContours(objImg, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE) # 找出轮廓最大的 max_area = 0 maxIndex = 0 for i in range(0, len(contours)): area = cv2.contourArea(contours[i]) if area >= max_area: max_area = area maxIndex = i # 将每个物体轮廓点数限制在一定范围内 if len(contours[maxIndex]) >= int(pow(np.count_nonzero(mask == 1), 0.5) / 2): contours = list(contours[maxIndex]) contours = contours[::int(len(contours) / int(pow(np.count_nonzero(mask == 1), 0.5) / 2))] else: contours = list(contours[maxIndex]) # 向labelme数据格式中添加轮廓点 for point in contours: tempData["points"].append([int(point[0][0]), int(point[0][1])]) # 添加物体标注信息 shapes.append(tempData) jsonPath = savePath.replace(savePath.split(".")[-1], "json") # 需要生成的文件路径 print(jsonPath) # 创建json文件 file_out = open(jsonPath, "w") # 载入json文件 jsonData = { } # 8. 写入,修改json文件 jsonData["version"] = "5.2.1" jsonData["flags"] = { } jsonData["shapes"] = shapes jsonData["imagePath"] = savePath.split("\\")[-1] jsonData["imageData"] = None jsonData["imageHeight"] = mask.shape[0] jsonData["imageWidth"] = mask.shape[1] # 保存json文件 file_out.write(json.dumps(jsonData, indent=4)) # 保存文件 # 关闭json文件 file_out.close() FILE = Path(__file__).resolve() ROOT = FILE.parents[0] # YOLOv5 root directory if str(ROOT) not in sys.path: sys.path.append(str(ROOT)) # add ROOT to PATH ROOT = Path(os.path.relpath(ROOT, Path.cwd())) # relative @torch.no_grad() def run(weights_sam=ROOT / 'sam_vit_b_01ec64.pth', # model.pt path(s) weights_yolo=ROOT / 'yolov5s.pt', # model.pt path(s) source=ROOT / 'data/images', # file/dir/URL/glob, 0 for webcam data=ROOT / 'data/coco128.yaml', # dataset.yaml path imgsz=(640, 640), # inference size (height, width) conf_thres=0.25, # confidence threshold iou_thres=0.45, # NMS IOU threshold max_det=1000, # maximum detections per image device='', # cuda device, i.e. 0 or 0,1,2,3 or cpu view_img=False, # show results nosave=False, # do not save images/videos classes=None, # filter by class: --class 0, or --class 0 2 3 agnostic_nms=False, # class-agnostic NMS augment=False, # augmented inference project=ROOT / 'runs/detect', # save results to project/name name='exp', # save results to project/name exist_ok=False, # existing project/name ok, do not increment ): source = str(source) save_img = not nosave and not source.endswith('.txt') # 是否保存检测结果图像标志位 # 创建检测结果保存文件夹 save_dir = increment_path(Path(project) / name, exist_ok=exist_ok) # increment run (save_dir / 'labels' if False else save_dir).mkdir(parents=True, exist_ok=True) # make dir # 载入模型 device = select_device(device) sam = sam_model_registry["vit_" + str(weights_sam).split("_")[2]](checkpoint=weights_sam) sam.to(device="cuda") model = DetectMultiBackend(weights_yolo, device=device, dnn=False, data=data) stride, names, pt, jit, onnx, engine = model.stride, model.names, model.pt, model.jit, model.onnx, model.engine imgsz = check_img_size(imgsz, s=stride) # 检查图像尺寸 # 载入数据 dataset = LoadImages(source, img_size=imgsz, stride=stride, auto=pt) bs = 1 # batch_size vid_path, vid_writer = [None] * bs, [None] * bs # 运行检测 predictor = SamPredictor(sam) model.warmup(imgsz=(1 if pt else bs, 3, *imgsz), half=False) # warmup dt, seen = [0.0, 0.0, 0.0], 0 for path, im, im0s, vid_cap, s in dataset: t1 = time_sync() im = torch.from_numpy(im).to(device) im = im.float() im /= 255 # 0 - 255 to 0.0 - 1.0 if len(im.shape) == 3: im = im[None] # expand for batch dim t2 = time_sync() dt[0] += t2 - t1 # 预测 pred = model(im, augment=augment, visualize=False) t3 = time_sync() dt[1] += t3 - t2 # 非极大值抑制NMS pred = non_max_suppression(pred, conf_thres, iou_thres, classes, agnostic_nms, max_det=max_det) dt[2] += time_sync() - t3 # Process predictions for i, det in enumerate(pred): # per image seen += 1 p, im0, frame = path, im0s.copy(), getattr(dataset, 'frame', 0) p = Path(p) # to Path save_path = str(save_dir / p.name) # im.jpg txt_path = str(save_dir / 'labels' / p.stem) + ('' if dataset.mode == 'image' else f'_{ frame}') # im.txt s += '%gx%g ' % im.shape[2:] # print string gn = torch.tensor(im0.shape)[[1, 0, 1, 0]] # normalization gain whwh imc = im0.copy() if False else im0 # for save_crop annotator = Annotator(im0, line_width=3, example=str(names)) if len(det): # 将目标框从模型检测尺度变换到图像原始尺度 det[:, :4] = scale_coords(im.shape[2:], det[:, :4], im0.shape).round() # 打印检测结果 for c in det[:, -1].unique(): n = (det[:, -1] == c).sum() # detections per class s += f"{ n} { names[int(c)]}{ 's' * (n > 1)}, " # add to string # 写结果 result = [] for *xyxy, conf, cls in reversed(det): if save_img or view_img: # Add bbox to image c = int(cls) # integer class result.append(c) label = None if False else (names[c] if False else f'{ names[c]} { conf:.2f}') annotator.box_label(xyxy, label, color=colors(c, True)) image = im0s.copy() predictor.set_image(cv2.cvtColor(image, cv2.COLOR_BGR2RGB)) input_boxes = det[:, :4].clone().detach() # 假设这是目标检测的预测结果 transformed_boxes = predictor.transform.apply_boxes_torch(input_boxes, image.shape[:2]) masks, _, _ = predictor.predict_torch(point_coords=None, point_labels=None, boxes=transformed_boxes, multimask_output=False) generate_json(masks, result, save_path) for mask in masks: mask = mask.cpu().numpy() color = np.concatenate([np.random.random(3) * 255], axis=0) h, w = mask.shape[-2:] mask_image = mask.reshape(h, w, 1) * color.reshape(1, 1, -1) image = cv2.addWeighted(image, 1, np.array(mask_image, dtype=np.uint8), 0.4, 0) for box in input_boxes: show_box(box.cpu().numpy(), image) if view_img: cv2.imshow("mask", image) cv2.waitKey(0) # Save results (image with detections) if save_img: if dataset.mode == 'image': cv2.imwrite(save_path, image) # Print time (inference-only) LOGGER.info(f'{ s}Done. ({ t3 - t2:.3f}s)') # Print results t = tuple(x / seen * 1E3 for x in dt) # speeds per image LOGGER.info(f'Speed: %.1fms pre-process, %.1fms inference, %.1fms NMS per image at shape { (1, 3, *imgsz)}' % t) if save_img: s = f"\n{ len(list(save_dir.glob('labels/*.txt')))} labels saved to { save_dir / 'labels'}" if False else '' LOGGER.info(f"Results saved to { colorstr('bold', save_dir)}{ s}") def parse_opt(): parser = argparse.ArgumentParser() parser.add_argument('--weights-sam', nargs='+', type=str, default=ROOT / 'weights/sam_vit_h_4b8939.pth', help='model path(s)') parser.add_argument('--weights-yolo', nargs='+', type=str, default=ROOT / 'weights/airblow4s.pt', help='model path(s)') parser.add_argument('--source', type=str, default="D:\\20231126", help='file/dir/URL/glob, 0 for webcam') parser.add_argument('--data', type=str, default=ROOT / 'data/airblow_4.yaml', help='(optional) dataset.yaml path') parser.add_argument('--imgsz', '--img', '--img-size', nargs='+', type=int, default=[640], help='inference size h,w') parser.add_argument('--conf-thres', type=float, default=0.5, help='confidence threshold') parser.add_argument('--iou-thres', type=float, default=0.1, help='NMS IoU threshold') parser.add_argument('--max-det', type=int, default=1000, help='maximum detections per image') parser.add_argument('--device', default='', help='cuda device, i.e. 0 or 0,1,2,3 or cpu') parser.add_argument('--view-img', action='store_true', help='show results') parser.add_argument('--nosave', action='store_true', help='do not save images/videos') parser.add_argument('--classes', nargs='+', type=int, help='filter by class: --classes 0, or --classes 0 2 3') parser.add_argument('--agnostic-nms', action='store_true', help='class-agnostic NMS') parser.add_argument('--augment', action='store_true', help='augmented inference') parser.add_argument('--project', default=ROOT / 'runs/detect', help='save results to project/name') parser.add_argument('--name', default='exp', help='save results to project/name') parser.add_argument('--exist-ok', action='store_true', help='existing project/name ok, do not increment') opt = parser.parse_args() opt.imgsz *= 2 if len(opt.imgsz) == 1 else 1 # expand print_args(FILE.stem, opt) return opt def main(opt): check_requirements(exclude=('tensorboard', 'thop')) run(**vars(opt)) if __name__ == "__main__": opt = parse_opt() main(opt)