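Preface

This post documents a rook-ceph cluster built on virtual machines with three compute/storage nodes, to simulate a realistic deployment. It complements my earlier post, which only covered a single-node deployment.

Link: Deploying a single-node rook-ceph on a KubeSphere-based k8s environment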

1. What is rook-ceph?

Rook is an open source cloud-native storage orchestrator, providing the platform, framework, and support for Ceph storage to natively integrate with cloud-native environments.


2. Deployment

2.1 Environment

Number of VMs: 4

VM image: CentOS-7-x86_64-Minimal-2009.iso

Kubernetes clusters: 1

Kubernetes version: 1.23.6

Machine list:

| hostname | IP | System disk | Data disks |
|---|---|---|---|
| kubeadmin | 192.168.150.61 | sda (50G) | none |
| kubeworker01 | 192.168.150.62 | sda (20G) | vda (20G), vdb (20G) |
| kubeworker02 | 192.168.150.63 | sda (20G) | vda (20G), vdb (20G) |
| kubeworker03 | 192.168.150.64 | sda (20G) | vda (20G), vdb (20G) |

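2.2 Package preparation (run on the compute/storage nodes)

Install the required packages and load the rbd kernel module:

```shell
# Install packages
yum install -y git lvm2 gdisk

# Load the rbd kernel module and confirm it is loaded
modprobe rbd
lsmod | grep rbd
```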
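`modprobe` only loads rbd until the next reboot. Below is a minimal sketch for making the module load at boot. It assumes the nodes use systemd's `modules-load.d` mechanism, which CentOS 7 does. The function name `persist_module` and its optional directory argument are hypothetical. The directory argument lets you try the helper outside `/etc`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: persist a kernel module across reboots via systemd-modules-load.
# Usage: persist_module <module> [dir]   (dir defaults to /etc/modules-load.d)
persist_module() {
  local module="$1"
  local dir="${2:-/etc/modules-load.d}"
  mkdir -p "$dir"
  # systemd-modules-load reads one module name per line from *.conf files in this directory
  printf '%s\n' "$module" > "$dir/$module.conf"
}
```

On each storage node, run `persist_module rbd` as root.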

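Note: if a deployment fails, always clean up the leftover data before retrying. Otherwise the residue will break the next deployment. Run the following on each storage node. It destroys all data on vda and vdb.

Remove the configuration directory:

```shell
rm -rf /var/lib/rook/
```

Wipe the disks. First zap the partition tables, then zero the first 100 MiB. `sgdisk` is the scriptable tool that ships in the gdisk package; interactive `gdisk` does not accept `--zap-all`.

```shell
sgdisk --zap-all /dev/vda
sgdisk --zap-all /dev/vdb
dd if=/dev/zero of=/dev/vda bs=1M count=100 oflag=direct,dsync
dd if=/dev/zero of=/dev/vdb bs=1M count=100 oflag=direct,dsync
```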
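The cleanup has to be repeated on every storage node, so it can be wrapped in one function. This is a minimal sketch. The name `wipe_rook_node` and the `DRY_RUN` switch are hypothetical. The helper uses `sgdisk --zap-all`, the scriptable counterpart of gdisk that ships in the same package. When `DRY_RUN` is set, it only prints the commands.

```shell
#!/usr/bin/env bash
# Hypothetical helper wrapping the cleanup steps for a list of disks.
# DESTRUCTIVE when DRY_RUN is unset: it wipes every disk passed in.
# With DRY_RUN=1 it only prints the commands it would run.
wipe_rook_node() {
  local run=""
  [ -n "${DRY_RUN:-}" ] && run="echo"
  $run rm -rf /var/lib/rook/
  local disk
  for disk in "$@"; do
    # Zap the GPT/MBR structures, then zero the start of the disk
    $run sgdisk --zap-all "$disk"
    $run dd if=/dev/zero of="$disk" bs=1M count=100 oflag=direct,dsync
  done
}
```

To preview the commands, run `DRY_RUN=1 wipe_rook_node /dev/vda /dev/vdb`. Then run the same call without `DRY_RUN` to actually wipe the disks.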

2.3 Download the rook-ceph files

Clone the repository and copy the core manifests into a separate deployment directory:

```shell
cd /data/
yum install -y git
git clone --single-branch --branch v1.11.6 https://github.com/rook/rook.git

# Extract the deployment files
mkdir -p /data/rook-ceph/
cp /data/rook/deploy/examples/crds.yaml /data/rook-ceph/crds.yaml
cp /data/rook/deploy/examples/common.yaml /data/rook-ceph/common.yaml
cp /data/rook/deploy/examples/operator.yaml /data/rook-ceph/operator.yaml
cp /data/rook/deploy/examples/cluster.yaml /data/rook-ceph/cluster.yaml
cp /data/rook/deploy/examples/filesystem.yaml /data/rook-ceph/filesystem.yaml
cp /data/rook/deploy/examples/toolbox.yaml /data/rook-ceph/toolbox.yaml
cp /data/rook/deploy/examples/csi/rbd/storageclass.yaml /data/rook-ceph/storageclass-rbd.yaml
cp /data/rook/deploy/examples/csi/cephfs/storageclass.yaml /data/rook-ceph/storageclass-cephfs.yaml
cp /data/rook/deploy/examples/csi/nfs/storageclass.yaml /data/rook-ceph/storageclass-nfs.yaml

cd /data/rook-ceph
```
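The copy commands follow a simple pattern, so they can be expressed as a loop. This is a minimal sketch. The function name `extract_rook_manifests` is hypothetical, and the file list is exactly the one used in this post.

```shell
#!/usr/bin/env bash
# Hypothetical helper equivalent to the cp commands for the manifests used in this post.
# Usage: extract_rook_manifests <rook_repo_dir> <dest_dir>
extract_rook_manifests() {
  local src="$1/deploy/examples"
  local dest="$2"
  mkdir -p "$dest"
  local f
  for f in crds.yaml common.yaml operator.yaml cluster.yaml filesystem.yaml toolbox.yaml; do
    cp "$src/$f" "$dest/$f" || return 1
  done
  # The three StorageClass examples share a file name, so rename them on copy
  local kind
  for kind in rbd cephfs nfs; do
    cp "$src/csi/$kind/storageclass.yaml" "$dest/storageclass-$kind.yaml" || return 1
  done
}
```

For the paths used here, call `extract_rook_manifests /data/rook /data/rook-ceph`.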

2.4 Deploy the operator

Change the image registry. In operator.yaml, point the CSI sidecar images at the Aliyun mirror, and uncomment the keys if they are commented out. The cephcsi image itself is still pulled from quay.io.

```yaml
ROOK_CSI_CEPH_IMAGE: "quay.io/cephcsi/cephcsi:v3.8.0"
ROOK_CSI_REGISTRAR_IMAGE: "registry.cn-hangzhou.aliyuncs.com/google_containers/csi-node-driver-registrar:v2.7.0"
ROOK_CSI_RESIZER_IMAGE: "registry.cn-hangzhou.aliyuncs.com/google_containers/csi-resizer:v1.7.0"
ROOK_CSI_PROVISIONER_IMAGE: "registry.cn-hangzhou.aliyuncs.com/google_containers/csi-provisioner:v3.4.0"
ROOK_CSI_SNAPSHOTTER_IMAGE: "registry.cn-hangzhou.aliyuncs.com/google_containers/csi-snapshotter:v6.2.1"
ROOK_CSI_ATTACHER_IMAGE: "registry.cn-hangzhou.aliyuncs.com/google_containers/csi-attacher:v4.1.0"
```
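The registry substitution can be scripted instead of edited by hand. This is a minimal sketch, and it rests on an assumption you should check against your copy of operator.yaml: that the sidecar keys appear commented out with the upstream prefix `registry.k8s.io/sig-storage/`. The function name is hypothetical, and the in-place edit relies on GNU sed's `-i`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: uncomment ROOK_CSI_*_IMAGE keys that point at
# registry.k8s.io/sig-storage and redirect them to the Aliyun mirror.
# ASSUMPTION: the keys look like `# ROOK_CSI_X_IMAGE: "registry.k8s.io/sig-storage/..."`.
# Lines using other registries (e.g. quay.io/cephcsi) are left untouched.
# Requires GNU sed (plain -i for in-place editing).
use_aliyun_csi_images() {
  local file="$1"
  local mirror="registry.cn-hangzhou.aliyuncs.com/google_containers/"
  sed -i -E \
    "s|^([[:space:]]*)#[[:space:]]*(ROOK_CSI_[A-Z_]+_IMAGE: \")registry\.k8s\.io/sig-storage/|\1\2${mirror}|" \
    "$file"
}
```

Run `use_aliyun_csi_images operator.yaml`, then compare the result with the keys listed above.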

Deploy:

```shell
# Start the deployment
cd /data/rook-ceph
kubectl create -f crds.yaml
kubectl create -f common.yaml
kubectl create -f operator.yaml

# Check that the operator has been created and is running
kubectl -n rook-ceph get pod
```

Output:

```text
NAME READY STATUS RESTARTS AGE
rook-ceph-operator-585f6875d-qjhdn 1/1 Running 0 4m36s
```

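2.5 Create the Ceph cluster

Edit cluster.yaml to assign data disks to the OSDs. Only the modified parts are shown below, mainly the `nodes` section. I listed the data disks of all three nodes explicitly, based on the disks actually present. I did not use filter-based discovery (`deviceFilter`) or consume every device (`useAllDevices`).

```yaml
spec:
  # ... other fields unchanged ...
  priorityClassNames:
    mon: system-node-critical
    osd: system-node-critical
    mgr: system-cluster-critical
  # ... other fields unchanged ...
  storage:
    useAllNodes: false
    useAllDevices: false
    deviceFilter:
    config:
    nodes:
      - name: "kubeworker01"
        devices:
          - name: "vda"
          - name: "vdb"
      - name: "kubeworker02"
        devices:
          - name: "vda"
          - name: "vdb"
      - name: "kubeworker03"
        devices:
          - name: "vda"
          - name: "vdb"
```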
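For comparison with the explicit list, Rook's `deviceFilter` takes a regular expression that is matched against short kernel device names such as `vda`. The hypothetical helper below previews which names a pattern would select. It uses `grep -E` as a stand-in for Rook's own Go regex engine. The two agree on simple anchored patterns like this one, but that is an approximation, not Rook's actual matching code.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the device names a deviceFilter-style regex would match.
# grep -E only approximates the Go regex engine Rook uses; simple anchored
# patterns such as ^vd[ab]$ behave the same in both.
# Usage: preview_device_filter <regex> <device names...>
preview_device_filter() {
  local pattern="$1"
  shift
  printf '%s\n' "$@" | grep -E -- "$pattern"
}
```

For example, `preview_device_filter '^vd[ab]$' sda vda vdb vda6` prints only `vda` and `vdb`. The whole disks are selected, while the system disk and the partition are skipped.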

Deploy cluster.yaml:

```shell
kubectl create -f cluster.yaml

# The deployment takes a while; check the pods
kubectl -n rook-ceph get pod
```

Check the result. Once every pod is Running, deploy the toolbox container to verify the cluster. The osd-prepare pods finish as Completed rather than Running.

```text
NAME READY STATUS RESTARTS AGE
csi-cephfsplugin-7qk26 2/2 Running 0 34m
csi-cephfsplugin-dp8zx 2/2 Running 0 34m
csi-cephfsplugin-fb6rh 2/2 Running 0 34m
csi-cephfsplugin-provisioner-5549b4bcff-56ntx 5/5 Running 0 34m
csi-cephfsplugin-provisioner-5549b4bcff-m5j76 5/5 Running 0 34m
csi-rbdplugin-d829n 2/2 Running 0 34m
csi-rbdplugin-provisioner-bcff85bf9-7thl7 5/5 Running 0 34m
csi-rbdplugin-provisioner-bcff85bf9-cctkc 5/5 Running 0 34m
csi-rbdplugin-rj9wp 2/2 Running 0 34m
csi-rbdplugin-zs6s2 2/2 Running 0 34m
rook-ceph-crashcollector-kubeworker01-794647548b-bdrcx 1/1 Running 0 91s
rook-ceph-crashcollector-kubeworker02-d97cfb685-ss2sl 1/1 Running 0 86s
rook-ceph-crashcollector-kubeworker03-9d65c8dd8-zrv5x 1/1 Running 0 22m
rook-ceph-mgr-a-6fccb8744f-5zdvf 3/3 Running 0 23m
rook-ceph-mgr-b-7c4bbbfcf4-fhxm9 3/3 Running 0 23m
rook-ceph-mon-a-56dc4dfb8d-4j2bz 2/2 Running 0 34m
rook-ceph-mon-b-7d6d96649b-spz4p 2/2 Running 0 33m
rook-ceph-mon-c-759c774dc7-8hftq 2/2 Running 0 28m
rook-ceph-operator-f45db9b9f-knbx4 1/1 Running 0 2m9s
rook-ceph-osd-0-86cd7776c8-bm764 2/2 Running 0 91s
rook-ceph-osd-1-7686cf9757-ss9z2 2/2 Running 0 86s
rook-ceph-osd-2-5bc55847d-g2z6l 2/2 Running 0 91s
rook-ceph-osd-3-998bccb64-rq9cf 2/2 Running 0 83s
rook-ceph-osd-4-5c7c7f555b-djdvl 2/2 Running 0 86s
rook-ceph-osd-5-69976f85fc-9xz94 2/2 Running 0 83s
rook-ceph-osd-prepare-kubeworker01-qlvcp 0/1 Completed 0 104s
rook-ceph-osd-prepare-kubeworker02-mnhcj 0/1 Completed 0 100s
rook-ceph-osd-prepare-kubeworker03-sbk76 0/1 Completed 0 97s
rook-ceph-tools-598b59df89-77sm7 1/1 Running 0 7m43s
```

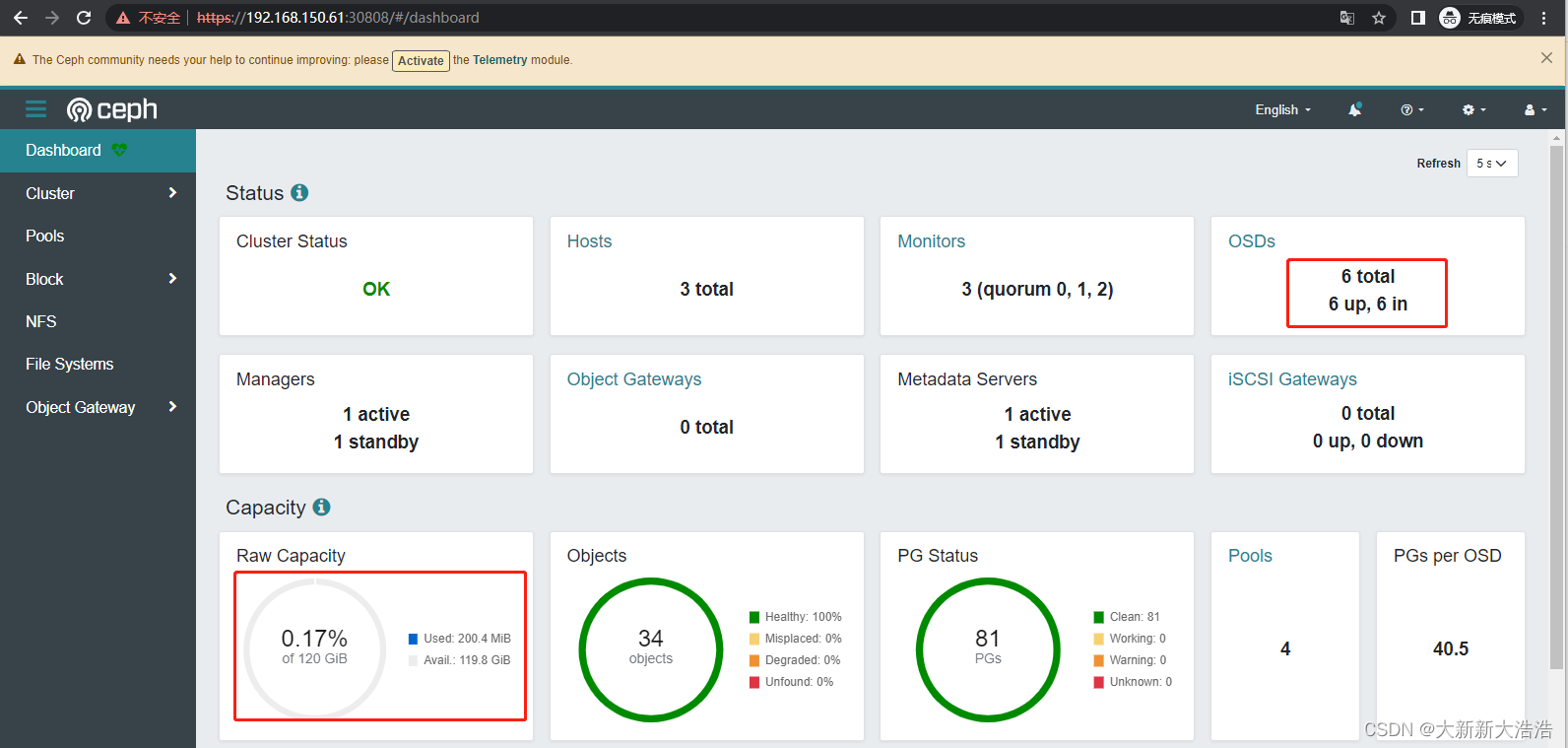
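Scanning a long pod list by eye is error-prone. The hypothetical filter below reads `kubectl get pod` output and prints the pods that are not yet healthy. It encodes the rule used above: Completed is fine, and Running counts only when all containers are ready. The column positions (NAME, READY, STATUS) are taken from the default `kubectl get pod` layout shown in this post.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl -n rook-ceph get pod` output on stdin and
# print every pod that is not yet healthy. A pod counts as healthy when it is
# Completed (e.g. the osd-prepare jobs) or Running with all containers ready.
# Exits 0 only if every pod is healthy.
unhealthy_pods() {
  awk 'BEGIN { bad = 0 }
       NR > 1 {
         split($2, r, "/")   # READY column, e.g. 2/2
         ok = ($3 == "Completed") || ($3 == "Running" && r[1] == r[2])
         if (!ok) { print $1; bad = 1 }
       }
       END { exit bad }'
}
```

Usage: `kubectl -n rook-ceph get pod | unhealthy_pods && echo "all pods healthy"`.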

2.6 Create the toolbox container and check the cluster status

```shell
# Create the toolbox container
kubectl apply -f toolbox.yaml

# Run a query through it
kubectl -n rook-ceph exec -it deploy/rook-ceph-tools -- ceph -s
```

Output:

```text
  cluster:
    id:     3a04d434-a2ac-4f2a-a231-a08ca46c6df3
    health: HEALTH_OK

  services:
    mon: 3 daemons, quorum a,b,c (age 20m)
    mgr: a(active, since 4m), standbys: b
    osd: 6 osds: 6 up (since 3m), 6 in (since 4m)

  data:
    pools:   1 pools, 1 pgs
    objects: 2 objects, 449 KiB
    usage:   521 MiB used, 119 GiB / 120 GiB avail
    pgs:     1 active+clean
```

Alternatively, open a shell inside the toolbox container and run commands there:

```shell
kubectl -n rook-ceph exec -it deploy/rook-ceph-tools -- bash
```

Then check the cluster status:

```text
bash-4.4$ ceph -s
  cluster:
    id:     3a04d434-a2ac-4f2a-a231-a08ca46c6df3
    health: HEALTH_OK

  services:
    mon: 3 daemons, quorum a,b,c (age 21m)
    mgr: a(active, since 5m), standbys: b
    osd: 6 osds: 6 up (since 5m), 6 in (since 5m)

  data:
    pools:   1 pools, 1 pgs
    objects: 2 objects, 449 KiB
    usage:   521 MiB used, 119 GiB / 120 GiB avail
    pgs:     1 active+clean
```
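Two lines of the `ceph -s` output carry the headline result: `health` and the OSD up/in counts. Below is a minimal sketch of a check that a script could gate on. The function name is hypothetical. The parsing assumes the `osd: N osds: N up ..., N in ...` wording shown above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `ceph -s` output on stdin and succeed only when
# health is HEALTH_OK and every OSD is both up and in.
# Assumes the "osd: N osds: N up (...), N in (...)" line format shown above.
ceph_status_ok() {
  awk '
    $1 == "health:" { health = $2 }
    $1 == "osd:" {
      total = $2
      # The count precedes the words "up" and "in"
      for (i = 3; i < NF; i++) {
        if ($(i + 1) ~ /^up/) up = $i
        if ($(i + 1) ~ /^in/) inn = $i
      }
    }
    END { exit !(health == "HEALTH_OK" && total != "" && total == up && total == inn) }'
}
```

Usage: `kubectl -n rook-ceph exec deploy/rook-ceph-tools -- ceph -s | ceph_status_ok && echo "cluster OK"`.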

2.7 Expose the dashboard through a NodePort service

```shell
cat > /data/rook-ceph/dashboard-external-https.yaml <<EOF
apiVersion: v1
kind: Service
metadata:
  name: rook-ceph-mgr-dashboard-external-https
  namespace: rook-ceph
  labels:
    app: rook-ceph-mgr
    rook_cluster: rook-ceph
spec:
  ports:
    - name: dashboard
      port: 8443
      protocol: TCP
      targetPort: 8443
      nodePort: 30808
  selector:
    app: rook-ceph-mgr
    rook_cluster: rook-ceph
  sessionAffinity: None
  type: NodePort
EOF

# Change the nodePort to one that fits your own environment's port plan
kubectl apply -f dashboard-external-https.yaml
# Output:
# service/rook-ceph-mgr-dashboard-external-https created

# Get the admin user's password
kubectl -n rook-ceph get secret rook-ceph-dashboard-password -o jsonpath="{['data']['password']}" | base64 --decode && echo
```

Open https://192.168.150.61:30808 in a browser and log in as admin. A NodePort service is reachable on any node's IP. After logging in you can change the password or create new users.

Login succeeded:

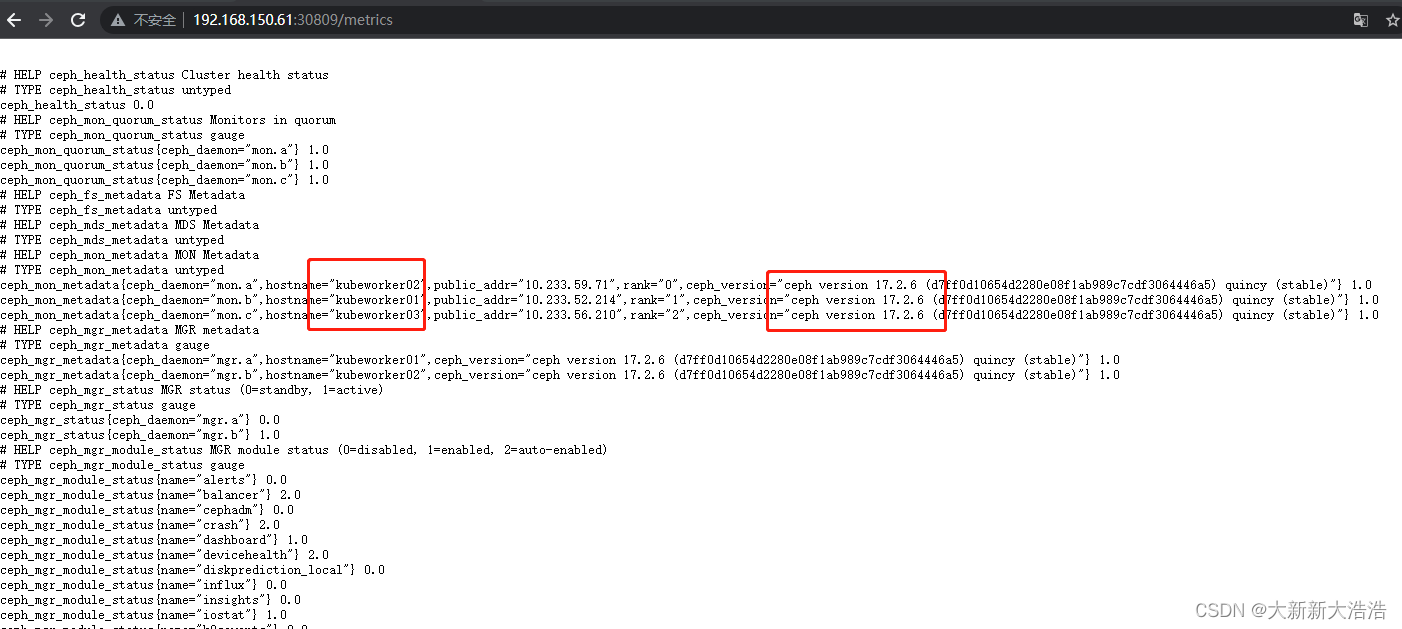

2.8 Expose the Prometheus metrics endpoint through a NodePort service

```shell
cat > /data/rook-ceph/metric-external-https.yaml <<EOF
apiVersion: v1
kind: Service
metadata:
  name: rook-ceph-mgr-metric-external-https
  namespace: rook-ceph
  labels:
    app: rook-ceph-mgr
    rook_cluster: rook-ceph
spec:
  ports:
    - name: metric
      port: 9283
      protocol: TCP
      targetPort: 9283
      nodePort: 30809
  selector:
    app: rook-ceph-mgr
    rook_cluster: rook-ceph
  sessionAffinity: None
  type: NodePort
EOF

# Change the nodePort to one that fits your own environment's port plan
kubectl apply -f metric-external-https.yaml
# Output:
# service/rook-ceph-mgr-metric-external-https created
```

Open http://192.168.150.61:30809/metrics in a browser. The mgr Prometheus module serves plain HTTP, despite the `https` in the service name.
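The dashboard and metrics services differ only in name, port name, port and nodePort. A minimal sketch of a generator that removes the duplication follows. The function `render_mgr_nodeport` is hypothetical. Its output is the same manifest as the heredocs above, piped straight into `kubectl apply -f -` instead of going through a file.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a NodePort Service for the Ceph mgr.
# Usage: render_mgr_nodeport <service_name> <port_name> <port> <node_port>
render_mgr_nodeport() {
  local name="$1" port_name="$2" port="$3" node_port="$4"
  cat <<EOF
apiVersion: v1
kind: Service
metadata:
  name: ${name}
  namespace: rook-ceph
  labels:
    app: rook-ceph-mgr
    rook_cluster: rook-ceph
spec:
  ports:
    - name: ${port_name}
      port: ${port}
      protocol: TCP
      targetPort: ${port}
      nodePort: ${node_port}
  selector:
    app: rook-ceph-mgr
    rook_cluster: rook-ceph
  sessionAffinity: None
  type: NodePort
EOF
}
```

Usage: `render_mgr_nodeport rook-ceph-mgr-dashboard-external-https dashboard 8443 30808 | kubectl apply -f -`. The metrics service uses the same call with `rook-ceph-mgr-metric-external-https metric 9283 30809`.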

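3. Create storage classes

3.1 Create the Ceph RBD storage class

```shell
kubectl apply -f storageclass-rbd.yaml
```

Create a test PVC. Save the manifest to a file and apply it with `kubectl apply -f`:

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: test-rbd-pv-claim
spec:
  storageClassName: rook-ceph-block
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 10G
```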

3.2 Create the CephFS storage class

```shell
kubectl apply -f filesystem.yaml
kubectl apply -f storageclass-cephfs.yaml

# Applying filesystem.yaml creates the rook-ceph-mds-myfs workloads
kubectl -n rook-ceph get pod | grep mds
```

Output:

```text
rook-ceph-mds-myfs-a-5d5754b77-nlcb9 2/2 Running 0 97s
rook-ceph-mds-myfs-b-9f9dd7f6-sc6qm 2/2 Running 0 96s
```

Test PVC. It uses ReadWriteMany, since a CephFS volume can be mounted by several pods at once:

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: test-cephfs-pv-claim
spec:
  storageClassName: rook-cephfs
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 10G
```
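The two test PVCs differ only in name, storage class and access mode. Below is a minimal sketch of a generator that also covers the apply step. The function `render_test_pvc` and its argument order are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a test PVC manifest.
# Usage: render_test_pvc <name> <storageClassName> <accessMode> <size>
render_test_pvc() {
  cat <<EOF
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: $1
spec:
  storageClassName: $2
  accessModes:
    - $3
  resources:
    requests:
      storage: $4
EOF
}
```

Usage:

- `render_test_pvc test-rbd-pv-claim rook-ceph-block ReadWriteOnce 10G | kubectl apply -f -`
- `render_test_pvc test-cephfs-pv-claim rook-cephfs ReadWriteMany 10G | kubectl apply -f -`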

3.3 Check the results

List the storage classes:

```shell
kubectl get storageclass
```

Output:

```text
NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE
local (default) openebs.io/local Delete WaitForFirstConsumer false 21d
rook-ceph-block rook-ceph.rbd.csi.ceph.com Delete Immediate true 4s
rook-cephfs rook-ceph.cephfs.csi.ceph.com Delete Immediate true 4m44s
```

List the PVCs:

```shell
kubectl get pvc -o wide
```

Output:

```text
NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE VOLUMEMODE
test-cephfs-pv-claim Bound pvc-3cdd9e88-2ae2-4e23-9f23-13e095707964 10Gi RWX rook-cephfs 7s Filesystem
test-rbd-pv-claim Bound pvc-55a57b74-b595-4726-8b82-5257fd2d279a 10Gi RWO rook-ceph-block 6s Filesystem
```
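A claim that stays Pending usually points to a provisioner problem. The hypothetical filter below lists any claim in `kubectl get pvc` output whose STATUS column (the second column in the default layout shown above) is not Bound.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get pvc` output on stdin, print the names of
# claims whose STATUS column is not Bound, and succeed only if all are Bound.
unbound_pvcs() {
  awk 'BEGIN { bad = 0 }
       NR > 1 && $2 != "Bound" { print $1; bad = 1 }
       END { exit bad }'
}
```

Usage: `kubectl get pvc | unbound_pvcs && echo "all claims bound"`.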

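Appendix

Debugging

If the cluster does not deploy as expected, the following commands can trigger a redeploy or help find the cause:

```shell
# Restart the Ceph operator so it reconciles and redeploys
kubectl rollout restart deploy rook-ceph-operator -n rook-ceph

# Note: if a new OSD pod fails to start, look for the problem in the osd-prepare logs
kubectl -n rook-ceph logs rook-ceph-osd-prepare-nodeX-XXXXX provision

# Check the status
kubectl -n rook-ceph get CephCluster -o yaml
```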
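The osd-prepare pod name in the log command is a per-node placeholder. The hypothetical helper below picks the real name for one node out of `kubectl get pod` output. It relies on the `rook-ceph-osd-prepare-<node>-<suffix>` naming visible in the pod list earlier in this post.

```shell
#!/usr/bin/env bash
# Hypothetical helper: given `kubectl -n rook-ceph get pod` output on stdin,
# print the osd-prepare pod name(s) for one node.
# Relies on the rook-ceph-osd-prepare-<node>-<suffix> naming seen above.
osd_prepare_pod_for() {
  local node="$1"
  # index() == 1 means "starts with", without treating the prefix as a regex
  awk -v prefix="rook-ceph-osd-prepare-${node}-" 'index($1, prefix) == 1 { print $1 }'
}
```

Usage:

```shell
pod=$(kubectl -n rook-ceph get pod | osd_prepare_pod_for kubeworker01)
kubectl -n rook-ceph logs "$pod" provision
```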

Experiment: raw partitions

I also tested raw partitions as OSD storage on the VMs, and that works too:

```yaml
spec:
  # ... other fields unchanged ...
  priorityClassNames:
    mon: system-node-critical
    osd: system-node-critical
    mgr: system-cluster-critical
  # ... other fields unchanged ...
  storage:
    useAllNodes: false
    useAllDevices: false
    deviceFilter:
    config:
    nodes:
      - name: "kubeworker01"
        devices:
          - name: "vda6"
      - name: "kubeworker02"
        devices:
          - name: "vda6"
      - name: "kubeworker03"
        devices:
          - name: "vda6"
```

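Summary

The multi-node cluster is quite convenient to use. Next I plan to test adding and removing disks, to simulate problems that come up in day-to-day operations. For basic use, Ceph is very pleasant to work with.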