Code:

import requests

from bs4 import BeautifulSoup

import jieba

# Fetch the HTML

url = "http://finance.ifeng.com/a/20180328/16049779_0.shtml"

res = requests.get(url)

res.encoding = 'utf-8'

content = res.text

# Parse the page with BeautifulSoup (bs4)

soup = BeautifulSoup(content, 'html.parser')

div = soup.find(id = 'main_content')

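# Write the article text to a file

filename = 'news.txt'

with open(filename, 'w', encoding='utf-8') as file_object:

    # Write the text of each <p> tag on its own line
    # (find_all('p') avoids writing nested tags' text twice)

    for line in div.find_all('p'):

        file_object.write(line.get_text() + '\n')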

# Segment text with jieba

seg_list = jieba.cut("我来到北京清华大学", cut_all=True)

print("Full Mode: " + "/ ".join(seg_list)) # full mode

seg_list = jieba.cut("我来到北京清华大学", cut_all=False)

print("Default Mode: " + "/ ".join(seg_list)) # accurate mode

seg_list = jieba.cut("他来到了网易杭研大厦") # accurate mode is the default

print(", ".join(seg_list))

with open(filename,'r',encoding='utf-8') as file_object:

with open('cut_news.txt','w',encoding='utf-8') as file_cut_object:

for line in file_object.readlines():

seg_list = jieba.cut(line,cut_all=False)

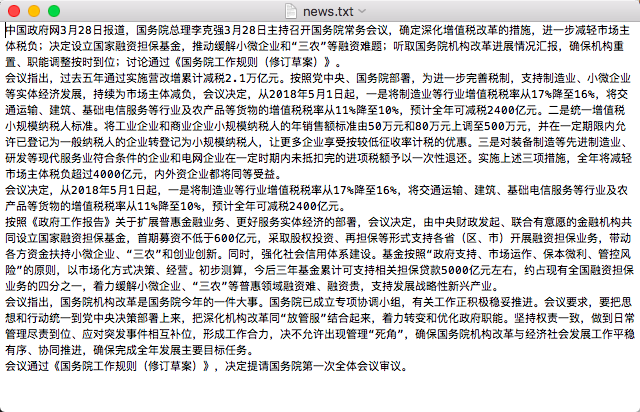

file_cut_object.write('/'.join(seg_list))爬取结果:

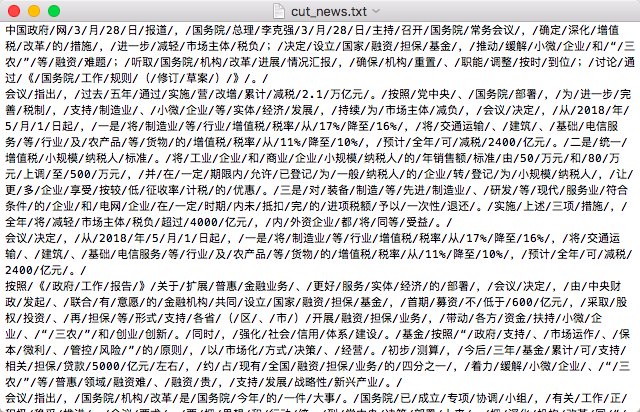

分词结果:
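
The segmentation step above writes each line's tokens joined by `/`. As a quick sanity check on that output, the tokens can be read back and counted. The following is only a minimal sketch: `read_tokens` is a hypothetical helper that is not part of the original script, and the two `sample` lines are hand-written stand-ins in the same `/`-joined layout, not actual program output.

```python
from collections import Counter


def read_tokens(lines):
    """Split '/'-joined lines back into tokens.

    Hypothetical helper: drops empty and whitespace-only pieces,
    such as the trailing newline left at the end of each line.
    """
    tokens = []
    for line in lines:
        for tok in line.split('/'):
            tok = tok.strip()
            if tok:
                tokens.append(tok)
    return tokens


# Hand-written sample in the same layout as cut_news.txt (an assumption)
sample = ["我/来到/北京/清华大学/\n", "他/来到/了/网易/杭研/大厦/\n"]

tokens = read_tokens(sample)
counts = Counter(tokens)
print(counts.most_common(1))
```

With the real file, `sample` could be replaced by `open('cut_news.txt', encoding='utf-8')`. One caveat of using `/` as the separator: if the article text itself contains a `/`, it cannot be told apart from a token boundary when reading the file back, so a separator that cannot appear in the text (for example a tab) may be a safer choice.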