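This article records a verification deployment based on an excellent deployment tutorial recently published on GitHub. The difference is that the original tutorial uses 3 nodes, while 4 nodes are used here. I recommend following the original author's tutorial on GitHub for your own experiments. Some of the problems I ran into have been reported in the GitHub issues or discussions; other readers have reported problems they hit or found as well, and most of them have already been corrected in the GitHub tutorial.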

Problem log:

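I start with the problem log because following the original GitHub tutorial to deploy your own lab environment works better; the problems I ran into are listed here only as a reference. The original tutorial is detailed and vivid, but it focuses on the deployment steps, so the underlying theory has to be learned separately. The original has also contained, and corrected, some minor errors; rather than trusting any single text blindly, check other references when something goes wrong. This article is only my own process notes, kept for reference in later work.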

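1. The NIC hairpin_mode setting

While preparing the environment, the tutorial asks you to set hairpin_mode on the docker network interface. I did not understand why this is required before docker is installed, and indeed it cannot be set at that point, because docker does not exist yet.

Note: in hairpin mode, a bridge port may send a frame back out through the port on which it was received, so traffic from a container can loop back to the same container.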

2. Setting the system parameter net.bridge.bridge-nf-call-iptables=1 (lets iptables process bridged traffic)

Run the following commands on each node:

modprobe br_netfilter

cat > /etc/sysctl.d/kubernetes.conf <<EOF

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

net.ipv4.ip_forward=1

EOF

sysctl -p /etc/sysctl.d/kubernetes.conf

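The original tutorial ran modprobe br_netfilter last. It actually has to run first, because the net.bridge.* keys do not exist until the module is loaded.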

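3. The command that grants the kubernetes certificate access to the kubelet API is run in the wrong order

It should be run only after the kube-apiserver service has started successfully.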

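4. When deploying kube-apiserver, the certificate signing request used a domain name that cannot be parsed: kubernetes.default.svc.cluster.local.

This has been confirmed to be a bug in the domain name syntax validation of go v1.9. It was found and corrected in the latest version of the deployment material, dated June 29. However, the CoreDNS deployment failure it caused, with the error below, cost me two days of searching for an answer!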

E0628 08:10:41.256264 1 reflector.go:205]

github.com/coredns/coredns/plugin/kubernetes/controller.go:319: Failed to list *v1.Namespace: Get https://10.254.0.1:443/api/v1/namespaces?limit=500&resourceVersion=0: tls: failed to parse certificate from server: x509: cannot parse dnsName "kubernetes.default.svc.cluster.local."

Notes on the fix for this bug:

5. How to access the API with the admin key

The correct way is:

curl -sSL --cacert /etc/kubernetes/cert/ca.pem --cert /home/k8s/admin.pem --key /home/k8s/admin-key.pem https://172.16.10.100:6443/api/v1/endpoints

Note: in the original tutorial the key files were not given as absolute paths, so they could not be found and the command failed.

1. System initialization

SSH login information:

- kube-node1, localhost:2222

- kube-node2, localhost:2200

- kube-node3, localhost:2201

- kube-server, localhost:2202

Edit the /etc/hosts file on every machine and add the hostname-to-IP mappings:

172.16.10.100 kube-server

172.16.10.101 kube-node1

172.16.10.102 kube-node2

172.16.10.103 kube-node3

Add a k8s account on every machine that can use sudo without a password:

# useradd -m k8s

# visudo

Append the following line at the end:

k8s ALL=(ALL) NOPASSWD: ALL

On all node machines, add a docker account, add the k8s account to the docker group, and configure the dockerd parameters:

useradd -m docker

gpasswd -a k8s docker

mkdir -p /etc/docker/

cat > /etc/docker/daemon.json <<EOF

{

"registry-mirrors": ["https://hub-mirror.c.163.com", "https://docker.mirrors.ustc.edu.cn"],

"max-concurrent-downloads": 20

}

EOF

Allow kube-server to log in to the k8s and root accounts on all nodes without a password:

[k8s@kube-server k8s]$ ssh-keygen -t rsa

[k8s@kube-server k8s]$ ssh-copy-id root@kube-node1

[k8s@kube-server k8s]$ ssh-copy-id root@kube-node2

[k8s@kube-server k8s]$ ssh-copy-id root@kube-node3

[k8s@kube-server k8s]$ ssh-copy-id k8s@kube-node1

[k8s@kube-server k8s]$ ssh-copy-id k8s@kube-node2

[k8s@kube-server k8s]$ ssh-copy-id k8s@kube-node3

On every machine, add the executable path /opt/k8s/bin to the PATH variable:

[root@kube-server ~]# echo 'PATH=/opt/k8s/bin:$PATH:$HOME/bin:$JAVA_HOME/bin' >>/root/.bashrc

[root@kube-server ~]# su - k8s

[k8s@kube-server ~]$ echo 'PATH=/opt/k8s/bin:$PATH:$HOME/bin:$JAVA_HOME/bin' >>~/.bashrc

Install the dependency packages on every machine:

yum install -y epel-release

yum install -y conntrack ipvsadm ipset jq sysstat curl iptables

Disable the firewall on every machine:

systemctl stop firewalld

systemctl disable firewalld

iptables -F && iptables -X && iptables -F -t nat && iptables -X -t nat

iptables -P FORWARD ACCEPT

Disable the swap partition on every machine. Starting with v1.8, Kubernetes requires swap to be off by default, mainly to guarantee performance:

swapoff -a

Also edit /etc/fstab and comment out the swap partition.

Note: alternatively, set the kubelet flag --fail-swap-on to false to ignore swap being on.
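
The fstab edit can be scripted. The following is a minimal sketch, assuming the swap entry is an ordinary fstab line whose third field is "swap"; the helper name comment_out_swap is my own, and the demo runs on a temporary file so that the real /etc/fstab is not touched.

```shell
#!/usr/bin/env bash
# comment_out_swap <path>: print the file with active swap entries commented out.
# Hypothetical helper, not part of the original tutorial.
comment_out_swap() {
  # Prefix "#" to uncommented lines whose third field (filesystem type) is "swap".
  awk '!/^[ \t]*#/ && $3 == "swap" { print "#" $0; next } { print }' "$1"
}

# Demo on a temporary file with made-up device names.
demo_fstab=$(mktemp)
cat > "$demo_fstab" <<'EOF'
/dev/mapper/centos-root / xfs defaults 0 0
/dev/mapper/centos-swap swap swap defaults 0 0
EOF
comment_out_swap "$demo_fstab"
rm -f "$demo_fstab"
```

To apply it for real, one would review the output first and only then write it back over /etc/fstab, keeping a backup copy.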

Set the system parameter net.bridge.bridge-nf-call-iptables=1 (lets iptables process bridged traffic).

Run the following commands on each node:

modprobe br_netfilter

cat > /etc/sysctl.d/kubernetes.conf <<EOF

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

net.ipv4.ip_forward=1

vm.swappiness=0

EOF

sysctl -p /etc/sysctl.d/kubernetes.conf
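
Before moving on it can help to confirm that the configuration file really contains the three networking keys. The sketch below only inspects a file, it does not query the running kernel; the function name missing_sysctl_keys is hypothetical, and the demo uses a temporary file instead of /etc/sysctl.d/kubernetes.conf.

```shell
#!/usr/bin/env bash
# missing_sysctl_keys <conf-file>: print every required key that is not set to 1
# in the given file. Hypothetical helper, not part of the original tutorial.
missing_sysctl_keys() {
  local file="$1" key
  for key in net.bridge.bridge-nf-call-iptables \
             net.bridge.bridge-nf-call-ip6tables \
             net.ipv4.ip_forward; do
    # Allow optional spaces around "=".
    grep -Eq "^[[:space:]]*${key}[[:space:]]*=[[:space:]]*1[[:space:]]*$" "$file" || echo "$key"
  done
}

# Demo: the ip6tables key is deliberately left out of the sample file.
demo_conf=$(mktemp)
printf '%s\n' 'net.bridge.bridge-nf-call-iptables=1' 'net.ipv4.ip_forward=1' > "$demo_conf"
missing_sysctl_keys "$demo_conf"   # prints net.bridge.bridge-nf-call-ip6tables
rm -f "$demo_conf"
```

An empty output means all three keys are present with value 1.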

As the k8s user, create the directories on every machine:

sudo mkdir -p /opt/k8s/bin

sudo chown -R k8s /opt/k8s

sudo mkdir -p /etc/kubernetes/cert

sudo chown -R k8s /etc/kubernetes

sudo mkdir -p /etc/etcd/cert

sudo chown -R k8s /etc/etcd/cert

sudo mkdir -p /var/lib/etcd && sudo chown -R k8s /var/lib/etcd

The later deployment steps use the global environment variables defined below; adjust them to your own machines and network:

#!/usr/bin/bash

# Encryption key needed to generate the EncryptionConfig

ENCRYPTION_KEY=$(head -c 32 /dev/urandom | base64)

# Preferably use currently unused ranges for the service and Pod networks

# Service network: not routable before deployment, routable inside the cluster afterwards (guaranteed by kube-proxy and ipvs)

SERVICE_CIDR="10.254.0.0/16"

# Pod network, a /16 range is recommended: not routable before deployment, routable inside the cluster afterwards (guaranteed by flanneld)

CLUSTER_CIDR="172.30.0.0/16"

# Service port range (NodePort range)

export NODE_PORT_RANGE="8400-9000"

# Array of cluster machine IPs

export NODE_IPS=(172.16.10.101 172.16.10.102 172.16.10.103)

# Array of host names matching the cluster IPs

export NODE_NAMES=(kube-node1 kube-node2 kube-node3)

# kube-apiserver node IP

export MASTER_IP=172.16.10.100

# kube-apiserver https address

export KUBE_APISERVER="https://${MASTER_IP}:6443"

# etcd cluster client endpoints

export ETCD_ENDPOINTS="https://172.16.10.101:2379,https://172.16.10.102:2379,https://172.16.10.103:2379"

# IPs and ports for communication between etcd cluster members

export ETCD_NODES="kube-node1=https://172.16.10.101:2380,kube-node2=https://172.16.10.102:2380,kube-node3=https://172.16.10.103:2380"

# flanneld network configuration prefix

export FLANNEL_ETCD_PREFIX="/kubernetes/network"

# kubernetes service IP (usually the first IP in SERVICE_CIDR)

export CLUSTER_KUBERNETES_SVC_IP="10.254.0.1"

# Cluster DNS service IP (pre-allocated from SERVICE_CIDR)

export CLUSTER_DNS_SVC_IP="10.254.0.2"

# Cluster DNS domain

export CLUSTER_DNS_DOMAIN="cluster.local."

# Add the binary directory /opt/k8s/bin to PATH

export PATH=/opt/k8s/bin:$PATH

Save the content above as /opt/k8s/bin/environment.sh and distribute it to every node.

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "mkdir -p /opt/k8s/bin && chown -R k8s /opt/k8s/bin"

scp /opt/k8s/bin/environment.sh k8s@${node_ip}:/opt/k8s/bin/environment.sh

done
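
In environment.sh the etcd address lists repeat the node IPs by hand, which is easy to get wrong when the node list changes. As a sketch only (the tutorial hardcodes these strings), the two lists could be derived from NODE_IPS and NODE_NAMES; join_by is a hypothetical helper of my own.

```shell
#!/usr/bin/env bash
NODE_IPS=(172.16.10.101 172.16.10.102 172.16.10.103)
NODE_NAMES=(kube-node1 kube-node2 kube-node3)

# join_by <separator> <items...>: print the items joined by the separator.
join_by() {
  local sep="$1"; shift
  local out="" item
  for item in "$@"; do
    out+="${out:+$sep}$item"
  done
  printf '%s' "$out"
}

client_urls=()
peer_urls=()
for i in "${!NODE_IPS[@]}"; do
  client_urls+=("https://${NODE_IPS[i]}:2379")                    # client port
  peer_urls+=("${NODE_NAMES[i]}=https://${NODE_IPS[i]}:2380")     # peer port
done

ETCD_ENDPOINTS=$(join_by , "${client_urls[@]}")
ETCD_NODES=$(join_by , "${peer_urls[@]}")
echo "ETCD_ENDPOINTS=${ETCD_ENDPOINTS}"
echo "ETCD_NODES=${ETCD_NODES}"
```

The resulting values are the same strings that environment.sh defines, so the rest of the steps would not need to change.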

2. Creating the CA certificate and key

All certificates are created with cfssl, CloudFlare's PKI toolkit.

Install the cfssl toolkit

sudo mkdir -p /opt/k8s/cert && sudo chown -R k8s /opt/k8s && cd /opt/k8s

wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

mv cfssl_linux-amd64 /opt/k8s/bin/cfssl

wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

mv cfssljson_linux-amd64 /opt/k8s/bin/cfssljson

wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

mv cfssl-certinfo_linux-amd64 /opt/k8s/bin/cfssl-certinfo

chmod +x /opt/k8s/bin/*

export PATH=/opt/k8s/bin:$PATH

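Create the root certificate (CA)

The CA configuration file defines the usage scenarios (profiles) of the root certificate and their parameters (usages, expiry, server authentication, client authentication, encryption, and so on). A specific profile must be named later when signing other certificates.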

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "87600h"

}

}

}

}

EOF

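- signing: the certificate can be used to sign other certificates; the generated ca.pem contains CA=TRUE;

- server auth: a client can use this certificate to verify the certificate presented by a server;

- client auth: a server can use this certificate to verify the certificate presented by a client;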

Create the certificate signing request file

cat > ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "testcorp"

}

]

}

EOF

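- CN: Common Name. kube-apiserver extracts this field from the certificate as the User Name of the request; browsers use it to verify that a website is legitimate;

- O: Organization. kube-apiserver extracts this field from the certificate as the Group the requesting user belongs to;

kube-apiserver uses the extracted User and Group (kubernetes, k8s) as the identity for RBAC authorization;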
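
To make the CN-to-User and O-to-Group mapping concrete, here is a small sketch that pulls both fields out of a certificate subject string. The slash-separated subject format is an assumption (it is how older openssl versions print a subject), and subject_field is a hypothetical helper; kube-apiserver itself reads these fields from the certificate, not from a string like this.

```shell
#!/usr/bin/env bash
# subject_field <subject> <key>: print the value of one field of a
# slash-separated subject string such as /C=CN/O=k8s/CN=kubernetes.
subject_field() {
  # Split on "/" and compare the key exactly, so that C does not match CN.
  printf '%s\n' "$1" | tr '/' '\n' | awk -F= -v key="$2" '$1 == key { print $2 }'
}

# Subject built from the names used in ca-csr.json.
subject="/C=CN/ST=BeiJing/L=BeiJing/O=k8s/OU=testcorp/CN=kubernetes"
echo "User:  $(subject_field "$subject" CN)"
echo "Group: $(subject_field "$subject" O)"
```

For the admin certificate created later, the same extraction would yield the user admin and the group system:masters.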

Generate the CA certificate and private key

[k8s@kube-server ~]$ cfssl gencert -initca ca-csr.json | cfssljson -bare ca

2018/06/25 13:45:47 [INFO] generating a new CA key and certificate from CSR

2018/06/25 13:45:47 [INFO] generate received request

2018/06/25 13:45:47 [INFO] received CSR

2018/06/25 13:45:47 [INFO] generating key: rsa-2048

2018/06/25 13:45:47 [INFO] encoded CSR

2018/06/25 13:45:47 [INFO] signed certificate with serial number 333080208048507116448165428577682216785536827857

[k8s@kube-server ~]$ ls ca*

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem

[k8s@kube-server ~]$

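Distribute the certificate files

Copy the generated CA certificate, key file, and configuration file to the /etc/kubernetes/cert directory on all nodes:

source /opt/k8s/bin/environment.sh # import the NODE_IPS environment variable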

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp ca*.pem ca-config.json k8s@${node_ip}:/etc/kubernetes/cert

done

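3. Deploying the kubectl command-line tool

By default kubectl reads the kube-apiserver address, certificates, user name, and other information from the ~/.kube/config file.

Download and distribute the kubectl binary

Download and extract: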

wget https://dl.k8s.io/v1.10.4/kubernetes-client-linux-amd64.tar.gz

tar -xzvf kubernetes-client-linux-amd64.tar.gz

Distribute it to every node that uses kubectl:

source /opt/k8s/bin/environment.sh # import the NODE_IPS environment variable

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp kubernetes/client/bin/kubectl k8s@${node_ip}:/opt/k8s/bin/

ssh k8s@${node_ip} "chmod +x /opt/k8s/bin/*"

done

cp kubernetes/client/bin/kubectl /opt/k8s/bin/

chmod +x /opt/k8s/bin/*

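Create the admin certificate and private key

kubectl talks to the apiserver over the secure https port, and the apiserver authenticates and authorizes the certificate it presents.

As the cluster management tool, kubectl needs the highest privileges, so an admin certificate with full privileges is created here.

Create the certificate signing request: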

cat > admin-csr.json <<EOF

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:masters",

"OU": "testcorp"

}

]

}

EOF

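- O is system:masters; when kube-apiserver receives this certificate it sets the Group of the request to system:masters;

- the predefined ClusterRoleBinding cluster-admin binds the Group system:masters to the Role cluster-admin, which grants access to all APIs;

- the certificate is only used by kubectl as a client certificate, so the hosts field is empty;

Generate the certificate and private key: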

cfssl gencert -ca=/etc/kubernetes/cert/ca.pem -ca-key=/etc/kubernetes/cert/ca-key.pem -config=/etc/kubernetes/cert/ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

ls admin*

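Create the kubeconfig file

The kubeconfig is kubectl's configuration file. It contains everything needed to access the apiserver, such as the apiserver address, the CA certificate, and kubectl's own certificate.

source /opt/k8s/bin/environment.sh

# Set the cluster parameters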

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/cert/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kubectl.kubeconfig

# Set the client authentication parameters

kubectl config set-credentials admin \

--client-certificate=admin.pem \

--client-key=admin-key.pem \

--embed-certs=true \

--kubeconfig=kubectl.kubeconfig

# Set the context parameters

kubectl config set-context kubernetes \

--cluster=kubernetes \

--user=admin \

--kubeconfig=kubectl.kubeconfig

# Set the default context

kubectl config use-context kubernetes --kubeconfig=kubectl.kubeconfig

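- --certificate-authority: the root certificate used to verify the kube-apiserver certificate;

- --client-certificate, --client-key: the admin certificate and private key just generated, used when connecting to kube-apiserver;

- --embed-certs=true: embeds the contents of ca.pem and admin.pem in the generated kubectl.kubeconfig file (without it, only the certificate file paths are written);

Distribute the kubeconfig file

Distribute it to every node that uses the kubectl command:

source /opt/k8s/bin/environment.sh # import the NODE_IPS environment variable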

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh k8s@${node_ip} "mkdir -p ~/.kube"

scp kubectl.kubeconfig k8s@${node_ip}:~/.kube/config

ssh root@${node_ip} "mkdir -p ~/.kube"

scp kubectl.kubeconfig root@${node_ip}:~/.kube/config

done

mkdir -p /home/k8s/.kube && cp kubectl.kubeconfig /home/k8s/.kube/config

sudo mkdir -p /root/.kube

sudo cp kubectl.kubeconfig /root/.kube/config

The file is saved as ~/.kube/config for each user.

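4. Deploying the etcd cluster

A highly available etcd cluster is deployed on kube-node1, kube-node2, and kube-node3.

Download and distribute the etcd binaries

Download the latest release package from the https://github.com/coreos/etcd/releases page:

tar -xvf etcd-v3.3.7-linux-amd64.tar.gz

Distribute the binaries to all cluster nodes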

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp etcd-v3.3.7-linux-amd64/etcd* k8s@${node_ip}:/opt/k8s/bin

ssh k8s@${node_ip} "chmod +x /opt/k8s/bin/*"

done

Create the etcd certificate and private key

Create the certificate signing request:

cat > etcd-csr.json <<EOF

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"172.16.10.101",

"172.16.10.102",

"172.16.10.103"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "testcorp"

}

]

}

EOF

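The hosts field lists the etcd node IPs or domain names authorized to use this certificate; all three etcd node IPs are listed here.

Generate the certificate and private key: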

cfssl gencert -ca=/etc/kubernetes/cert/ca.pem \

-ca-key=/etc/kubernetes/cert/ca-key.pem \

-config=/etc/kubernetes/cert/ca-config.json \

-profile=kubernetes etcd-csr.json | cfssljson -bare etcd

Distribute the generated certificate and private key to each etcd node:

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "mkdir -p /etc/etcd/cert && chown -R k8s /etc/etcd/cert"

scp etcd*.pem k8s@${node_ip}:/etc/etcd/cert/

done

Create the etcd systemd unit template file

source /opt/k8s/bin/environment.sh

cat > etcd.service.template <<EOF

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

Documentation=https://github.com/coreos

[Service]

User=k8s

Type=notify

WorkingDirectory=/var/lib/etcd/

ExecStart=/opt/k8s/bin/etcd \\

--data-dir=/var/lib/etcd \\

--name=##NODE_NAME## \\

--cert-file=/etc/etcd/cert/etcd.pem \\

--key-file=/etc/etcd/cert/etcd-key.pem \\

--trusted-ca-file=/etc/kubernetes/cert/ca.pem \\

--peer-cert-file=/etc/etcd/cert/etcd.pem \\

--peer-key-file=/etc/etcd/cert/etcd-key.pem \\

--peer-trusted-ca-file=/etc/kubernetes/cert/ca.pem \\

--peer-client-cert-auth \\

--client-cert-auth \\

--listen-peer-urls=https://##NODE_IP##:2380 \\

--initial-advertise-peer-urls=https://##NODE_IP##:2380 \\

--listen-client-urls=https://##NODE_IP##:2379,http://127.0.0.1:2379 \\

--advertise-client-urls=https://##NODE_IP##:2379 \\

--initial-cluster-token=etcd-cluster-0 \\

--initial-cluster=${ETCD_NODES} \\

--initial-cluster-state=new

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

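- User: run as the k8s account;

- WorkingDirectory, --data-dir: set the working directory and data directory to /var/lib/etcd, which must be created before the service starts;

- --name: the node name; when --initial-cluster-state is new, the value of --name must appear in the --initial-cluster list;

- --cert-file, --key-file: the certificate and private key the etcd server uses when communicating with clients;

- --peer-client-cert-auth, --client-cert-auth: require peers and clients, respectively, to present a certificate signed by the trusted CA;

- --trusted-ca-file: the CA certificate that signed the client certificates, used to verify them;

- --peer-cert-file, --peer-key-file: the certificate and private key etcd uses when communicating with peers;

- --peer-trusted-ca-file: the CA certificate that signed the peer certificates, used to verify them;

Create and distribute the etcd systemd unit file for each node

Replace the variables in the template to create a systemd unit file for each node: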

source /opt/k8s/bin/environment.sh

for (( i=0; i < 3; i++ ))

do

sed -e "s/##NODE_NAME##/${NODE_NAMES[i]}/" -e "s/##NODE_IP##/${NODE_IPS[i]}/" etcd.service.template > etcd-${NODE_IPS[i]}.service

done

ls *.service

NODE_NAMES and NODE_IPS are bash arrays of equal length holding the node names and the corresponding IPs.
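
The placeholder substitution can be tried without touching any real unit file. The sketch below uses a two-line stand-in for etcd.service.template and adds a check that no ##...## placeholder survives; render_unit is a hypothetical helper, and unlike the command in the tutorial it replaces every occurrence on a line (the g flag).

```shell
#!/usr/bin/env bash
NODE_NAMES=(kube-node1 kube-node2 kube-node3)
NODE_IPS=(172.16.10.101 172.16.10.102 172.16.10.103)

# render_unit <name> <ip>: read a template on stdin, write the rendered text to stdout.
render_unit() {
  sed -e "s/##NODE_NAME##/$1/g" -e "s/##NODE_IP##/$2/g"
}

# Stand-in for etcd.service.template (quoted heredoc, so nothing is expanded here).
template=$(cat <<'EOF'
--name=##NODE_NAME##
--listen-peer-urls=https://##NODE_IP##:2380
EOF
)

for i in "${!NODE_IPS[@]}"; do
  rendered=$(printf '%s\n' "$template" | render_unit "${NODE_NAMES[i]}" "${NODE_IPS[i]}")
  # Stop if any placeholder was left unreplaced.
  if printf '%s\n' "$rendered" | grep -q '##'; then
    echo "unreplaced placeholder for ${NODE_IPS[i]}" >&2
    exit 1
  fi
  echo ">>> ${NODE_IPS[i]}"
  printf '%s\n' "$rendered"
done
```

Iterating over the array indices instead of a fixed count of 3 keeps the loop correct if nodes are added or removed.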

Distribute the generated systemd unit files:

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "mkdir -p /var/lib/etcd && chown -R k8s /var/lib/etcd" # create the etcd data and working directory

scp etcd-${node_ip}.service root@${node_ip}:/etc/systemd/system/etcd.service

done

The file is renamed to etcd.service on the target node.

Start the etcd service

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "source /opt/k8s/bin/environment.sh && systemctl daemon-reload && systemctl enable etcd && systemctl start etcd"

done

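When an etcd process starts for the first time it waits for the other etcd nodes to join the cluster, so systemctl start etcd hangs for a while; this is normal.

Check the startup result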

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh k8s@${node_ip} "systemctl status etcd|grep Active"

done

>>> 172.16.10.101

Active: active (running) since Mon 2018-06-25 17:17:52 UTC; 58s ago

>>> 172.16.10.102

Active: active (running) since Mon 2018-06-25 17:17:52 UTC; 58s ago

>>> 172.16.10.103

Active: active (running) since Mon 2018-06-25 17:17:58 UTC; 53s ago

Verify the service status

After the etcd cluster is deployed, run the following on any etcd node:

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ETCDCTL_API=3 /opt/k8s/bin/etcdctl \

--endpoints=https://${node_ip}:2379 \

--cacert=/etc/kubernetes/cert/ca.pem \

--cert=/etc/etcd/cert/etcd.pem \

--key=/etc/etcd/cert/etcd-key.pem endpoint health

done

>>> 172.16.10.101

https://172.16.10.101:2379 is healthy: successfully committed proposal: took = 1.918083ms

>>> 172.16.10.102

https://172.16.10.102:2379 is healthy: successfully committed proposal: took = 2.779171ms

>>> 172.16.10.103

https://172.16.10.103:2379 is healthy: successfully committed proposal: took = 2.684327ms

The cluster is working properly when every endpoint reports healthy.
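
Reading the health lines by eye works for three nodes; for a scripted check the output can be counted instead. This is a sketch under the assumption that a failing endpoint line does not contain the words "is healthy"; the unhealthy sample line below is hypothetical, and count_unhealthy is my own helper name.

```shell
#!/usr/bin/env bash
# count_unhealthy: read "endpoint health" output on stdin and print how many
# endpoint lines lack "is healthy". Header lines such as ">>> IP" are ignored.
count_unhealthy() {
  awk '/^https?:\/\// && !/is healthy/ { n++ } END { print n + 0 }'
}

# One healthy line taken from the output above, plus one hypothetical failure line.
sample='>>> 172.16.10.101
https://172.16.10.101:2379 is healthy: successfully committed proposal: took = 1.918083ms
>>> 172.16.10.102
https://172.16.10.102:2379 is unhealthy: failed to connect'

bad=$(printf '%s\n' "$sample" | count_unhealthy)
echo "unhealthy endpoints: ${bad}"
```

In practice the output of the etcdctl loop would be piped into count_unhealthy, and a non-zero count treated as a failed deployment step.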

5. Deploying the flannel network

Download and distribute the flanneld binaries

Download the latest release package from the https://github.com/coreos/flannel/releases page:

mkdir flannel

tar -xzvf flannel-v0.10.0-linux-amd64.tar.gz -C flannel

Distribute the flanneld binaries to all cluster nodes:

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp flannel/{flanneld,mk-docker-opts.sh} k8s@${node_ip}:/opt/k8s/bin/

ssh k8s@${node_ip} "chmod +x /opt/k8s/bin/*"

done

These commands are run on the kube-server node, so a copy is also needed on this node:

cp flannel/flanneld flannel/mk-docker-opts.sh /opt/k8s/bin/

chmod +x /opt/k8s/bin/*

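Create the flannel certificate and private key

flannel reads and writes subnet allocation information in the etcd cluster, and the etcd cluster has mutual x509 certificate authentication enabled, so a certificate and private key must be generated for flanneld.

Create the certificate signing request: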

cat > flanneld-csr.json <<EOF

{

"CN": "flanneld",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "testcorp"

}

]

}

EOF

The certificate is only used by flanneld as a client certificate, so the hosts field is empty.

Generate the certificate and private key:

cfssl gencert -ca=/etc/kubernetes/cert/ca.pem \

-ca-key=/etc/kubernetes/cert/ca-key.pem \

-config=/etc/kubernetes/cert/ca-config.json \

-profile=kubernetes flanneld-csr.json | cfssljson -bare flanneld

ls flanneld*pem

Distribute the generated certificate and private key to all nodes (master and worker):

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "mkdir -p /etc/flanneld/cert && chown -R k8s /etc/flanneld"

scp flanneld*.pem k8s@${node_ip}:/etc/flanneld/cert

done

kube-server itself also needs a copy:

sudo mkdir -p /etc/flanneld/cert &&sudo chown -R k8s /etc/flanneld

cp flanneld*.pem /etc/flanneld/cert

Write the cluster Pod network information to etcd

Note: this step only needs to be run once, on any one node.

source /opt/k8s/bin/environment.sh

etcdctl \

--endpoints=${ETCD_ENDPOINTS} \

--ca-file=/etc/kubernetes/cert/ca.pem \

--cert-file=/etc/flanneld/cert/flanneld.pem \

--key-file=/etc/flanneld/cert/flanneld-key.pem \

set ${FLANNEL_ETCD_PREFIX}/config '{"Network":"'${CLUSTER_CIDR}'", "SubnetLen": 24, "Backend": {"Type": "vxlan"}}'

Expected output, similar to:

{"Network":"172.30.0.0/16", "SubnetLen": 24, "Backend": {"Type": "vxlan"}}

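- the current flanneld version (v0.10.0) does not support etcd v3, so the configuration key and network data are written with the etcd v2 API;

- the Pod network ${CLUSTER_CIDR} written here is a /16 range; it has to be larger than the /24 per-node subnets carved out of it, and it must match the --cluster-cidr value of kube-controller-manager;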
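
The JSON value passed to etcdctl is assembled with nested quotes, which is easy to mistype. Below is a sketch that builds the same string in a function and adds a simple sanity check of my own (the network prefix must be shorter than SubnetLen); it is not flannel's own validation, and flannel_config is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# flannel_config <cidr> [subnet_len] [backend]: print the flannel network
# configuration JSON, or fail if the network cannot hold subnets of that size.
flannel_config() {
  local cidr="$1" subnet_len="${2:-24}" backend="${3:-vxlan}"
  local prefix="${cidr#*/}"   # the part after the slash, e.g. 16
  if [ "$prefix" -ge "$subnet_len" ]; then
    echo "network prefix /${prefix} must be shorter than SubnetLen ${subnet_len}" >&2
    return 1
  fi
  printf '{"Network":"%s", "SubnetLen": %s, "Backend": {"Type": "%s"}}\n' \
    "$cidr" "$subnet_len" "$backend"
}

CLUSTER_CIDR="172.30.0.0/16"
flannel_config "$CLUSTER_CIDR"
```

The printed string could then be passed as the value argument of the etcdctl set command shown earlier.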

Create the flanneld systemd unit file

source /opt/k8s/bin/environment.sh

export IFACE=enp0s8 # name of the network interface that connects the nodes

cat > flanneld.service << EOF

[Unit]

Description=Flanneld overlay address etcd agent

After=network.target

After=network-online.target

Wants=network-online.target

After=etcd.service

Before=docker.service

[Service]

Type=notify

ExecStart=/opt/k8s/bin/flanneld \\

-etcd-cafile=/etc/kubernetes/cert/ca.pem \\

-etcd-certfile=/etc/flanneld/cert/flanneld.pem \\

-etcd-keyfile=/etc/flanneld/cert/flanneld-key.pem \\

-etcd-endpoints=${ETCD_ENDPOINTS} \\

-etcd-prefix=${FLANNEL_ETCD_PREFIX} \\

-iface=${IFACE}

ExecStartPost=/opt/k8s/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/docker

Restart=on-failure

[Install]

WantedBy=multi-user.target

RequiredBy=docker.service

EOF

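- the mk-docker-opts.sh script writes the Pod subnet assigned to flanneld into the /run/flannel/docker file; docker later uses the environment variables in this file to configure the docker0 bridge when it starts;

- by default flanneld uses the interface that carries the system default route to talk to other nodes; on nodes with several network interfaces (for example internal and public), the -iface flag selects the interface to use;

- flanneld needs root privileges to run;

Distribute the flanneld systemd unit file to all nodes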

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp flanneld.service root@${node_ip}:/etc/systemd/system/

done

This includes the kube-server node itself:

sudo cp flanneld.service /etc/systemd/system/

Start the flanneld service

On the kube-server node:

sudo systemctl daemon-reload && sudo systemctl enable flanneld && sudo systemctl start flanneld

Start the flanneld service on the other nodes, still running from kube-server:

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "systemctl daemon-reload && systemctl enable flanneld && systemctl start flanneld"

done

Check the startup result

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh k8s@${node_ip} "systemctl status flanneld|grep Active"

done

Check the Pod subnets assigned to each flanneld

On one node, view the cluster Pod network (/16):

source /opt/k8s/bin/environment.sh

etcdctl \

--endpoints=${ETCD_ENDPOINTS} \

--ca-file=/etc/kubernetes/cert/ca.pem \

--cert-file=/etc/flanneld/cert/flanneld.pem \

--key-file=/etc/flanneld/cert/flanneld-key.pem \

get ${FLANNEL_ETCD_PREFIX}/config

Output:

{"Network":"172.30.0.0/16", "SubnetLen": 24, "Backend": {"Type": "vxlan"}}

View the list of allocated Pod subnets (/24):

source /opt/k8s/bin/environment.sh

etcdctl \

--endpoints=${ETCD_ENDPOINTS} \

--ca-file=/etc/kubernetes/cert/ca.pem \

--cert-file=/etc/flanneld/cert/flanneld.pem \

--key-file=/etc/flanneld/cert/flanneld-key.pem \

ls ${FLANNEL_ETCD_PREFIX}/subnets

Output:

/kubernetes/network/subnets/172.30.46.0-24

/kubernetes/network/subnets/172.30.49.0-24

/kubernetes/network/subnets/172.30.7.0-24

/kubernetes/network/subnets/172.30.48.0-24
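
The subnet keys encode the prefix length after a dash (172.30.46.0-24) rather than a slash. A small sketch for turning such a key back into ordinary CIDR notation, using only bash parameter expansion; subnet_key_to_cidr is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# subnet_key_to_cidr <etcd-key>: convert a flannel subnet key such as
# /kubernetes/network/subnets/172.30.46.0-24 to CIDR notation.
subnet_key_to_cidr() {
  local name="${1##*/}"                          # drop the key path, keep 172.30.46.0-24
  printf '%s/%s\n' "${name%-*}" "${name##*-}"    # address part, then prefix length
}

# Keys copied from the listing above.
for key in /kubernetes/network/subnets/172.30.46.0-24 \
           /kubernetes/network/subnets/172.30.7.0-24; do
  subnet_key_to_cidr "$key"
done
```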

View the node IP and flannel interface address for a given Pod subnet:

source /opt/k8s/bin/environment.sh

etcdctl \

--endpoints=${ETCD_ENDPOINTS} \

--ca-file=/etc/kubernetes/cert/ca.pem \

--cert-file=/etc/flanneld/cert/flanneld.pem \

--key-file=/etc/flanneld/cert/flanneld-key.pem \

get ${FLANNEL_ETCD_PREFIX}/subnets/172.30.46.0-24

Output:

{"PublicIP":"172.16.10.100","BackendType":"vxlan","BackendData":{"VtepMAC":"92:dc:8d:eb:f2:bf"}}

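Verify that the nodes can reach each other over the Pod network

After flannel is deployed on each node, check that the flannel interface was created (its name may be flannel0, flannel.0, flannel.1, and so on):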

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh ${node_ip} "/usr/sbin/ip addr show flannel.1|grep -w inet"

done

Output:

>>> 172.16.10.101

inet 172.30.49.0/32 scope global flannel.1

>>> 172.16.10.102

inet 172.30.7.0/32 scope global flannel.1

>>> 172.16.10.103

inet 172.30.48.0/32 scope global flannel.1

From each node, ping all flannel interface IPs and make sure they are reachable:

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh ${node_ip} "ping -c 1 172.30.46.0"

ssh ${node_ip} "ping -c 1 172.30.49.0"

ssh ${node_ip} "ping -c 1 172.30.7.0"

ssh ${node_ip} "ping -c 1 172.30.48.0"

done

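6. Deploying the master node

The kubernetes master node runs the following components:

- kube-apiserver

- kube-scheduler

- kube-controller-manager

All 3 components can run as multiple instances. kube-scheduler and kube-controller-manager use leader election to pick one working process while the others block; kube-apiserver is stateless, so all of its instances serve requests at the same time.

Download the latest binaries

Download the server tarball from the CHANGELOG page.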

tar -xzvf kubernetes-server-linux-amd64.tar.gz

Copy the binaries to all master nodes

There is only one master node here, so:

cp kubernetes/server/bin/* /opt/k8s/bin/

chmod +x /opt/k8s/bin/*

6.1 Deploying the kube-apiserver component

Create the kubernetes certificate and private key

Create the certificate signing request:

source /opt/k8s/bin/environment.sh

cat > kubernetes-csr.json <<EOF

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"172.16.10.100",

"10.254.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "testcorp"

}

]

}

EOF

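- the hosts field lists the IPs and domain names authorized to use this certificate; here it contains the apiserver node IP and the IP and domain names of the kubernetes service;

- a domain name must not end with . (for example, kubernetes.default.svc.cluster.local. is not allowed), otherwise parsing fails with: x509: cannot parse dnsName "kubernetes.default.svc.cluster.local.";

- if a domain other than cluster.local is used, such as opsnull.com, change the last two names in the list to kubernetes.default.svc.opsnull and kubernetes.default.svc.opsnull.com;

- the kubernetes service IP is created automatically by the apiserver and is usually the first IP of the range given by --service-cluster-ip-range;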
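
Two of the points above lend themselves to a quick check before the CSR is written: the service IP is the first address of the service range, and no DNS name may keep a trailing dot (note that CLUSTER_DNS_DOMAIN in environment.sh does end with one). A sketch, assuming the CIDR is given with its network address; both helper names are hypothetical.

```shell
#!/usr/bin/env bash
# first_service_ip <cidr>: print the network address plus one, e.g. 10.254.0.1.
# Assumes the address part of the CIDR is the network address itself.
first_service_ip() {
  local base="${1%/*}" a b c d
  IFS=. read -r a b c d <<< "$base"
  local n=$(( (a << 24) + (b << 16) + (c << 8) + d + 1 ))
  printf '%d.%d.%d.%d\n' \
    $(( (n >> 24) & 255 )) $(( (n >> 16) & 255 )) $(( (n >> 8) & 255 )) $(( n & 255 ))
}

# strip_trailing_dot <name>: remove one trailing "." so the name is safe for a certificate.
strip_trailing_dot() {
  printf '%s\n' "${1%.}"
}

SERVICE_CIDR="10.254.0.0/16"
CLUSTER_DNS_DOMAIN="cluster.local."
echo "service IP: $(first_service_ip "$SERVICE_CIDR")"
echo "SAN name:   kubernetes.default.svc.$(strip_trailing_dot "$CLUSTER_DNS_DOMAIN")"
```

Deriving the SAN entry this way avoids the trailing-dot certificate that caused the CoreDNS failure described in the problem log.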

Generate the certificate and private key:

cfssl gencert -ca=/etc/kubernetes/cert/ca.pem \

-ca-key=/etc/kubernetes/cert/ca-key.pem \

-config=/etc/kubernetes/cert/ca-config.json \

-profile=kubernetes kubernetes-csr.json | cfssljson -bare kubernetes

ls kubernetes*

Copy the generated certificate and private key files to the master node:

sudo mkdir -p /etc/kubernetes/cert/ && sudo chown -R k8s /etc/kubernetes/cert/

cp kubernetes*.pem /etc/kubernetes/cert/

Note: the k8s account can read and write the /etc/kubernetes/cert/ directory.

Create the encryption configuration file

source /opt/k8s/bin/environment.sh

cat > encryption-config.yaml <<EOF

kind: EncryptionConfig

apiVersion: v1

resources:

  - resources:

      - secrets

    providers:

      - aescbc:

          keys:

            - name: key1

              secret: ${ENCRYPTION_KEY}

      - identity: {}

EOF

Copy the encryption configuration file to the /etc/kubernetes directory on the master node:

cp encryption-config.yaml /etc/kubernetes/
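
Since the secret in encryption-config.yaml comes from ENCRYPTION_KEY, it is worth confirming that the value decodes back to the 32 random bytes that environment.sh generates. A minimal sketch of that round trip; the variable key_bytes is my own.

```shell
#!/usr/bin/env bash
# Generate a key the same way environment.sh does.
ENCRYPTION_KEY=$(head -c 32 /dev/urandom | base64)

# Decode it again and count the bytes; tr strips the padding some wc versions print.
key_bytes=$(printf '%s' "$ENCRYPTION_KEY" | base64 --decode | wc -c | tr -d ' ')

if [ "$key_bytes" -eq 32 ]; then
  echo "key decodes to 32 bytes"
else
  echo "unexpected key length: ${key_bytes} bytes" >&2
fi
```

Note that environment.sh generates a fresh key every time it is sourced, so the value written into encryption-config.yaml is the one that matters.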

Create and distribute the kube-apiserver systemd unit file

source /opt/k8s/bin/environment.sh

cat > kube-apiserver.service <<EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

[Service]

ExecStart=/opt/k8s/bin/kube-apiserver \\

--enable-admission-plugins=Initializers,NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \\

--anonymous-auth=false \\

--experimental-encryption-provider-config=/etc/kubernetes/encryption-config.yaml \\

--advertise-address=${MASTER_IP} \\

--bind-address=${MASTER_IP} \\

--insecure-port=0 \\

--authorization-mode=Node,RBAC \\

--runtime-config=api/all \\

--enable-bootstrap-token-auth \\

--service-cluster-ip-range=${SERVICE_CIDR} \\

--service-node-port-range=${NODE_PORT_RANGE} \\

--tls-cert-file=/etc/kubernetes/cert/kubernetes.pem \\

--tls-private-key-file=/etc/kubernetes/cert/kubernetes-key.pem \\

--client-ca-file=/etc/kubernetes/cert/ca.pem \\

--kubelet-client-certificate=/etc/kubernetes/cert/kubernetes.pem \\

--kubelet-client-key=/etc/kubernetes/cert/kubernetes-key.pem \\

--service-account-key-file=/etc/kubernetes/cert/ca-key.pem \\

--etcd-cafile=/etc/kubernetes/cert/ca.pem \\

--etcd-certfile=/etc/kubernetes/cert/kubernetes.pem \\

--etcd-keyfile=/etc/kubernetes/cert/kubernetes-key.pem \\

--etcd-servers=${ETCD_ENDPOINTS} \\

--enable-swagger-ui=true \\

--allow-privileged=true \\

--apiserver-count=3 \\

--audit-log-maxage=30 \\

--audit-log-maxbackup=3 \\

--audit-log-maxsize=100 \\

--audit-log-path=/var/log/audit.log \\

--event-ttl=1h \\

--v=2

Restart=on-failure

RestartSec=5

Type=notify

User=k8s

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

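- --experimental-encryption-provider-config: enables the encryption feature;

- --authorization-mode=Node,RBAC: enables the Node and RBAC authorization modes and rejects unauthorized requests;

- --enable-admission-plugins: enables the listed admission plugins, including ServiceAccount and NodeRestriction;

- --service-account-key-file: the public key file used to verify ServiceAccount tokens; kube-controller-manager's --service-account-private-key-file names the private key file, and the two are used as a pair;

- --tls-*-file: the certificate, private key, and CA file used by the apiserver. --client-ca-file is used to verify the certificates presented by clients (kube-controller-manager, kube-scheduler, kubelet, kube-proxy, and so on);

- --kubelet-client-certificate, --kubelet-client-key: if set, the kubelet APIs are accessed over https; an RBAC rule must be defined for the kubernetes user, otherwise access to the kubelet API is denied;

- --bind-address: must not be 127.0.0.1, otherwise the secure port 6443 cannot be reached from outside;

- --insecure-port=0: disables the insecure port (8080);

- --service-cluster-ip-range: the Service Cluster IP range;

- --service-node-port-range: the NodePort port range;

- --runtime-config=api/all=true: enables all API versions, such as autoscaling/v2alpha1;

- --enable-bootstrap-token-auth: enables token authentication for kubelet bootstrap;

- --apiserver-count=3: the number of kube-apiserver instances running in the cluster; the instances do not elect a leader, all of them serve requests (only one apiserver is deployed in this article);

- User=k8s: run as the k8s account;

Distribute the systemd unit file to the master node: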

sudo cp kube-apiserver.service /etc/systemd/system/

Start the kube-apiserver service

sudo su -

mkdir -p /var/run/kubernetes && chown -R k8s /var/run/kubernetes

systemctl daemon-reload && systemctl enable kube-apiserver && systemctl start kube-apiserver

Check the service status:

[k8s@kube-server ~]$ sudo systemctl status kube-apiserver |grep 'Active:'

Active: active (running) since Tue 2018-06-26 03:38:43 UTC; 4min 14s ago

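Grant the kubernetes certificate access to the kubelet API

When commands such as kubectl exec, run, and logs are executed, the apiserver forwards them to the kubelet. The RBAC rule defined here authorizes the apiserver to call the kubelet API.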

[k8s@kube-server ~]$ source /opt/k8s/bin/environment.sh

[k8s@kube-server ~]$ kubectl create clusterrolebinding kube-apiserver:kubelet-apis --clusterrole=system:kubelet-api-admin --user kubernetes

clusterrolebinding.rbac.authorization.k8s.io "kube-apiserver:kubelet-apis" created

Print the data kube-apiserver has written to etcd

source /opt/k8s/bin/environment.sh

ETCDCTL_API=3 etcdctl \

--endpoints=${ETCD_ENDPOINTS} \

--cacert=/etc/kubernetes/cert/ca.pem \

--cert=/etc/etcd/cert/etcd.pem \

--key=/etc/etcd/cert/etcd-key.pem \

get /registry/ --prefix --keys-only

Check the cluster information

[k8s@kube-server ~]$ kubectl cluster-info

Kubernetes master is running at https://172.16.10.100:6443

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

[k8s@kube-server ~]$ kubectl get all --all-namespaces

NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

default service/kubernetes ClusterIP 10.254.0.1 <none> 443/TCP 49m

[k8s@kube-server ~]$ kubectl get componentstatuses

NAME STATUS MESSAGE ERROR

scheduler Unhealthy Get http://127.0.0.1:10251/healthz: dial tcp 127.0.0.1:10251: getsockopt: connection refused

controller-manager Unhealthy Get http://127.0.0.1:10252/healthz: dial tcp 127.0.0.1:10252: getsockopt: connection refused

etcd-1 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}

Notes:

1. If a kubectl command prints the following error, the ~/.kube/config file in use is wrong; switch to the correct account and run the command again:

The connection to the server localhost:8080 was refused - did you specify the right host or port?

2. When kubectl get componentstatuses is run, the apiserver sends its requests to 127.0.0.1 by default. When controller-manager and scheduler run in cluster mode they may not be on the same machine as kube-apiserver; their status is then shown as Unhealthy even though they are working normally.
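
To pick the components that need a second look out of the componentstatuses table, the output can be filtered on its STATUS column. A sketch using lines from the table above as sample input; unhealthy_components is a hypothetical helper, and as note 2 explains, an Unhealthy entry here does not necessarily mean the component is down.

```shell
#!/usr/bin/env bash
# unhealthy_components: read "kubectl get componentstatuses" output on stdin and
# print the NAME of every row whose STATUS column is not "Healthy".
unhealthy_components() {
  awk 'NR > 1 && $2 != "Healthy" { print $1 }'   # NR > 1 skips the header row
}

# Sample rows copied from the output shown earlier.
sample='NAME STATUS MESSAGE ERROR
scheduler Unhealthy Get http://127.0.0.1:10251/healthz: dial tcp 127.0.0.1:10251: getsockopt: connection refused
controller-manager Unhealthy Get http://127.0.0.1:10252/healthz: dial tcp 127.0.0.1:10252: getsockopt: connection refused
etcd-1 Healthy {"health":"true"}'

printf '%s\n' "$sample" | unhealthy_components
```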

Check the ports kube-apiserver listens on

[k8s@kube-server ~]$ sudo netstat -lnpt|grep kube

tcp 0 0 172.16.10.100:6443 0.0.0.0:* LISTEN 11066/kube-apiserve

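- 6443: the secure port that receives https requests; every request is authenticated and authorized;

- the insecure port is disabled, so nothing listens on 8080;

6.2 Deploying a highly available kube-controller-manager cluster

The cluster has 3 nodes, built on node1, node2, and node3. After startup, a leader is chosen through competitive election and the other nodes block. When the leader becomes unavailable, the remaining nodes elect a new leader, which keeps the service available.

To secure communication, this document first generates an x509 certificate and private key. kube-controller-manager uses the certificate in two cases:

1. when communicating with the secure port of kube-apiserver;

2. when serving prometheus-format metrics on its secure port (https, 10252);

Create the kube-controller-manager certificate and private key

Create the certificate signing request: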

cat > kube-controller-manager-csr.json <<EOF

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"hosts": [

"127.0.0.1",

"172.16.10.101",

"172.16.10.102",

"172.16.10.103"

],

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:kube-controller-manager",

"OU": "testcorp"

}

]

}

EOF

- the hosts list contains the IPs of all kube-controller-manager nodes;

- CN is system:kube-controller-manager and O is system:kube-controller-manager; the built-in ClusterRoleBinding system:kube-controller-manager grants kube-controller-manager the permissions it needs to work.

Generate the certificate and private key:

cfssl gencert -ca=/etc/kubernetes/cert/ca.pem \

-ca-key=/etc/kubernetes/cert/ca-key.pem \

-config=/etc/kubernetes/cert/ca-config.json \

-profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

Distribute the generated certificate and private key to all kube-controller-manager nodes:

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp kube-controller-manager*.pem k8s@${node_ip}:/etc/kubernetes/cert/

done

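Create and distribute the kubeconfig file

The kubeconfig file contains everything needed to access the apiserver, such as the apiserver address, the CA certificate, and the component's own certificate.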

source /opt/k8s/bin/environment.sh

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/cert/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-controller-manager.kubeconfig

kubectl config set-credentials system:kube-controller-manager \

--client-certificate=kube-controller-manager.pem \

--client-key=kube-controller-manager-key.pem \

--embed-certs=true \

--kubeconfig=kube-controller-manager.kubeconfig

kubectl config set-context system:kube-controller-manager \

--cluster=kubernetes \

--user=system:kube-controller-manager \

--kubeconfig=kube-controller-manager.kubeconfig

kubectl config use-context system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

Distribute the kubeconfig to all kube-controller-manager nodes:

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp kube-controller-manager.kubeconfig k8s@${node_ip}:/etc/kubernetes/

done

Create and distribute the kube-controller-manager systemd unit file

source /opt/k8s/bin/environment.sh

cat > kube-controller-manager.service <<EOF

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

ExecStart=/opt/k8s/bin/kube-controller-manager \\

--port=0 \\

--secure-port=10252 \\

--bind-address=127.0.0.1 \\

--kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \\

--service-cluster-ip-range=${SERVICE_CIDR} \\

--cluster-name=kubernetes \\

--cluster-signing-cert-file=/etc/kubernetes/cert/ca.pem \\

--cluster-signing-key-file=/etc/kubernetes/cert/ca-key.pem \\

--experimental-cluster-signing-duration=8760h \\

--root-ca-file=/etc/kubernetes/cert/ca.pem \\

--service-account-private-key-file=/etc/kubernetes/cert/ca-key.pem \\

--leader-elect=true \\

--feature-gates=RotateKubeletServerCertificate=true \\

--controllers=*,bootstrapsigner,tokencleaner \\

--horizontal-pod-autoscaler-use-rest-clients=true \\

--horizontal-pod-autoscaler-sync-period=10s \\

--tls-cert-file=/etc/kubernetes/cert/kube-controller-manager.pem \\

--tls-private-key-file=/etc/kubernetes/cert/kube-controller-manager-key.pem \\

--use-service-account-credentials=true \\

--v=2

Restart=on-failure

RestartSec=5

User=k8s

[Install]

WantedBy=multi-user.target

EOF

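- --port=0: disables the insecure http /metrics listener; --address then has no effect, while --bind-address still applies;

- --secure-port=10252, --bind-address=127.0.0.1: serve https /metrics requests on port 10252 of the loopback interface only (set --bind-address=0.0.0.0 to listen on all interfaces);

- --kubeconfig: path to the kubeconfig file that kube-controller-manager uses to connect to and authenticate against kube-apiserver;

- --cluster-signing-*-file: the CA certificate and key used to sign certificates created through TLS Bootstrap;

- --experimental-cluster-signing-duration: validity period of TLS Bootstrap certificates;

- --root-ca-file: the CA certificate placed into each container's ServiceAccount, used to verify the kube-apiserver certificate;

- --service-account-private-key-file: the private key used to sign ServiceAccount tokens; it must pair with the public key file given to kube-apiserver via --service-account-key-file;

- --service-cluster-ip-range: the Service Cluster IP range; it must match the parameter of the same name on kube-apiserver;

- --leader-elect=true: cluster mode with leader election enabled; the elected leader does the work while the other nodes block;

- --feature-gates=RotateKubeletServerCertificate=true: enables automatic rotation of kubelet server certificates;

- --controllers=*,bootstrapsigner,tokencleaner: the list of enabled controllers; tokencleaner automatically removes expired Bootstrap tokens;

- --horizontal-pod-autoscaler-*: custom metrics parameters, supporting autoscaling/v2alpha1;

- --tls-cert-file, --tls-private-key-file: the server certificate and private key used when serving metrics over https;

- --use-service-account-credentials=true: each controller runs with its own ServiceAccount credentials (see the permissions section below);

- User=k8s: run as the k8s account;

kube-controller-manager does not verify the client certificate of https metrics requests, so --tls-ca-file is not needed; that parameter is deprecated anyway.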

Distribute the systemd unit file to all kube-controller-manager nodes:

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp kube-controller-manager.service root@${node_ip}:/etc/systemd/system/

done

Permissions of kube-controller-manager

ClusterRole system:kube-controller-manager has very limited permissions: it can only create resources such as secrets and serviceaccounts. The permissions of the individual controllers are spread across the ClusterRoles system:controller:XXX.

kube-controller-manager must be started with --use-service-account-credentials=true, so that the main controller creates a ServiceAccount XXX-controller for each controller.

The built-in ClusterRoleBinding system:controller:XXX then grants each XXX-controller ServiceAccount the permissions of the matching ClusterRole system:controller:XXX.

Start the kube-controller-manager service

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "systemctl daemon-reload && systemctl enable kube-controller-manager && systemctl restart kube-controller-manager"

done

Check the service status

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh k8s@${node_ip} "systemctl status kube-controller-manager|grep Active"

done

Output:

>>> 172.16.10.101

Active: active (running) since Tue 2018-06-26 05:15:00 UTC; 21s ago

>>> 172.16.10.102

Active: active (running) since Tue 2018-06-26 05:15:01 UTC; 20s ago

>>> 172.16.10.103

Active: active (running) since Tue 2018-06-26 05:15:02 UTC; 20s ago

View the exported metrics

Note: run the following commands on a kube-controller-manager node.

kube-controller-manager listens on port 10252 and accepts https requests:

[k8s@kube-node1 system]$ sudo netstat -lnpt|grep kube-controll

tcp 0 0 127.0.0.1:10252 0.0.0.0:* LISTEN 28523/kube-controll

[k8s@kube-node1 system]$ curl -s --cacert /etc/kubernetes/cert/ca.pem https://127.0.0.1:10252/metrics |head

# HELP ClusterRoleAggregator_adds Total number of adds handled by workqueue: ClusterRoleAggregator

# TYPE ClusterRoleAggregator_adds counter

ClusterRoleAggregator_adds 9

# HELP ClusterRoleAggregator_depth Current depth of workqueue: ClusterRoleAggregator

# TYPE ClusterRoleAggregator_depth gauge

ClusterRoleAggregator_depth 0

# HELP ClusterRoleAggregator_queue_latency How long an item stays in workqueueClusterRoleAggregator before being requested.

# TYPE ClusterRoleAggregator_queue_latency summary

ClusterRoleAggregator_queue_latency{quantile="0.5"} 304

ClusterRoleAggregator_queue_latency{quantile="0.9"} 73770

[k8s@kube-node1 system]$

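Note: the CA certificate passed to curl via --cacert is used to verify the kube-controller-manager https server certificate.

Test high availability of the kube-controller-manager cluster

Stop the kube-controller-manager service on one or two nodes and watch the logs on the other nodes to see whether one of them acquires the leader lease.

Check the current leader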

[k8s@kube-server ~]$ kubectl get endpoints kube-controller-manager --namespace=kube-system -o yaml

apiVersion: v1

kind: Endpoints

metadata:

annotations:

control-plane.alpha.kubernetes.io/leader: '{"holderIdentity":"kube-node1_e213fdca-78ff-11e8-bb39-080027395360","leaseDurationSeconds":15,"acquireTime":"2018-06-26T05:15:03Z","renewTime":"2018-06-26T05:18:20Z","leaderTransitions":0}'

creationTimestamp: 2018-06-26T05:15:04Z

name: kube-controller-manager

namespace: kube-system

resourceVersion: "340"

selfLink: /api/v1/namespaces/kube-system/endpoints/kube-controller-manager

uid: e2192a53-78ff-11e8-b2bd-080027395360

As shown, the current leader is kube-node1.

Now stop the kube-controller-manager service on kube-node1 and check again:

[k8s@kube-server ~]$ kubectl get endpoints kube-controller-manager --namespace=kube-system -o yaml

apiVersion: v1

kind: Endpoints

metadata:

annotations:

control-plane.alpha.kubernetes.io/leader: '{"holderIdentity":"kube-node3_e2895c01-78ff-11e8-8ff5-080027395360","leaseDurationSeconds":15,"acquireTime":"2018-06-26T05:19:38Z","renewTime":"2018-06-26T05:19:38Z","leaderTransitions":1}'

creationTimestamp: 2018-06-26T05:15:04Z

name: kube-controller-manager

namespace: kube-system

resourceVersion: "372"

selfLink: /api/v1/namespaces/kube-system/endpoints/kube-controller-manager

uid: e2192a53-78ff-11e8-b2bd-080027395360

[k8s@kube-server ~]$

As shown, the leader has now changed to kube-node3.
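
The leader can also be read out of the annotation mechanically. The following is a minimal sketch, assuming the annotation value has already been copied into a shell variable; the function name leader_node is hypothetical, and the sample string is taken from the kubectl output above.

```shell
#!/usr/bin/env bash
# Extract the node name from a leader-election annotation value.
# leader_node is a hypothetical helper, not part of kubectl.
leader_node() {
  # holderIdentity has the form <hostname>_<uuid>; keep only the hostname.
  printf '%s\n' "$1" | sed -n 's/.*"holderIdentity":"\([^"_]*\)_[^"]*".*/\1/p'
}

# Sample annotation value copied from the second kubectl output above.
annotation='{"holderIdentity":"kube-node3_e2895c01-78ff-11e8-8ff5-080027395360","leaseDurationSeconds":15,"acquireTime":"2018-06-26T05:19:38Z","renewTime":"2018-06-26T05:19:38Z","leaderTransitions":1}'

leader_node "$annotation"
```

Comparing the value before and after stopping the service on the old leader, together with the leaderTransitions counter, is enough to confirm that failover happened.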

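6.3 Deploying a highly available kube-scheduler cluster

This section describes the steps for deploying a highly available kube-scheduler cluster.

The cluster has 3 nodes: kube-node1, kube-node2, and kube-node3. After startup they elect a leader through a competitive election, and the other nodes block. When the leader becomes unavailable, the remaining nodes elect a new leader, which keeps the service available.

To secure communication, this section first generates an x509 certificate and private key. kube-scheduler uses the certificate in the following two cases:

1. Communicating with the secure port of kube-apiserver;

2. Exposing prometheus-format metrics. Note that with the unit file used below, kube-scheduler serves metrics over plain http on 127.0.0.1:10251, so in this deployment the certificate is effectively used only for case 1;

Create the kube-scheduler certificate and private key

Create the certificate signing request: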

cat > kube-scheduler-csr.json <<EOF

{

"CN": "system:kube-scheduler",

"hosts": [

"127.0.0.1",

"172.16.10.101",

"172.16.10.102",

"172.16.10.103"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:kube-scheduler",

"OU": "testcorp"

}

]

}

EOF

- The hosts list contains the IPs of all kube-scheduler nodes;

- CN is system:kube-scheduler and O is system:kube-scheduler; the built-in kubernetes ClusterRoleBinding system:kube-scheduler grants kube-scheduler the permissions it needs.

Generate the certificate and private key:

cfssl gencert -ca=/etc/kubernetes/cert/ca.pem \

-ca-key=/etc/kubernetes/cert/ca-key.pem \

-config=/etc/kubernetes/cert/ca-config.json \

-profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

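Create and distribute the kubeconfig file

The kubeconfig file contains everything needed to access the apiserver, such as the apiserver address, the CA certificate, and the client's own certificate.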

source /opt/k8s/bin/environment.sh

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/cert/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-scheduler.kubeconfig

kubectl config set-credentials system:kube-scheduler \

--client-certificate=kube-scheduler.pem \

--client-key=kube-scheduler-key.pem \

--embed-certs=true \

--kubeconfig=kube-scheduler.kubeconfig

kubectl config set-context system:kube-scheduler \

--cluster=kubernetes \

--user=system:kube-scheduler \

--kubeconfig=kube-scheduler.kubeconfig

kubectl config use-context system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

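The certificate and private key created in the previous step, together with the kube-apiserver address, are written into the kubeconfig file.

Distribute the kubeconfig to all kube-scheduler nodes: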

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp kube-scheduler.kubeconfig k8s@${node_ip}:/etc/kubernetes/

done

Create and distribute the kube-scheduler systemd unit file

cat > kube-scheduler.service <<EOF

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

ExecStart=/opt/k8s/bin/kube-scheduler \\

--address=127.0.0.1 \\

--kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig \\

--leader-elect=true \\

--v=2

Restart=on-failure

RestartSec=5

User=k8s

[Install]

WantedBy=multi-user.target

EOF

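- --address: serve http /metrics requests on 127.0.0.1:10251; kube-scheduler does not yet support https requests;

- --kubeconfig: path to the kubeconfig file that kube-scheduler uses to connect to and authenticate against kube-apiserver;

- --leader-elect=true: cluster mode with leader election enabled; the elected leader does the work while the other nodes block;

- User=k8s: run as the k8s account;

Distribute the systemd unit file to all kube-scheduler nodes: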

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp kube-scheduler.service root@${node_ip}:/etc/systemd/system/

done

Start the kube-scheduler service

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "systemctl daemon-reload && systemctl enable kube-scheduler && systemctl start kube-scheduler"

done

Check the service status

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh k8s@${node_ip} "systemctl status kube-scheduler|grep Active"

done

Output:

>>> 172.16.10.101

Active: active (running) since Tue 2018-06-26 05:36:31 UTC; 17s ago

>>> 172.16.10.102

Active: active (running) since Tue 2018-06-26 05:36:32 UTC; 16s ago

>>> 172.16.10.103

Active: active (running) since Tue 2018-06-26 05:36:33 UTC; 16s ago

[k8s@kube-server ~]$

View the exported metrics

Note: run the following commands on a kube-scheduler node.

kube-scheduler listens on port 10251 and accepts http requests:

[k8s@kube-node1 system]$ sudo netstat -lnpt|grep kube-sche

tcp 0 0 127.0.0.1:10251 0.0.0.0:* LISTEN 30200/kube-schedule

[k8s@kube-node1 system]$ curl -s http://127.0.0.1:10251/metrics |head

# HELP apiserver_audit_event_total Counter of audit events generated and sent to the audit backend.

# TYPE apiserver_audit_event_total counter

apiserver_audit_event_total 0

# HELP go_gc_duration_seconds A summary of the GC invocation durations.

# TYPE go_gc_duration_seconds summary

go_gc_duration_seconds{quantile="0"} 1.1776e-05

go_gc_duration_seconds{quantile="0.25"} 1.2644e-05

go_gc_duration_seconds{quantile="0.5"} 1.7374e-05

go_gc_duration_seconds{quantile="0.75"} 2.2085e-05

go_gc_duration_seconds{quantile="1"} 4.5083e-05

[k8s@kube-node1 system]$

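Test high availability of the kube-scheduler cluster

Pick one or two master nodes, stop the kube-scheduler service there, and check in the systemd logs whether another node acquires the leader lease.

Check the current leader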

[k8s@kube-node1 system]$ kubectl get endpoints kube-scheduler --namespace=kube-system -o yaml

apiVersion: v1

kind: Endpoints

metadata:

annotations:

control-plane.alpha.kubernetes.io/leader: '{"holderIdentity":"kube-node1_e1d9a10f-7902-11e8-814d-080027395360","leaseDurationSeconds":15,"acquireTime":"2018-06-26T05:36:33Z","renewTime":"2018-06-26T05:38:42Z","leaderTransitions":0}'

creationTimestamp: 2018-06-26T05:36:33Z

name: kube-scheduler

namespace: kube-system

resourceVersion: "1008"

selfLink: /api/v1/namespaces/kube-system/endpoints/kube-scheduler

uid: e2769a5d-7902-11e8-b2bd-080027395360

As shown, the current leader is kube-node1.

7. Deploying the worker nodes

A kubernetes node runs the following components:

- docker

- kubelet

- kube-proxy

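7.1 Deploying docker

kubelet interacts with docker through the Container Runtime Interface (CRI).

Download and distribute the docker binaries

Download the release package from https://download.docker.com/linux/static/stable/x86_64/ (docker-18.03.1-ce.tgz is used here):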

tar -xvf docker-18.03.1-ce.tgz

Distribute the binaries to all worker nodes:

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp docker/docker* k8s@${node_ip}:/opt/k8s/bin/

ssh k8s@${node_ip} "chmod +x /opt/k8s/bin/*"

done

Create and distribute the systemd unit file

cat > docker.service <<"EOF"

[Unit]

Description=Docker Application Container Engine

Documentation=http://docs.docker.io

[Service]

Environment="PATH=/opt/k8s/bin:/bin:/sbin:/usr/bin:/usr/sbin"

EnvironmentFile=-/run/flannel/docker

ExecStart=/opt/k8s/bin/dockerd --log-level=error $DOCKER_NETWORK_OPTIONS

ExecReload=/bin/kill -s HUP $MAINPID

Restart=on-failure

RestartSec=5

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

Delegate=yes

KillMode=process

[Install]

WantedBy=multi-user.target

EOF

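- EOF is quoted, so bash does not expand variables inside the document, such as $DOCKER_NETWORK_OPTIONS;

- dockerd calls other docker commands at runtime, such as docker-proxy, so the directory that holds the docker binaries must be added to the PATH environment variable;

- On startup flanneld writes the network configuration into the variable DOCKER_NETWORK_OPTIONS in the file /run/flannel/docker; passing that variable on the dockerd command line sets the docker0 bridge parameters;

- If several EnvironmentFile options are specified, /run/flannel/docker must come last (this ensures docker0 uses the bip parameter generated by flanneld);

- docker must run as the root user;

- Since version 1.13, docker may set the default policy of the iptables FORWARD chain to DROP, which makes pinging Pod IPs on other Nodes fail. If this happens, set the policy to ACCEPT manually with iptables -P FORWARD ACCEPT (the start script below already does this).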
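
The first note above, about quoting EOF, is easy to check in isolation. The following is a minimal sketch that renders the same line through an unquoted and a quoted here-document; the value assigned to DOCKER_NETWORK_OPTIONS is a made-up example, not what flanneld actually writes.

```shell
#!/usr/bin/env bash
# Hypothetical value, only for this demonstration.
DOCKER_NETWORK_OPTIONS="--bip=172.30.49.1/24"

# Unquoted delimiter: bash expands the variable while writing the text.
unquoted=$(cat <<EOF
ExecStart=dockerd $DOCKER_NETWORK_OPTIONS
EOF
)

# Quoted delimiter: the text is kept literally, so systemd sees the
# variable reference and expands it from EnvironmentFile at start time.
quoted=$(cat <<"EOF"
ExecStart=dockerd $DOCKER_NETWORK_OPTIONS
EOF
)

echo "unquoted: ${unquoted}"
echo "quoted:   ${quoted}"
```

This is why docker.service uses the quoted form, while the kube-controller-manager unit file earlier uses the unquoted form to bake ${SERVICE_CIDR} into the file when it is generated.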

Distribute the systemd unit file to all worker machines:

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp docker.service root@${node_ip}:/etc/systemd/system/

done

Start the docker service

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "systemctl stop firewalld && systemctl disable firewalld"

ssh root@${node_ip} "iptables -F && iptables -X && iptables -F -t nat && iptables -X -t nat"

ssh root@${node_ip} "iptables -P FORWARD ACCEPT"

ssh root@${node_ip} "systemctl daemon-reload && systemctl enable docker && systemctl start docker"

done

- Stop firewalld (centos7) or ufw (ubuntu16.04); otherwise iptables rules may be created repeatedly;

- Flush old iptables rules and chains;

Check the service status

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh k8s@${node_ip} "systemctl status docker|grep Active"

done

Check the docker0 bridge

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh k8s@${node_ip} "/usr/sbin/ip addr show"

done

Confirm that on each worker node the docker0 bridge and the flannel.1 interface have IPs in the same subnet (172.30.49.0 and 172.30.49.1 below):

[k8s@kube-node1 system]$ ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: enp0s3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 08:00:27:39:53:60 brd ff:ff:ff:ff:ff:ff

inet 10.0.2.15/24 brd 10.0.2.255 scope global noprefixroute dynamic enp0s3

valid_lft 69101sec preferred_lft 69101sec

inet6 fe80::953e:9248:d505:388f/64 scope link noprefixroute

valid_lft forever preferred_lft forever

3: enp0s8: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 08:00:27:e5:7e:fd brd ff:ff:ff:ff:ff:ff

inet 172.16.10.101/24 brd 172.16.10.255 scope global noprefixroute enp0s8

valid_lft forever preferred_lft forever

inet6 fe80::a00:27ff:fee5:7efd/64 scope link

valid_lft forever preferred_lft forever

4: flannel.1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UNKNOWN group default

link/ether e6:1a:e1:53:92:ef brd ff:ff:ff:ff:ff:ff

inet 172.30.49.0/32 scope global flannel.1

valid_lft forever preferred_lft forever

inet6 fe80::e41a:e1ff:fe53:92ef/64 scope link

valid_lft forever preferred_lft forever

5: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default

link/ether 02:42:3f:5a:25:80 brd ff:ff:ff:ff:ff:ff

inet 172.30.49.1/24 brd 172.30.49.255 scope global docker0

valid_lft forever preferred_lft forever

[k8s@kube-node1 system]$
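
The subnet comparison can be scripted instead of read by eye. The following is a minimal sketch, assuming each node gets a /24 as in the output above; the function name same_slash24 is hypothetical, and the two addresses are copied from the ip a output.

```shell
#!/usr/bin/env bash
# Succeed when two IPv4 addresses share the same /24 prefix.
# Assumption: the per-node flannel subnet is a /24, as in the output above.
same_slash24() {
  # ${var%.*} strips the last dot-separated field, leaving the first three octets.
  [ "${1%.*}" = "${2%.*}" ]
}

flannel_ip="172.30.49.0"   # from "inet 172.30.49.0/32 scope global flannel.1"
docker0_ip="172.30.49.1"   # from "inet 172.30.49.1/24 ... scope global docker0"

if same_slash24 "$flannel_ip" "$docker0_ip"; then
  echo "OK: docker0 and flannel.1 share ${docker0_ip%.*}.0/24"
else
  echo "MISMATCH: inspect /run/flannel/docker and the dockerd command line"
fi
```

If the prefixes differ, dockerd most likely did not pick up DOCKER_NETWORK_OPTIONS from /run/flannel/docker.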

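7.2 Deploying kubelet

kubelet runs on every worker node. It receives requests from kube-apiserver, manages Pod containers, and executes interactive commands such as exec, run, and logs.

On startup kubelet automatically registers the node with kube-apiserver, and its built-in cadvisor collects and monitors the node's resource usage.

For security, this section only opens the secure port that accepts https requests, and authenticates and authorizes every request, rejecting unauthorized access (for example from apiserver or heapster).

Download the latest binaries

Download the server tarball from the CHANGELOG page.

tar -xzvf kubernetes-server-linux-amd64.tar.gz

Copy the binaries to all nodes: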

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp server/bin/* k8s@${node_ip}:/opt/k8s/bin/

ssh k8s@${node_ip} "chmod +x /opt/k8s/bin/*"

done

Create the kubelet bootstrap kubeconfig files

source /opt/k8s/bin/environment.sh

for node_name in ${NODE_NAMES[@]}

do

echo ">>> ${node_name}"

# Create the token

export BOOTSTRAP_TOKEN=$(kubeadm token create \

--description kubelet-bootstrap-token \

--groups system:bootstrappers:${node_name} \

--kubeconfig ~/.kube/config)

# Set the cluster parameters

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/cert/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kubelet-bootstrap-${node_name}.kubeconfig

# Set the client authentication parameters

kubectl config set-credentials kubelet-bootstrap \

--token=${BOOTSTRAP_TOKEN} \

--kubeconfig=kubelet-bootstrap-${node_name}.kubeconfig

# Set the context parameters

kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=kubelet-bootstrap-${node_name}.kubeconfig

# Set the default context

kubectl config use-context default --kubeconfig=kubelet-bootstrap-${node_name}.kubeconfig

done

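The kubeconfig file contains a Token rather than a certificate; the certificate is created later by kube-controller-manager.

List the tokens kubeadm created for each node: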

[k8s@kube-server ~]$ kubeadm token list --kubeconfig ~/.kube/config

TOKEN TTL EXPIRES USAGES DESCRIPTION EXTRA GROUPS

34jnny.c7ks4atqkqpclbe1 23h 2018-06-28T01:32:46Z authentication,signing kubelet-bootstrap-token system:bootstrappers:kube-node2

jlzg9x.la6w75ab1jf9dsg2 23h 2018-06-28T01:32:45Z authentication,signing kubelet-bootstrap-token system:bootstrappers:kube-node1

qgfb6a.8w0fm4i8kwh8y5gd 23h 2018-06-28T01:32:46Z authentication,signing kubelet-bootstrap-token system:bootstrappers:kube-node3

Distribute the bootstrap kubeconfig files to all nodes

source /opt/k8s/bin/environment.sh

for node_name in ${NODE_NAMES[@]}

do

echo ">>> ${node_name}"

scp kubelet-bootstrap-${node_name}.kubeconfig k8s@${node_name}:/etc/kubernetes/kubelet-bootstrap.kubeconfig

done

Create and distribute the kubelet configuration file

Starting with v1.10, some kubelet parameters must be set in a configuration file; kubelet --help shows the hint:

[k8s@kube-server ~]$ kubelet --help|grep DEPRECATED

--address 0.0.0.0 The IP address for the Kubelet to serve on (set to 0.0.0.0 for all IPv4 interfaces and `::` for all IPv6 interfaces) (default 0.0.0.0) (DEPRECATED: This parameter should be set via the config file specified by the Kubelet's --config flag. See https://kubernetes.io/docs/tasks/administer-cluster/kubelet-config-file/ for more information.)

Create the kubelet configuration template file:

source /opt/k8s/bin/environment.sh

cat > kubelet.config.json.template <<EOF

{

"kind": "KubeletConfiguration",

"apiVersion": "kubelet.config.k8s.io/v1beta1",

"authentication": {

"x509": {

"clientCAFile": "/etc/kubernetes/cert/ca.pem"

},

"webhook": {

"enabled": true,

"cacheTTL": "2m0s"

},

"anonymous": {

"enabled": false

}

},

"authorization": {

"mode": "Webhook",

"webhook": {

"cacheAuthorizedTTL": "5m0s",

"cacheUnauthorizedTTL": "30s"

}

},

"address": "##NODE_IP##",

"port": 10250,

"readOnlyPort": 0,

"cgroupDriver": "cgroupfs",

"hairpinMode": "promiscuous-bridge",

"serializeImagePulls": false,

"featureGates": {

"RotateKubeletClientCertificate": true,

"RotateKubeletServerCertificate": true

},

"clusterDomain": "cluster.local.",

"clusterDNS": ["10.254.0.2"]

}

EOF

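- address: the API listen address; it must not be 127.0.0.1, otherwise kube-apiserver, heapster, and others cannot call the kubelet API;

- readOnlyPort=0: disables the read-only port (default 10255); equivalent to leaving it unspecified;

- authentication.anonymous.enabled: set to false, so anonymous access to port 10250 is not allowed;

- authentication.x509.clientCAFile: the CA certificate that signs client certificates; enables HTTPS client certificate authentication;

- authentication.webhook.enabled=true: enables HTTPS bearer token authentication;

- Requests (from kube-apiserver or other clients) that pass neither x509 certificate nor webhook authentication are rejected with Unauthorized;

- authorization.mode=Webhook: kubelet uses the SubjectAccessReview API to ask kube-apiserver whether a user or group is allowed to operate on a resource (RBAC);

- featureGates.RotateKubeletClientCertificate, featureGates.RotateKubeletServerCertificate: rotate certificates automatically; the certificate validity period is determined by the --experimental-cluster-signing-duration parameter of kube-controller-manager;

- kubelet must run as root;

Create and distribute the kubelet configuration file for each node: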

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

sed -e "s/##NODE_IP##/${node_ip}/" kubelet.config.json.template > kubelet.config-${node_ip}.json

scp kubelet.config-${node_ip}.json root@${node_ip}:/etc/kubernetes/kubelet.config.json

done

Create and distribute the kubelet systemd unit file

Create the kubelet systemd unit file template:

cat > kubelet.service.template <<EOF

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=docker.service

Requires=docker.service

[Service]

WorkingDirectory=/var/lib/kubelet

ExecStart=/opt/k8s/bin/kubelet \\

--bootstrap-kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig \\

--cert-dir=/etc/kubernetes/cert \\

--kubeconfig=/etc/kubernetes/kubelet.kubeconfig \\

--config=/etc/kubernetes/kubelet.config.json \\

--hostname-override=##NODE_NAME## \\

--pod-infra-container-image=registry.access.redhat.com/rhel7/pod-infrastructure:latest \\

--logtostderr=true \\

--v=2

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

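- If --hostname-override is set, kube-proxy must set the same option, otherwise the Node cannot be found;

- --bootstrap-kubeconfig: points to the bootstrap kubeconfig file; kubelet uses the user name and token in it to send the TLS Bootstrapping request to kube-apiserver;

- After K8S approves the kubelet CSR, the certificate and private key are created in the --cert-dir directory and then written into the --kubeconfig file;

- kubelet certificate rotation is a feature gate; in this deployment it is enabled through featureGates in kubelet.config.json rather than through a --feature-gates flag in the unit file;

Create and distribute the kubelet systemd unit file for each node: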

source /opt/k8s/bin/environment.sh

for node_name in ${NODE_NAMES[@]}

do

echo ">>> ${node_name}"

sed -e "s/##NODE_NAME##/${node_name}/" kubelet.service.template > kubelet-${node_name}.service

scp kubelet-${node_name}.service root@${node_name}:/etc/systemd/system/kubelet.service

done

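Bootstrap Token Auth and granting permissions

On startup kubelet checks whether the file given by --kubeconfig exists; if it does not, kubelet uses --bootstrap-kubeconfig to send a certificate signing request (CSR) to kube-apiserver.

When kube-apiserver receives the CSR, it authenticates the Token in it (created beforehand with kubeadm). On success it sets the request's user to system:bootstrap:<Token ID> and its group to system:bootstrappers. This process is called Bootstrap Token Auth.

By default this user and group have no permission to create CSRs, so kubelet fails to start with the following errors: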

$ sudo journalctl -u kubelet -a |grep -A 2 'certificatesigningrequests'

May 06 06:42:36 kube-node1 kubelet[26986]: F0506 06:42:36.314378 26986 server.go:233] failed to run Kubelet: cannot create certificate signing request: certificatesigningrequests.certificates.k8s.io is forbidden: User "system:bootstrap:lemy40" cannot create certificatesigningrequests.certificates.k8s.io at the cluster scope

May 06 06:42:36 kube-node1 systemd[1]: kubelet.service: Main process exited, code=exited, status=255/n/a

May 06 06:42:36 kube-node1 systemd[1]: kubelet.service: Failed with result 'exit-code'.

The fix is to create a clusterrolebinding that binds group system:bootstrappers to clusterrole system:node-bootstrapper:

$ kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --group=system:bootstrappers
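
To match a CSR requestor back to the node whose token produced it, note that the Token ID is the part of the token before the dot. The following is a minimal sketch; the function name bootstrap_user is hypothetical, and the sample token is the kube-node1 token from the kubeadm token list output earlier.

```shell
#!/usr/bin/env bash
# Derive the user name that Bootstrap Token Auth assigns to a token.
# bootstrap_user is a hypothetical helper, not a kubeadm command.
bootstrap_user() {
  local token="$1"
  # A bootstrap token has the form <6-char id>.<16-char secret>.
  if [[ ! "$token" =~ ^[a-z0-9]{6}\.[a-z0-9]{16}$ ]]; then
    echo "not a bootstrap token: ${token}" >&2
    return 1
  fi
  # Everything before the first dot is the token ID.
  echo "system:bootstrap:${token%%.*}"
}

bootstrap_user "jlzg9x.la6w75ab1jf9dsg2"
```

The resulting name is what appears in the REQUESTOR column of kubectl get csr and in the "forbidden" error message above.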

Start the kubelet service

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "mkdir -p /var/lib/kubelet" # the working directory must exist first

ssh root@${node_ip} "swapoff -a" # turn off swap

ssh root@${node_ip} "systemctl daemon-reload && systemctl enable kubelet && systemctl restart kubelet"

done

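After starting, kubelet uses --bootstrap-kubeconfig to send a CSR to kube-apiserver. Once the CSR is approved, kube-controller-manager creates the TLS client certificate, private key, and --kubeconfig file for kubelet.

Note: kube-controller-manager must be configured with --cluster-signing-cert-file and --cluster-signing-key-file, otherwise it does not create certificates and private keys for TLS Bootstrap.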

[k8s@kube-server ~]$ kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-9UuHCTss6Mxs4FTcuNqU9sBe6FC1of_Da7t8luoVL_0 2m system:bootstrap:jlzg9x Pending

node-csr-9WiUTwjqsFmNLiV3wqKYRY_MCy-V6lxNauLHJuuUxpc 2m system:bootstrap:34jnny Pending

node-csr-j0SQAP6ODUDrP0QQUto0yfCc41Kp_yMYhXYLS3IluCY 2m system:bootstrap:qgfb6a Pending

[k8s@kube-server ~]$ kubectl get nodes

No resources found.

[k8s@kube-server ~]$

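The CSRs of all three nodes are in the Pending state.

Approve the kubelet CSRs

CSRs can be approved manually or automatically. The automatic way is recommended, because since v1.8 the certificates generated after approval can be rotated automatically.

Approve a CSR manually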

[k8s@kube-server ~]$ kubectl certificate approve node-csr-9UuHCTss6Mxs4FTcuNqU9sBe6FC1of_Da7t8luoVL_0

certificatesigningrequest.certificates.k8s.io "node-csr-9UuHCTss6Mxs4FTcuNqU9sBe6FC1of_Da7t8luoVL_0" approved

[k8s@kube-server ~]$ kubectl describe csr node-csr-9UuHCTss6Mxs4FTcuNqU9sBe6FC1of_Da7t8luoVL_0

Name: node-csr-9UuHCTss6Mxs4FTcuNqU9sBe6FC1of_Da7t8luoVL_0

Labels: <none>

Annotations: <none>

CreationTimestamp: Wed, 27 Jun 2018 04:44:59 +0000

Requesting User: system:bootstrap:jlzg9x

Status: Approved,Issued

Subject:

Common Name: system:node:kube-node1

Serial Number:

Organization: system:nodes

Events: <none>

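- Requesting User: the user that submitted the CSR; kube-apiserver authenticates and authorizes it;

- Subject: the certificate information to be signed;

- The certificate CN is system:node:kube-node1 and the Organization is system:nodes; the Node authorization mode of kube-apiserver grants the corresponding permissions to this certificate;

Approve CSRs automatically

Create three ClusterRoleBindings, used to automatically approve client certificates, renew client certificates, and renew server certificates respectively: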

cat > csr-crb.yaml <<EOF

# Approve all CSRs for the group "system:bootstrappers"

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: auto-approve-csrs-for-group

subjects:

- kind: Group

name: system:bootstrappers

apiGroup: rbac.authorization.k8s.io

roleRef:

kind: ClusterRole

name: system:certificates.k8s.io:certificatesigningrequests:nodeclient

apiGroup: rbac.authorization.k8s.io

---

# To let a node of the group "system:bootstrappers" renew its own credentials

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: node-client-cert-renewal

subjects:

- kind: Group

name: system:bootstrappers

apiGroup: rbac.authorization.k8s.io

roleRef:

kind: ClusterRole

name: system:certificates.k8s.io:certificatesigningrequests:selfnodeclient

apiGroup: rbac.authorization.k8s.io

---

# A ClusterRole which instructs the CSR approver to approve a node requesting a

# serving cert matching its client cert.

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: approve-node-server-renewal-csr

rules:

- apiGroups: ["certificates.k8s.io"]

resources: ["certificatesigningrequests/selfnodeserver"]

verbs: ["create"]

---

# To let a node of the group "system:nodes" renew its own server credentials

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: node-server-cert-renewal

subjects:

- kind: Group

name: system:nodes

apiGroup: rbac.authorization.k8s.io

roleRef:

kind: ClusterRole

name: approve-node-server-renewal-csr

apiGroup: rbac.authorization.k8s.io

EOF

Apply the configuration:

[k8s@kube-server ~]$ kubectl apply -f csr-crb.yaml

clusterrolebinding.rbac.authorization.k8s.io "auto-approve-csrs-for-group" created

clusterrolebinding.rbac.authorization.k8s.io "node-client-cert-renewal" created

clusterrole.rbac.authorization.k8s.io "approve-node-server-renewal-csr" created

clusterrolebinding.rbac.authorization.k8s.io "node-server-cert-renewal" created

Check the kubelet status

After a while (1-10 minutes), the CSRs of all three nodes are approved automatically:

[k8s@kube-server ~]$ kubectl get csr

NAME AGE REQUESTOR CONDITION

csr-72dq4 5m system:node:kube-node1 Pending

node-csr-9UuHCTss6Mxs4FTcuNqU9sBe6FC1of_Da7t8luoVL_0 10m system:bootstrap:jlzg9x Approved,Issued

node-csr-9WiUTwjqsFmNLiV3wqKYRY_MCy-V6lxNauLHJuuUxpc 10m system:bootstrap:34jnny Pending

node-csr-j0SQAP6ODUDrP0QQUto0yfCc41Kp_yMYhXYLS3IluCY 10m system:bootstrap:qgfb6a Pending

[k8s@kube-server ~]$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

kube-node1 Ready <none> 5m v1.10.4

[k8s@kube-server ~]$ kubectl get csr

NAME AGE REQUESTOR CONDITION

csr-72dq4 23m system:node:kube-node1 Approved,Issued

csr-wnkj8 14m system:node:kube-node2 Approved,Issued

csr-zxkbr 14m system:node:kube-node3 Approved,Issued

node-csr-9UuHCTss6Mxs4FTcuNqU9sBe6FC1of_Da7t8luoVL_0 27m system:bootstrap:jlzg9x Approved,Issued

node-csr-9WiUTwjqsFmNLiV3wqKYRY_MCy-V6lxNauLHJuuUxpc 27m system:bootstrap:34jnny Approved,Issued

node-csr-j0SQAP6ODUDrP0QQUto0yfCc41Kp_yMYhXYLS3IluCY 27m system:bootstrap:qgfb6a Approved,Issued

[k8s@kube-server ~]$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

kube-node1 Ready <none> 23m v1.10.4

kube-node2 Ready <none> 14m v1.10.4

kube-node3 Ready <none> 14m v1.10.4

[k8s@kube-server ~]$
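
While waiting, the number of outstanding requests can be counted rather than read off the table. The following is a minimal sketch, assuming CONDITION is the last column of kubectl get csr as in the output above (the column layout may differ in other kubernetes versions); the function name count_pending is hypothetical.

```shell
#!/usr/bin/env bash
# Count CSRs whose last column (CONDITION) is still "Pending".
# count_pending is a hypothetical helper that reads the table from stdin.
count_pending() {
  # Skip the header line, then count rows ending in "Pending".
  awk 'NR > 1 && $NF == "Pending" { n++ } END { print n + 0 }'
}

# Sample copied from the first "kubectl get csr" output above.
sample='NAME AGE REQUESTOR CONDITION
csr-72dq4 5m system:node:kube-node1 Pending
node-csr-9UuHCTss6Mxs4FTcuNqU9sBe6FC1of_Da7t8luoVL_0 10m system:bootstrap:jlzg9x Approved,Issued
node-csr-9WiUTwjqsFmNLiV3wqKYRY_MCy-V6lxNauLHJuuUxpc 10m system:bootstrap:34jnny Pending
node-csr-j0SQAP6ODUDrP0QQUto0yfCc41Kp_yMYhXYLS3IluCY 10m system:bootstrap:qgfb6a Pending'

printf '%s\n' "$sample" | count_pending
```

On a live cluster the same function could be fed from kubectl get csr directly; a result of 0 means every client and server CSR has been approved.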

kube-controller-manager generated a kubeconfig file and a key pair for each node:

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "ls -l /etc/kubernetes/kubelet.kubeconfig"

ssh root@${node_ip} "ls -l /etc/kubernetes/cert/|grep kubelet"

done

Output:

>>> 172.16.10.101

-rw-------. 1 root root 2290 Jun 27 04:49 /etc/kubernetes/kubelet.kubeconfig

-rw-r--r--. 1 root root 1046 Jun 27 04:49 kubelet-client.crt

-rw-------. 1 root root 227 Jun 27 04:44 kubelet-client.key

-rw-------. 1 root root 1330 Jun 27 04:56 kubelet-server-2018-06-27-04-56-32.pem

lrwxrwxrwx. 1 root root 59 Jun 27 04:56 kubelet-server-current.pem -> /etc/kubernetes/cert/kubelet-server-2018-06-27-04-56-32.pem

>>> 172.16.10.102

-rw-------. 1 root root 2290 Jun 27 04:58 /etc/kubernetes/kubelet.kubeconfig

-rw-r--r--. 1 root root 1046 Jun 27 04:58 kubelet-client.crt

-rw-------. 1 root root 227 Jun 27 04:45 kubelet-client.key

-rw-------. 1 root root 1330 Jun 27 04:58 kubelet-server-2018-06-27-04-58-41.pem

lrwxrwxrwx. 1 root root 59 Jun 27 04:58 kubelet-server-current.pem -> /etc/kubernetes/cert/kubelet-server-2018-06-27-04-58-41.pem

>>> 172.16.10.103

-rw-------. 1 root root 2290 Jun 27 04:58 /etc/kubernetes/kubelet.kubeconfig

-rw-r--r--. 1 root root 1046 Jun 27 04:58 kubelet-client.crt

-rw-------. 1 root root 227 Jun 27 04:45 kubelet-client.key

-rw-------. 1 root root 1330 Jun 27 04:58 kubelet-server-2018-06-27-04-58-42.pem

lrwxrwxrwx. 1 root root 59 Jun 27 04:58 kubelet-server-current.pem -> /etc/kubernetes/cert/kubelet-server-2018-06-27-04-58-42.pem

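The kubelet-server certificate is rotated periodically.

API endpoints provided by kubelet

After starting, kubelet listens on several ports to receive requests from kube-apiserver and other components: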

[k8s@kube-node1 ~]$ sudo netstat -lnpt|grep kubelet

tcp 0 0 172.16.10.101:4194 0.0.0.0:* LISTEN 9191/kubelet

tcp 0 0 127.0.0.1:10248 0.0.0.0:* LISTEN 9191/kubelet

tcp 0 0 172.16.10.101:10250 0.0.0.0:* LISTEN 9191/kubelet

- 4194: cadvisor http service;

- 10248: healthz http service;

- 10250: https API service; note that the read-only port 10255 is not enabled;

For example, when running kubectl exec -it nginx-ds-5rmws -- sh, kube-apiserver sends the following request to kubelet:

POST /exec/default/nginx-ds-5rmws/my-nginx?command=sh&input=1&output=1&tty=1

kubelet accepts https requests on port 10250 for:

- /pods、/runningpods

- /metrics、/metrics/cadvisor、/metrics/probes

- /spec

- /stats、/stats/container

- /logs

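- /run/, /exec/, /attach/, /portForward/, /containerLogs/ and other management endpoints;

For details, see:

Because anonymous authentication is disabled and webhook authorization is enabled, every request to the https API on port 10250 must be authenticated and authorized.

The predefined ClusterRole system:kubelet-api-admin grants access to all kubelet APIs: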

[k8s@kube-server ~]$ kubectl describe clusterrole system:kubelet-api-admin

Name: system:kubelet-api-admin

Labels: kubernetes.io/bootstrapping=rbac-defaults

Annotations: rbac.authorization.kubernetes.io/autoupdate=true

PolicyRule:

Resources Non-Resource URLs Resource Names Verbs

--------- ----------------- -------------- -----

nodes [] [] [get list watch proxy]

nodes/log [] [] [*]

nodes/metrics [] [] [*]

nodes/proxy [] [] [*]

nodes/spec [] [] [*]

nodes/stats [] [] [*]

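kubelet API authentication and authorization

kubelet is configured with the following authentication parameters:

- authentication.anonymous.enabled: set to false, so anonymous access to port 10250 is not allowed;

- authentication.x509.clientCAFile: the CA certificate that signs client certificates; enables HTTPS client certificate authentication;

- authentication.webhook.enabled=true: enables HTTPS bearer token authentication;

It is also configured with the following authorization parameter:

- authorization.mode=Webhook: delegates authorization to kube-apiserver (RBAC);

When kubelet receives a request, it verifies the certificate signature against clientCAFile, or checks whether the bearer token is valid. If neither check passes, the request is rejected with Unauthorized: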

[k8s@kube-server ~]$ curl -s --cacert /etc/kubernetes/cert/ca.pem https://172.16.10.101:10250/metrics

Unauthorized

[k8s@kube-server ~]$

[k8s@kube-server ~]$ curl -s --cacert /etc/kubernetes/cert/ca.pem -H "Authorization: Bearer 123456" https://172.16.10.101:10250/metrics

Unauthorized

[k8s@kube-server ~]$

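After authentication, kubelet sends a SubjectAccessReview request to kube-apiserver to check whether the user or group behind the certificate or token is allowed to operate on the resource (RBAC).

Certificate authentication and authorization:

# A certificate with insufficient permissions;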

[k8s@kube-node1 ~]$ curl -s --cacert /etc/kubernetes/cert/ca.pem --cert /etc/kubernetes/cert/kube-controller-manager.pem --key /etc/kubernetes/cert/kube-controller-manager-key.pem https://172.16.10.101:10250/metrics

Forbidden (user=system:kube-controller-manager, verb=get, resource=nodes, subresource=metrics)

[k8s@kube-node1 ~]$

# The admin certificate created when deploying the kubectl command-line tool, which has the highest privileges;

$ curl -s --cacert /etc/kubernetes/cert/ca.pem --cert /opt/k8s/admin.pem --key /opt/k8s/admin-key.pem https://172.16.10.101:10250/metrics|head

Note: specify the admin certificate and key by absolute path; otherwise curl may fail to find them.
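The two failures seen above map onto the two stages of the check: Unauthorized is HTTP 401 (authentication failed), while Forbidden is HTTP 403 (authenticated, but the SubjectAccessReview was denied). A minimal sketch for telling them apart in a script follows; the function name classify_kubelet_status is made up for illustration and is not part of the original tutorial.

```shell
#!/usr/bin/env bash
# Hypothetical helper: map an HTTP status code returned by kubelet
# to the stage of the check that produced it.
classify_kubelet_status() {
  case "$1" in
    200) echo "ok" ;;
    401) echo "authentication failed" ;;   # curl body: Unauthorized
    403) echo "authorization failed" ;;    # curl body: Forbidden (...)
    *)   echo "other ($1)" ;;
  esac
}

# Usage against a live node (needs a reachable kubelet, so left commented out):
# code=$(curl -s -o /dev/null -w '%{http_code}' \
#   --cacert /etc/kubernetes/cert/ca.pem https://172.16.10.101:10250/metrics)
# classify_kubelet_status "$code"
```

With no client certificate or token the request should be classified as an authentication failure; with the kube-controller-manager certificate it should be classified as an authorization failure.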

Bearer token authentication and authorization:

Create a ServiceAccount and bind it to the ClusterRole system:kubelet-api-admin, so that it is allowed to call the kubelet API:

kubectl create sa kubelet-api-test

kubectl create clusterrolebinding kubelet-api-test --clusterrole=system:kubelet-api-admin --serviceaccount=default:kubelet-api-test

SECRET=$(kubectl get secrets | grep kubelet-api-test | awk '{print $1}')

TOKEN=$(kubectl describe secret ${SECRET} | grep -E '^token' | awk '{print $2}')

echo ${TOKEN}

[k8s@kube-server ~]$ echo ${TOKEN}

eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJkZWZhdWx0Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZWNyZXQubmFtZSI6Imt1YmVsZXQtYXBpLXRlc3QtdG9rZW4tZ2RqN2ciLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoia3ViZWxldC1hcGktdGVzdCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6ImY0OWEyMGJlLTc5ZDYtMTFlOC04MTM4LTA4MDAyNzM5NTM2MCIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDpkZWZhdWx0Omt1YmVsZXQtYXBpLXRlc3QifQ.isa6PPJtg0WEstKwuozjT-CHs6EEonq12mpGqCk4SIaPe2TWjPDDHiczRf9Yt4ivmOakquhiYBs9vnDuPuINXWHNCzEudYMDz2mIYHwXH0s26CT-eSxXCnPRH54H9zVjJzNSZ9LhYgLLxOPSFldNaLd8E0MCCjGwBWqucSAraxHNyNmVALbi8LKaPt6u3JiHV02cGhqhG7xEiS5oeSdXh8kWSxd1wOtGc7bmQerrVDNnTqPNflb926zRGuPrELghdm0SeFVWtFGTjILvpgPugq3biLRt199ct8afaIqqH9tuDlpd32Cv4IVPKvvnIutamOILnb04FfrkzwPb6iv4xw

[k8s@kube-server ~]$ curl -s --cacert /etc/kubernetes/cert/ca.pem -H "Authorization: Bearer ${TOKEN}" https://172.16.10.101:10250/metrics|head

# HELP apiserver_client_certificate_expiration_seconds Distribution of the remaining lifetime on the certificate used to authenticate a request.

# TYPE apiserver_client_certificate_expiration_seconds histogram

apiserver_client_certificate_expiration_seconds_bucket{le="0"} 0

apiserver_client_certificate_expiration_seconds_bucket{le="21600"} 0

apiserver_client_certificate_expiration_seconds_bucket{le="43200"} 0

apiserver_client_certificate_expiration_seconds_bucket{le="86400"} 0

apiserver_client_certificate_expiration_seconds_bucket{le="172800"} 0

apiserver_client_certificate_expiration_seconds_bucket{le="345600"} 0

apiserver_client_certificate_expiration_seconds_bucket{le="604800"} 0

apiserver_client_certificate_expiration_seconds_bucket{le="2.592e+06"} 0
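The ServiceAccount token is a JWT, so it can be inspected to see which identity kubelet will pass to the SubjectAccessReview. The sketch below decodes the payload segment; jwt_payload is a hypothetical helper name, and it assumes a base64 command that accepts -d (GNU coreutils). It only decodes the token and does not verify the signature.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the decoded payload (second dot-separated
# segment) of a JWT. No signature verification is performed.
jwt_payload() {
  local seg
  # Take the payload segment and convert base64url to standard base64
  seg=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
  # base64url drops '=' padding; restore it to a multiple of 4 characters
  while [ $(( ${#seg} % 4 )) -ne 0 ]; do seg="${seg}="; done
  printf '%s' "$seg" | base64 -d
}

# Usage, with TOKEN set as in the commands above:
# jwt_payload "${TOKEN}"
```

For a ServiceAccount token the sub claim has the form system:serviceaccount:<namespace>:<name>, which is the user name that the ClusterRoleBinding created above applies to.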

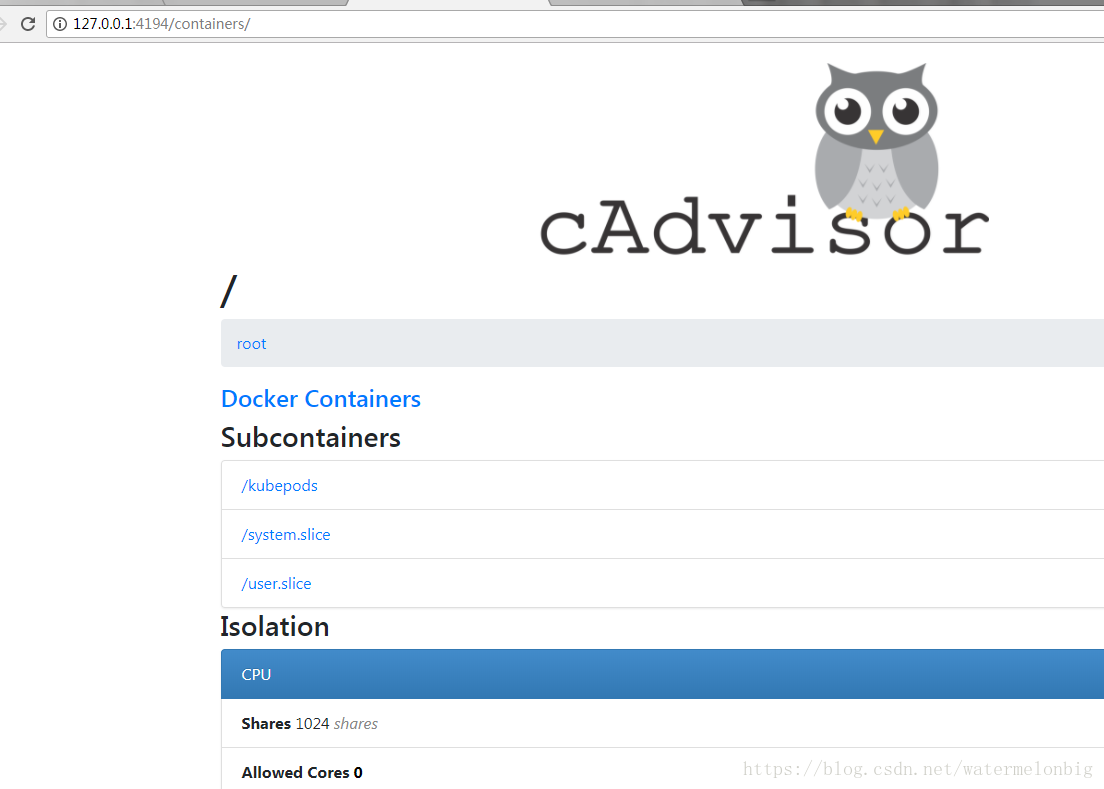

cadvisor and metrics

cadvisor collects resource usage (CPU, memory, disk, network) for every container on its node and exposes it both on its own HTTP web page (port 4194) and on port 10250 as Prometheus metrics.
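The Prometheus text format is line oriented, so a single metric can be picked out of the /metrics/cadvisor output with standard tools. A minimal sketch, assuming awk is available; filter_metrics is a name invented for this example.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read Prometheus text-format metrics on stdin and
# print only the sample lines whose metric name starts with the given
# prefix, skipping "# HELP" and "# TYPE" comment lines.
filter_metrics() {
  awk -v prefix="$1" '!/^#/ && index($0, prefix) == 1'
}

# Usage against a live node (needs the admin certificate, so commented out):
# curl -s --cacert /etc/kubernetes/cert/ca.pem \
#   --cert /opt/k8s/admin.pem --key /opt/k8s/admin-key.pem \
#   https://172.16.10.101:10250/metrics/cadvisor \
#   | filter_metrics container_cpu_usage_seconds_total
```

The prefix match is literal, so it also returns metrics that merely share the prefix; pass the full metric name to narrow the result.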

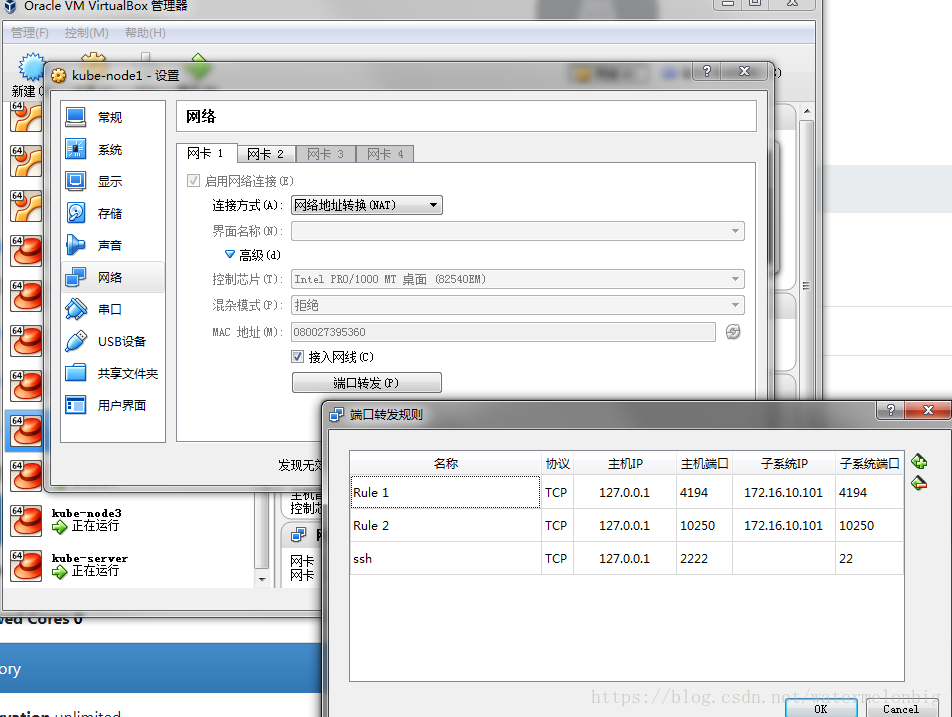

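Open http://172.16.10.101:4194/containers/ in a browser to see the cadvisor monitoring page:

Because this test environment runs on VirtualBox virtual machines, a port-forwarding rule is needed so that the services on kube-node1 can be reached from outside the test environment.

The configuration is shown below.

Getting the kubelet configuration

Fetch each node's configuration from kube-apiserver: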

[k8s@kube-server ~]$ curl -sSL --cacert /etc/kubernetes/cert/ca.pem --cert /home/k8s/admin.pem --key /home/k8s/admin-key.pem https://${MASTER_IP}:6443/api/v1/nodes/kube-node1/proxy/configz | jq '.kubeletconfig|.kind="KubeletConfiguration"|.apiVersion="kubelet.config.k8s.io/v1beta1"'

{

"syncFrequency": "1m0s",

"fileCheckFrequency": "20s",

"httpCheckFrequency": "20s",

"address": "172.16.10.101",

"port": 10250,

"authentication": {

"x509": {

"clientCAFile": "/etc/kubernetes/cert/ca.pem"

},

"webhook": {

"enabled": true,

"cacheTTL": "2m0s"

},

"anonymous": {

"enabled": false

}

},

"authorization": {

"mode": "Webhook",

"webhook": {

"cacheAuthorizedTTL": "5m0s",

"cacheUnauthorizedTTL": "30s"

}

},

"registryPullQPS": 5,

"registryBurst": 10,

"eventRecordQPS": 5,

"eventBurst": 10,

"enableDebuggingHandlers": true,

"healthzPort": 10248,

"healthzBindAddress": "127.0.0.1",

"oomScoreAdj": -999,

"clusterDomain": "cluster.local.",

"clusterDNS": [

"10.254.0.2"

],

"streamingConnectionIdleTimeout": "4h0m0s",

"nodeStatusUpdateFrequency": "10s",

"imageMinimumGCAge": "2m0s",

"imageGCHighThresholdPercent": 85,

"imageGCLowThresholdPercent": 80,

"volumeStatsAggPeriod": "1m0s",

"cgroupsPerQOS": true,

"cgroupDriver": "cgroupfs",

"cpuManagerPolicy": "none",

"cpuManagerReconcilePeriod": "10s",

"runtimeRequestTimeout": "2m0s",

"hairpinMode": "promiscuous-bridge",

"maxPods": 110,

"podPidsLimit": -1,

"resolvConf": "/etc/resolv.conf",

"cpuCFSQuota": true,

"maxOpenFiles": 1000000,

"contentType": "application/vnd.kubernetes.protobuf",

"kubeAPIQPS": 5,

"kubeAPIBurst": 10,

"serializeImagePulls": false,

"evictionHard": {

"imagefs.available": "15%",

"memory.available": "100Mi",

"nodefs.available": "10%",

"nodefs.inodesFree": "5%"

},

"evictionPressureTransitionPeriod": "5m0s",

"enableControllerAttachDetach": true,

"makeIPTablesUtilChains": true,

"iptablesMasqueradeBit": 14,

"iptablesDropBit": 15,

"featureGates": {

"RotateKubeletClientCertificate": true,

"RotateKubeletServerCertificate": true

},

"failSwapOn": true,

"containerLogMaxSize": "10Mi",

"containerLogMaxFiles": 5,

"enforceNodeAllocatable": [

"pods"

],

"kind": "KubeletConfiguration",

"apiVersion": "kubelet.config.k8s.io/v1beta1"

}

[k8s@kube-server ~]$

7.3 Deploying the kube-proxy component

Creating the kube-proxy certificate

Create the certificate signing request:

cat > kube-proxy-csr.json <<EOF

{

"CN": "system:kube-proxy",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "testcorp"

}

]

}

EOF

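- CN: sets the certificate's User to system:kube-proxy;

- The predefined ClusterRoleBinding system:node-proxier binds the User system:kube-proxy to the ClusterRole system:node-proxier, which grants permission to call the proxy-related kube-apiserver APIs;

- kube-proxy uses this certificate only as a client certificate, so the hosts field is left empty;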
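Kubernetes takes the user name from the certificate's CN and the groups from its O attributes, so it is worth confirming those fields on a certificate before using it. The sketch below pulls a single attribute out of an RFC 2253 style subject string; subject_attr is a hypothetical helper, and the file name kube-proxy.pem in the usage comment is an assumption about what the generation step produces.

```shell
#!/usr/bin/env bash
# Hypothetical helper: extract one attribute (e.g. CN or O) from an
# RFC 2253 style subject string such as
#   CN=system:kube-proxy,OU=testcorp,O=k8s,L=BeiJing,ST=BeiJing,C=CN
# Simplification: values containing ',' or '=' are not handled.
subject_attr() {
  printf '%s\n' "$2" | tr ',' '\n' \
    | awk -F= -v key="$1" '$1 == key { print $2; exit }'
}

# Usage on a generated certificate (file name assumed, so commented out):
# subj=$(openssl x509 -in kube-proxy.pem -noout -subject -nameopt RFC2253 \
#   | sed 's/^subject= *//')
# subject_attr CN "$subj"
```

Matching on the exact attribute key keeps the country field C=CN from being mistaken for the common name.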

Generate the certificate and private key:

cfssl gencert -ca=/etc/kubernetes/cert/ca.pem \

-ca-key=/etc/kubernetes/cert/ca-key.pem \

-config=/etc/kubernetes/cert/ca-config.json \