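1. Clone three clients (CentOS 7)

Right-click s200 -> Manage -> Clone -> ... -> Full clone

2. Start the clients

3. Enable shared folders for the guest machines.

4. Edit the hostname and IP address files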

https://blog.csdn.net/ssllkkyyaa/article/details/83410871

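Fixing Permission denied (publickey,gssapi-keyex,gssapi-with-mic) with passwordless SSH login

When the Permission denied (publickey,gssapi-keyex,gssapi-with-mic) warning appears, congratulations: you are already close to success.

The remote host here is slave2, and the user is Hadoop.

The local host is slave1.

Everything below is configured on the remote host slave2 so that slave1 can connect to slave2 without a password. To connect without a password in both directions, the principle is the same: apply the same configuration on slave1.

(1) First, edit the SSH server configuration file.

This can only be done as the root user.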

vi /etc/ssh/sshd_config

Set the following to no:

#PermitRootLogin yes

#UsePAM yes

#PasswordAuthentication yes

If a line begins with #, remove the #, then change yes to no.

After the change:

PermitRootLogin no

UsePAM no

PasswordAuthentication no

Set the following to yes:

RSAAuthentication yes

PubkeyAuthentication yes
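The same edits can be applied without opening an editor. This is a minimal sketch, assuming GNU sed; `set_sshd_option` and `apply_ssh_settings` are hypothetical helper names, not part of OpenSSH. They work on whatever file path they are given, so they can be tried on a copy of the config first.

```shell
# set_sshd_option FILE KEY VALUE
# Hypothetical helper: rewrite "KEY ..." (commented out or not) to
# "KEY VALUE", or append the line if KEY is absent from FILE.
set_sshd_option() {
    file="$1"; key="$2"; value="$3"
    if grep -Eq "^[[:space:]]*#?[[:space:]]*${key}[[:space:]]" "$file"; then
        sed -i -E "s|^[[:space:]]*#?[[:space:]]*${key}[[:space:]].*|${key} ${value}|" "$file"
    else
        echo "${key} ${value}" >> "$file"
    fi
}

# apply_ssh_settings FILE: set the five options listed in the steps above.
apply_ssh_settings() {
    set_sshd_option "$1" PermitRootLogin no
    set_sshd_option "$1" UsePAM no
    set_sshd_option "$1" PasswordAuthentication no
    set_sshd_option "$1" RSAAuthentication yes
    set_sshd_option "$1" PubkeyAuthentication yes
}
```

Run as root, `apply_ssh_settings /etc/ssh/sshd_config` should leave the file in the state described above; `sshd -t` can then check the file for syntax errors before the service is restarted.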

(2) Restart the sshd service

systemctl restart sshd.service

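systemctl status sshd.service #check the status of the ssh service

#systemctl start sshd.service #start the ssh service

#systemctl enable sshd.service #start the ssh service at boot; there is also disable

#systemctl stop sshd.service #stop the ssh service

Normally the status should show Active: active (running).

The permissions must also match: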

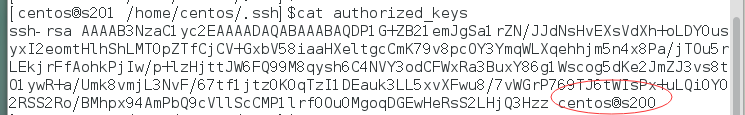

1.~/.ssh/authorized_keys

chmod 700 /home/centos/.ssh

chmod 644 /home/centos/.ssh/authorized_keys

644

2. ~/.ssh

700

3.root

[/etc/hostname]

s201

[/etc/sysconfig/network-scripts/ifcfg-eno16777736]

...

IPADDR=.. (192.168.77.200 -> 201, 202, 203)
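To keep the three clones straight, the hostname and IPADDR pairs can be listed before editing each machine. This is a small sketch; `clone_plan` is a hypothetical name, and it assumes each host name suffix equals the last octet of its address, as s201 above suggests.

```shell
# clone_plan: print "hostname IPADDR" for each cloned client.
# Assumption: host sNNN gets the address 192.168.77.NNN.
clone_plan() {
    for n in 201 202 203; do
        printf 's%s 192.168.77.%s\n' "$n" "$n"
    done
}
```

Calling `clone_plan` prints one line per clone, which gives the value for /etc/hostname and for IPADDR on that machine.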

5. Restart the network service

$>sudo service network restart

6. Edit the /etc/resolv.conf file

nameserver 192.168.231.2

7. Repeat steps 3 ~ 6 above for each client.

-----------------------

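Authorize SSH

1. Delete /home/centos/.ssh/* on all hosts

The method in this article only covers jumping from one host to the others; for all four machines reaching each other, see: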

https://blog.csdn.net/ssllkkyyaa/article/details/82839298

2. Generate a key pair on host s200

$>ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa

3. Copy s200's public key file id_rsa.pub to hosts s201 ~ s203

and place it at /home/centos/.ssh/authorized_keys

$>scp id_rsa.pub centos@s201:/home/centos/.ssh/authorized_keys

$>scp id_rsa.pub centos@s202:/home/centos/.ssh/authorized_keys

$>scp id_rsa.pub centos@s203:/home/centos/.ssh/authorized_keys
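The three scp commands follow one pattern, so they can be written as a loop. This is a sketch only; `distribute_key` and the `DRY_RUN` variable are hypothetical names introduced here. By default it prints the commands instead of running them.

```shell
# distribute_key PUBKEY HOST...
# Copy PUBKEY to each host as /home/centos/.ssh/authorized_keys.
# With DRY_RUN=1 (the default in this sketch) the scp commands are only
# printed; set DRY_RUN=0 to run them.
distribute_key() {
    pubkey="$1"; shift
    for host in "$@"; do
        target="centos@${host}:/home/centos/.ssh/authorized_keys"
        if [ "${DRY_RUN:-1}" = "1" ]; then
            echo "scp $pubkey $target"
        else
            scp "$pubkey" "$target"
        fi
    done
}
```

For example, `distribute_key id_rsa.pub s201 s202 s203` prints the three commands above. Like the original commands, this overwrites any existing authorized_keys file on the target; `ssh-copy-id` appends to it instead and may be preferable when other keys are already installed.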

On the three machines s201, s202, s203:

-----------------

Name node

s200

Data nodes

(slaves)

s201 s202 s203

---------------------------

Delete the symlink:

cd /home/centos/soft

rm hadoop

--------------------------

Recreate the symlink (on s200, s201, s202, s203)

ln -s /home/centos/soft/full hadoop

-------

Configure Hadoop on s200

core-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://s200/</value>

</property>

</configuration>

hdfs-site.xml

<?xml version="1.0"?>

<configuration>

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

</configuration>

yarn-site.xml

<?xml version="1.0"?>

<configuration>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>s200</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

</configuration>

mapred-site.xml

<?xml version="1.0"?>

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>

slaves

s201

s202

s203
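Because the slaves file already names every data node, later per-host commands can read the host list from it rather than hard-coding s201 ~ s203. This is a minimal sketch; `list_slaves` is a hypothetical helper, not a Hadoop command.

```shell
# list_slaves FILE: print the hosts in a slaves file, one per line,
# skipping blank lines and lines that start with '#'.
list_slaves() {
    grep -Ev '^[[:space:]]*(#|$)' "$1"
}
```

For example, `for h in $(list_slaves /home/centos/soft/hadoop/etc/hadoop/slaves); do ssh "$h" hostname; done` would contact each data node in turn, assuming passwordless SSH is already working.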

hadoop-env.sh

# Licensed to the Apache Software Foundation (ASF) under one

# or more contributor license agreements. See the NOTICE file

# distributed with this work for additional information

# regarding copyright ownership. The ASF licenses this file

# to you under the Apache License, Version 2.0 (the

# "License"); you may not use this file except in compliance

# with the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# Set Hadoop-specific environment variables here.

# The only required environment variable is JAVA_HOME. All others are

# optional. When running a distributed configuration it is best to

# set JAVA_HOME in this file, so that it is correctly defined on

# remote nodes.

# The java implementation to use.

export JAVA_HOME=/home/centos/soft/jdk

# The jsvc implementation to use. Jsvc is required to run secure datanodes

# that bind to privileged ports to provide authentication of data transfer

# protocol. Jsvc is not required if SASL is configured for authentication of

# data transfer protocol using non-privileged ports.

#export JSVC_HOME=${JSVC_HOME}

export HADOOP_CONF_DIR=/home/centos/soft/hadoop/etc/hadoop

# Extra Java CLASSPATH elements. Automatically insert capacity-scheduler.

for f in $HADOOP_HOME/contrib/capacity-scheduler/*.jar; do

if [ "$HADOOP_CLASSPATH" ]; then

export HADOOP_CLASSPATH=$HADOOP_CLASSPATH:$f

else

export HADOOP_CLASSPATH=$f

fi

done

# The maximum amount of heap to use, in MB. Default is 1000.

#export HADOOP_HEAPSIZE=

#export HADOOP_NAMENODE_INIT_HEAPSIZE=""

# Extra Java runtime options. Empty by default.

export HADOOP_OPTS="$HADOOP_OPTS -Djava.net.preferIPv4Stack=true"

# Command specific options appended to HADOOP_OPTS when specified

export HADOOP_NAMENODE_OPTS="-Dhadoop.security.logger=${HADOOP_SECURITY_LOGGER:-INFO,RFAS} -Dhdfs.audit.logger=${HDFS_AUDIT_LOGGER:-INFO,NullAppender} $HADOOP_NAMENODE_OPTS"

export HADOOP_DATANODE_OPTS="-Dhadoop.security.logger=ERROR,RFAS $HADOOP_DATANODE_OPTS"

export HADOOP_SECONDARYNAMENODE_OPTS="-Dhadoop.security.logger=${HADOOP_SECURITY_LOGGER:-INFO,RFAS} -Dhdfs.audit.logger=${HDFS_AUDIT_LOGGER:-INFO,NullAppender} $HADOOP_SECONDARYNAMENODE_OPTS"

export HADOOP_NFS3_OPTS="$HADOOP_NFS3_OPTS"

export HADOOP_PORTMAP_OPTS="-Xmx512m $HADOOP_PORTMAP_OPTS"

# The following applies to multiple commands (fs, dfs, fsck, distcp etc)

export HADOOP_CLIENT_OPTS="-Xmx512m $HADOOP_CLIENT_OPTS"

#HADOOP_JAVA_PLATFORM_OPTS="-XX:-UsePerfData $HADOOP_JAVA_PLATFORM_OPTS"

# On secure datanodes, user to run the datanode as after dropping privileges.

# This **MUST** be uncommented to enable secure HDFS if using privileged ports

# to provide authentication of data transfer protocol. This **MUST NOT** be

# defined if SASL is configured for authentication of data transfer protocol

# using non-privileged ports.

export HADOOP_SECURE_DN_USER=${HADOOP_SECURE_DN_USER}

# Where log files are stored. $HADOOP_HOME/logs by default.

#export HADOOP_LOG_DIR=${HADOOP_LOG_DIR}/$USER

# Where log files are stored in the secure data environment.

export HADOOP_SECURE_DN_LOG_DIR=${HADOOP_LOG_DIR}/${HADOOP_HDFS_USER}

###

# HDFS Mover specific parameters

###

# Specify the JVM options to be used when starting the HDFS Mover.

# These options will be appended to the options specified as HADOOP_OPTS

# and therefore may override any similar flags set in HADOOP_OPTS

#

# export HADOOP_MOVER_OPTS=""

###

# Advanced Users Only!

###

# The directory where pid files are stored. /tmp by default.

# NOTE: this should be set to a directory that can only be written to by

# the user that will run the hadoop daemons. Otherwise there is the

# potential for a symlink attack.

export HADOOP_PID_DIR=${HADOOP_PID_DIR}

export HADOOP_SECURE_DN_PID_DIR=${HADOOP_PID_DIR}

# A string representing this instance of hadoop. $USER by default.

export HADOOP_IDENT_STRING=$USER

----------------

Copy to the other machines

pwd :

/home/centos/soft/hadoop/etc/hadoop

scp -r ./* centos@s201:/home/centos/soft/hadoop/etc/hadoop

scp -r ./* centos@s202:/home/centos/soft/hadoop/etc/hadoop

scp -r ./* centos@s203:/home/centos/soft/hadoop/etc/hadoop

9. Delete the temporary directory files

$>cd /tmp

$>rm -rf hadoop-centos

$>ssh s201 rm -rf /tmp/hadoop-centos

$>ssh s202 rm -rf /tmp/hadoop-centos

$>ssh s203 rm -rf /tmp/hadoop-centos

10. Format the file system

$>hadoop namenode -format

11. Start the Hadoop processes

$>start-all.sh

Delete the Hadoop logs

$>cd /home/centos/soft/hadoop/logs

$>rm -rf *

$>ssh s201 rm -rf /home/centos/soft/hadoop/logs/*

$>ssh s202 rm -rf /home/centos/soft/hadoop/logs/*

$>ssh s203 rm -rf /home/centos/soft/hadoop/logs/*

10. Format the file system

$>hadoop namenode -format

11. Start the Hadoop processes

$>start-all.sh

----------------------------------------------------

To restart:

stop-dfs.sh

Delete hadoop-centos under /tmp

$>cd /tmp

$>rm -rf hadoop-centos

$>ssh s201 rm -rf /tmp/hadoop-centos

$>ssh s202 rm -rf /tmp/hadoop-centos

$>ssh s203 rm -rf /tmp/hadoop-centos

$>hadoop namenode -format

start-dfs.sh

=================

jps symlink

ln -s /home/centos/soft/jdk/bin/jps /usr/local/bin/jps

=====================

xcall.sh and xsync.sh: put both in /usr/local/bin/
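The contents of xcall.sh are not shown in these notes. As a rough sketch of what such a script might do (run one command on every node over SSH), the function below is hypothetical throughout: the name `xcall`, the `XCALL_HOSTS` default and the `DRY_RUN` switch are all assumptions.

```shell
# Hosts to contact; override by setting XCALL_HOSTS before sourcing.
XCALL_HOSTS="${XCALL_HOSTS:-s200 s201 s202 s203}"

# xcall COMMAND [ARG...]: run COMMAND on every host in XCALL_HOSTS.
# With DRY_RUN=1 the ssh commands are printed instead of executed.
xcall() {
    for host in $XCALL_HOSTS; do
        echo "---- $host ----"
        if [ "${DRY_RUN:-0}" = "1" ]; then
            echo "ssh $host $*"
        else
            ssh "$host" "$@"
        fi
    done
}
```

With this in place, `xcall rm -rf /home/centos/hadooptmp/` would run the removal on all four machines, which is why passwordless SSH from s200 has to be working first.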

xcall.sh rm -rf /home/centos/hadooptmp/

hadoop namenode -format

start-all.sh

Stop:

stop-all.sh