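Preparing the software packages and the hadoop user

This walkthrough uses three Alibaba Cloud hosts. A command shown without a hostname in its prompt is run on all three machines.

On all three machines, create a hadoop user to own the high-availability environment, and put the installation packages under software.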

[root@hadoop001 ~]# useradd hadoop

[root@hadoop001 ~]# su - hadoop

[hadoop@hadoop001 ~]$ mkdir software app data lib source

[hadoop@hadoop001 ~]$ ll

total 20

drwxrwxr-x 2 hadoop hadoop 4096 Nov 26 16:30 app (installed software)

drwxrwxr-x 2 hadoop hadoop 4096 Nov 26 16:30 data (test data)

drwxrwxr-x 2 hadoop hadoop 4096 Nov 26 16:30 lib (dependency packages)

drwxrwxr-x 2 hadoop hadoop 4096 Nov 26 16:30 software (installation packages)

drwxrwxr-x 2 hadoop hadoop 4096 Nov 26 16:30 source (source code)

Next, upload the packages downloaded on Windows to Linux with rz. The rz command comes from the lrzsz package, which must be installed as root.

[root@hadoop001 ~]# yum install -y lrzsz

[hadoop@hadoop001 ~]$ rz

[hadoop@hadoop001 ~]$ mv hadoop-2.6.0-cdh5.7.0.tar.gz jdk-8u45-linux-x64.gz zookeeper-3.4.6.tar.gz ./software/

The other machines need the same packages. First look up the IP addresses of the other two machines.

[hadoop@hadoop002 ~]$ hostname -i

172.26.165.126

[hadoop@hadoop002 ~]$ hostname

hadoop002

Copy the packages to that IP as root. If no user is given, scp logs in as the current local user (here, hadoop).

[hadoop@hadoop001 software]$ scp * root@172.26.165.126:/home/hadoop/software/

Copy them to hadoop003 as well.

[hadoop@hadoop001 software]$ scp * root@172.26.165.128:/home/hadoop/software/

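The packages copied as root are owned by root, so change their owner to hadoop.

Return to the root user:

exit

Change the owner and group of the packages: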

chown -R hadoop:hadoop /home/hadoop/software/*

Clear the screen:

clear

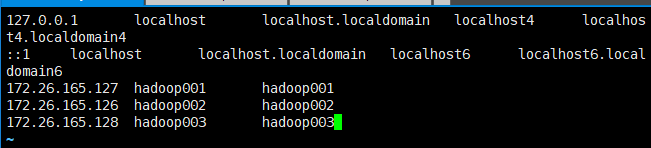

Configuring /etc/hosts

[root@hadoop001 ~]# vi /etc/hosts

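The result is shown in the screenshot: the file simply maps the IP address of each of the three machines to its hostname.

Then copy it to the other two machines.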

[root@hadoop001 ~]# scp /etc/hosts 172.26.165.126:/etc/hosts

[root@hadoop001 ~]# scp /etc/hosts 172.26.165.128:/etc/hosts

Passwordless SSH between the machines (typing a password for every file transfer is tedious)

su - hadoop

rm -rf .ssh

Generate a key pair on all three machines:

ssh-keygen

Go to the key directory:

cd .ssh

[hadoop@hadoop001 .ssh]$ ll

total 8

-rw------- 1 hadoop hadoop 1671 Nov 26 18:24 id_rsa

-rw-r--r-- 1 hadoop hadoop 398 Nov 26 18:24 id_rsa.pub

Pick hadoop001 as the primary machine and send it the public keys of the other two machines.

[hadoop@hadoop002 .ssh]$ scp id_rsa.pub root@hadoop001:/home/hadoop/.ssh/id_rsa.pub2

[hadoop@hadoop003 .ssh]$ scp id_rsa.pub root@hadoop001:/home/hadoop/.ssh/id_rsa.pub3

[hadoop@hadoop001 .ssh]$ ll

total 16

-rw------- 1 hadoop hadoop 1671 Nov 26 18:24 id_rsa

-rw-r--r-- 1 hadoop hadoop 398 Nov 26 18:24 id_rsa.pub

-rw-r--r-- 1 root root 398 Nov 26 18:44 id_rsa.pub2

-rw-r--r-- 1 root root 398 Nov 26 18:45 id_rsa.pub3

Combine the three public keys into a single authorized_keys file:

[hadoop@hadoop001 .ssh]$ cat id_rsa.pub >> authorized_keys

[hadoop@hadoop001 .ssh]$ cat id_rsa.pub2 >> authorized_keys

[hadoop@hadoop001 .ssh]$ cat id_rsa.pub3 >> authorized_keys

Copy the combined authorized_keys file to the other two machines:

[hadoop@hadoop001 .ssh]$ scp authorized_keys root@hadoop002:/home/hadoop/.ssh/

[hadoop@hadoop001 .ssh]$ scp authorized_keys root@hadoop003:/home/hadoop/.ssh/

Fix the owner and group. First return to the root user:

exit

chown -R hadoop:hadoop /home/hadoop/.ssh/*

chown -R hadoop:hadoop /home/hadoop/.ssh

su - hadoop

cd .ssh

Restrict the permissions of authorized_keys on all three machines:

chmod 600 authorized_keys

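Verify the mutual trust. Each command logs in to the named machine and runs date there; once the trust is in place it no longer asks for a password.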

ssh hadoop001 date

ssh hadoop002 date

ssh hadoop003 date
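The three checks above can also be wrapped in a small loop. This is only a sketch, not part of the original setup: the function name check_trust and the SSH_CMD override are hypothetical, while BatchMode and ConnectTimeout are standard OpenSSH options that make ssh fail instead of waiting for a password.

```shell
# Hypothetical helper: report whether passwordless SSH works for each host.
# SSH_CMD is an assumption added so the function can be tried without a cluster.
check_trust() {
  for host in "$@"; do
    # BatchMode=yes makes ssh fail rather than prompt for a password
    if ${SSH_CMD:-ssh} -o BatchMode=yes -o ConnectTimeout=5 "$host" date >/dev/null 2>&1; then
      echo "$host ok"
    else
      echo "$host FAILED"
    fi
  done
}
```

Running check_trust hadoop001 hadoop002 hadoop003 as the hadoop user on each machine should print ok for every host. Because BatchMode also refuses to ask about unknown host keys, connect to each host once interactively before relying on it.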

Deploying Java

Return to the root user:

exit

Create a directory for Java, then extract the JDK into it:

mkdir /usr/java

tar -xzvf /home/hadoop/software/jdk-8u45-linux-x64.gz -C /usr/java

Make sure the extracted JDK is owned by root (owner and group):

[root@hadoop001 java]# chown -R root:root /usr/java/jdk1.8.0_45

Configure the Java environment variables:

vi /etc/profile

#env

export JAVA_HOME=/usr/java/jdk1.8.0_45

export PATH=$JAVA_HOME/bin:$PATH

Then reload the profile and check the Java version:

[root@hadoop001 java]# source /etc/profile

[root@hadoop001 java]# java -version

Extracting Hadoop and ZooKeeper

su - hadoop

cd software

tar -xzvf hadoop-2.6.0-cdh5.7.0.tar.gz -C ../app/

tar -xzvf zookeeper-3.4.6.tar.gz -C ../app/

Setting the Hadoop and ZooKeeper environment variables

Return to the home directory:

cd

vi .bash_profile

export HADOOP_HOME=/home/hadoop/app/hadoop-2.6.0-cdh5.7.0

export ZOOKEEPER_HOME=/home/hadoop/app/zookeeper-3.4.6

export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$ZOOKEEPER_HOME/bin:$PATH

source .bash_profile

Check that the variable works; if the cd succeeds, the environment is set up correctly.

cd $HADOOP_HOME

Create a few directories:

mkdir $HADOOP_HOME/data && mkdir $HADOOP_HOME/logs && mkdir $HADOOP_HOME/tmp

Open up the permissions on the Hadoop temporary directory:

chmod -R 777 $HADOOP_HOME/tmp

Deploying ZooKeeper

cd ~/app/zookeeper-3.4.6/

cd conf

cp zoo_sample.cfg zoo.cfg

[hadoop@hadoop001 conf]$ vi zoo.cfg

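dataDir is the directory where ZooKeeper stores its data (snapshots and, unless dataLogDir is set, the transaction logs).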

dataDir=/home/hadoop/app/zookeeper-3.4.6/data

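The server.N lines list the members of the ZooKeeper ensemble. In server.1, the 1 is the id, which must match the myid file set below. Ports 2888 and 3888 are internal ports that the ZooKeeper servers use to talk to each other (2888 for follower connections to the leader, 3888 for leader election). The port given in core-site.xml (2181) is the one external components connect to.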

server.1=hadoop001:2888:3888

server.2=hadoop002:2888:3888

server.3=hadoop003:2888:3888

[hadoop@hadoop001 conf]$ scp zoo.cfg hadoop002:/home/hadoop/app/zookeeper-3.4.6/conf/

[hadoop@hadoop001 conf]$ scp zoo.cfg hadoop003:/home/hadoop/app/zookeeper-3.4.6/conf/

To match the server.N entries in zoo.cfg above, give each machine its ZooKeeper id:

cd ../

mkdir data

touch data/myid

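Note the space to the left of >. Without it, the shell reads 1> as a file-descriptor redirection rather than the argument 1, so the id never reaches myid.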

[hadoop@hadoop001 zookeeper-3.4.6]$ echo 1 >data/myid

[hadoop@hadoop002 zookeeper-3.4.6]$ echo 2 >data/myid

[hadoop@hadoop003 zookeeper-3.4.6]$ echo 3 >data/myid
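Since the id always matches the number in the hostname here, the three commands can be reduced to one that works on every machine. A minimal sketch, assuming the hostnames follow the hadoopNNN pattern used in this walkthrough; the function name myid_for_host is hypothetical.

```shell
# Hypothetical helper: derive the ZooKeeper id from a hostname such as hadoop002.
# Assumes hostnames follow the hadoopNNN pattern used in this walkthrough.
myid_for_host() {
  n=${1#hadoop}                 # hadoop002 -> 002
  while [ "${n#0}" != "$n" ]; do
    n=${n#0}                    # strip one leading zero per pass: 002 -> 02 -> 2
  done
  echo "$n"
}
```

With this defined, myid_for_host "$(hostname)" > data/myid writes the right id on each machine.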

Configuring Hadoop

cd $HADOOP_HOME/etc/hadoop

Set the Java environment that Hadoop depends on:

[hadoop@hadoop001 hadoop]$ vi hadoop-env.sh

export JAVA_HOME=/usr/java/jdk1.8.0_45

[hadoop@hadoop001 hadoop]$ scp hadoop-env.sh hadoop002:/home/hadoop/app/hadoop-2.6.0-cdh5.7.0/etc/hadoop

[hadoop@hadoop001 hadoop]$ scp hadoop-env.sh hadoop003:/home/hadoop/app/hadoop-2.6.0-cdh5.7.0/etc/hadoop

First delete the existing configuration files:

rm -f slaves core-site.xml hdfs-site.xml yarn-site.xml

Then upload the five configuration files with rz on every machine. Their contents are as follows.

core-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<!-- YARN needs fs.defaultFS to locate the NameNode URI -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://ruozeclusterg5</value>

</property>

<!--============================== Trash ======================================= -->

<property>

<!-- Trash: minutes between checkpoints, which the checkpointer running on the NameNode creates from the Current folder. Default 0, meaning the value of fs.trash.interval is used -->

<name>fs.trash.checkpoint.interval</name>

<value>0</value>

</property>

<property>

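<!-- Trash: minutes after which checkpoints under .Trash are deleted. The server-side setting takes precedence over the client. Default 0, meaning trash is disabled -->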

<name>fs.trash.interval</name>

<value>1440</value>

</property>

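<!-- Hadoop temporary directory. hadoop.tmp.dir is a base setting that many other paths derive from. If hdfs-site.xml does not set the NameNode and DataNode storage locations, they default to locations under this path -->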

<property>

<name>hadoop.tmp.dir</name>

<value>/home/hadoop/app/hadoop-2.6.0-cdh5.7.0/tmp</value>

</property>

<!-- ZooKeeper quorum addresses -->

<property>

<name>ha.zookeeper.quorum</name>

<value>hadoop001:2181,hadoop002:2181,hadoop003:2181</value>

</property>

<!-- ZooKeeper session timeout in milliseconds -->

<property>

<name>ha.zookeeper.session-timeout.ms</name>

<value>2000</value>

</property>

<property>

<name>hadoop.proxyuser.hadoop.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.hadoop.groups</name>

<value>*</value>

</property>

<property>

<name>io.compression.codecs</name>

<value>org.apache.hadoop.io.compress.GzipCodec,

org.apache.hadoop.io.compress.DefaultCodec,

org.apache.hadoop.io.compress.BZip2Codec,

org.apache.hadoop.io.compress.SnappyCodec

</value>

</property>

</configuration>

hdfs-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<!-- HDFS superuser group -->

<property>

<name>dfs.permissions.superusergroup</name>

<value>hadoop</value>

</property>

<!-- Enable WebHDFS -->

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>/home/hadoop/app/hadoop-2.6.0-cdh5.7.0/data/dfs/name</value>

<description>Local directory where the NameNode stores the name table (fsimage); adjust to your environment</description>

</property>

<property>

<name>dfs.namenode.edits.dir</name>

<value>${dfs.namenode.name.dir}</value>

<description>Local directory where the NameNode stores the transaction files (edits); adjust to your environment</description>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>/home/hadoop/app/hadoop-2.6.0-cdh5.7.0/data/dfs/data</value>

<description>Local directory where the DataNode stores blocks; adjust to your environment</description>

</property>

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<!-- Block size 256 MB (default 128 MB) -->

<property>

<name>dfs.blocksize</name>

<value>268435456</value>

</property>

<!--======================================================================= -->

<!-- HDFS high-availability configuration -->

<!-- Set the HDFS nameservice to ruozeclusterg5; it must match fs.defaultFS in core-site.xml -->

<property>

<name>dfs.nameservices</name>

<value>ruozeclusterg5</value>

</property>

<property>

<!-- NameNode IDs; this version supports at most two NameNodes -->

<name>dfs.ha.namenodes.ruozeclusterg5</name>

<value>nn1,nn2</value>

</property>

<!-- HDFS HA: dfs.namenode.rpc-address.[nameservice ID].[NameNode ID], the RPC address -->

<property>

<name>dfs.namenode.rpc-address.ruozeclusterg5.nn1</name>

<value>hadoop001:8020</value>

</property>

<property>

<name>dfs.namenode.rpc-address.ruozeclusterg5.nn2</name>

<value>hadoop002:8020</value>

</property>

<!-- HDFS HA: dfs.namenode.http-address.[nameservice ID].[NameNode ID], the HTTP address -->

<property>

<name>dfs.namenode.http-address.ruozeclusterg5.nn1</name>

<value>hadoop001:50070</value>

</property>

<property>

<name>dfs.namenode.http-address.ruozeclusterg5.nn2</name>

<value>hadoop002:50070</value>

</property>

<!--================== NameNode editlog synchronization ============================================ -->

<!-- Ensures the data can be recovered -->

<property>

<name>dfs.journalnode.http-address</name>

<value>0.0.0.0:8480</value>

</property>

<property>

<name>dfs.journalnode.rpc-address</name>

<value>0.0.0.0:8485</value>

</property>

<property>

<!-- JournalNode addresses; the QuorumJournalManager stores the editlog there -->

<!-- Format: qjournal://<host1:port1>;<host2:port2>;<host3:port3>/<journalId>; the port is the one in dfs.journalnode.rpc-address -->

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://hadoop001:8485;hadoop002:8485;hadoop003:8485/ruozeclusterg5</value>

</property>

<property>

<!-- Directory where the JournalNode stores its data -->

<name>dfs.journalnode.edits.dir</name>

<value>/home/hadoop/app/hadoop-2.6.0-cdh5.7.0/data/dfs/jn</value>

</property>

<!--================== Client failover ============================================ -->

<property>

<!-- Strategy that DataNodes and clients use to find the active NameNode -->

<!-- Implementation used for automatic failover -->

<name>dfs.client.failover.proxy.provider.ruozeclusterg5</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property>

<!--================== NameNode fencing =============================================== -->

<!-- After a failover, keep the stopped NameNode from coming back and leaving two active NameNodes -->

<property>

<name>dfs.ha.fencing.methods</name>

<value>sshfence</value>

</property>

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/home/hadoop/.ssh/id_rsa</value>

</property>

<property>

<!-- Milliseconds after which the SSH fencing connection is considered failed -->

<name>dfs.ha.fencing.ssh.connect-timeout</name>

<value>30000</value>

</property>

<!--==================NameNode auto failover base ZKFC and Zookeeper====================== -->

<!-- Enable ZooKeeper-based automatic failover -->

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

<!-- File listing the DataNodes permitted to connect to the NameNode -->

<property>

<name>dfs.hosts</name>

<value>/home/hadoop/app/hadoop-2.6.0-cdh5.7.0/etc/hadoop/slaves</value>

</property>

</configuration>
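The dfs.blocksize value above is given in bytes, so it is easy to mistype. A quick way to double-check such a value is shell arithmetic; this is a small sketch, and the function name mb_to_bytes is hypothetical.

```shell
# Hypothetical helper: convert megabytes to the byte count dfs.blocksize expects.
mb_to_bytes() {
  echo $(( $1 * 1024 * 1024 ))
}

mb_to_bytes 256   # prints 268435456, the value used in hdfs-site.xml
```

The same call with 128 gives 134217728, the default block size mentioned in the comment.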

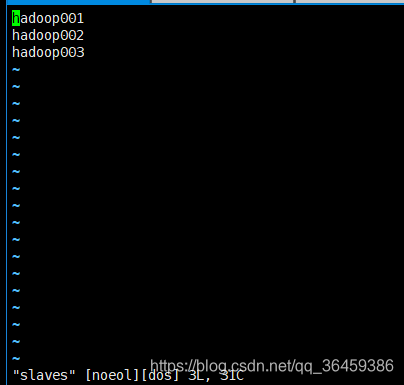

slaves

hadoop001

hadoop002

hadoop003

YARN configuration

mapred-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<!-- Run MapReduce applications on YARN -->

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<!-- JobHistory Server ============================================================== -->

<!-- MapReduce JobHistory Server address; default port 10020 -->

<property>

<name>mapreduce.jobhistory.address</name>

<value>hadoop001:10020</value>

</property>

<!-- MapReduce JobHistory Server web UI address; default port 19888 -->

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>hadoop001:19888</value>

</property>

<!-- Compress map output with Snappy -->

<property>

<name>mapreduce.map.output.compress</name>

<value>true</value>

</property>

<property>

<name>mapreduce.map.output.compress.codec</name>

<value>org.apache.hadoop.io.compress.SnappyCodec</value>

</property>

</configuration>

yarn-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<!-- NodeManager configuration ================================================= -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.nodemanager.aux-services.mapreduce.shuffle.class</name>

<value>org.apache.hadoop.mapred.ShuffleHandler</value>

</property>

<property>

<name>yarn.nodemanager.localizer.address</name>

<value>0.0.0.0:23344</value>

<description>Address where the localizer IPC is.</description>

</property>

<property>

<name>yarn.nodemanager.webapp.address</name>

<value>0.0.0.0:23999</value>

<description>NM Webapp address.</description>

</property>

<!-- HA configuration =============================================================== -->

<!-- Resource Manager Configs -->

<property>

<name>yarn.resourcemanager.connect.retry-interval.ms</name>

<value>2000</value>

</property>

<property>

<name>yarn.resourcemanager.ha.enabled</name>

<value>true</value>

</property>

<property>

<name>yarn.resourcemanager.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

<!-- Enable embedded automatic failover. In an HA setup it works together with ZKRMStateStore to handle fencing -->

<property>

<name>yarn.resourcemanager.ha.automatic-failover.embedded</name>

<value>true</value>

</property>

<!-- Cluster name, so that the HA election applies to the right cluster -->

<property>

<name>yarn.resourcemanager.cluster-id</name>

<value>yarn-cluster</value>

</property>

<property>

<name>yarn.resourcemanager.ha.rm-ids</name>

<value>rm1,rm2</value>

</property>

<!-- The ResourceManager id of each node can be set individually here (optional)

<property>

<name>yarn.resourcemanager.ha.id</name>

<value>rm2</value>

</property>

-->

<property>

<name>yarn.resourcemanager.scheduler.class</name>

<value>org.apache.hadoop.yarn.server.resourcemanager.scheduler.fair.FairScheduler</value>

</property>

<property>

<name>yarn.resourcemanager.recovery.enabled</name>

<value>true</value>

</property>

<property>

<name>yarn.app.mapreduce.am.scheduler.connection.wait.interval-ms</name>

<value>5000</value>

</property>

<!-- ZKRMStateStore configuration -->

<property>

<name>yarn.resourcemanager.store.class</name>

<value>org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore</value>

</property>

<property>

<name>yarn.resourcemanager.zk-address</name>

<value>hadoop001:2181,hadoop002:2181,hadoop003:2181</value>

</property>

<property>

<name>yarn.resourcemanager.zk.state-store.address</name>

<value>hadoop001:2181,hadoop002:2181,hadoop003:2181</value>

</property>

<!-- RPC address clients use to reach the RM (applications manager interface) -->

<property>

<name>yarn.resourcemanager.address.rm1</name>

<value>hadoop001:23140</value>

</property>

<property>

<name>yarn.resourcemanager.address.rm2</name>

<value>hadoop002:23140</value>

</property>

<!-- RPC address ApplicationMasters use to reach the RM (scheduler interface) -->

<property>

<name>yarn.resourcemanager.scheduler.address.rm1</name>

<value>hadoop001:23130</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address.rm2</name>

<value>hadoop002:23130</value>

</property>

<!-- RM admin interface -->

<property>

<name>yarn.resourcemanager.admin.address.rm1</name>

<value>hadoop001:23141</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address.rm2</name>

<value>hadoop002:23141</value>

</property>

<!-- RPC address NodeManagers use to reach the RM -->

<property>

<name>yarn.resourcemanager.resource-tracker.address.rm1</name>

<value>hadoop001:23125</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address.rm2</name>

<value>hadoop002:23125</value>

</property>

<!-- RM web application address -->

<property>

<name>yarn.resourcemanager.webapp.address.rm1</name>

<value>hadoop001:8088</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address.rm2</name>

<value>hadoop002:8088</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.https.address.rm1</name>

<value>hadoop001:23189</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.https.address.rm2</name>

<value>hadoop002:23189</value>

</property>

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<property>

<name>yarn.log.server.url</name>

<value>http://hadoop001:19888/jobhistory/logs</value>

</property>

<property>

<name>yarn.nodemanager.resource.memory-mb</name>

<value>2048</value>

</property>

<property>

<name>yarn.scheduler.minimum-allocation-mb</name>

<value>1024</value>

<description>Minimum memory a single container can request; default 1024 MB</description>

</property>

<property>

<name>yarn.scheduler.maximum-allocation-mb</name>

<value>2048</value>

<description>Maximum memory a single container can request; default 8192 MB</description>

</property>

<property>

<name>yarn.nodemanager.resource.cpu-vcores</name>

<value>2</value>

</property>

</configuration>
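The memory settings above bound how many containers each NodeManager can run. As a rough sketch (the function name containers_per_node is hypothetical, and the estimate ignores vcores and the container taken by each ApplicationMaster), the upper bound is the node memory divided by the minimum allocation:

```shell
# Hypothetical helper: how many minimum-size containers fit on one NodeManager.
containers_per_node() {
  node_mb=$1   # yarn.nodemanager.resource.memory-mb
  min_mb=$2    # yarn.scheduler.minimum-allocation-mb
  echo $(( node_mb / min_mb ))
}

containers_per_node 2048 1024   # prints 2
```

With the three NodeManagers listed in slaves, that is at most six minimum-size containers across the cluster, which is worth keeping in mind when a job appears to hang waiting for resources.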

Starting ZooKeeper, HDFS, and YARN

Start ZooKeeper first:

$ZOOKEEPER_HOME/bin/zkServer.sh start

zkServer.sh status

If the three machines report two followers and one leader, ZooKeeper is up.

Start the JournalNode:

cd app/hadoop-2.6.0-cdh5.7.0

sbin/hadoop-daemon.sh start journalnode

[hadoop@hadoop001 hadoop-2.6.0-cdh5.7.0]$ jps

2899 JournalNode

2950 Jps

2782 QuorumPeerMain (this is the ZooKeeper process)

Starting Hadoop

Before the first start, format the NameNode. Of the two NameNodes, format only one.

[hadoop@hadoop001 hadoop-2.6.0-cdh5.7.0]$ hadoop namenode -format

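Then copy the formatted data directory (which holds the NameNode and DataNode directories) to the machine hosting the second NameNode, so that both NameNodes start from the same metadata.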

[hadoop@hadoop001 hadoop-2.6.0-cdh5.7.0]$ scp -r data hadoop002:/home/hadoop/app/hadoop-2.6.0-cdh5.7.0

Initialize the ZKFC state in ZooKeeper. Do this on hadoop001 only, because the nameservice already covers the NameNodes on both hadoop001 and hadoop002.

[hadoop@hadoop001 hadoop-2.6.0-cdh5.7.0]$ hdfs zkfc -formatZK

Successfully created /hadoop-ha/ruozeclusterg5 in ZK.

Start HDFS:

[hadoop@hadoop001 hadoop-2.6.0-cdh5.7.0]$ start-dfs.sh

This fails: the uploaded slaves file has DOS (Windows) line endings and must be converted. Stop HDFS first:

[hadoop@hadoop001 hadoop-2.6.0-cdh5.7.0]$ stop-dfs.sh

Install the conversion tool (as root), then convert the file in etc/hadoop:

yum install -y dos2unix

dos2unix slaves
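Before and after converting, it can help to confirm whether a file really contains DOS line endings. A minimal sketch using only grep and tr; the function names has_crlf and strip_crlf are hypothetical, and strip_crlf is an alternative for machines where dos2unix cannot be installed.

```shell
# Hypothetical helpers for spotting and removing DOS line endings.
has_crlf() {
  # Succeeds if the file contains at least one carriage return
  grep -q "$(printf '\r')" "$1"
}

strip_crlf() {
  # Write the file to stdout with carriage returns removed
  tr -d '\r' < "$1"
}
```

For example, has_crlf slaves && echo "slaves has DOS line endings" flags the problem before start-dfs.sh does. The other configuration files uploaded from Windows can be checked the same way.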

Mind the startup order, then start HDFS again:

[hadoop@hadoop001 hadoop]$ start-dfs.sh

18/11/27 10:18:36 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Starting namenodes on [hadoop001 hadoop002]

hadoop001: starting namenode, logging to /home/hadoop/app/hadoop-2.6.0-cdh5.7.0/logs/hadoop-hadoop-namenode-hadoop001.out

hadoop002: starting namenode, logging to /home/hadoop/app/hadoop-2.6.0-cdh5.7.0/logs/hadoop-hadoop-namenode-hadoop002.out

hadoop002: starting datanode, logging to /home/hadoop/app/hadoop-2.6.0-cdh5.7.0/logs/hadoop-hadoop-datanode-hadoop002.out

hadoop001: starting datanode, logging to /home/hadoop/app/hadoop-2.6.0-cdh5.7.0/logs/hadoop-hadoop-datanode-hadoop001.out

hadoop003: starting datanode, logging to /home/hadoop/app/hadoop-2.6.0-cdh5.7.0/logs/hadoop-hadoop-datanode-hadoop003.out

Starting journal nodes [hadoop001 hadoop002 hadoop003]

hadoop002: starting journalnode, logging to /home/hadoop/app/hadoop-2.6.0-cdh5.7.0/logs/hadoop-hadoop-journalnode-hadoop002.out

hadoop001: starting journalnode, logging to /home/hadoop/app/hadoop-2.6.0-cdh5.7.0/logs/hadoop-hadoop-journalnode-hadoop001.out

hadoop003: starting journalnode, logging to /home/hadoop/app/hadoop-2.6.0-cdh5.7.0/logs/hadoop-hadoop-journalnode-hadoop003.out

18/11/27 10:18:53 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Starting ZK Failover Controllers on NN hosts [hadoop001 hadoop002]

hadoop002: starting zkfc, logging to /home/hadoop/app/hadoop-2.6.0-cdh5.7.0/logs/hadoop-hadoop-zkfc-hadoop002.out

hadoop001: starting zkfc, logging to /home/hadoop/app/hadoop-2.6.0-cdh5.7.0/logs/hadoop-hadoop-zkfc-hadoop001.out

Start YARN:

[hadoop@hadoop001 hadoop]$ start-yarn.sh

starting yarn daemons

starting resourcemanager, logging to /home/hadoop/app/hadoop-2.6.0-cdh5.7.0/logs/yarn-hadoop-resourcemanager-hadoop001.out

hadoop002: starting nodemanager, logging to /home/hadoop/app/hadoop-2.6.0-cdh5.7.0/logs/yarn-hadoop-nodemanager-hadoop002.out

hadoop003: starting nodemanager, logging to /home/hadoop/app/hadoop-2.6.0-cdh5.7.0/logs/yarn-hadoop-nodemanager-hadoop003.out

hadoop001: starting nodemanager, logging to /home/hadoop/app/hadoop-2.6.0-cdh5.7.0/logs/yarn-hadoop-nodemanager-hadoop001.out

The second ResourceManager has to be started manually:

[hadoop@hadoop002 hadoop-2.6.0-cdh5.7.0]$ yarn-daemon.sh start resourcemanager

starting resourcemanager, logging to /home/hadoop/app/hadoop-2.6.0-cdh5.7.0/logs/yarn-hadoop-resourcemanager-hadoop002.out
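With both NameNodes and both ResourceManagers running, their HA roles can be checked from the command line with hdfs haadmin -getServiceState and yarn rmadmin -getServiceState. The wrapper below is a sketch: the function name report_ha_state is hypothetical, and the ids nn1, nn2, rm1, and rm2 are the ones defined in hdfs-site.xml and yarn-site.xml above.

```shell
# Hypothetical helper: print the HA state of each service id.
# $1 is the admin command ("hdfs haadmin" or "yarn rmadmin"); the rest are ids.
report_ha_state() {
  admin_cmd=$1
  shift
  for id in "$@"; do
    # $admin_cmd is intentionally unquoted so "hdfs haadmin" splits into two words
    state=$($admin_cmd -getServiceState "$id" 2>/dev/null) || state="unreachable"
    echo "$id: $state"
  done
}
```

Calling report_ha_state "hdfs haadmin" nn1 nn2 and report_ha_state "yarn rmadmin" rm1 rm2 should show one active and one standby in each pair.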

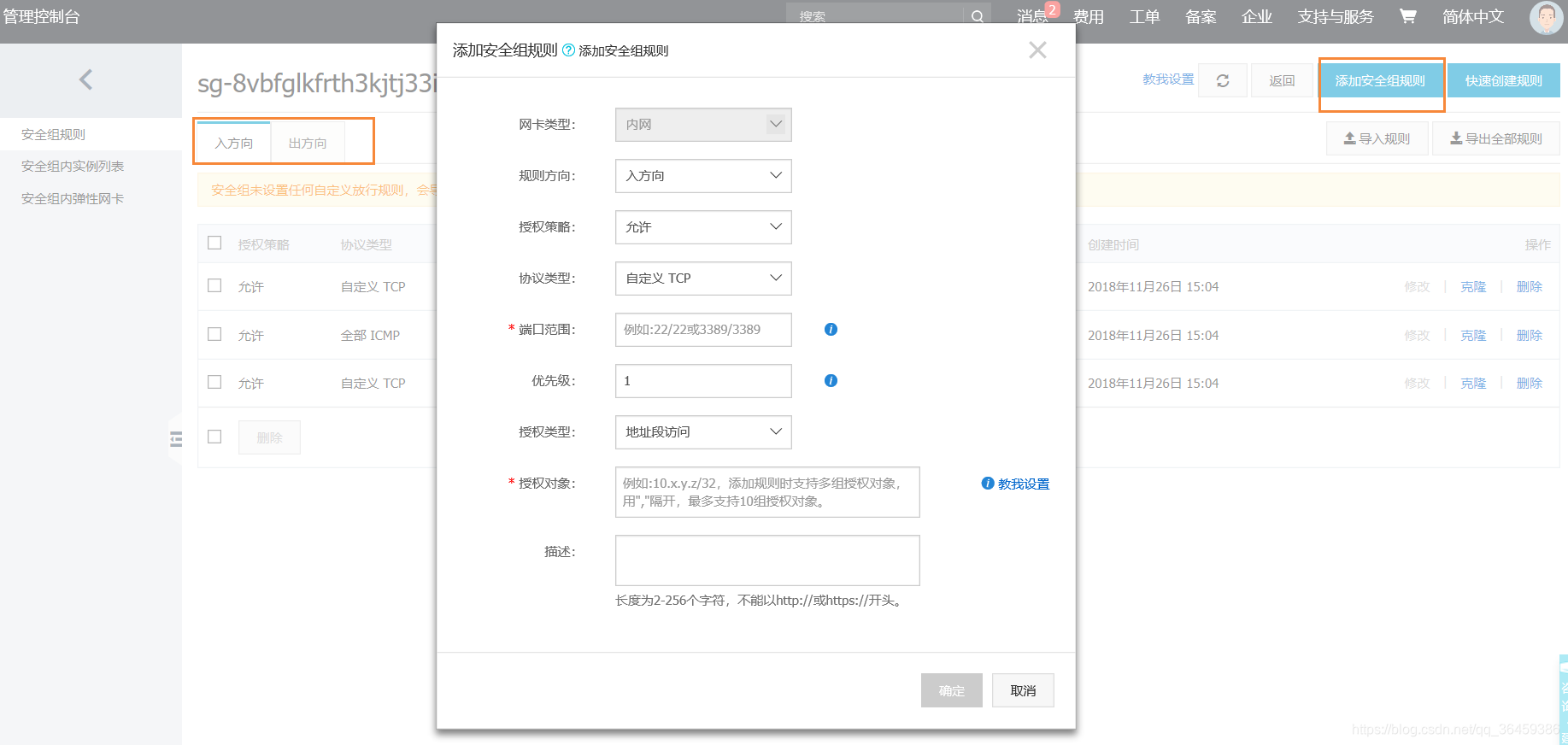

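Viewing the web UIs

First configure the inbound and outbound security group rules for the cloud hosts.

After that, the pages can be opened in a browser through the public IP.

HDFS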

http://47.92.250.235:50070

YARN

http://47.92.250.235:8088 (active)

http://47.92.250.236:8088/cluster/cluster (standby)

Start the JobHistory server, because YARN itself keeps only a limited number of application records:

[hadoop@hadoop001 hadoop]$ $HADOOP_HOME/sbin/mr-jobhistory-daemon.sh start historyserver

The JobHistory web UI listens on port 19888.

Cluster start and stop order

Start

zkServer.sh start

[hadoop@hadoop001 sbin]$ start-dfs.sh

[hadoop@hadoop001 sbin]$ start-yarn.sh

[hadoop@hadoop002 sbin]$ yarn-daemon.sh start resourcemanager

[hadoop@hadoop001 ~]$ $HADOOP_HOME/sbin/mr-jobhistory-daemon.sh start historyserver

Stop

[hadoop@hadoop001 sbin]$ stop-yarn.sh

[hadoop@hadoop002 sbin]$ yarn-daemon.sh stop resourcemanager

[hadoop@hadoop001 sbin]$ stop-dfs.sh

zkServer.sh stop