1.HBase shell

HBase的命令行工具是最简单的接口,主要用于HBase管理

首先启动HBase

帮助

hbase(main):001:0> help

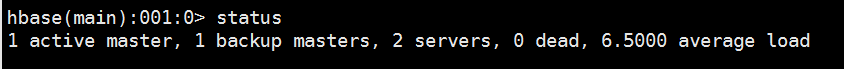

查看HBase服务器状态

hbase(main):001:0> status

查询HBse版本

hbase(main):002:0> version

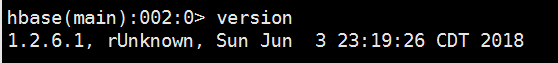

ddl操作

1.创建一个member表

hbase(main):013:0> create 'table1','tab1_id','tab1_add','tab1_info'

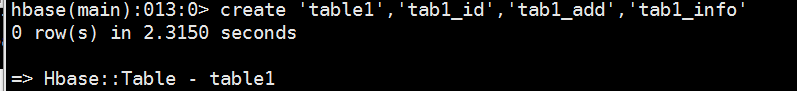

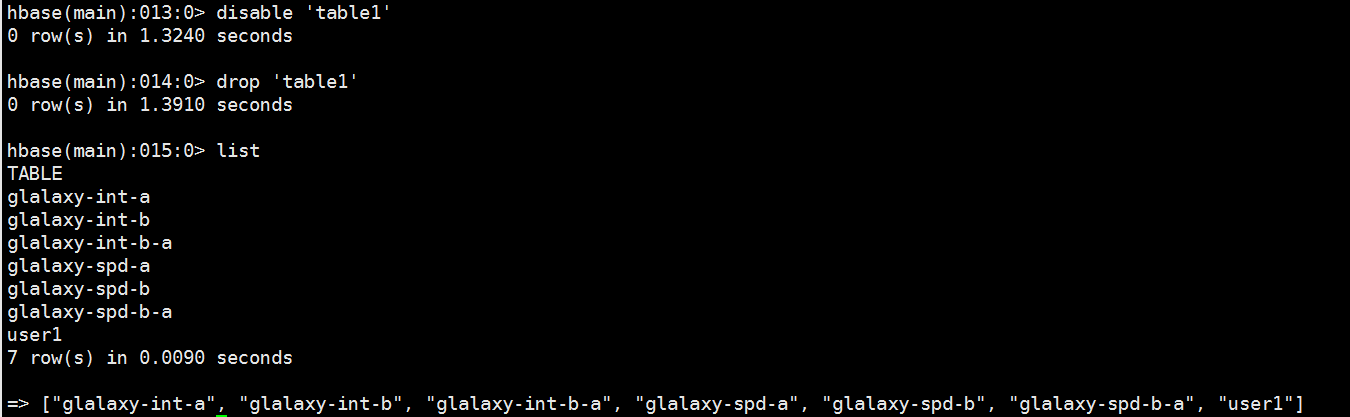

2.查看所有的表

hbase(main):006:0> list

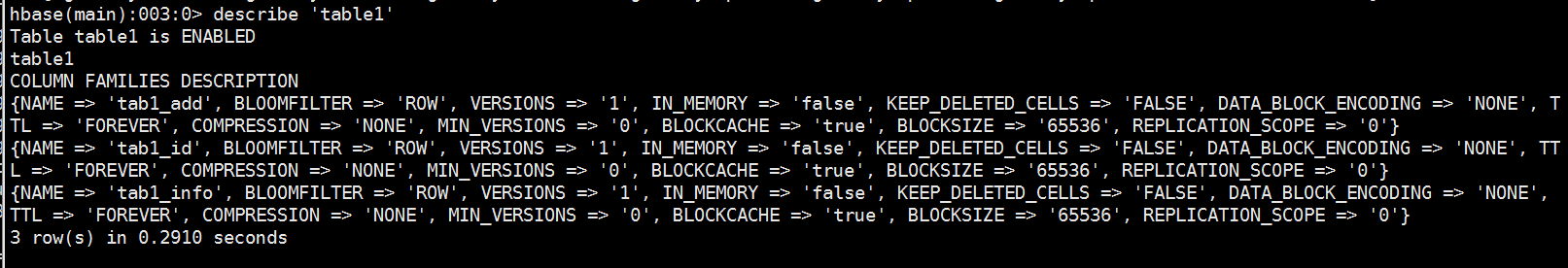

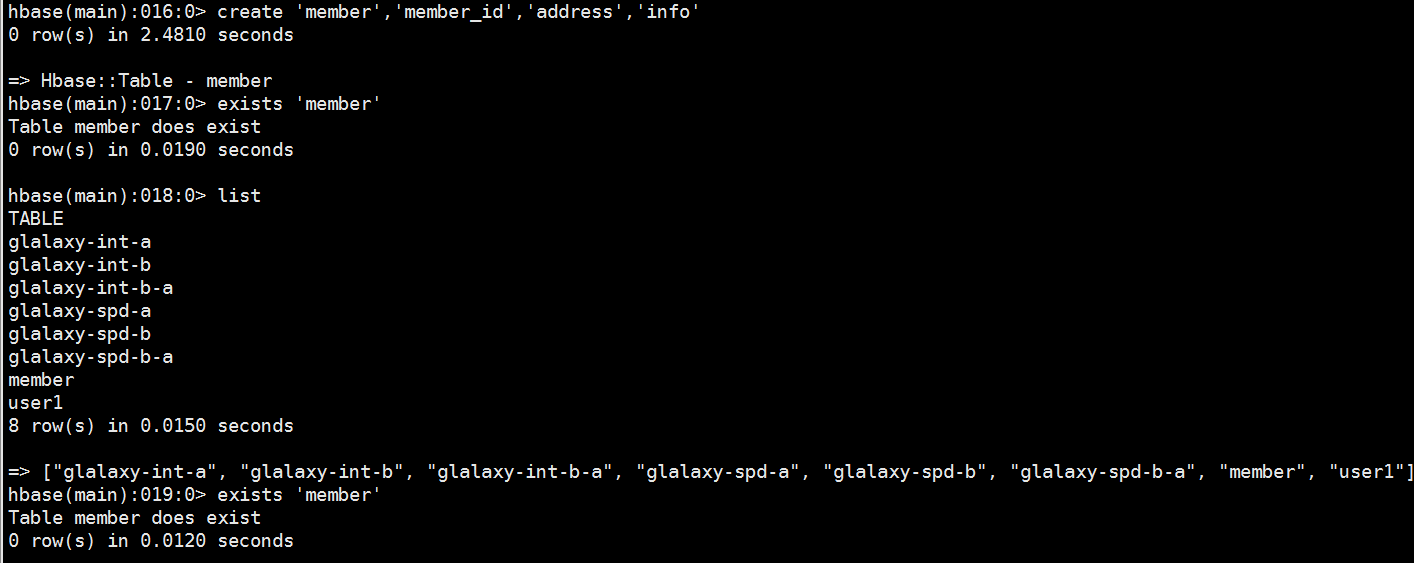

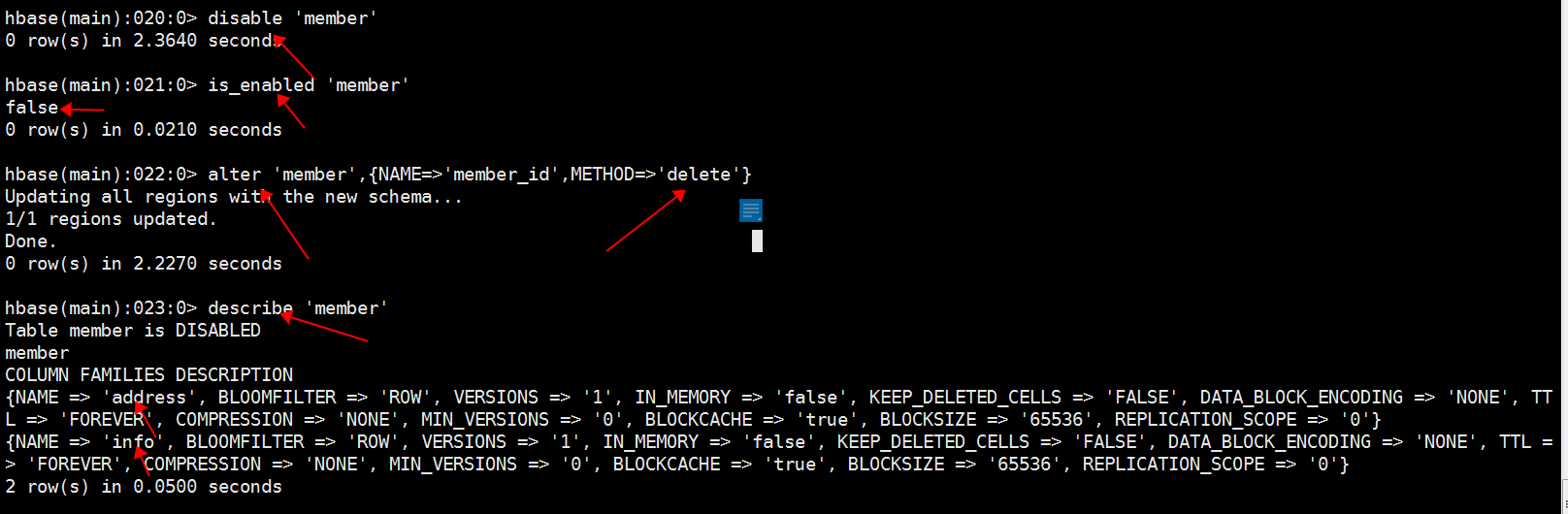

3.查看表结构

hbase(main):007:0> describe 'member'

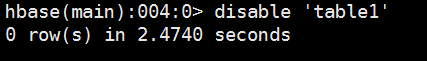

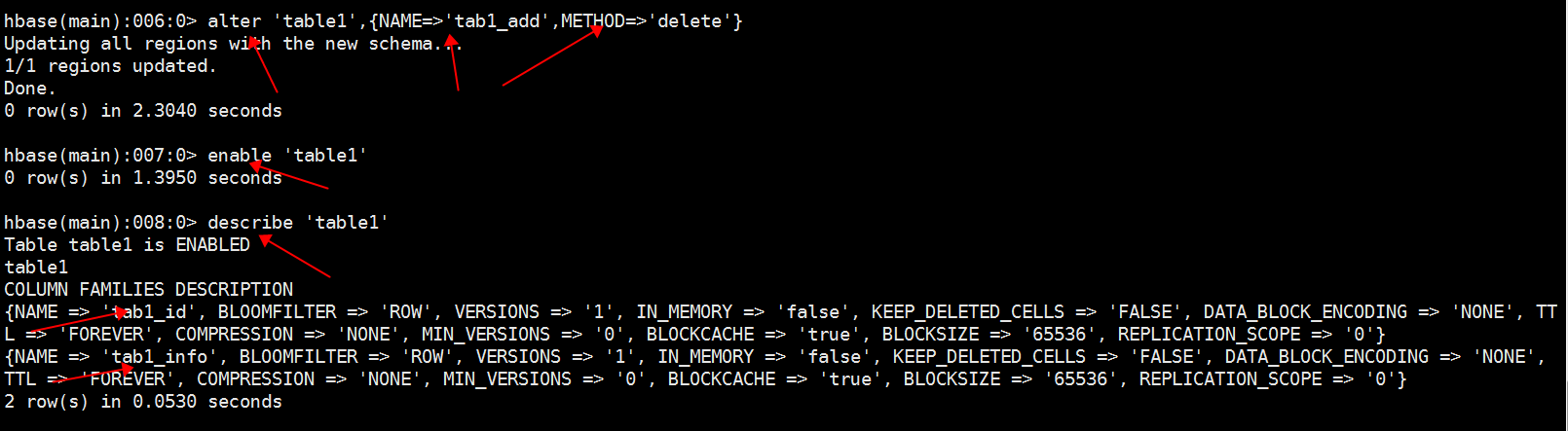

4.删除一个列簇

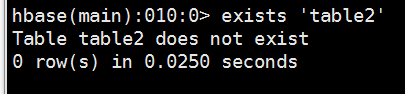

5、查看表是否存在

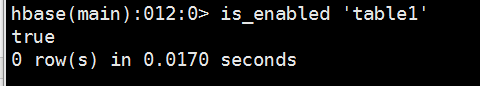

6、判断表是否为"enable"

7、删除一个表

dml操作

1、创建member表

删除一个列簇(一般不超过两个列簇)

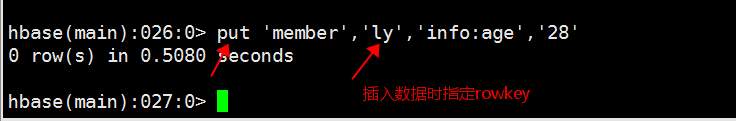

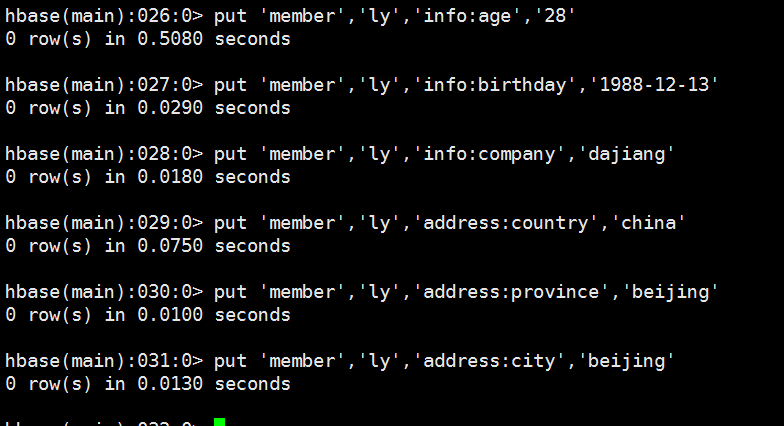

2、往member表插入数据

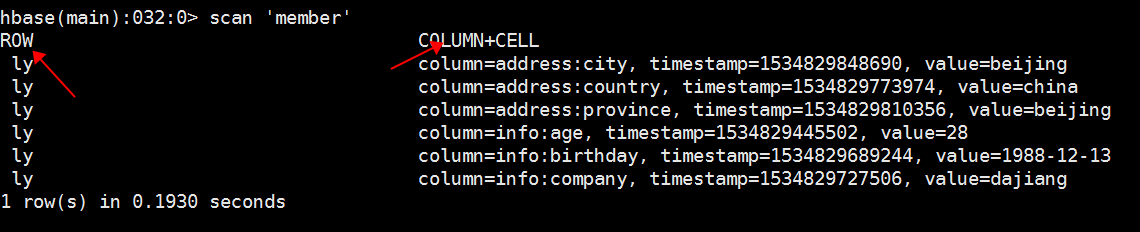

3、扫描查看数据

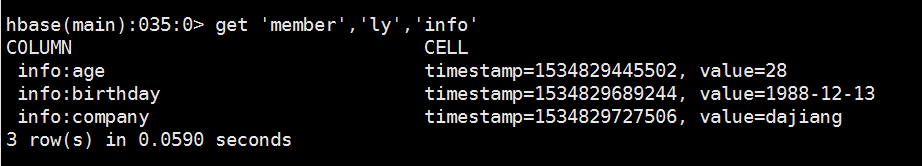

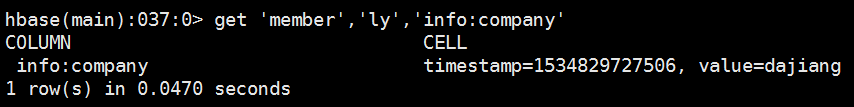

4、获取数据

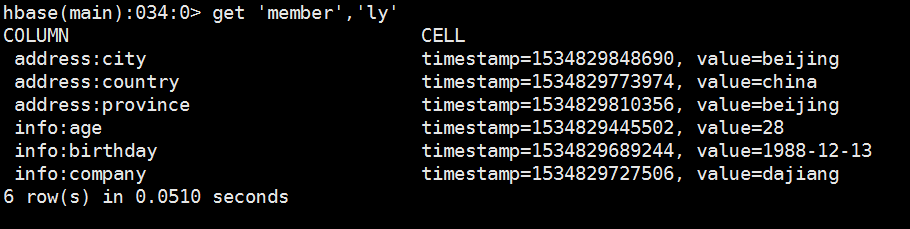

获取一个rowkey的所有数据

获取一个rowkey,一个列簇的所有数据

获取一个rowkey,一个列簇中一个列的所有数据

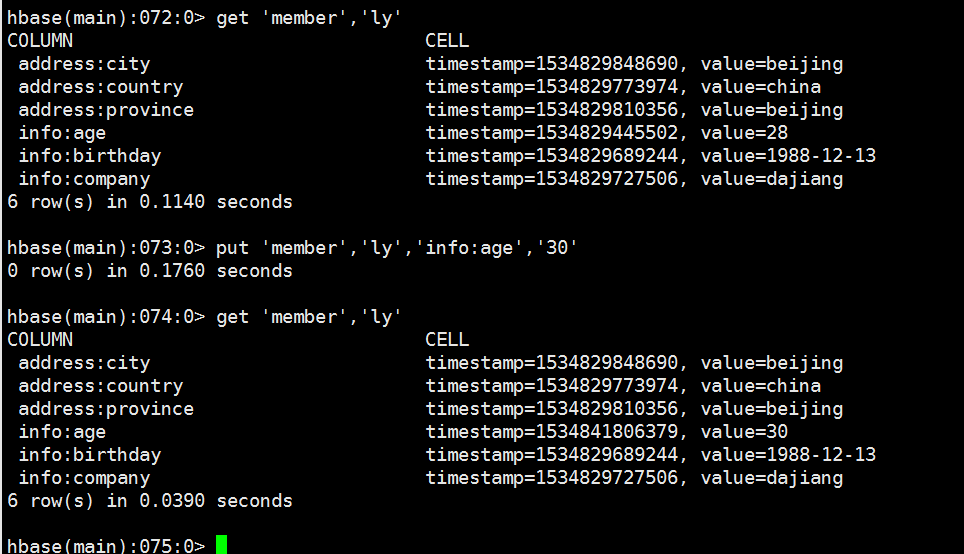

5、更新数据

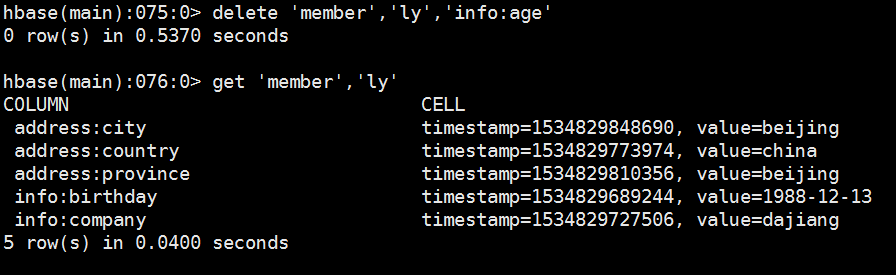

6、删除列簇中其中一列

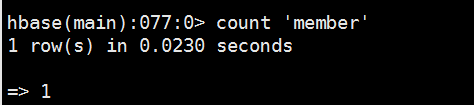

7、统计表中总的行数

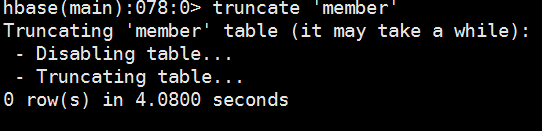

8、清空表中数据

2.java API

最常规且最高效的访问方式

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.HColumnDescriptor;

import org.apache.hadoop.hbase.HTableDescriptor;

import org.apache.hadoop.hbase.KeyValue;

import org.apache.hadoop.hbase.MasterNotRunningException;

import org.apache.hadoop.hbase.ZooKeeperConnectionException;

import org.apache.hadoop.hbase.client.Delete;

import org.apache.hadoop.hbase.client.Get;

import org.apache.hadoop.hbase.client.HBaseAdmin;

import org.apache.hadoop.hbase.client.HTable;

import org.apache.hadoop.hbase.client.Put;

import org.apache.hadoop.hbase.client.Result;

import org.apache.hadoop.hbase.client.ResultScanner;

import org.apache.hadoop.hbase.client.Scan;

import org.apache.hadoop.hbase.util.Bytes;

public class HbaseTest {

public static Configuration conf;

static{

conf = HBaseConfiguration.create();//第一步

conf.set("hbase.zookeeper.quorum", "header-2,core-1,core-2");

conf.set("hbase.zookeeper.property.clientPort", "2181");

conf.set("hbase.master", "header-1:60000");

}

public static void main(String[] args) throws IOException{

//createTable("member");

//insertDataByPut("member");

//QueryByGet("member");

QueryByScan("member");

//DeleteData("member");

}

/**

* 创建表 通过HBaseAdmin对象操作

*

* @param tablename

* @throws IOException

* @throws ZooKeeperConnectionException

* @throws MasterNotRunningException

*

*/

public static void createTable(String tableName) throws MasterNotRunningException, ZooKeeperConnectionException, IOException {

//创建HBaseAdmin对象

HBaseAdmin hBaseAdmin = new HBaseAdmin(conf);

//判断表是否存在,若存在就删除

if(hBaseAdmin.tableExists(tableName)){

hBaseAdmin.disableTable(tableName);

hBaseAdmin.deleteTable(tableName);

}

HTableDescriptor tableDescriptor = new HTableDescriptor(tableName);

//添加Family

tableDescriptor.addFamily(new HColumnDescriptor("info"));

tableDescriptor.addFamily(new HColumnDescriptor("address"));

//创建表

hBaseAdmin.createTable(tableDescriptor);

//释放资源

hBaseAdmin.close();

}

/**

*

* @param tableName

* @throws IOException

*/

@SuppressWarnings("deprecation")

public static void insertDataByPut(String tableName) throws IOException {

//第二步 获取句柄,传入静态配置和表名称

HTable table = new HTable(conf, tableName);

//添加rowkey,添加数据, 通过getBytes方法将string类型都转化为字节流

Put put1 = new Put(getBytes("djt"));

put1.add(getBytes("address"), getBytes("country"), getBytes("china"));

put1.add(getBytes("address"), getBytes("province"), getBytes("beijing"));

put1.add(getBytes("address"), getBytes("city"), getBytes("beijing"));

put1.add(getBytes("info"), getBytes("age"), getBytes("28"));

put1.add(getBytes("info"), getBytes("birthdy"), getBytes("1998-12-23"));

put1.add(getBytes("info"), getBytes("company"), getBytes("dajiang"));

//第三步

table.put(put1);

//释放资源

table.close();

}

/**

* 查询一条记录

* @param tableName

* @throws IOException

*/

public static void QueryByGet(String tableName) throws IOException {

//第二步

HTable table = new HTable(conf, tableName);

//根据rowkey查询

Get get = new Get(getBytes("djt"));

Result r = table.get(get);

System.out.println("获得到rowkey:" + new String(r.getRow()));

for(KeyValue keyvalue : r.raw()){

System.out.println("列簇:" + new String(keyvalue.getFamily())

+ "====列:" + new String(keyvalue.getQualifier())

+ "====值:" + new String(keyvalue.getValue()));

}

table.close();

}

/**

* 扫描

* @param tableName

* @throws IOException

*/

public static void QueryByScan(String tableName) throws IOException {

// 第二步

HTable table = new HTable(conf, tableName);

Scan scan = new Scan();

//指定需要扫描的列簇,列.如果不指定就是全表扫描

scan.addColumn(getBytes("info"), getBytes("company"));

ResultScanner scanner = table.getScanner(scan);

for(Result r : scanner){

System.out.println("获得到rowkey:" + new String(r.getRow()));

for(KeyValue kv : r.raw()){

System.out.println("列簇:" + new String(kv.getFamily())

+ "====列:" + new String(kv.getQualifier())

+ "====值 :" + new String(kv.getValue()));

}

}

//释放资源

scanner.close();

table.close();

}

/**

* 删除一条数据

* @param tableName

* @throws IOException

*/

public static void DeleteData(String tableName) throws IOException {

// 第二步

HTable table = new HTable(conf, tableName);

Delete delete = new Delete(getBytes("djt"));

delete.addColumn(getBytes("info"), getBytes("age"));

table.delete(delete);

//释放资源

table.close();

}

/**

* 转换byte数组(string类型都转化为字节流)

*/

public static byte[] getBytes(String str){

if(str == null)

str = "";

return Bytes.toBytes(str);

}

}3.MapReduce

直接使用MapReduce作业处理HBase数据

import java.io.IOException;

import java.util.StringTokenizer;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.HColumnDescriptor;

import org.apache.hadoop.hbase.HTableDescriptor;

import org.apache.hadoop.hbase.client.HBaseAdmin;

import org.apache.hadoop.hbase.client.Put;

import org.apache.hadoop.hbase.io.ImmutableBytesWritable;

import org.apache.hadoop.hbase.mapreduce.TableMapReduceUtil;

import org.apache.hadoop.hbase.mapreduce.TableReducer;

import org.apache.hadoop.hbase.util.Bytes;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

/**

* 将hdfs里面的数据导入hbase

* @author Administrator

*

*/

public class MapReduceWriteHbaseDriver {

public static class WordCountMapperHbase extends Mapper<Object, Text,

ImmutableBytesWritable, IntWritable>{

private final static IntWritable one = new IntWritable(1);

private Text word = new Text();

public void map(Object key, Text value, Context context) throws IOException, InterruptedException{

StringTokenizer itr = new StringTokenizer(value.toString());

while(itr.hasMoreTokens()){

word.set(itr.nextToken());

//输出到hbase的key类型为ImmutableBytesWritable

context.write(new ImmutableBytesWritable(Bytes.toBytes(word.toString())), one);

}

}

}

public static class WordCountReducerHbase extends TableReducer<ImmutableBytesWritable,

IntWritable, ImmutableBytesWritable>{

private IntWritable result = new IntWritable();

public void reduce(ImmutableBytesWritable key, Iterable<IntWritable> values, Context context)

throws IOException, InterruptedException{

int sum = 0;

for(IntWritable val : values){

sum += val.get();

}

//put实例化 key代表主键,每个单词存一行

Put put = new Put(key.get());

//三个参数分别为:列簇content 列count 列值为词频

put.add(Bytes.toBytes("content"), Bytes.toBytes("count"), Bytes.toBytes(String.valueOf(sum)));

context.write(key, put);

}

}

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException{

String tableName = "wordcount";//hbase数据库表名 也可以通过命令行传入表名args

Configuration conf = HBaseConfiguration.create();//实例化Configuration

conf.set("hbase.zookeeper.quorum", "header-2,core-1,core-2");

conf.set("hbase.zookeeper.property.clientPort", "2181");

conf.set("hbase.master", "header-1");

//如果表已经存在就先删除

HBaseAdmin admin = new HBaseAdmin(conf);

if(admin.tableExists(tableName)){

admin.disableTable(tableName);

admin.deleteTable(tableName);

}

HTableDescriptor htd = new HTableDescriptor(tableName);//指定表名

HColumnDescriptor hcd = new HColumnDescriptor("content");//指定列簇名

htd.addFamily(hcd);//创建列簇

admin.createTable(htd);//创建表

Job job = new Job(conf, "import from hdfs to hbase");

job.setJarByClass(MapReduceWriteHbaseDriver.class);

job.setMapperClass(WordCountMapperHbase.class);

//设置插入hbase时的相关操作

TableMapReduceUtil.initTableReducerJob(tableName, WordCountReducerHbase.class, job, null, null, null, null, false);

//map输出

job.setMapOutputKeyClass(ImmutableBytesWritable.class);

job.setMapOutputValueClass(IntWritable.class);

//reduce输出

job.setOutputKeyClass(ImmutableBytesWritable.class);

job.setOutputValueClass(Put.class);

//读取数据

FileInputFormat.addInputPaths(job, "hdfs://header-1:9000/user/test.txt");

System.out.println(job.waitForCompletion(true) ? 0 : 1);

}

}import java.io.IOException;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.client.Result;

import org.apache.hadoop.hbase.client.Scan;

import org.apache.hadoop.hbase.io.ImmutableBytesWritable;

import org.apache.hadoop.hbase.mapreduce.TableMapReduceUtil;

import org.apache.hadoop.hbase.mapreduce.TableMapper;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

/**

* 读取hbse数据存入HDFS

* @author Administrator

*

*/

public class MapReduceReaderHbaseDriver {

public static class WordCountHBaseMapper extends TableMapper<Text, Text>{

protected void map(ImmutableBytesWritable key, Result values,Context context) throws IOException, InterruptedException{

StringBuffer sb = new StringBuffer("");

//获取列簇content下面的值

for(java.util.Map.Entry<byte[], byte[]> value : values.getFamilyMap("content".getBytes()).entrySet()){

String str = new String(value.getValue());

if(str != null){

sb.append(str);

}

context.write(new Text(key.get()), new Text(new String(sb)));

}

}

}

public static class WordCountHBaseReducer extends Reducer<Text, Text, Text, Text>{

private Text result = new Text();

public void reduce(Text key, Iterable<Text> values, Context context) throws IOException, InterruptedException{

for(Text val : values){

result.set(val);

context.write(key, result);

}

}

}

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

String tableName = "wordcount";//表名称

Configuration conf = HBaseConfiguration.create();//实例化Configuration

conf.set("hbase.zookeeper.quorum", "header-2,core-1,core-2");

conf.set("hbase.zookeeper.property.clientPort", "2181");

conf.set("hbase.master", "header-1:60000");

Job job = new Job(conf, "import from hbase to hdfs");

job.setJarByClass(MapReduceReaderHbaseDriver.class);

job.setReducerClass(WordCountHBaseReducer.class);

//配置读取hbase的相关操作

TableMapReduceUtil.initTableMapperJob(tableName, new Scan(), WordCountHBaseMapper.class, Text.class, Text.class, job, false);

//输出路径

FileOutputFormat.setOutputPath(job, new Path("hdfs://header-1:9000/user/out"));

System.out.println(job.waitForCompletion(true) ? 0 : 1);

}

}