Writing an MP4 video player in Python with GStreamer

How I understand writing a player with GStreamer

Writing a player with GStreamer comes down to a few key points:

1. Choosing the elements:

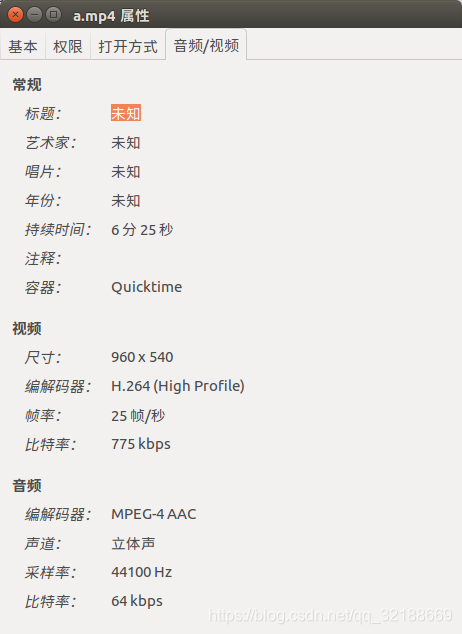

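Pick the demuxer and decoder elements based on the file's container format and on the video and audio codecs stored inside it. You can read the video and audio codecs from the file's properties: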

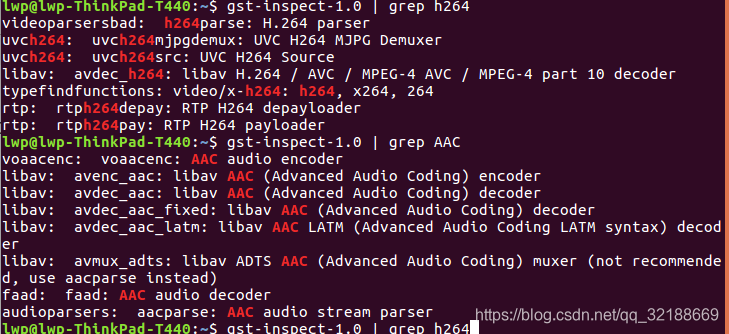

As the figure shows, this file uses H.264 for video and AAC for audio. You can search for suitable elements with the gst-inspect-1.0 command.
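The selection step amounts to a lookup from what the file's properties report to GStreamer element names. The sketch below assumes a table that covers only the combination used in this article (MP4 → qtdemux, H.264 → avdec_h264, AAC → faad). `pick_elements` and the table names are hypothetical helpers and are not part of GStreamer.

```python
# Hypothetical lookup tables: format names as shown in the file's properties
# -> GStreamer element names used in this article. Extend as needed.
DEMUXERS = {"mp4": "qtdemux"}
VIDEO_DECODERS = {"H.264": "avdec_h264"}
AUDIO_DECODERS = {"AAC": "faad"}


def pick_elements(container: str, video_codec: str, audio_codec: str) -> dict:
    """Return the demuxer and decoder element names for one media file.

    Raises KeyError when a format is missing from the (deliberately small)
    tables, which is the cue to look for an element with gst-inspect-1.0.
    """
    return {
        "demuxer": DEMUXERS[container.lower()],
        "video_decoder": VIDEO_DECODERS[video_codec],
        "audio_decoder": AUDIO_DECODERS[audio_codec],
    }


if __name__ == "__main__":
    print(pick_elements("MP4", "H.264", "AAC"))
    # {'demuxer': 'qtdemux', 'video_decoder': 'avdec_h264', 'audio_decoder': 'faad'}
```

A KeyError means the format is not covered yet. At runtime, `Gst.ElementFactory.find(name)` returns None when the plugin that provides an element is not installed. This is a quick way to confirm that a chosen element is actually available.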

2. Linking the elements

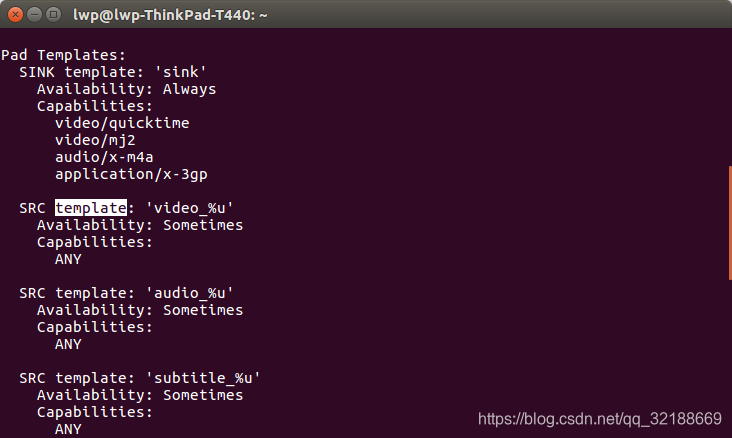

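The key to linking is a callback that runs whenever the demuxer creates a new pad and links that pad to the sink pad of the matching queue. The demuxer's source pads are "sometimes" pads. They only appear at runtime, after the demuxer has parsed the file, so they cannot be linked in advance like the other elements. gst-inspect-1.0 shows an element's pad templates, and the template names let the callback tell the video stream from the audio stream when a new pad appears.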

Command: gst-inspect-1.0 qtdemux (its output lists qtdemux's source pad templates, including video_%u and audio_%u)
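You can pull the decision that the pad-added callback makes out of GStreamer into a plain function, which makes it easy to test. The sketch below assumes the queue names used in the players (`queuev`, `queuea`); `route_pad` and `route_by_caps` are hypothetical helpers. The second variant decides by the media type of the pad's caps instead of the template name. In a real callback, that string would typically come from `pad.get_current_caps().get_structure(0).get_name()`.

```python
from typing import Optional

# Template names the callbacks in this article check for -> the queue that
# should receive the new pad (queue names taken from the players below).
PAD_ROUTES = {
    "video_%u": "queuev",
    "audio_%u": "queuea",
}


def route_pad(name_template: str) -> Optional[str]:
    """Return the queue a new demuxer pad should be linked to, or None.

    None covers any other template (e.g. subtitle pads), which the players
    leave unlinked.
    """
    return PAD_ROUTES.get(name_template)


def route_by_caps(caps_name: str) -> Optional[str]:
    """Alternative: route by the caps media type, e.g. "video/x-h264"."""
    if caps_name.startswith("video/"):
        return "queuev"
    if caps_name.startswith("audio/"):
        return "queuea"
    return None


if __name__ == "__main__":
    print(route_pad("video_%u"), route_by_caps("audio/mpeg"))
```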

A simple MP4 player:

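```python
import gi

gi.require_version('Gst', '1.0')

from gi.repository import Gst, GLib

Gst.init(None)


# Callback: when the demuxer creates a new pad, link it to the matching queue
def cb_demuxer_newpad(src, pad, dst, dst2):
    if pad.get_property("template").name_template == "video_%u":
        vdec_pad = dst.get_static_pad("sink")
        pad.link(vdec_pad)
    elif pad.get_property("template").name_template == "audio_%u":
        adec_pad = dst2.get_static_pad("sink")
        pad.link(adec_pad)


# Create the elements
pipe = Gst.Pipeline.new("test")
src = Gst.ElementFactory.make("filesrc", "src")
demuxer = Gst.ElementFactory.make("qtdemux", "demux")

# Video branch: queue -> H.264 decoder -> colour conversion -> video sink
decodebin = Gst.ElementFactory.make("avdec_h264", "decode")
queuev = Gst.ElementFactory.make("queue", "queue")
conv = Gst.ElementFactory.make("videoconvert", "conv")
sink = Gst.ElementFactory.make("xvimagesink", "sink")

# Audio branch: queue -> AAC decoder -> audio conversion -> audio sink
decodebina = Gst.ElementFactory.make("faad", "decodea")
queuea = Gst.ElementFactory.make("queue", "queuea")
conva = Gst.ElementFactory.make("audioconvert", "conva")
sinka = Gst.ElementFactory.make("autoaudiosink", "sinka")

# make() returns None when the plugin providing an element is not installed
elements = [src, demuxer, queuev, decodebin, conv, sink,
            queuea, decodebina, conva, sinka]
if any(e is None for e in elements):
    raise RuntimeError("Missing GStreamer element; check with gst-inspect-1.0")

# File to play. A relative path is resolved against the current working
# directory, so run the script from the folder that contains a.mp4
src.set_property("location", "a.mp4")

demuxer.connect("pad-added", cb_demuxer_newpad, queuev, queuea)

# Add the elements to the pipeline
for element in elements:
    pipe.add(element)

# Link the static pads; demuxer -> queues is linked later in the callback
src.link(demuxer)
queuev.link(decodebin)
decodebin.link(conv)
conv.link(sink)
queuea.link(decodebina)
decodebina.link(conva)
conva.link(sinka)

mainloop = GLib.MainLoop()


# Quit the main loop at end of stream or on error (otherwise run() never returns)
def on_message(bus, message):
    if message.type == Gst.MessageType.EOS:
        mainloop.quit()
    elif message.type == Gst.MessageType.ERROR:
        err, debug = message.parse_error()
        print("Error: %s" % err, debug)
        mainloop.quit()


bus = pipe.get_bus()
bus.add_signal_watch()
bus.connect("message", on_message)

# Set the pipeline to PLAYING and run until EOS or an error
pipe.set_state(Gst.State.PLAYING)
try:
    mainloop.run()
finally:
    pipe.set_state(Gst.State.NULL)
```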
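Before wiring the pipeline up in Python, it can help to try the same pipeline from the command line. The helper below is a sketch that builds a gst-launch-1.0 description matching the script above. It assumes the pad names video_0 and audio_0 (the first video and audio track of the MP4) and a path without spaces. `build_launch_line` is a hypothetical name.

```python
def build_launch_line(location: str,
                      video_decoder: str = "avdec_h264",
                      audio_decoder: str = "faad") -> str:
    """Build a gst-launch-1.0 pipeline description equivalent to the script.

    Assumes `location` contains no spaces (it is not quoted).
    """
    return (
        f"filesrc location={location} ! qtdemux name=demux "
        # Each "demux.<pad>" starts a branch fed by that demuxer pad
        f"demux.video_0 ! queue ! {video_decoder} ! videoconvert ! xvimagesink "
        f"demux.audio_0 ! queue ! {audio_decoder} ! audioconvert ! autoaudiosink"
    )


if __name__ == "__main__":
    print("gst-launch-1.0 " + build_launch_line("a.mp4"))
```

You can paste the printed line into a shell. Alternatively, pass the description (without the gst-launch-1.0 prefix) to Gst.parse_launch(), which builds the pipeline and links the demuxer's pads itself once they appear.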

An MP4 player with a GTK interface:

```python
import os

import gi

gi.require_version('Gst', '1.0')
gi.require_version('Gtk', '3.0')
gi.require_version('GstVideo', '1.0')
gi.require_version('GdkX11', '3.0')

# GstVideo adds set_window_handle() to video sinks and GdkX11 adds get_xid()
# to GDK windows; both must be imported even though they are not named below
from gi.repository import Gst, Gtk, GstVideo, GdkX11  # noqa: F401


class GTK_Main(object):

    def __init__(self):
        # Layout: [entry][Start/Stop] above a drawing area for the video
        window = Gtk.Window()
        window.set_title("MP4-Player")
        window.set_default_size(500, 400)
        window.connect("destroy", self.on_destroy)
        vbox = Gtk.Box(orientation=Gtk.Orientation.VERTICAL)
        window.add(vbox)
        hbox = Gtk.Box(orientation=Gtk.Orientation.HORIZONTAL)
        vbox.pack_start(hbox, False, False, 0)
        self.entry = Gtk.Entry()
        hbox.pack_start(self.entry, True, True, 0)
        self.button = Gtk.Button(label="Start")
        hbox.pack_start(self.button, False, False, 0)
        self.button.connect("clicked", self.start_stop)
        self.movie_window = Gtk.DrawingArea()
        vbox.pack_start(self.movie_window, True, True, 0)
        window.show_all()
        # Fetch the X11 window ID once, in the GTK main thread
        self.xid = self.movie_window.get_property('window').get_xid()

        # Build the pipeline with the same elements as the simple player
        self.player = Gst.Pipeline.new("player")
        source = Gst.ElementFactory.make("filesrc", "file-source")
        demuxer = Gst.ElementFactory.make("qtdemux", "demuxer")
        demuxer.connect("pad-added", self.demuxer_callback)
        self.video_decoder = Gst.ElementFactory.make("avdec_h264", "video-decoder")
        self.audio_decoder = Gst.ElementFactory.make("faad", "audio-decoder")
        audioconv = Gst.ElementFactory.make("audioconvert", "converter")
        audiosink = Gst.ElementFactory.make("autoaudiosink", "audio-output")
        videosink = Gst.ElementFactory.make("xvimagesink", "video-output")
        self.queuea = Gst.ElementFactory.make("queue", "queuea")
        self.queuev = Gst.ElementFactory.make("queue", "queuev")
        colorspace = Gst.ElementFactory.make("videoconvert", "colorspace")
        # GStreamer 0.10 name of the element that became videoconvert in 1.x:
        # colorspace = Gst.ElementFactory.make("ffmpegcolorspace", "colorspace")

        for element in (source, demuxer, self.video_decoder, self.audio_decoder,
                        audioconv, audiosink, videosink, self.queuea,
                        self.queuev, colorspace):
            self.player.add(element)

        # Static links; demuxer -> queues is linked in demuxer_callback
        source.link(demuxer)
        self.queuev.link(self.video_decoder)
        self.video_decoder.link(colorspace)
        colorspace.link(videosink)
        self.queuea.link(self.audio_decoder)
        self.audio_decoder.link(audioconv)
        audioconv.link(audiosink)

        bus = self.player.get_bus()
        bus.add_signal_watch()
        bus.enable_sync_message_emission()
        bus.connect("message", self.on_message)
        bus.connect("sync-message::element", self.on_sync_message)

    def on_destroy(self, window):
        # Stop playback before leaving the GTK main loop
        self.player.set_state(Gst.State.NULL)
        Gtk.main_quit()

    def start_stop(self, w):
        if self.button.get_label() == "Start":
            filepath = self.entry.get_text().strip()
            if os.path.isfile(filepath):
                filepath = os.path.realpath(filepath)
                self.button.set_label("Stop")
                self.player.get_by_name("file-source").set_property("location", filepath)
                self.player.set_state(Gst.State.PLAYING)
        else:
            self.player.set_state(Gst.State.NULL)
            self.button.set_label("Start")

    def on_message(self, bus, message):
        t = message.type
        if t == Gst.MessageType.EOS:
            self.player.set_state(Gst.State.NULL)
            self.button.set_label("Start")
        elif t == Gst.MessageType.ERROR:
            err, debug = message.parse_error()
            print("Error: %s" % err, debug)
            self.player.set_state(Gst.State.NULL)
            self.button.set_label("Start")

    def on_sync_message(self, bus, message):
        # Runs in a streaming thread: the video sink asks which window to draw into
        if message.get_structure().get_name() == 'prepare-window-handle':
            imagesink = message.src
            imagesink.set_property("force-aspect-ratio", True)
            imagesink.set_window_handle(self.xid)

    def demuxer_callback(self, demuxer, pad):
        # Link each new demuxer pad to the queue of the matching branch
        if pad.get_property("template").name_template == "video_%u":
            qv_pad = self.queuev.get_static_pad("sink")
            pad.link(qv_pad)
        elif pad.get_property("template").name_template == "audio_%u":
            qa_pad = self.queuea.get_static_pad("sink")
            pad.link(qa_pad)


Gst.init(None)
GTK_Main()
Gtk.main()
```
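Both players pass a file path to filesrc's location property, and filesrc resolves a relative path against the current working directory, not the script's folder. The helper below is a sketch that factors out the checks start_stop() performs (strip whitespace, require an existing file, take the real path) and adds an optional base directory. `resolve_media_path` and its `base_dir` parameter are hypothetical.

```python
import os
from typing import Optional


def resolve_media_path(text: str, base_dir: Optional[str] = None) -> Optional[str]:
    """Turn user input into an absolute path for filesrc's "location".

    Returns None when the input is empty or does not name an existing file.
    A relative path is joined to base_dir if given; otherwise it is left
    relative to the current working directory, as filesrc would treat it.
    """
    path = text.strip()
    if not path:
        return None
    if base_dir is not None and not os.path.isabs(path):
        path = os.path.join(base_dir, path)
    if not os.path.isfile(path):
        return None
    return os.path.realpath(path)
```

For the simple player, `resolve_media_path("a.mp4", os.path.dirname(os.path.abspath(__file__)))` finds a.mp4 next to the script no matter which directory the script is launched from.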