1. Scheduling a shell script with Oozie

1.1 Extract the official example templates

[hadoop@hadoop03 oozie-4.0.0-cdh5.3.6]$ tar -zxvf oozie-examples.tar.gz

1.2 Create a working directory

mkdir oozie-apps

1.3 Copy the job template into oozie-apps

cp -r examples/apps/shell/ oozie-apps

1.4 Write the script p1.sh

vi oozie-apps/shell/p1.sh

with the following content:

#!/bin/bash

date > /home/hadoop/cdh/p1.log

1.5 Edit the configuration files

1.5.1 job.properties

#HDFS address

nameNode=hdfs://hadoop01:8020

#ResourceManager address

jobTracker=hadoop03:8032

#Queue name

queueName=default

examplesRoot=oozie-apps

oozie.wf.application.path=${nameNode}/user/${user.name}/${examplesRoot}/shell

EXEC=p1.sh
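Oozie fills in the `${...}` references in job.properties at submission time, with `${user.name}` taken from the submitting user. As a rough sanity check, the sketch below rebuilds the resulting application path in bash. The function name `resolve_app_path` is hypothetical; it only mirrors the substitution and does not call Oozie or HDFS.

```shell
#!/bin/bash
# Hypothetical helper: mirrors how ${nameNode}, ${user.name} and ${examplesRoot}
# combine into oozie.wf.application.path. It does not contact Oozie or HDFS.
resolve_app_path() {
    local name_node="$1" user="$2" examples_root="$3" app="$4"
    printf '%s/user/%s/%s/%s\n' "$name_node" "$user" "$examples_root" "$app"
}

# Values from the job.properties above, assuming the job is submitted as user "hadoop".
resolve_app_path "hdfs://hadoop01:8020" "hadoop" "oozie-apps" "shell"
```

The printed path is the HDFS directory that has to contain workflow.xml, which is why the application is uploaded under /user/hadoop/oozie-apps/ in step 1.6.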

1.5.2 workflow.xml

<workflow-app

xmlns="uri:oozie:workflow:0.4" name="shell-wf">

<start to="shell-node"/>

<action name="shell-node">

<shell

xmlns="uri:oozie:shell-action:0.2">

<job-tracker>${jobTracker}</job-tracker>

<name-node>${nameNode}</name-node>

<configuration>

<property>

<name>mapred.job.queue.name</name>

<value>${queueName}</value>

</property>

</configuration>

<exec>${EXEC}</exec>

<!-- <argument>my_output=Hello Oozie</argument> -->

<file>/user/hadoop/oozie-apps/shell/${EXEC}#${EXEC}</file>

<capture-output/>

</shell>

<ok to="end"/>

<error to="fail"/>

</action>

<decision name="check-output">

<switch>

<case to="end">

${wf:actionData('shell-node')['my_output'] eq 'Hello Oozie'}

</case>

<default to="fail-output"/>

</switch>

</decision>

<kill name="fail">

<message>Shell action failed, error message[${wf:errorMessage(wf:lastErrorNode())}]</message>

</kill>

<kill name="fail-output">

<message>Incorrect output, expected [Hello Oozie] but was [${wf:actionData('shell-node')['my_output']}]</message>

</kill>

<end name="end"/>

</workflow-app>
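The workflow keeps `<capture-output/>` and a `check-output` decision node from the official template, although here the `ok` transition goes straight to `end` and p1.sh prints nothing. If you wanted the decision node to have something to check, the script would need to print `key=value` lines (Java properties format) on standard output. The sketch below shows one way to do that; the function names are hypothetical, and it assumes only standard output is captured, so diagnostics are sent to standard error.

```shell
#!/bin/bash
# Sketch of a script whose stdout <capture-output/> could parse:
# one key=value pair per line (Java properties format).

# Diagnostics go to stderr so they stay out of the captured output (assumption).
log() {
    echo "$*" >&2
}

emit_output() {
    log "p1.sh finished at $(date)"
    # The key matches the one read by wf:actionData('shell-node')['my_output'].
    echo "my_output=Hello Oozie"
}

emit_output
```

To actually use the captured value, the action's `ok` transition would have to point to `check-output` instead of `end`, as in the official example.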

1.6 Upload the job to HDFS

Create the directory

hdfs dfs -mkdir /user/hadoop/oozie-apps/

Upload

[hadoop@hadoop03 oozie-4.0.0-cdh5.3.6]$ /home/hadoop/cdh/hadoop-2.5.0-cdh5.3.6/bin/hdfs dfs -put oozie-apps/shell /user/hadoop/oozie-apps/

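1.7 Run the job

Start Oozie (oozied.sh lives in the Oozie installation directory)

/home/hadoop/cdh/oozie-4.0.0-cdh5.3.6/bin/oozied.sh start

Submit the job

bin/oozie job -oozie http://hadoop03:11000/oozie -config oozie-apps/shell/job.properties -run

On success, the command prints the job ID

To kill a job: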

bin/oozie job -oozie http://hadoop03:11000/oozie -kill 0000000-200216155457484-oozie-hado-W
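Because the job ID is needed for every follow-up command, it can help to capture it when submitting. The sketch below assumes the CLI prints the ID on a line of the form `job: <id>`; the helper name `extract_job_id` is hypothetical. The commented lines use the `OOZIE_URL` environment variable, which lets the CLI omit `-oozie`, and the `-info` option to query the job status.

```shell
#!/bin/bash
# Hypothetical helper: reads Oozie CLI output on stdin and prints the ID
# from a line of the form "job: <id>" (assumed output format).
extract_job_id() {
    sed -n 's/^job: *//p'
}

# Intended use on the Oozie host (not executed here):
#   export OOZIE_URL=http://hadoop03:11000/oozie
#   job_id=$(bin/oozie job -config oozie-apps/shell/job.properties -run | extract_job_id)
#   bin/oozie job -info "$job_id"
#   bin/oozie job -kill "$job_id"

# Demonstration with the sample ID from this article.
echo "job: 0000000-200216155457484-oozie-hado-W" | extract_job_id
```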

2. Chaining multiple jobs in one Oozie workflow

2.1 Add a second script

vi oozie-apps/shell/p2.sh

with the following content:

#!/bin/bash

date > /home/hadoop/cdh/p2.log

2.2 Edit the configuration files

2.2.1 job.properties

#HDFS address

nameNode=hdfs://hadoop01:8020

#ResourceManager address

jobTracker=hadoop03:8032

#Queue name

queueName=default

examplesRoot=oozie-apps

oozie.wf.application.path=${nameNode}/user/${user.name}/${examplesRoot}/shell

EXEC1=p1.sh

EXEC2=p2.sh

2.2.2 workflow.xml

<workflow-app

xmlns="uri:oozie:workflow:0.4" name="shell-wf">

<start to="p1-shell-node"/>

<action name="p1-shell-node">

<shell

xmlns="uri:oozie:shell-action:0.2">

<job-tracker>${jobTracker}</job-tracker>

<name-node>${nameNode}</name-node>

<configuration>

<property>

<name>mapred.job.queue.name</name>

<value>${queueName}</value>

</property>

</configuration>

<exec>${EXEC1}</exec>

<file>/user/hadoop/oozie-apps/shell/${EXEC1}#${EXEC1}</file>

<!-- <argument>my_output=Hello Oozie</argument>-->

<capture-output/>

</shell>

<ok to="p2-shell-node"/>

<error to="fail"/>

</action>

<action name="p2-shell-node">

<shell

xmlns="uri:oozie:shell-action:0.2">

<job-tracker>${jobTracker}</job-tracker>

<name-node>${nameNode}</name-node>

<configuration>

<property>

<name>mapred.job.queue.name</name>

<value>${queueName}</value>

</property>

</configuration>

<exec>${EXEC2}</exec>

<file>/user/hadoop/oozie-apps/shell/${EXEC2}#${EXEC2}</file>

<!-- <argument>my_output=Hello Oozie</argument>-->

<capture-output/>

</shell>

<ok to="end"/>

<error to="fail"/>

</action>

<decision name="check-output">

<switch>

<case to="end">

${wf:actionData('shell-node')['my_output'] eq 'Hello Oozie'}

</case>

<default to="fail-output"/>

</switch>

</decision>

<kill name="fail">

<message>Shell action failed, error message[${wf:errorMessage(wf:lastErrorNode())}]</message>

</kill>

<kill name="fail-output">

<message>Incorrect output, expected [Hello Oozie] but was [${wf:actionData('shell-node')['my_output']}]</message>

</kill>

<end name="end"/>

</workflow-app>

2.3 Upload the job to HDFS

First delete the directory uploaded earlier from HDFS

/home/hadoop/cdh/hadoop-2.5.0-cdh5.3.6/bin/hdfs dfs -rm -r -f /user/hadoop/oozie-apps/shell

Then upload it again

[hadoop@hadoop03 oozie-4.0.0-cdh5.3.6]$ /home/hadoop/cdh/hadoop-2.5.0-cdh5.3.6/bin/hdfs dfs -put oozie-apps/shell /user/hadoop/oozie-apps/
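Since every change to the workflow needs the same delete-then-upload pair, the two commands can be wrapped in a small function. This is only a sketch: `redeploy`, `run`, `HDFS_BIN` and `DRY_RUN` are hypothetical names, and the default binary path is the one used in this article. With `DRY_RUN=1` the commands are printed instead of executed.

```shell
#!/bin/bash
# Hypothetical helper: replaces an application directory on HDFS in one step.
# HDFS_BIN defaults to the path used in this article; DRY_RUN=1 only prints the commands.
HDFS_BIN="${HDFS_BIN:-/home/hadoop/cdh/hadoop-2.5.0-cdh5.3.6/bin/hdfs}"

run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

redeploy() {
    local local_dir="$1" hdfs_parent="$2"
    local name
    name="$(basename "$local_dir")"
    # Remove the old copy (no error if it is missing), then upload the new one.
    run "$HDFS_BIN" dfs -rm -r -f "$hdfs_parent/$name" &&
    run "$HDFS_BIN" dfs -put "$local_dir" "$hdfs_parent/"
}

# Dry run: print what would be executed for the shell application.
DRY_RUN=1 redeploy oozie-apps/shell /user/hadoop/oozie-apps
```

Dropping `DRY_RUN=1` runs the same two commands for real, so it is worth checking the printed target path first.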

2.4 Run the job

Start Oozie (if it is not already running)

/home/hadoop/cdh/oozie-4.0.0-cdh5.3.6/bin/oozied.sh start

Submit the job

bin/oozie job -oozie http://hadoop03:11000/oozie -config oozie-apps/shell/job.properties -run

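3. Scheduling a MapReduce job with Oozie

3.1 Copy the official example template

cp -r /home/hadoop/cdh/oozie-4.0.0-cdh5.3.6/examples/apps/map-reduce/ oozie-apps/

3.2 Test the MapReduce jar

Here I use the wordcount jar from the official Hadoop examples; you can upload your own jar instead.

vi wc.txt

Contents of wc.txt: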

Spark

Spark Hadoop

Spark Hadoop Hive

Spark Hadoop Hive Oozie

/home/hadoop/cdh/hadoop-2.5.0-cdh5.3.6/bin/hdfs dfs -mkdir /user/hadoop/mr_input

/home/hadoop/cdh/hadoop-2.5.0-cdh5.3.6/bin/hdfs dfs -mkdir /user/hadoop/mr_output

/home/hadoop/cdh/hadoop-2.5.0-cdh5.3.6/bin/hdfs dfs -put wc.txt /user/hadoop/mr_input

/home/hadoop/cdh/hadoop-2.5.0-cdh5.3.6/bin/yarn jar /home/hadoop/cdh/hadoop-2.5.0-cdh5.3.6/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.5.0-cdh5.3.6.jar wordcount /user/hadoop/mr_input /user/hadoop/mr_output/wcout.txt
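To judge whether the test run produced the right numbers, the expected counts for wc.txt can be computed locally with standard tools. The sketch below is an independent cross-check, not part of the MapReduce job; `local_wordcount` is a hypothetical name, and the assumption is that the job writes tab-separated `word<TAB>count` lines sorted by word into the `part-r-*` files under the output directory.

```shell
#!/bin/bash
# Local cross-check of the expected wordcount result; it does not touch HDFS.
local_wordcount() {
    # Put one word per line, count identical words, print "word<TAB>count".
    tr -s ' ' '\n' | LC_ALL=C sort | uniq -c | awk '{print $2 "\t" $1}'
}

# Same four lines as wc.txt above.
printf '%s\n' "Spark" "Spark Hadoop" "Spark Hadoop Hive" "Spark Hadoop Hive Oozie" | local_wordcount
```

For this input the counts are Hadoop 3, Hive 2, Oozie 1 and Spark 4, which can be compared against the files under /user/hadoop/mr_output/wcout.txt.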

Once the jar is confirmed to work, continue with the next step

3.3 Edit the configuration files

Only two files need to be changed

3.3.1 job.properties

nameNode=hdfs://hadoop01:8020

jobTracker=hadoop03:8032

queueName=default

examplesRoot=oozie-apps

#hdfs://hadoop01:8020/user/hadoop/oozie-apps/map-reduce/workflow.xml

oozie.wf.application.path=${nameNode}/user/${user.name}/${examplesRoot}/map-reduce/workflow.xml

outputDir=map-reduce

3.3.2 workflow.xml

<workflow-app

xmlns="uri:oozie:workflow:0.2" name="map-reduce-wf">

<start to="mr-node"/>

<action name="mr-node">

<map-reduce>

<job-tracker>${jobTracker}</job-tracker>

<name-node>${nameNode}</name-node>

<prepare>

<delete path="${nameNode}/user/hadoop/mr_output/"/>

</prepare>

<configuration>

<property>

<name>mapred.job.queue.name</name>

<value>${queueName}</value>

</property>

<!-- Use the new MapReduce API when scheduling the MR job -->

<property>

<name>mapred.mapper.new-api</name>

<value>true</value>

</property>

<property>

<name>mapred.reducer.new-api</name>

<value>true</value>

</property>

<!-- Output key class of the job -->

<property>

<name>mapreduce.job.output.key.class</name>

<value>org.apache.hadoop.io.Text</value>

</property>

<!-- Output value class of the job -->

<property>

<name>mapreduce.job.output.value.class</name>

<value>org.apache.hadoop.io.IntWritable</value>

</property>

<!-- Input path -->

<property>

<name>mapred.input.dir</name>

<value>/user/hadoop/mr_input/</value>

</property>

<!-- Output path -->

<property>

<name>mapred.output.dir</name>

<value>/user/hadoop/mr_output/</value>

</property>

<!-- Mapper class -->

<property>

<name>mapreduce.job.map.class</name>

<value>org.apache.hadoop.examples.WordCount$TokenizerMapper</value>

</property>

<!-- Reducer class -->

<property>

<name>mapreduce.job.reduce.class</name>

<value>org.apache.hadoop.examples.WordCount$IntSumReducer</value>

</property>

<property>

<name>mapred.map.tasks</name>

<value>1</value>

</property>

</configuration>

</map-reduce>

<ok to="end"/>

<error to="fail"/>

</action>

<kill name="fail">

<message>Map/Reduce failed, error message[${wf:errorMessage(wf:lastErrorNode())}]</message>

</kill>

<end name="end"/>

</workflow-app>

3.4 Copy the jar to be executed into the application's lib directory

cp -a /home/hadoop/cdh/hadoop-2.5.0-cdh5.3.6/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.5.0-cdh5.3.6.jar oozie-apps/map-reduce/lib

3.5 Upload to HDFS

/home/hadoop/cdh/hadoop-2.5.0-cdh5.3.6/bin/hdfs dfs -put oozie-apps/map-reduce/ /user/hadoop/oozie-apps

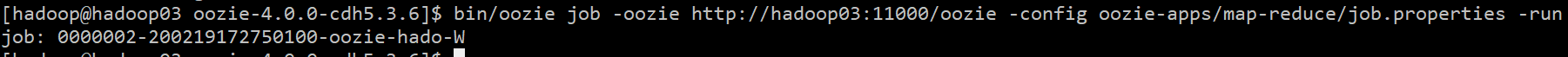

3.6 Run the job

[hadoop@hadoop03 oozie-4.0.0-cdh5.3.6]$ bin/oozie job -oozie http://hadoop03:11000/oozie -config oozie-apps/map-reduce/job.properties -run

提交成功!

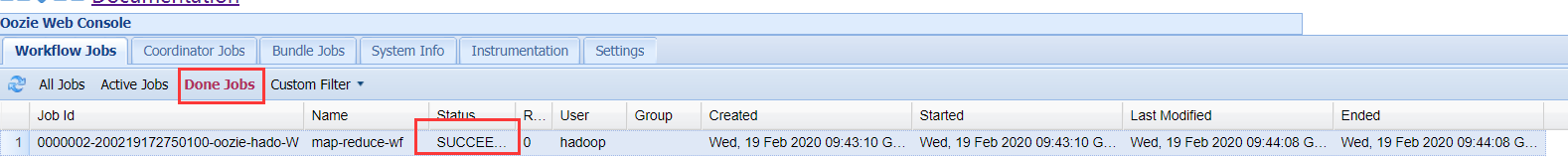

去oozie Web UI 里面看一眼

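4. Scheduled (coordinator) jobs with Oozie

4.1 Check the time zone

date -R

If the offset shown is not +0800 and the time does not match the current local time, set every node of the cluster to Shanghai time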

sudo cp /usr/share/zoneinfo/Asia/Shanghai /etc/localtime
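The check can also be scripted so that it is easy to repeat on each node. The sketch below compares the output of `date +%z` with `+0800`; the function name `has_cst_offset` is hypothetical.

```shell
#!/bin/bash
# Sketch: report whether this host's current UTC offset is +0800.
has_cst_offset() {
    [ "$(date +%z)" = "+0800" ]
}

if has_cst_offset; then
    echo "offset is +0800"
else
    echo "offset is $(date +%z), expected +0800"
fi
```

Running it before and after copying the zoneinfo file shows whether the change took effect on that host.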

4.2 Edit oozie-site.xml

[hadoop@hadoop03 oozie-4.0.0-cdh5.3.6]$ vi /home/hadoop/cdh/oozie-4.0.0-cdh5.3.6/conf/oozie-site.xml

Add the oozie.processing.timezone property

<property>

<name>oozie.processing.timezone</name>

<value>GMT+0800</value>

<description>

Oozie server timezone. Valid values are UTC and GMT(+/-)####, for example 'GMT+0530' would be India

timezone. All dates parsed and genered dates by Oozie Coordinator/Bundle will be done in the specified

timezone. The default value of 'UTC' should not be changed under normal circumtances. If for any reason

is changed, note that GMT(+/-)#### timezones do not observe DST changes.

</description>

</property>

4.3 Change the time zone setting in the web console's JavaScript

vi /home/hadoop/cdh/oozie-4.0.0-cdh5.3.6/oozie-server/webapps/oozie/oozie-console.js

Change getTimeZone as follows

function getTimeZone() {

Ext.state.Manager.setProvider(new Ext.state.CookieProvider());

return Ext.state.Manager.get("TimezoneId","GMT+0800");

}

4.4 Restart the Oozie service

Note: clear the browser cache and restart the browser afterwards!

[hadoop@hadoop03 oozie-4.0.0-cdh5.3.6]$ bin/oozied.sh stop

[hadoop@hadoop03 oozie-4.0.0-cdh5.3.6]$ bin/oozied.sh start

4.5 Copy the official example template

[hadoop@hadoop03 oozie-4.0.0-cdh5.3.6]$ cp -r examples/apps/cron/ oozie-apps/

4.6 Edit the configuration files

4.6.1 job.properties

nameNode=hdfs://hadoop01:8020

jobTracker=hadoop03:8032

queueName=default

examplesRoot=oozie-apps

oozie.coord.application.path=${nameNode}/user/${user.name}/${examplesRoot}/cron

#start: must be a time in the future, otherwise the job fails

start=2020-02-19T18:00+0800

end=2020-02-19T18:30+0800

workflowAppUri=${nameNode}/user/${user.name}/${examplesRoot}/cron

EXEC=p1.sh
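Because `start` has to lie in the future, the two timestamps need to be updated before each submission. The sketch below prints a `start`/`end` pair in the same `yyyy-MM-ddTHH:mm+0800` form; it assumes GNU `date`, and the function name `coord_window` and its two arguments (delay before the first run, length of the window, both in minutes) are hypothetical.

```shell
#!/bin/bash
# Sketch (GNU date assumed): print start/end values in the form used above.
# TZ=CST-8 is a POSIX time zone string meaning a fixed offset of UTC+8
# (the sign is inverted in this notation), so %z always yields +0800.
coord_window() {
    local delay_min="$1" length_min="$2"
    local fmt='+%Y-%m-%dT%H:%M%z'
    echo "start=$(TZ=CST-8 date -d "+${delay_min} minutes" "$fmt")"
    echo "end=$(TZ=CST-8 date -d "+$((delay_min + length_min)) minutes" "$fmt")"
}

# A window that opens in 10 minutes and lasts 30 minutes.
coord_window 10 30
```

The two printed lines can be pasted over the `start` and `end` entries in job.properties.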

4.6.2 coordinator.xml

Note that the minimum frequency is 5 minutes

<coordinator-app name="cron-coord" frequency="${coord:minutes(5)}" start="${start}" end="${end}" timezone="GMT+0800"

xmlns="uri:oozie:coordinator:0.2">

<action>

<workflow>

<app-path>${workflowAppUri}</app-path>

<configuration>

<property>

<name>jobTracker</name>

<value>${jobTracker}</value>

</property>

<property>

<name>nameNode</name>

<value>${nameNode}</value>

</property>

<property>

<name>queueName</name>

<value>${queueName}</value>

</property>

</configuration>

</workflow>

</action>

</coordinator-app>
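With a 5-minute frequency, the number of workflow runs follows from the length of the start/end window. The sketch below is plain arithmetic under the assumption that the first run happens at `start` and that `end` itself is exclusive; `expected_runs` is a hypothetical name.

```shell
#!/bin/bash
# Sketch: number of coordinator actions for a window of window_min minutes
# at one action every freq_min minutes, assuming the end time is exclusive.
expected_runs() {
    local window_min="$1" freq_min="$2"
    # Ceiling division: a partial last interval still contains one run.
    echo $(( (window_min + freq_min - 1) / freq_min ))
}

# 18:00 to 18:30 at 5-minute intervals, as configured above.
expected_runs 30 5
```

For the window in job.properties this gives 6 runs (18:00 through 18:25), which can be compared with the action list in the web UI.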

4.6.3 workflow.xml

<workflow-app xmlns="uri:oozie:workflow:0.4" name="shell-wf">

<start to="p1-shell-node"/>

<action name="p1-shell-node">

<shell xmlns="uri:oozie:shell-action:0.2">

<job-tracker>${jobTracker}</job-tracker>

<name-node>${nameNode}</name-node>

<configuration>

<property>

<name>mapred.job.queue.name</name>

<value>${queueName}</value>

</property>

</configuration>

<exec>${EXEC}</exec>

<!--<argument>my_output=Hello Oozie</argument>-->

<file>/user/hadoop/oozie-apps/cron/${EXEC}#${EXEC}</file>

<capture-output/>

</shell>

<ok to="end"/>

<error to="fail"/>

</action>

<decision name="check-output">

<switch>

<case to="end">

${wf:actionData('shell-node')['my_output'] eq 'Hello Oozie'}

</case>

<default to="fail-output"/>

</switch>

</decision>

<kill name="fail">

<message>Shell action failed, error message[${wf:errorMessage(wf:lastErrorNode())}]</message>

</kill>

<kill name="fail-output">

<message>Incorrect output, expected [Hello Oozie] but was [${wf:actionData('shell-node')['my_output']}]</message>

</kill>

<end name="end"/>

</workflow-app>

4.6.4 p1.sh

vi p1.sh

#!/bin/bash

date > /home/hadoop/cdh/time.log

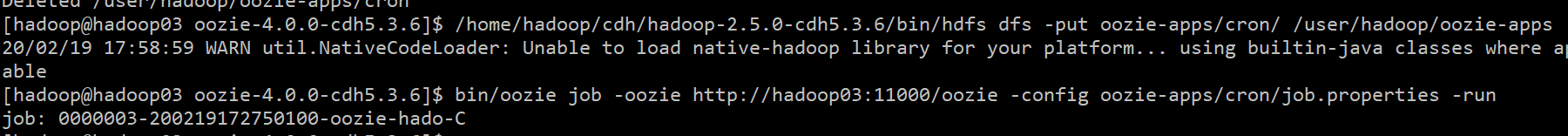

4.7 Upload to HDFS

/home/hadoop/cdh/hadoop-2.5.0-cdh5.3.6/bin/hdfs dfs -put oozie-apps/cron/ /user/hadoop/oozie-apps

4.8 Run the job

bin/oozie job -oozie http://hadoop03:11000/oozie -config oozie-apps/cron/job.properties -run

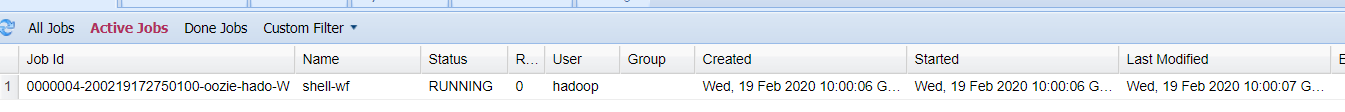

Submitted successfully

The job is Running

The run is reported as successful

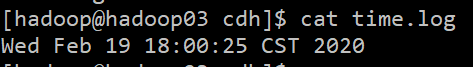

Check the result

[hadoop@hadoop03 cdh]$ cat time.log