DAG summary

First: a^b = c implies a = b^c (XOR is its own inverse).
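The identity needs only two properties: XOR is associative and $x \oplus x = 0$. The DP uses it backwards. If rows $1..i$ should XOR to $k$ and row $i$ contributes $G_{i,j}$, then rows $1..i-1$ must have XORed to $G_{i,j} \oplus k$. Here $dp[i][k] \ne 0$ means "reachable", as in the code:

$$
c = a \oplus b \;\Longrightarrow\; c \oplus b = a \oplus (b \oplus b) = a \oplus 0 = a
$$

$$
dp[i][k] \ne 0 \iff \exists\, j \in [1, m]:\; dp[i-1][\,G_{i,j} \oplus k\,] \ne 0
$$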

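The DAG has two state dimensions:

State 1: the XOR value accumulated so far.

State 2: the current layer, i.e. row i of the matrix (each row is one layer of the DAG).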

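This gives the table dp[500+5][(1<<10)]. dp[i][k] is nonzero when picking one element from each of rows 1..i can XOR to k, and it stores the column picked in row i.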
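The second dimension can be $2^{10}$ because the entries are assumed to satisfy $0 \le G_{i,j} < 2^{10}$. That bound is inferred from the `1<<10` in the code. XOR never sets a bit that is clear in both operands, so every prefix XOR also stays below $2^{10}$. With a 4-byte `int`, the table is small:

$$
G_{i,j} < 2^{10} \;\Longrightarrow\; G_{1,j_1} \oplus G_{2,j_2} \oplus \cdots \oplus G_{i,j_i} < 2^{10}
$$

$$
505 \times 1024 \times 4\ \text{bytes} = 2\,068\,480\ \text{bytes} \approx 2\ \text{MB}
$$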

Start from dp[0][0] (the empty prefix XORs to 0).

Run row by row until dp[n][v], where v is the final XOR value.

Note:

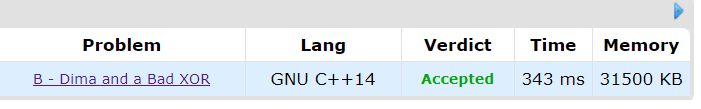

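One thing puzzles me, though. n·m·(1<<10) = 500·500·1024 ≈ 2.56e8. My understanding was that about 1e8 operations take 1 s, but the measured run time was 343 ms, which works out to roughly 7.5e8 inner-loop iterations per second. The only way I can read this is that about 1e9 operations take 1 s. Is that a reasonable interpretation?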

```cpp
#include <bits/stdc++.h>
using namespace std;

const int MAXV = 1 << 10;  // entries are < 1024, so every XOR fits in 10 bits
const int N = 500 + 5;     // n, m <= 500

// dp[i][k] (i >= 1): a column j of row i such that rows 1..i can XOR to k; 0 = unreachable.
// dp[0][0] = 1 is a sentinel meaning "the empty prefix XORs to 0".
int dp[N][MAXV], G[N][N];

int main()
{
    ios::sync_with_stdio(false);
    cin.tie(nullptr);

    int n, m;
    cin >> n >> m;
    for (int i = 1; i <= n; i++)
        for (int j = 1; j <= m; j++)
            cin >> G[i][j];

    dp[0][0] = 1;
    for (int i = 1; i <= n; i++)
        for (int j = 1; j <= m; j++)
            for (int k = 0; k < MAXV; k++)
                // a ^ b = c -> a = b ^ c: rows 1..i-1 must have XORed to G[i][j] ^ k
                if (dp[i - 1][G[i][j] ^ k])
                    dp[i][k] = j;

    stack<int> ans;
    for (int i = 1; i < MAXV; i++)  // look for any strictly positive final XOR
    {
        if (dp[n][i])
        {
            // Walk back from row n, undoing each row's contribution to recover the columns.
            for (int j = n, k = i; j >= 1; j--)
            {
                ans.push(dp[j][k]);
                k ^= G[j][dp[j][k]];
            }
            break;
        }
    }

    cout << (ans.empty() ? "NIE" : "TAK") << "\n";
    while (!ans.empty())  // row n was pushed first, so rows print in order 1..n
    {
        cout << ans.top() << " ";
        ans.pop();
    }
    cout << "\n";
    return 0;
}
```