paddle2.0高层API实现自定义数据集文本分类中的情感分析任务

本文包含了:

- 自定义文本分类数据集继承

- 文本分类数据处理

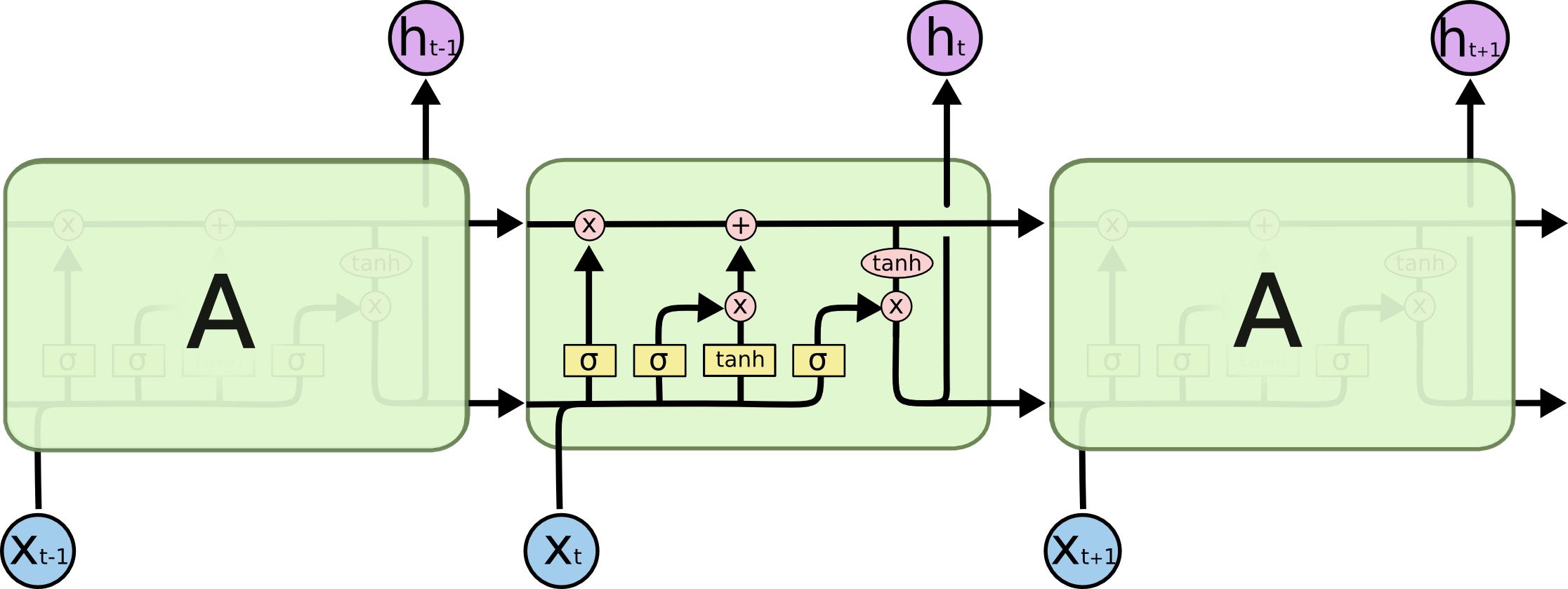

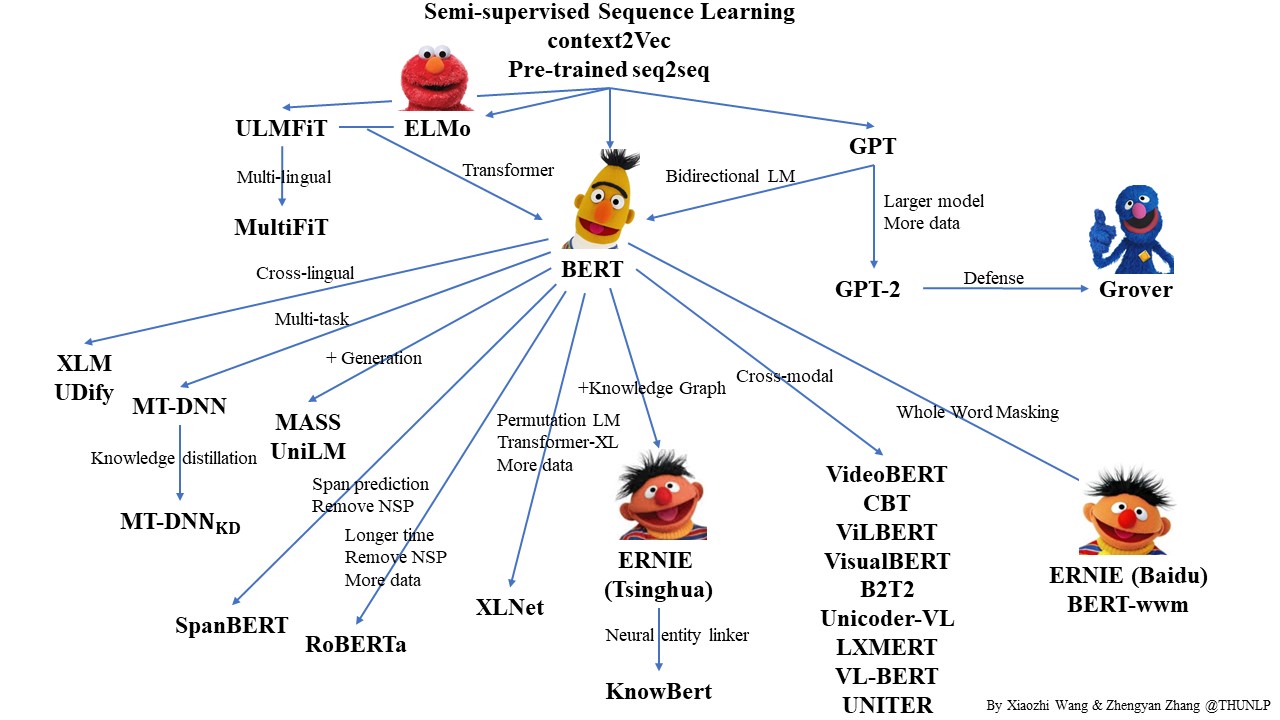

- 循环神经网络RNN, LSTM

- ·seq2vec·

- pretrained预训练模型

『深度学习7日打卡营·day4』

零基础解锁深度学习神器飞桨框架高层API,七天时间助你掌握CV、NLP领域最火模型及应用。

- 掌握深度学习常用模型基础知识

- 熟练掌握一种国产开源深度学习框架

- 具备独立完成相关深度学习任务的能力

- 能用所学为AI加一份年味

问题定义

情感分析是自然语言处理领域一个老生常谈的任务。句子情感分析目的是为了判别说者的情感倾向,比如在某些话题上给出的的态度明确的观点,或者反映的情绪状态等。情感分析有着广泛应用,比如电商评论分析、舆情分析等。

环境介绍

-

PaddlePaddle框架,AI Studio平台已经默认安装最新版2.0。

-

PaddleNLP,深度兼容框架2.0,是飞桨框架2.0在NLP领域的最佳实践。

这里使用的是beta版本,马上也会发布rc版哦。AI Studio平台后续会默认安装PaddleNLP,在此之前可使用如下命令安装。

# 下载paddlenlp

!pip install --upgrade paddlenlp==2.0.0b4 -i https://pypi.org/simple

Requirement already up-to-date: paddlenlp==2.0.0b4 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (2.0.0b4)

Requirement already satisfied, skipping upgrade: colorlog in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from paddlenlp==2.0.0b4) (4.1.0)

Requirement already satisfied, skipping upgrade: colorama in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from paddlenlp==2.0.0b4) (0.4.4)

Requirement already satisfied, skipping upgrade: jieba in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from paddlenlp==2.0.0b4) (0.42.1)

Requirement already satisfied, skipping upgrade: h5py in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from paddlenlp==2.0.0b4) (2.9.0)

Requirement already satisfied, skipping upgrade: visualdl in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from paddlenlp==2.0.0b4) (2.1.1)

Requirement already satisfied, skipping upgrade: seqeval in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from paddlenlp==2.0.0b4) (1.2.2)

Requirement already satisfied, skipping upgrade: numpy>=1.7 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from h5py->paddlenlp==2.0.0b4) (1.16.4)

Requirement already satisfied, skipping upgrade: six in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from h5py->paddlenlp==2.0.0b4) (1.15.0)

Requirement already satisfied, skipping upgrade: protobuf>=3.11.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from visualdl->paddlenlp==2.0.0b4) (3.14.0)

Requirement already satisfied, skipping upgrade: Flask-Babel>=1.0.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from visualdl->paddlenlp==2.0.0b4) (1.0.0)

Requirement already satisfied, skipping upgrade: Pillow>=7.0.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from visualdl->paddlenlp==2.0.0b4) (7.1.2)

Requirement already satisfied, skipping upgrade: flake8>=3.7.9 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from visualdl->paddlenlp==2.0.0b4) (3.8.2)

Requirement already satisfied, skipping upgrade: pre-commit in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from visualdl->paddlenlp==2.0.0b4) (1.21.0)

Requirement already satisfied, skipping upgrade: shellcheck-py in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from visualdl->paddlenlp==2.0.0b4) (0.7.1.1)

Requirement already satisfied, skipping upgrade: flask>=1.1.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from visualdl->paddlenlp==2.0.0b4) (1.1.1)

Requirement already satisfied, skipping upgrade: requests in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from visualdl->paddlenlp==2.0.0b4) (2.22.0)

Requirement already satisfied, skipping upgrade: bce-python-sdk in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from visualdl->paddlenlp==2.0.0b4) (0.8.53)

Requirement already satisfied, skipping upgrade: scikit-learn>=0.21.3 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from seqeval->paddlenlp==2.0.0b4) (0.22.1)

Requirement already satisfied, skipping upgrade: pytz in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from Flask-Babel>=1.0.0->visualdl->paddlenlp==2.0.0b4) (2019.3)

Requirement already satisfied, skipping upgrade: Babel>=2.3 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from Flask-Babel>=1.0.0->visualdl->paddlenlp==2.0.0b4) (2.8.0)

Requirement already satisfied, skipping upgrade: Jinja2>=2.5 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from Flask-Babel>=1.0.0->visualdl->paddlenlp==2.0.0b4) (2.10.1)

Requirement already satisfied, skipping upgrade: importlib-metadata; python_version < "3.8" in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from flake8>=3.7.9->visualdl->paddlenlp==2.0.0b4) (0.23)

Requirement already satisfied, skipping upgrade: mccabe<0.7.0,>=0.6.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from flake8>=3.7.9->visualdl->paddlenlp==2.0.0b4) (0.6.1)

Requirement already satisfied, skipping upgrade: pycodestyle<2.7.0,>=2.6.0a1 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from flake8>=3.7.9->visualdl->paddlenlp==2.0.0b4) (2.6.0)

Requirement already satisfied, skipping upgrade: pyflakes<2.3.0,>=2.2.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from flake8>=3.7.9->visualdl->paddlenlp==2.0.0b4) (2.2.0)

Requirement already satisfied, skipping upgrade: toml in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from pre-commit->visualdl->paddlenlp==2.0.0b4) (0.10.0)

Requirement already satisfied, skipping upgrade: pyyaml in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from pre-commit->visualdl->paddlenlp==2.0.0b4) (5.1.2)

Requirement already satisfied, skipping upgrade: nodeenv>=0.11.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from pre-commit->visualdl->paddlenlp==2.0.0b4) (1.3.4)

Requirement already satisfied, skipping upgrade: virtualenv>=15.2 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from pre-commit->visualdl->paddlenlp==2.0.0b4) (16.7.9)

Requirement already satisfied, skipping upgrade: aspy.yaml in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from pre-commit->visualdl->paddlenlp==2.0.0b4) (1.3.0)

Requirement already satisfied, skipping upgrade: identify>=1.0.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from pre-commit->visualdl->paddlenlp==2.0.0b4) (1.4.10)

Requirement already satisfied, skipping upgrade: cfgv>=2.0.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from pre-commit->visualdl->paddlenlp==2.0.0b4) (2.0.1)

Requirement already satisfied, skipping upgrade: Werkzeug>=0.15 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from flask>=1.1.1->visualdl->paddlenlp==2.0.0b4) (0.16.0)

Requirement already satisfied, skipping upgrade: itsdangerous>=0.24 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from flask>=1.1.1->visualdl->paddlenlp==2.0.0b4) (1.1.0)

Requirement already satisfied, skipping upgrade: click>=5.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from flask>=1.1.1->visualdl->paddlenlp==2.0.0b4) (7.0)

Requirement already satisfied, skipping upgrade: idna<2.9,>=2.5 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from requests->visualdl->paddlenlp==2.0.0b4) (2.8)

Requirement already satisfied, skipping upgrade: urllib3!=1.25.0,!=1.25.1,<1.26,>=1.21.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from requests->visualdl->paddlenlp==2.0.0b4) (1.25.6)

Requirement already satisfied, skipping upgrade: certifi>=2017.4.17 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from requests->visualdl->paddlenlp==2.0.0b4) (2019.9.11)

Requirement already satisfied, skipping upgrade: chardet<3.1.0,>=3.0.2 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from requests->visualdl->paddlenlp==2.0.0b4) (3.0.4)

Requirement already satisfied, skipping upgrade: future>=0.6.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from bce-python-sdk->visualdl->paddlenlp==2.0.0b4) (0.18.0)

Requirement already satisfied, skipping upgrade: pycryptodome>=3.8.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from bce-python-sdk->visualdl->paddlenlp==2.0.0b4) (3.9.9)

Requirement already satisfied, skipping upgrade: joblib>=0.11 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from scikit-learn>=0.21.3->seqeval->paddlenlp==2.0.0b4) (0.14.1)

Requirement already satisfied, skipping upgrade: scipy>=0.17.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from scikit-learn>=0.21.3->seqeval->paddlenlp==2.0.0b4) (1.3.0)

Requirement already satisfied, skipping upgrade: MarkupSafe>=0.23 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from Jinja2>=2.5->Flask-Babel>=1.0.0->visualdl->paddlenlp==2.0.0b4) (1.1.1)

Requirement already satisfied, skipping upgrade: zipp>=0.5 in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from importlib-metadata; python_version < "3.8"->flake8>=3.7.9->visualdl->paddlenlp==2.0.0b4) (0.6.0)

Requirement already satisfied, skipping upgrade: more-itertools in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from zipp>=0.5->importlib-metadata; python_version < "3.8"->flake8>=3.7.9->visualdl->paddlenlp==2.0.0b4) (7.2.0)

查看安装的版本

import paddle

import paddlenlp

print(paddle.__version__, paddlenlp.__version__)

paddle.set_device('gpu')

2.0.0 2.0.0b4

CUDAPlace(0)

PaddleNLP和Paddle框架是什么关系?

- Paddle框架是基础底座,提供深度学习任务全流程API。PaddleNLP基于Paddle框架开发,适用于NLP任务。

PaddleNLP中数据处理、数据集、组网单元等API未来会沉淀到框架paddle.text中。

- 代码中继承

class TSVDataset(paddle.io.Dataset)

使用飞桨完成深度学习任务的通用流程

-

数据集和数据处理

paddle.io.Dataset

paddle.io.DataLoader

paddlenlp.data -

组网和网络配置

paddle.nn.Embedding

paddlenlp.seq2vec

paddle.nn.Linear

paddle.tanhpaddle.nn.CrossEntropyLoss

paddle.metric.Accuracy

paddle.optimizermodel.prepare -

网络训练和评估

model.fit

model.evaluate -

预测

model.predict

注意:建议在GPU下运行。

import numpy as np

from functools import partial

import paddle.nn as nn

import paddle.nn.functional as F

import paddlenlp as ppnlp

from paddlenlp.data import Pad, Stack, Tuple

from paddlenlp.datasets import MapDatasetWrapper

from utils import load_vocab, convert_example

数据集和数据处理

自定义数据集

映射式(map-style)数据集需要继承paddle.io.Dataset

-

__getitem__: 根据给定索引获取数据集中指定样本,在 paddle.io.DataLoader 中需要使用此函数通过下标获取样本。 -

__len__: 返回数据集样本个数, paddle.io.BatchSampler 中需要样本个数生成下标序列。

class SelfDefinedDataset(paddle.io.Dataset):

def __init__(self, data):

super(SelfDefinedDataset, self).__init__()

self.data = data

def __getitem__(self, idx):

return self.data[idx]

def __len__(self):

return len(self.data)

def get_labels(self):

return ["0", "1"]

def txt_to_list(file_name):

res_list = []

for line in open(file_name):

res_list.append(line.strip().split('\t'))

return res_list

trainlst = txt_to_list('train.txt')

devlst = txt_to_list('dev.txt')

testlst = txt_to_list('test.txt')

# 通过get_datasets()函数,将list数据转换为dataset。

# get_datasets()可接收[list]参数,或[str]参数,根据自定义数据集的写法自由选择。

# train_ds, dev_ds, test_ds = ppnlp.datasets.ChnSentiCorp.get_datasets(['train', 'dev', 'test'])

train_ds, dev_ds, test_ds = SelfDefinedDataset.get_datasets([trainlst, devlst, testlst])

训练数据查看

label_list = train_ds.get_labels()

print(label_list)

for i in range(10):

print(train_ds[i])

['0', '1']

['赢在心理,输在出品!杨枝太酸,三文鱼熟了,酥皮焗杏汁杂果可以换个名(九唔搭八)', '0']

['服务一般,客人多,服务员少,但食品很不错', '1']

['東坡肉竟然有好多毛,問佢地點解,佢地仲話係咁架\ue107\ue107\ue107\ue107\ue107\ue107\ue107冇天理,第一次食東坡肉有毛,波羅包就幾好食', '0']

['父亲节去的,人很多,口味还可以上菜快!但是结账的时候,算错了没有打折,我也忘记拿清单了。说好打8折的,收银员没有打,人太多一时自己也没有想起。不知道收银员忘记,还是故意那钱露入自己钱包。。', '0']

['吃野味,吃个新鲜,你当然一定要来广州吃鹿肉啦*价格便宜,量好足,', '1']

['味道几好服务都五错推荐鹅肝乳鸽飞鱼', '1']

['作为老字号,水准保持算是不错,龟岗分店可能是位置问题,人不算多,基本不用等位,自从抢了券,去过好几次了,每次都可以打85以上的评分,算是可以了~粉丝煲每次必点,哈哈,鱼也不错,还会来帮衬的,楼下还可以免费停车!', '1']

['边到正宗啊?味味都咸死人啦,粤菜讲求鲜甜,五知点解感多人话好吃。', '0']

['环境卫生差,出品垃圾,冇下次,不知所为', '0']

['和苑真是精致粤菜第一家,服务菜品都一流', '1']

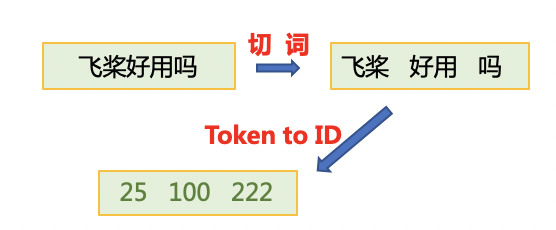

数据处理

为了将原始数据处理成模型可以读入的格式,本项目将对数据作以下处理:

- 首先使用

jieba切词,之后将jieba切完后的单词映射词表中单词id。

- 使用

paddle.io.DataLoader接口多线程异步加载数据。

其中用到了PaddleNLP中关于数据处理的API。PaddleNLP提供了许多关于NLP任务中构建有效的数据pipeline的常用API

| API | 简介 |

|---|---|

paddlenlp.data.Stack |

堆叠N个具有相同shape的输入数据来构建一个batch,它的输入必须具有相同的shape,输出便是这些输入的堆叠组成的batch数据。 |

paddlenlp.data.Pad |

堆叠N个输入数据来构建一个batch,每个输入数据将会被padding到N个输入数据中最大的长度 |

paddlenlp.data.Tuple |

将多个组batch的函数包装在一起 |

更多数据处理操作详见: https://github.com/PaddlePaddle/PaddleNLP/blob/develop/docs/data.md

# 下载词汇表文件word_dict.txt,用于构造词-id映射关系。

# !wget https://paddlenlp.bj.bcebos.com/data/senta_word_dict.txt

# 加载词表

vocab = load_vocab('./senta_word_dict.txt')

# 打印填补单词及对应向量

for k, v in vocab.items():

print(k, v)

break

[PAD] 0

构造dataloder

下面的create_data_loader函数用于创建运行和预测时所需要的DataLoader对象。

-

paddle.io.DataLoader返回一个迭代器,该迭代器根据batch_sampler指定的顺序迭代返回dataset数据。异步加载数据。 -

batch_sampler:DataLoader通过 batch_sampler 产生的mini-batch索引列表来 dataset 中索引样本并组成mini-batch -

collate_fn:指定如何将样本列表组合为mini-batch数据。传给它参数需要是一个callable对象,需要实现对组建的batch的处理逻辑,并返回每个batch的数据。在这里传入的是prepare_input函数,对产生的数据进行pad操作,并返回实际长度等。

# Reads data and generates mini-batches.

def create_dataloader(dataset,

trans_function=None,

mode='train',

batch_size=1,

pad_token_id=0,

batchify_fn=None):

if trans_function:

dataset = dataset.apply(trans_function, lazy=True)

# return_list 数据是否以list形式返回

# collate_fn 指定如何将样本列表组合为mini-batch数据。传给它参数需要是一个callable对象,需要实现对组建的batch的处理逻辑,并返回每个batch的数据。在这里传入的是`prepare_input`函数,对产生的数据进行pad操作,并返回实际长度等。

dataloader = paddle.io.DataLoader(

dataset,

return_list=True,

batch_size=batch_size,

collate_fn=batchify_fn)

return dataloader

# python中的偏函数partial,把一个函数的某些参数固定住(也就是设置默认值),返回一个新的函数,调用这个新函数会更简单。

trans_function = partial(

convert_example,

vocab=vocab,

unk_token_id=vocab.get('[UNK]', 1),

is_test=False)

# 将读入的数据batch化处理,便于模型batch化运算。

# batch中的每个句子将会padding到这个batch中的文本最大长度batch_max_seq_len。

# 当文本长度大于batch_max_seq时,将会截断到batch_max_seq_len;当文本长度小于batch_max_seq时,将会padding补齐到batch_max_seq_len.

batchify_fn = lambda samples, fn=Tuple(

Pad(axis=0, pad_val=vocab['[PAD]']), # input_ids

Stack(dtype="int64"), # seq len

Stack(dtype="int64") # label

): [data for data in fn(samples)]

train_loader = create_dataloader(

train_ds,

trans_function=trans_function,

batch_size=128,

mode='train',

batchify_fn=batchify_fn)

dev_loader = create_dataloader(

dev_ds,

trans_function=trans_function,

batch_size=128,

mode='validation',

batchify_fn=batchify_fn)

test_loader = create_dataloader(

test_ds,

trans_function=trans_function,

batch_size=128,

mode='test',

batchify_fn=batchify_fn)

模型搭建

使用LSTMencoder搭建一个BiLSTM模型用于进行句子建模,得到句子的向量表示。

然后接一个线性变换层,完成二分类任务。

paddle.nn.Embedding组建word-embedding层ppnlp.seq2vec.LSTMEncoder组建句子建模层paddle.nn.Linear构造二分类器

- 除LSTM外,

seq2vec还提供了许多语义表征方法,详细可参考:seq2vec介绍

LSTMEncoder参数:

input_size: int,必选。输入特征Tensor的最后一维维度。hidden_size: int,必选。lstm运算的hidden size。num_layers:int,可选,lstm层数,默认为1。direction: str,可选,lstm运算方向,可选forward, bidirectional。默认forward。dropout: float,可选,dropout概率值。如果设置非0,则将对每一层lstm输出做dropout操作。默认为0.0。pooling_type: str, 可选,默认为None。可选sum,max,mean。如pooling_type=None, 则将最后一层lstm的最后一个step hidden输出作为文本语义表征; 如pooling_type!=None, 则将最后一层lstm的所有step的hidden输出做指定pooling操作,其结果作为文本语义表征。

更多seq2vec信息参考:https://github.com/PaddlePaddle/models/blob/develop/PaddleNLP/paddlenlp/seq2vec/encoder.py

class LSTMModel(nn.Layer):

def __init__(self,

vocab_size,

num_classes,

emb_dim=128,

padding_idx=0,

lstm_hidden_size=198,

direction='forward',

lstm_layers=1,

dropout_rate=0,

pooling_type=None,

fc_hidden_size=96):

super().__init__()

# 首先将输入word id 查表后映射成 word embedding

self.embedder = nn.Embedding(

num_embeddings=vocab_size,

embedding_dim=emb_dim,

padding_idx=padding_idx)

# 将word embedding经过LSTMEncoder变换到文本语义表征空间中

self.lstm_encoder = ppnlp.seq2vec.LSTMEncoder(

emb_dim,

lstm_hidden_size,

num_layers=lstm_layers,

direction=direction,

dropout=dropout_rate,

pooling_type=pooling_type)

# LSTMEncoder.get_output_dim()方法可以获取经过encoder之后的文本表示hidden_size

self.fc = nn.Linear(self.lstm_encoder.get_output_dim(), fc_hidden_size)

# 最后的分类器

self.output_layer = nn.Linear(fc_hidden_size, num_classes)

def forward(self, text, seq_len):

# text shape: (batch_size, num_tokens)

# print('input :', text.shape)

# Shape: (batch_size, num_tokens, embedding_dim)

embedded_text = self.embedder(text)

# print('after word-embeding:', embedded_text.shape)

# Shape: (batch_size, num_tokens, num_directions*lstm_hidden_size)

# num_directions = 2 if direction is 'bidirectional' else 1

text_repr = self.lstm_encoder(embedded_text, sequence_length=seq_len)

# print('after lstm:', text_repr.shape)

# Shape: (batch_size, fc_hidden_size)

fc_out = paddle.tanh(self.fc(text_repr))

# print('after Linear classifier:', fc_out.shape)

# Shape: (batch_size, num_classes)

logits = self.output_layer(fc_out)

# print('output:', logits.shape)

# probs 分类概率值

probs = F.softmax(logits, axis=-1)

# print('output probability:', probs.shape)

return probs

model= LSTMModel(

len(vocab),

len(label_list),

direction='bidirectional',

padding_idx=vocab['[PAD]'])

model = paddle.Model(model)

模型配置和训练

模型配置

optimizer = paddle.optimizer.Adam(

parameters=model.parameters(), learning_rate=5e-5)

loss = paddle.nn.CrossEntropyLoss()

metric = paddle.metric.Accuracy()

model.prepare(optimizer, loss, metric)

# 设置visualdl路径

log_dir = './visualdl'

callbacks = paddle.callbacks.VisualDL(log_dir=log_dir)

模型训练

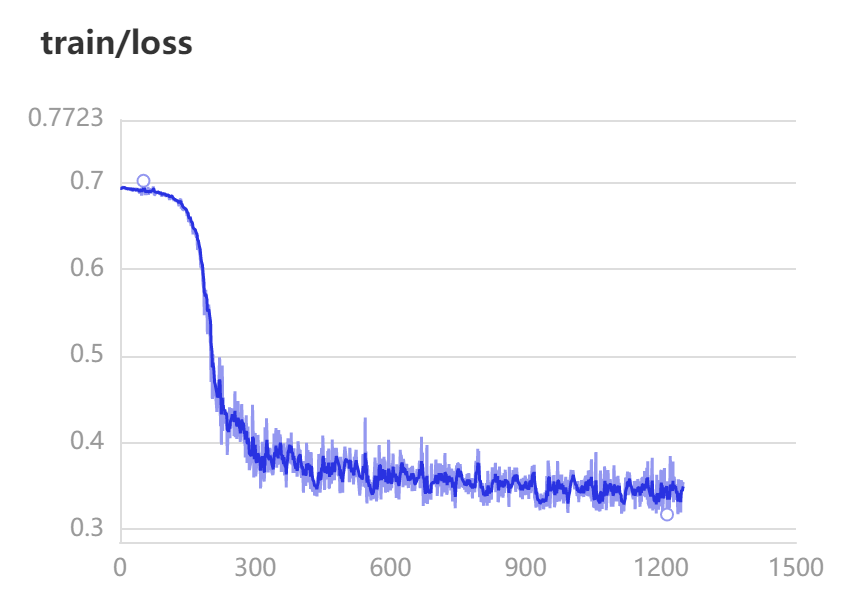

训练过程中会输出loss、acc等信息。

这里一共训练了10个epoch,在训练集上准确率约97%。

model.fit(train_loader,

dev_loader,

epochs=10,

save_dir='./checkpoints',

save_freq=5,

callbacks=callbacks)

The loss value printed in the log is the current step, and the metric is the average value of previous step.

Epoch 1/10

Building prefix dict from the default dictionary ...

Dumping model to file cache /tmp/jieba.cache

Loading model cost 0.867 seconds.

Prefix dict has been built successfully.

/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/fluid/layers/utils.py:77: DeprecationWarning: Using or importing the ABCs from 'collections' instead of from 'collections.abc' is deprecated, and in 3.8 it will stop working

return (isinstance(seq, collections.Sequence) and

step 10/125 - loss: 0.6945 - acc: 0.4734 - 234ms/step

step 20/125 - loss: 0.6929 - acc: 0.4914 - 163ms/step

step 30/125 - loss: 0.6919 - acc: 0.5068 - 138ms/step

step 40/125 - loss: 0.6904 - acc: 0.5109 - 125ms/step

step 50/125 - loss: 0.6878 - acc: 0.5145 - 119ms/step

step 60/125 - loss: 0.6949 - acc: 0.5137 - 115ms/step

step 70/125 - loss: 0.6923 - acc: 0.5143 - 113ms/step

step 80/125 - loss: 0.6877 - acc: 0.5125 - 111ms/step

step 90/125 - loss: 0.6898 - acc: 0.5122 - 109ms/step

step 100/125 - loss: 0.6846 - acc: 0.5141 - 107ms/step

step 110/125 - loss: 0.6800 - acc: 0.5156 - 105ms/step

step 120/125 - loss: 0.6790 - acc: 0.5281 - 104ms/step

step 125/125 - loss: 0.6796 - acc: 0.5379 - 102ms/step

save checkpoint at /home/aistudio/checkpoints/0

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.6775 - acc: 0.7937 - 96ms/step

step 20/84 - loss: 0.6774 - acc: 0.7941 - 84ms/step

step 30/84 - loss: 0.6776 - acc: 0.7964 - 78ms/step

step 40/84 - loss: 0.6765 - acc: 0.7986 - 74ms/step

step 50/84 - loss: 0.6798 - acc: 0.7972 - 71ms/step

step 60/84 - loss: 0.6748 - acc: 0.7991 - 69ms/step

step 70/84 - loss: 0.6782 - acc: 0.8012 - 68ms/step

step 80/84 - loss: 0.6776 - acc: 0.8011 - 66ms/step

step 84/84 - loss: 0.6750 - acc: 0.8011 - 63ms/step

Eval samples: 10646

Epoch 2/10

step 10/125 - loss: 0.6812 - acc: 0.7531 - 125ms/step

step 20/125 - loss: 0.6665 - acc: 0.7902 - 110ms/step

step 30/125 - loss: 0.6578 - acc: 0.7987 - 108ms/step

step 40/125 - loss: 0.6452 - acc: 0.7977 - 104ms/step

step 50/125 - loss: 0.6238 - acc: 0.8003 - 103ms/step

step 60/125 - loss: 0.5803 - acc: 0.8124 - 102ms/step

step 70/125 - loss: 0.4889 - acc: 0.8177 - 101ms/step

step 80/125 - loss: 0.4504 - acc: 0.8218 - 100ms/step

step 90/125 - loss: 0.4354 - acc: 0.8266 - 99ms/step

step 100/125 - loss: 0.3977 - acc: 0.8316 - 98ms/step

step 110/125 - loss: 0.4341 - acc: 0.8364 - 97ms/step

step 120/125 - loss: 0.4397 - acc: 0.8417 - 97ms/step

step 125/125 - loss: 0.4236 - acc: 0.8430 - 95ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.4360 - acc: 0.8906 - 87ms/step

step 20/84 - loss: 0.4167 - acc: 0.8898 - 71ms/step

step 30/84 - loss: 0.4203 - acc: 0.8971 - 67ms/step

step 40/84 - loss: 0.3835 - acc: 0.8986 - 64ms/step

step 50/84 - loss: 0.3996 - acc: 0.8978 - 64ms/step

step 60/84 - loss: 0.4477 - acc: 0.8962 - 62ms/step

step 70/84 - loss: 0.4174 - acc: 0.8952 - 63ms/step

step 80/84 - loss: 0.4231 - acc: 0.8960 - 65ms/step

step 84/84 - loss: 0.4522 - acc: 0.8966 - 62ms/step

Eval samples: 10646

Epoch 3/10

step 10/125 - loss: 0.4684 - acc: 0.8922 - 107ms/step

step 20/125 - loss: 0.4446 - acc: 0.8938 - 96ms/step

step 30/125 - loss: 0.4317 - acc: 0.9008 - 100ms/step

step 40/125 - loss: 0.4128 - acc: 0.9084 - 101ms/step

step 50/125 - loss: 0.4111 - acc: 0.9125 - 98ms/step

step 60/125 - loss: 0.3678 - acc: 0.9182 - 96ms/step

step 70/125 - loss: 0.3552 - acc: 0.9212 - 95ms/step

step 80/125 - loss: 0.3769 - acc: 0.9218 - 94ms/step

step 90/125 - loss: 0.3651 - acc: 0.9234 - 94ms/step

step 100/125 - loss: 0.3755 - acc: 0.9231 - 93ms/step

step 110/125 - loss: 0.3678 - acc: 0.9234 - 93ms/step

step 120/125 - loss: 0.3909 - acc: 0.9249 - 92ms/step

step 125/125 - loss: 0.3978 - acc: 0.9244 - 90ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.3998 - acc: 0.9320 - 64ms/step

step 20/84 - loss: 0.3875 - acc: 0.9309 - 65ms/step

step 30/84 - loss: 0.3662 - acc: 0.9305 - 67ms/step

step 40/84 - loss: 0.3615 - acc: 0.9322 - 67ms/step

step 50/84 - loss: 0.3896 - acc: 0.9309 - 66ms/step

step 60/84 - loss: 0.3854 - acc: 0.9326 - 65ms/step

step 70/84 - loss: 0.3862 - acc: 0.9317 - 64ms/step

step 80/84 - loss: 0.3754 - acc: 0.9324 - 62ms/step

step 84/84 - loss: 0.4394 - acc: 0.9332 - 60ms/step

Eval samples: 10646

Epoch 4/10

step 10/125 - loss: 0.4256 - acc: 0.9219 - 129ms/step

step 20/125 - loss: 0.4016 - acc: 0.9305 - 108ms/step

step 30/125 - loss: 0.3773 - acc: 0.9315 - 101ms/step

step 40/125 - loss: 0.3954 - acc: 0.9346 - 96ms/step

step 50/125 - loss: 0.3782 - acc: 0.9353 - 95ms/step

step 60/125 - loss: 0.3464 - acc: 0.9398 - 93ms/step

step 70/125 - loss: 0.3456 - acc: 0.9427 - 93ms/step

step 80/125 - loss: 0.3636 - acc: 0.9429 - 93ms/step

step 90/125 - loss: 0.3477 - acc: 0.9435 - 93ms/step

step 100/125 - loss: 0.3602 - acc: 0.9432 - 92ms/step

step 110/125 - loss: 0.3622 - acc: 0.9431 - 92ms/step

step 120/125 - loss: 0.3756 - acc: 0.9439 - 92ms/step

step 125/125 - loss: 0.3703 - acc: 0.9433 - 90ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.3898 - acc: 0.9391 - 84ms/step

step 20/84 - loss: 0.3813 - acc: 0.9410 - 73ms/step

step 30/84 - loss: 0.3603 - acc: 0.9414 - 73ms/step

step 40/84 - loss: 0.3644 - acc: 0.9422 - 71ms/step

step 50/84 - loss: 0.3744 - acc: 0.9417 - 70ms/step

step 60/84 - loss: 0.3567 - acc: 0.9437 - 70ms/step

step 70/84 - loss: 0.3745 - acc: 0.9420 - 70ms/step

step 80/84 - loss: 0.3677 - acc: 0.9426 - 68ms/step

step 84/84 - loss: 0.4366 - acc: 0.9432 - 66ms/step

Eval samples: 10646

Epoch 5/10

step 10/125 - loss: 0.3941 - acc: 0.9328 - 114ms/step

step 20/125 - loss: 0.3838 - acc: 0.9387 - 107ms/step

step 30/125 - loss: 0.3766 - acc: 0.9414 - 102ms/step

step 40/125 - loss: 0.3818 - acc: 0.9439 - 98ms/step

step 50/125 - loss: 0.3641 - acc: 0.9450 - 97ms/step

step 60/125 - loss: 0.3353 - acc: 0.9488 - 96ms/step

step 70/125 - loss: 0.3363 - acc: 0.9510 - 95ms/step

step 80/125 - loss: 0.3508 - acc: 0.9511 - 95ms/step

step 90/125 - loss: 0.3450 - acc: 0.9513 - 95ms/step

step 100/125 - loss: 0.3450 - acc: 0.9514 - 95ms/step

step 110/125 - loss: 0.3547 - acc: 0.9513 - 94ms/step

step 120/125 - loss: 0.3697 - acc: 0.9520 - 93ms/step

step 125/125 - loss: 0.3807 - acc: 0.9512 - 92ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.3845 - acc: 0.9414 - 86ms/step

step 20/84 - loss: 0.3750 - acc: 0.9465 - 71ms/step

step 30/84 - loss: 0.3583 - acc: 0.9458 - 67ms/step

step 40/84 - loss: 0.3670 - acc: 0.9463 - 65ms/step

step 50/84 - loss: 0.3688 - acc: 0.9453 - 64ms/step

step 60/84 - loss: 0.3614 - acc: 0.9467 - 63ms/step

step 70/84 - loss: 0.3717 - acc: 0.9452 - 64ms/step

step 80/84 - loss: 0.3554 - acc: 0.9458 - 65ms/step

step 84/84 - loss: 0.4361 - acc: 0.9465 - 64ms/step

Eval samples: 10646

Epoch 6/10

step 10/125 - loss: 0.3749 - acc: 0.9477 - 75ms/step

step 20/125 - loss: 0.3694 - acc: 0.9504 - 75ms/step

step 30/125 - loss: 0.3521 - acc: 0.9539 - 73ms/step

step 40/125 - loss: 0.3791 - acc: 0.9541 - 78ms/step

step 50/125 - loss: 0.3515 - acc: 0.9544 - 81ms/step

step 60/125 - loss: 0.3352 - acc: 0.9574 - 81ms/step

step 70/125 - loss: 0.3314 - acc: 0.9590 - 81ms/step

step 80/125 - loss: 0.3496 - acc: 0.9584 - 82ms/step

step 90/125 - loss: 0.3433 - acc: 0.9582 - 82ms/step

step 100/125 - loss: 0.3400 - acc: 0.9580 - 83ms/step

step 110/125 - loss: 0.3451 - acc: 0.9580 - 83ms/step

step 120/125 - loss: 0.3599 - acc: 0.9589 - 83ms/step

step 125/125 - loss: 0.3598 - acc: 0.9585 - 81ms/step

save checkpoint at /home/aistudio/checkpoints/5

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.3754 - acc: 0.9484 - 85ms/step

step 20/84 - loss: 0.3714 - acc: 0.9535 - 69ms/step

step 30/84 - loss: 0.3558 - acc: 0.9523 - 65ms/step

step 40/84 - loss: 0.3603 - acc: 0.9533 - 62ms/step

step 50/84 - loss: 0.3719 - acc: 0.9514 - 61ms/step

step 60/84 - loss: 0.3442 - acc: 0.9525 - 60ms/step

step 70/84 - loss: 0.3654 - acc: 0.9513 - 59ms/step

step 80/84 - loss: 0.3602 - acc: 0.9514 - 58ms/step

step 84/84 - loss: 0.4414 - acc: 0.9520 - 56ms/step

Eval samples: 10646

Epoch 7/10

step 10/125 - loss: 0.3673 - acc: 0.9523 - 104ms/step

step 20/125 - loss: 0.3638 - acc: 0.9566 - 93ms/step

step 30/125 - loss: 0.3488 - acc: 0.9599 - 90ms/step

step 40/125 - loss: 0.3790 - acc: 0.9600 - 88ms/step

step 50/125 - loss: 0.3557 - acc: 0.9583 - 88ms/step

step 60/125 - loss: 0.3309 - acc: 0.9604 - 88ms/step

step 70/125 - loss: 0.3366 - acc: 0.9621 - 88ms/step

step 80/125 - loss: 0.3372 - acc: 0.9618 - 87ms/step

step 90/125 - loss: 0.3326 - acc: 0.9615 - 87ms/step

step 100/125 - loss: 0.3365 - acc: 0.9612 - 88ms/step

step 110/125 - loss: 0.3404 - acc: 0.9615 - 88ms/step

step 120/125 - loss: 0.3582 - acc: 0.9626 - 87ms/step

step 125/125 - loss: 0.3549 - acc: 0.9621 - 86ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.3800 - acc: 0.9531 - 85ms/step

step 20/84 - loss: 0.3618 - acc: 0.9559 - 69ms/step

step 30/84 - loss: 0.3520 - acc: 0.9555 - 66ms/step

step 40/84 - loss: 0.3568 - acc: 0.9566 - 63ms/step

step 50/84 - loss: 0.3752 - acc: 0.9552 - 62ms/step

step 60/84 - loss: 0.3430 - acc: 0.9559 - 62ms/step

step 70/84 - loss: 0.3786 - acc: 0.9550 - 61ms/step

step 80/84 - loss: 0.3554 - acc: 0.9557 - 59ms/step

step 84/84 - loss: 0.3533 - acc: 0.9563 - 57ms/step

Eval samples: 10646

Epoch 8/10

step 10/125 - loss: 0.3558 - acc: 0.9617 - 109ms/step

step 20/125 - loss: 0.3595 - acc: 0.9641 - 97ms/step

step 30/125 - loss: 0.3484 - acc: 0.9654 - 93ms/step

step 40/125 - loss: 0.3728 - acc: 0.9639 - 90ms/step

step 50/125 - loss: 0.3405 - acc: 0.9639 - 89ms/step

step 60/125 - loss: 0.3275 - acc: 0.9660 - 88ms/step

step 70/125 - loss: 0.3262 - acc: 0.9673 - 87ms/step

step 80/125 - loss: 0.3359 - acc: 0.9668 - 87ms/step

step 90/125 - loss: 0.3285 - acc: 0.9667 - 87ms/step

step 100/125 - loss: 0.3344 - acc: 0.9663 - 87ms/step

step 110/125 - loss: 0.3351 - acc: 0.9666 - 87ms/step

step 120/125 - loss: 0.3564 - acc: 0.9676 - 87ms/step

step 125/125 - loss: 0.3524 - acc: 0.9672 - 86ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.3692 - acc: 0.9602 - 73ms/step

step 20/84 - loss: 0.3669 - acc: 0.9582 - 72ms/step

step 30/84 - loss: 0.3471 - acc: 0.9586 - 70ms/step

step 40/84 - loss: 0.3467 - acc: 0.9586 - 69ms/step

step 50/84 - loss: 0.3713 - acc: 0.9573 - 69ms/step

step 60/84 - loss: 0.3442 - acc: 0.9578 - 69ms/step

step 70/84 - loss: 0.3561 - acc: 0.9576 - 69ms/step

step 80/84 - loss: 0.3410 - acc: 0.9579 - 69ms/step

step 84/84 - loss: 0.4010 - acc: 0.9585 - 66ms/step

Eval samples: 10646

Epoch 9/10

step 10/125 - loss: 0.3517 - acc: 0.9602 - 106ms/step

step 20/125 - loss: 0.3577 - acc: 0.9648 - 95ms/step

step 30/125 - loss: 0.3434 - acc: 0.9669 - 92ms/step

step 40/125 - loss: 0.3667 - acc: 0.9660 - 89ms/step

step 50/125 - loss: 0.3391 - acc: 0.9661 - 89ms/step

step 60/125 - loss: 0.3251 - acc: 0.9680 - 88ms/step

step 70/125 - loss: 0.3235 - acc: 0.9695 - 87ms/step

step 80/125 - loss: 0.3325 - acc: 0.9692 - 87ms/step

step 90/125 - loss: 0.3263 - acc: 0.9694 - 90ms/step

step 100/125 - loss: 0.3323 - acc: 0.9692 - 92ms/step

step 110/125 - loss: 0.3316 - acc: 0.9694 - 93ms/step

step 120/125 - loss: 0.3547 - acc: 0.9702 - 93ms/step

step 125/125 - loss: 0.3506 - acc: 0.9699 - 91ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.3649 - acc: 0.9609 - 84ms/step

step 20/84 - loss: 0.3670 - acc: 0.9586 - 69ms/step

step 30/84 - loss: 0.3463 - acc: 0.9586 - 64ms/step

step 40/84 - loss: 0.3450 - acc: 0.9594 - 62ms/step

step 50/84 - loss: 0.3687 - acc: 0.9583 - 61ms/step

step 60/84 - loss: 0.3484 - acc: 0.9587 - 60ms/step

step 70/84 - loss: 0.3511 - acc: 0.9587 - 59ms/step

step 80/84 - loss: 0.3392 - acc: 0.9592 - 58ms/step

step 84/84 - loss: 0.4006 - acc: 0.9597 - 56ms/step

Eval samples: 10646

Epoch 10/10

step 10/125 - loss: 0.3449 - acc: 0.9625 - 89ms/step

step 20/125 - loss: 0.3561 - acc: 0.9676 - 105ms/step

step 30/125 - loss: 0.3506 - acc: 0.9693 - 108ms/step

step 40/125 - loss: 0.3665 - acc: 0.9676 - 109ms/step

step 50/125 - loss: 0.3380 - acc: 0.9675 - 107ms/step

step 60/125 - loss: 0.3235 - acc: 0.9693 - 106ms/step

step 70/125 - loss: 0.3228 - acc: 0.9708 - 105ms/step

step 80/125 - loss: 0.3274 - acc: 0.9706 - 106ms/step

step 90/125 - loss: 0.3256 - acc: 0.9705 - 105ms/step

step 100/125 - loss: 0.3307 - acc: 0.9702 - 103ms/step

step 110/125 - loss: 0.3350 - acc: 0.9700 - 102ms/step

step 120/125 - loss: 0.3551 - acc: 0.9709 - 100ms/step

step 125/125 - loss: 0.3524 - acc: 0.9706 - 98ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.3675 - acc: 0.9602 - 89ms/step

step 20/84 - loss: 0.3638 - acc: 0.9602 - 71ms/step

step 30/84 - loss: 0.3522 - acc: 0.9599 - 66ms/step

step 40/84 - loss: 0.3541 - acc: 0.9600 - 63ms/step

step 50/84 - loss: 0.3643 - acc: 0.9587 - 62ms/step

step 60/84 - loss: 0.3276 - acc: 0.9600 - 60ms/step

step 70/84 - loss: 0.3688 - acc: 0.9596 - 60ms/step

step 80/84 - loss: 0.3467 - acc: 0.9597 - 58ms/step

step 84/84 - loss: 0.3462 - acc: 0.9603 - 56ms/step

Eval samples: 10646

save checkpoint at /home/aistudio/checkpoints/final

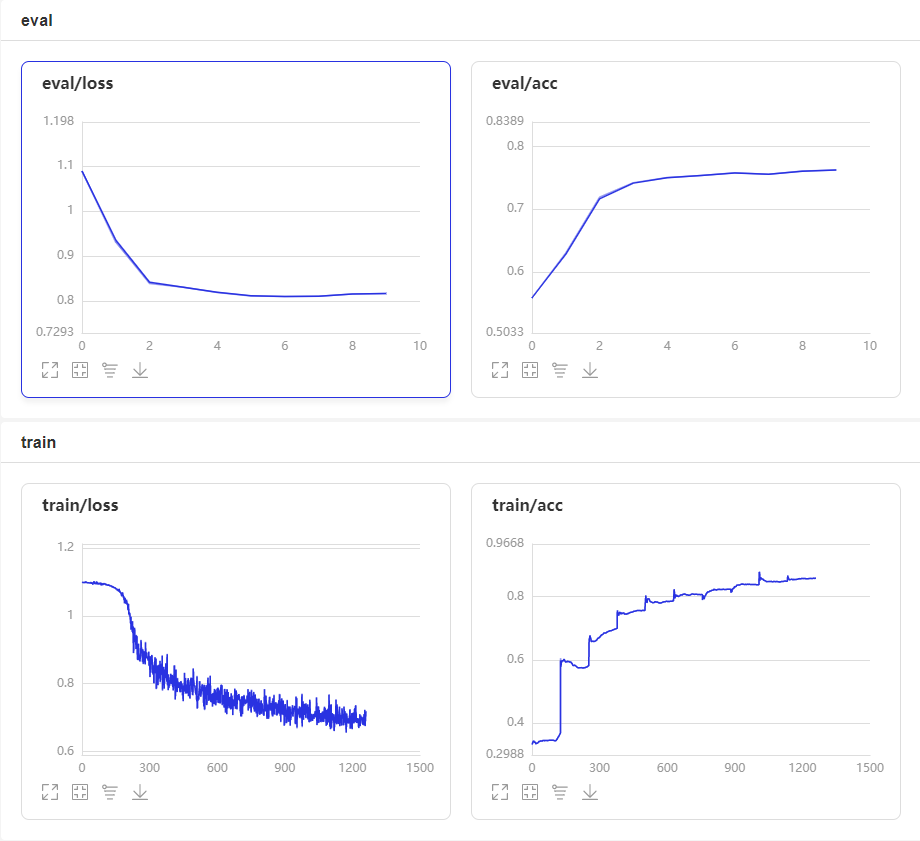

启动VisualDL查看训练过程可视化结果

启动步骤:

- 1、切换到本界面左侧「可视化」

- 2、日志文件路径选择 ‘visualdl’

- 3、点击「启动VisualDL」后点击「打开VisualDL」,即可查看可视化结果:

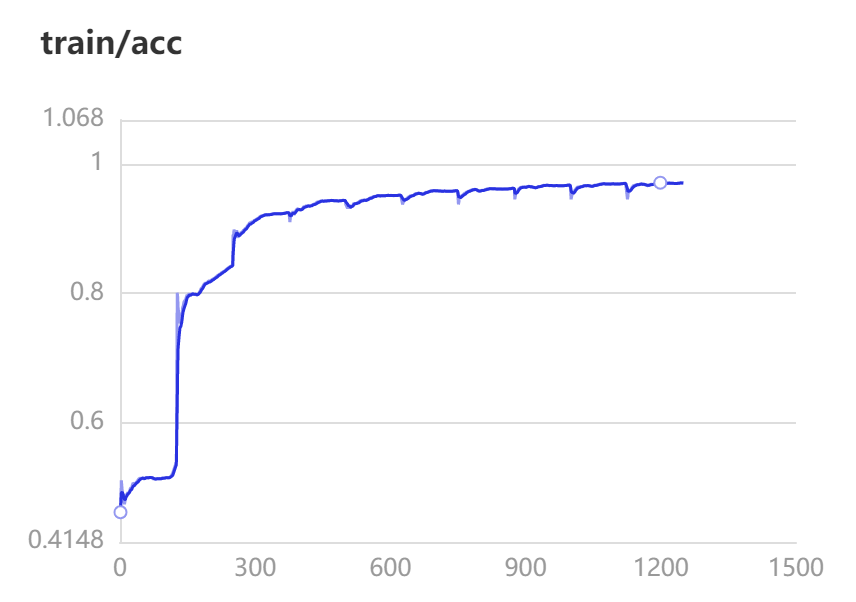

Accuracy和Loss的实时变化趋势如下:

评估

最终得到的评估准确率为96%

results = model.evaluate(dev_loader)

print("Finally test acc: %.5f" % results['acc'])

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.3675 - acc: 0.9602 - 85ms/step

step 20/84 - loss: 0.3638 - acc: 0.9602 - 70ms/step

step 30/84 - loss: 0.3522 - acc: 0.9599 - 66ms/step

step 40/84 - loss: 0.3541 - acc: 0.9600 - 64ms/step

step 50/84 - loss: 0.3643 - acc: 0.9587 - 63ms/step

step 60/84 - loss: 0.3276 - acc: 0.9600 - 62ms/step

step 70/84 - loss: 0.3688 - acc: 0.9596 - 61ms/step

step 80/84 - loss: 0.3467 - acc: 0.9597 - 61ms/step

step 84/84 - loss: 0.3462 - acc: 0.9603 - 59ms/step

Eval samples: 10646

Finally test acc: 0.96027

#保存模型

model.save('./finetuning/lstm/model', training=True)

预测

model= LSTMModel(

len(vocab),

len(label_list),

direction='bidirectional',

padding_idx=vocab['[PAD]'])

model = paddle.Model(model)

model.load('./finetuning/model')

model.prepare()

label_map = {

0: 'negative', 1: 'positive'}

results = model.predict(test_loader, batch_size=128)[0]

predictions = []

for batch_probs in results:

# 映射分类label

idx = np.argmax(batch_probs, axis=-1)

idx = idx.tolist()

labels = [label_map[i] for i in idx]

predictions.extend(labels)

# 看看预测数据前5个样例分类结果

for idx, data in enumerate(test_ds.data[:10]):

print('Data: {} \t Label: {}'.format(data[0], predictions[idx]))

Predict begin...

step 42/42 [==============================] - ETA: 3s - 97ms/ste - ETA: 3s - 104ms/st - ETA: 3s - 108ms/st - ETA: 3s - 97ms/step - ETA: 2s - 89ms/ste - ETA: 2s - 85ms/ste - ETA: 2s - 81ms/ste - ETA: 2s - 78ms/ste - ETA: 1s - 76ms/ste - ETA: 1s - 74ms/ste - ETA: 1s - 73ms/ste - ETA: 1s - 72ms/ste - ETA: 1s - 71ms/ste - ETA: 0s - 70ms/ste - ETA: 0s - 70ms/ste - ETA: 0s - 69ms/ste - ETA: 0s - 69ms/ste - ETA: 0s - 68ms/ste - ETA: 0s - 67ms/ste - ETA: 0s - 64ms/ste - 62ms/step

Predict samples: 5353

Data: 楼面经理服务态度极差,等位和埋单都差,楼面小妹还挺好 Label: negative

Data: 欺负北方人没吃过鲍鱼是怎么着?简直敷衍到可笑的程度,团购连青菜都是两人份?!难吃到死,菜色还特别可笑,什么时候粤菜的小菜改成拍黄瓜了?!把团购客人当傻子,可这满大厅的傻子谁还会再来?! Label: negative

Data: 如果大家有时间而且不怕麻烦的话可以去这里试试,点一个饭等左2个钟,没错!是两个钟!期间催了n遍都说马上到,结果?呵呵。乳鸽的味道,太咸,可能不新鲜吧……要用重口味盖住异味。上菜超级慢!中途还搞什么表演,麻烦有人手的话就上菜啊,表什么演?!?!要大家饿着看表演吗?最后结账还算错单,我真心服了……有一种店叫不会有下次,大概就是指它吧 Label: negative

Data: 偌大的一个大厅就一个人点菜,点菜速度超级慢,菜牌上多个菜停售,连续点了两个没标停售的菜也告知没有,粥上来是凉的,榴莲酥火大了,格格肉超级油腻而且咸?????? Label: negative

Data: 泥撕雞超級好吃!!!吃了一個再叫一個還想打包的節奏! Label: positive

Data: 作为地道的广州人,从小就跟着家人在西关品尝各式美食,今日带着家中长辈来这个老字号泮溪酒家真实失望透顶,出品差、服务差、洗手间邋遢弥漫着浓郁尿骚味、丢广州人的脸、丢广州老字号的脸。 Label: negative

Data: 辣味道很赞哦!猪肚鸡一直是我们的最爱,每次来都必点,服务很给力,环境很好,值得分享哦!西洋菜 Label: positive

Data: 第一次吃到這麼脏的火鍋:吃着吃著吃出一條尾指粗的黑毛毛蟲——惡心!脏!!!第一次吃到這麼無誠信的火鍋服務:我們呼喚人員時,某女部長立即使服務員迅速取走蟲所在的碗,任我們多次叫「放下」論理,她們也置若罔聞轉身將蟲毁屍滅跡,還嘻皮笑臉辯稱只是把碗換走,態度行為惡劣——奸詐!毫無誠信!!爛!!!當然還有剛坐下時的情形:第一次吃到這樣的火鍋:所有肉食熟食都上桌了,鍋底遲遲沒上,足足等了半小時才姍姍來遲;---差!!第一次吃到這樣的火鍋:1元雞鍋、1碟6塊小牛肉、1碟小腐皮、1碟5塊裝的普通肥牛、1碟數片的細碎牛肚結帳便2百多元;---不值!!以下省略千字差評......白云路的稻香是最差、最失禮的稻香,天河城、華廈的都比它好上過萬倍!!白云路的稻香是史上最差的餐廳!!! Label: negative

Data: 文昌鸡份量很少且很咸,其他菜味道很一般!服务态度差差差!还要10%的服务费、 Label: negative

Data: 这个网站的评价真是越来越不可信了,搞不懂为什么这么多好评。真的是很一般,不要迷信什么哪里回来的大厨吧。环境和出品若是当作普通茶餐厅来看待就还说得过去,但是价格又不是茶餐厅的价格,这就很尴尬了。。服务也是有待提高。 Label: negative

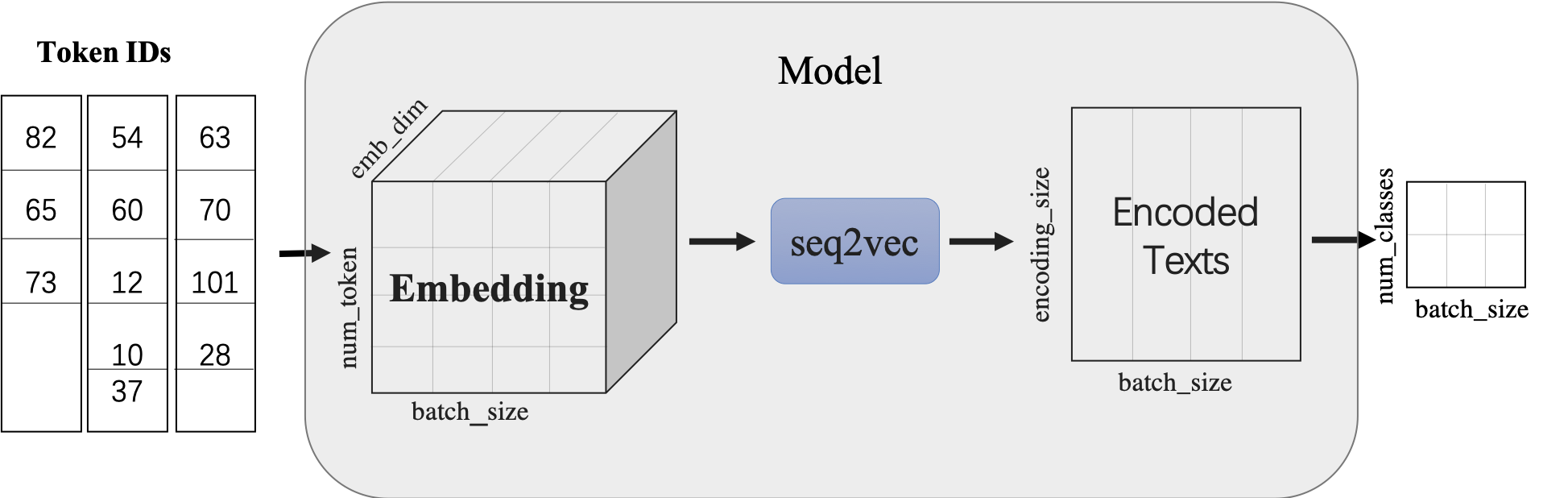

修改seq2vec模型

seq2vec模块

-

输入:文本序列的Embedding Tensor,shape:(batch_size, num_token, emb_dim)

-

输出:文本语义表征Enocded Texts Tensor,shape:(batch_sie,encoding_size)

-

提供了

BoWEncoder,CNNEncoder,GRUEncoder,LSTMEncoder,RNNEncoder等模型-

BoWEncoder是将输入序列Embedding Tensor在num_token维度上叠加,得到文本语义表征Enocded Texts Tensor。 -

CNNEncoder是将输入序列Embedding Tensor进行卷积操作,在对卷积结果进行max_pooling,得到文本语义表征Enocded Texts Tensor。 -

GRUEncoder是对输入序列Embedding Tensor进行GRU运算,在运算结果上进行pooling或者取最后一个step的隐表示,得到文本语义表征Enocded Texts Tensor。 -

LSTMEncoder是对输入序列Embedding Tensor进行LSTM运算,在运算结果上进行pooling或者取最后一个step的隐表示,得到文本语义表征Enocded Texts Tensor。 -

RNNEncoder是对输入序列Embedding Tensor进行RNN运算,在运算结果上进行pooling或者取最后一个step的隐表示,得到文本语义表征Enocded Texts Tensor。

-

-

seq2vec提供了许多语义表征方法,那么这些方法有什么特点呢?BoWEncoder采用Bag of Word Embedding方法,其特点是简单。但其缺点是没有考虑文本的语境,所以对文本语义的表征不足以表意。CNNEncoder采用卷积操作,提取局部特征,其特点是可以共享权重。但其缺点同样只考虑了局部语义,上下文信息没有充分利用。

图2:卷积示意图 RNNEnocder采用RNN方法,在计算下一个token语义信息时,利用上一个token语义信息作为其输入。但其缺点容易产生梯度消失和梯度爆炸。

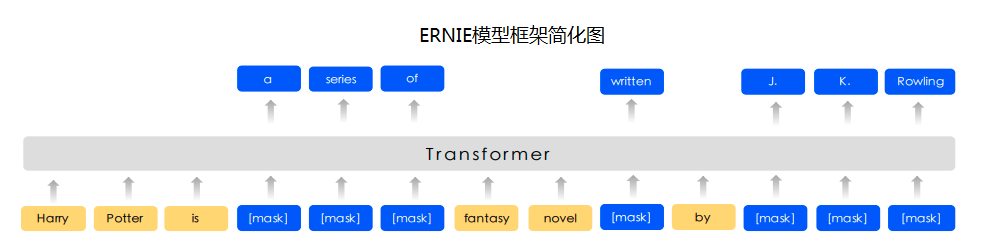

图3:RNN示意图 LSTMEnocder采用LSTM方法,LSTM是RNN的一种变种。为了学到长期依赖关系,LSTM 中引入了门控机制来控制信息的累计速度,包括有选择地加入新的信息,并有选择地遗忘之前累计的信息。

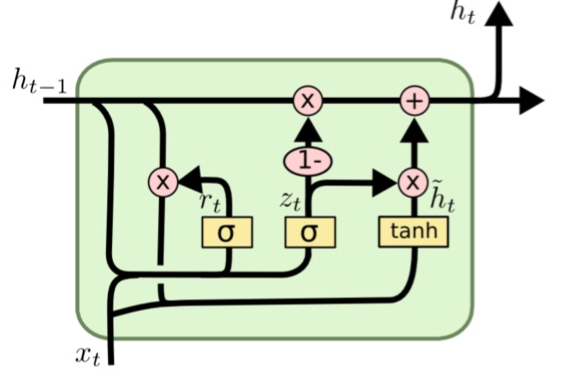

图4:LSTM示意图 GRUEncoder采用GRU方法,GRU也是RNN的一种变种。一个LSTM单元有四个输入 ,因而参数是RNN的四倍,带来的结果是训练速度慢。GRU对LSTM进行了简化,在不影响效果的前提下加快了训练速度。

关于CNN、LSTM、GRU、RNN等更多信息参考:

- Understanding LSTM Networks: https://colah.github.io/posts/2015-08-Understanding-LSTMs/

- Empirical Evaluation of Gated Recurrent Neural Networks on Sequence Modeling:https://arxiv.org/abs/1412.3555

- A Critical Review of Recurrent Neural Networks

for Sequence Learning: https://arxiv.org/pdf/1506.00019 - A Convolutional Neural Network for Modelling Sentences: https://arxiv.org/abs/1404.2188

数据准备

import paddlenlp as ppnlp

# 使用paddlenlp内置数据集

# train_ds, dev_ds, test_ds = ppnlp.datasets.ChnSentiCorp.get_datasets(['train', 'dev', 'test'])

# label_list = train_ds.get_labels()

# print(label_list)

# for sent, label in train_ds[:5]:

# print (sent, label)

# tmp = ppnlp.datasets.ChnSentiCorp('train')

# tmp1 = ppnlp.datasets.MapDatasetWrapper(tmp)

# print(type(tmp), type(tmp1))

# for sent, label in tmp1[:5]:

# print(sent, label)

from functools import partial

from paddlenlp.data import Pad, Stack, Tuple

from utils import create_dataloader,convert_example

# Reads data and generates mini-batches.

trans_fn = partial(

convert_example,

vocab=vocab,

unk_token_id=vocab.get('[UNK]', 1),

is_test=False)

# 将读入的数据batch化处理,便于模型batch化运算。

# batch中的每个句子将会padding到这个batch中的文本最大长度batch_max_seq_len。

# 当文本长度大于batch_max_seq时,将会截断到batch_max_seq_len;当文本长度小于batch_max_seq时,将会padding补齐到batch_max_seq_len.

batchify_fn = lambda samples, fn=Tuple(

Pad(axis=0, pad_val=vocab['[PAD]']), # input_ids

Stack(dtype="int64"), # seq len

Stack(dtype="int64") # label

): [data for data in fn(samples)]

train_loader = create_dataloader(

train_ds,

trans_fn=trans_fn,

batch_size=128,

mode='train',

batchify_fn=batchify_fn)

dev_loader = create_dataloader(

dev_ds,

trans_fn=trans_fn,

batch_size=128,

mode='validation',

batchify_fn=batchify_fn)

test_loader = create_dataloader(

test_ds,

trans_fn=trans_fn,

batch_size=128,

mode='test',

batchify_fn=batchify_fn)

模型建立

class GRUModel(nn.Layer):

def __init__(self,

vocab_size,

num_classes,

emb_dim=128,

padding_idx=0,

hidden_size=198,

direction='forward',

num_layers=1,

dropout_rate=0,

pooling_type=None,

fc_hidden_size=96):

super().__init__()

# 首先将输入word id 查表后映射成 word embedding

self.embedder = nn.Embedding(

num_embeddings=vocab_size,

embedding_dim=emb_dim,

padding_idx=padding_idx)

# 将word embedding经过LSTMEncoder变换到文本语义表征空间中

self.gru_encoder = ppnlp.seq2vec.GRUEncoder(

emb_dim,

hidden_size,

num_layers=num_layers,

direction=direction,

dropout=dropout_rate,

pooling_type=pooling_type)

# LSTMEncoder.get_output_dim()方法可以获取经过encoder之后的文本表示hidden_size

self.fc = nn.Linear(self.gru_encoder.get_output_dim(), fc_hidden_size)

# 最后的分类器

self.output_layer = nn.Linear(fc_hidden_size, num_classes)

def forward(self, text, seq_len):

# text shape: (batch_size, num_tokens)

# print('input :', text.shape)

# Shape: (batch_size, num_tokens, embedding_dim)

embedded_text = self.embedder(text)

# print('after word-embeding:', embedded_text.shape)

# Shape: (batch_size, num_tokens, num_directions*lstm_hidden_size)

# num_directions = 2 if direction is 'bidirectional' else 1

text_repr = self.gru_encoder(embedded_text, sequence_length=seq_len)

# print('after lstm:', text_repr.shape)

# Shape: (batch_size, fc_hidden_size)

fc_out = paddle.tanh(self.fc(text_repr))

# print('after Linear classifier:', fc_out.shape)

# Shape: (batch_size, num_classes)

logits = self.output_layer(fc_out)

# print('output:', logits.shape)

# probs 分类概率值

probs = F.softmax(logits, axis=-1)

# print('output probability:', probs.shape)

return probs

模型配置

model= GRUModel(

len(vocab),

len(label_list),

direction='bidirectional',

padding_idx=vocab['[PAD]'])

model = paddle.Model(model)

optimizer = paddle.optimizer.Adam(

parameters=model.parameters(), learning_rate=5e-5)

loss = paddle.nn.CrossEntropyLoss()

metric = paddle.metric.Accuracy()

model.prepare(optimizer, loss, metric)

# 设置visualdl路径

log_dir = './visualdl/gru_v1'

callbacks = paddle.callbacks.VisualDL(log_dir=log_dir)

model.fit(train_loader,

dev_loader,

epochs=10,

save_dir='./checkpoints/gru_v1',

save_freq=5,

callbacks=callbacks)

The loss value printed in the log is the current step, and the metric is the average value of previous step.

Epoch 1/10

step 10/125 - loss: 1.1046 - acc: 0.0000e+00 - 111ms/step

step 20/125 - loss: 1.0817 - acc: 0.2332 - 99ms/step

step 30/125 - loss: 1.0604 - acc: 0.3221 - 95ms/step

step 40/125 - loss: 1.0446 - acc: 0.3602 - 94ms/step

step 50/125 - loss: 1.0306 - acc: 0.3811 - 92ms/step

step 60/125 - loss: 1.0166 - acc: 0.4025 - 91ms/step

step 70/125 - loss: 1.0039 - acc: 0.4552 - 91ms/step

step 80/125 - loss: 0.9935 - acc: 0.4743 - 91ms/step

step 90/125 - loss: 0.9841 - acc: 0.5078 - 91ms/step

step 100/125 - loss: 0.9752 - acc: 0.5260 - 90ms/step

step 110/125 - loss: 0.9695 - acc: 0.5223 - 90ms/step

step 120/125 - loss: 0.9620 - acc: 0.5324 - 90ms/step

step 125/125 - loss: 0.9583 - acc: 0.5418 - 88ms/step

save checkpoint at /home/aistudio/checkpoints/gru_v1/0

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.9590 - acc: 0.7398 - 95ms/step

step 20/84 - loss: 0.9589 - acc: 0.7324 - 75ms/step

step 30/84 - loss: 0.9585 - acc: 0.7328 - 69ms/step

step 40/84 - loss: 0.9579 - acc: 0.7295 - 67ms/step

step 50/84 - loss: 0.9593 - acc: 0.7316 - 67ms/step

step 60/84 - loss: 0.9556 - acc: 0.7346 - 67ms/step

step 70/84 - loss: 0.9594 - acc: 0.7323 - 67ms/step

step 80/84 - loss: 0.9589 - acc: 0.7323 - 66ms/step

step 84/84 - loss: 0.9616 - acc: 0.7330 - 63ms/step

Eval samples: 10646

Epoch 2/10

step 10/125 - loss: 0.9529 - acc: 0.7086 - 114ms/step

step 20/125 - loss: 0.9500 - acc: 0.7051 - 104ms/step

step 30/125 - loss: 0.9377 - acc: 0.7341 - 98ms/step

step 40/125 - loss: 0.9367 - acc: 0.7318 - 96ms/step

step 50/125 - loss: 0.9213 - acc: 0.7439 - 94ms/step

step 60/125 - loss: 0.8881 - acc: 0.7379 - 93ms/step

step 70/125 - loss: 0.8537 - acc: 0.7412 - 92ms/step

step 80/125 - loss: 0.8674 - acc: 0.7401 - 93ms/step

step 90/125 - loss: 0.8386 - acc: 0.7376 - 92ms/step

step 100/125 - loss: 0.8068 - acc: 0.7366 - 92ms/step

step 110/125 - loss: 0.8452 - acc: 0.7364 - 92ms/step

step 120/125 - loss: 0.7921 - acc: 0.7374 - 91ms/step

step 125/125 - loss: 0.8001 - acc: 0.7378 - 89ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.7727 - acc: 0.7500 - 88ms/step

step 20/84 - loss: 0.7982 - acc: 0.7555 - 71ms/step

step 30/84 - loss: 0.7934 - acc: 0.7549 - 67ms/step

step 40/84 - loss: 0.7760 - acc: 0.7557 - 65ms/step

step 50/84 - loss: 0.7713 - acc: 0.7572 - 64ms/step

step 60/84 - loss: 0.7942 - acc: 0.7568 - 62ms/step

step 70/84 - loss: 0.8002 - acc: 0.7545 - 62ms/step

step 80/84 - loss: 0.7784 - acc: 0.7554 - 61ms/step

step 84/84 - loss: 0.8570 - acc: 0.7559 - 59ms/step

Eval samples: 10646

Epoch 3/10

step 10/125 - loss: 0.7971 - acc: 0.7773 - 109ms/step

step 20/125 - loss: 0.7422 - acc: 0.7855 - 100ms/step

step 30/125 - loss: 0.7125 - acc: 0.7951 - 97ms/step

step 40/125 - loss: 0.7575 - acc: 0.8016 - 94ms/step

step 50/125 - loss: 0.6859 - acc: 0.8089 - 93ms/step

step 60/125 - loss: 0.6763 - acc: 0.8214 - 93ms/step

step 70/125 - loss: 0.6569 - acc: 0.8319 - 94ms/step

step 80/125 - loss: 0.6440 - acc: 0.8431 - 93ms/step

step 90/125 - loss: 0.6573 - acc: 0.8521 - 95ms/step

step 100/125 - loss: 0.6323 - acc: 0.8586 - 94ms/step

step 110/125 - loss: 0.6311 - acc: 0.8650 - 94ms/step

step 120/125 - loss: 0.6425 - acc: 0.8699 - 94ms/step

step 125/125 - loss: 0.6360 - acc: 0.8723 - 92ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.6429 - acc: 0.9258 - 87ms/step

step 20/84 - loss: 0.6527 - acc: 0.9227 - 70ms/step

step 30/84 - loss: 0.6373 - acc: 0.9237 - 66ms/step

step 40/84 - loss: 0.6258 - acc: 0.9271 - 64ms/step

step 50/84 - loss: 0.6468 - acc: 0.9269 - 63ms/step

step 60/84 - loss: 0.6249 - acc: 0.9279 - 63ms/step

step 70/84 - loss: 0.6554 - acc: 0.9261 - 62ms/step

step 80/84 - loss: 0.6408 - acc: 0.9262 - 61ms/step

step 84/84 - loss: 0.6208 - acc: 0.9280 - 58ms/step

Eval samples: 10646

Epoch 4/10

step 10/125 - loss: 0.6157 - acc: 0.9445 - 107ms/step

step 20/125 - loss: 0.6325 - acc: 0.9371 - 97ms/step

step 30/125 - loss: 0.6596 - acc: 0.9365 - 95ms/step

step 40/125 - loss: 0.6046 - acc: 0.9361 - 93ms/step

step 50/125 - loss: 0.6530 - acc: 0.9320 - 92ms/step

step 60/125 - loss: 0.6364 - acc: 0.9341 - 91ms/step

step 70/125 - loss: 0.6242 - acc: 0.9348 - 90ms/step

step 80/125 - loss: 0.6116 - acc: 0.9347 - 90ms/step

step 90/125 - loss: 0.6371 - acc: 0.9350 - 90ms/step

step 100/125 - loss: 0.6125 - acc: 0.9355 - 90ms/step

step 110/125 - loss: 0.5946 - acc: 0.9368 - 90ms/step

step 120/125 - loss: 0.6274 - acc: 0.9363 - 89ms/step

step 125/125 - loss: 0.5729 - acc: 0.9368 - 87ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.6248 - acc: 0.9398 - 84ms/step

step 20/84 - loss: 0.6306 - acc: 0.9383 - 69ms/step

step 30/84 - loss: 0.6160 - acc: 0.9401 - 65ms/step

step 40/84 - loss: 0.6045 - acc: 0.9424 - 64ms/step

step 50/84 - loss: 0.6228 - acc: 0.9414 - 62ms/step

step 60/84 - loss: 0.5969 - acc: 0.9419 - 61ms/step

step 70/84 - loss: 0.6313 - acc: 0.9408 - 60ms/step

step 80/84 - loss: 0.6134 - acc: 0.9413 - 59ms/step

step 84/84 - loss: 0.6030 - acc: 0.9427 - 56ms/step

Eval samples: 10646

Epoch 5/10

step 10/125 - loss: 0.5897 - acc: 0.9445 - 107ms/step

step 20/125 - loss: 0.6003 - acc: 0.9520 - 98ms/step

step 30/125 - loss: 0.6177 - acc: 0.9516 - 96ms/step

step 40/125 - loss: 0.6054 - acc: 0.9525 - 94ms/step

step 50/125 - loss: 0.6303 - acc: 0.9537 - 93ms/step

step 60/125 - loss: 0.6067 - acc: 0.9530 - 94ms/step

step 70/125 - loss: 0.5856 - acc: 0.9540 - 93ms/step

step 80/125 - loss: 0.5976 - acc: 0.9545 - 92ms/step

step 90/125 - loss: 0.6220 - acc: 0.9537 - 91ms/step

step 100/125 - loss: 0.6000 - acc: 0.9540 - 91ms/step

step 110/125 - loss: 0.6048 - acc: 0.9522 - 91ms/step

step 120/125 - loss: 0.6144 - acc: 0.9520 - 91ms/step

step 125/125 - loss: 0.6159 - acc: 0.9520 - 89ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.6338 - acc: 0.9406 - 87ms/step

step 20/84 - loss: 0.6171 - acc: 0.9441 - 71ms/step

step 30/84 - loss: 0.6064 - acc: 0.9461 - 67ms/step

step 40/84 - loss: 0.5934 - acc: 0.9490 - 65ms/step

step 50/84 - loss: 0.6212 - acc: 0.9481 - 64ms/step

step 60/84 - loss: 0.5881 - acc: 0.9484 - 64ms/step

step 70/84 - loss: 0.6167 - acc: 0.9477 - 63ms/step

step 80/84 - loss: 0.5918 - acc: 0.9479 - 61ms/step

step 84/84 - loss: 0.6166 - acc: 0.9488 - 59ms/step

Eval samples: 10646

Epoch 6/10

step 10/125 - loss: 0.5790 - acc: 0.9539 - 111ms/step

step 20/125 - loss: 0.5977 - acc: 0.9559 - 99ms/step

step 30/125 - loss: 0.5986 - acc: 0.9557 - 96ms/step

step 40/125 - loss: 0.5777 - acc: 0.9551 - 95ms/step

step 50/125 - loss: 0.5906 - acc: 0.9563 - 93ms/step

step 60/125 - loss: 0.5921 - acc: 0.9577 - 93ms/step

step 70/125 - loss: 0.5816 - acc: 0.9589 - 92ms/step

step 80/125 - loss: 0.6051 - acc: 0.9584 - 92ms/step

step 90/125 - loss: 0.5874 - acc: 0.9579 - 91ms/step

step 100/125 - loss: 0.5957 - acc: 0.9590 - 91ms/step

step 110/125 - loss: 0.6152 - acc: 0.9592 - 91ms/step

step 120/125 - loss: 0.5884 - acc: 0.9595 - 90ms/step

step 125/125 - loss: 0.6076 - acc: 0.9595 - 88ms/step

save checkpoint at /home/aistudio/checkpoints/gru_v1/5

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.6259 - acc: 0.9492 - 86ms/step

step 20/84 - loss: 0.6103 - acc: 0.9500 - 69ms/step

step 30/84 - loss: 0.5972 - acc: 0.9516 - 65ms/step

step 40/84 - loss: 0.5912 - acc: 0.9543 - 63ms/step

step 50/84 - loss: 0.6174 - acc: 0.9533 - 62ms/step

step 60/84 - loss: 0.5815 - acc: 0.9540 - 61ms/step

step 70/84 - loss: 0.6090 - acc: 0.9535 - 60ms/step

step 80/84 - loss: 0.5879 - acc: 0.9540 - 58ms/step

step 84/84 - loss: 0.6208 - acc: 0.9546 - 56ms/step

Eval samples: 10646

Epoch 7/10

step 10/125 - loss: 0.5885 - acc: 0.9609 - 111ms/step

step 20/125 - loss: 0.5848 - acc: 0.9637 - 98ms/step

step 30/125 - loss: 0.5899 - acc: 0.9648 - 95ms/step

step 40/125 - loss: 0.6268 - acc: 0.9602 - 93ms/step

step 50/125 - loss: 0.5984 - acc: 0.9617 - 92ms/step

step 60/125 - loss: 0.6060 - acc: 0.9624 - 91ms/step

step 70/125 - loss: 0.6193 - acc: 0.9633 - 90ms/step

step 80/125 - loss: 0.6107 - acc: 0.9638 - 90ms/step

step 90/125 - loss: 0.6120 - acc: 0.9636 - 90ms/step

step 100/125 - loss: 0.5850 - acc: 0.9638 - 90ms/step

step 110/125 - loss: 0.6033 - acc: 0.9646 - 90ms/step

step 120/125 - loss: 0.5901 - acc: 0.9643 - 89ms/step

step 125/125 - loss: 0.5671 - acc: 0.9646 - 87ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.6079 - acc: 0.9547 - 87ms/step

step 20/84 - loss: 0.5994 - acc: 0.9566 - 71ms/step

step 30/84 - loss: 0.5997 - acc: 0.9573 - 67ms/step

step 40/84 - loss: 0.5883 - acc: 0.9590 - 65ms/step

step 50/84 - loss: 0.6115 - acc: 0.9583 - 64ms/step

step 60/84 - loss: 0.5703 - acc: 0.9592 - 62ms/step

step 70/84 - loss: 0.6082 - acc: 0.9585 - 61ms/step

step 80/84 - loss: 0.5885 - acc: 0.9590 - 60ms/step

step 84/84 - loss: 0.5901 - acc: 0.9596 - 58ms/step

Eval samples: 10646

Epoch 8/10

step 10/125 - loss: 0.6017 - acc: 0.9648 - 105ms/step

step 20/125 - loss: 0.5801 - acc: 0.9695 - 97ms/step

step 30/125 - loss: 0.5824 - acc: 0.9693 - 92ms/step

step 40/125 - loss: 0.6109 - acc: 0.9689 - 91ms/step

step 50/125 - loss: 0.5899 - acc: 0.9686 - 90ms/step

step 60/125 - loss: 0.5632 - acc: 0.9691 - 89ms/step

step 70/125 - loss: 0.6070 - acc: 0.9694 - 89ms/step

step 80/125 - loss: 0.5853 - acc: 0.9693 - 89ms/step

step 90/125 - loss: 0.6099 - acc: 0.9687 - 90ms/step

step 100/125 - loss: 0.5829 - acc: 0.9694 - 90ms/step

step 110/125 - loss: 0.5884 - acc: 0.9694 - 90ms/step

step 120/125 - loss: 0.6085 - acc: 0.9689 - 90ms/step

step 125/125 - loss: 0.5566 - acc: 0.9688 - 88ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.6086 - acc: 0.9563 - 89ms/step

step 20/84 - loss: 0.5964 - acc: 0.9582 - 72ms/step

step 30/84 - loss: 0.5983 - acc: 0.9591 - 67ms/step

step 40/84 - loss: 0.5864 - acc: 0.9605 - 64ms/step

step 50/84 - loss: 0.6144 - acc: 0.9600 - 63ms/step

step 60/84 - loss: 0.5730 - acc: 0.9604 - 63ms/step

step 70/84 - loss: 0.6029 - acc: 0.9597 - 61ms/step

step 80/84 - loss: 0.5849 - acc: 0.9601 - 60ms/step

step 84/84 - loss: 0.5951 - acc: 0.9606 - 58ms/step

Eval samples: 10646

Epoch 9/10

step 10/125 - loss: 0.5861 - acc: 0.9633 - 110ms/step

step 20/125 - loss: 0.5853 - acc: 0.9652 - 100ms/step

step 30/125 - loss: 0.5744 - acc: 0.9695 - 95ms/step

step 40/125 - loss: 0.5724 - acc: 0.9689 - 93ms/step

step 50/125 - loss: 0.5910 - acc: 0.9702 - 92ms/step

step 60/125 - loss: 0.5868 - acc: 0.9701 - 91ms/step

step 70/125 - loss: 0.5931 - acc: 0.9705 - 91ms/step

step 80/125 - loss: 0.5752 - acc: 0.9715 - 91ms/step

step 90/125 - loss: 0.5677 - acc: 0.9720 - 91ms/step

step 100/125 - loss: 0.5638 - acc: 0.9712 - 90ms/step

step 110/125 - loss: 0.5650 - acc: 0.9715 - 90ms/step

step 120/125 - loss: 0.5765 - acc: 0.9715 - 90ms/step

step 125/125 - loss: 0.5677 - acc: 0.9713 - 88ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.6051 - acc: 0.9578 - 89ms/step

step 20/84 - loss: 0.5957 - acc: 0.9602 - 73ms/step

step 30/84 - loss: 0.5952 - acc: 0.9607 - 68ms/step

step 40/84 - loss: 0.5860 - acc: 0.9625 - 65ms/step

step 50/84 - loss: 0.6130 - acc: 0.9616 - 64ms/step

step 60/84 - loss: 0.5709 - acc: 0.9620 - 62ms/step

step 70/84 - loss: 0.5985 - acc: 0.9615 - 61ms/step

step 80/84 - loss: 0.5859 - acc: 0.9617 - 60ms/step

step 84/84 - loss: 0.5923 - acc: 0.9622 - 57ms/step

Eval samples: 10646

Epoch 10/10

step 10/125 - loss: 0.5898 - acc: 0.9703 - 109ms/step

step 20/125 - loss: 0.5808 - acc: 0.9699 - 96ms/step

step 30/125 - loss: 0.5680 - acc: 0.9706 - 94ms/step

step 40/125 - loss: 0.5849 - acc: 0.9723 - 94ms/step

step 50/125 - loss: 0.5791 - acc: 0.9741 - 94ms/step

step 60/125 - loss: 0.5722 - acc: 0.9742 - 93ms/step

step 70/125 - loss: 0.5931 - acc: 0.9735 - 92ms/step

step 80/125 - loss: 0.5773 - acc: 0.9725 - 91ms/step

step 90/125 - loss: 0.5967 - acc: 0.9731 - 91ms/step

step 100/125 - loss: 0.5766 - acc: 0.9735 - 90ms/step

step 110/125 - loss: 0.5773 - acc: 0.9739 - 90ms/step

step 120/125 - loss: 0.5805 - acc: 0.9740 - 89ms/step

step 125/125 - loss: 0.5547 - acc: 0.9741 - 88ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.6034 - acc: 0.9563 - 85ms/step

step 20/84 - loss: 0.5972 - acc: 0.9598 - 69ms/step

step 30/84 - loss: 0.5895 - acc: 0.9604 - 65ms/step

step 40/84 - loss: 0.5856 - acc: 0.9623 - 63ms/step

step 50/84 - loss: 0.6117 - acc: 0.9614 - 62ms/step

step 60/84 - loss: 0.5724 - acc: 0.9618 - 61ms/step

step 70/84 - loss: 0.5927 - acc: 0.9614 - 60ms/step

step 80/84 - loss: 0.5870 - acc: 0.9614 - 59ms/step

step 84/84 - loss: 0.5899 - acc: 0.9620 - 57ms/step

Eval samples: 10646

save checkpoint at /home/aistudio/checkpoints/gru_v1/final

model.save('finetuning/gru/model', training=True)

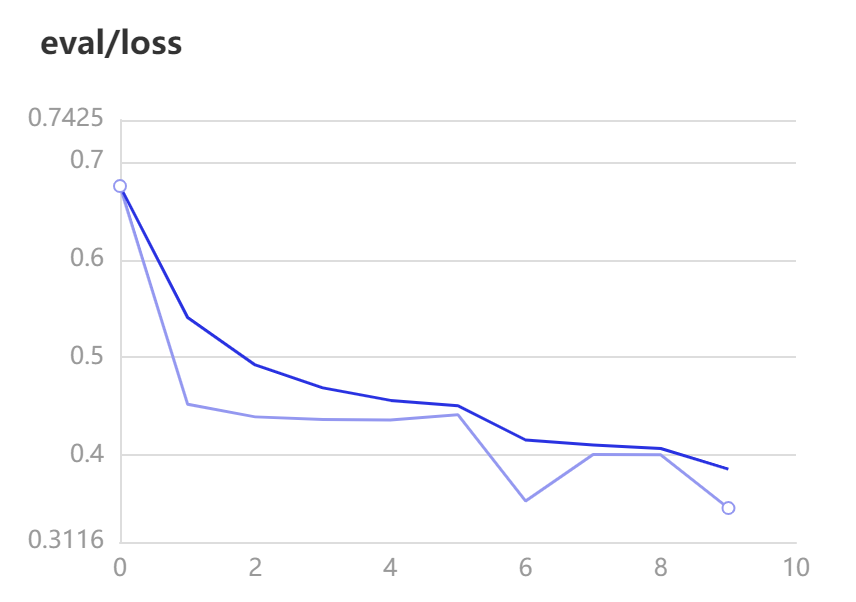

VisualDL可视化

模型评估

-

调用

model.evaluate一键评估模型 -

参数:

eval_data(Dataset|DataLoader) - 一个可迭代的数据源,推荐给定一个paddle.io.Dataset或paddle.io.Dataloader的实例。默认值:None。

results = model.evaluate(dev_loader)

print("Finally test acc: %.5f" % results['acc'])

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/84 - loss: 0.6034 - acc: 0.9563 - 88ms/step

step 20/84 - loss: 0.5972 - acc: 0.9598 - 71ms/step

step 30/84 - loss: 0.5895 - acc: 0.9604 - 67ms/step

step 40/84 - loss: 0.5856 - acc: 0.9623 - 64ms/step

step 50/84 - loss: 0.6117 - acc: 0.9614 - 62ms/step

step 60/84 - loss: 0.5724 - acc: 0.9618 - 61ms/step

step 70/84 - loss: 0.5927 - acc: 0.9614 - 60ms/step

step 80/84 - loss: 0.5870 - acc: 0.9614 - 58ms/step

step 84/84 - loss: 0.5899 - acc: 0.9620 - 56ms/step

Eval samples: 10646

Finally test acc: 0.96196

模型

-

调用

model.predict进行预测。 -

参数

test_data(Dataset|DataLoader): 一个可迭代的数据源,推荐给定一个paddle.io.Dataset或paddle.io.Dataloader的实例。默认值:None。

import numpy as np

label_map = {

0: 'negative', 1: 'positive'}

results = model.predict(test_loader, batch_size=64)[0]

predictions = []

for batch_probs in results:

# 映射分类label

idx = np.argmax(batch_probs, axis=-1)

idx = idx.tolist()

labels = [label_map[i] for i in idx]

predictions.extend(labels)

# 看看预测数据前5个样例分类结果

for idx, data in enumerate(test_ds.data[:5]):

print('Data: {} \t Label: {}'.format(data[0], predictions[idx]))

Predict begin...

step 42/42 [==============================] - ETA: 3s - 89ms/ste - ETA: 3s - 86ms/ste - ETA: 3s - 96ms/ste - ETA: 3s - 95ms/ste - ETA: 2s - 87ms/ste - ETA: 2s - 83ms/ste - ETA: 2s - 80ms/ste - ETA: 2s - 78ms/ste - ETA: 1s - 75ms/ste - ETA: 1s - 74ms/ste - ETA: 1s - 74ms/ste - ETA: 1s - 70ms/ste - ETA: 1s - 68ms/ste - ETA: 0s - 70ms/ste - ETA: 0s - 71ms/ste - ETA: 0s - 71ms/ste - ETA: 0s - 71ms/ste - ETA: 0s - 70ms/ste - ETA: 0s - 68ms/ste - ETA: 0s - 66ms/ste - 64ms/step

Predict samples: 5353

Data: 楼面经理服务态度极差,等位和埋单都差,楼面小妹还挺好 Label: negative

Data: 欺负北方人没吃过鲍鱼是怎么着?简直敷衍到可笑的程度,团购连青菜都是两人份?!难吃到死,菜色还特别可笑,什么时候粤菜的小菜改成拍黄瓜了?!把团购客人当傻子,可这满大厅的傻子谁还会再来?! Label: negative

Data: 如果大家有时间而且不怕麻烦的话可以去这里试试,点一个饭等左2个钟,没错!是两个钟!期间催了n遍都说马上到,结果?呵呵。乳鸽的味道,太咸,可能不新鲜吧……要用重口味盖住异味。上菜超级慢!中途还搞什么表演,麻烦有人手的话就上菜啊,表什么演?!?!要大家饿着看表演吗?最后结账还算错单,我真心服了……有一种店叫不会有下次,大概就是指它吧 Label: negative

Data: 偌大的一个大厅就一个人点菜,点菜速度超级慢,菜牌上多个菜停售,连续点了两个没标停售的菜也告知没有,粥上来是凉的,榴莲酥火大了,格格肉超级油腻而且咸?????? Label: negative

Data: 泥撕雞超級好吃!!!吃了一個再叫一個還想打包的節奏! Label: positive

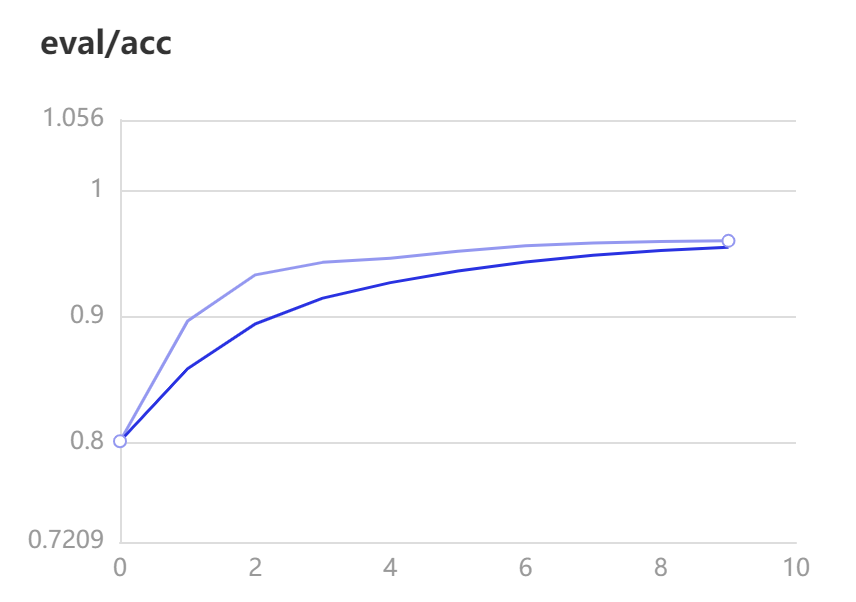

更换三分类数据集进行测试

三分类除了涉及到positive和negative两种情感外,还有一种neural情感,从原始数据集中可以提取到有语义转折的句子,“然而”,“但”都是关键词。从而可以得到3份不同语义的数据集。

数据准备

class Classifier3Dataset(paddle.io.Dataset):

def __init__(self, data):

super(Classifier3Dataset, self).__init__()

self.data = data

def __getitem__(self, idx):

return self.data[idx]

def __len__(self):

return len(self.data)

def get_labels(self):

return ["0", "1", "2"]

def txt_to_list(file_name):

res_list = []

for line in open(file_name):

res_list.append(line.strip().split('\t'))

return res_list

from functools import partial

from paddlenlp.data import Pad, Stack, Tuple

from utils import create_dataloader, convert_example

trainlst = txt_to_list('./my_data/train.txt')

devlst = txt_to_list('./my_data/dev.txt')

testlst = txt_to_list('./my_data/test.txt')

# 通过get_datasets()函数,将list数据转换为dataset。

# get_datasets()可接收[list]参数,或[str]参数,根据自定义数据集的写法自由选择。

# train_ds, dev_ds, test_ds = ppnlp.datasets.ChnSentiCorp.get_datasets(['train', 'dev', 'test'])

train_ds, dev_ds, test_ds = Classifier3Dataset.get_datasets([trainlst, devlst, testlst])

label_list = train_ds.get_labels()

print(label_list)

for sent, label in train_ds[:5]:

print (sent, label)

tmp = ppnlp.datasets.ChnSentiCorp('train')

tmp1 = ppnlp.datasets.MapDatasetWrapper(tmp)

print(type(tmp), type(tmp1))

for sent, label in tmp1[:5]:

print(sent, label)

# Reads data and generates mini-batches.

trans_fn = partial(

convert_example,

vocab=vocab,

unk_token_id=vocab.get('[UNK]', 1),

is_test=False)

# 将读入的数据batch化处理,便于模型batch化运算。

# batch中的每个句子将会padding到这个batch中的文本最大长度batch_max_seq_len。

# 当文本长度大于batch_max_seq时,将会截断到batch_max_seq_len;当文本长度小于batch_max_seq时,将会padding补齐到batch_max_seq_len.

batchify_fn = lambda samples, fn=Tuple(

Pad(axis=0, pad_val=vocab['[PAD]']), # input_ids

Stack(dtype="int64"), # seq len

Stack(dtype="int64") # label

): [data for data in fn(samples)]

# batch_size改为256

train_loader = create_dataloader(

train_ds,

trans_fn=trans_fn,

batch_size=256,

mode='train',

batchify_fn=batchify_fn)

dev_loader = create_dataloader(

dev_ds,

trans_fn=trans_fn,

batch_size=256,

mode='validation',

batchify_fn=batchify_fn)

test_loader = create_dataloader(

test_ds,

trans_fn=trans_fn,

batch_size=256,

mode='test',

batchify_fn=batchify_fn)

['0', '1', '2']

环境不错,叉烧包小孩爱吃,三色煎糕很一般 2

刚来的时候让我们等位置,我们就在门口等了十分钟左右,没有见到有人离开,然后有工作人员让我们上来二楼,上来后看到有十来桌是没有人的,既然有位置,为什么非得让我们在门口等位!!!本以为有座位后就可以马上吃饭了,让人内心崩溃的是点菜就等了十分钟才有人有空过来理我们。上菜更是郁闷,端来了一锅猪肚鸡,但是没人开火,要点调味料也没人管,服务质量太差,之前来过一次觉得还可以,这次让我再也不想来这家店了!真心失望 0

口味还可以服务真的差到爆啊我来过45次真的次次都只给差评东西确实不错但你看看你们的服务还收服务费我的天干蒸什么的60块比太古汇翠园还贵主要是没人收台没人倒水谁还要自己倒我的天给你服务费还什么都自己干我接受不了钱花了服务没有实在不行 0

出品不错老字号就是好有山有水有树有鱼赞 1

在江南大道这间~服务态度好差,食物出品平凡,应该唔会再去了 1

<class 'paddlenlp.datasets.chnsenticorp.ChnSentiCorp'> <class 'paddlenlp.datasets.dataset.MapDatasetWrapper'>

选择珠江花园的原因就是方便,有电动扶梯直接到达海边,周围餐馆、食廊、商场、超市、摊位一应俱全。酒店装修一般,但还算整洁。 泳池在大堂的屋顶,因此很小,不过女儿倒是喜欢。 包的早餐是西式的,还算丰富。 服务吗,一般 1

15.4寸笔记本的键盘确实爽,基本跟台式机差不多了,蛮喜欢数字小键盘,输数字特方便,样子也很美观,做工也相当不错 1

房间太小。其他的都一般。。。。。。。。。 0

1.接电源没有几分钟,电源适配器热的不行. 2.摄像头用不起来. 3.机盖的钢琴漆,手不能摸,一摸一个印. 4.硬盘分区不好办. 0

今天才知道这书还有第6卷,真有点郁闷:为什么同一套书有两种版本呢?当当网是不是该跟出版社商量商量,单独出个第6卷,让我们的孩子不会有所遗憾。 1

训练感觉有点过拟合 加大了

batch_size 128->256,hidden_size 96->128同时引入了dropout=0.2

vocab = load_vocab('./senta_word_dict.txt')

model = GRUModel(

vocab_size=len(vocab),

num_classes=len(label_list),

direction='bidirectional',

padding_idx=vocab['[PAD]'],

dropout_rate=0.2,

fc_hidden_size=128) # out -> 128 -> 3

model = paddle.Model(model)

optimizer = paddle.optimizer.Adam(

parameters=model.parameters(), learning_rate=5e-5)

loss = paddle.nn.CrossEntropyLoss()

metric = paddle.metric.Accuracy()

model.prepare(optimizer, loss, metric)

# 设置visualdl路径

log_dir = './visualdl/gru_3'

callbacks = paddle.callbacks.VisualDL(log_dir=log_dir)

model.fit(train_loader,

dev_loader,

epochs=10,

save_dir='./checkpoints/gru_3',

save_freq=5,

callbacks=callbacks)

The loss value printed in the log is the current step, and the metric is the average value of previous step.

Epoch 1/10

step 10/126 - loss: 1.0978 - acc: 0.3398 - 208ms/step

step 20/126 - loss: 1.0996 - acc: 0.3350 - 181ms/step

step 30/126 - loss: 1.0976 - acc: 0.3408 - 173ms/step

step 40/126 - loss: 1.0953 - acc: 0.3434 - 169ms/step

step 50/126 - loss: 1.0956 - acc: 0.3451 - 165ms/step

step 60/126 - loss: 1.0965 - acc: 0.3449 - 162ms/step

step 70/126 - loss: 1.0983 - acc: 0.3444 - 161ms/step

step 80/126 - loss: 1.0960 - acc: 0.3454 - 160ms/step

step 90/126 - loss: 1.0955 - acc: 0.3456 - 159ms/step

step 100/126 - loss: 1.0925 - acc: 0.3445 - 158ms/step

step 110/126 - loss: 1.0915 - acc: 0.3480 - 158ms/step

step 120/126 - loss: 1.0898 - acc: 0.3595 - 158ms/step

step 126/126 - loss: 1.0895 - acc: 0.3675 - 154ms/step

save checkpoint at /home/aistudio/checkpoints/gru_3/0

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/16 - loss: 1.0886 - acc: 0.5535 - 129ms/step

step 16/16 - loss: 1.0892 - acc: 0.5592 - 117ms/step

Eval samples: 3968

Epoch 2/10

step 10/126 - loss: 1.0875 - acc: 0.5953 - 200ms/step

step 20/126 - loss: 1.0836 - acc: 0.5965 - 177ms/step

step 30/126 - loss: 1.0774 - acc: 0.5948 - 171ms/step

step 40/126 - loss: 1.0721 - acc: 0.5929 - 166ms/step

step 50/126 - loss: 1.0589 - acc: 0.5895 - 164ms/step

step 60/126 - loss: 1.0572 - acc: 0.5824 - 161ms/step

step 70/126 - loss: 1.0510 - acc: 0.5797 - 161ms/step

step 80/126 - loss: 1.0356 - acc: 0.5745 - 160ms/step

step 90/126 - loss: 0.9759 - acc: 0.5755 - 160ms/step

step 100/126 - loss: 0.9620 - acc: 0.5741 - 160ms/step

step 110/126 - loss: 0.9256 - acc: 0.5756 - 159ms/step

step 120/126 - loss: 0.9095 - acc: 0.5785 - 158ms/step

step 126/126 - loss: 0.8743 - acc: 0.5817 - 154ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/16 - loss: 0.8846 - acc: 0.6320 - 138ms/step

step 16/16 - loss: 0.9308 - acc: 0.6308 - 123ms/step

Eval samples: 3968

Epoch 3/10

step 10/126 - loss: 0.9291 - acc: 0.6621 - 197ms/step

step 20/126 - loss: 0.8573 - acc: 0.6590 - 176ms/step

step 30/126 - loss: 0.9236 - acc: 0.6594 - 168ms/step

step 40/126 - loss: 0.8650 - acc: 0.6684 - 164ms/step

step 50/126 - loss: 0.8187 - acc: 0.6738 - 162ms/step

step 60/126 - loss: 0.8715 - acc: 0.6797 - 161ms/step

step 70/126 - loss: 0.8505 - acc: 0.6854 - 160ms/step

step 80/126 - loss: 0.8257 - acc: 0.6878 - 159ms/step

step 90/126 - loss: 0.8558 - acc: 0.6905 - 159ms/step

step 100/126 - loss: 0.8403 - acc: 0.6926 - 159ms/step

step 110/126 - loss: 0.7945 - acc: 0.6958 - 158ms/step

step 120/126 - loss: 0.8609 - acc: 0.6980 - 158ms/step

step 126/126 - loss: 0.8882 - acc: 0.6992 - 154ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/16 - loss: 0.8116 - acc: 0.7141 - 134ms/step

step 16/16 - loss: 0.8394 - acc: 0.7193 - 119ms/step

Eval samples: 3968

Epoch 4/10

step 10/126 - loss: 0.7935 - acc: 0.7508 - 231ms/step

step 20/126 - loss: 0.7982 - acc: 0.7494 - 190ms/step

step 30/126 - loss: 0.8068 - acc: 0.7480 - 177ms/step

step 40/126 - loss: 0.7855 - acc: 0.7446 - 172ms/step

step 50/126 - loss: 0.8038 - acc: 0.7469 - 168ms/step

step 60/126 - loss: 0.8076 - acc: 0.7500 - 165ms/step

step 70/126 - loss: 0.7809 - acc: 0.7518 - 165ms/step

step 80/126 - loss: 0.7585 - acc: 0.7547 - 163ms/step

step 90/126 - loss: 0.7997 - acc: 0.7564 - 162ms/step

step 100/126 - loss: 0.8227 - acc: 0.7561 - 161ms/step

step 110/126 - loss: 0.7757 - acc: 0.7562 - 160ms/step

step 120/126 - loss: 0.7927 - acc: 0.7562 - 160ms/step

step 126/126 - loss: 0.7383 - acc: 0.7566 - 155ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/16 - loss: 0.7797 - acc: 0.7344 - 131ms/step

step 16/16 - loss: 0.8309 - acc: 0.7424 - 118ms/step

Eval samples: 3968

Epoch 5/10

step 10/126 - loss: 0.7490 - acc: 0.7945 - 204ms/step

step 20/126 - loss: 0.7892 - acc: 0.7848 - 178ms/step

step 30/126 - loss: 0.7733 - acc: 0.7818 - 170ms/step

step 40/126 - loss: 0.7219 - acc: 0.7829 - 165ms/step

step 50/126 - loss: 0.7361 - acc: 0.7833 - 163ms/step

step 60/126 - loss: 0.7994 - acc: 0.7804 - 161ms/step

step 70/126 - loss: 0.7618 - acc: 0.7810 - 161ms/step

step 80/126 - loss: 0.7607 - acc: 0.7832 - 161ms/step

step 90/126 - loss: 0.7378 - acc: 0.7851 - 160ms/step

step 100/126 - loss: 0.7430 - acc: 0.7852 - 159ms/step

step 110/126 - loss: 0.7676 - acc: 0.7856 - 159ms/step

step 120/126 - loss: 0.7475 - acc: 0.7865 - 158ms/step

step 126/126 - loss: 0.7938 - acc: 0.7866 - 154ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/16 - loss: 0.7679 - acc: 0.7441 - 132ms/step

step 16/16 - loss: 0.8193 - acc: 0.7505 - 118ms/step

Eval samples: 3968

Epoch 6/10

step 10/126 - loss: 0.7762 - acc: 0.8020 - 200ms/step

step 20/126 - loss: 0.7412 - acc: 0.8004 - 177ms/step

step 30/126 - loss: 0.7627 - acc: 0.8049 - 169ms/step

step 40/126 - loss: 0.7367 - acc: 0.8074 - 165ms/step

step 50/126 - loss: 0.7610 - acc: 0.8068 - 163ms/step

step 60/126 - loss: 0.7663 - acc: 0.8056 - 161ms/step

step 70/126 - loss: 0.7318 - acc: 0.8050 - 160ms/step

step 80/126 - loss: 0.7516 - acc: 0.8081 - 159ms/step

step 90/126 - loss: 0.7567 - acc: 0.8073 - 158ms/step

step 100/126 - loss: 0.7430 - acc: 0.8080 - 159ms/step

step 110/126 - loss: 0.7549 - acc: 0.8069 - 159ms/step

step 120/126 - loss: 0.7199 - acc: 0.8068 - 159ms/step

step 126/126 - loss: 0.7319 - acc: 0.8067 - 155ms/step

save checkpoint at /home/aistudio/checkpoints/gru_3/5

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/16 - loss: 0.7777 - acc: 0.7492 - 131ms/step

step 16/16 - loss: 0.8116 - acc: 0.7538 - 118ms/step

Eval samples: 3968

Epoch 7/10

step 10/126 - loss: 0.7169 - acc: 0.8000 - 195ms/step

step 20/126 - loss: 0.7229 - acc: 0.8127 - 176ms/step

step 30/126 - loss: 0.7203 - acc: 0.8156 - 170ms/step

step 40/126 - loss: 0.7103 - acc: 0.8191 - 166ms/step

step 50/126 - loss: 0.6762 - acc: 0.8223 - 163ms/step

step 60/126 - loss: 0.7651 - acc: 0.8215 - 161ms/step

step 70/126 - loss: 0.7337 - acc: 0.8228 - 160ms/step

step 80/126 - loss: 0.7348 - acc: 0.8228 - 160ms/step

step 90/126 - loss: 0.7023 - acc: 0.8231 - 160ms/step

step 100/126 - loss: 0.7326 - acc: 0.8229 - 159ms/step

step 110/126 - loss: 0.7133 - acc: 0.8237 - 159ms/step

step 120/126 - loss: 0.7220 - acc: 0.8237 - 159ms/step

step 126/126 - loss: 0.6864 - acc: 0.8238 - 155ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/16 - loss: 0.7588 - acc: 0.7543 - 133ms/step

step 16/16 - loss: 0.8104 - acc: 0.7581 - 118ms/step

Eval samples: 3968

Epoch 8/10

step 10/126 - loss: 0.7200 - acc: 0.8258 - 196ms/step

step 20/126 - loss: 0.7397 - acc: 0.8305 - 176ms/step

step 30/126 - loss: 0.7124 - acc: 0.8366 - 170ms/step

step 40/126 - loss: 0.7328 - acc: 0.8372 - 167ms/step

step 50/126 - loss: 0.7100 - acc: 0.8402 - 164ms/step

step 60/126 - loss: 0.6964 - acc: 0.8393 - 162ms/step

step 70/126 - loss: 0.7164 - acc: 0.8388 - 163ms/step

step 80/126 - loss: 0.7102 - acc: 0.8390 - 165ms/step

step 90/126 - loss: 0.7163 - acc: 0.8385 - 165ms/step

step 100/126 - loss: 0.7125 - acc: 0.8386 - 165ms/step

step 110/126 - loss: 0.7342 - acc: 0.8384 - 164ms/step

step 120/126 - loss: 0.7394 - acc: 0.8379 - 163ms/step

step 126/126 - loss: 0.6978 - acc: 0.8382 - 158ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/16 - loss: 0.7574 - acc: 0.7547 - 140ms/step

step 16/16 - loss: 0.8107 - acc: 0.7558 - 127ms/step

Eval samples: 3968

Epoch 9/10

step 10/126 - loss: 0.6952 - acc: 0.8582 - 208ms/step

step 20/126 - loss: 0.7105 - acc: 0.8520 - 178ms/step

step 30/126 - loss: 0.7099 - acc: 0.8492 - 170ms/step

step 40/126 - loss: 0.7073 - acc: 0.8480 - 167ms/step

step 50/126 - loss: 0.7057 - acc: 0.8480 - 163ms/step

step 60/126 - loss: 0.6870 - acc: 0.8474 - 160ms/step

step 70/126 - loss: 0.6980 - acc: 0.8481 - 159ms/step

step 80/126 - loss: 0.6783 - acc: 0.8481 - 158ms/step

step 90/126 - loss: 0.7179 - acc: 0.8467 - 158ms/step

step 100/126 - loss: 0.6865 - acc: 0.8482 - 158ms/step

step 110/126 - loss: 0.6836 - acc: 0.8488 - 158ms/step

step 120/126 - loss: 0.7049 - acc: 0.8488 - 158ms/step

step 126/126 - loss: 0.7239 - acc: 0.8485 - 154ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/16 - loss: 0.7657 - acc: 0.7578 - 134ms/step

step 16/16 - loss: 0.8159 - acc: 0.7608 - 121ms/step

Eval samples: 3968

Epoch 10/10

step 10/126 - loss: 0.6735 - acc: 0.8539 - 202ms/step

step 20/126 - loss: 0.7280 - acc: 0.8547 - 178ms/step

step 30/126 - loss: 0.7055 - acc: 0.8539 - 170ms/step

step 40/126 - loss: 0.7137 - acc: 0.8554 - 165ms/step

step 50/126 - loss: 0.6825 - acc: 0.8568 - 162ms/step

step 60/126 - loss: 0.7012 - acc: 0.8569 - 161ms/step

step 70/126 - loss: 0.7151 - acc: 0.8573 - 160ms/step

step 80/126 - loss: 0.7342 - acc: 0.8571 - 158ms/step

step 90/126 - loss: 0.6726 - acc: 0.8569 - 158ms/step

step 100/126 - loss: 0.7126 - acc: 0.8577 - 157ms/step

step 110/126 - loss: 0.6896 - acc: 0.8578 - 158ms/step

step 120/126 - loss: 0.6932 - acc: 0.8581 - 158ms/step

step 126/126 - loss: 0.7185 - acc: 0.8580 - 153ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/16 - loss: 0.7604 - acc: 0.7590 - 134ms/step

step 16/16 - loss: 0.8171 - acc: 0.7626 - 121ms/step

Eval samples: 3968

save checkpoint at /home/aistudio/checkpoints/gru_3/final

# 由于acc还在上升 再训练5个epoch

model.fit(train_loader,

dev_loader,

epochs=5,

save_dir='./checkpoints/gru_3',

save_freq=5,

callbacks=callbacks)

The loss value printed in the log is the current step, and the metric is the average value of previous step.

Epoch 1/5

step 10/126 - loss: 0.6639 - acc: 0.8621 - 205ms/step

step 20/126 - loss: 0.6666 - acc: 0.8641 - 184ms/step

step 30/126 - loss: 0.6868 - acc: 0.8629 - 178ms/step

step 40/126 - loss: 0.6952 - acc: 0.8665 - 174ms/step

step 50/126 - loss: 0.6786 - acc: 0.8670 - 172ms/step

step 60/126 - loss: 0.6718 - acc: 0.8674 - 172ms/step

step 70/126 - loss: 0.7139 - acc: 0.8652 - 170ms/step

step 80/126 - loss: 0.7146 - acc: 0.8647 - 168ms/step

step 90/126 - loss: 0.6890 - acc: 0.8660 - 167ms/step

step 100/126 - loss: 0.7247 - acc: 0.8655 - 165ms/step

step 110/126 - loss: 0.6748 - acc: 0.8660 - 164ms/step

step 120/126 - loss: 0.6856 - acc: 0.8658 - 163ms/step

step 126/126 - loss: 0.7343 - acc: 0.8657 - 159ms/step

save checkpoint at /home/aistudio/checkpoints/gru_3/0

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 10/16 - loss: 0.7668 - acc: 0.7523 - 130ms/step

step 16/16 - loss: 0.8106 - acc: 0.7581 - 116ms/step

Eval samples: 3968

Epoch 2/5

step 10/126 - loss: 0.6910 - acc: 0.8793 - 201ms/step

step 20/126 - loss: 0.6806 - acc: 0.8732 - 178ms/step

step 30/126 - loss: 0.6818 - acc: 0.8702 - 168ms/step

step 40/126 - loss: 0.7119 - acc: 0.8700 - 164ms/step

step 50/126 - loss: 0.6921 - acc: 0.8701 - 163ms/step