Source code based on: Android R

0. Preface

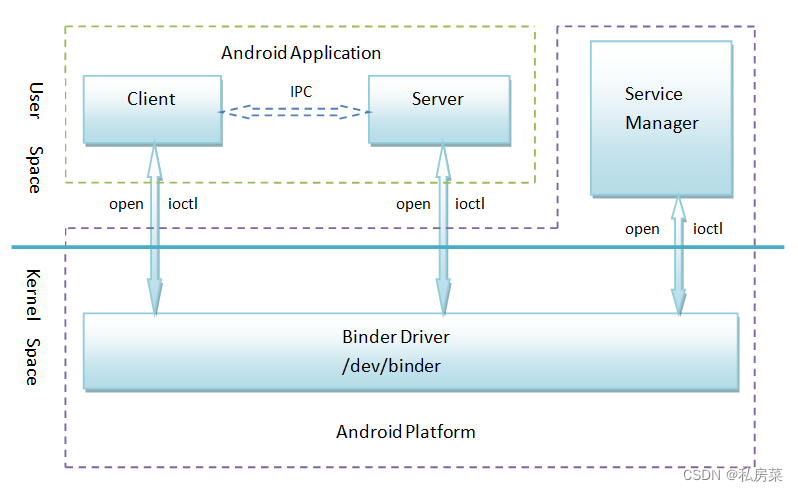

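The figure above shows the binder software framework before Android 8.0. It depends on the driver device /dev/binder, and the binder mechanism has four elements: client, server, servicemanager, and the binder driver.

From Android 8.0 on, binder and vndbinder still follow this framework; the only difference is an additional driver device, /dev/vndbinder.

This article analyzes the servicemanager flow; hwservicemanager will be covered later.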

2. Building servicemanager

First, look at how the binary is built:

frameworks/native/cmds/servicemanager/Android.bp

cc_binary {

name: "servicemanager",

defaults: ["servicemanager_defaults"],

init_rc: ["servicemanager.rc"],

srcs: ["main.cpp"],

}

cc_binary {

name: "vndservicemanager",

defaults: ["servicemanager_defaults"],

init_rc: ["vndservicemanager.rc"],

vendor: true,

cflags: [

"-DVENDORSERVICEMANAGER=1",

],

srcs: ["main.cpp"],

}

The same main.cpp in this directory is compiled into two binaries, servicemanager and vndservicemanager, and each binary has its own *.rc file.

frameworks/native/cmds/servicemanager/servicemanager.rc

service servicemanager /system/bin/servicemanager

class core animation

user system

group system readproc

critical

onrestart restart healthd

onrestart restart zygote

onrestart restart audioserver

onrestart restart media

onrestart restart surfaceflinger

onrestart restart inputflinger

onrestart restart drm

onrestart restart cameraserver

onrestart restart keystore

onrestart restart gatekeeperd

onrestart restart thermalservice

writepid /dev/cpuset/system-background/tasks

shutdown critical

frameworks/native/cmds/servicemanager/vndservicemanager.rc

service vndservicemanager /vendor/bin/vndservicemanager /dev/vndbinder

class core

user system

group system readproc

writepid /dev/cpuset/system-background/tasks

shutdown critical

3. main() of servicemanager

frameworks/native/cmds/servicemanager/main.cpp

int main(int argc, char** argv) {

if (argc > 2) {

LOG(FATAL) << "usage: " << argv[0] << " [binder driver]";

}

//Pick the driver device from the argument: binder or vndbinder

const char* driver = argc == 2 ? argv[1] : "/dev/binder";

//Initialize the driver device; see section 3.1 for details

sp<ProcessState> ps = ProcessState::initWithDriver(driver);

//Tell the driver the maximum number of binder threads; servicemanager sets it to 0

ps->setThreadPoolMaxThreadCount(0);

//Call restriction: a blocking (non-oneway) outgoing call from this process is fatal rather than just logged as an error

ps->setCallRestriction(ProcessState::CallRestriction::FATAL_IF_NOT_ONEWAY);

//The core interfaces all live in ServiceManager

sp<ServiceManager> manager = new ServiceManager(std::make_unique<Access>());

//Register servicemanager itself as a special service

if (!manager->addService("manager", manager, false /*allowIsolated*/, IServiceManager::DUMP_FLAG_PRIORITY_DEFAULT).isOk()) {

LOG(ERROR) << "Could not self register servicemanager";

}

//Save the context object for this process

IPCThreadState::self()->setTheContextObject(manager);

//Tell the driver that this process is the context manager

ps->becomeContextManager(nullptr, nullptr);

//Create a Looper in servicemanager to handle binder messages

sp<Looper> looper = Looper::prepare(false /*allowNonCallbacks*/);

//Tell the driver this thread enters the loop, start listening for driver messages, and install the callbacks that handle them

BinderCallback::setupTo(looper);

ClientCallbackCallback::setupTo(looper, manager);

//Run the looper and handle each poll; the process never leaves this loop unless it aborts on an error

while(true) {

looper->pollAll(-1);

}

// should not be reached

return EXIT_FAILURE;

}

3.1 initWithDriver()

frameworks/native/libs/binder/ProcessState.cpp

sp<ProcessState> ProcessState::initWithDriver(const char* driver)

{

Mutex::Autolock _l(gProcessMutex);

if (gProcess != nullptr) {

// Allow for initWithDriver to be called repeatedly with the same

// driver.

if (!strcmp(gProcess->getDriverName().c_str(), driver)) {

return gProcess;

}

LOG_ALWAYS_FATAL("ProcessState was already initialized.");

}

if (access(driver, R_OK) == -1) {

ALOGE("Binder driver %s is unavailable. Using /dev/binder instead.", driver);

driver = "/dev/binder";

}

gProcess = new ProcessState(driver);

return gProcess;

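}

The parameter is the driver device name, either /dev/binder or /dev/vndbinder.

The rest of the logic is simple. ProcessState manages the "process state": each process that uses binder has exactly one ProcessState instance, held in the global gProcess. If that instance already exists, the function checks whether the driver it opened has the same name as the one being initialized: if so it returns the existing instance, otherwise it aborts. If no instance exists, a new one is created with new.

See section 4 for details on ProcessState.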
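The singleton logic of initWithDriver() can be sketched outside of Android as follows. This is a minimal illustration, not the libbinder code: FakeProcessState and its members are hypothetical names, a std::logic_error stands in for LOG_ALWAYS_FATAL, and the access() fallback to /dev/binder is left out.

```cpp
#include <cassert>
#include <memory>
#include <mutex>
#include <stdexcept>
#include <string>
#include <utility>

// Hypothetical stand-in for ProcessState; it only remembers the driver name.
class FakeProcessState {
public:
    explicit FakeProcessState(std::string driver) : mDriverName(std::move(driver)) {}

    const std::string& driverName() const { return mDriverName; }

    // Returns the process-wide instance, creating it on first use.
    // Asking for a different driver after initialization is an error.
    static std::shared_ptr<FakeProcessState> initWithDriver(const std::string& driver) {
        std::lock_guard<std::mutex> lock(sMutex);
        if (sInstance != nullptr) {
            if (sInstance->driverName() == driver) return sInstance;
            throw std::logic_error("already initialized with another driver");
        }
        sInstance = std::make_shared<FakeProcessState>(driver);
        return sInstance;
    }

private:
    std::string mDriverName;
    static std::mutex sMutex;
    static std::shared_ptr<FakeProcessState> sInstance;
};

std::mutex FakeProcessState::sMutex;
std::shared_ptr<FakeProcessState> FakeProcessState::sInstance;
```

Calling initWithDriver() twice with the same name yields the same object, which is why repeated initialization with one driver is harmless.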

3.2 new ServiceManager()

The core interfaces all live in ServiceManager. An Access object is created and passed in at construction:

frameworks/native/cmds/servicemanager/ServiceManager.cpp

ServiceManager::ServiceManager(std::unique_ptr<Access>&& access) : mAccess(std::move(access)) {

...

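}

std::move transfers ownership of the unique_ptr<Access> to the member mAccess. mAccess is used to run the SELinux checks that decide whether a caller is allowed to perform an operation.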

3.3 addService()

if (!manager->addService("manager", manager, false /*allowIsolated*/, IServiceManager::DUMP_FLAG_PRIORITY_DEFAULT).isOk()) {

LOG(ERROR) << "Could not self register servicemanager";

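}

Any other service registers with servicemanager through IServiceManager, and the call travels over binder before it reaches ServiceManager::addService(). Here servicemanager registers itself by calling addService() directly on the ServiceManager object.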
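One of the checks in the addService() listing that follows is the service name rule. The sketch below implements that rule as the listing's comment states it (digits, letters, underscore, dash, dot, slash, at most 127 characters). isValidServiceNameSketch is a hypothetical name, and rejecting the empty string is an assumption of this sketch.

```cpp
#include <cassert>
#include <string>

// Returns true if the name uses only [0-9a-zA-Z_.-/] and is 1..127 chars long.
bool isValidServiceNameSketch(const std::string& name) {
    if (name.empty() || name.size() > 127) return false;
    for (char c : name) {
        const bool ok = (c >= 'a' && c <= 'z') || (c >= 'A' && c <= 'Z') ||
                        (c >= '0' && c <= '9') || c == '_' || c == '-' ||
                        c == '.' || c == '/';
        if (!ok) return false;  // any other character makes the name invalid
    }
    return true;
}
```

With this rule a name such as "manager" passes, while a name containing a space or longer than 127 characters is rejected.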

frameworks/native/cmds/servicemanager/ServiceManager.cpp

Status ServiceManager::addService(const std::string& name, const sp<IBinder>& binder, bool allowIsolated, int32_t dumpPriority) {

auto ctx = mAccess->getCallingContext();

//Application processes are not allowed to register services

if (multiuser_get_app_id(ctx.uid) >= AID_APP) {

return Status::fromExceptionCode(Status::EX_SECURITY);

}

// SELinux check: is the caller allowed to add this name (SELABEL_CTX_ANDROID_SERVICE)

if (!mAccess->canAdd(ctx, name)) {

return Status::fromExceptionCode(Status::EX_SECURITY);

}

//The IBinder passed in must not be nullptr

if (binder == nullptr) {

return Status::fromExceptionCode(Status::EX_ILLEGAL_ARGUMENT);

}

//The service name must be valid: only 0-9, a-z, A-Z, underscore, dash, dot and slash, and at most 127 characters

if (!isValidServiceName(name)) {

LOG(ERROR) << "Invalid service name: " << name;

return Status::fromExceptionCode(Status::EX_ILLEGAL_ARGUMENT);

}

//Compiled only into the non-vendor servicemanager: a binder that requires a VINTF declaration must be declared in the VINTF manifest

#ifndef VENDORSERVICEMANAGER

if (!meetsDeclarationRequirements(binder, name)) {

// already logged

return Status::fromExceptionCode(Status::EX_ILLEGAL_ARGUMENT);

}

#endif // !VENDORSERVICEMANAGER

//For a remote binder, call linkToDeath to be notified when the service dies

if (binder->remoteBinder() != nullptr && binder->linkToDeath(this) != OK) {

LOG(ERROR) << "Could not linkToDeath when adding " << name;

return Status::fromExceptionCode(Status::EX_ILLEGAL_STATE);

}

//Add the service to the map

auto entry = mNameToService.emplace(name, Service {

.binder = binder,

.allowIsolated = allowIsolated,

.dumpPriority = dumpPriority,

.debugPid = ctx.debugPid,

});

//If registration callbacks exist for this name, invoke them

auto it = mNameToRegistrationCallback.find(name);

if (it != mNameToRegistrationCallback.end()) {

for (const sp<IServiceCallback>& cb : it->second) {

entry.first->second.guaranteeClient = true;

// permission checked in registerForNotifications

cb->onRegistration(name, binder);

}

}

return Status::ok();

}

3.4 setTheContextObject()

IPCThreadState::self()->setTheContextObject(manager);

ps->becomeContextManager(nullptr, nullptr);

The first line creates the IPCThreadState and stores servicemanager in it for use by later transactions.

The second line notifies the driver of the context manager with the BINDER_SET_CONTEXT_MGR_EXT command:

frameworks/native/libs/binder/ProcessState.cpp

bool ProcessState::becomeContextManager(context_check_func checkFunc, void* userData)

{

AutoMutex _l(mLock);

mBinderContextCheckFunc = checkFunc;

mBinderContextUserData = userData;

flat_binder_object obj {

.flags = FLAT_BINDER_FLAG_TXN_SECURITY_CTX,

};

int result = ioctl(mDriverFD, BINDER_SET_CONTEXT_MGR_EXT, &obj);

// fallback to original method

if (result != 0) {

android_errorWriteLog(0x534e4554, "121035042");

int dummy = 0;

result = ioctl(mDriverFD, BINDER_SET_CONTEXT_MGR, &dummy);

}

if (result == -1) {

mBinderContextCheckFunc = nullptr;

mBinderContextUserData = nullptr;

ALOGE("Binder ioctl to become context manager failed: %s\n", strerror(errno));

}

return result == 0;

}

This asks the driver to create the context manager node. The driver-side code is:

drivers/android/binder.c

static long binder_ioctl(struct file *filp, unsigned int cmd, unsigned long arg)

{

...

case BINDER_SET_CONTEXT_MGR_EXT: {

struct flat_binder_object fbo;

if (copy_from_user(&fbo, ubuf, sizeof(fbo))) {

ret = -EINVAL;

goto err;

}

ret = binder_ioctl_set_ctx_mgr(filp, &fbo);

if (ret)

goto err;

break;

}

case BINDER_SET_CONTEXT_MGR:

ret = binder_ioctl_set_ctx_mgr(filp, NULL);

if (ret)

goto err;

break;

...

...

}

The two commands differ mainly in whether a flat_binder_object is passed; both end up in binder_ioctl_set_ctx_mgr():

drivers/android/binder.c

static int binder_ioctl_set_ctx_mgr(struct file *filp,

struct flat_binder_object *fbo)

{

int ret = 0;

//binder_proc of the calling process, here servicemanager's; it was created earlier by open("/dev/binder")

struct binder_proc *proc = filp->private_data;

struct binder_context *context = proc->context;

struct binder_node *new_node;

kuid_t curr_euid = current_euid(); // effective UID of the current task

mutex_lock(&context->context_mgr_node_lock);

//NULL on the first call; if it is not NULL, this binder context already has a context manager, so bail out

if (context->binder_context_mgr_node) {

pr_err("BINDER_SET_CONTEXT_MGR already set\n");

ret = -EBUSY;

goto out;

}

//Check whether the current process has the SEAndroid permission to register as context manager

ret = security_binder_set_context_mgr(proc->tsk);

if (ret < 0)

goto out;

if (uid_valid(context->binder_context_mgr_uid)) {

//Compare the saved binder_context_mgr_uid with the current one; fail if they differ

if (!uid_eq(context->binder_context_mgr_uid, curr_euid)) {

pr_err("BINDER_SET_CONTEXT_MGR bad uid %d != %d\n",

from_kuid(&init_user_ns, curr_euid),

from_kuid(&init_user_ns,

context->binder_context_mgr_uid));

ret = -EPERM;

goto out;

}

} else {

context->binder_context_mgr_uid = curr_euid;

}

//Create the binder_node object

new_node = binder_new_node(proc, fbo);

if (!new_node) {

ret = -ENOMEM;

goto out;

}

binder_node_lock(new_node);

new_node->local_weak_refs++;

new_node->local_strong_refs++;

new_node->has_strong_ref = 1;

new_node->has_weak_ref = 1;

//Store the new node in context->binder_context_mgr_node; it becomes the binder entity that represents servicemanager

context->binder_context_mgr_node = new_node;

binder_node_unlock(new_node);

binder_put_node(new_node);

out:

mutex_unlock(&context->context_mgr_node_lock);

return ret;

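}

The flow of binder_ioctl_set_ctx_mgr() is also simple:

- First check whether the current process has the SEAndroid permission to register as context manager.

- Then check that the caller's effective UID matches any previously recorded binder_context_mgr_uid; on the first call the UID is recorded.

- If the checks pass, create a dedicated binder_node for the system-wide context manager and increment its strong and weak reference counts.

- Record the new binder_node in context->binder_context_mgr_node. It is the context binder node of the servicemanager process and becomes the binder entity that represents servicemanager.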
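The order of these checks can be modeled in user space as below. This is only a sketch of the decision logic, not kernel code: ContextModel and setContextManager are hypothetical names, the selinuxAllowed flag stands in for security_binder_set_context_mgr(), and returning -EACCES when that check fails is an assumption.

```cpp
#include <cassert>
#include <cerrno>

// User-space model of one binder context (one binder device).
struct ContextModel {
    bool hasManagerNode = false;  // models binder_context_mgr_node != NULL
    bool uidSet = false;          // models uid_valid(binder_context_mgr_uid)
    unsigned managerUid = 0;      // models binder_context_mgr_uid
};

// Returns 0 on success or a negative errno value.
int setContextManager(ContextModel& ctx, unsigned callerEuid, bool selinuxAllowed) {
    if (ctx.hasManagerNode) return -EBUSY;   // a manager is already registered
    if (!selinuxAllowed) return -EACCES;     // security hook refused (assumed code)
    if (ctx.uidSet) {
        if (ctx.managerUid != callerEuid) return -EPERM;  // UID mismatch
    } else {
        ctx.uidSet = true;                   // first caller pins the UID
        ctx.managerUid = callerEuid;
    }
    ctx.hasManagerNode = true;               // node created and recorded
    return 0;
}
```

A second registration attempt on the same context fails with -EBUSY, which matches the early exit at the top of the kernel function.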

3.5 Looper::prepare()

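sp<Looper> looper = Looper::prepare(false /*allowNonCallbacks*/);

The detailed code is not listed here. Looper is built on epoll, which is used to monitor the file descriptors added to it.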
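The epoll pattern underneath can be sketched with plain Linux system calls. This is not the Looper implementation: pollOnceSketch is a hypothetical function, and an eventfd is used here in place of the binder fd so that the example can run on any Linux machine.

```cpp
#include <sys/epoll.h>
#include <sys/eventfd.h>
#include <unistd.h>
#include <cassert>
#include <cstdint>

// Registers an eventfd with epoll, makes it readable, and returns how many
// descriptors epoll_wait() reports as ready. Returns -1 on any failure.
int pollOnceSketch() {
    int epfd = epoll_create1(EPOLL_CLOEXEC);
    if (epfd < 0) return -1;
    int efd = eventfd(0, EFD_NONBLOCK | EFD_CLOEXEC);
    if (efd < 0) {
        close(epfd);
        return -1;
    }

    epoll_event ev{};
    ev.events = EPOLLIN;  // wake up when the fd becomes readable
    ev.data.fd = efd;
    int ready = -1;
    if (epoll_ctl(epfd, EPOLL_CTL_ADD, efd, &ev) == 0) {
        std::uint64_t one = 1;
        if (write(efd, &one, sizeof(one)) == static_cast<ssize_t>(sizeof(one))) {
            epoll_event out{};
            ready = epoll_wait(epfd, &out, 1, 1000 /* timeout in ms */);
            if (ready == 1 && out.data.fd != efd) ready = -1;
        }
    }
    close(efd);
    close(epfd);
    return ready;
}
```

In servicemanager the same idea applies with the binder fd: when the driver has data for the process, the wait returns and the registered callback runs.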

3.6 BinderCallback::setupTo(looper)

frameworks/native/cmds/servicemanager/main.cpp

class BinderCallback : public LooperCallback {

public:

static sp<BinderCallback> setupTo(const sp<Looper>& looper) {

sp<BinderCallback> cb = new BinderCallback;

int binder_fd = -1;

//Get the binder fd for the main thread and queue BC_ENTER_LOOPER for the driver

IPCThreadState::self()->setupPolling(&binder_fd);

LOG_ALWAYS_FATAL_IF(binder_fd < 0, "Failed to setupPolling: %d", binder_fd);

//Flush the commands queued by this thread to the driver; here that should be BC_ENTER_LOOPER

IPCThreadState::self()->flushCommands();

//Add binder_fd to the looper's epoll set and register the callback; handleEvent is called back on events

int ret = looper->addFd(binder_fd,

Looper::POLL_CALLBACK,

Looper::EVENT_INPUT,

cb,

nullptr /*data*/);

LOG_ALWAYS_FATAL_IF(ret != 1, "Failed to add binder FD to Looper");

return cb;

}

//Called when epoll reports an event on the fd

int handleEvent(int /* fd */, int /* events */, void* /* data */) override {

IPCThreadState::self()->handlePolledCommands();

return 1; // Continue receiving callbacks.

}

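};

Every ordinary service, once created, calls ProcessState::startThreadPool() to spawn a main IPC thread, which enters IPCThreadState::joinThreadPool(); further binder threads, each with its own IPCThreadState, are spawned from there as needed. servicemanager does not need additional threads, so it only uses a Looper on the main thread to listen for driver events.

The core job of each IPCThreadState is to listen for and handle messages exchanged with the binder driver. This work is done in getAndExecuteCommand(); see section 5.4.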

3.7 ClientCallbackCallback::setupTo(looper, manager)

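ClientCallbackCallback serves the client callbacks that a service can register through registerClientCallback(), so that the service can be told when it gains or loses clients.

ClientCallbackCallback sets up a timer that fires every 5 s. Rather than a heartbeat, it acts as a periodic poll in which servicemanager re-checks the registered client callbacks.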

3.8 looper->pollAll(-1)

Enter the infinite loop.

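4. ProcessState class

ProcessState manages the "process state": each process that uses binder has exactly one ProcessState instance (the global gProcess). The object is used to:

- initialize the driver device;

- record the driver name and fd;

- record the upper limit on the number of binder threads in the process;

- record the binder context object;

- start binder threads.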

4.1 ProcessState constructor

frameworks/native/libs/binder/ProcessState.cpp

ProcessState::ProcessState(const char *driver)

: mDriverName(String8(driver))

, mDriverFD(open_driver(driver))

, mVMStart(MAP_FAILED)

, mThreadCountLock(PTHREAD_MUTEX_INITIALIZER)

, mThreadCountDecrement(PTHREAD_COND_INITIALIZER)

, mExecutingThreadsCount(0)

, mMaxThreads(DEFAULT_MAX_BINDER_THREADS)

, mStarvationStartTimeMs(0)

, mBinderContextCheckFunc(nullptr)

, mBinderContextUserData(nullptr)

, mThreadPoolStarted(false)

, mThreadPoolSeq(1)

, mCallRestriction(CallRestriction::NONE)

{

// TODO(b/139016109): enforce in build system

#if defined(__ANDROID_APEX__)

LOG_ALWAYS_FATAL("Cannot use libbinder in APEX (only system.img libbinder) since it is not stable.");

#endif

if (mDriverFD >= 0) {

// mmap the binder, providing a chunk of virtual address space to receive transactions.

mVMStart = mmap(nullptr, BINDER_VM_SIZE, PROT_READ, MAP_PRIVATE | MAP_NORESERVE, mDriverFD, 0);

if (mVMStart == MAP_FAILED) {

// *sigh*

ALOGE("Using %s failed: unable to mmap transaction memory.\n", mDriverName.c_str());

close(mDriverFD);

mDriverFD = -1;

mDriverName.clear();

}

}

#ifdef __ANDROID__

LOG_ALWAYS_FATAL_IF(mDriverFD < 0, "Binder driver '%s' could not be opened. Terminating.", driver);

#endif

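}

The constructor mainly does the following:

In the initializer list, it opens the device driver by calling open_driver(); see section 4.3.

If the open succeeds and mDriverFD holds a valid fd, it calls mmap() to map a buffer of BINDER_VM_SIZE bytes, which is used to receive transaction data.

The size can be confirmed from the command line. Assuming the PID of servicemanager is 510,

cat /proc/510/maps shows:

748c323000-748c421000 r--p 00000000 00:1f 4 /dev/binderfs/binder

The range spans 0xfe000 bytes, that is 1 MB minus two 4 KB pages. Do not be surprised that the path is not /dev/binder; that one is just a symbolic link: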

lrwxrwxrwx 1 root root 20 1970-01-01 05:43 binder -> /dev/binderfs/binder

lrwxrwxrwx 1 root root 22 1970-01-01 05:43 hwbinder -> /dev/binderfs/hwbinder

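lrwxrwxrwx 1 root root 22 1970-01-01 05:43 vndbinder -> /dev/binderfs/vndbinder

4.2 ProcessState singleton

ProcessState objects are obtained through self(). Because binder and vndbinder share one code base, a process that wants vndbinder must call initWithDriver() with the device name before the first call to self(). If self() is called first, the singleton is bound to the driver device kDefaultDriver.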

frameworks/native/libs/binder/ProcessState.cpp

#ifdef __ANDROID_VNDK__

const char* kDefaultDriver = "/dev/vndbinder";

#else

const char* kDefaultDriver = "/dev/binder";

#endif

sp<ProcessState> ProcessState::self()

{

Mutex::Autolock _l(gProcessMutex);

if (gProcess != nullptr) {

return gProcess;

}

gProcess = new ProcessState(kDefaultDriver);

return gProcess;

}

4.3 open_driver()

For both the binder and vndbinder devices, the ProcessState constructor calls open_driver() in its initializer list to open and initialize the device.

frameworks/native/libs/binder/ProcessState.cpp

static int open_driver(const char *driver)

{

//Open the device with the open system call

int fd = open(driver, O_RDWR | O_CLOEXEC);

if (fd >= 0) {

int vers = 0;

//If the open succeeded, query the binder version and check that it matches

status_t result = ioctl(fd, BINDER_VERSION, &vers);

if (result == -1) {

ALOGE("Binder ioctl to obtain version failed: %s", strerror(errno));

close(fd);

fd = -1;

}

//On a version mismatch the fd is closed and set to -1, but the function does not return early

if (result != 0 || vers != BINDER_CURRENT_PROTOCOL_VERSION) {

ALOGE("Binder driver protocol(%d) does not match user space protocol(%d)! ioctl() return value: %d",

vers, BINDER_CURRENT_PROTOCOL_VERSION, result);

close(fd);

fd = -1;

}

size_t maxThreads = DEFAULT_MAX_BINDER_THREADS;

//Tell the driver the maximum number of threads (this ioctl fails if fd was set to -1 above)

result = ioctl(fd, BINDER_SET_MAX_THREADS, &maxThreads);

if (result == -1) {

ALOGE("Binder ioctl to set max threads failed: %s", strerror(errno));

}

} else {

ALOGW("Opening '%s' failed: %s\n", driver, strerror(errno));

}

return fd;

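}

Note that the default maximum number of binder threads for each process is DEFAULT_MAX_BINDER_THREADS:

frameworks/native/libs/binder/ProcessState.cpp

#define DEFAULT_MAX_BINDER_THREADS 15

servicemanager sets the value to 0 in main(), which means it only uses its main thread. Ordinary services are limited to at most 15 binder threads. The details are left for the later driver analysis.

As a side note, in the system_server process the maximum number of binder threads is 31: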

frameworks/base/services/java/com/android/server/SystemServer.java

private static final int sMaxBinderThreads = 31;

private void run() {

...

BinderInternal.setMaxThreads(sMaxBinderThreads);

...

}

4.4 makeBinderThreadName()

frameworks/native/libs/binder/ProcessState.cpp

String8 ProcessState::makeBinderThreadName() {

int32_t s = android_atomic_add(1, &mThreadPoolSeq);

pid_t pid = getpid();

String8 name;

name.appendFormat("Binder:%d_%X", pid, s);

return name;

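}

This function generates binder thread names. The variable mThreadPoolSeq supplies the sequence number, so a binder thread name looks like Binder:1234_F.

The maximum number of threads is bounded, as described in the previous section: DEFAULT_MAX_BINDER_THREADS (15 by default).

The limit can also be changed with setThreadPoolMaxThreadCount(); servicemanager uses it to set the maximum to 0, see section 3.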
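The naming scheme is easy to reproduce in isolation. The sketch below uses std::atomic and snprintf instead of android_atomic_add and String8; makeThreadNameSketch is a hypothetical helper, and the pid is passed in as a parameter so the result is predictable.

```cpp
#include <atomic>
#include <cassert>
#include <cstdio>
#include <string>

// Formats "Binder:<pid>_<sequence in upper-case hex>" and advances the sequence.
std::string makeThreadNameSketch(int pid, std::atomic<int>& seq) {
    const int s = seq.fetch_add(1);  // value before the increment
    char buf[32];
    std::snprintf(buf, sizeof(buf), "Binder:%d_%X", pid, static_cast<unsigned>(s));
    return std::string(buf);
}
```

Because the sequence is printed in hexadecimal, the fifteenth thread of process 1234 is named Binder:1234_F rather than Binder:1234_15.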

4.4.1 setThreadPoolMaxThreadCount()

frameworks/native/libs/binder/ProcessState.cpp

status_t ProcessState::setThreadPoolMaxThreadCount(size_t maxThreads) {

status_t result = NO_ERROR;

if (ioctl(mDriverFD, BINDER_SET_MAX_THREADS, &maxThreads) != -1) {

mMaxThreads = maxThreads;

} else {

result = -errno;

ALOGE("Binder ioctl to set max threads failed: %s", strerror(-result));

}

return result;

}

As section 4.3 explained, open_driver() runs when ProcessState is constructed and reports the default maximum number of binder threads, 15, to the binder driver.

A process can set its own maximum through ProcessState. For example, system_server sets it to 31 and servicemanager sets it to 0.

4.5 startThreadPool()

frameworks/native/libs/binder/ProcessState.cpp

void ProcessState::startThreadPool()

{

AutoMutex _l(mLock);

if (!mThreadPoolStarted) {

mThreadPoolStarted = true;

spawnPooledThread(true);

}

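}

Every process that serves binder calls with a thread pool needs to call this function; servicemanager, which only uses its main thread, is the exception.

It is the starting point of binder communication for such a process, for roughly two reasons:

- Each process keeps one ProcessState singleton guarded by a state flag, mThreadPoolStarted. Every later binder thread is spawned through spawnPooledThread(), which does nothing unless mThreadPoolStarted is true.

- From the binder driver's point of view, each process needs one main binder thread; all other binder threads are non-main threads.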

4.6 spawnPooledThread()

frameworks/native/libs/binder/ProcessState.cpp

void ProcessState::spawnPooledThread(bool isMain)

{

if (mThreadPoolStarted) {

String8 name = makeBinderThreadName();

ALOGV("Spawning new pooled thread, name=%s\n", name.string());

sp<Thread> t = new PoolThread(isMain);

t->run(name.string());

}

}

This function spawns a new binder thread. PoolThread inherits from Thread, and run() is given the binder thread name, so a thread stack dump shows which binder thread it is. Each binder thread is managed through an IPCThreadState:

frameworks/native/libs/binder/ProcessState.cpp

class PoolThread : public Thread

{

public:

explicit PoolThread(bool isMain)

: mIsMain(isMain)

{

}

protected:

virtual bool threadLoop()

{

IPCThreadState::self()->joinThreadPool(mIsMain);

return false;

}

const bool mIsMain;

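};

5. IPCThreadState class

Just as ProcessState records the state of the process, IPCThreadState records the state of one thread, and a process can have many such threads. After each BINDER_WRITE_READ call the driver decides whether another thread needs to be spawned. Every PoolThread that is created (see ProcessState) is paired with an IPCThreadState that manages it, and all operations on a binder thread go through its IPCThreadState.

5.1 IPCThreadState constructor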

frameworks/native/libs/binder/IPCThreadState.cpp

IPCThreadState::IPCThreadState()

: mProcess(ProcessState::self()),

mServingStackPointer(nullptr),

mWorkSource(kUnsetWorkSource),

mPropagateWorkSource(false),

mStrictModePolicy(0),

mLastTransactionBinderFlags(0),

mCallRestriction(mProcess->mCallRestriction)

{

pthread_setspecific(gTLS, this);

clearCaller();

mIn.setDataCapacity(256);

mOut.setDataCapacity(256);

}

5.2 self()

frameworks/native/libs/binder/IPCThreadState.cpp

IPCThreadState* IPCThreadState::self()

{

if (gHaveTLS.load(std::memory_order_acquire)) {

restart:

const pthread_key_t k = gTLS;

IPCThreadState* st = (IPCThreadState*)pthread_getspecific(k);

if (st) return st;

return new IPCThreadState;

}

// Racey, heuristic test for simultaneous shutdown.

if (gShutdown.load(std::memory_order_relaxed)) {

ALOGW("Calling IPCThreadState::self() during shutdown is dangerous, expect a crash.\n");

return nullptr;

}

pthread_mutex_lock(&gTLSMutex);

if (!gHaveTLS.load(std::memory_order_relaxed)) {

int key_create_value = pthread_key_create(&gTLS, threadDestructor);

if (key_create_value != 0) {

pthread_mutex_unlock(&gTLSMutex);

ALOGW("IPCThreadState::self() unable to create TLS key, expect a crash: %s\n",

strerror(key_create_value));

return nullptr;

}

gHaveTLS.store(true, std::memory_order_release);

}

pthread_mutex_unlock(&gTLSMutex);

goto restart;

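}

Each thread calls pthread_getspecific() to check whether an IPCThreadState already exists in its thread-local storage (TLS). If one exists it is returned; otherwise a new one is created.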
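The same "one state object per thread, created on first use" idea can be shown in a few lines of standard C++. Note the swap: this sketch uses the C++ thread_local keyword instead of pthread keys, so it has none of the key-creation and shutdown handling of the real function. ThreadStateSketch and threadStateSelf are hypothetical names.

```cpp
#include <cassert>

// Hypothetical per-thread state, standing in for IPCThreadState.
struct ThreadStateSketch {
    int pendingCommands = 0;
};

// Returns the calling thread's own instance. The object is constructed the
// first time a given thread reaches this line and lives until that thread exits.
ThreadStateSketch* threadStateSelf() {
    thread_local ThreadStateSketch state;
    return &state;
}
```

Repeated calls on one thread return the same pointer, while another thread calling the function would get a separate object.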

5.3 setupPolling()

frameworks/native/libs/binder/IPCThreadState.cpp

int IPCThreadState::setupPolling(int* fd)

{

if (mProcess->mDriverFD < 0) {

return -EBADF;

}

mOut.writeInt32(BC_ENTER_LOOPER);

*fd = mProcess->mDriverFD;

return 0;

}

It does two things: it queues BC_ENTER_LOOPER in mOut to tell the driver that this thread is entering the loop, and it returns the driver fd.

5.4 getAndExecuteCommand()

frameworks/native/libs/binder/IPCThreadState.cpp

status_t IPCThreadState::getAndExecuteCommand()

{

status_t result;

int32_t cmd;

//step1: talk to the binder driver and wait for it to return

result = talkWithDriver();

if (result >= NO_ERROR) {

size_t IN = mIn.dataAvail();

if (IN < sizeof(int32_t)) return result;

//step2: parse the reply command from the binder driver

cmd = mIn.readInt32();

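//step3: track the number of threads currently executing binder commands

//system_server feeds its watchdog based on this: when the executing thread count reaches the maximum, the monitor blocks until a thread becomes available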

pthread_mutex_lock(&mProcess->mThreadCountLock);

mProcess->mExecutingThreadsCount++;

if (mProcess->mExecutingThreadsCount >= mProcess->mMaxThreads &&

mProcess->mStarvationStartTimeMs == 0) {

mProcess->mStarvationStartTimeMs = uptimeMillis();

}

pthread_mutex_unlock(&mProcess->mThreadCountLock);

//step4: the core user-space handler of binder communication; dispatch on the reply command

result = executeCommand(cmd);

//step5: after executeCommand() each thread decrements the count and always broadcasts on the condition variable

pthread_mutex_lock(&mProcess->mThreadCountLock);

mProcess->mExecutingThreadsCount--;

if (mProcess->mExecutingThreadsCount < mProcess->mMaxThreads &&

mProcess->mStarvationStartTimeMs != 0) {

int64_t starvationTimeMs = uptimeMillis() - mProcess->mStarvationStartTimeMs;

if (starvationTimeMs > 100) {

ALOGE("binder thread pool (%zu threads) starved for %" PRId64 " ms",

mProcess->mMaxThreads, starvationTimeMs);

}

mProcess->mStarvationStartTimeMs = 0;

}

pthread_cond_broadcast(&mProcess->mThreadCountDecrement);

pthread_mutex_unlock(&mProcess->mThreadCountLock);

}

return result;

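}

The logic is fairly simple and has three main parts:

- talkWithDriver() talks to the binder driver and the return value is checked for errors;

- the executing thread count is updated, which system_server uses to feed its watchdog;

- executeCommand() does the core processing.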
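The thread-count bookkeeping in the second step can be modeled on its own. The sketch below keeps the same comparisons as the listing but leaves out the mutex and condition variable, and it takes the clock value as a parameter instead of calling uptimeMillis(). StarvationTrackerSketch and its method names are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Models mExecutingThreadsCount / mStarvationStartTimeMs without locking.
class StarvationTrackerSketch {
public:
    explicit StarvationTrackerSketch(std::size_t maxThreads) : mMaxThreads(maxThreads) {}

    // Call before executing a command; nowMs is a monotonic clock in ms.
    void onCommandStart(std::int64_t nowMs) {
        ++mExecuting;
        if (mExecuting >= mMaxThreads && mStarvationStartMs == 0) {
            mStarvationStartMs = nowMs;  // pool just became saturated
        }
    }

    // Call after executing a command. Returns how long the pool was
    // saturated if that period ends now, otherwise 0.
    std::int64_t onCommandEnd(std::int64_t nowMs) {
        --mExecuting;
        std::int64_t starvedMs = 0;
        if (mExecuting < mMaxThreads && mStarvationStartMs != 0) {
            starvedMs = nowMs - mStarvationStartMs;
            mStarvationStartMs = 0;
        }
        return starvedMs;
    }

private:
    std::size_t mMaxThreads;
    std::size_t mExecuting = 0;
    std::int64_t mStarvationStartMs = 0;
};
```

In the real function a saturation period longer than 100 ms is logged as an error; the sketch only returns the duration and leaves that decision to the caller.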

The two key functions are fairly complex; a brief analysis of each follows.

5.4.1 talkWithDriver()

frameworks/native/libs/binder/IPCThreadState.cpp

status_t IPCThreadState::talkWithDriver(bool doReceive)

{

if (mProcess->mDriverFD < 0) {

return -EBADF;

}

binder_write_read bwr;

// Is the read buffer empty?

const bool needRead = mIn.dataPosition() >= mIn.dataSize();

// We don't want to write anything if we are still reading

// from data left in the input buffer and the caller

// has requested to read the next data.

const size_t outAvail = (!doReceive || needRead) ? mOut.dataSize() : 0;

bwr.write_size = outAvail;

bwr.write_buffer = (uintptr_t)mOut.data();

// This is what we'll read.

if (doReceive && needRead) {

bwr.read_size = mIn.dataCapacity();

bwr.read_buffer = (uintptr_t)mIn.data();

} else {

bwr.read_size = 0;

bwr.read_buffer = 0;

}

...

// Return immediately if there is nothing to do.

if ((bwr.write_size == 0) && (bwr.read_size == 0)) return NO_ERROR;

bwr.write_consumed = 0;

bwr.read_consumed = 0;

status_t err;

do {

if (ioctl(mProcess->mDriverFD, BINDER_WRITE_READ, &bwr) >= 0)

err = NO_ERROR;

else

err = -errno;

if (mProcess->mDriverFD < 0) {

err = -EBADF;

}

} while (err == -EINTR);

if (err >= NO_ERROR) {

if (bwr.write_consumed > 0) {

if (bwr.write_consumed < mOut.dataSize())

LOG_ALWAYS_FATAL(...);

else {

mOut.setDataSize(0);

processPostWriteDerefs();

}

}

if (bwr.read_consumed > 0) {

mIn.setDataSize(bwr.read_consumed);

mIn.setDataPosition(0);

}

return NO_ERROR;

}

return err;

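}

IPCThreadState holds two buffers, mIn and mOut: mIn receives data read from the driver and mOut holds data to be written to the driver.

The core is the do...while loop, which talks to the driver with the BINDER_WRITE_READ command. The ioctl is retried while it fails with EINTR; otherwise the loop exits after a single pass.

The driver-side handling of BINDER_WRITE_READ will be analyzed in detail later.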
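The retry-on-EINTR loop is a general pattern for blocking system calls and can be isolated as follows. retryOnEintr is a hypothetical helper, and the operation is passed in as a callable so the sketch does not need a binder device.

```cpp
#include <cassert>
#include <cerrno>
#include <functional>

// Calls op until it succeeds or fails with an error other than EINTR.
// op follows the system call convention: >= 0 on success, -1 with errno set
// on failure. Returns 0 on success or -errno on failure.
int retryOnEintr(const std::function<int()>& op) {
    int err;
    do {
        err = (op() >= 0) ? 0 : -errno;
    } while (err == -EINTR);  // interrupted by a signal: try again
    return err;
}
```

An operation that is interrupted twice and then succeeds is therefore called three times, and the caller only sees the final result.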

5.4.2 executeCommand()

This is the core of a binder thread: it processes the result of talkWithDriver():

frameworks/native/libs/binder/IPCThreadState.cpp

status_t IPCThreadState::executeCommand(int32_t cmd)

{

BBinder* obj;

RefBase::weakref_type* refs;

status_t result = NO_ERROR;

switch ((uint32_t)cmd) {

case BR_ERROR:

result = mIn.readInt32();

break;

case BR_OK:

break;

case BR_ACQUIRE:

...

break;

case BR_RELEASE:

...

break;

case BR_INCREFS:

...

break;

case BR_DECREFS:

...

break;

case BR_ATTEMPT_ACQUIRE:

...

break;

case BR_TRANSACTION_SEC_CTX:

case BR_TRANSACTION:

...

break;

case BR_DEAD_BINDER:

...

case BR_CLEAR_DEATH_NOTIFICATION_DONE:

...

case BR_FINISHED:

result = TIMED_OUT;

break;

case BR_NOOP:

break;

case BR_SPAWN_LOOPER:

mProcess->spawnPooledThread(false);

break;

default:

ALOGE("*** BAD COMMAND %d received from Binder driver\n", cmd);

result = UNKNOWN_ERROR;

break;

}

if (result != NO_ERROR) {

mLastError = result;

}

return result;

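}

This function is not analyzed here; it will be dissected later in the native client-server article.

This completes the walk through the servicemanager startup flow. The basic steps are:

- Choose the binder or vndbinder device from the command-line argument;

- Open and initialize the device driver through ProcessState::initWithDriver(), and set the maximum number of binder threads for the process to 0;

- Instantiate ServiceManager and register it, as a special service, in ServiceManager's mNameToService map;

- Store the special context object in IPCThreadState and notify the driver of the context manager through ProcessState;

- Through BinderCallback, tell the driver that servicemanager is ready by sending BC_ENTER_LOOPER;

- Add the driver fd to the Looper's epoll set, with handleEvent() as the callback;

- In handleEvent(), handle the polled commands and process all incoming messages.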