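Getting Started with Artificial Intelligence from Scratch

Getting Started with Artificial Intelligence from Scratch: Machine Learning

I. Machine Learning: Linear Regression

1. Introduction to machine learning

- Machine learning is a way of finding patterns in data, building relationships from them, and using those relationships to solve problems. The model learns from data and keeps optimizing and upgrading itself.

- Categories of machine learning:

  - Supervised learning: the training data includes the correct results. Applications include face recognition, speech translation, and medical diagnosis.

  - Unsupervised learning: the training data does not include the correct results. Applications include news clustering.

  - Semi-supervised learning: the training data includes a small number of correct results.

  - Reinforcement learning: the model learns from the reward or penalty it receives for each result and optimizes accordingly. Applications include AlphaGo.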

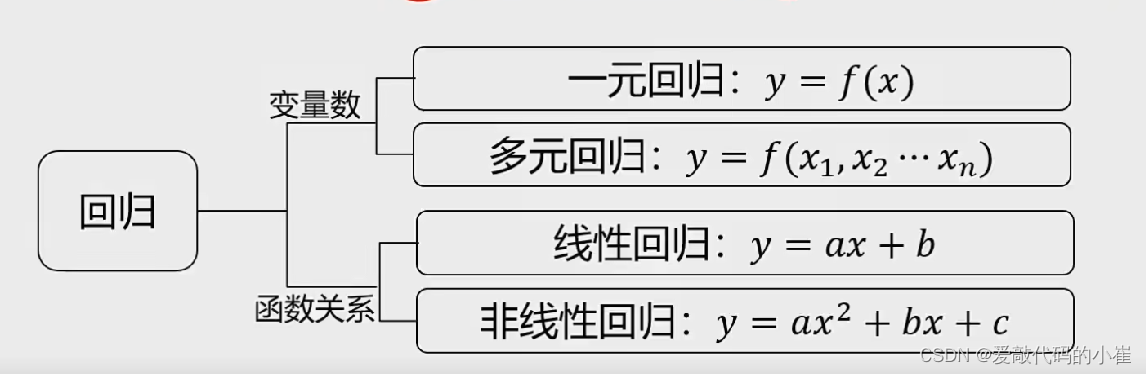

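2. Linear regression

- Linear regression uses data to determine the quantitative relationship between two or more interdependent variables.

Function expression (single input variable, with coefficient $a$ and intercept $b$):

$$y = ax + b$$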
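As a rough illustration of how the two parameters of a straight-line model y = a*x + b can be determined from data, the sketch below implements ordinary least squares in pure Python. The helper name `fit_line` and the sample data are made up for this example; the hands-on sections use scikit-learn's LinearRegression instead.

```python
# Minimal sketch: ordinary least squares for y = a*x + b (pure Python).
# fit_line and the data below are hypothetical, for illustration only.

def fit_line(xs, ys):
    """Return slope a and intercept b that minimise the sum of squared errors."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    # slope = covariance(x, y) / variance(x)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    a = sxy / sxx
    b = y_mean - a * x_mean
    return a, b

xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [3.0, 5.0, 7.0, 9.0, 11.0]  # generated from y = 2x + 1
a, b = fit_line(xs, ys)
print(a, b)          # slope and intercept
print(a * 3.5 + b)   # prediction for x = 3.5
```

Because the sample points lie exactly on a line, the fit recovers that line; with noisy data the same formulas give the best-fitting line in the least-squares sense.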

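3. Linear regression in practice

scikit-learn is a Python machine learning toolkit. It is built on numerical libraries such as NumPy and is commonly used together with pandas and Matplotlib to apply algorithms efficiently.

Task:

Using the data in generated_data.csv, build a linear regression model, predict the y value for x = 3.5, and evaluate the model.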

#load the data

import pandas as pd

data = pd.read_csv('generated_data.csv')

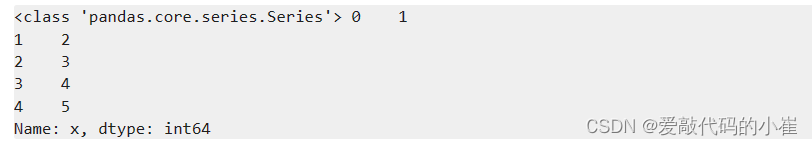

# assign x and y from the data

x = data.loc[:,'x']

y = data['y']

print(type(x),x.head())

#visualize the data

from matplotlib import pyplot as plt

plt.figure(figsize=(4,4))

plt.scatter(x,y)

plt.show()

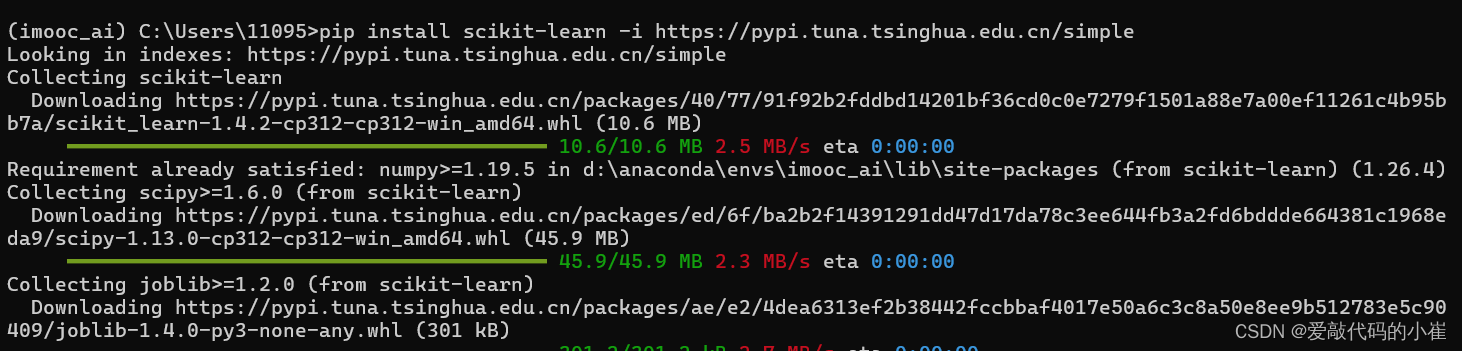

scikit-learn must be installed before sklearn can be imported.

In a terminal (command prompt), run: pip install scikit-learn -i https://pypi.tuna.tsinghua.edu.cn/simple

#set up a linear regression model

from sklearn.linear_model import LinearRegression

lr_model = LinearRegression()

# reshape x and y from 1-D to 2-D (fit expects x with shape (n_samples, n_features))

import numpy as np

x = np.array(x).reshape(-1,1)

y = np.array(y).reshape(-1,1)

# train (fit) the model to determine its parameters

lr_model.fit(x,y)

# check whether the predictions agree with y

y_predict = lr_model.predict(x)

print(y_predict)

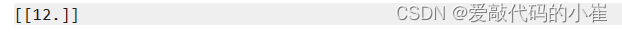

# predict the y value for x = 3.5

y_3= lr_model.predict([[3.5]])

print(y_3)

# inspect the coefficient a and the intercept b

a = lr_model.coef_

b = lr_model.intercept_

print(a,b)

sklearn.metrics provides a wide range of evaluation metrics; choose the one that suits the task type and requirements to assess model performance.

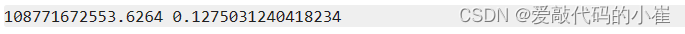

# compute the mean squared error (MSE) and the coefficient of determination (R squared)

from sklearn.metrics import mean_squared_error,r2_score

MSE = mean_squared_error(y,y_predict)

r2 = r2_score(y,y_predict)

print(MSE,r2)
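To make the two scores less of a black box, the sketch below computes MSE and R squared by hand in pure Python. The function names `mse_by_hand` and `r2_by_hand` and the sample values are assumptions made for this illustration; in the tutorial itself the values come from sklearn.metrics.

```python
# Minimal sketch: MSE and R^2 computed from their definitions.
# mse_by_hand / r2_by_hand and the sample values are hypothetical.

def mse_by_hand(y_true, y_pred):
    """Mean of the squared differences between targets and predictions."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def r2_by_hand(y_true, y_pred):
    """1 - (residual sum of squares) / (total sum of squares)."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot

y_true = [1.0, 2.0, 3.0, 4.0]
y_hat = [1.5, 2.0, 2.5, 4.0]
print(mse_by_hand(y_true, y_hat))
print(r2_by_hand(y_true, y_hat))
```

A smaller MSE and an R squared closer to 1 both indicate a better fit.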

4. Linear regression with sklearn in Python

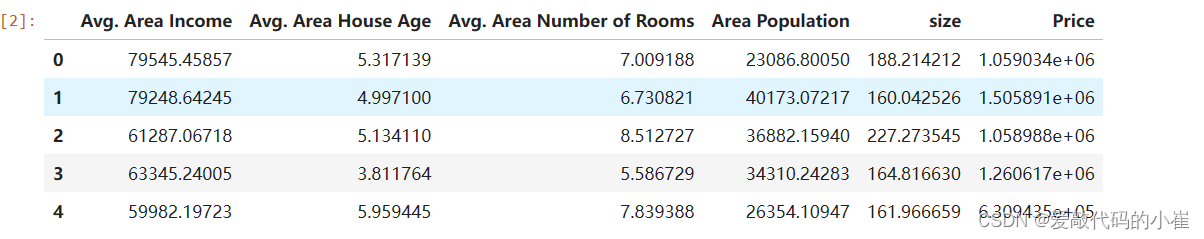

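Task:

Using the data in usa_housing_price.csv, build linear regression models to predict a reasonable house price:

1. Build a single-factor model with size (area) as the input variable, evaluate it, and visualize the regression predictions.

2. Build a multi-factor model with income, house age, number of rooms, population, and size as input variables, and evaluate it.

3. Predict a reasonable price for Income = 65000, House Age = 5, Number of Rooms = 5, Population = 30000, size = 200.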

#load the data

import pandas as pd

data = pd.read_csv('usa_housing_price.csv')

data.head()

from matplotlib import pyplot as plt

plt.figure(figsize = (15,10))

fig1 = plt.subplot(231)

plt.scatter(data['Avg. Area Income'],data['Price'])

plt.title('Price vs Income')

fig2 = plt.subplot(232)

plt.scatter(data.loc[:,'Avg. Area House Age'],data.loc[:,'Price'])

plt.title('Price vs House Age')

fig3 = plt.subplot(233)

plt.scatter(data.loc[:,'Avg. Area Number of Rooms'],data.loc[:,'Price'])

plt.title('Price vs Rooms')

fig4 = plt.subplot(234)

plt.scatter(data['Area Population'],data['Price'])

plt.title('Price vs Area Population')

fig5 = plt.subplot(235)

plt.scatter(data['size'],data['Price'])

plt.title('Price vs size')

plt.show()

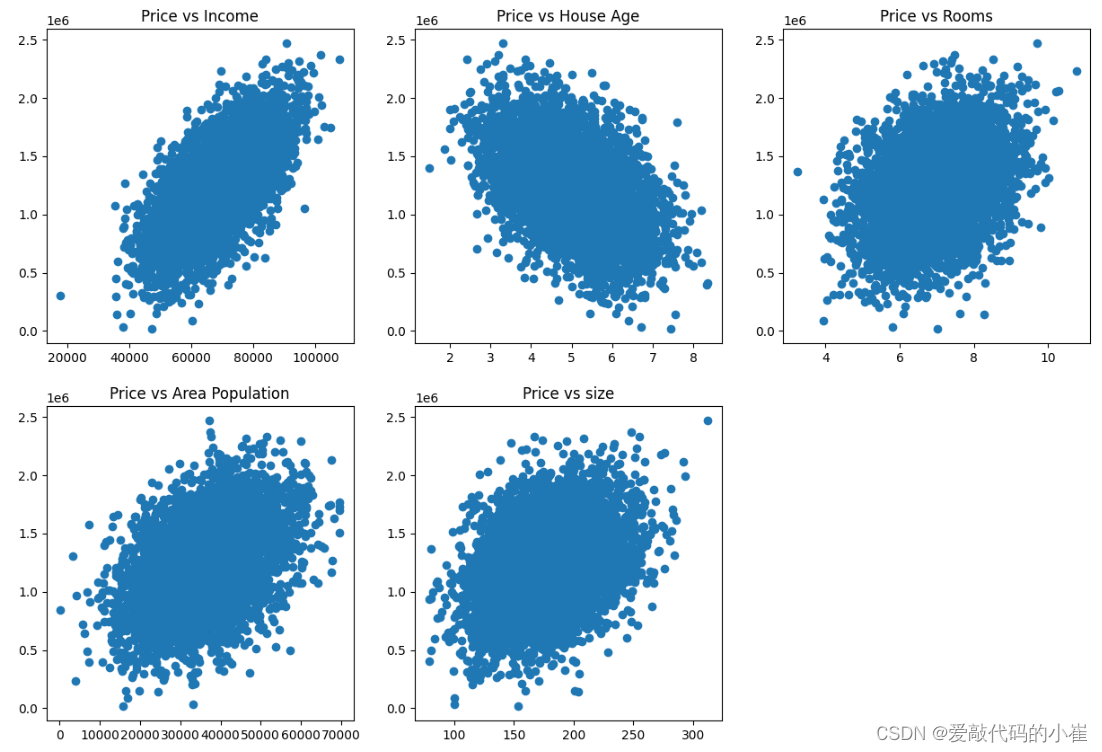

#define x and y

import numpy as np

x = data.loc[:,'size']

y = data.loc[:,'Price']

x = np.array(x).reshape(-1,1)

y = np.array(y).reshape(-1,1)

print(type(x),x,x.shape)

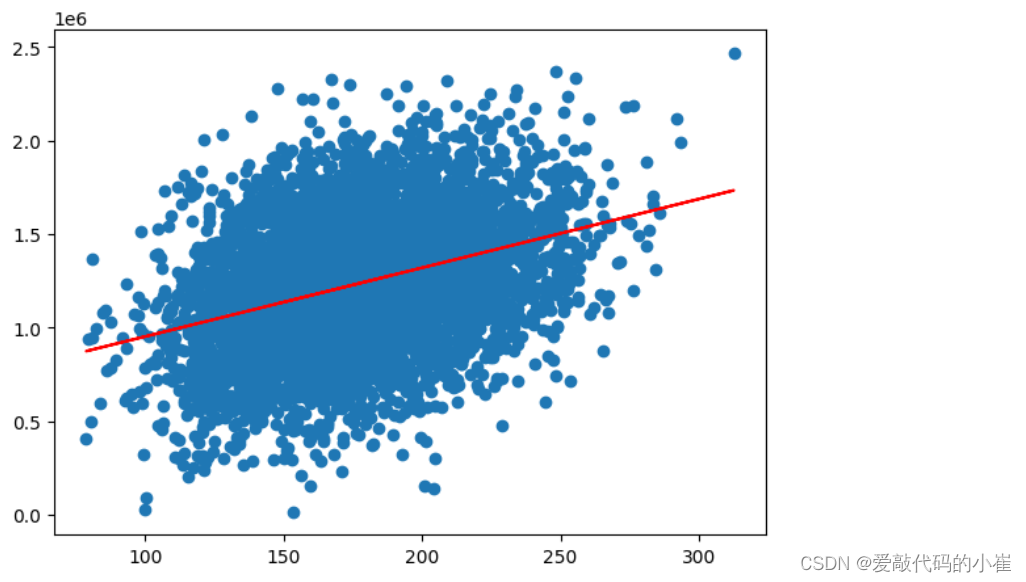

Single-factor model

#set up the linear regression model

from sklearn.linear_model import LinearRegression

LR1 = LinearRegression()

#train the model

LR1.fit(x,y)

#calculate the price vs size

y_predict_1 = LR1.predict(x)

print(y_predict_1)

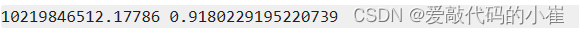

#evaluate the model

from sklearn.metrics import mean_squared_error,r2_score

MSE = mean_squared_error(y,y_predict_1)

r2 = r2_score(y,y_predict_1)

print(MSE,r2)

fig6 = plt.figure(figsize=(7,5))

plt.scatter(x,y)

plt.plot(x,y_predict_1,'r')

plt.show()

Multi-factor model

DataFrame.drop removes rows by default; pass axis = 1 to drop columns.

#define x_multi

x_multi = data.drop(['Price'],axis = 1)

print(x_multi.shape)

#set up linear model

LR_multi = LinearRegression()

#train the model

LR_multi.fit(x_multi, y)

#make prediction

y_multi_predict = LR_multi.predict(x_multi)

print(y_multi_predict)

MSE_multi = mean_squared_error(y,y_multi_predict)

r2_multi = r2_score(y,y_multi_predict)

print(MSE_multi,r2_multi)

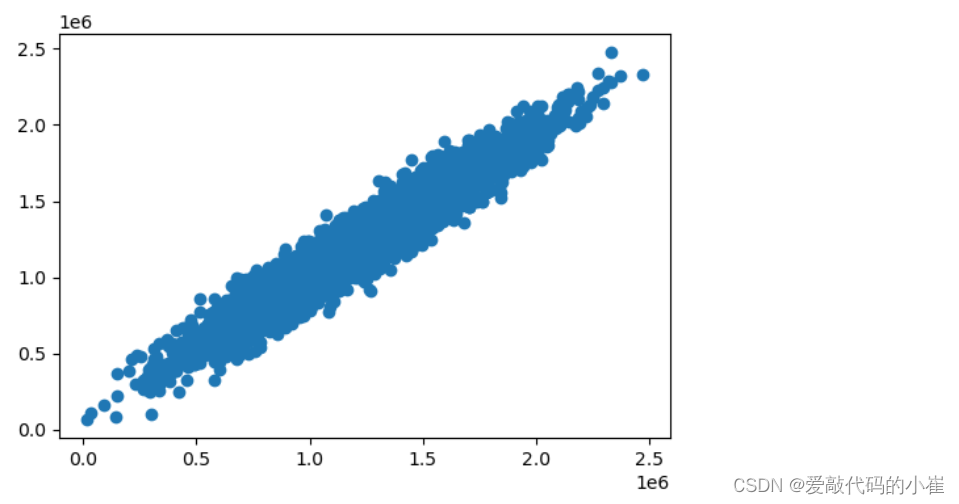

With several input factors, y cannot be plotted against a single x; instead, plot y against y_multi_predict.

fig7 = plt.figure(figsize = (6,4))

plt.scatter(y,y_multi_predict)

plt.show()

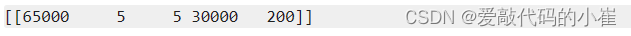

x_test = [65000,5,5,30000,200]

x_test = np.array(x_test).reshape(1,-1)

print(x_test)

y_test_predict = LR_multi.predict(x_test)

print(y_test_predict)

II. Machine Learning: Logistic Regression

1. Classification

Classification decides which known class a new sample belongs to, based on certain features of the known samples.

Classification methods: logistic regression, k-nearest neighbors (KNN), decision trees, neural networks.

2. Logistic regression

A model for classification problems. From the features or attributes of a sample it computes the probability $P(x)$ that the sample belongs to a class, and assigns the class according to that probability. Main use case: binary classification.

Solving logistic regression:

1. Find the class boundary from the training samples:

$$p(x) = \frac{1}{1 + e^{-g(x)}}$$

$$g(x) = \theta_0 + \theta_1 X_1 + \theta_2 X_2 + \dots$$

The training samples determine the parameters $\theta_0, \theta_1, \theta_2$.

- Logistic regression is solved by minimizing the loss function $J$:

$$J_i = \begin{cases} -\log(p(x_i)), & \text{if } y_i = 1 \\ -\log(1 - p(x_i)), & \text{if } y_i = 0 \end{cases}$$

$$J = \frac{1}{m}\sum_{i=1}^{m} J_i = -\frac{1}{m}\left[\sum_{i=1}^{m}\big(y_i\log(p(x_i)) + (1 - y_i)\log(1 - p(x_i))\big)\right]$$
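The formulas above can be checked numerically. The sketch below evaluates the sigmoid probability p(x) and the average loss J for a one-feature model in pure Python. The parameter values in `thetas` and the four samples are made up for illustration; they are not fitted to any data set in this tutorial.

```python
import math

# Minimal sketch: sigmoid probability and the logistic (cross-entropy) loss J.
# thetas, xs and ys are hypothetical values chosen for illustration.

def sigmoid(g):
    """p = 1 / (1 + e^(-g))"""
    return 1.0 / (1.0 + math.exp(-g))

def log_loss(ys, ps):
    """J = -(1/m) * sum(y*log(p) + (1-y)*log(1-p))"""
    m = len(ys)
    return -sum(y * math.log(p) + (1 - y) * math.log(1 - p) for y, p in zip(ys, ps)) / m

thetas = (-4.0, 1.0)           # theta0, theta1 of g(x) = theta0 + theta1 * x
xs = [2.0, 3.0, 5.0, 6.0]
ys = [0, 0, 1, 1]
ps = [sigmoid(thetas[0] + thetas[1] * x) for x in xs]
loss = log_loss(ys, ps)
print(ps)
print(loss)
```

Samples on the correct side of the boundary g(x) = 0 contribute a small loss; confident wrong predictions are penalized heavily because log(p) tends to minus infinity as p approaches 0.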

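3. Hands-on (I): exam pass prediction

Task:

1. Using examdata.csv, build a logistic regression model and evaluate it.

2. Predict whether a student with Exam1 = 75 and Exam2 = 60 will pass Exam3.

3. Build a second-order boundary function and repeat tasks 1 and 2.

Load the examdata.csv data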

#load the data

import pandas as pd

import numpy as np

data = pd.read_csv('examdata.csv')

data.head()

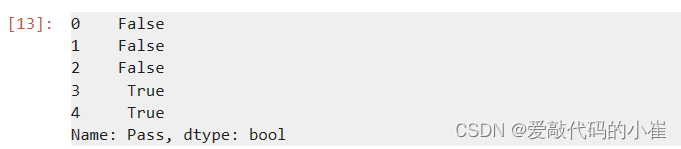

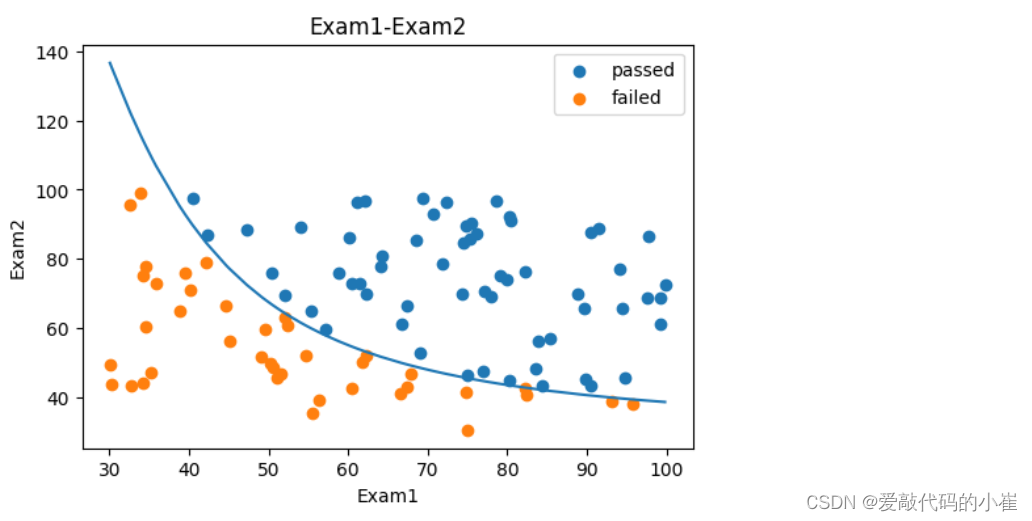

#visualize the data

from matplotlib import pyplot as plt

fig1 = plt.figure(figsize = (6,4))

plt.scatter(data['Exam1'],data['Exam2'])

plt.title('Exam1-Exam2')

plt.xlabel('Exam1')

plt.ylabel('Exam2')

plt.show()

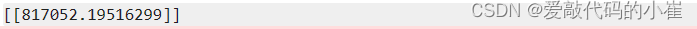

#add label mask

mask = data.loc[:,'Pass'] == 1

mask.head()

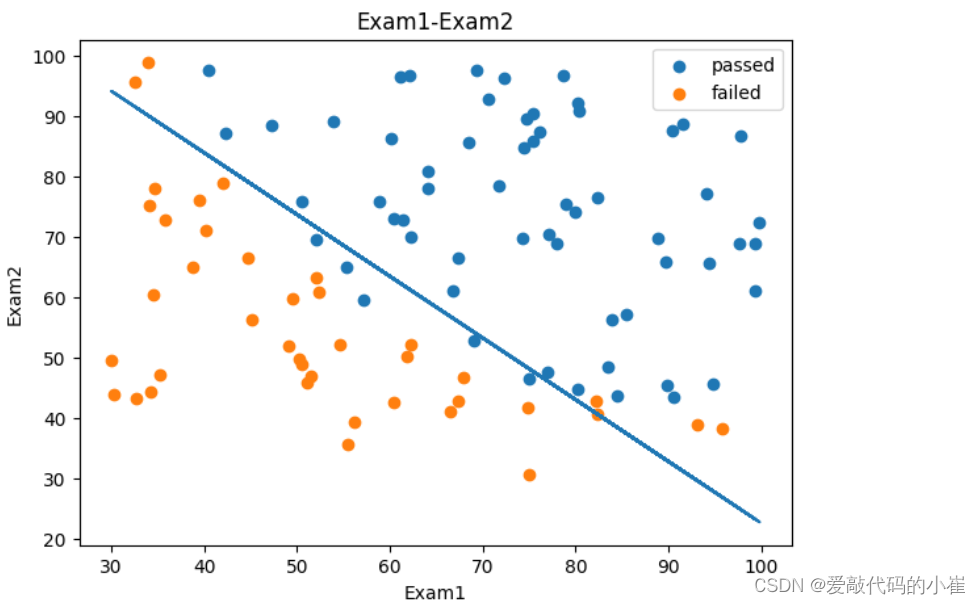

Visualize the labeled data. legend() displays the legend names in a figure, which helps readers tell apart different data sources or classes.

fig2 = plt.figure(figsize = (6,4))

passed = plt.scatter(data['Exam1'][mask],data['Exam2'][mask])

failed = plt.scatter(data['Exam1'][~mask],data['Exam2'][~mask])

plt.title('Exam1-Exam2')

plt.xlabel('Exam1')

plt.ylabel('Exam2')

plt.legend((passed,failed),('passed','failed'))

plt.show()

#define x y

x = data.drop(['Pass'],axis = 1)

y = data.loc[:,'Pass']

print(x.shape,y.shape)

#establish the model and train it

from sklearn.linear_model import LogisticRegression

LR = LogisticRegression()

LR.fit(x,y)

#show the predicted result

y_predict=LR.predict(x)

print(y_predict)

#evaluate model accuracy

from sklearn.metrics import accuracy_score

accuracy_score_1 = accuracy_score(y,y_predict)

print(accuracy_score_1)

#predict whether a student with Exam1 = 75 and Exam2 = 60 passes Exam3

x_test = [75,60]

x_test = np.array(x_test).reshape(1,-1)

y_test_predict = LR.predict(x_test)

print('passed' if y_test_predict[0] == 1 else 'failed')

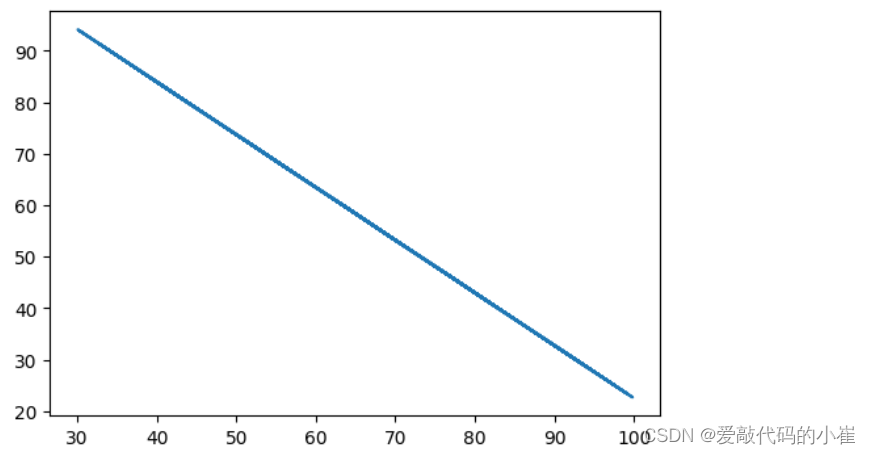

Boundary function: $\theta_0 + \theta_1 X_1 + \theta_2 X_2 = 0$

Read the boundary parameters $\theta_0, \theta_1, \theta_2$ from the model, then solve the boundary equation for $X_2$: $X_2 = -(\theta_0 + \theta_1 X_1)/\theta_2$

theta0 = LR.intercept_

theta1,theta2 = LR.coef_[0][0],LR.coef_[0][1]

print(theta0,theta1,theta2)

x1 = data.loc[:,'Exam1']

print(type(x1))

x2 = data.loc[:,'Exam2']

x2_new = -(theta0 + theta1*x1)/theta2

#plot x1 against the boundary values x2_new

fig3 = plt.figure(figsize = (6,4))

plt.plot(x1,x2_new)

plt.show()

#scatter plot of Exam1 vs Exam2 together with the predicted boundary

fig4 = plt.figure(figsize = (7,5))

passed = plt.scatter(data['Exam1'][mask],data['Exam2'][mask])

failed = plt.scatter(data['Exam1'][~mask],data['Exam2'][~mask])

plt.plot(x1,x2_new)

plt.title('Exam1-Exam2')

plt.xlabel('Exam1')

plt.ylabel('Exam2')

plt.legend((passed,failed),('passed','failed'))

plt.show()

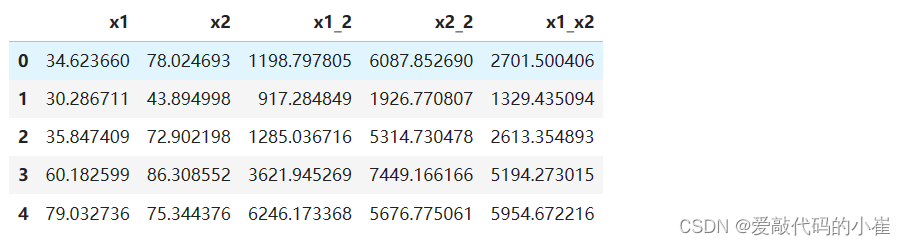

Use a second-order (quadratic) boundary function, which can separate the data more accurately

#create new data

x1_2 = x1 * x1

x2_2 = x2 * x2

x1_x2 = x1 * x2

x_new = {

'x1':x1, 'x2':x2, 'x1_2':x1_2, 'x2_2':x2_2, 'x1_x2':x1_x2}

x_new = pd.DataFrame(x_new)

x_new.head()

#establish new model and train

LR2 = LogisticRegression()

LR2.fit(x_new,y)

#evaulate the accuracy

y2_predict=LR2.predict(x_new)

accuracy_score_2 = accuracy_score(y,y2_predict)

print(accuracy_score_2)

Second-order boundary function: $\theta_0 + \theta_1 x_1 + \theta_2 x_2 + \theta_3 x_1^2 + \theta_4 x_2^2 + \theta_5 x_1 x_2 = 0$

For a quadratic equation $ax^2 + bx + c = 0$, the two roots are $x = \dfrac{-b + \sqrt{b^2 - 4ac}}{2a}$ and $x = \dfrac{-b - \sqrt{b^2 - 4ac}}{2a}$.

Written as a quadratic in $x_2$: $\theta_4 x_2^2 + (\theta_5 x_1 + \theta_2)x_2 + (\theta_0 + \theta_1 x_1 + \theta_3 x_1^2) = 0$

Solve for the function parameters $\theta_0, \theta_1, \theta_2, \theta_3, \theta_4, \theta_5$

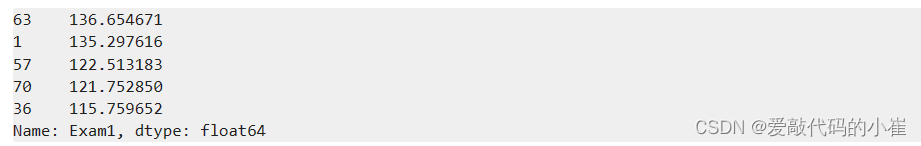

x1_new = x1.sort_values()

theta0 = LR2.intercept_

theta1,theta2,theta3,theta4,theta5 = LR2.coef_[0][0],LR2.coef_[0][1],LR2.coef_[0][2],LR2.coef_[0][3],LR2.coef_[0][4]

print(theta0,theta1,theta2,theta3,theta4,theta5)

a = theta4

b = theta5*x1_new+theta2

c = theta0+theta1*x1_new+theta3*x1_new*x1_new

x2_new_boundary = (-b + np.sqrt(b * b - 4 * a * c))/(2*a)

x2_new_boundary.head()

fig5 = plt.figure(figsize = (6,4))

plt.plot(x1_new,x2_new_boundary)

plt.show()

fig6 = plt.figure(figsize = (6,4))

passed = plt.scatter(data['Exam1'][mask],data['Exam2'][mask])

failed = plt.scatter(data['Exam1'][~mask],data['Exam2'][~mask])

plt.plot(x1_new,x2_new_boundary)

plt.title('Exam1-Exam2')

plt.xlabel('Exam1')

plt.ylabel('Exam2')

plt.legend((passed,failed),('passed','failed'))

plt.show()

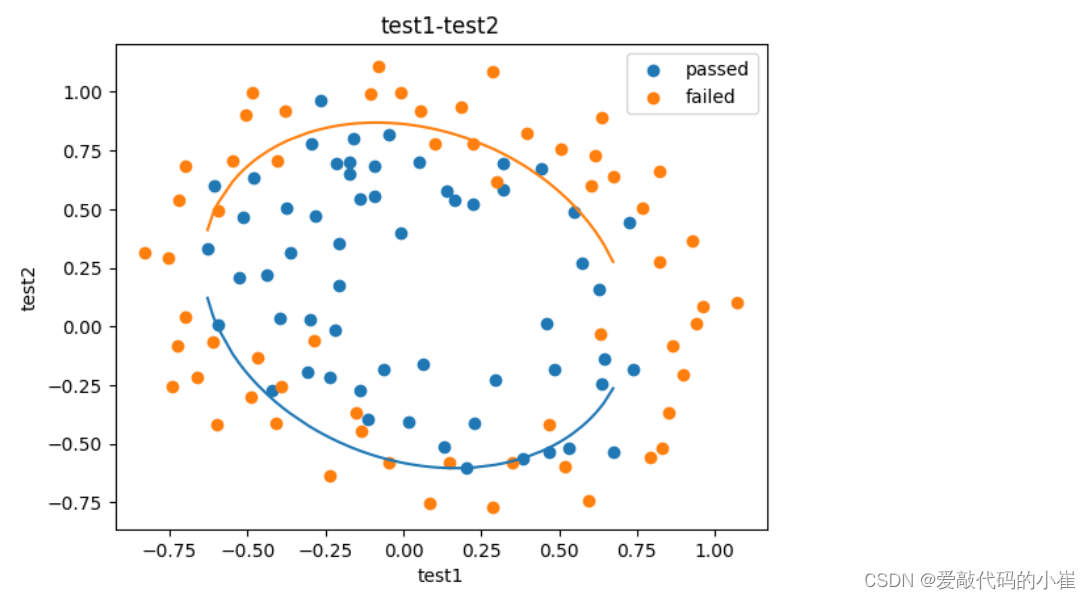

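4. Hands-on (II): chip quality prediction

Task:

1. Using chip_test.csv, build a logistic regression model (second-order boundary) and evaluate it.

2. Solve for the boundary curve using a function.

3. Plot the complete decision boundary curve.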

#load the data

import pandas as pd

import numpy as np

data = pd.read_csv('chip_test.csv')

data.head()

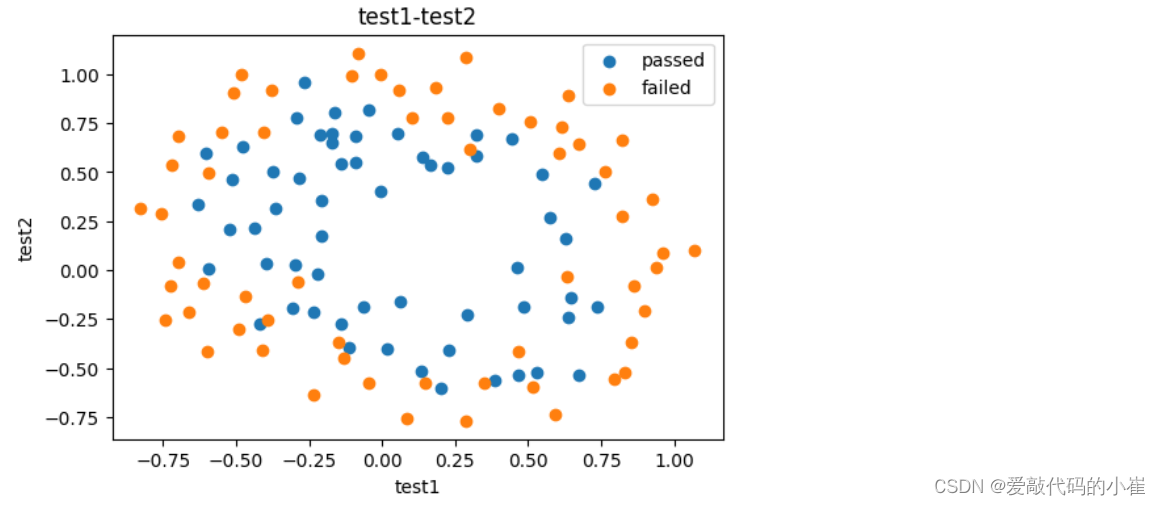

#visualize the data

from matplotlib import pyplot as plt

fig1 = plt.figure(figsize = (6,4))

mask = data.loc[:,'pass'] == 1

passed = plt.scatter(data['test1'][mask],data['test2'][mask])

failed = plt.scatter(data['test1'][~mask],data['test2'][~mask])

plt.title('test1-test2')

plt.xlabel('test1')

plt.ylabel('test2')

plt.legend((passed,failed),('passed','failed'))

plt.show()

Second-order boundary function: $\theta_0 + \theta_1 x_1 + \theta_2 x_2 + \theta_3 x_1^2 + \theta_4 x_2^2 + \theta_5 x_1 x_2 = 0$

#create new data

x = data.drop(['pass'],axis = 1)

y = data.loc[:,'pass']

x1 = data.loc[:,'test1']

x2 = data.loc[:,'test2']

x1_2 = x1 * x1

x2_2 = x2 * x2

x1_x2 = x1 * x2

x_new={

'x1':x1,'x2':x2,'x1_2':x1_2,'x2_2':x2_2,'x1_x2':x1_x2}

x_new=pd.DataFrame(x_new)

x_new.head()

#establish the model and train

from sklearn.linear_model import LogisticRegression

LR=LogisticRegression()

LR.fit(x_new,y)

#predict

y_predict = LR.predict(x_new)

print(y_predict)

#evaluate the accuracy_score

from sklearn.metrics import accuracy_score

accuracy = accuracy_score(y,y_predict)

print(accuracy)

For a quadratic equation $ax^2 + bx + c = 0$, the two roots are $x = \dfrac{-b + \sqrt{b^2 - 4ac}}{2a}$ and $x = \dfrac{-b - \sqrt{b^2 - 4ac}}{2a}$.

Written as a quadratic in $x_2$: $\theta_4 x_2^2 + (\theta_5 x_1 + \theta_2)x_2 + (\theta_0 + \theta_1 x_1 + \theta_3 x_1^2) = 0$

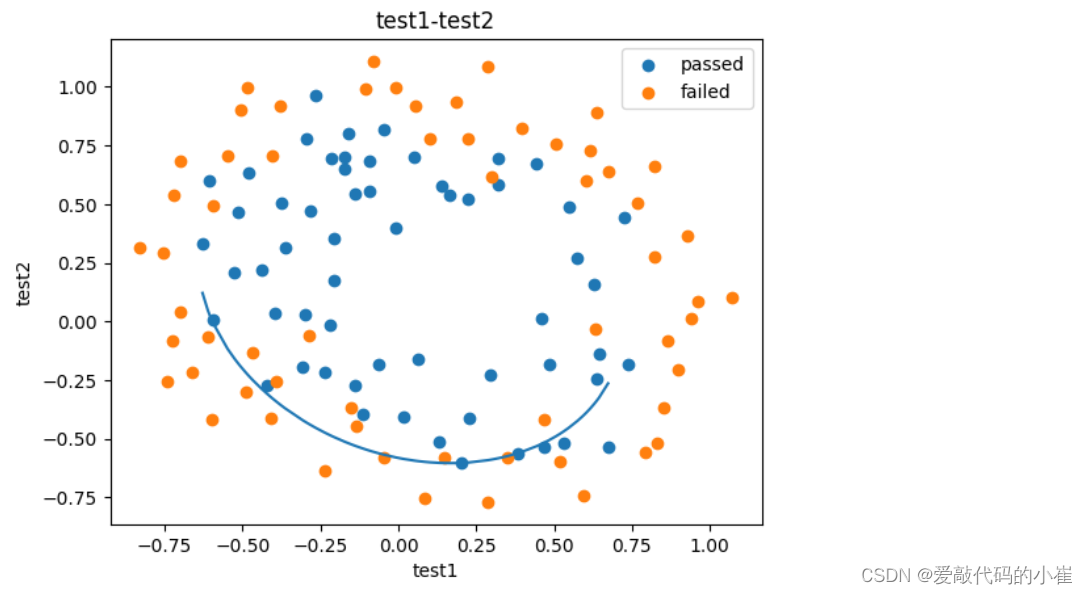

#decision boundary

x1_new = x1.sort_values()

theta0 = LR.intercept_

theta1,theta2,theta3,theta4,theta5=LR.coef_[0][0],LR.coef_[0][1],LR.coef_[0][2],LR.coef_[0][3],LR.coef_[0][4]

a = theta4

b = theta5*x1_new+theta2

c = theta0+theta1*x1_new+theta3*x1_new*x1_new

x2_new_boundary = (-b + np.sqrt(b * b - 4 * a * c))/(2*a)

fig2 = plt.figure()

passed = plt.scatter(data['test1'][mask],data['test2'][mask])

failed = plt.scatter(data['test1'][~mask],data['test2'][~mask])

plt.plot(x1_new,x2_new_boundary)

plt.title('test1-test2')

plt.xlabel('test1')

plt.ylabel('test2')

plt.legend((passed,failed),('passed','failed'))

plt.show()

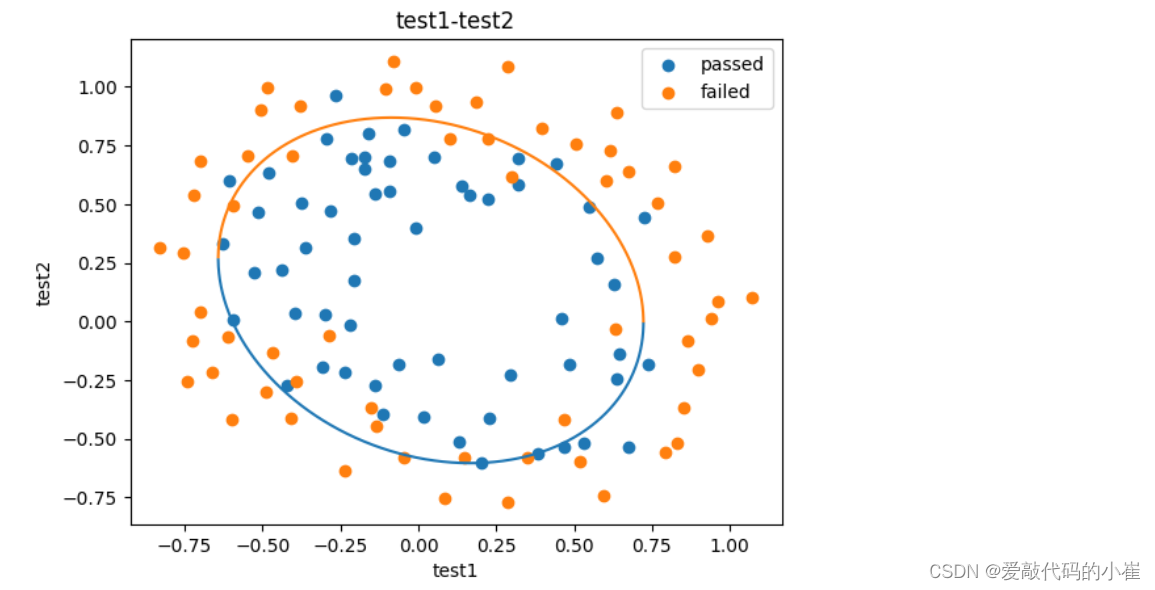

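The function f(x) returns a tuple (root 1, root 2). A tuple is a Python sequence type that supports indexing: for a tuple my_tuple with n elements, my_tuple[i] gets the element at index i (indices start at 0). So in the code below:

- f(x) returns a two-element tuple holding the two roots of the quadratic equation.

- f(x)[0] and f(x)[1] access elements 0 and 1 of that tuple, that is, the two root values.

- The two roots are appended to the lists x2_new_boundary1 and x2_new_boundary2 respectively, collecting the roots for every input x.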

#define f(x)

def f(x):
    # coefficients of the quadratic in x2 for a given x1 value x
    a = theta4
    b = theta5*x+theta2
    c = theta0+theta1*x+theta3*x*x
    x2_new_boundary1 = (-b + np.sqrt(b * b - 4 * a * c))/(2*a)
    x2_new_boundary2 = (-b - np.sqrt(b * b - 4 * a * c))/(2*a)
    return x2_new_boundary1,x2_new_boundary2

x2_new_boundary1=[]

x2_new_boundary2=[]

for x in x1_new:
    x2_new_boundary1.append(f(x)[0]) # append() adds an element to the end of the list
    x2_new_boundary2.append(f(x)[1])

print(x2_new_boundary1)

fig3 = plt.figure()

passed = plt.scatter(data['test1'][mask],data['test2'][mask])

failed = plt.scatter(data['test1'][~mask],data['test2'][~mask])

plt.plot(x1_new,x2_new_boundary1)

plt.plot(x1_new,x2_new_boundary2)

plt.title('test1-test2')

plt.xlabel('test1')

plt.ylabel('test2')

plt.legend((passed,failed),('passed','failed'))

plt.show()

#build a dense grid of x values so the plotted curve is complete (the scattered x values in the data leave gaps in the boundary)

x1_range = [-0.75 + x/10000 for x in range(0,19000)]

x1_range = np.array(x1_range)

x2_new_boundary1=[]

x2_new_boundary2=[]

for x in x1_range:
    x2_new_boundary1.append(f(x)[0])
    x2_new_boundary2.append(f(x)[1])

fig4 = plt.figure()

passed = plt.scatter(data['test1'][mask],data['test2'][mask])

failed = plt.scatter(data['test1'][~mask],data['test2'][~mask])

plt.plot(x1_range,x2_new_boundary1)

plt.plot(x1_range,x2_new_boundary2)

plt.title('test1-test2')

plt.xlabel('test1')

plt.ylabel('test2')

plt.legend((passed,failed),('passed','failed'))

plt.show()

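III. Machine Learning: Clustering

1. Unsupervised learning

- Concept: a machine learning approach that automatically classifies and groups the input data without being given labeled training examples in advance.

- Advantages:

  - The algorithm is not constrained by supervision information and may take new information into account.

  - No labeled data is needed, which greatly enlarges the pool of usable samples.

- Main applications: cluster analysis, association rules, dimensionality reduction.

2. KMeans, KNN, Mean-shift

Cluster analysis, also called group analysis, automatically divides objects into categories according to the similarity of some of their attributes. Examples: customer segmentation, related-news grouping, gene clustering.

KMeans clustering (k-means clustering)

- The k-means algorithm clusters around k centre points in space, assigning each object to the centre closest to it. It is the most basic, and also one of the most important, clustering algorithms.

- Formulas:

  - Distance between a data point and each cluster centre: $\mathrm{dist}(x_i, u_j^t)$

  - Assignment by distance: $x_i \in u_{nearest}^t$

  - Centre update: $u_j^{t+1} = \frac{1}{|S_j|}\sum_{x_i \in S_j} x_i$, where $S_j$ is the set of points currently assigned to cluster $j$

- Algorithm flow:

  - Choose the number of clusters k.

  - Choose the initial cluster centres.

  - Assign each point to a class according to its distance to the cluster centres.

  - Update the cluster centres from the points in each class.

  - Repeat the previous two steps until convergence (the centres no longer change).

- Characteristics:

  - Simple to implement, converges quickly.

  - The number of classes must be specified.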
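The k-means flow described above (assign each point to its nearest centre, recompute the centres, repeat until they stop moving) can be sketched in pure Python as follows. The function name `kmeans`, the initial centres, and the six sample points are assumptions for this illustration; the hands-on section uses sklearn.cluster.KMeans instead.

```python
import math

# Minimal sketch of k-means with fixed initial centres (hypothetical example data).

def kmeans(points, centers, max_iter=100):
    """Alternate the assignment and update steps until the centres stop moving."""
    clusters = []
    for _ in range(max_iter):
        # Assignment step: put every point into the cluster of its nearest centre.
        clusters = [[] for _ in centers]
        for p in points:
            nearest = min(range(len(centers)), key=lambda j: math.dist(p, centers[j]))
            clusters[nearest].append(p)
        # Update step: each new centre is the mean of the points assigned to it.
        new_centers = []
        for j, members in enumerate(clusters):
            if members:
                new_centers.append(tuple(sum(c) / len(members) for c in zip(*members)))
            else:
                new_centers.append(centers[j])  # an empty cluster keeps its old centre
        if new_centers == centers:  # converged: centres no longer change
            break
        centers = new_centers
    return centers, clusters

points = [(1, 1), (1, 2), (2, 1), (8, 8), (9, 8), (8, 9)]
centers, clusters = kmeans(points, centers=[(0, 0), (10, 10)])
print(centers)
print([len(c) for c in clusters])
```

The result depends on the initial centres, which is why library implementations usually run several initializations and expose a random seed.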

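K-nearest neighbors classification model (KNN)

Given a training data set and a new input instance, find the k instances in the training set that are closest to it; the input instance is assigned to the class that the majority of those k instances belong to. (KNN is a supervised learning method.)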
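The majority-vote rule of KNN can be sketched in a few lines of pure Python. The function name `knn_predict` and the tiny labeled data set are made up for this illustration; the hands-on section uses sklearn.neighbors.KNeighborsClassifier.

```python
import math
from collections import Counter

# Minimal sketch of k-nearest-neighbours classification (hypothetical example data).

def knn_predict(train_x, train_y, query, k=3):
    """Return the majority label among the k training points closest to query."""
    # Sort training indices by Euclidean distance to the query point.
    order = sorted(range(len(train_x)), key=lambda i: math.dist(train_x[i], query))
    votes = Counter(train_y[i] for i in order[:k])
    return votes.most_common(1)[0][0]

train_x = [(1, 1), (1, 2), (2, 1), (8, 8), (9, 8), (8, 9)]
train_y = [0, 0, 0, 1, 1, 1]
print(knn_predict(train_x, train_y, (2, 2)))
print(knn_predict(train_x, train_y, (7, 7)))
```

Unlike k-means, this needs the labels train_y, which is what makes KNN a supervised method.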

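Mean-shift algorithm (mean-shift clustering)

- A clustering algorithm based on density gradient ascent: it moves along the direction of increasing density to find the cluster centres.

- Algorithm flow:

  1. Randomly choose an unclassified point as the centre.

  2. Find the points whose distance to the centre is within the bandwidth; call this set S.

  3. Compute the shift vector M from the offsets between the centre and each element of S.

  4. Move the centre by the vector M.

  5. Repeat steps 2-4 until convergence.

  6. Repeat steps 1-5 until every point has been classified.

  7. Classification: each point is assigned to the class that visited it most often.

- Characteristics:

  - Discovers the number of classes automatically; no manual choice is needed.

  - A region radius (bandwidth) must be chosen.

DBSCAN algorithm (density-based spatial clustering)

- Algorithm flow:

  - Select the valid data based on the point density of each region.

  - Expand outward from the valid data until no new points join.

- Characteristics:

  - Filters out noisy data.

  - The number of classes does not need to be chosen manually.

  - Results are affected when the data density varies.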

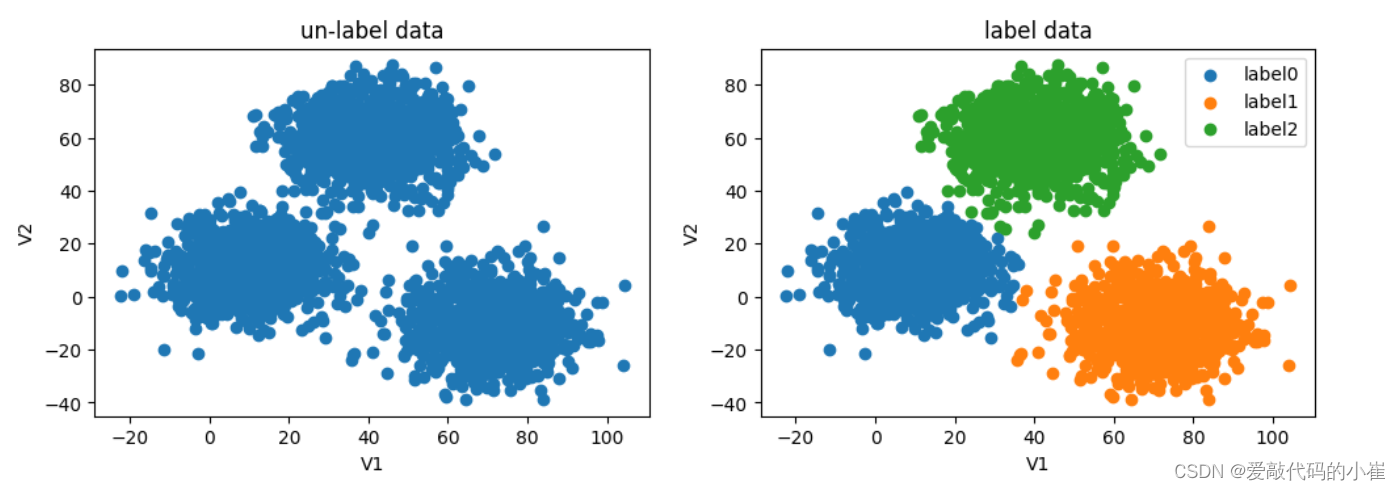

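3. Hands-on (I): 2D data clustering

Task:

1. Use the KMeans algorithm to cluster the 2D data automatically and predict the class of the point V1 = 80, V2 = 60.

2. Compute the prediction accuracy and correct the results.

3. Repeat steps 1-2 with the KNN and Mean-shift algorithms.

Using the KMeans algorithm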

#load the data

import pandas as pd

import numpy as np

data = pd.read_csv('data.csv')

data.head()

Unsupervised learning does not need labels; the labels here are used only for comparison with the unsupervised predictions.

#define x and y

x=data.drop(['labels'],axis=1)

y=data.loc[:,'labels']

x.head()

#visualize the data

from matplotlib import pyplot as plt

fig=plt.figure(figsize=(12,8))

fig1=plt.subplot(221)

plt.scatter(data.loc[:,'V1'],data.loc[:,'V2'])

plt.title('un-label data')

plt.xlabel('V1')

plt.ylabel('V2')

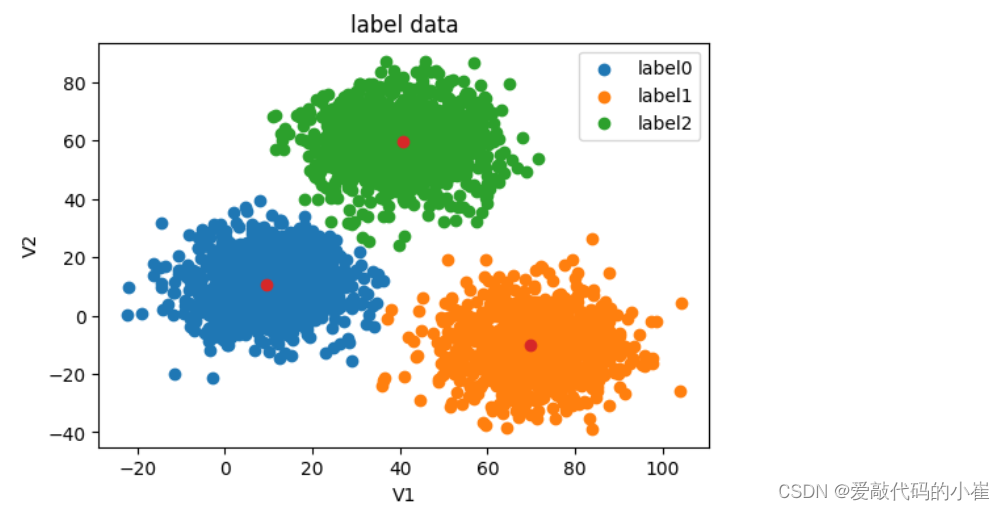

fig2=plt.subplot(222)

label0=plt.scatter(data.loc[:,'V1'][y==0],data.loc[:,'V2'][y==0])

label1=plt.scatter(data.loc[:,'V1'][y==1],data.loc[:,'V2'][y==1])

label2=plt.scatter(data.loc[:,'V1'][y==2],data.loc[:,'V2'][y==2])

plt.title('label data')

plt.xlabel('V1')

plt.ylabel('V2')

plt.legend((label0,label1,label2),('label0','label1','label2'))

plt.show()

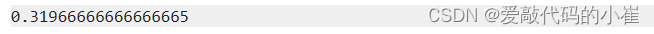

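Compare the plot of the unlabeled data with the plot of the labeled data.

n_clusters is the number of clusters to divide the data set into.

random_state fixes the random seed so that the experiment is repeatable and the results are reproducible.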

#establish the model and train

from sklearn.cluster import KMeans

KM = KMeans(n_clusters=3,random_state=0)

KM.fit(x)

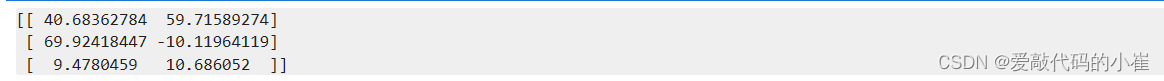

#get the cluster centres of the fitted model

centers = KM.cluster_centers_

print(centers)

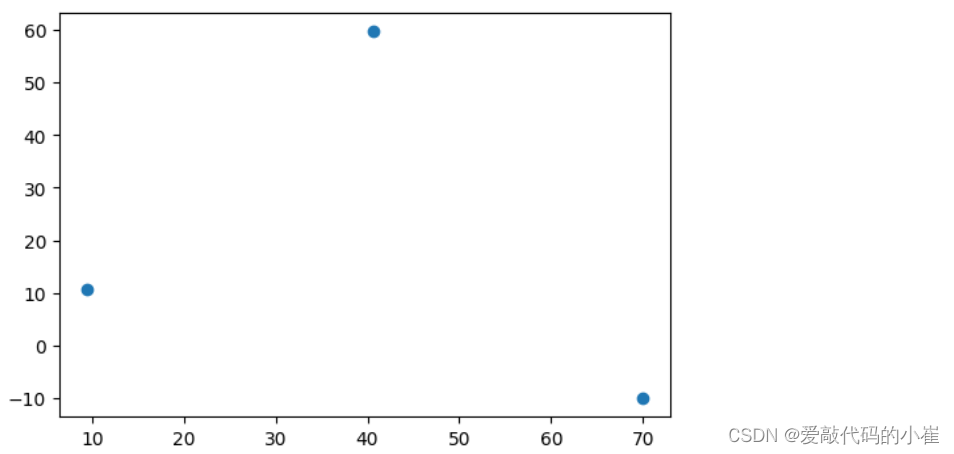

fig3 = plt.figure(figsize=(6,4))

plt.scatter(centers[:,0],centers[:,1])

plt.show()

fig4=plt.figure(figsize=(6,4))

label0=plt.scatter(data.loc[:,'V1'][y==0],data.loc[:,'V2'][y==0])

label1=plt.scatter(data.loc[:,'V1'][y==1],data.loc[:,'V2'][y==1])

label2=plt.scatter(data.loc[:,'V1'][y==2],data.loc[:,'V2'][y==2])

plt.scatter(centers[:,0],centers[:,1])

plt.title('label data')

plt.xlabel('V1')

plt.ylabel('V2')

plt.legend((label0,label1,label2),('label0','label1','label2'))

plt.show()

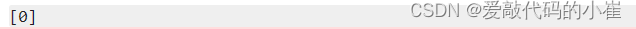

#test data:V1=80,V2=60

y_predict_test=KM.predict([[80,60]])

print(y_predict_test)

value_counts() counts how often each distinct value occurs.

#predict based on training data

y_predict=KM.predict(x)

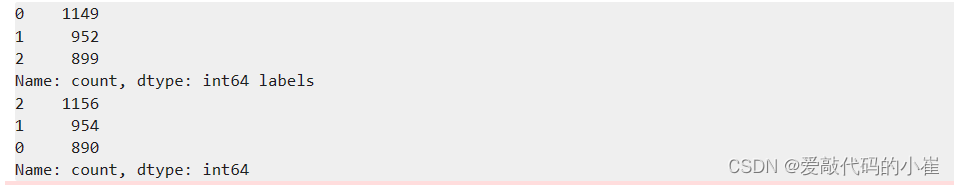

print(pd.value_counts(y_predict),pd.value_counts(y))

from sklearn.metrics import accuracy_score

accuracy=accuracy_score(y,y_predict)

print(accuracy)

#visualize the data

from matplotlib import pyplot as plt

fig=plt.figure(figsize=(12,8))

fig5=plt.subplot(221)

label0=plt.scatter(data.loc[:,'V1'][y_predict==0],data.loc[:,'V2'][y_predict==0])

label1=plt.scatter(data.loc[:,'V1'][y_predict==1],data.loc[:,'V2'][y_predict==1])

label2=plt.scatter(data.loc[:,'V1'][y_predict==2],data.loc[:,'V2'][y_predict==2])

plt.scatter(centers[:,0],centers[:,1])

plt.title('predict data')

plt.xlabel('V1')

plt.ylabel('V2')

plt.legend((label0,label1,label2),('label0','label1','label2'))

fig6=plt.subplot(222)

label0=plt.scatter(data.loc[:,'V1'][y==0],data.loc[:,'V2'][y==0])

label1=plt.scatter(data.loc[:,'V1'][y==1],data.loc[:,'V2'][y==1])

label2=plt.scatter(data.loc[:,'V1'][y==2],data.loc[:,'V2'][y==2])

plt.scatter(centers[:,0],centers[:,1])

plt.title('label data')

plt.xlabel('V1')

plt.ylabel('V2')

plt.legend((label0,label1,label2),('label0','label1','label2'))

plt.show()

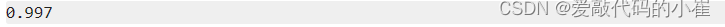

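The labels predicted by unsupervised learning do not line up with the labels defined in the original data, because unsupervised learning has no notion of which label number belongs to which class. The results therefore need to be corrected.

#correct the results: remap the cluster ids to the original label ids

y_corrected=[]

for i in y_predict:
    if i == 0:
        y_corrected.append(2)
    elif i == 1:
        y_corrected.append(1)
    else:
        y_corrected.append(0)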

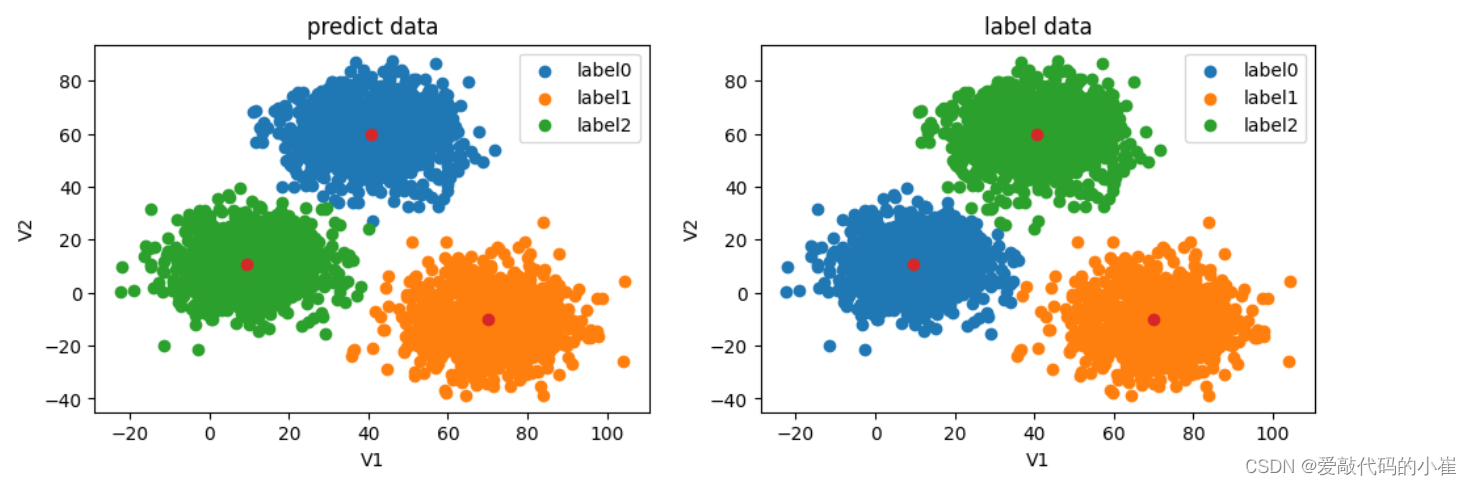

print(pd.value_counts(y_corrected),pd.value_counts(y))

accuracy_new=accuracy_score(y,y_corrected)

print(accuracy_new)

Accuracy improves after the correction.

y_corrected=np.array(y_corrected)

print(type(y),type(y_corrected))

#visualize the data

from matplotlib import pyplot as plt

fig=plt.figure(figsize=(12,8))

fig5=plt.subplot(221)

label0=plt.scatter(data.loc[:,'V1'][y_corrected==0],data.loc[:,'V2'][y_corrected==0])

label1=plt.scatter(data.loc[:,'V1'][y_corrected==1],data.loc[:,'V2'][y_corrected==1])

label2=plt.scatter(data.loc[:,'V1'][y_corrected==2],data.loc[:,'V2'][y_corrected==2])

plt.scatter(centers[:,0],centers[:,1])

plt.title('corrected data')

plt.xlabel('V1')

plt.ylabel('V2')

plt.legend((label0,label1,label2),('label0','label1','label2'))

fig6=plt.subplot(222)

label0=plt.scatter(data.loc[:,'V1'][y==0],data.loc[:,'V2'][y==0])

label1=plt.scatter(data.loc[:,'V1'][y==1],data.loc[:,'V2'][y==1])

label2=plt.scatter(data.loc[:,'V1'][y==2],data.loc[:,'V2'][y==2])

plt.scatter(centers[:,0],centers[:,1])

plt.title('label data')

plt.xlabel('V1')

plt.ylabel('V2')

plt.legend((label0,label1,label2),('label0','label1','label2'))

plt.show()
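The hand-written if/elif mapping used for the correction has to be worked out by looking at the value counts. As a possible alternative, the sketch below derives the mapping automatically by giving each cluster id the true label that occurs most often inside it. The function name `align_labels` and the small label lists are hypothetical; this is one simple approach, and it can map two clusters to the same label when the clusters are badly mixed.

```python
from collections import Counter

# Minimal sketch: map cluster ids to true labels by majority vote
# (align_labels and the sample lists are hypothetical).

def align_labels(y_true, y_cluster):
    """Return the remapped predictions and the cluster-id -> label mapping."""
    mapping = {}
    for c in set(y_cluster):
        # True labels of all samples that fell into cluster c.
        members = [t for t, p in zip(y_true, y_cluster) if p == c]
        mapping[c] = Counter(members).most_common(1)[0][0]
    return [mapping[p] for p in y_cluster], mapping

y_true = [0, 0, 0, 1, 1, 2, 2, 2]
y_cluster = [2, 2, 2, 1, 1, 0, 0, 1]
y_fixed, mapping = align_labels(y_true, y_cluster)
print(mapping)
print(y_fixed)
```

After the remapping, the corrected labels can be passed to accuracy_score in the same way as y_corrected.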

4. Hands-on (II)

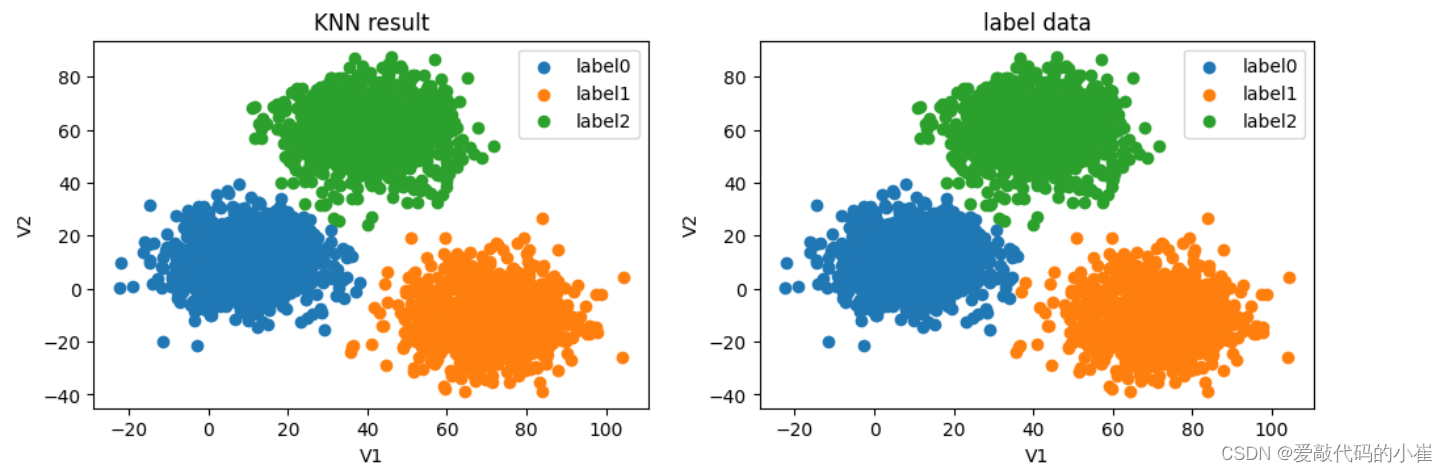

Using the KNN algorithm

#establish a KNN model (KNN is supervised learning)

from sklearn.neighbors import KNeighborsClassifier

KN = KNeighborsClassifier()

KN.fit(x,y)

#predict based on the test data V1=80,V2=60

y_predict_KNN_test=KN.predict([[80,60]])

y_predict_KNN=KN.predict(x)

accuracy_KNN_score=accuracy_score(y,y_predict_KNN)

print(y_predict_KNN_test)

print(accuracy_KNN_score)

print(pd.value_counts(y),pd.value_counts(y_predict_KNN))

#visualize the data

from matplotlib import pyplot as plt

fig=plt.figure(figsize=(12,8))

fig5=plt.subplot(221)

label0=plt.scatter(data.loc[:,'V1'][y_predict_KNN==0],data.loc[:,'V2'][y_predict_KNN==0])

label1=plt.scatter(data.loc[:,'V1'][y_predict_KNN==1],data.loc[:,'V2'][y_predict_KNN==1])

label2=plt.scatter(data.loc[:,'V1'][y_predict_KNN==2],data.loc[:,'V2'][y_predict_KNN==2])

plt.title('KNN result')

plt.xlabel('V1')

plt.ylabel('V2')

plt.legend((label0,label1,label2),('label0','label1','label2'))

fig6=plt.subplot(222)

label0=plt.scatter(data.loc[:,'V1'][y==0],data.loc[:,'V2'][y==0])

label1=plt.scatter(data.loc[:,'V1'][y==1],data.loc[:,'V2'][y==1])

label2=plt.scatter(data.loc[:,'V1'][y==2],data.loc[:,'V2'][y==2])

plt.title('label data')

plt.xlabel('V1')

plt.ylabel('V2')

plt.legend((label0,label1,label2),('label0','label1','label2'))

plt.show()

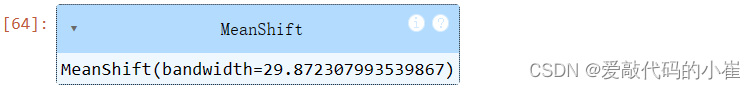

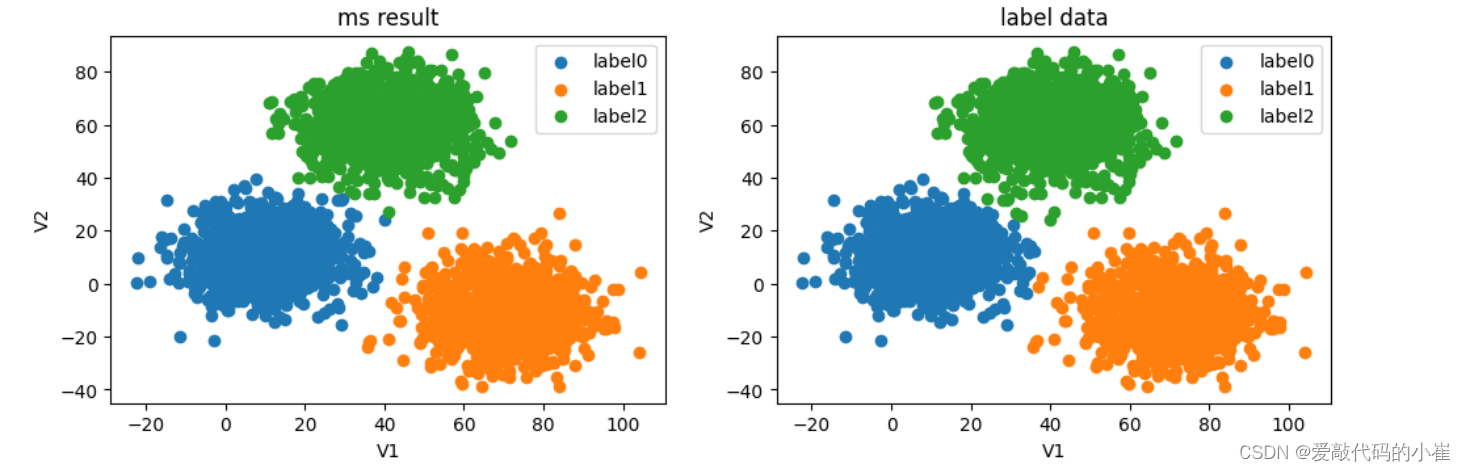

Using the Mean-shift algorithm

#try meanshift model

from sklearn.cluster import MeanShift,estimate_bandwidth

bw=estimate_bandwidth(x,n_samples=3000)

print(bw)

estimate_bandwidth estimates the bandwidth, that is, the radius of the circular search region.

#train data model

MS=MeanShift(bandwidth=bw)

MS.fit(x)

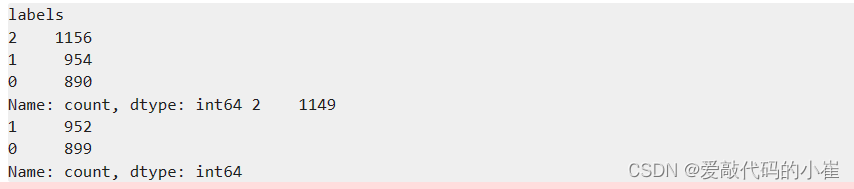

y_predict_ms=MS.predict(x)

print(pd.value_counts(y),pd.value_counts(y_predict_ms))

#correct the results

y_correct_ms=[]

for i in y_predict_ms:
    if i == 0:
        y_correct_ms.append(2)
    elif i == 1:
        y_correct_ms.append(1)
    else:
        y_correct_ms.append(0)

print(pd.value_counts(y),pd.value_counts(y_correct_ms))

accuracy_ms_score=accuracy_score(y,y_correct_ms)

print(accuracy_ms_score)

#convert the results to numpy array

y_correct_ms=np.array(y_correct_ms)

print(type(y_correct_ms))

#visualize the data

from matplotlib import pyplot as plt

fig=plt.figure(figsize=(12,8))

fig9=plt.subplot(221)

label0=plt.scatter(data.loc[:,'V1'][y_correct_ms==0],data.loc[:,'V2'][y_correct_ms==0])

label1=plt.scatter(data.loc[:,'V1'][y_correct_ms==1],data.loc[:,'V2'][y_correct_ms==1])

label2=plt.scatter(data.loc[:,'V1'][y_correct_ms==2],data.loc[:,'V2'][y_correct_ms==2])

plt.title('ms result')

plt.xlabel('V1')

plt.ylabel('V2')

plt.legend((label0,label1,label2),('label0','label1','label2'))

fig10=plt.subplot(222)

label0=plt.scatter(data.loc[:,'V1'][y==0],data.loc[:,'V2'][y==0])

label1=plt.scatter(data.loc[:,'V1'][y==1],data.loc[:,'V2'][y==1])

label2=plt.scatter(data.loc[:,'V1'][y==2],data.loc[:,'V2'][y==2])

plt.title('label data')

plt.xlabel('V1')

plt.ylabel('V2')

plt.legend((label0,label1,label2),('label0','label1','label2'))

plt.show()

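IV. Other Common Machine Learning Techniques

1. Decision tree

- A tree structure for classifying instances, which determines the class of a target through multiple levels of decisions. In essence, it derives a set of classification rules from the training data through those layered decisions.

- Advantages:

  - Low computational cost, fast to run.

  - Easy to understand; the importance of each attribute can be seen clearly.

- Disadvantages:

  - Ignores correlations between attributes.

  - Model performance suffers easily when the class distribution is imbalanced.

- Solution method

ID3: using information entropy, choose the attribute with the largest information gain as the splitting attribute, and recursively extend the branches of the decision tree until the tree is complete.

Information entropy measures the uncertainty of a random variable: the larger the entropy, the greater the uncertainty. If the proportion of class-k samples in the current sample set D is $p_k$, the information entropy of D is:

$$Ent(D) = -\sum_{k=1}^{|y|} p_k \log_2 p_k$$

From the entropy, the information gain obtained by splitting the samples on attribute a is:

$$Gain(D, a) = Ent(D) - \sum_{v=1}^{V} \frac{|D^v|}{|D|} Ent(D^v)$$

V is the number of subsets produced by splitting on attribute a, $|D|$ is the current total number of samples, and $|D^v|$ is the number of samples in subset v.

Goal: after the split, the uncertainty of the sample distribution should be as small as possible, that is, small entropy after the split and large information gain.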
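The entropy and information gain formulas can be tried out on a toy example. The sketch below, in pure Python, compares two candidate attributes; the function names and the four-row data set (`labels`, `outlook`, `windy`) are invented for this illustration.

```python
import math
from collections import Counter

# Minimal sketch: information entropy and information gain (hypothetical toy data).

def entropy(labels):
    """Ent(D) = -sum(p_k * log2(p_k)) over the classes present in labels."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())

def information_gain(labels, attribute_values):
    """Gain(D, a) = Ent(D) - sum(|D^v| / |D| * Ent(D^v)) over the values v of a."""
    n = len(labels)
    groups = {}
    for v, y in zip(attribute_values, labels):
        groups.setdefault(v, []).append(y)
    remainder = sum(len(g) / n * entropy(g) for g in groups.values())
    return entropy(labels) - remainder

labels = ['yes', 'yes', 'no', 'no']
outlook = ['sunny', 'sunny', 'rainy', 'rainy']  # separates the classes perfectly
windy = ['t', 'f', 't', 'f']                    # carries no information about the class
print(entropy(labels))
print(information_gain(labels, outlook))
print(information_gain(labels, windy))
```

ID3 would split on outlook here, because it yields the larger information gain.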

2. Anomaly detection

Anomaly detection based on the Gaussian distribution

The probability density function of the Gaussian distribution is:

$$p(x) = \frac{1}{\sigma\sqrt{2\pi}} e^{-\frac{(x-\mu)^2}{2\sigma^2}}$$

where $\mu$ is the mean of the data and $\sigma$ is the standard deviation:

$$\mu = \frac{1}{m}\sum_{i=1}^{m} x^{(i)}$$

$$\sigma^2 = \frac{1}{m}\sum_{i=1}^{m}\left(x^{(i)} - \mu\right)^2$$

- Compute the mean $\mu$ and the standard deviation $\sigma$ of the data.

- Compute the corresponding Gaussian probability density function.

- Judge each point by its probability density: if $p(x) < \varepsilon$, the point is an anomaly.
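The three steps above can be sketched for one-dimensional data in pure Python. The sample values and the threshold `epsilon` below are made up for illustration; in practice the threshold has to be chosen for the data at hand.

```python
import math

# Minimal sketch: 1-D Gaussian anomaly detection (hypothetical data and threshold).

def gaussian_pdf(x, mu, sigma):
    """p(x) = exp(-(x - mu)^2 / (2 sigma^2)) / (sigma * sqrt(2 pi))"""
    return math.exp(-(x - mu) ** 2 / (2 * sigma ** 2)) / (sigma * math.sqrt(2 * math.pi))

data = [9.8, 10.1, 10.0, 9.9, 10.2, 10.0, 15.0]

# Step 1: mean and standard deviation of the data.
mu = sum(data) / len(data)
sigma = math.sqrt(sum((x - mu) ** 2 for x in data) / len(data))

# Steps 2 and 3: evaluate the density at each point and flag points below the threshold.
epsilon = 0.05
anomalies = [x for x in data if gaussian_pdf(x, mu, sigma) < epsilon]
print(mu, sigma)
print(anomalies)
```

Note that the outlier itself inflates mu and sigma here; with more normal samples the estimates, and therefore the densities, become more reliable.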

When the data has more than one dimension:

$$\begin{Bmatrix} x_1^{(1)}, x_1^{(2)}, \dots, x_1^{(m)} \\ \vdots \\ x_n^{(1)}, x_n^{(2)}, \dots, x_n^{(m)} \end{Bmatrix}$$

- Compute the means $\mu_1, \mu_2, \dots, \mu_n$ and the standard deviations $\sigma_1, \sigma_2, \dots, \sigma_n$:

$$\mu_j = \frac{1}{m}\sum_{i=1}^{m} x_j^{(i)} \qquad \sigma_j^2 = \frac{1}{m}\sum_{i=1}^{m}\left(x_j^{(i)} - \mu_j\right)^2$$

- Compute the probability density function $p(x)$:

$$p(x) = \prod_{j=1}^{n} \frac{1}{\sigma_j\sqrt{2\pi}} e^{-\frac{(x_j-\mu_j)^2}{2\sigma_j^2}}$$

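3. Principal component analysis (PCA)

Dimensionality reduction is the process of reducing the number of random variables under certain constraints to obtain a set of "uncorrelated" principal variables.

PCA: a dimensionality reduction method

Goal: find new k-dimensional data (k < n) that still reflects the main characteristics of the subject.

Core idea: reduce the dimensionality of the data while losing as little information as possible.

Procedure:

1. Preprocess the raw data (standardize: $\mu = 0, \sigma = 1$).

2. Compute the eigenvectors of the covariance matrix and the variance of the data projected onto each eigenvector.

3. Choose the target dimension k according to requirements.

4. Select k eigenvectors and compute the projection of the data onto the space they span.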
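For two standardized features the procedure above can be followed by hand, because the covariance matrix is [[1, r], [r, 1]] (r is the correlation), with eigenvalues 1 + r and 1 - r and eigenvectors (1, 1)/sqrt(2) and (1, -1)/sqrt(2). The sketch below uses this special case in pure Python; the helper `standardize` and the sample columns are assumptions for illustration, and general data needs a proper eigen-decomposition such as the one inside sklearn.decomposition.PCA.

```python
import math

# Minimal sketch: PCA for exactly two features, using the closed-form
# eigen-decomposition of a 2x2 correlation matrix (hypothetical sample data).

def standardize(col):
    """Rescale a column to mean 0 and standard deviation 1."""
    m = sum(col) / len(col)
    s = math.sqrt(sum((v - m) ** 2 for v in col) / len(col))
    return [(v - m) / s for v in col]

x1 = [1.0, 2.0, 3.0, 4.0, 5.0]
x2 = [2.1, 3.9, 6.2, 7.8, 10.1]

# Step 1: standardize both features.
z1, z2 = standardize(x1), standardize(x2)

# Step 2: covariance of standardized features equals their correlation r;
# the eigenvalues of [[1, r], [r, 1]] are 1 + |r| and 1 - |r|.
r = sum(a * b for a, b in zip(z1, z2)) / len(z1)
var_ratio = [(1 + abs(r)) / 2, (1 - abs(r)) / 2]

# Steps 3 and 4: keep k = 1 component and project onto (1, sign(r)) / sqrt(2).
sign = 1.0 if r >= 0 else -1.0
pc1 = [(a + sign * b) / math.sqrt(2) for a, b in zip(z1, z2)]
print(var_ratio)
print(pc1)
```

Because the two sample features are almost perfectly correlated, nearly all of the variance is carried by the first principal component.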

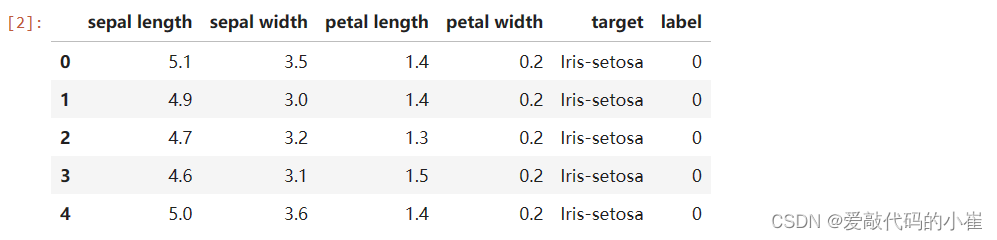

4. Hands-on (I): classifying the iris data set with a decision tree

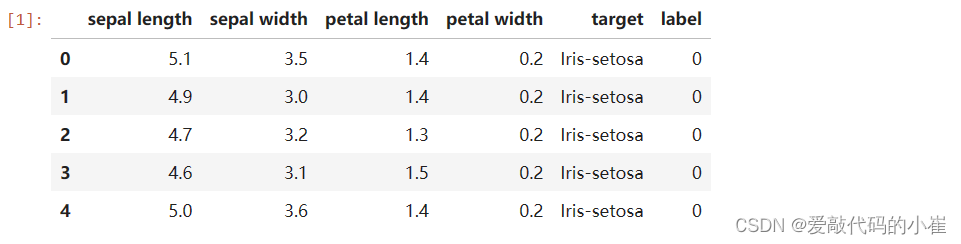

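The iris data set

- The iris data set is a classic data set that is often used as an example in statistical learning and machine learning.

- 3 classes (setosa, versicolour, virginica), 150 records in total, 50 per class.

- Each record has 4 features: sepal length (SepalLength), sepal width (SepalWidth), petal length (PetalLength), petal width (PetalWidth).

Task:

1. Using iris_data.csv, build a decision tree model and evaluate it.

2. Visualize the structure of the decision tree.

3. Modify the min_samples_split parameter and compare the model results.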

#load the data

import pandas as pd

import numpy as np

data = pd.read_csv('iris_data.csv')

data.head()

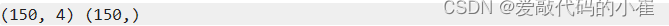

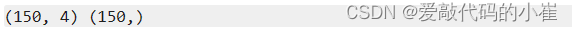

#define the x and y

x = data.drop(['target','label'],axis = 1)

y = data.loc[:,'label']

print(x.shape,y.shape)

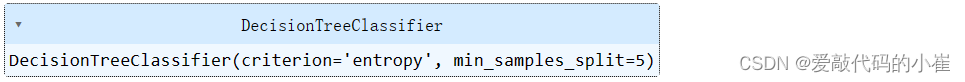

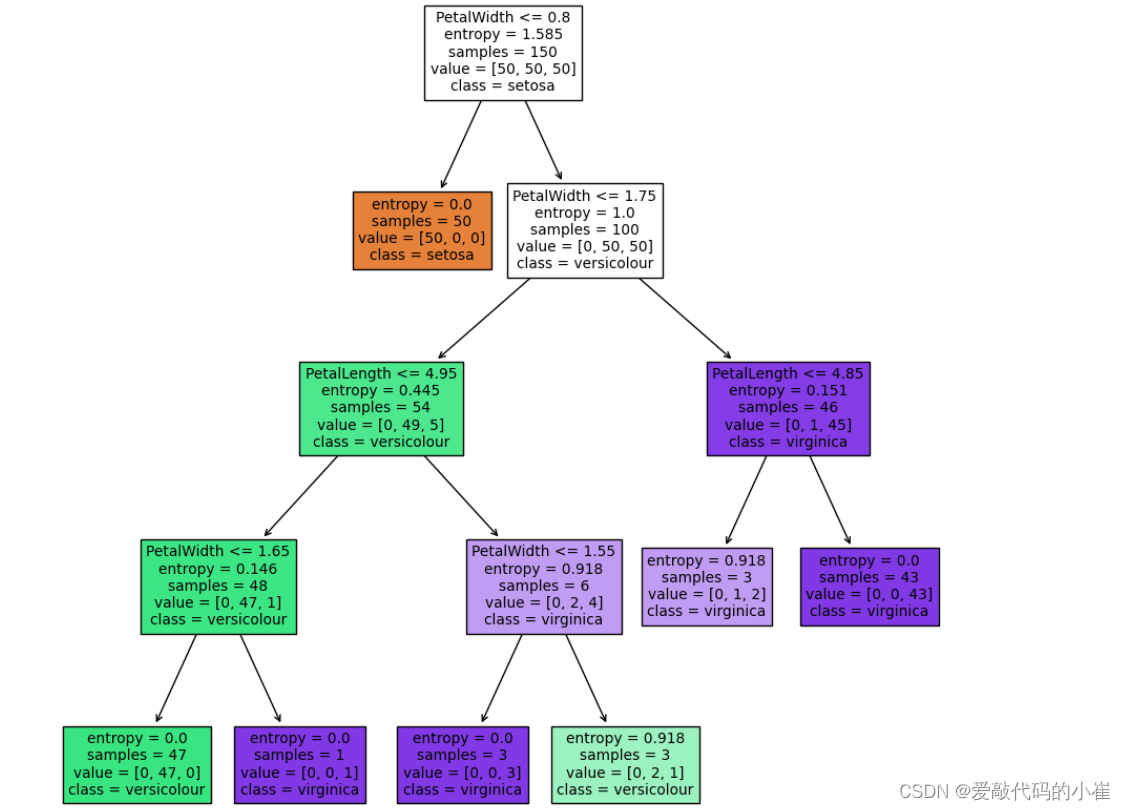

#establish the decision tree model

from sklearn import tree

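#criterion is the measure of node purity ('entropy' is the information entropy used by ID3); min_samples_split is the minimum number of samples an internal node needs before it can be split further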

dc_tree = tree.DecisionTreeClassifier(criterion='entropy',min_samples_split=5)

dc_tree.fit(x,y)

#evaluate the model

from sklearn.metrics import accuracy_score

y_predict = dc_tree.predict(x)

accuracy = accuracy_score(y,y_predict)

print(accuracy)

#visualize the tree

from matplotlib import pyplot as plt

fig = plt.figure(figsize = (11,11))

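#filled=True fills each node with a color (for classification it indicates the majority class); feature_names gives the names of the features in the data set; class_names gives the name of each class

tree.plot_tree(dc_tree,filled = True,feature_names = ['SepalLength','SepalWidth','PetalLength','PetalWidth'],class_names = ['setosa','versicolour','virginica'])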

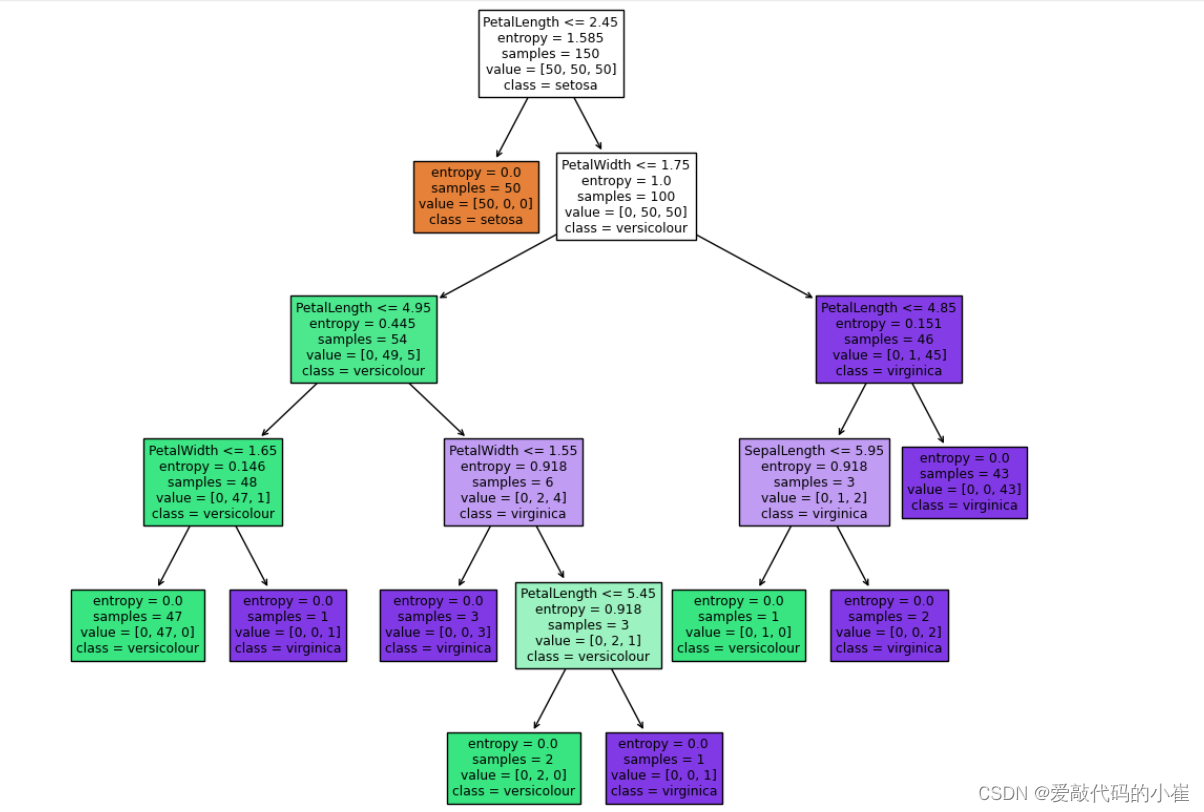

#modify min_samples_split

dc_tree=tree.DecisionTreeClassifier(criterion='entropy',min_samples_split=3)

dc_tree.fit(x,y)

fig = plt.figure(figsize = (12,12))

tree.plot_tree(dc_tree,filled = True,feature_names = ['SepalLength','SepalWidth','PetalLength','PetalWidth'],class_names = ['setosa','versicolour','virginica'])

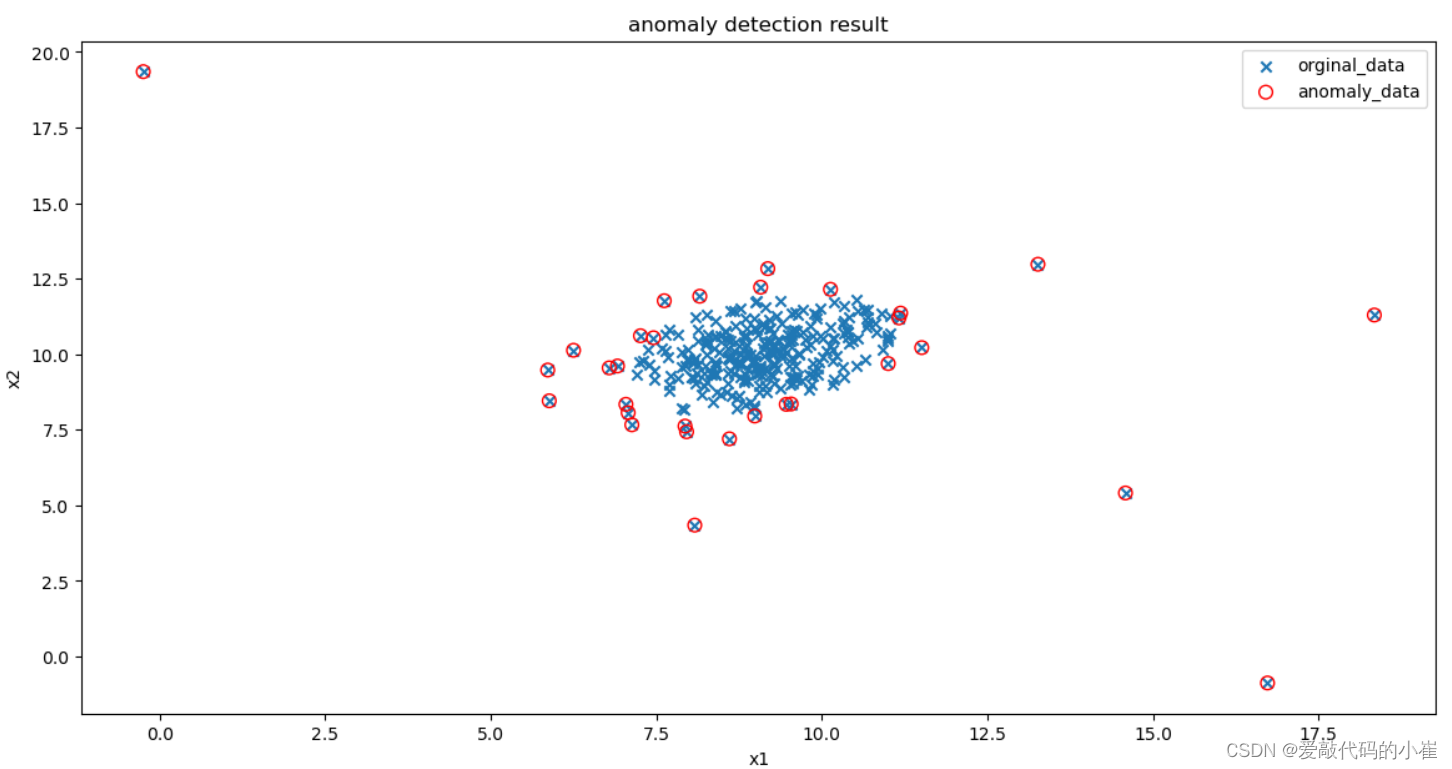

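5. Hands-on (II): anomaly detection

Task:

- Using anomaly_data.csv, visualize the data distribution and the corresponding Gaussian probability density functions.

- Build a model to predict the anomalous data points.

- Visualize the anomaly detection results.

- Change the contamination parameter (the assumed proportion of anomalies, which sets the probability threshold) in EllipticEnvelope(contamination=0.1) and see how the threshold change affects the results.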

#load the data

import pandas as pd

import numpy as np

data = pd.read_csv('anomaly_data.csv')

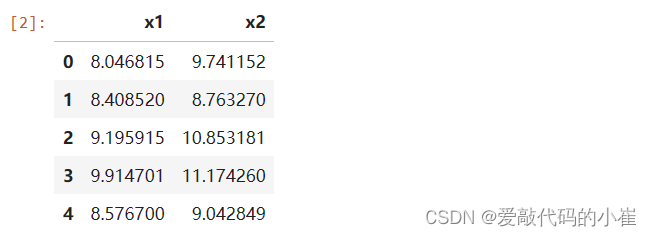

data.head()

#define x and y

x1 = data.loc[:,'x1']

x2 = data.loc[:,'x2']

print(x1.shape,x2.shape)

#visualize the data

from matplotlib import pyplot as plt

fig1 = plt.figure(figsize = (6,4))

plt.scatter(x1,x2)

plt.title('x1 and x2')

plt.xlabel('x1')

plt.ylabel('x2')

plt.show()

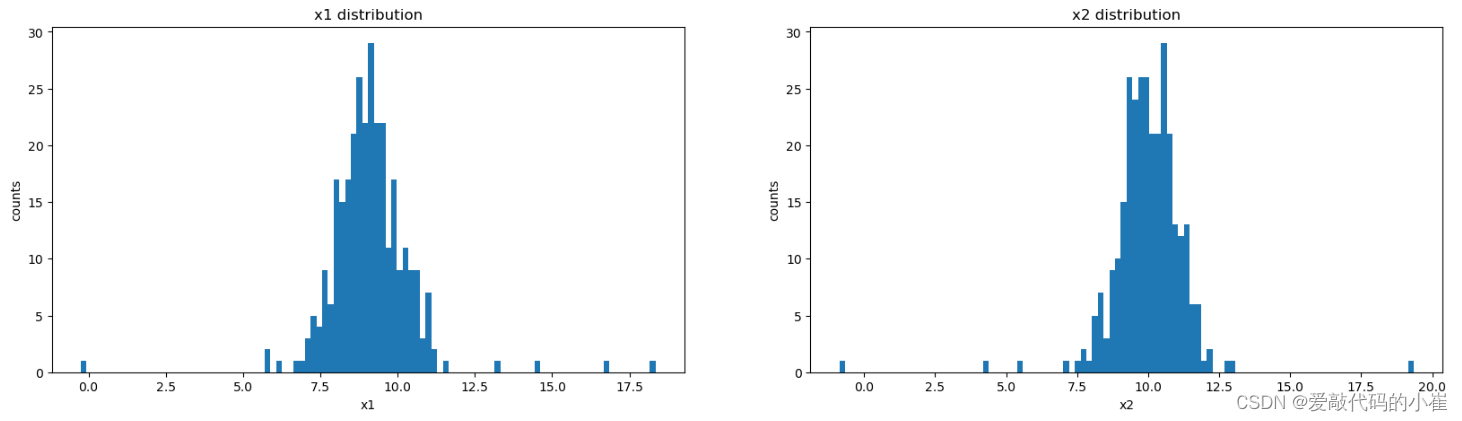

#visualize the data hist

fig2 = plt.figure(figsize = (20,5))

plt.subplot(121)

plt.hist(x1,bins=100) #bins sets the number of histogram bins

plt.title('x1 distribution')

plt.xlabel('x1')

plt.ylabel('counts')

plt.subplot(122)

plt.hist(x2,bins=100)

plt.title('x2 distribution')

plt.xlabel('x2')

plt.ylabel('counts')

plt.show()

#calculate the mean and sigma of x1 and x2

x1_mean = np.mean(x1)

x2_mean = np.mean(x2)

x1_sigma = np.std(x1)

x2_sigma = np.std(x2)

print(x1_mean,x2_mean,x1_sigma,x2_sigma)

#calculate the gaussian distribution p(x)

#scipy.stats provides statistics and probability distributions; norm is the normal distribution

from scipy.stats import norm

#linspace generates an array of evenly spaced values over the given interval

x1_range = np.linspace(0,20,300)

#x1_range holds the points at which to evaluate the density; the normal distribution also needs the mean and the standard deviation (sigma)

x1_normal = norm.pdf(x1_range,x1_mean,x1_sigma)

x2_range = np.linspace(0,20,300)

x2_normal = norm.pdf(x2_range,x2_mean,x2_sigma)

#visualize the p(x)

fig3 = plt.figure(figsize = (20,5))

plt.subplot(121)

plt.plot(x1_range,x1_normal)

plt.title('normal p(x1)')

plt.xlabel('x1_range')

plt.ylabel('x1_normal')

plt.subplot(122)

plt.plot(x2_range,x2_normal)

plt.title('normal p(x2)')

plt.xlabel('x2_range')

plt.ylabel('x2_normal')

plt.show()

#establish the model and predict

#EllipticEnvelope detects outliers in Gaussian-distributed data

from sklearn.covariance import EllipticEnvelope

ad_model = EllipticEnvelope()

ad_model.fit(data)

#make prediction

y_predict=ad_model.predict(data)

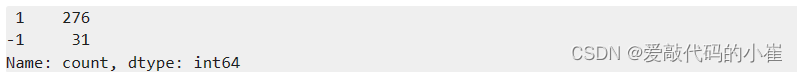

print(pd.value_counts(y_predict))

#visualize the result

fig4 = plt.figure(figsize = (14,7))

orginal_data = plt.scatter(x1,x2,marker='x')

#marker sets the shape of the points; edgecolor sets the color of their edges

anomaly_data = plt.scatter(x1[y_predict == -1],x2[y_predict == -1],marker='o',facecolor = 'none',edgecolor='red',s=60)

plt.title('anomaly detection result')

plt.xlabel('x1')

plt.ylabel('x2')

plt.legend((orginal_data,anomaly_data),('orginal_data','anomaly_data'))

plt.show()

#modify contamination

#contamination defaults to 0.1 and controls how sensitive the model is to outliers; a higher value makes the model flag more points as anomalies

ad_model = EllipticEnvelope(contamination=0.02)

ad_model.fit(data)

y_predict=ad_model.predict(data)

fig5 = plt.figure(figsize = (14,7))

orginal_data = plt.scatter(x1,x2,marker='x')

#marker sets the shape of the points; edgecolor sets the color of their edges

anomaly_data = plt.scatter(x1[y_predict == -1],x2[y_predict == -1],marker='o',facecolor = 'none',edgecolor='red',s=60)

plt.title('anomaly detection result')

plt.xlabel('x1')

plt.ylabel('x2')

plt.legend((orginal_data,anomaly_data),('orginal_data','anomaly_data'))

plt.show()

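6. Hands-on (III): classifying the iris data set after PCA dimensionality reduction

Task:

- Using iris_data.csv, build a KNN model to classify the data (n_neighbors).

- Standardize the data and visualize the effect on one dimension.

- Run PCA with the same number of dimensions as the original data and inspect the variance ratio of each principal component.

- Keep a suitable number of principal components and visualize the reduced data.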

#load the data

import pandas as pd

import numpy as np

data = pd.read_csv('iris_data.csv')

data.head()

#define x and y

x = data.drop(['target','label'],axis = 1)

y = data.loc[:,'label']

print(x.shape,y.shape)

#establish a KNN model (KNN is supervised learning)

from sklearn.neighbors import KNeighborsClassifier

#n_neighbors sets the number of nearest neighbors to look for; the default is 5

KNN = KNeighborsClassifier(n_neighbors = 3)

KNN.fit(x,y)

#calculate the accuracy

from sklearn.metrics import accuracy_score

y_predict = KNN.predict(x)

accuracy = accuracy_score(y,y_predict)

print(accuracy)

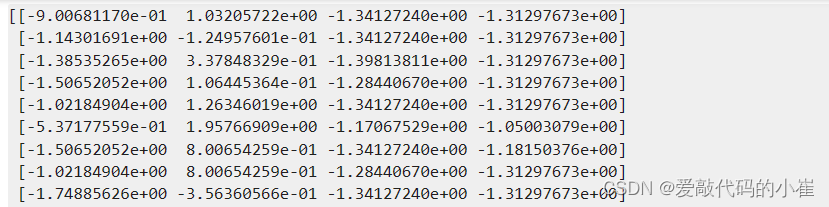

#standardized the data

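#StandardScaler standardizes the data so that each feature has mean 0 and standard deviation 1 (it rescales the data but does not change the shape of its distribution)

#standardization removes the effect of different scales and units across features, so the model treats all features more evenly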

from sklearn.preprocessing import StandardScaler

scaler = StandardScaler()

#fit_transform first calls fit() to compute the mean and standard deviation, then calls transform() to standardize X

x_norm = scaler.fit_transform(x)

print(x_norm)

#calculate the mean and sigma

x1_mean = np.mean(x.loc[:,'sepal length'])

x1_sigma = np.std(x.loc[:,'sepal length'])

x1_norm_mean = np.mean(x_norm[:,0])

x1_norm_sigma = np.std(x_norm[:,0])

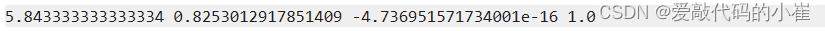

print(x1_mean,x1_sigma,x1_norm_mean,x1_norm_sigma)

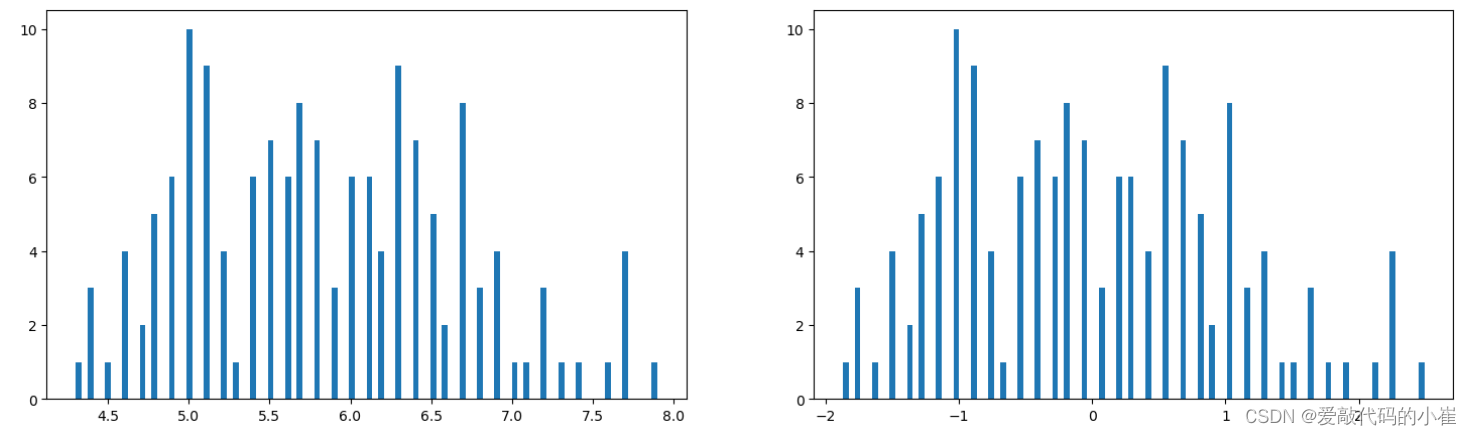

#visualize the data model

from matplotlib import pyplot as plt

fig = plt.figure(figsize = (18,5))

plt.subplot(121)

plt.hist(x.loc[:,'sepal length'],bins = 100)

plt.subplot(122)

plt.hist(x_norm[:,0],bins = 100)

plt.show()

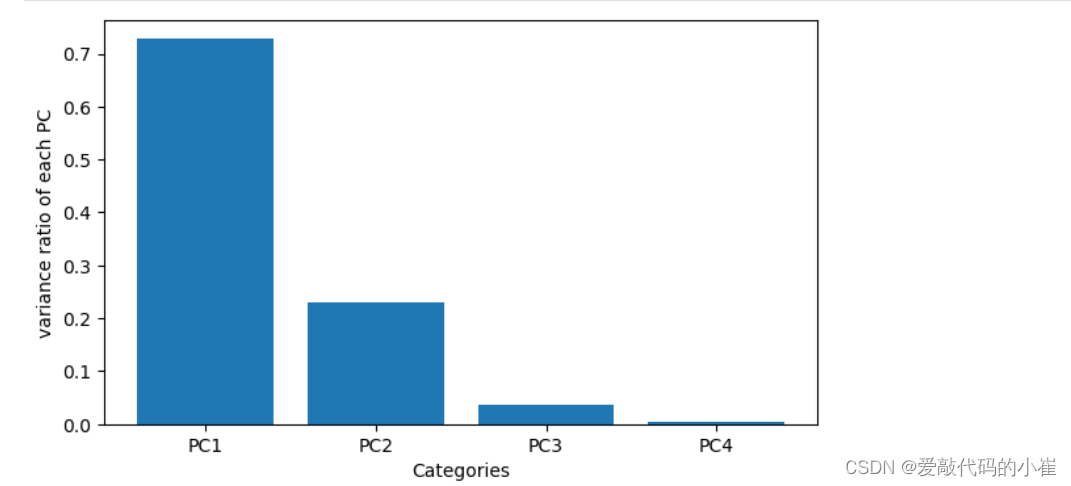

#PCA analysis

#the decomposition module holds matrix decomposition techniques, which are widely used for dimensionality reduction

from sklearn.decomposition import PCA

#n_components is the number of principal components

pca = PCA(n_components=4)

pca.fit(x_norm)

#calculate the variance ratio of each principle components

#pca.explained_variance_ratio_ is the proportion of the original data's variance explained by each principal component

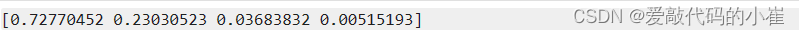

var_ratio = pca.explained_variance_ratio_

print(var_ratio)

#visualize the original variance ratio model

fig2 = plt.figure(figsize = (7,4))

plt.bar([1,2,3,4],var_ratio)

plt.xticks([1,2,3,4],['PC1','PC2','PC3','PC4'])

plt.ylabel('variance ratio of each PC')

plt.xlabel('Categories')

plt.show()

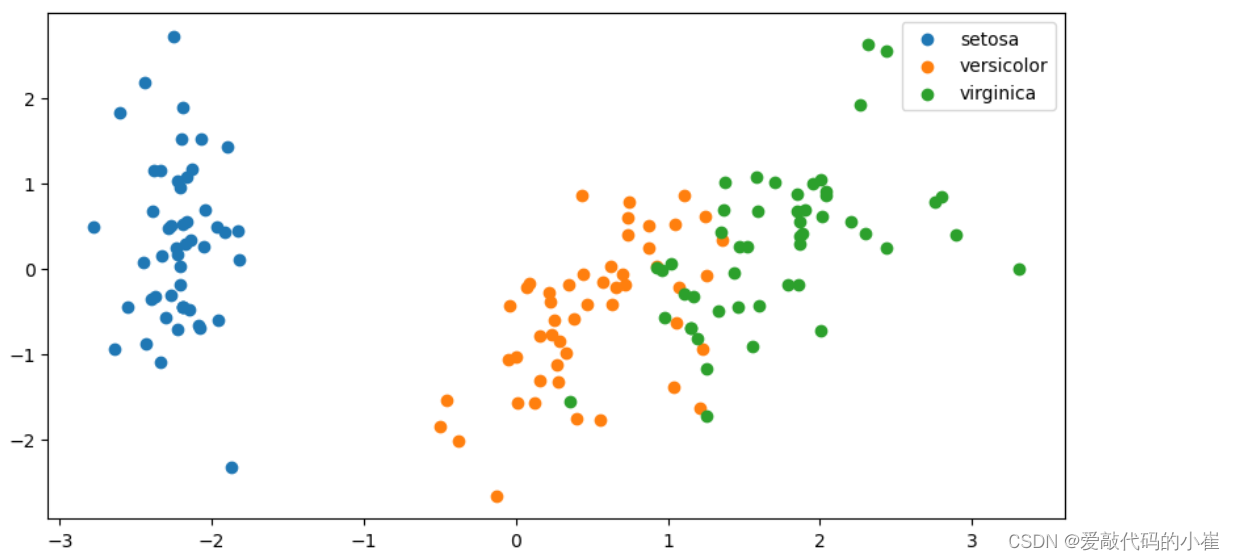

#dimensionality reduction

pca_new = PCA(n_components=2)

#fit_transform first fits the PCA model on the data set X, then immediately reduces the dimensionality of that same data set

x_pca = pca_new.fit_transform(x_norm)

print(x_pca.shape)

#visualize the PCA result

fig3 = plt.figure(figsize = (10,5))

setosa = plt.scatter(x_pca[:,0][y == 0],x_pca[:,1][y == 0])

versicolor = plt.scatter(x_pca[:,0][y == 1],x_pca[:,1][y == 1])

virginica = plt.scatter(x_pca[:,0][y == 2],x_pca[:,1][y == 2])

plt.legend((setosa,versicolor,virginica),('setosa','versicolor','virginica'))

plt.show()

#calculate the dimensionality reduction data model accuracy

KNN_new = KNeighborsClassifier(n_neighbors=3)

KNN_new.fit(x_pca,y)

y_predict_pca = KNN_new.predict(x_pca)

accuracy_pca = accuracy_score(y,y_predict_pca)

print(accuracy_pca)

V. Model Evaluation and Optimization

1. Overfitting and Underfitting

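- Causes of overfitting:

  - The model structure is too complex (too many dimensions)

  - Too many attributes are used, so the model picks up noise during training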

- Remedies:

  - Simplify the model structure (use a lower-order model, such as a linear model)

  - Preprocess the data and keep only the principal components (apply PCA)

  - Add a regularization term when training the model

Loss function $J$ for linear regression with regularization:

$$J = \frac{1}{2m}\sum_{i=1}^{m}\left(g(\theta, x_i) - y_i\right)^2 + \frac{\lambda}{2m}\sum_{j=1}^{n}\theta_j^2$$

With the regularization term in place, a large $\lambda$ constrains the values of $\theta$, which effectively limits the influence of each attribute.
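The shrinking effect of a larger $\lambda$ can be observed with scikit-learn's `Ridge`, which adds an L2 penalty to linear regression. This is a rough sketch on made-up synthetic data; `Ridge` minimizes $\|y - Xw\|^2 + \alpha\|w\|^2$, so its `alpha` corresponds to $\lambda$ only up to the $1/(2m)$ scaling in the formula above.

```python
import numpy as np
from sklearn.linear_model import Ridge

# Hypothetical synthetic data: y depends on the first feature only, plus noise
rng = np.random.default_rng(0)
X_demo = rng.normal(size=(50, 3))
y_demo = 3.0 * X_demo[:, 0] + rng.normal(scale=0.5, size=50)

# Fit the same model with increasingly strong regularization
norms = []
for alpha in [0.01, 1.0, 100.0]:
    model = Ridge(alpha=alpha).fit(X_demo, y_demo)
    norms.append(np.linalg.norm(model.coef_))
    print(alpha, model.coef_)
```

The length of the coefficient vector decreases as `alpha` grows, which is the constraint on $\theta$ described above.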

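- Causes of underfitting:

  - The model structure is too simple (too few dimensions)

  - Insufficient training: too few iterations, so the model parameters are not fully optimized

- Remedies:

  - Use a more complex model structure

  - Improve the training process

  - Lower the regularization parameter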

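2. Data Splitting and the Confusion Matrix

- Data splitting:

  - Split the data into two parts: a training set and a test set

  - Train the model on the training set

  - Predict on the test set, which gives a more reliable estimate of how the model performs on new data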
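The splitting steps can be sketched with `train_test_split` from scikit-learn. The array contents and the 30% test fraction below are made up for illustration:

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Hypothetical data: 10 samples with 2 features each, labels alternate 0/1
X_demo = np.arange(20).reshape(10, 2)
y_demo = np.array([0, 1] * 5)

# Hold out 30% of the samples; random_state fixes the shuffle so the split is repeatable
X_tr, X_te, y_tr, y_te = train_test_split(
    X_demo, y_demo, test_size=0.3, random_state=0
)
print(X_tr.shape, X_te.shape)
```

The model is then fitted on `X_tr, y_tr` only, and `X_te, y_te` are kept aside for evaluation.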

- Confusion matrix

  Used to measure how accurately a classification algorithm predicts

| | Predicted: 0 | Predicted: 1 |
|---|---|---|
| Actual: 0 | True Negative (TN) | False Positive (FP) |
| Actual: 1 | False Negative (FN) | True Positive (TP) |

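True Negative (TN): number of correctly predicted samples that are actually negative (actual 0, predicted 0)

True Positive (TP): number of correctly predicted samples that are actually positive (actual 1, predicted 1)

False Positive (FP): number of incorrectly predicted samples that are actually negative (actual 0, predicted 1)

False Negative (FN): number of incorrectly predicted samples that are actually positive (actual 1, predicted 0)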
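The four counts can be read directly from scikit-learn's `confusion_matrix`. A small sketch with made-up labels:

```python
from sklearn.metrics import confusion_matrix

# Hypothetical labels for illustration
y_true = [0, 0, 0, 0, 1, 1, 1, 1, 1, 1]
y_pred = [0, 0, 0, 1, 1, 1, 1, 1, 0, 0]

# Rows are actual classes, columns are predicted classes, both ordered 0 then 1
cm_demo = confusion_matrix(y_true, y_pred)

# For binary labels, ravel() flattens the matrix in the order TN, FP, FN, TP
tn, fp, fn, tp = cm_demo.ravel()
print(cm_demo)
print(tn, fp, fn, tp)   # 3 1 2 4
```

Of the four actual negatives, three are predicted 0 (TN) and one is predicted 1 (FP); of the six actual positives, four are predicted 1 (TP) and two are predicted 0 (FN).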

| Metric | Formula | Definition |
|---|---|---|
| Accuracy | $\frac{TP + TN}{TP + TN + FP + FN}$ | Fraction of all samples that are predicted correctly |
| Error rate | $\frac{FP + FN}{TP + TN + FP + FN}$ | Fraction of all samples that are predicted incorrectly |
| Recall | $\frac{TP}{TP + FN}$ | Fraction of positive samples that are predicted correctly |
| Specificity | $\frac{TN}{TN + FP}$ | Fraction of negative samples that are predicted correctly |
| Precision | $\frac{TP}{TP + FP}$ | Of the samples predicted positive, the fraction predicted correctly |
| F1 score | $\frac{2 \cdot Precision \cdot Recall}{Precision + Recall}$ | A single metric that combines Precision and Recall |

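- Characteristics of confusion-matrix metrics:

  - For classification tasks, the confusion matrix gives a fuller picture of the model (TP, TN, FP, FN) than prediction accuracy alone

  - Many different performance metrics can be computed from the confusion matrix, which helps in choosing a model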
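The formulas in the table translate directly into code. The helper below is a hypothetical illustration (the function name and the counts are made up), and it does not guard against division by zero when a denominator is empty:

```python
def classification_rates(tp, tn, fp, fn):
    """Compute the rates in the table above from the four confusion-matrix counts."""
    total = tp + tn + fp + fn
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return {
        "accuracy": (tp + tn) / total,
        "error_rate": (fp + fn) / total,
        "recall": recall,
        "specificity": tn / (tn + fp),
        "precision": precision,
        "f1": 2 * precision * recall / (precision + recall),
    }

# Hypothetical counts: 4 TP, 3 TN, 1 FP, 2 FN
rates = classification_rates(tp=4, tn=3, fp=1, fn=2)
for name, value in rates.items():
    print(f"{name}: {value:.3f}")
```

With these counts the accuracy is 0.7, yet recall (about 0.667) and specificity (0.75) differ, which accuracy alone would not reveal.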

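3. Model Optimization

Data quality sets the upper limit on model performance!

Goal: once the model type is chosen, make the model perform better

Methods:

1. Iterate over combinations of the core parameters and evaluate each resulting model (for example, polynomial boundary functions for logistic regression, or different n_neighbors values for KNN)

2. Enlarge the data sample

3. Add or remove data attributes

4. Reduce the dimensionality of the data

5. Regularize the model and tune the regularization coefficient $\lambda$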

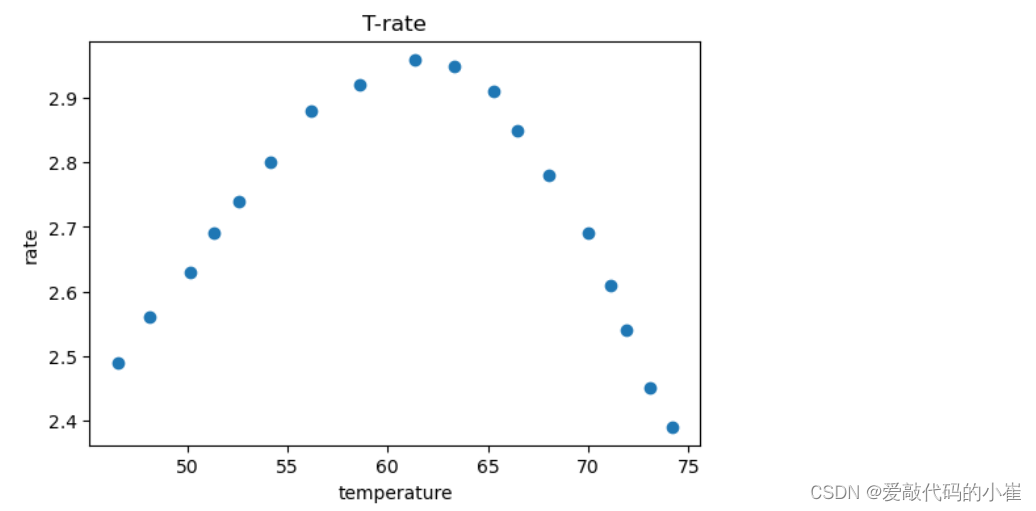
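Method 1 (iterating over core parameters) can be automated with `GridSearchCV`, which scores each candidate using cross-validation. The following is a sketch under stated assumptions: the bundled iris data is only a stand-in dataset, and the candidate range 1 to 10 is arbitrary.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import GridSearchCV
from sklearn.neighbors import KNeighborsClassifier

# Assumption: the bundled iris data is used only as a stand-in dataset
X_demo, y_demo = load_iris(return_X_y=True)

# Candidate values for the core parameter; the range is an arbitrary illustration
param_grid = {"n_neighbors": list(range(1, 11))}

# 5-fold cross-validation scores every candidate on held-out folds
search = GridSearchCV(KNeighborsClassifier(), param_grid, cv=5, scoring="accuracy")
search.fit(X_demo, y_demo)

print(search.best_params_, search.best_score_)
```

Because every candidate is scored on folds it was not trained on, the selected value is less likely to reflect overfitting to a single split.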

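4. Hands-on (1): Enzyme Activity Prediction

Task:

- Using T-R-train.csv, build a linear regression model, compute its r2 score on T-R-test.csv, and visualize the model's predictions

- Add polynomial features (degree 2 and degree 5) and build regression models

- Compute the r2 score of each polynomial regression model on the test data and determine which model predicts more accurately

- Visualize the predictions of the polynomial regression models and determine which model predicts more accurately

Linear regression model with first-order features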

#load the data

import pandas as pd

import numpy as np

data_train = pd.read_csv('T-R-train.csv')

data_train.head()

#define the x and y

x_train = data_train.loc[:,'T']

y_train = data_train.loc[:,'rate']

print(x_train.shape,y_train.shape)

#visualize the data

from matplotlib import pyplot as plt

fig1 = plt.figure(figsize = (6,4))

plt.scatter(x_train,y_train)

plt.title('T-rate')

plt.xlabel('temperature')

plt.ylabel('rate')

plt.show()

x_train = np.array(x_train).reshape(-1,1)

print(x_train.shape)

#set up the linear regression model

from sklearn.linear_model import LinearRegression

LR = LinearRegression()

LR.fit(x_train,y_train)

#load the test data

data_test = pd.read_csv('T-R-test.csv')

x_test = data_test.loc[:,'T']

y_test = data_test.loc[:,'rate']

print(x_test.shape,y_test.shape)

x_test = np.array(x_test).reshape(-1,1)

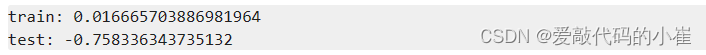

#make prediction on the training and test data

from sklearn.metrics import r2_score

y_train_predict = LR.predict(x_train)

y_test_predict = LR.predict(x_test)

r2_train = r2_score(y_train,y_train_predict)

r2_test = r2_score(y_test,y_test_predict)

print('train:',r2_train)

print('test:',r2_test)

#generate new data

x_range = np.linspace(35,85,300).reshape(-1,1)

y_range_predict = LR.predict(x_range)

#visualize the data

fig2 = plt.figure(figsize = (6,4))

plt.plot(x_range,y_range_predict,'r')

plt.scatter(x_train,y_train)

plt.title('prediction data')

plt.xlabel('temperature')

plt.ylabel('rate')

plt.show()

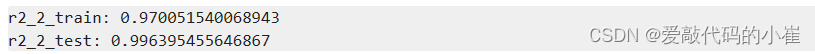

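Linear regression model with second-order polynomial features

#polynomial model

#generate new features

from sklearn.preprocessing import PolynomialFeatures

#degree = 2 generates polynomial features up to degree 2

poly2 = PolynomialFeatures(degree = 2)

#fit_transform generates the degree-2 polynomial features for every sample in x_train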

x2_train = poly2.fit_transform(x_train)

x2_test = poly2.transform(x_test)

print(type(x2_train),x2_train)

LR2 = LinearRegression()

LR2.fit(x2_train,y_train)

y2_train_predict = LR2.predict(x2_train)

y2_test_predict = LR2.predict(x2_test)

r2_2_train = r2_score(y_train,y2_train_predict)

r2_2_test = r2_score(y_test,y2_test_predict)

print('r2_2_train:',r2_2_train)

print('r2_2_test:',r2_2_test)

#generate new data

x2_range = np.linspace(35,85,300).reshape(-1,1)

x2_range =poly2.transform(x2_range)

y2_range_predict = LR2.predict(x2_range)

#visualize the data

fig3 = plt.figure(figsize = (6,4))

plt.plot(x_range,y2_range_predict)

train_data = plt.scatter(x_train,y_train)

test_data= plt.scatter(x_test,y_test)

plt.title('polynomial prediction result (2)')

plt.xlabel('temperature')

plt.ylabel('rate')

plt.legend((train_data,test_data),('train_data','test_data'))

plt.show()

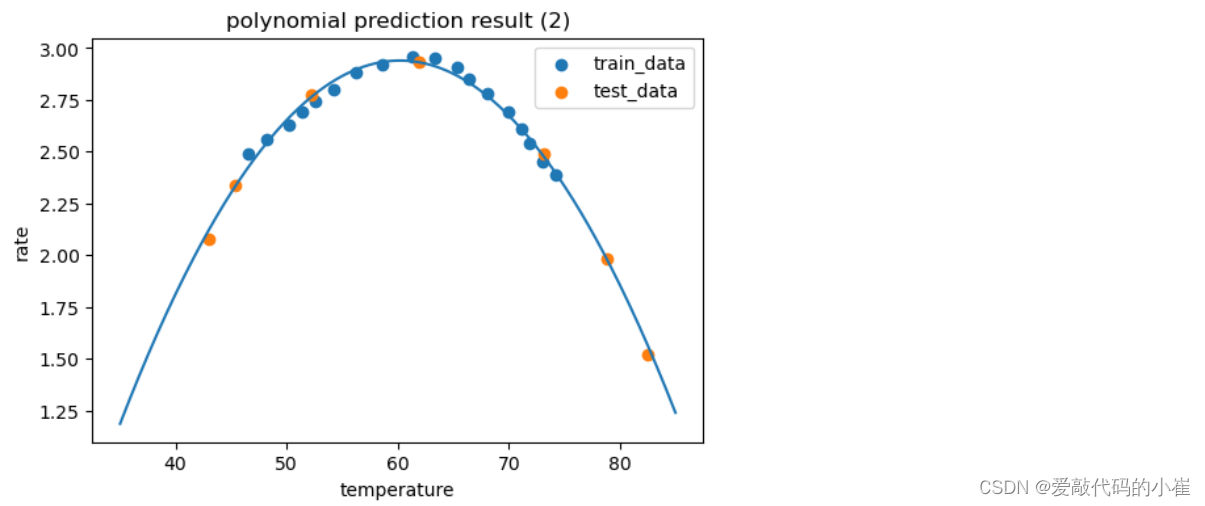
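To see what `PolynomialFeatures` actually produces, it helps to run it on a tiny input. A minimal sketch with two made-up samples of a single feature:

```python
import numpy as np
from sklearn.preprocessing import PolynomialFeatures

# Hypothetical single-feature input with two samples
t_demo = np.array([[2.0], [3.0]])

# For one input feature x, degree=2 produces the columns [1, x, x^2]
poly_demo = PolynomialFeatures(degree=2)
t_poly = poly_demo.fit_transform(t_demo)
print(t_poly)
# [[1. 2. 4.]
#  [1. 3. 9.]]
```

The leading column of ones is a bias term. `LinearRegression` fits its own intercept, so that column is redundant there; passing `include_bias=False` would drop it.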

Linear regression model with fifth-order polynomial features

poly5 = PolynomialFeatures(degree=5)

x5_train = poly5.fit_transform(x_train)

x5_test = poly5.transform(x_test)

LR3 = LinearRegression()

LR3.fit(x5_train,y_train)

y5_train_predict = LR3.predict(x5_train)

y5_test_predict = LR3.predict(x5_test)

r2_5_train = r2_score(y_train,y5_train_predict)

r2_5_test = r2_score(y_test,y5_test_predict)

print('r2_5_train:',r2_5_train)

print('r2_5_test:',r2_5_test)

x5_range = np.linspace(35,85,300).reshape(-1,1)

x5_range = poly5.transform(x5_range)

y5_range_predict = LR3.predict(x5_range)

fig4 = plt.figure(figsize = (6,4))

plt.plot(x_range,y5_range_predict)

train_data = plt.scatter(x_train,y_train)

test_data= plt.scatter(x_test,y_test)

plt.title('polynomial prediction result (5)')

plt.xlabel('temperature')

plt.ylabel('rate')

plt.legend((train_data,test_data),('train_data','test_data'))

plt.show()

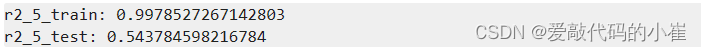
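The three models above repeat the same steps with a different degree, so the comparison can also be written as a loop over a pipeline. This is a sketch on made-up synthetic data (a noisy quadratic, not the T-R data), so the printed scores say nothing about the enzyme data set:

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.metrics import r2_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import PolynomialFeatures

# Hypothetical synthetic data: a noisy quadratic (not the T-R data)
rng = np.random.default_rng(0)
x_all = rng.uniform(-3, 3, size=(60, 1))
y_all = 1.0 - 0.5 * x_all[:, 0] ** 2 + rng.normal(scale=0.3, size=60)
x_tr, y_tr = x_all[:40], y_all[:40]
x_te, y_te = x_all[40:], y_all[40:]

scores = {}
for degree in (1, 2, 5):
    # The pipeline expands the features, then fits the linear model on them
    model = make_pipeline(PolynomialFeatures(degree=degree), LinearRegression())
    model.fit(x_tr, y_tr)
    scores[degree] = (r2_score(y_tr, model.predict(x_tr)),
                      r2_score(y_te, model.predict(x_te)))
    print(degree, scores[degree])
```

The training r2 can only go up as the degree increases, because each higher-degree model contains the lower-degree one. The test r2 is the number that shows whether the extra flexibility helps on new data.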

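5. Hands-on (2): Predicting Good vs. Bad Quality

Task:

- Using data_class_raw.csv, find anomalies based on the Gaussian probability density function and remove them

- Using data_class_processed.csv, apply PCA to determine the important dimensions and components

- Split the data with parameters random_state=4, test_size=0.4

- Build a KNN classifier with n_neighbors=10, compute the classification accuracy, and visualize the decision boundary

- Compute the confusion matrix for the test set, then the accuracy, recall, specificity, precision, and F1 score

- Try different n_neighbors values (1-20), compute the accuracy on the training and test sets for each, and plot the results

Finding anomalies based on the Gaussian probability density function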

#load the data

import pandas as pd

import numpy as np

data = pd.read_csv('data_class_raw.csv')

data.head()

#define x and y

x = data.drop(['y'],axis = 1)

y = data.loc[:,'y']

print(x.shape,y.shape)

#visualize the data

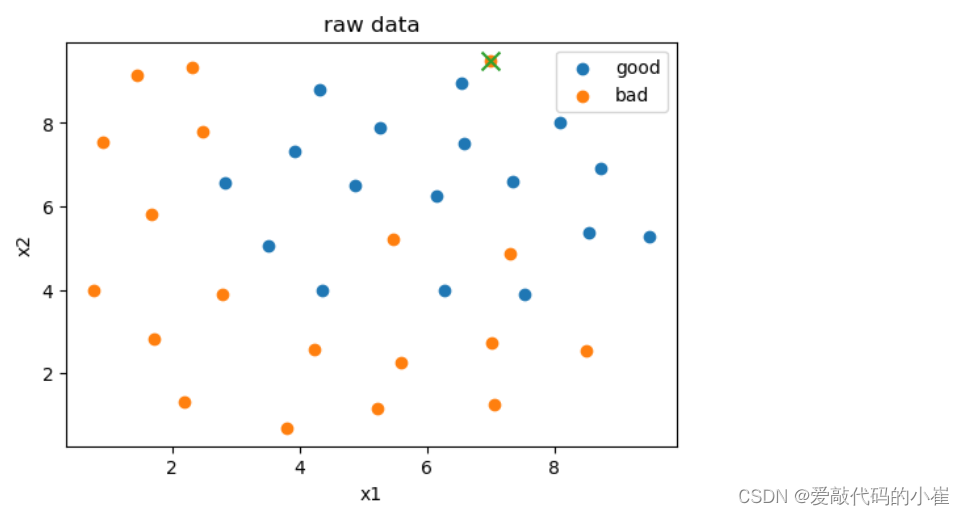

from matplotlib import pyplot as plt

fig = plt.figure(figsize = (6,4))

good = plt.scatter(data.loc[:,'x1'][y==1],data.loc[:,'x2'][y==1])

bad = plt.scatter(data.loc[:,'x1'][y==0],data.loc[:,'x2'][y==0])

plt.title('raw data')

plt.xlabel('x1')

plt.ylabel('x2')

plt.legend((good,bad),('good','bad'))

plt.show()

#anomaly detection

from sklearn.covariance import EllipticEnvelope

ad_model = EllipticEnvelope(contamination=0.02)

ad_model.fit(x[y==0])

y_predict_bad = ad_model.predict(x[y==0])

print(y_predict_bad)

from matplotlib import pyplot as plt

fig1 = plt.figure(figsize = (6,4))

good = plt.scatter(data.loc[:,'x1'][y==1],data.loc[:,'x2'][y==1])

bad = plt.scatter(data.loc[:,'x1'][y==0],data.loc[:,'x2'][y==0])

plt.scatter(data.loc[:,'x1'][y==0][y_predict_bad==-1],data.loc[:,'x2'][y==0][y_predict_bad==-1],marker = 'x',s = 100)

plt.title('raw data')

plt.xlabel('x1')

plt.ylabel('x2')

plt.legend((good,bad),('good','bad'))

plt.show()
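The code above marks the anomalies but does not remove them. One way to drop the flagged rows is to filter on the label returned by `EllipticEnvelope`. The sketch below uses made-up Gaussian points with two injected far-away points rather than data_class_raw.csv:

```python
import numpy as np
from sklearn.covariance import EllipticEnvelope

# Hypothetical data: 100 Gaussian points plus 2 far-away points
rng = np.random.default_rng(0)
points = np.vstack([rng.normal(loc=0.0, scale=1.0, size=(100, 2)),
                    [[8.0, 8.0], [-9.0, 7.0]]])

detector = EllipticEnvelope(contamination=0.02, random_state=0)
labels = detector.fit_predict(points)   # +1 = inlier, -1 = outlier

# Keep only the rows labelled as inliers
points_clean = points[labels == 1]
print(points.shape, points_clean.shape)
```

`contamination` is the assumed fraction of outliers, so roughly that share of the points is flagged whether or not they are truly anomalous. It is worth checking the flagged points on a plot before discarding them.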

Applying PCA

#load the data

import pandas as pd

import numpy as np

data = pd.read_csv('data_class_processed.csv')

data.head()

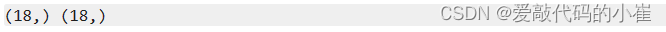

#define x and y

x = data.drop(['y'],axis = 1)

y = data.loc[:,'y']

print(x.shape,y.shape)

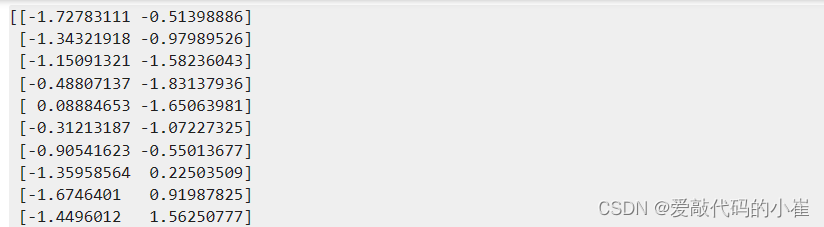

#standardized the data

from sklearn.preprocessing import StandardScaler

scaler = StandardScaler()

x_norm = scaler.fit_transform(x)

print(x_norm)

#PCA analysis

from sklearn.decomposition import PCA

pca = PCA(n_components=2)

pca.fit(x_norm)

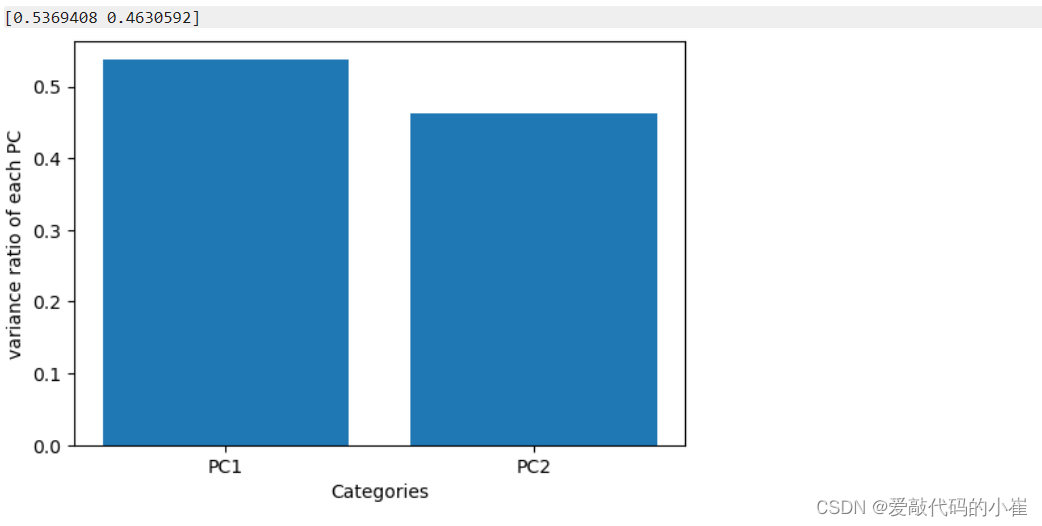

#calculate the variance ratio of each principal component

var_ratio = pca.explained_variance_ratio_

print(var_ratio)

#visualize the original variance ratio model

plt2 = plt.figure(figsize = (6,4))

plt.bar([1,2],var_ratio)

plt.xticks([1,2],['PC1','PC2'])

plt.ylabel('variance ratio of each PC')

plt.xlabel('Categories')

plt.show()

Splitting the data

#train and test split: random_state=4, test_size=0.4

from sklearn.model_selection import train_test_split

x_train,x_test,y_train,y_test = train_test_split(x,y,random_state=4,test_size=0.4)

print(x_train.shape,x_test.shape)

Building the KNN model

#set up the KNN model

from sklearn.neighbors import KNeighborsClassifier

KNN = KNeighborsClassifier(n_neighbors=10)

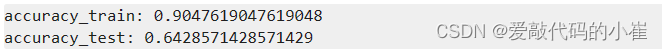

KNN.fit(x_train,y_train)

#calculate the accuracy

from sklearn.metrics import accuracy_score

y_train_predict = KNN.predict(x_train)

y_test_predict = KNN.predict(x_test)

accuracy_train = accuracy_score(y_train,y_train_predict)

accuracy_test = accuracy_score(y_test,y_test_predict)

print('accuracy_train:',accuracy_train)

print('accuracy_test:',accuracy_test)

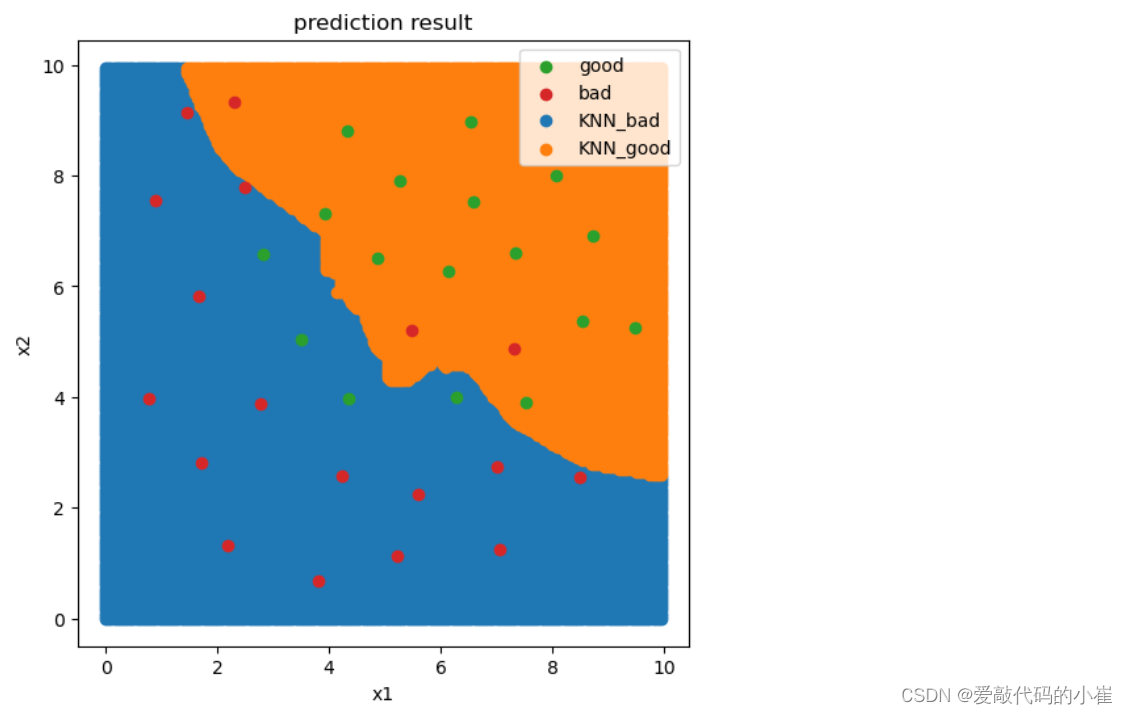

#visualize the knn result and boundary

#create the grid coordinates

xx,yy = np.meshgrid(np.arange(0,10,0.05),np.arange(0,10,0.05))

print(xx,xx.shape)

#flatten the grid and stack the coordinates into (x1, x2) pairs

x_range = np.c_[xx.ravel(),yy.ravel()]

print(x_range,x_range.shape)

y_range_predict = KNN.predict(x_range)

fig4 = plt.figure(figsize = (6,6))

KNN_bad = plt.scatter(x_range[:,0][y_range_predict==0],x_range[:,1][y_range_predict==0])

KNN_good = plt.scatter(x_range[:,0][y_range_predict==1],x_range[:,1][y_range_predict==1])

good = plt.scatter(data.loc[:,'x1'][y==1],data.loc[:,'x2'][y==1])

bad = plt.scatter(data.loc[:,'x1'][y==0],data.loc[:,'x2'][y==0])

plt.title('prediction result')

plt.xlabel('x1')

plt.ylabel('x2')

plt.legend((good,bad,KNN_bad,KNN_good),('good','bad','KNN_bad','KNN_good'))

plt.show()

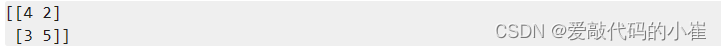

Computing the confusion matrix and the derived rates

from sklearn.metrics import confusion_matrix

cm = confusion_matrix(y_test,y_test_predict)

print(cm)

TP = cm[1,1]

TN = cm[0,0]

FP = cm[0,1]

FN = cm[1,0]

print(TP,TN,FP,FN)

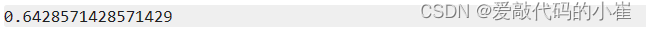

accuracy = (TP + TN) / (TP + TN + FP + FN)

print(accuracy)

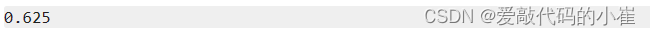

recall = TP / (TP + FN)

print(recall)

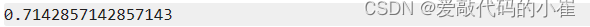

specificity = TN / (TN + FP)

print(specificity)

precision = TP / (TP + FP)

print(precision)

f1 = 2 * precision * recall / (precision + recall)

print(f1)
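The hand-computed rates can be cross-checked against the functions in `sklearn.metrics`. A brief sketch with made-up labels (not the predictions above); scikit-learn has no dedicated specificity function, so it is obtained here as the recall of the negative class:

```python
from sklearn.metrics import f1_score, precision_score, recall_score

# Hypothetical labels for illustration
y_true = [0, 0, 0, 0, 1, 1, 1, 1, 1, 1]
y_pred = [0, 0, 0, 1, 1, 1, 1, 1, 0, 0]

recall_demo = recall_score(y_true, y_pred)        # TP / (TP + FN)
precision_demo = precision_score(y_true, y_pred)  # TP / (TP + FP)
f1_demo = f1_score(y_true, y_pred)
# Specificity is the recall of the negative class, so treat 0 as the "positive" label
specificity_demo = recall_score(y_true, y_pred, pos_label=0)
print(recall_demo, precision_demo, f1_demo, specificity_demo)
```

If the values from these functions disagree with the manual formulas, the usual culprit is a mix-up in which matrix cell is FP and which is FN.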

Computing the accuracy for different n_neighbors values (1-20)

#try different k and calculate the accuracy for each

n = [i for i in range(1,21)]

accuracy_train = []

accuracy_test = []

for i in n:

    knn = KNeighborsClassifier(n_neighbors=i)

    knn.fit(x_train,y_train)

    y_train_predict = knn.predict(x_train)

    y_test_predict = knn.predict(x_test)

    accuracy_train_i = accuracy_score(y_train,y_train_predict)

    accuracy_test_i = accuracy_score(y_test,y_test_predict)

    accuracy_train.append(accuracy_train_i)

    accuracy_test.append(accuracy_test_i)

print(accuracy_train,accuracy_test)

fig5 = plt.figure(figsize = (15,5))

plt.subplot(121)

plt.plot(n,accuracy_train,marker = 'o')

plt.title('training accuracy vs n_neighbors')

plt.xlabel('n_neighbors')

plt.ylabel('accuracy')

plt.subplot(122)

plt.plot(n,accuracy_test,marker = 'o')

plt.title('test accuracy vs n_neighbors')

plt.xlabel('n_neighbors')

plt.ylabel('accuracy')

plt.show()
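Once the accuracies are collected, the best value can be read off the list rather than the plot. A small sketch with made-up accuracy values (these are not results from the data above):

```python
import numpy as np

# Hypothetical accuracy values for k = 1..5 (not results from the data above)
k_values = [1, 2, 3, 4, 5]
test_scores = [0.50, 0.55, 0.70, 0.65, 0.70]

# np.argmax returns the first index of the maximum, so ties go to the smaller k
best_index = int(np.argmax(test_scores))
best_k = k_values[best_index]
print(best_k, test_scores[best_index])   # 3 0.7
```

Picking the value by test accuracy makes the test score optimistic, since the test set has then influenced the model. A separate validation set or cross-validation is the safer basis for this choice.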