JSON is a lightweight data-interchange format that is also commonly used for configuration files.

Files in this format come up often in data processing.

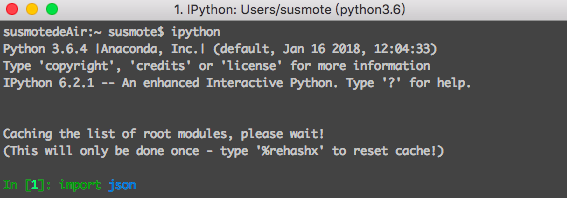

Python ships with a built-in json module; you just need to import it before use.

You can view the help documentation for json with the help function.

The commonly used functions in json are load, loads, dump and dumps. These are Python basics, so I won't explain them in much detail.
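As a quick refresher, here is a minimal sketch of the four functions. The sample dictionary and the file name sample.json are made up for illustration.

```python
import json
import os
import tempfile

# Hypothetical sample data, used only for illustration.
place = {"name": "Example Park", "tel": "000-0000", "tags": ["park", "free"]}

# dumps: Python object -> JSON string
text = json.dumps(place, ensure_ascii=False)

# loads: JSON string -> Python object
restored = json.loads(text)

# dump / load do the same conversions, but write to and read from a file object
with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "sample.json")
    with open(path, "w", encoding="utf-8") as f:
        json.dump(place, f, ensure_ascii=False)
    with open(path, encoding="utf-8") as f:
        from_file = json.load(f)

print(type(text).__name__, type(restored).__name__)
print(from_file == place)
```

The versions ending in "s" work on strings, while the ones without it work on file objects.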

The json module also works well alongside a database, which is very useful later on when dealing with large amounts of data.
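One possible way to combine the two is to store each record as a JSON string in a table. This is only a sketch: I'm assuming SQLite from the standard library here, and the table name poi and the sample records are invented for the example.

```python
import json
import sqlite3

# Hypothetical records standing in for parsed JSON responses.
records = [
    {"id": "A1", "name": "Example Park", "city": "Example City"},
    {"id": "B2", "name": "Example Tower", "city": "Example City"},
]

conn = sqlite3.connect(":memory:")  # in-memory database, nothing is written to disk
conn.execute("CREATE TABLE poi (id TEXT PRIMARY KEY, raw TEXT)")

# Store each record as a JSON string next to its id.
conn.executemany(
    "INSERT INTO poi (id, raw) VALUES (?, ?)",
    [(r["id"], json.dumps(r, ensure_ascii=False)) for r in records],
)
conn.commit()

# Read one row back and turn the JSON string into a dictionary again.
row = conn.execute("SELECT raw FROM poi WHERE id = ?", ("B2",)).fetchone()
loaded = json.loads(row[0])
print(loaded["name"])
conn.close()
```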

Next, let's get into actually processing JSON data as part of a data-mining task.

Many websites now load their data with Ajax, so the responses usually show up as XHR requests in the browser's developer tools.

Here I'll use a map website as a demonstration.

Using the browser's debugging tools, we find the relevant URL:

https://ditu.amap.com/service/poiInfo?id=B001B0IZY1&query_type=IDQ

Next, we simulate the HTTP request sent by the browser using the get method of the requests module, which returns a response object.

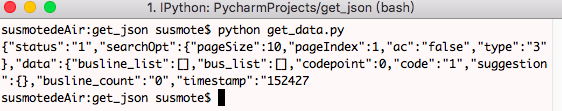

The code is as follows:

    # coding=utf-8
    __Author__ = "susmote"

    import requests

    url = "https://ditu.amap.com/service/poiInfo?id=B001B0IZY1&query_type=IDQ"
    resp = requests.get(url)
    print(resp.text[0:200])

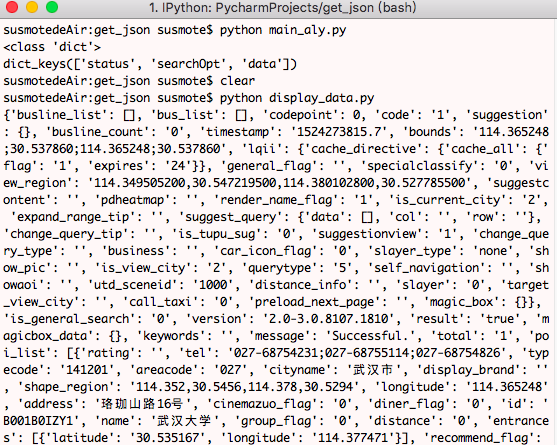

The result of running it in the terminal is as follows

We now have the data, but to work with it we need to parse it with the json module.

The code is as follows:

    import requests
    import json

    url = "https://ditu.amap.com/service/poiInfo?id=B001B0IZY1&query_type=IDQ"
    resp = requests.get(url)
    json_dict = json.loads(resp.text)
    print(type(json_dict))
    print(json_dict.keys())

Briefly describe the code above:

Import the json module, then call the loads method, passing the returned text as a parameter of the method
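One thing worth guarding against: loads raises json.JSONDecodeError when the text is not valid JSON, for instance if the server sends back an HTML error page. Here is a small sketch of handling that. The parse_json helper and both sample strings are my own, not part of the program above.

```python
import json


def parse_json(text):
    """Return the parsed object, or None if text is not valid JSON."""
    try:
        return json.loads(text)
    except json.JSONDecodeError as err:
        print("Not valid JSON:", err)
        return None


# Hypothetical response bodies, for illustration only.
good = parse_json('{"status": "1", "data": {"poi_list": []}}')
bad = parse_json("<html>Service unavailable</html>")

print(type(good))
print(bad)
```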

The result of running it in the terminal is as follows

It can be seen that the result of the conversion is a dictionary corresponding to the json string, because type(json_dict) returns <class 'dict'>

Because the object is a dictionary, we can call dictionary methods on it. Here we call the keys method.

It returns three keys: status, searchOpt and data.
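When a response is deeply nested, calling keys level by level gets tedious. A small recursive helper can outline the whole structure at once. This is just a sketch: describe is a name I made up, the sample string is invented, and it only looks at the first element of each list on the assumption that the list items share the same shape.

```python
import json


def describe(value, indent=0):
    """Return lines outlining nested dicts and lists down to the leaf types."""
    pad = "  " * indent
    lines = []
    if isinstance(value, dict):
        for key, item in value.items():
            lines.append(f"{pad}{key}: {type(item).__name__}")
            lines.extend(describe(item, indent + 1))
    elif isinstance(value, list) and value:
        # Only the first element is inspected, assuming the list is homogeneous.
        lines.append(f"{pad}[0]: {type(value[0]).__name__}")
        lines.extend(describe(value[0], indent + 1))
    return lines


# Hypothetical JSON text with a similar nesting to the response above.
sample = json.loads('{"status": "1", "data": {"poi_list": [{"name": "Example"}]}}')
outline = describe(sample)
print("\n".join(outline))
```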

Now let's look at the data in the data key

    import requests
    import json

    url = "https://ditu.amap.com/service/poiInfo?id=B001B0IZY1&query_type=IDQ"
    resp = requests.get(url)
    json_dict = json.loads(resp.text)
    print(json_dict['data'])

Run this code in the terminal; the result is shown below.

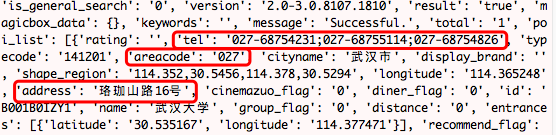

You can see that it contains a lot of the data we need.

I won't mark the fields one by one; you can tell which ones are useful by comparing them with what is displayed on the web page.

Below, we extract the useful information in code and print it clearly.

    # coding=utf-8
    __Author__ = "susmote"

    import requests
    import json

    url = "https://ditu.amap.com/service/poiInfo?id=B001B0IZY1&query_type=IDQ"
    resp = requests.get(url)
    json_dict = json.loads(resp.text)

    # Unpack layer by layer: dict -> dict -> list -> dict
    data_dict = json_dict['data']
    data_list = data_dict['poi_list']
    dis_data = data_list[0]

    # Print the fields we are interested in
    print('City: ', dis_data['cityname'])
    print('Name: ', dis_data['name'])
    print('Tel: ', dis_data['tel'])
    print('area code: ', dis_data['areacode'])
    print('Address: ', dis_data['address'])
    print('Longitude: ', dis_data['longitude'])
    print('Latitude: ', dis_data['latitude'])

The parsed result is a dictionary. Studying its structure shows that this dictionary contains another dictionary, which contains a list, whose elements are dictionaries in turn. We get at the data by unpacking it layer by layer.

I've listed the steps separately here so you can see more clearly
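Once the structure is clear, the same unpacking can be written more compactly and a bit more defensively. The sketch below uses invented data with the same nesting, and first_poi is a helper name of my own. It assumes that data, when present, is a dictionary.

```python
# Hypothetical data with the same nesting as the response described above.
json_dict = {
    "status": "1",
    "data": {
        "poi_list": [
            {"name": "Example Place", "cityname": "Example City", "tel": ""}
        ]
    },
}


def first_poi(response):
    """Return the first entry of data -> poi_list, or an empty dict if absent."""
    poi_list = response.get("data", {}).get("poi_list", [])
    return poi_list[0] if poi_list else {}


poi = first_poi(json_dict)
# .get with a default avoids a KeyError when a field is missing.
print("Name:", poi.get("name", "unknown"))
print("Address:", poi.get("address", "unknown"))

# A response without a data key simply yields an empty dictionary.
empty = first_poi({"status": "0"})
print(empty)
```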

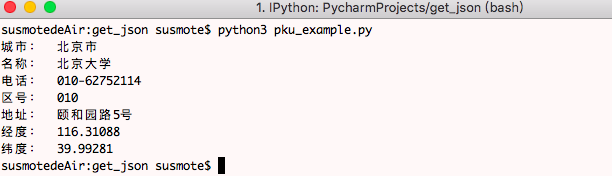

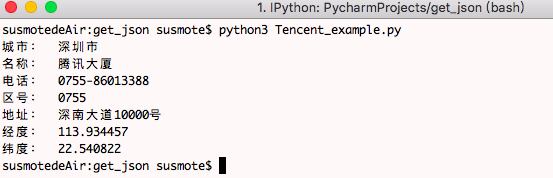

Next, we run the program through the terminal to get the information we want

Simple, isn't it? This program can serve as a template: to get information about other places, you only need to change the URL.

Here are a couple of examples:
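To make the template idea concrete, here is one way the program could be wrapped into functions that take a place id. Treat it as a sketch: the function names are my own, it assumes other places use the same URL pattern and response layout as the example above, and the site may change its interface or require extra headers.

```python
from urllib.parse import urlencode

BASE_URL = "https://ditu.amap.com/service/poiInfo"
FIELDS = ["cityname", "name", "tel", "areacode", "address", "longitude", "latitude"]


def build_url(poi_id):
    """Build the request URL for one place id."""
    return BASE_URL + "?" + urlencode({"id": poi_id, "query_type": "IDQ"})


def extract_fields(json_dict):
    """Pick the fields of interest out of a parsed response."""
    poi = json_dict["data"]["poi_list"][0]
    return {field: poi.get(field) for field in FIELDS}


def fetch_place(poi_id):
    """Download and parse one place. Needs network access and the requests package."""
    import requests  # imported here so the helpers above work without it

    resp = requests.get(build_url(poi_id), timeout=10)
    resp.raise_for_status()  # raise an error on HTTP 4xx/5xx responses
    return extract_fields(resp.json())


print(build_url("B001B0IZY1"))
```

Calling fetch_place with a different id is then the only change needed per place. Note that resp.json() is a shortcut provided by requests for json.loads(resp.text).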

Beijing University

Or Tencent Building

There is no end to data mining. I hope you can analyze the data more and find the data you want.

My blog www.susmote.com