1. Read the mail dataset file and extract each message and its label.

Store them in lists

Convert the lists to NumPy arrays
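As a rough sketch of this step, the snippet below parses tab-separated "label, message" records into two lists using only the standard library. The two sample rows are made up for illustration and are not taken from the real dataset; `quoting=csv.QUOTE_NONE` is an assumption added here so that quote characters inside a message are treated as ordinary text.

```python
import csv
import io

# Two made-up rows in "label<TAB>message" layout (illustrative only)
sample = "ham\tSee you at lunch\nspam\tWin a free prize now\n"

labels = []    # one label per message
messages = []  # the message text itself

# QUOTE_NONE: treat quote characters as plain text (an assumption of this sketch)
reader = csv.reader(io.StringIO(sample), delimiter="\t", quoting=csv.QUOTE_NONE)
for row in reader:
    labels.append(row[0])
    messages.append(row[1])

print(labels)
print(messages)
```

Passing each list to `np.array(...)` would then give the NumPy arrays mentioned above.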

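2. Message preprocessing

- Split each message into sentences

- Tokenize each sentence into words

- Normalize case, strip punctuation, and drop very short words

- Lemmatisation: plurals, tenses, comparatives

- Join the tokens back into a single string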

2.1 Implementing it with traditional methods
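One possible way to do the preprocessing steps with nothing but the standard library is sketched below. The helper name `simple_preprocess`, the `min_len` parameter and the regular expressions are assumptions made for illustration, and the plural handling is a crude suffix strip, not real lemmatisation.

```python
import re

def simple_preprocess(text, min_len=3):
    """Preprocess text with the standard library only (hypothetical helper)."""
    # Split into sentences at '.', '!' or '?' followed by whitespace
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    tokens = []
    for sent in sentences:
        # Lowercase, then keep runs of letters; punctuation and digits are dropped
        tokens.extend(re.findall(r"[a-z]+", sent.lower()))
    # Drop very short words
    tokens = [t for t in tokens if len(t) >= min_len]
    # Crude plural handling: strip a single trailing "s" from longer words
    tokens = [
        t[:-1] if t.endswith("s") and not t.endswith("ss") and len(t) > 3 else t
        for t in tokens
    ]
    # Join back into one string
    return " ".join(tokens)

print(simple_preprocess("I am in office. The last few days were long!"))
```

A rule-based approach like this misses irregular forms (for example "made" or "best"), which is the gap a lemmatizer is meant to fill.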

2.2 Installing and using the nltk library

pip install nltk

import nltk

nltk.download() # change the server address to http://www.nltk.org/nltk_data/

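or

Download the gh-pages branch of https://github.com/nltk/nltk_data; the packages folder inside it holds the resources we need.

Rename the packages folder to nltk_data.

or

Netdisk link: https://pan.baidu.com/s/1iJGCrz4fW3uYpuquB5jbew Extraction code: o5ea

Place the folder in your user directory.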

----------------------------------

Once installation is complete, the following commands show the nltk version:

import nltk

print(nltk.__version__)

2.1 Tokenizing with nltk

nltk.sent_tokenize(text) # split the text into sentences

nltk.word_tokenize(sent) # split a sentence into words

2.2 punkt and stopwords

from nltk.corpus import stopwords

stops=stopwords.words('english')

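* If you are prompted to download punkt:

nltk.download('punkt')

or download punkt.zip

https://pan.baidu.com/s/1OwLB0O8fBWkdLx8VJ-9uNQ Password: mema

Copy it into the directory where the download failed, C:\Users\Administrator\AppData\Roaming\nltk_data\tokenizers, and unzip it there.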

2.3 NLTK part-of-speech tagging

nltk.pos_tag(tokens)

2.4 Lemmatisation

from nltk.stem import WordNetLemmatizer

lemmatizer = WordNetLemmatizer()

lemmatizer.lemmatize('leaves') # pos defaults to noun

lemmatizer.lemmatize('best',pos='a')

lemmatizer.lemmatize('made',pos='v')

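In general, tokenize first, then tag each token's part of speech, and only then lemmatize each token according to its tag.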
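The tokenize, tag, lemmatize order can be sketched as follows. `nltk.pos_tag` returns Penn Treebank tags, while `WordNetLemmatizer.lemmatize` expects a WordNet letter such as 'n', 'v', 'a' or 'r', so some mapping is needed in between. The helper names `penn_to_wordnet`, `lemmatize_tagged` and `demo` are hypothetical, and the prefix-based mapping is a common simplification rather than anything prescribed by nltk.

```python
def penn_to_wordnet(tag):
    """Map a Penn Treebank tag to a WordNet POS letter (simplified, hypothetical helper)."""
    if tag.startswith("J"):
        return "a"  # adjective
    if tag.startswith("V"):
        return "v"  # verb
    if tag.startswith("R"):
        return "r"  # adverb
    return "n"      # fall back to noun, the lemmatizer's own default

def lemmatize_tagged(tagged_tokens, lemmatizer):
    """Lemmatize (word, tag) pairs with any object exposing lemmatize(word, pos=...)."""
    return [lemmatizer.lemmatize(word, pos=penn_to_wordnet(tag))
            for word, tag in tagged_tokens]

def demo():
    # Needs nltk plus its tokenizer, tagger and wordnet data to be installed
    import nltk
    from nltk.stem import WordNetLemmatizer
    tokens = nltk.word_tokenize("The leaves were falling")
    return lemmatize_tagged(nltk.pos_tag(tokens), WordNetLemmatizer())
```

Calling `demo()` only works once the nltk resources from the installation steps are available locally.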

2.5 Writing the preprocessing function

def preprocessing(text):

sms_data.append(preprocessing(line[1])) # preprocess each message

3. Training and test sets

4. Word vectors

5. Model

import nltk

from nltk.corpus import stopwords

from nltk.stem import WordNetLemmatizer

text="Yes i think so. I am in office but my lap is in room i think thats on for the last few days. I didnt shut that down"

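# Preprocessing

def preprocessing(text):
    # Split into sentences, then into words, lowercasing each token
    tokens = [word.lower() for sent in nltk.sent_tokenize(text) for word in nltk.word_tokenize(sent)]
    # Remove English stopwords (the list is lowercase, so filter after lowercasing)
    stops = set(stopwords.words('english'))
    tokens = [token for token in tokens if token not in stops]
    # Drop tokens shorter than three characters, which also removes most punctuation
    tokens = [token for token in tokens if len(token) >= 3]
    # Lemmatize; with no pos argument every token is treated as a noun
    lmtzr = WordNetLemmatizer()
    tokens = [lmtzr.lemmatize(token) for token in tokens]
    # Join with spaces so the words stay separable for the vectorizer
    preprocessed_text = " ".join(tokens)
    return preprocessed_text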

# Read the dataset

import csv  # use csv to split each record into its label and its message text

file_path = r"E:\data\SMSSpamCollection.txt"
sms_data = []
sms_label = []

# The file is tab-separated: label first, then the message
with open(file_path, "r", encoding="utf-8") as sms:
    csv_reader = csv.reader(sms, delimiter="\t")
    for line in csv_reader:
        sms_label.append(line[0])
        sms_data.append(line[1])

# Split into training and test sets at a 0.7:0.3 ratio

import numpy as np
from sklearn.model_selection import train_test_split

sms_data = np.array(sms_data)
sms_label = np.array(sms_label)

# stratify keeps the ham/spam proportions the same in both sets
x_train, x_test, y_train, y_test = train_test_split(
    sms_data, sms_label, test_size=0.3, random_state=0, stratify=sms_label)

# Vectorize the text

from sklearn.feature_extraction.text import TfidfVectorizer

vectorizer = TfidfVectorizer(min_df=2, ngram_range=(1, 2), stop_words="english", strip_accents ="unicode", norm ="l2")

X_train = vectorizer.fit_transform(x_train)

X_test = vectorizer.transform(x_test)

# Naive Bayes classifier

from sklearn.naive_bayes import MultinomialNB

clf = MultinomialNB().fit(X_train, y_train)

y_nb_pred = clf.predict(X_test)
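To make the classifier less of a black box, here is a minimal pure-Python sketch of multinomial Naive Bayes with Laplace smoothing. It works on raw word counts from a made-up toy corpus, whereas the script above feeds TF-IDF weights into scikit-learn, so the numbers are not comparable. The function names `train_nb` and `predict_nb` are hypothetical; `log_prior` and `log_prob` play roughly the roles of scikit-learn's `class_log_prior_` and `feature_log_prob_`.

```python
import math
from collections import Counter, defaultdict

def train_nb(docs, labels, alpha=1.0):
    """Fit multinomial Naive Bayes on tokenized docs with additive smoothing."""
    vocab = sorted({w for doc in docs for w in doc})
    class_counts = Counter(labels)
    word_counts = defaultdict(Counter)
    for doc, y in zip(docs, labels):
        word_counts[y].update(doc)
    # log P(y): share of training documents in each class
    log_prior = {y: math.log(n / len(labels)) for y, n in class_counts.items()}
    # log P(w|y): smoothed share of class y's word occurrences that are w
    log_prob = {}
    for y in class_counts:
        total = sum(word_counts[y].values()) + alpha * len(vocab)
        log_prob[y] = {w: math.log((word_counts[y][w] + alpha) / total) for w in vocab}
    return log_prior, log_prob

def predict_nb(doc, log_prior, log_prob):
    """Return the class with the highest log posterior; unseen words are skipped."""
    scores = {
        y: log_prior[y] + sum(log_prob[y][w] for w in doc if w in log_prob[y])
        for y in log_prior
    }
    return max(scores, key=scores.get)

# Made-up toy data, for illustration only
docs = [["free", "prize", "now"], ["free", "cash"], ["see", "you", "soon"], ["lunch", "soon"]]
labels = ["spam", "spam", "ham", "ham"]
log_prior, log_prob = train_nb(docs, labels)
print(predict_nb(["free", "prize"], log_prior, log_prob))
```

Working in log space avoids multiplying many small probabilities together, which is also why scikit-learn exposes log probabilities.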

# Display the classification results

from sklearn.metrics import confusion_matrix

from sklearn.metrics import classification_report

print(y_nb_pred.shape, y_nb_pred) # predictions for x_test

print("nb_confusion_matrix:")

cm = confusion_matrix(y_test, y_nb_pred) # confusion matrix

print(cm)

print("nb_classification_report:")

cr = classification_report(y_test, y_nb_pred) # text report of the main classification metrics

print(cr)
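For reference, the numbers in the confusion matrix and the report can be reproduced by hand. With labels sorted as ham, spam, `confusion_matrix` puts true labels on rows and predictions on columns, i.e. `[[tn, fp], [fn, tp]]` when spam is taken as the positive class. The sketch below computes the same quantities in plain Python; the helper name `binary_metrics` and the six sample labels are made up for illustration.

```python
def binary_metrics(y_true, y_pred, positive="spam"):
    """Count confusion-matrix cells and derive precision, recall and F1."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"tp": tp, "fp": fp, "fn": fn, "tn": tn,
            "precision": precision, "recall": recall, "f1": f1}

# Made-up labels, for illustration only
y_true = ["ham", "ham", "spam", "spam", "ham", "spam"]
y_pred = ["ham", "spam", "spam", "ham", "ham", "spam"]
m = binary_metrics(y_true, y_pred)
print(m)
```

For spam filtering, precision on the spam class is often the number to watch, since a false positive means a legitimate message was flagged.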

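feature_names = vectorizer.get_feature_names_out()  # terms kept in the vocabulary (get_feature_names() in older scikit-learn)

coefs = clf.feature_log_prob_  # log P(x_i|y) per class, shape (n_classes, n_features); coef_ is gone in recent releases

intercept = clf.class_log_prior_  # log P(y), shape (n_classes,); intercept_ is gone in recent releases

coefs_with_fns = sorted(zip(coefs[1], feature_names))  # pair log P(x_i|y) for clf.classes_[1] with each term x_i

n = 10

# Pair the n lowest-scoring terms with the n highest-scoring terms
top = zip(coefs_with_fns[:n], coefs_with_fns[:-(n + 1):-1])

for (coef_1, fn_1), (coef_2, fn_2) in top:
    print("\t%.4f\t%-15s\t\t%.4f\t%-15s" % (coef_1, fn_1, coef_2, fn_2))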

sms_label

print(len(x_train), len(x_test))

print(X_train.shape, X_test.shape)

x_train

X_train

a = X_train.toarray()

a

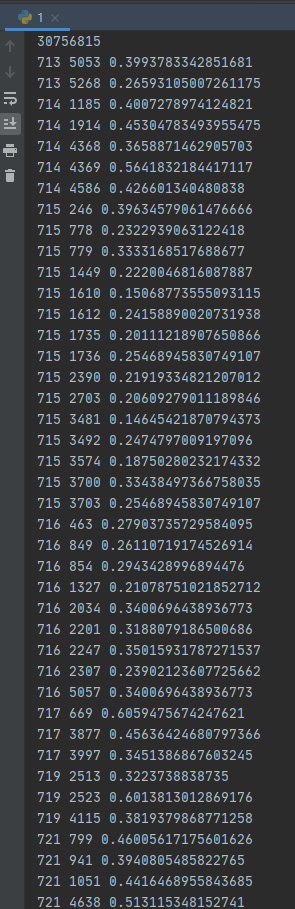

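# Print the non-zero TF-IDF weights of the first 1000 training messages
for i in range(1000):
    for j in range(a.shape[1]):  # every feature column
        if a[i, j] != 0:
            print(i, j, a[i, j])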

vectorizer.get_feature_names_out()[1610]
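The nested loops above visit every cell of the dense array, most of which are zero. A lighter alternative, sketched here on a small made-up matrix standing in for `a`, is to ask NumPy for the non-zero positions directly with `np.nonzero`.

```python
import numpy as np

# Small made-up matrix standing in for a = X_train.toarray()
a = np.array([[0.0, 0.5, 0.0],
              [0.7, 0.0, 0.7]])

# Row and column indices of every non-zero cell, in row-major order
rows, cols = np.nonzero(a)
entries = [(int(i), int(j), float(a[i, j])) for i, j in zip(rows, cols)]

for i, j, value in entries:
    print(i, j, value)
```

SciPy sparse matrices also provide a `nonzero()` method, so it should be possible to apply the same idea to `X_train` without calling `toarray()` first.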