I recently needed to sync a MySQL table to Redis.

I found Canal, another project developed by Alibaba. The official introduction follows.

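Background

In its early days, Alibaba was deployed in data centers in both Hangzhou and the United States, so it had a business need for cross-datacenter synchronization. This was implemented mainly with business triggers that captured incremental changes. Starting in 2010, the business gradually moved to parsing database logs to obtain incremental changes for synchronization, which gave rise to a large number of incremental subscription and consumption services.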

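As the architecture diagram suggests, Canal behaves roughly like a MySQL slave (replica): it subscribes to the binary log of the MySQL master.

When data on the MySQL master changes, Canal learns of it right away and fetches the change records, and you can then run your own business logic on them.

The changes can be synced to another MySQL instance, pushed to a message queue, written to ES (Elasticsearch), and so on, which makes it quite powerful.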

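The latest version available online is 1.1.4, in which Alibaba also provides a web front end, putting an end to the command-line-only ("black screen") workflow, but there is very little documentation for it.

This post still uses version 1.1.3.

Git repository: https://github.com/alibaba/canal

The test steps are below.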

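1, Enable binlog in the MySQL configuration file (the [mysqld] section of my.cnf), then restart MySQL for the change to take effect

log_bin=mysql-bin # name of the binlog files; ideally something that identifies the business purpose

binlog_format=row # row format is required!

server_id=1 # MySQL server id

2, Create a MySQL user for Canal to subscribe with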

mysql> CREATE USER 'canal'@'%' IDENTIFIED BY 'canal';

mysql> GRANT ALL ON canal.* TO 'canal'@'%';

mysql> GRANT SELECT, REPLICATION CLIENT, REPLICATION SLAVE ON *.* TO 'canal'@'%';

mysql> FLUSH PRIVILEGES;

3, Download the 1.1.3 tar package from https://github.com/alibaba/canal/releases/tag/canal-1.1.3

4, Extract the archive (tar's -C option needs the target directory to exist first): mkdir -p canal && tar xzvf canal.deployer-1.1.3.tar.gz -C canal

5, Edit Canal's instance.properties file, located in the canal/conf/example directory
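The step above does not list which values to change. A minimal sketch of the entries that usually need editing, assuming MySQL listens on 127.0.0.1:3306 (an assumption; substitute your own host and port) and using the canal/canal account created in step 2:

```properties
# Address of the MySQL master whose binlog Canal subscribes to (assumed host and port)
canal.instance.master.address=127.0.0.1:3306

# Account created in step 2
canal.instance.dbUsername=canal
canal.instance.dbPassword=canal

# Tables to capture, as schema\\.table regular expressions; this value matches every table
canal.instance.filter.regex=.*\\..*
```

The other entries in the file can typically stay at their shipped defaults for a first test.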

Then start Canal:

canal/bin/start.sh

Check the logs under canal/logs/example

find start position successfully, EntryPosition[included=false,journalName=mysql-bin.000001,position=6337,serverId=1,gtid=,timestamp=1573609267000] cost : 9ms , the next step is binlog dump

A line like this means Canal started successfully.
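If you would rather check this from a script than read the log by eye, the following is a minimal sketch that scans a log file for the marker text from the line above. The class name and the default file name example.log are assumptions; pass the real path as the first argument.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.List;

public class CanalStartupCheck {

    // Marker text copied from the log line shown above.
    public static final String MARKER = "find start position successfully";

    // Returns true if any of the given log lines contains the startup marker.
    public static boolean hasStarted(List<String> logLines) {
        for (String line : logLines) {
            if (line.contains(MARKER)) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) throws Exception {
        // Assumed default location; override with the first command-line argument.
        Path log = Paths.get(args.length > 0 ? args[0] : "canal/logs/example/example.log");
        if (!Files.exists(log)) {
            System.out.println("log file not found: " + log);
            return;
        }
        System.out.println(hasStarted(Files.readAllLines(log)) ? "started" : "not started yet");
    }
}
```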

6, Write the Canal client

6.1 Add the dependencies to the pom

<dependency>

<groupId>com.alibaba.otter</groupId>

<artifactId>canal.client</artifactId>

<version>1.1.3</version>

</dependency>

<dependency>

<groupId>redis.clients</groupId>

<artifactId>jedis</artifactId>

<version>2.4.2</version>

</dependency>

6.2 Write the client class

import com.alibaba.fastjson.JSONObject;

import com.alibaba.otter.canal.client.CanalConnector;

import com.alibaba.otter.canal.client.CanalConnectors;

import com.alibaba.otter.canal.protocol.CanalEntry.*;

import com.alibaba.otter.canal.protocol.Message;

import com.shrek.canal.util.RedisUtil;

import java.net.InetSocketAddress;

import java.util.List;

public class CanalClientTest{

public static void main(String args[]) {

CanalConnector connector = CanalConnectors.newSingleConnector(new InetSocketAddress("192.168.233.107",

11111), "example", "", "");

int batchSize = 1000;

try {

connector.connect();

connector.subscribe(".*\\..*");

connector.rollback();

while (true) {

Message message = connector.getWithoutAck(batchSize); // fetch up to batchSize entries without acknowledging them

long batchId = message.getId();

int size = message.getEntries().size();

if (batchId == -1 || size == 0) {

try {

Thread.sleep(1000);

} catch (InterruptedException e) {

e.printStackTrace();

}

} else {

printEntry(message.getEntries());

}

connector.ack(batchId); // acknowledge the batch

// connector.rollback(batchId); // on processing failure, roll back the batch

}

} finally {

connector.disconnect();

}

}

private static void printEntry( List<Entry> entrys) {

for (Entry entry : entrys) {

if (entry.getEntryType() == EntryType.TRANSACTIONBEGIN || entry.getEntryType() == EntryType.TRANSACTIONEND) {

continue;

}

RowChange rowChage = null;

try {

rowChage = RowChange.parseFrom(entry.getStoreValue());

} catch (Exception e) {

throw new RuntimeException("ERROR ## parser of eromanga-event has an error , data:" + entry.toString(),

e);

}

EventType eventType = rowChage.getEventType();

System.out.println(String.format("================> binlog[%s:%s] , name[%s,%s] , eventType : %s",

entry.getHeader().getLogfileName(), entry.getHeader().getLogfileOffset(),

entry.getHeader().getSchemaName(), entry.getHeader().getTableName(),

eventType));

for (RowData rowData : rowChage.getRowDatasList()) {

if (eventType == EventType.DELETE) {

redisDelete(rowData.getBeforeColumnsList());

} else if (eventType == EventType.INSERT) {

redisInsert(rowData.getAfterColumnsList());

} else {

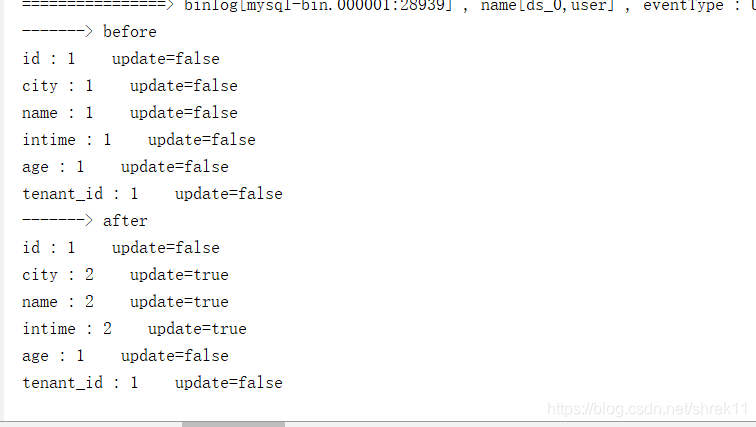

System.out.println("-------> before");

printColumn(rowData.getBeforeColumnsList());

System.out.println("-------> after");

printColumn(rowData.getAfterColumnsList());

redisUpdate(rowData.getAfterColumnsList());

}

}

}

}

private static void printColumn( List<Column> columns) {

for (Column column : columns) {

System.out.println(column.getName() + " : " + column.getValue() + " update=" + column.getUpdated());

}

}

private static void redisInsert( List<Column> columns){

JSONObject json=new JSONObject();

for (Column column : columns) {

json.put(column.getName(), column.getValue());

}

if(columns.size()>0){

RedisUtil.stringSet("user:"+ columns.get(0).getValue(),json.toJSONString());

}

}

private static void redisUpdate( List<Column> columns){

JSONObject json=new JSONObject();

for (Column column : columns) {

json.put(column.getName(), column.getValue());

}

if(columns.size()>0){

RedisUtil.stringSet("user:"+ columns.get(0).getValue(),json.toJSONString());

}

}

private static void redisDelete( List<Column> columns){

// Deleting only needs the key, so no JSON payload is built here.

if(columns.size()>0){

RedisUtil.delKey("user:"+ columns.get(0).getValue());

}

}

}

6.3 Because I am syncing to Redis, a Redis utility class is needed

import redis.clients.jedis.Jedis;

import redis.clients.jedis.JedisPool;

import redis.clients.jedis.JedisPoolConfig;

public class RedisUtil {

// Redis server IP

private static String ADDR = "192.168.233.106";

// Redis port

private static int PORT = 6379;

// Access password (unused here)

//private static String AUTH = "admin";

// Maximum number of connections in the pool; the default is 8.

// A value of -1 means no limit. Once the pool has handed out this many Jedis instances, it is exhausted.

private static int MAX_ACTIVE = 1024;

// Maximum number of idle Jedis instances kept in the pool; the default is also 8.

private static int MAX_IDLE = 200;

// Maximum time to wait for a free connection, in milliseconds. The default of -1 means wait forever; when the wait is exceeded, a JedisConnectionException is thrown.

private static int MAX_WAIT = 10000;

// Key expiry time in seconds (one day)

protected static int expireTime = 60 * 60 *24;

// Connection pool

protected static JedisPool pool;

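/**

* Static initializer; runs once, when the class is first loaded

*/

static {

JedisPoolConfig config = new JedisPoolConfig();

// maximum number of connections

config.setMaxTotal(MAX_ACTIVE);

// maximum number of idle instances

config.setMaxIdle(MAX_IDLE);

// maximum wait for a connection, in milliseconds

config.setMaxWaitMillis(MAX_WAIT);

// do not validate connections when borrowing them

config.setTestOnBorrow(false);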

pool = new JedisPool(config, ADDR, PORT, 1000);

}

/**

* Borrows a Jedis instance from the pool; returns null if none can be obtained

*/

protected static Jedis getJedis() {

try {

// JedisPool is thread-safe, so no extra synchronization is needed here

return pool.getResource();

} catch (Exception e) {

e.printStackTrace();

return null;

}

}

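/**

* Returns a Jedis instance to the pool

* @param jedis the instance to return; ignored if null

* @param isBroken whether the connection failed and must be discarded

*/

protected static void closeResource(Jedis jedis, boolean isBroken) {

if (jedis == null) {

return;

}

try {

if (isBroken) {

pool.returnBrokenResource(jedis);

} else {

pool.returnResource(jedis);

}

} catch (Exception e) {

// best effort: a failure while returning the connection is ignored

}

}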

/**

* Checks whether the key exists

* @param key

*/

public static boolean existKey(String key) {

Jedis jedis = null;

boolean isBroken = false;

try {

jedis = getJedis();

jedis.select(2);

return jedis.exists(key);

} catch (Exception e) {

isBroken = true;

} finally {

closeResource(jedis, isBroken);

}

return false;

}

/**

* Deletes the key

* @param key

*/

public static void delKey(String key) {

Jedis jedis = null;

boolean isBroken = false;

try {

jedis = getJedis();

jedis.select(2);

jedis.del(key);

} catch (Exception e) {

isBroken = true;

} finally {

closeResource(jedis, isBroken);

}

}

/**

* Gets the value of the key and refreshes its expiry

* @param key

*/

public static String stringGet(String key) {

Jedis jedis = null;

boolean isBroken = false;

String lastVal = null;

try {

jedis = getJedis();

jedis.select(2);

lastVal = jedis.get(key);

jedis.expire(key, expireTime);

} catch (Exception e) {

isBroken = true;

} finally {

closeResource(jedis, isBroken);

}

return lastVal;

}

/**

* Stores a string value and sets its expiry

* @param key

* @param value

*/

public static String stringSet(String key, String value) {

Jedis jedis = null;

boolean isBroken = false;

String lastVal = null;

try {

jedis = getJedis();

jedis.select(2);

lastVal = jedis.set(key, value);

jedis.expire(key, expireTime);

} catch (Exception e) {

e.printStackTrace();

isBroken = true;

} finally {

closeResource(jedis, isBroken);

}

return lastVal;

}

/**

* Stores a hash field and sets the expiry of the hash

* @param key

* @param field

* @param value

*/

public static void hashSet(String key, String field, String value) {

boolean isBroken = false;

Jedis jedis = null;

try {

jedis = getJedis();

if (jedis != null) {

jedis.select(2);

jedis.hset(key, field, value);

jedis.expire(key, expireTime);

}

} catch (Exception e) {

isBroken = true;

} finally {

closeResource(jedis, isBroken);

}

}

}
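The client in 6.2 builds each Redis key from columns.get(0), which only works when the primary key happens to be the first column. Canal's Column also exposes getIsKey(), so the key can be chosen by that flag instead. A minimal sketch of the idea follows; Col is a hypothetical stand-in for Canal's Column so the example runs without the Canal dependency, and the class and method names are illustrative only.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

public class RedisKeySketch {

    /** Hypothetical stand-in for Canal's Column: name, value and primary-key flag. */
    public static final class Col {
        final String name;
        final String value;
        final boolean isKey;

        public Col(String name, String value, boolean isKey) {
            this.name = name;
            this.value = value;
            this.isKey = isKey;
        }
    }

    /** Builds "prefix:pk" from the first column flagged as primary key, or null if there is none. */
    public static String redisKey(String prefix, List<Col> columns) {
        for (Col c : columns) {
            if (c.isKey) {
                return prefix + ":" + c.value;
            }
        }
        return null;
    }

    /** Copies the columns into an ordered name-to-value map, ready to be serialized as JSON. */
    public static Map<String, String> toMap(List<Col> columns) {
        Map<String, String> row = new LinkedHashMap<>();
        for (Col c : columns) {
            row.put(c.name, c.value);
        }
        return row;
    }

    public static void main(String[] args) {
        // The primary key is deliberately not the first column.
        List<Col> row = Arrays.asList(new Col("name", "tom", false), new Col("id", "7", true));
        System.out.println(redisKey("user", row)); // prints user:7
        System.out.println(toMap(row));            // prints {name=tom, id=7}
    }
}
```

In the real client, the same loop would run over rowData.getAfterColumnsList() (or getBeforeColumnsList() for deletes) and test column.getIsKey().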

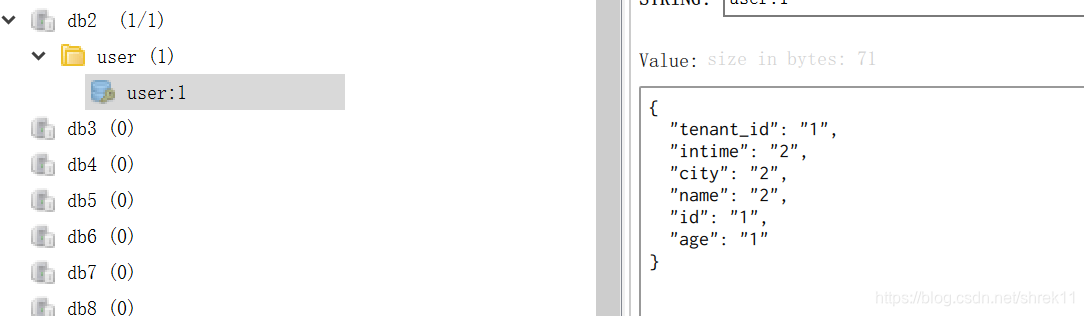

Result:

When the database is modified, the client receives the change message right away.

It then syncs the change to Redis immediately, so the result is close to real time.
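One caveat about the client loop in 6.2: it acknowledges every batch, and the rollback call is commented out, so a batch whose Redis write fails is not redelivered. A minimal sketch of the usual alternative (ack on success, rollback on failure) is below. BatchSource is a hypothetical stand-in for the CanalConnector calls ack(batchId) and rollback(batchId), so the example runs on its own; it is not Canal's API.

```java
import java.util.ArrayList;
import java.util.List;

public class AckRollbackSketch {

    /** Hypothetical stand-in for the CanalConnector calls used in the client loop. */
    public interface BatchSource {
        long nextBatchId();          // plays the role of getWithoutAck(...).getId()
        void ack(long batchId);      // confirm the batch was processed
        void rollback(long batchId); // ask for the batch to be delivered again
    }

    /** Processes one batch: ack if the handler succeeds, rollback if it throws. Returns true when acked. */
    public static boolean processOnce(BatchSource source, Runnable handler) {
        long batchId = source.nextBatchId();
        try {
            handler.run();
            source.ack(batchId);
            return true;
        } catch (RuntimeException e) {
            source.rollback(batchId);
            return false;
        }
    }

    /** In-memory source that records which calls were made, for demonstration only. */
    public static final class RecordingSource implements BatchSource {
        public final List<String> calls = new ArrayList<>();
        private long next = 1;

        public long nextBatchId() { return next++; }
        public void ack(long batchId) { calls.add("ack:" + batchId); }
        public void rollback(long batchId) { calls.add("rollback:" + batchId); }
    }

    public static void main(String[] args) {
        RecordingSource source = new RecordingSource();
        processOnce(source, () -> { });
        processOnce(source, () -> { throw new IllegalStateException("redis write failed"); });
        System.out.println(source.calls); // prints [ack:1, rollback:2]
    }
}
```

For this to take effect in the real client, RedisUtil would have to rethrow its exceptions, because as written it catches them internally and the loop never sees a failure.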