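Environment: Python 3.8, PyCharm 2020

Hardware: Logitech C505e

Building on the previous article, we can already extract the hand keypoints. Processing that information makes gesture recognition straightforward. (The full code is at the end of the article.)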

First, a look at the result:

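Wrapping the functions (optional)

For easier reuse, first wrap the keypoint-extraction functions from the previous article into a class.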

    import cv2
    import mediapipe as mp
    import time
    import math


    class handDetctor():
        def __init__(self, mode=False, maxHands=2, detectionCon=0.5, trackCon=0.5):
            self.mode = mode
            self.maxHands = maxHands
            self.detectionCon = detectionCon
            self.trackCon = trackCon

            self.mpHands = mp.solutions.hands
            # Pass keyword arguments: newer mediapipe releases insert a
            # model_complexity parameter, which breaks positional calls.
            self.hands = self.mpHands.Hands(static_image_mode=self.mode,
                                            max_num_hands=self.maxHands,
                                            min_detection_confidence=self.detectionCon,
                                            min_tracking_confidence=self.trackCon)
            self.mpDraw = mp.solutions.drawing_utils

        def findHands(self, img, draw=True):
            imgRGB = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)  # mediapipe expects RGB
            self.results = self.hands.process(imgRGB)
            # print(self.results.multi_hand_landmarks)
            if self.results.multi_hand_landmarks:
                for handLms in self.results.multi_hand_landmarks:
                    if draw:
                        self.mpDraw.draw_landmarks(img, handLms, self.mpHands.HAND_CONNECTIONS)
            return img

        def findPosition(self, img, handNo=0, draw=True):
            lmList = []
            if self.results.multi_hand_landmarks:
                myHand = self.results.multi_hand_landmarks[handNo]
                for id, lm in enumerate(myHand.landmark):
                    # print(id, lm)
                    # Convert the normalized landmark to pixel coordinates
                    h, w, c = img.shape
                    cx, cy = int(lm.x*w), int(lm.y*h)
                    lmList.append([id, cx, cy])
                    if draw:
                        cv2.putText(img, str(int(id)), (cx+10, cy+10), cv2.FONT_HERSHEY_PLAIN,
                                    1, (0, 0, 255), 2)
            return lmList


    # Usage
    def main():
        cap = cv2.VideoCapture(0, cv2.CAP_DSHOW)  # CAP_DSHOW is the DirectShow backend (Windows)
        # Frame-rate tracking
        pTime = 0
        cTime = 0
        detector = handDetctor()
        while True:
            success, img = cap.read()
            if not success:  # no frame from the camera
                break
            img = detector.findHands(img)
            lmList = detector.findPosition(img, draw=False)
            if len(lmList) != 0:
                print(lmList)

            # Compute the display frame rate (guard against a zero interval)
            cTime = time.time()
            fps = 1 / max(cTime - pTime, 1e-6)
            pTime = cTime

            cv2.putText(img, str(int(fps)), (10, 70), cv2.FONT_HERSHEY_PLAIN, 3, (255, 0, 255), 3)
            cv2.imshow("image", img)
            if cv2.waitKey(2) & 0xFF == 27:  # Esc quits
                break
        cap.release()
        cv2.destroyAllWindows()


    if __name__ == '__main__':
        main()

Gesture detection

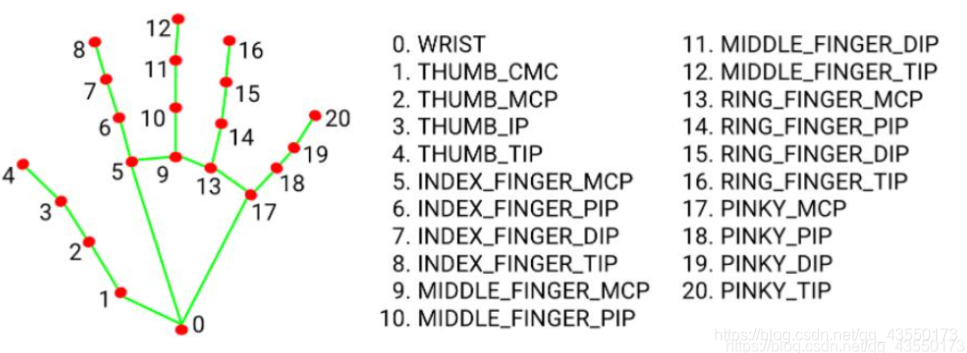

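With mediapipe we can already obtain the pixel coordinates of the hand keypoints; to recognize a gesture, all that remains is to decide whether each finger is open or closed. In the keypoint layout, point 0 is the wrist, and points 4, 8, 12, 16 and 20 are the tips of the thumb, index, middle, ring and little fingers.

Take the index finger as an example:

When the index finger is straight, the distance from point 8 to point 0 is clearly greater than the distance from point 6 to point 0.

When the index finger is curled in, the opposite holds.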
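The comparison can be tried without a camera. The following is a minimal sketch under stated assumptions: the helper name `is_finger_open` and all coordinates are made up for illustration and are not part of the original module; only the distance rule itself comes from the text above.

```python
import math


def is_finger_open(wrist, joint, tip):
    """Return True when the fingertip is farther from the wrist than the joint.

    Each argument is an (x, y) pixel coordinate. The name and signature are
    hypothetical, chosen only for this illustration.
    """
    dist_tip = math.hypot(tip[0] - wrist[0], tip[1] - wrist[1])
    dist_joint = math.hypot(joint[0] - wrist[0], joint[1] - wrist[1])
    return dist_tip > dist_joint


# Made-up pixel coordinates; image y grows downward.
wrist = (320, 440)               # point 0
index_joint = (300, 260)         # point 6
index_tip_straight = (295, 150)  # point 8 with the finger extended
index_tip_curled = (310, 330)    # point 8 with the finger folded toward the palm

print(is_finger_open(wrist, index_joint, index_tip_straight))  # True
print(is_finger_open(wrist, index_joint, index_tip_curled))    # False
```

Because the rule compares two distances measured from the same wrist point, it does not depend on which way the hand is rotated in the image.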

Checking every finger:

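    def fingerStatus(self, lmList):
        fingerList = []
        # Point 0 (the wrist) is the reference for every distance
        _, originx, originy = lmList[0]
        # [joint, tip] landmark ids for thumb, index, middle, ring, little finger
        keypoint_list = [[2, 4], [6, 8], [10, 12], [14, 16], [18, 20]]
        for point in keypoint_list:
            _, x1, y1 = lmList[point[0]]
            _, x2, y2 = lmList[point[1]]
            # Open when the tip is farther from the wrist than the joint
            if math.hypot(x2-originx, y2-originy) > math.hypot(x1-originx, y1-originy):
                fingerList.append(True)
            else:
                fingerList.append(False)

        return fingerList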

Usage: thumbOpen, firstOpen, secondOpen, thirdOpen, fourthOpen = detector.fingerStatus(lmList). Note that the coordinates of all 21 landmarks must be obtained first.
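Once the five flags are available, turning them into a gesture is a matter of pattern matching. The sketch below is one possible way to do it, not the article's own code: the function name `classify_gesture` and the labels "fist", "two fingers" and "open hand" are assumptions chosen for illustration. As in the full code at the end of the article, the thumb flag is ignored.

```python
def classify_gesture(finger_list):
    """Map [thumb, index, middle, ring, little] open flags to a label.

    The labels are illustrative placeholders. Returns None when no
    pattern matches.
    """
    if len(finger_list) != 5:
        raise ValueError("expected five finger flags")
    _thumb, index, middle, ring, little = finger_list
    four = (index, middle, ring, little)
    if not any(four):
        return "fist"
    if four == (True, True, False, False):
        return "two fingers"
    if all(four):
        return "open hand"
    return None


print(classify_gesture([False, False, False, False, False]))  # fist
print(classify_gesture([False, True, True, False, False]))    # two fingers
print(classify_gesture([True, True, True, True, True]))       # open hand
```

Keeping the mapping in a pure function like this makes it testable without a webcam, and new gestures only need one more pattern.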

Full code

HandTrackingModule.py

    import cv2
    import mediapipe as mp
    import time
    import math


    class handDetctor():
        def __init__(self, mode=False, maxHands=2, detectionCon=0.5, trackCon=0.5):
            self.mode = mode
            self.maxHands = maxHands
            self.detectionCon = detectionCon
            self.trackCon = trackCon

            self.mpHands = mp.solutions.hands
            # Pass keyword arguments: newer mediapipe releases insert a
            # model_complexity parameter, which breaks positional calls.
            self.hands = self.mpHands.Hands(static_image_mode=self.mode,
                                            max_num_hands=self.maxHands,
                                            min_detection_confidence=self.detectionCon,
                                            min_tracking_confidence=self.trackCon)
            self.mpDraw = mp.solutions.drawing_utils

        def findHands(self, img, draw=True):
            imgRGB = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)  # mediapipe expects RGB
            self.results = self.hands.process(imgRGB)
            # print(self.results.multi_hand_landmarks)
            if self.results.multi_hand_landmarks:
                for handLms in self.results.multi_hand_landmarks:
                    if draw:
                        self.mpDraw.draw_landmarks(img, handLms, self.mpHands.HAND_CONNECTIONS)
            return img

        def findPosition(self, img, handNo=0, draw=True):
            lmList = []
            if self.results.multi_hand_landmarks:
                myHand = self.results.multi_hand_landmarks[handNo]
                for id, lm in enumerate(myHand.landmark):
                    # print(id, lm)
                    # Convert the normalized landmark to pixel coordinates
                    h, w, c = img.shape
                    cx, cy = int(lm.x*w), int(lm.y*h)
                    lmList.append([id, cx, cy])
                    if draw:
                        cv2.putText(img, str(int(id)), (cx+10, cy+10), cv2.FONT_HERSHEY_PLAIN,
                                    1, (0, 0, 255), 2)
            return lmList

        # Return a list with the open/closed state of each finger
        def fingerStatus(self, lmList):
            fingerList = []
            # Point 0 (the wrist) is the reference for every distance
            _, originx, originy = lmList[0]
            # [joint, tip] landmark ids for thumb, index, middle, ring, little finger
            keypoint_list = [[2, 4], [6, 8], [10, 12], [14, 16], [18, 20]]
            for point in keypoint_list:
                _, x1, y1 = lmList[point[0]]
                _, x2, y2 = lmList[point[1]]
                # Open when the tip is farther from the wrist than the joint
                if math.hypot(x2-originx, y2-originy) > math.hypot(x1-originx, y1-originy):
                    fingerList.append(True)
                else:
                    fingerList.append(False)

            return fingerList


    def main():
        cap = cv2.VideoCapture(0, cv2.CAP_DSHOW)  # CAP_DSHOW is the DirectShow backend (Windows)
        # Frame-rate tracking
        pTime = 0
        cTime = 0
        detector = handDetctor()
        while True:
            success, img = cap.read()
            if not success:  # no frame from the camera
                break
            img = detector.findHands(img)
            lmList = detector.findPosition(img, draw=False)
            if len(lmList) != 0:
                # print(lmList)
                print(detector.fingerStatus(lmList))

            # Compute the display frame rate (guard against a zero interval)
            cTime = time.time()
            fps = 1 / max(cTime - pTime, 1e-6)
            pTime = cTime

            cv2.putText(img, str(int(fps)), (10, 70), cv2.FONT_HERSHEY_PLAIN, 3, (255, 0, 255), 3)
            cv2.imshow("image", img)
            if cv2.waitKey(2) & 0xFF == 27:  # Esc quits
                break
        cap.release()
        cv2.destroyAllWindows()


    if __name__ == '__main__':
        main()

gestureRecognition.py

    import os

    import cv2
    import HandTrackingModule as htm

    wCam, hCam = 640, 480
    cap = cv2.VideoCapture(0, cv2.CAP_DSHOW)
    cap.set(cv2.CAP_PROP_FRAME_WIDTH, wCam)
    cap.set(cv2.CAP_PROP_FRAME_HEIGHT, hCam)

    # Preload the overlay images
    picture_path = "gesture_picture"
    # os.listdir returns names in arbitrary order; sort so the indices below stay stable
    myList = sorted(os.listdir(picture_path))
    print(myList)
    overlayList = []
    for imPath in myList:
        image = cv2.imread(f'{picture_path}/{imPath}')
        # The overlay is pasted into a 200x200 region, so force that size
        overlayList.append(cv2.resize(image, (200, 200)))

    detector = htm.handDetctor(detectionCon=0.7)
    while True:
        success, img = cap.read()
        if not success:  # no frame from the camera
            break
        img = detector.findHands(img)
        lmList = detector.findPosition(img, draw=False)

        if len(lmList) != 0:
            thumbOpen, firstOpen, secondOpen, thirdOpen, fourthOpen = detector.fingerStatus(lmList)
            # All four fingers closed (the thumb is ignored)
            if not firstOpen and not secondOpen and not thirdOpen and not fourthOpen:
                img[0:200, 0:200] = overlayList[1]
            # Only the index and middle fingers open
            if firstOpen and secondOpen and not thirdOpen and not fourthOpen:
                img[0:200, 0:200] = overlayList[0]
            # All four fingers open
            if firstOpen and secondOpen and thirdOpen and fourthOpen:
                img[0:200, 0:200] = overlayList[2]

        cv2.imshow("image", img)
        if cv2.waitKey(2) & 0xFF == 27:  # Esc quits
            break

    cap.release()
    cv2.destroyAllWindows()

Related links

https://gist.github.com/TheJLifeX/74958cc59db477a91837244ff598ef4a