Scraping Fang.com (房天下) Housing Data for a City with Python

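Technology changes quickly in the internet era, and picking up a new skill can matter a great deal for a career. This is my first demo; I plan to dig deeper into web scraping and data analysis later. I have not written detailed documentation, so if anything here falls short, I would welcome pointers from more experienced readers. Enough preamble, here is the code.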

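Skills you will need:

(1) Familiarity with front-end basics and the browser's developer tools

(2) Solid Python fundamentals and working knowledge of the commonly used libraries

(3) Working knowledge of a relational database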

import requests as req

import time

import pandas as pd

from bs4 import BeautifulSoup

from sqlalchemy import create_engine

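def getHouseInfo(url):
    info = {}
    soup = BeautifulSoup(req.get(url).text, "html.parser")
    resinfo = soup.select(".tab-cont-right .trl-item1")
    # Collect layout, floor area, unit price, orientation, floor, and renovation status
    for item in resinfo:
        tmp = item.text.strip().split("\n")
        name = tmp[1].strip()
        if "朝向" in name:
            # Drop the "进门" (entrance) prefix, leaving "朝向" (orientation)
            name = name.replace("进门", "")
        if "楼层" in name:
            # Keep only the leading two-character label "楼层" (floor)
            name = name[0:2]
        if "地上层数" in name:
            # "地上层数" (floors above ground) is stored under "楼层" as well
            name = "楼层"
        if "装修程度" in name:
            # "装修程度" (renovation level) is shortened to "装修"
            name = "装修"
        info[name] = tmp[0].strip()
    # Name of the residential community (小区名字)
    xiaoqu = soup.select(".rcont .blue")[0].text.strip()
    info["小区名字"] = xiaoqu
    # Total price (总价)
    zongjia = soup.select(".tab-cont-right .trl-item")
    info["总价"] = zongjia[0].text
    return info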
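The label clean-up inside getHouseInfo is easier to check when it is pulled out into a pure function. The following is a minimal sketch, not part of the original script: `normalize_field_name` is a hypothetical helper name, and the sample labels in the comments are assumptions about what the listing page shows, inferred from the string checks above.

```python
def normalize_field_name(label):
    """Map a raw page label to the key used in the info dict.

    Hypothetical helper mirroring the if-chain in getHouseInfo.
    """
    label = label.strip()
    if "朝向" in label:
        # Assumed raw label such as "进门朝向" becomes "朝向"
        label = label.replace("进门", "")
    if "楼层" in label:
        # Assumed raw label such as "楼层(共18层)" becomes "楼层"
        label = label[:2]
    if "地上层数" in label:
        label = "楼层"
    if "装修程度" in label:
        label = "装修"
    return label


if __name__ == "__main__":
    for raw in ["进门朝向", "楼层(共18层)", "地上层数", "装修程度", "建筑面积"]:
        print(raw, "->", normalize_field_name(raw))
```

Keeping this logic separate from the network call means it can be exercised with plain strings, without hitting the site.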

domain = "http://esf.anyang.fang.com/"

city = "house/"

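# Get the total number of pages
def getTotalPage():
    res = req.get(domain + city + "i31")
    soup = BeautifulSoup(res.text, "html.parser")
    # The last pagination link points at the final page
    endPage = soup.select(".page_al a").pop()['href']
    segment = endPage.strip("/").split("/")[1]
    # Remove the literal "i3" prefix. str.strip("i3") would strip every
    # leading and trailing "i" or "3" character and corrupt counts like 33.
    pageNum = segment[len("i3"):] if segment.startswith("i3") else segment
    print("loading..... " + pageNum + " pages in total.....")
    return pageNum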
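One detail in the page-count parsing deserves a closer look: `str.strip("i3")` treats its argument as a set of characters, not as a prefix, so it also eats any trailing `3` (and all of a count made only of `3`s). Below is a minimal sketch of prefix-based parsing. `page_count_from_href` is a hypothetical helper, and the href shape `/house/i3<N>/` is an assumption inferred from how the script splits the link.

```python
def page_count_from_href(href, prefix="i3"):
    """Extract the page number from a pagination href.

    Assumes hrefs shaped like "/house/i3100/" (hypothetical example).
    """
    segment = href.strip("/").split("/")[-1]
    if not segment.startswith(prefix):
        raise ValueError("unexpected pagination href: " + href)
    # Slice off the prefix instead of using str.strip
    return int(segment[len(prefix):])


if __name__ == "__main__":
    print(page_count_from_href("/house/i3100/"))
    print(page_count_from_href("/house/i333/"))
    # Character-set stripping loses the whole number in this case
    print(repr("i333".strip("i3")))
```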

# 分页爬取数据

def pageFun(i):

pageUrl = domain + city + "i3" +i

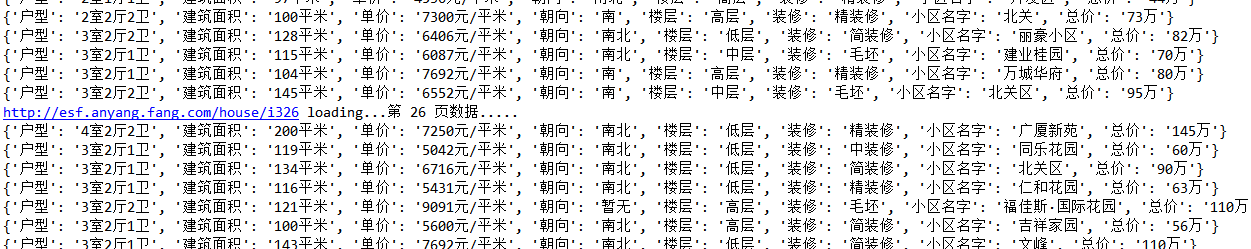

print(pageUrl+" loading...第 "+i+" 页数据.....")

res = req.get(pageUrl)

soup = BeautifulSoup(res.text,"html.parser")

houses = soup.select(".shop_list dl")

pageInfoList = []

for house in houses:

try:

# print(domain + house.select("a")[0]['href'])

info = getHouseInfo(domain + house.select("a")[0]['href'])

pageInfoList.append(info)

print(info)

except Exception as e:

print("---->出现异常,跳过 继续执行",e)

df = pd.DataFrame(pageInfoList)

return df

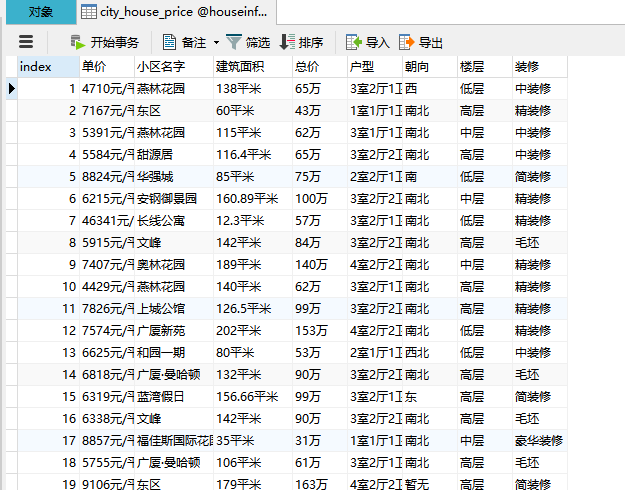
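The script imports `time` but never uses it, and each request is made exactly once. A minimal sketch of one way to pause and retry between requests follows. `fetch_with_retry` and its parameters are hypothetical names, not part of the original script, and the retry count and delay are arbitrary assumptions rather than values tuned for this site.

```python
import time


def fetch_with_retry(fetch, url, retries=3, delay=1.0, sleep=time.sleep):
    """Call fetch(url), retrying on any exception with a growing pause.

    `fetch` is any callable that takes a URL and returns the page body.
    `sleep` is injectable so the pause can be replaced in tests.
    """
    last_error = None
    for attempt in range(1, retries + 1):
        try:
            return fetch(url)
        except Exception as error:
            last_error = error
            if attempt < retries:
                # Wait longer after each failed attempt
                sleep(delay * attempt)
    raise last_error


# Possible use with the script's requests alias (not executed here):
# html = fetch_with_retry(lambda u: req.get(u, timeout=10).text, pageUrl)
```

Passing the fetch function in as an argument keeps the helper independent of `requests`, so it can be tried with a stub before pointing it at the real site.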

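# Connection to a local MySQL database named houseinfo
connect = create_engine("mysql+pymysql://root:root@localhost:3306/houseinfo?charset=utf8")

for i in range(1, int(getTotalPage()) + 1):
    try:
        df_onePage = pageFun(str(i))
    except Exception as e:
        # Skip this page so a stale or undefined DataFrame is never written
        print("Exception", e)
        continue
    # Append this page's rows to the city_house_price table
    df_onePage.to_sql("city_house_price", connect, schema="houseinfo", if_exists="append")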
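To try the "append one page at a time" pattern without a MySQL server, the same idea can be sketched with the standard library's `sqlite3` and an in-memory database. This swaps SQLite in for MySQL and plain dicts in for the DataFrame, so it illustrates the pattern rather than the script's actual storage path. `append_rows` is a hypothetical helper, and the sample rows are made-up placeholders.

```python
import sqlite3


def append_rows(conn, table, rows):
    """Append a list of dicts to `table`, creating the table on first use."""
    if not rows:
        return 0
    # Union of keys across rows, in first-seen order
    columns = []
    for row in rows:
        for key in row:
            if key not in columns:
                columns.append(key)
    quoted = ", ".join('"{}"'.format(c) for c in columns)
    conn.execute('CREATE TABLE IF NOT EXISTS "{}" ({})'.format(table, quoted))
    placeholders = ", ".join("?" for _ in columns)
    conn.executemany(
        'INSERT INTO "{}" ({}) VALUES ({})'.format(table, quoted, placeholders),
        # Keys missing from a row are stored as NULL
        [tuple(row.get(c) for c in columns) for row in rows],
    )
    conn.commit()
    return len(rows)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    # Placeholder rows standing in for two scraped pages
    append_rows(conn, "city_house_price", [{"小区名字": "A", "总价": "100"}])
    append_rows(conn, "city_house_price", [{"小区名字": "B", "总价": "200"}])
    print(conn.execute('SELECT COUNT(*) FROM "city_house_price"').fetchone()[0])
```

One limitation of this sketch: a later page that introduces a column the table does not yet have will fail on insert, so the first batch needs to carry the full set of keys.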