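Introduction to LVS

Official LVS documentation in Chinese: http://www.linuxvirtualserver.org/zh/index.html (a good source of background on LVS).

Official LVS documentation in English: http://www.linuxvirtualserver.org/Documents.html (more complete, and useful for learning how LVS works and how to configure it).

LVS (Linux Virtual Server) is called a virtual server because LVS itself is a load balancer (director): it does not process requests itself but forwards them to the real servers (realserver) behind it.

LVS is a layer-4 (transport layer, TCP/UDP) and layer-7 (application layer) load balancing tool, but most people use only its layer-4 component, ipvs. The layer-7 content-based component, ktcpvs (kernel tcp virtual server), is not very mature and has few users.

ipvs is a framework built into the kernel. It is managed from user space with the ipvsadm tool, which defines the rules that ipvs applies. ipvsadm relates to ipvs the way iptables relates to netfilter.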

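The IP addresses involved in LVS:

VIP: virtual IP, the address on the LVS server's interface that receives packets from the external network.

DIP: director IP, the address on the LVS server's interface that sends packets to the realservers.

RIP: the IP of a realserver (often abbreviated RS), that is, of a server that actually provides the service.

CIP: the client's IP.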

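The four LVS working modes

DR > TUN (tunneling) > NAT > FULLNAT

DR (direct routing) mode: client -> VS -> RS -> client

NAT (network address translation) mode: client -> VS -> RS -> VS -> client

TUN (tunneling) mode: client -> VS -> RS -> client

#None of the three modes above offers any protection against attacks

FULLNAT: client -> VS -> RS -> VS -> client

#Supports multiple VLANs, offers attack protection, and performs NAT twice

Five-tuple: source address, destination address, source port, destination port, protocol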

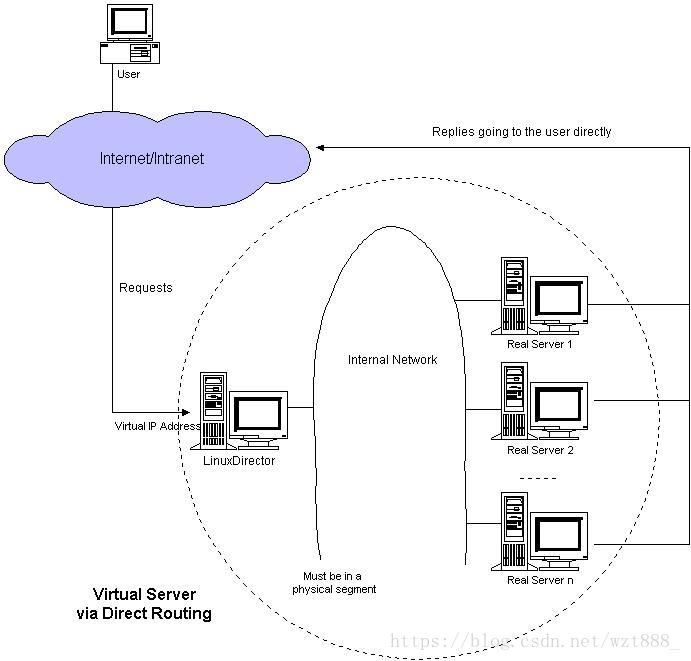

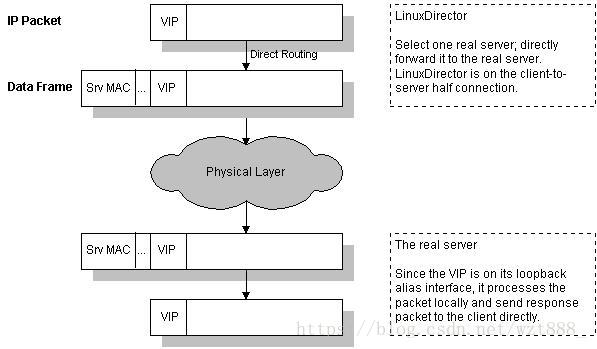

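DR (direct routing) mode: client -> VS -> RS -> client

The VS/DR workflow is shown in the figure above: packets are forwarded by routing them directly to the target server. In VS/DR, the scheduler dynamically selects a server according to the load on each server. It neither modifies nor encapsulates the IP packet; it only rewrites the destination MAC address of the data frame to that of the selected server and sends the modified frame on the LAN it shares with the server group. Because the frame's destination MAC address belongs to the selected server, that server is certain to receive the frame and can extract the IP packet from it. When the server sees that the packet's destination address, the VIP, is configured on one of its local network devices, it processes the packet and then returns the response directly to the client according to its routing table.

In VS/DR, under default TCP/IP stack processing, the destination address of the request packet is the VIP, so the source address of the response packet is also the VIP. The response therefore needs no modification and can go straight back to the client, who sees normal service and does not know which server handled the request. Like VS/TUN, the VS/DR load balancer only sees the client-to-server half of the connection and moves through states according to a half-connection TCP finite state machine.

The details above are taken from the official LVS site.

VS (Virtual Server) #the director, server1

RS (Real Server) #the real backend servers, server2 and server3

1.Random MAC matching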

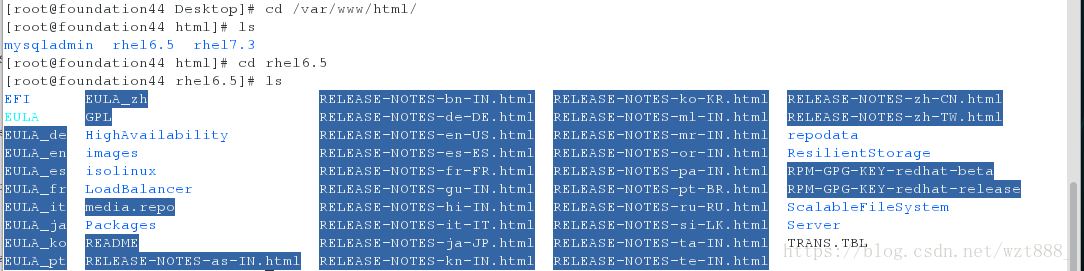

#On the physical host

[root@foundation44 Desktop]# cd /var/www/html/

[root@foundation44 html]# ls

mysqladmin rhel6.5 rhel7.3

[root@foundation44 html]# cd rhel6.5

[root@foundation44 rhel6.5]# ls #list the yum repositories; RHEL 6 ships several

EFI EULA_zh RELEASE-NOTES-bn-IN.html RELEASE-NOTES-ko-KR.html RELEASE-NOTES-zh-CN.html

EULA GPL RELEASE-NOTES-de-DE.html RELEASE-NOTES-ml-IN.html RELEASE-NOTES-zh-TW.html

EULA_de HighAvailability RELEASE-NOTES-en-US.html RELEASE-NOTES-mr-IN.html repodata

EULA_en images RELEASE-NOTES-es-ES.html RELEASE-NOTES-or-IN.html ResilientStorage

EULA_es isolinux RELEASE-NOTES-fr-FR.html RELEASE-NOTES-pa-IN.html RPM-GPG-KEY-redhat-beta

EULA_fr LoadBalancer RELEASE-NOTES-gu-IN.html RELEASE-NOTES-pt-BR.html RPM-GPG-KEY-redhat-release

EULA_it media.repo RELEASE-NOTES-hi-IN.html RELEASE-NOTES-ru-RU.html ScalableFileSystem

EULA_ja Packages RELEASE-NOTES-it-IT.html RELEASE-NOTES-si-LK.html Server #Server is the repository we normally use

EULA_ko README RELEASE-NOTES-ja-JP.html RELEASE-NOTES-ta-IN.html TRANS.TBL

EULA_pt RELEASE-NOTES-as-IN.html RELEASE-NOTES-kn-IN.html RELEASE-NOTES-te-IN.html

#On server1 (the director):

<1>/etc/init.d/varnish stop

<2>/etc/init.d/httpd stop

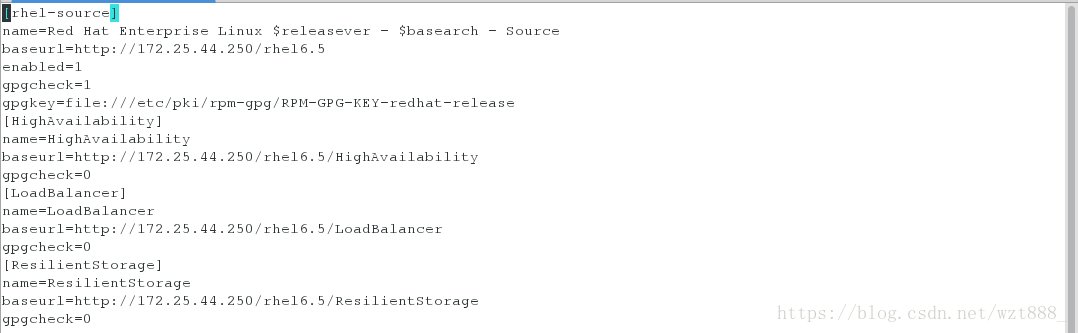

<3> vim /etc/yum.repos.d/rhel-source.repo #this lab needs additional repositories; add them one by one

[rhel-source]

name=Red Hat Enterprise Linux $releasever - $basearch - Source

baseurl=http://172.25.44.250/rhel6.5

enabled=1

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-redhat-release

[HighAvailability] #high availability

name=HighAvailability

baseurl=http://172.25.44.250/rhel6.5/HighAvailability

gpgcheck=0

[LoadBalancer] #load balancing

name=LoadBalancer

baseurl=http://172.25.44.250/rhel6.5/LoadBalancer

gpgcheck=0

[ResilientStorage] #resilient (clustered) storage

name=ResilientStorage

baseurl=http://172.25.44.250/rhel6.5/ResilientStorage

gpgcheck=0

<4>yum repolist #if this succeeds, the repositories are configured correctly

Loaded plugins: product-id, subscription-manager

This system is not registered to Red Hat Subscription Management. You can use subscription-manager to register.

HighAvailability | 3.9 kB 00:00

HighAvailability/primary_db | 43 kB 00:00

LoadBalancer | 3.9 kB 00:00

LoadBalancer/primary_db | 7.0 kB 00:00

ResilientStorage | 3.9 kB 00:00

ResilientStorage/primary_db | 47 kB 00:00

rhel-source | 3.9 kB 00:00

repo id repo name status

HighAvailability HighAvailability 56

LoadBalancer LoadBalancer 4

ResilientStorage ResilientStorage 62

rhel-source Red Hat Enterprise Linux 6Server - x86_64 - S 3,690

repolist: 3,812 #a non-zero count here means the repositories are working

<5> yum install ipvsadm -y

<6> ipvsadm -L

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

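<7>ipvsadm -A -t 172.25.44.100:80 -s rr #-A adds a virtual service whose traffic will be spread over the real servers; -s rr selects round-robin scheduling

<8>ipvsadm -a -t 172.25.44.100:80 -r 172.25.44.2:80 -g #-a adds a real server; -g selects direct routing mode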

<9>ipvsadm -a -t 172.25.44.100:80 -r 172.25.44.3:80 -g

<10>ipvsadm -L

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 172.25.44.100:http rr #the TCP virtual service was added

-> server2:http Route 1 0 0

-> server3:http Route 1 0 0

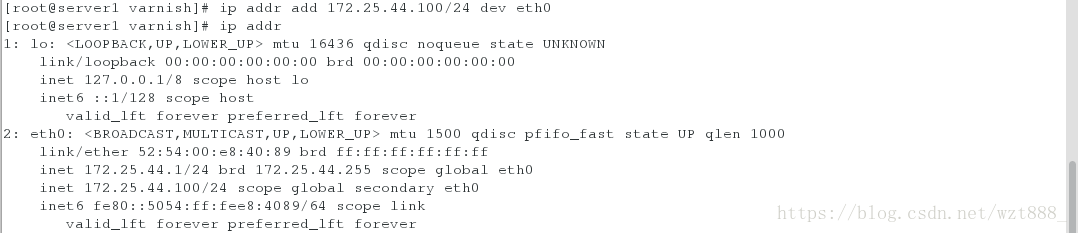
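The ipvsadm commands above follow a fixed pattern, so they can be generated for any number of real servers. The sketch below only prints the commands rather than running them. The function name `lvs_dr_rules` is made up for this example, and it assumes every real server listens on the same port as the virtual service.

```shell
#!/usr/bin/env bash
# Print (do not run) the ipvsadm commands for a DR-mode virtual service.
# Usage: lvs_dr_rules VIP PORT RIP [RIP...]
lvs_dr_rules() {
    local vip=$1 port=$2
    shift 2
    # -A adds the virtual service, -s rr selects round-robin scheduling
    echo "ipvsadm -A -t ${vip}:${port} -s rr"
    local rip
    for rip in "$@"; do
        # -a adds a real server, -g selects direct routing
        echo "ipvsadm -a -t ${vip}:${port} -r ${rip}:${port} -g"
    done
}

# Reproduces the three commands used in this walkthrough
lvs_dr_rules 172.25.44.100 80 172.25.44.2 172.25.44.3
```

To apply the rules for real, the output can be reviewed and then piped into `sh` as root on the director.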

<11>ip addr add 172.25.44.100/24 dev eth0 #add the VIP

<12>ip addr

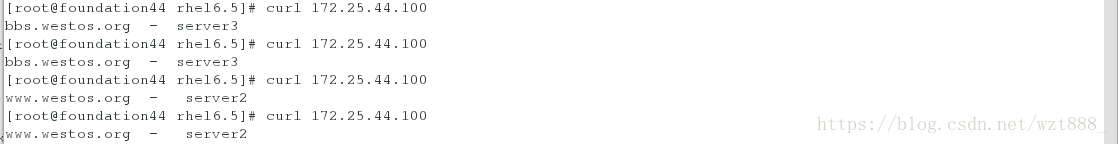

#Test from the physical host

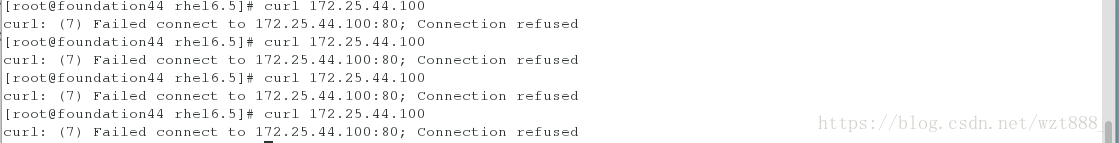

curl 172.25.44.100 #hangs, because server2 and server3 do not have the VIP (virtual IP) configured

[root@foundation44 rhel6.5]# curl 172.25.44.100

^C

[root@foundation44 rhel6.5]# curl 172.25.44.100

^C

Each RS must also hold the VIP before a connection can be established

#On server2

<1>ip addr add 172.25.44.100/24 dev eth0 #add the VIP by hand (temporary, not persistent)

<2>/etc/init.d/httpd start

#On server3

<1>ip addr add 172.25.44.100/24 dev eth0

<2>/etc/init.d/httpd start

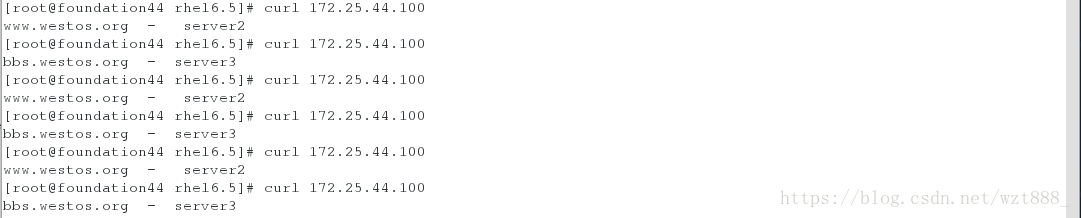

#Test again from the physical host

[root@foundation44 ~]# curl 172.25.44.100

www.westos.org - server2

[root@foundation44 ~]# curl 172.25.44.100

bbs.westos.org - server3

[root@foundation44 ~]# curl 172.25.44.100

www.westos.org - server2
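To see how requests are spread over the real servers without reading the responses one by one, the output of repeated curl calls can be tallied. This is a small sketch; `tally_backends` is a name invented here, and the sample input simply reuses the three responses shown above.

```shell
#!/usr/bin/env bash
# Count identical response lines read from stdin.
tally_backends() {
    # uniq -c pads the count with spaces; sed strips that padding
    sort | uniq -c | sed 's/^ *//'
}

# Sample input taken from the curl output above
printf '%s\n' \
    'www.westos.org - server2' \
    'bbs.westos.org - server3' \
    'www.westos.org - server2' | tally_backends
```

On the physical host the same function could be fed live data, for example `for i in 1 2 3 4; do curl -s 172.25.44.100; done | tally_backends`.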

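2.ARP: the request for the VIP is broadcast on the LAN, and the client talks to whichever host answers first

arp_ignore controls how the kernel answers ARP requests:

0 - (default) reply for any local target IP address, configured on any interface

1 - reply only if the target IP address is a local address configured on the incoming interface

2 - reply only if the target IP address is a local address configured on the incoming interface and the sender's IP address is in the same subnet on that interface

3 - do not reply for local addresses configured with scope host; only resolutions for global and link addresses are answered

4-7 - reserved

8 - do not reply for any local address

arp_announce restricts which local source IP address is announced in ARP requests sent on an interface:

0 - (default) use any local address, configured on any interface

1 - try to avoid local addresses that are not in the target's subnet for this interface. This is useful when target hosts reachable through this interface require the source IP address in ARP requests to belong to their logical network configured on the receiving interface. When generating the request, the kernel checks all subnets that include the target IP and keeps the source address if it belongs to one of them. If there is no such subnet, the source address is chosen according to the rules for level 2.

2 - always use the best local address for this target. In this mode the source address in the IP packet is ignored and the kernel tries to select the local address it prefers for talking to the target host. That address is chosen by looking for primary IP addresses on all subnets of the outgoing interface that include the target IP address. If no suitable local address is found, the first local address on the outgoing interface, or on any other interface, is used in the hope that a reply will still arrive.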

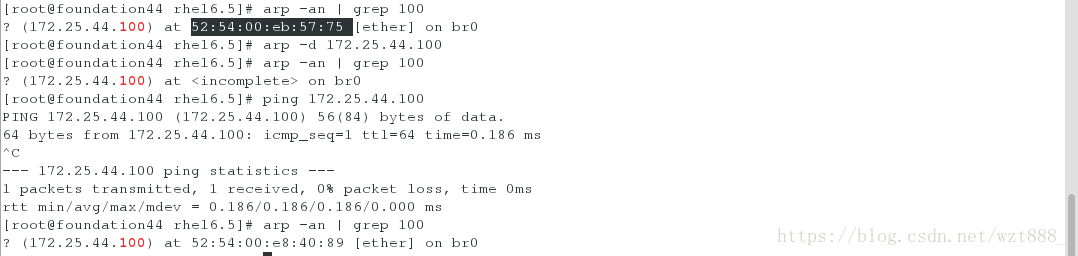
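The two kernel parameters described above are commonly set on each RS with arp_ignore=1 and arp_announce=2. The sketch below prints the matching sysctl.conf lines. Note the assumption it relies on: this approach expects the VIP to be bound to `lo` with a /32 mask (for example `ip addr add 172.25.44.100/32 dev lo`), not to eth0 as in this walkthrough, because with arp_ignore=1 an address on eth0 is still answered for. The function name `rs_arp_sysctl` is invented for this example.

```shell
#!/usr/bin/env bash
# Print sysctl.conf lines that stop a real server from answering
# or announcing ARP for a VIP bound to lo.
rs_arp_sysctl() {
    local iface
    for iface in all lo; do
        # 1: answer only for addresses configured on the incoming interface
        echo "net.ipv4.conf.${iface}.arp_ignore = 1"
        # 2: always pick the best local source address for ARP requests
        echo "net.ipv4.conf.${iface}.arp_announce = 2"
    done
}

rs_arp_sysctl
```

The printed lines can be appended to /etc/sysctl.conf on the RS and loaded with `sysctl -p`.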

#MAC addresses

server1 link/ether 52:54:00:e8:40:89 brd ff:ff:ff:ff:ff:ff

server2 link/ether 52:54:00:d3:bc:cf brd ff:ff:ff:ff:ff:ff

server3 link/ether 52:54:00:eb:57:75 brd ff:ff:ff:ff:ff:ff

#On the physical host

<1>arp -an | grep 100 #show the MAC address currently cached for the VIP

? (172.25.44.100) at 52:54:00:e8:40:89 [ether] on br0

<2>arp -d 172.25.44.100 #delete the cached entry

<3>arp -an | grep 100

? (172.25.44.100) at <incomplete> on br0

<4> ping 172.25.44.100

<5>arp -an | grep 100 #check again: the MAC address has changed

? (172.25.44.100) at 52:54:00:d3:bc:cf [ether] on br0

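##Repeating the test shows that the result is random: the three servers sit in the same VLAN and hold the same IP, so there is no guarantee that the client reaches the director (server1) every time

To fix this, use arptables (which filters ARP traffic in the kernel, much as iptables does for IP) as follows: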

#On server2 (repeat on server3, using 172.25.44.3 as the --mangle-ip-s address)

<1>yum install arptables_jf -y

<2>arptables -L #list the rules; it works much like iptables

Chain IN (policy ACCEPT)

target source-ip destination-ip source-hw destination-hw hlen op hrd pro

Chain OUT (policy ACCEPT)

target source-ip destination-ip source-hw destination-hw hlen op hrd pro

Chain FORWARD (policy ACCEPT)

target source-ip destination-ip source-hw destination-hw hlen op hrd pro

<3>arptables -A IN -d 172.25.44.100 -j DROP #do not answer ARP requests for the VIP

<4> arptables -A OUT -s 172.25.44.100 -j mangle --mangle-ip-s 172.25.44.2

<5>/etc/init.d/arptables_jf save #save the rules so they survive a restart

Saving current rules to /etc/sysconfig/arptables: [ OK ]

<6>/etc/init.d/arptables_jf restart

Flushing all current rules and user defined chains: [ OK ]

Clearing all current rules and user defined chains: [ OK ]

Applying arptables firewall rules: [ OK ]

<7>arptables -nL

Chain IN (policy ACCEPT)

target source-ip destination-ip source-hw destination-hw hlen op hrd pro

DROP 0.0.0.0/0 172.25.44.100 00/00 00/00 any 0000/0000 0000/0000 0000/0000

Chain OUT (policy ACCEPT)

target source-ip destination-ip source-hw destination-hw hlen op hrd pro

mangle 172.25.44.100 0.0.0.0/0 00/00 00/00 any 0000/0000 0000/0000 0000/0000 --mangle-ip-s 172.25.44.2

Chain FORWARD (policy ACCEPT)

target source-ip destination-ip source-hw destination-hw hlen op hrd pro

#Test again from the physical host (the VIP now resolves to the VS every time)

[root@foundation44 ~]# arp -an | grep 100

? (172.25.44.100) at **52:54:00:e8:40:89** [ether] on br0

[root@foundation44 ~]# arp -d 172.25.44.100

[root@foundation44 ~]# arp -an | grep 100

? (172.25.44.100) at <incomplete> on br0

[root@foundation44 ~]# ping 172.25.44.100

PING 172.25.44.100 (172.25.44.100) 56(84) bytes of data.

64 bytes from 172.25.44.100: icmp_seq=1 ttl=64 time=0.180 ms

^C

--- 172.25.44.100 ping statistics ---

1 packets transmitted, 1 received, 0% packet loss, time 0ms

rtt min/avg/max/mdev = 0.180/0.180/0.180/0.000 ms

[root@foundation44 ~]# arp -an | grep 100

? (172.25.44.100) at **52:54:00:e8:40:89** [ether] on br0
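The manual check above (flush the ARP entry, ping, read the MAC) can be made easier to read by mapping the MAC back to a server name. This is a sketch only: `vip_owner` is a name invented here, and the MAC-to-server table is copied from the addresses listed earlier in this section, so it applies to this lab alone.

```shell
#!/usr/bin/env bash
# Read `arp -an` output on stdin and report which lab server
# currently answers for the given IP.
vip_owner() {
    local vip=$1 mac
    # In `arp -an` output, field 2 is "(ip)" and field 4 is the MAC address
    mac=$(awk -v ip="($vip)" '$2 == ip { print $4 }')
    case "$mac" in
        52:54:00:e8:40:89) echo server1 ;;
        52:54:00:d3:bc:cf) echo server2 ;;
        52:54:00:eb:57:75) echo server3 ;;
        *) echo unknown ;;
    esac
}

# Example with a line captured earlier in this section
echo '? (172.25.44.100) at 52:54:00:d3:bc:cf [ether] on br0' | vip_owner 172.25.44.100
```

On the physical host the live table can be checked with `arp -an | vip_owner 172.25.44.100`. Once the arptables rules are in place, the answer should always be server1.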

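[root@server2 html]# iptables -A INPUT -j DROP #drop all incoming packets; connections fail

[root@server2 ~]# iptables -F #flush the firewall rules; connections work again

iptables and LVS both hook into the INPUT chain, and iptables takes precedence. Only when iptables lets a packet into the INPUT chain does the LVS rule take effect, so the request is served by port 80 on an RS rather than by port 80 on the VS.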

3.ldirectord #adds health checking to LVS and keeps the LVS rules up to date

#On server1

<1> yum install ldirectord-3.9.5-3.1.x86_64.rpm -y

<2> rpm -ql ldirectord #list the files installed by ldirectord

/etc/ha.d

/etc/ha.d/resource.d

/etc/ha.d/resource.d/ldirectord

/etc/init.d/ldirectord

/etc/logrotate.d/ldirectord

/usr/lib/ocf/resource.d/heartbeat/ldirectord

/usr/sbin/ldirectord

/usr/share/doc/ldirectord-3.9.5

/usr/share/doc/ldirectord-3.9.5/COPYING

/usr/share/doc/ldirectord-3.9.5/ldirectord.cf

/usr/share/man/man8/ldirectord.8.gz

<3>cp /usr/share/doc/ldirectord-3.9.5/ldirectord.cf /etc/ha.d

<4>cd /etc/ha.d

<5>ls

ldirectord.cf resource.d shellfuncs

<6>vim ldirectord.cf

Starting at line 25 of the file:

virtual=172.25.44.100:80

real=172.25.44.2:80 gate

real=172.25.44.3:80 gate

fallback=127.0.0.1:80 gate

service=http

scheduler=rr

#persistent=600

#netmask=255.255.255.255

protocol=tcp

checktype=negotiate

checkport=80

request="index.html"

#receive="Test Page"

#virtualhost=www.x.y.z

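<7>ipvsadm -C #clear all previously added rules

<8>vim /etc/httpd/conf.d/

Set the listening port to: Listen 80

<9>/etc/init.d/httpd restart

<10>/etc/init.d/ldirectord start #start ldirectord so that it loads ldirectord.cf and rebuilds the ipvs rules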

#Test from the physical host (httpd is running on both server2 and server3)

[root@foundation44 rhel6.5]# curl 172.25.44.100

bbs.westos.org - server3

[root@foundation44 rhel6.5]# curl 172.25.44.100

www.westos.org - server2

[root@foundation44 rhel6.5]# curl 172.25.44.100

bbs.westos.org - server3

[root@foundation44 rhel6.5]# curl 172.25.44.100

www.westos.org - server2

#On server2:

[root@server2 ~]# /etc/init.d/httpd stop #stop httpd on server2 only

#Test from the physical host (server2 is down, server3 is still serving)

[root@foundation44 rhel6.5]# curl 172.25.44.100

bbs.westos.org - server3

[root@foundation44 rhel6.5]# curl 172.25.44.100

bbs.westos.org - server3

[root@foundation44 rhel6.5]# curl 172.25.44.100

bbs.westos.org - server3

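On server2:

[root@server2 ~]# /etc/init.d/httpd stop #stop the service to simulate a failed server

On server3:

[root@server3 ~]# /etc/init.d/httpd stop #stop the service to simulate a failed server

#Test from the physical host (httpd is down on both server2 and server3)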

[root@foundation44 rhel6.5]# curl 172.25.44.100

server1 ------under maintenance-------

[root@foundation44 rhel6.5]# curl 172.25.44.100

server1 ------under maintenance-------

[root@foundation44 rhel6.5]# curl 172.25.44.100

server1 ------under maintenance-------

[root@foundation44 rhel6.5]# curl 172.25.44.100

server1 ------under maintenance-------

4.keepalived

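What is keepalived?

keepalived is a service that keeps a cluster highly available. Like heartbeat, it is used to eliminate single points of failure.

#Prepare the environment

Reduce the memory of server2 and server3 to 512M

Create one more virtual machine, server4

The three steps of building from source

<1> ./configure --prefix=/usr/local/keepalived --with-init=SYSV #generate the Makefile

<2>make #compile

<3>make install #install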

[root@server1 html]# /etc/init.d/ldirectord stop

Get the source tarball: keepalived-2.0.6.tar.gz

Source of the tarball: Keepalived for Linux (the official website)

#Configure server1

1. tar zxf keepalived-2.0.6.tar.gz

2. cd keepalived-2.0.6

3. less INSTALL #check which dependencies are required

4. yum install openssl-devel libnl3-devel ipset-devel iptables-devel libnfnetlink-devel -y

5. ./configure --prefix=/usr/local/keepalived --with-init=SYSV && make && make install

cd /usr/local/keepalived/etc/rc.d/init.d/ && chmod +x keepalived

6. ln -s /usr/local/keepalived/etc/rc.d/init.d/keepalived /etc/init.d/ #create symlinks

7. ln -s /usr/local/keepalived/etc/keepalived/ /etc/

8. ln -s /usr/local/keepalived/etc/sysconfig/keepalived /etc/sysconfig/

9. ln -s /usr/local/keepalived/sbin/keepalived /sbin/

10. which keepalived #confirm where the keepalived binary is found

11. /etc/init.d/keepalived stop

12. /etc/init.d/keepalived start

13. /etc/init.d/keepalived restart #a clean restart shows the installation works

14. vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

root@localhost

}

notification_email_from keepalived@localhost

smtp_server 192.168.200.1

smtp_connect_timeout 30

router_id LVS_DEVEL

vrrp_skip_check_adv_addr

#vrrp_strict

vrrp_garp_interval 0

vrrp_gna_interval 0

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 44

priority 100 #the higher the value, the higher the priority

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

172.25.44.100

}

}

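virtual_server 172.25.44.100 80 { #the VIP of the VS; it is added automatically when the service starts

delay_loop 3 #interval between health checks of the real servers

lb_algo rr #scheduling algorithm

lb_kind DR #forwarding mode: DR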

persistence_timeout 50

protocol TCP

real_server 172.25.44.2 80 {

weight 1

TCP_CHECK {

connect_timeout 3

retry 3

delay_before_retry 3

}

}

real_server 172.25.44.3 80 {

weight 1

TCP_CHECK {

connect_timeout 3

retry 3

delay_before_retry 3

}

}

}

15.[root@server1 keepalived]#/etc/init.d/keepalived restart

16.[root@server1 keepalived]#ipvsadm -ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 172.25.44.100:80 rr persistent 50

-> 172.25.44.2:80 Route 1 0 0

-> 172.25.44.3:80 Route 1 0 0

17.iptables -L

Chain INPUT (policy ACCEPT)

target prot opt source destination

DROP all -- anywhere 172.25.44.100

Chain FORWARD (policy ACCEPT)

target prot opt source destination

Chain OUTPUT (policy ACCEPT)

target prot opt source destination
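The TCP_CHECK blocks in the keepalived.conf above decide whether a real server is healthy by trying to open a TCP connection to it. The sketch below imitates that idea by hand. It is not keepalived's implementation: `tcp_check` is a name invented here, it relies on bash's /dev/tcp feature and on the coreutils `timeout` command, and it tries once rather than retrying.

```shell
#!/usr/bin/env bash
# Report "up" if a TCP connection to HOST:PORT succeeds within the
# timeout, otherwise "down".
# Usage: tcp_check HOST PORT [TIMEOUT_SECONDS]
tcp_check() {
    local host=$1 port=$2 timeout_s=${3:-3}
    # bash opens a TCP socket when /dev/tcp/HOST/PORT is redirected
    if timeout "$timeout_s" bash -c "exec 3<>/dev/tcp/$host/$port" 2>/dev/null; then
        echo up
    else
        echo down
    fi
}

# Example calls for this lab (run on the director):
#   tcp_check 172.25.44.2 80
#   tcp_check 172.25.44.3 80
```

The default of 3 seconds mirrors the connect_timeout value used in the configuration above.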

#On server4:

<1>cd /etc/keepalived

<2>vim keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

root@localhost

}

notification_email_from keepalived@localhost

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id LVS_DEVEL

vrrp_skip_check_adv_addr

#vrrp_strict #commented out so that keepalived does not modify the firewall rules

vrrp_garp_interval 0

vrrp_gna_interval 0

}

vrrp_instance VI_1 {

state BACKUP

interface eth0

virtual_router_id 44

priority 50 #lower priority than server1

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

172.25.44.100

}

}

virtual_server 172.25.44.100 80 {

delay_loop 3

lb_algo rr

lb_kind NAT #changed to NAT here

persistence_timeout 50

protocol TCP

real_server 172.25.44.2 80 {

weight 1

TCP_CHECK {

connect_timeout 3

retry 3

delay_before_retry 3

}

}

}

<3>/etc/init.d/keepalived restart
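With both directors configured, the priority values decide which one holds the VIP: among the nodes that are alive, the one with the highest priority becomes MASTER. The sketch below illustrates only that rule. `elect_master` is a name invented here, and real VRRP also handles advertisements, timers and tie-breaking, which are left out.

```shell
#!/usr/bin/env bash
# Print the name of the node with the highest priority.
# Each argument is NAME:PRIORITY and stands for a node that is alive.
elect_master() {
    # sort numerically on the priority field, highest first
    printf '%s\n' "$@" | sort -t: -k2,2nr | head -n1 | cut -d: -f1
}

# Both directors alive: server1 (priority 100) holds the VIP
elect_master server1:100 server4:50

# server1 down: server4 (priority 50) takes over
elect_master server4:50
```

This can be observed in the lab by stopping keepalived on server1 and running `ip addr` on server4 to see whether 172.25.44.100 has moved there.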

#server2

[root@server2 ~]# ip addr add 172.25.44.100/24 dev eth0

[root@server2 ~]# /etc/init.d/iptables stop

[root@server2 ~]# /etc/init.d/httpd start

#server3

[root@server3 ~]# ip addr add 172.25.44.100/24 dev eth0

[root@server3 ~]# /etc/init.d/iptables stop

[root@server3 ~]# /etc/init.d/httpd start

#Test from the physical host:

[root@foundation44 Desktop]# curl 172.25.44.100

www.westos.org - server2

[root@foundation44 Desktop]# curl 172.25.44.100

www.westos.org - server2

[root@foundation44 Desktop]# curl 172.25.44.100

www.westos.org - server3

[root@foundation44 Desktop]# curl 172.25.44.100

www.westos.org - server3

[root@foundation44 Desktop]# curl 172.25.44.100

www.westos.org - server3

Simulate a server failure

#server2:

[root@server2 ~]# /etc/init.d/httpd stop

#server3:

[root@server3 ~]# /etc/init.d/httpd stop

#Test from the physical host:

[root@foundation44 rhel6.5]# curl 172.25.44.100

curl: (7) Failed connect to 172.25.44.100:80; Connection refused

[root@foundation44 rhel6.5]# curl 172.25.44.100

curl: (7) Failed connect to 172.25.44.100:80; Connection refused

[root@foundation44 rhel6.5]# curl 172.25.44.100

curl: (7) Failed connect to 172.25.44.100:80; Connection refused

[root@foundation44 rhel6.5]# curl 172.25.44.100

curl: (7) Failed connect to 172.25.44.100:80; Connection refused

#This time server1 does not step in to serve the requests itself