前言

今天想在win 10上搭一个Hadoop的开发环境,希望能够直联Hadoop集群并提交MapReduce任务,这里给出相关的关键配置。

步骤

关于maven以及idea的安装这里不再赘述,非常简单。

- 在win 10上配置Hadoop

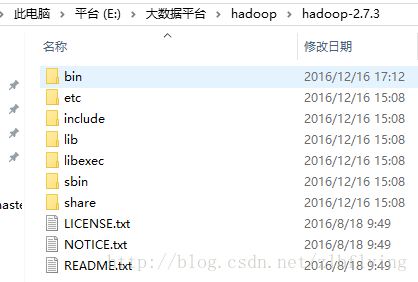

将Hadoop 2.7.3直接解压到系统某个位置,以我的文件名称为例,解压到E:\大数据平台\hadoop\hadoop-2.7.3中

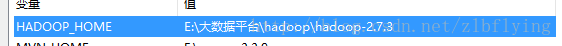

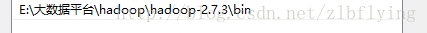

配置HADOOP_HOME以及PATH

创建名为HADOOP_HOME的环境变量

将bin路径添加到PATH中

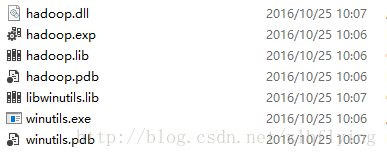

添加Hadoop在win上需要的相关库文件,将其添加到hadoop的bin目录中

建立maven项目,在pom文件中添加相关的依赖

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>edu.hfut.wls</groupId>

<artifactId>hadoop</artifactId>

<version>1.0-SNAPSHOT</version>

<properties>

<project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>

<hadoop.version>2.7.3</hadoop.version>

</properties>

<dependencies>

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>4.12</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>${hadoop.version}</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-common</artifactId>

<version>${hadoop.version}</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-hdfs</artifactId>

<version>${hadoop.version}</version>

</dependency>

</dependencies>

</project>- 将Hadoop的相关配置文件添加到resources文件夹下

- 编写WordCount程序

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

import java.io.IOException;

/**

* Created by lianbin zhang on 2016/12/16.

*/

public class WordCount extends Configured implements Tool {

public int run(String[] strings) throws Exception {

try {

Configuration conf = getConf();

conf.set("mapreduce.job.jar", "src/main/wc.jar");

conf.set("mapreduce.framework.name", "yarn");

conf.set("yarn.resourcemanager.hostname", "10.20.10.100");

conf.set("mapreduce.app-submission.cross-platform", "true");

Job job = Job.getInstance(conf);

job.setJarByClass(WordCount.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(LongWritable.class);

job.setMapperClass(WcMapper.class);

job.setReducerClass(WcReducer.class);

job.setInputFormatClass(TextInputFormat.class);

job.setOutputFormatClass(TextOutputFormat.class);

FileInputFormat.setInputPaths(job, "hdfs://ns1/myid");

FileOutputFormat.setOutputPath(job, new Path("hdfs://ns1/out"));

job.waitForCompletion(true);

} catch (Exception e) {

e.printStackTrace();

}

return 0;

}

public static class WcMapper extends Mapper<LongWritable, Text, Text, LongWritable>{

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

String mVal = value.toString();

context.write(new Text(mVal), new LongWritable(1));

}

}

public static class WcReducer extends Reducer<Text, LongWritable, Text, LongWritable>{

@Override

protected void reduce(Text key, Iterable<LongWritable> values, Context context) throws IOException, InterruptedException {

long sum = 0;

for(LongWritable lVal : values){

sum += lVal.get();

}

context.write(key, new LongWritable(sum));

}

}

public static void main(String[] args) throws Exception {

ToolRunner.run(new WordCount(), args);

}

}

注意:在run方法中有四项配置:

- mapreduce.job.jar:应用程序打包后的jar位置;

- mapreduce.framework.name:使用的mapreduce框架

- yarn.resourcemanager.hostname:rm的主机名,可以在hosts文件中配置对应的主机名

- mapreduce.app-submission.cross-platform:是否跨平台提交mr程序

- 提交程序

提交程序进行运行时,由于跨平台提交,默认会将当前win的登陆用户作为user去操作hdfs集群,这里会存在权限问题,大多数解决方案中都是对hdfs文件的权限进行修改。本文采用的方案是在提交时添加虚拟机运行参数

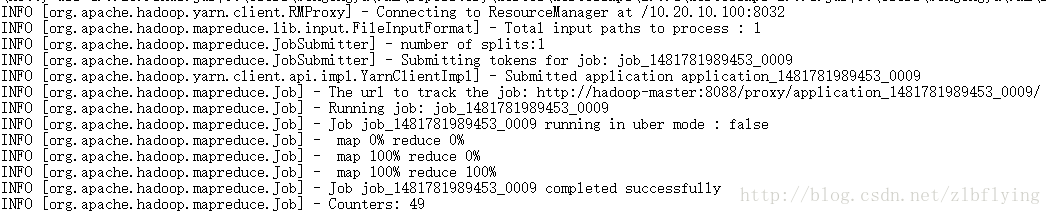

-DHADOOP_USER_NAME=hadoop // hadoop需要换成你自己的用户名- 运行结果