Preface

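This article covers some commonly used Hive knowledge. What sets it apart from other manuals online is that it belongs to a systematic documentation series written by the author, rather than being put together at random.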

The environment used in this article:

- CentOS 6.5 (64-bit)

- Hive 2.1.1

- Java 1.8

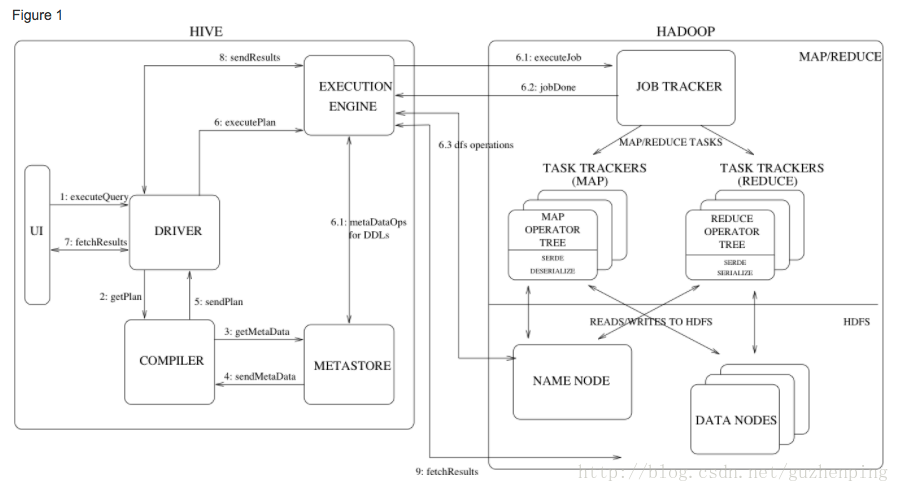

Hive Architecture

Quoted from the official Hive website; read it carefully:

Figure 1 also shows how a typical query flows through the system. The UI calls the execute interface to the Driver (step 1 in Figure 1). The Driver creates a session handle for the query and sends the query to the compiler to generate an execution plan (step 2). The compiler gets the necessary metadata from the metastore (steps 3 and 4). This metadata is used to typecheck the expressions in the query tree as well as to prune partitions based on query predicates. The plan generated by the compiler (step 5) is a DAG of stages with each stage being either a map/reduce job, a metadata operation or an operation on HDFS. For map/reduce stages, the plan contains map operator trees (operator trees that are executed on the mappers) and a reduce operator tree (for operations that need reducers). The execution engine submits these stages to appropriate components (steps 6, 6.1, 6.2 and 6.3). In each task (mapper/reducer) the deserializer associated with the table or intermediate outputs is used to read the rows from HDFS files and these are passed through the associated operator tree. Once the output is generated, it is written to a temporary HDFS file through the serializer (this happens in the mapper in case the operation does not need a reduce). The temporary files are used to provide data to subsequent map/reduce stages of the plan. For DML operations the final temporary file is moved to the table’s location. This scheme is used to ensure that dirty data is not read (file rename being an atomic operation in HDFS). For queries, the contents of the temporary file are read by the execution engine directly from HDFS as part of the fetch call from the Driver (steps 7, 8 and 9).
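Hive's EXPLAIN statement prints the plan the compiler produces (step 5 in the quote above) without running the query, which makes the DAG of stages visible. A minimal sketch; the table explain_demo is hypothetical and exists only for this illustration, and the exact output differs between Hive versions and execution engines:

```sql
-- Hypothetical table, created only so there is something to explain
create table if not exists explain_demo (id bigint, name string, age int);

-- Print the compiled plan instead of executing the query
explain
select age, count(*) as cnt
from explain_demo
where age > 18
group by age;
```

With the MapReduce engine the output typically starts with a STAGE DEPENDENCIES section (a root map/reduce stage plus a Stage-0 that depends on it), followed by STAGE PLANS, where the map/reduce stage lists its Map Operator Tree and Reduce Operator Tree and Stage-0 is the Fetch Operator through which the Driver reads the results.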

Hive QL

- Create a database

-- Create the hello_world database
create database hello_world;

- List all databases

show databases;

- List all tables
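SHOW TABLES lists only the tables of the current database, which is default unless you switch. To list the tables of the hello_world database created above, switch to it first; a short sketch:

```sql
-- Make hello_world the current database for this session
use hello_world;

-- Confirm which database is now current
select current_database();

-- Alternatively, list another database's tables without switching
show tables in hello_world;
```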

show tables;

- Create an internal (managed) table

-- Create hello_world_inner
create table hello_world_inner
(
  id bigint,
  account string,
  name string,
  age int
)
row format delimited fields terminated by '\t';

- Create a partitioned table

create table hello_world_parti
(
  id bigint,
  name string
)
partitioned by (dt string, country string);

- Show a table's partitions
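A newly created partitioned table has no partitions, so SHOW PARTITIONS on it prints nothing. For illustration, partitions can be registered by hand; the dt and country values below are hypothetical:

```sql
-- Register two partitions; by default each maps to a subdirectory
-- of the table location, e.g. dt=2017-01-01/country=CN
alter table hello_world_parti add if not exists
  partition (dt='2017-01-01', country='CN')
  partition (dt='2017-01-01', country='US');
```

SHOW PARTITIONS should then list dt=2017-01-01/country=CN and dt=2017-01-01/country=US.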

show partitions hello_world_parti;

- Rename a table

alter table hello_world_parti rename to hello_world2_parti;

- Load data
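LOAD DATA does not create the target table, and the user_info table used in the statement below is not defined in this article. A hypothetical definition, assuming user_info.txt holds tab-separated id, account, name and age columns laid out like hello_world_inner:

```sql
-- Hypothetical schema: the real columns depend on what user_info.txt contains
create table if not exists user_info
(
  id bigint,
  account string,
  name string,
  age int
)
row format delimited fields terminated by '\t';
```

For a text table Hive does not check the file's contents when loading; fields that cannot be parsed as the declared column type show up as NULL when queried.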

load data local inpath '/home/deploy/user_info.txt' into table user_info;

Ways to Load Data

Suppose there is a test table:

create table hello
(
  id int,
  name string,
  message string
)
partitioned by (
  dt string
)
ROW FORMAT DELIMITED
FIELDS TERMINATED BY '\t'
STORED AS TEXTFILE
;

- Load data from the local file system into a Hive table

For example:

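-- hello is partitioned by dt, so a partition must be specified; '2017-01-01' is only an example value
load data local inpath 'data.txt' into table hello partition (dt='2017-01-01');

- Load data from HDFS into a Hive table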
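Without the LOCAL keyword the path is resolved on HDFS, and Hive moves (rather than copies) the file into the table's directory. A sketch; the HDFS paths and the dt value are hypothetical:

```sql
-- The source file is moved, so it no longer exists under /user/deploy/input afterwards
load data inpath '/user/deploy/input/data.txt'
into table hello partition (dt='2017-01-02');

-- OVERWRITE replaces the partition's existing files instead of adding to them
load data inpath '/user/deploy/input/data2.txt'
overwrite into table hello partition (dt='2017-01-02');
```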

- Query data from another table and insert it into a Hive table
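This is done with INSERT ... SELECT. A minimal sketch that copies rows from one partition of hello into a partition of hello_world_parti; the partition values are hypothetical. Hive matches the select list to the target's non-partition columns by position (here id, name):

```sql
-- Append the query result to the target partition
insert into table hello_world_parti partition (dt='2017-01-01', country='CN')
select id, name
from hello
where dt = '2017-01-01';

-- INSERT OVERWRITE replaces the partition's current contents instead of appending
insert overwrite table hello_world_parti partition (dt='2017-01-01', country='CN')
select id, name
from hello
where dt = '2017-01-01';
```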

- Create a table and fill it with data queried from another table at creation time
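This is CREATE TABLE AS SELECT (CTAS). A sketch; the new table name hello_copy and the dt value are hypothetical. In Hive 2.x the target of a CTAS cannot be a partitioned or external table:

```sql
-- Create hello_copy and populate it with the query result in one statement;
-- column names and types come from the select list
create table hello_copy
row format delimited fields terminated by '\t'
stored as textfile
as
select id, name, message
from hello
where dt = '2017-01-01';
```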

Conclusion

For more learning, discussion and technical analysis, you can join the WeChat group 谷同学的IT圈 by scanning the QR code: