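Copyright notice: this is an original article by the author. If you repost it, please keep the ownership notice and credit the source with a hyperlink: https://blog.csdn.net/qq_37138818/article/details/83304125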

1-Create a new Scrapy project

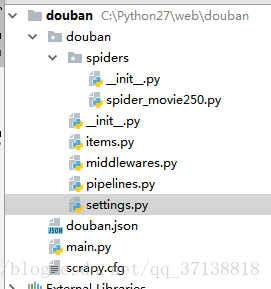

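Before you start crawling, you need to create a new Scrapy project. Change into the directory where you want to keep your code (your working directory) and run the command below. Here I create a project called douban for crawling Douban, stored under C:\python27\web:

Command: `scrapy startproject douban`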

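2-Directory structure

`scrapy startproject douban` generates a top-level douban folder containing scrapy.cfg and an inner douban package with items.py, pipelines.py, settings.py and a spiders folder (recent Scrapy versions also add middlewares.py). The spider file written in step 4 goes into douban/spiders/.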

3-Writing items

items.py declares the fields the spider collects for each record. For the Douban movie list we store each movie's rank, title, link (URL), rating and quote:

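```python
# -*- coding:utf-8 -*-
# douban/items.py
import scrapy


class MovieItem(scrapy.Item):
    # One record per movie on the Douban Top 250 list
    rank = scrapy.Field()   # position on the list
    title = scrapy.Field()  # movie title
    link = scrapy.Field()   # URL of the movie's detail page
    rate = scrapy.Field()   # rating
    quote = scrapy.Field()  # one-line quote (some movies have none)
```

4-Writing spider_movie250.py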

```python
# -*- coding:utf-8 -*-
# douban/spiders/spider_movie250.py
import scrapy

from douban.items import MovieItem


class Movie250Spider(scrapy.Spider):
    # Spider name, used by `scrapy crawl doubanmovie` (and therefore by main.py)
    name = 'doubanmovie'
    allowed_domains = ["douban.com"]
    start_urls = [
        "http://movie.douban.com/top250/"
    ]

    # Parse one list page
    def parse(self, response):
        # Each movie on the page is wrapped in <div class="item">
        for info in response.xpath('//div[@class="item"]'):
            item = MovieItem()
            # extract() returns a list of strings (empty if nothing matched)
            item['rank'] = info.xpath('div[@class="pic"]/em/text()').extract()
            item['title'] = info.xpath('div[@class="pic"]/a/img/@alt').extract()
            item['link'] = info.xpath('div[@class="pic"]/a/@href').extract()
            item['rate'] = info.xpath('div[@class="info"]/div[@class="bd"]/div[@class="star"]/span/text()').extract()
            item['quote'] = info.xpath('div[@class="info"]/div[@class="bd"]/p[@class="quote"]/span/text()').extract()
            yield item

        # Pagination: follow the "next page" link if there is one
        next_page = response.xpath('//span[@class="next"]/a/@href')
        if next_page:
            url = response.urljoin(next_page[0].extract())
            # Crawl the next page with the same parse() callback
            yield scrapy.Request(url, callback=self.parse)
```

5-Writing pipelines
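Both pipelines in this step do two things with each item: write it out as one JSON line, and insert the first value of each field into a table that must already exist. The stdlib-only sketch that follows reproduces those two steps offline, so they can be checked without a MySQL server. It rests on assumptions: a plain dict with made-up values stands in for a MovieItem, an in-memory sqlite3 database stands in for MySQL (sqlite3 uses `?` placeholders where MySQLdb uses `%s`), and the table layout with rank, title, rate, quote and link columns is assumed.

```python
import io
import json
import sqlite3

# Made-up record standing in for a MovieItem; this movie has no quote
item = {
    'rank': ['2'],
    'title': ['电影B'],
    'link': ['https://movie.douban.com/subject/example-b/'],
    'rate': ['8.5'],
    'quote': [],
}

# Step 1: one JSON object per line, as JsonWithEncodingCnblogsPipeline does
out = io.StringIO()  # stands in for the douban.json file
out.write(json.dumps(dict(item), ensure_ascii=False) + "\n")

# Step 2: insert into a table created beforehand (sqlite3 instead of MySQL)
conn = sqlite3.connect(":memory:")
# Backticks quote `rank`, a reserved word in MySQL 8.0+; SQLite accepts them too
conn.execute(
    "CREATE TABLE douban (`rank` TEXT, title TEXT, rate TEXT, quote TEXT, link TEXT)"
)


def first(values):
    # extract() returns a list; use '' when the list is empty
    return values[0] if values else ''


conn.execute(
    "INSERT INTO douban (`rank`, title, rate, quote, link) VALUES (?, ?, ?, ?, ?)",
    tuple(first(item[key]) for key in ('rank', 'title', 'rate', 'quote', 'link')),
)
row = conn.execute("SELECT `rank`, title, quote FROM douban").fetchone()

print(out.getvalue(), end="")
print(row)
```

Because of ensure_ascii=False, the Chinese title is written as-is rather than as \uXXXX escapes, and the empty quote list becomes an empty string instead of raising IndexError.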

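```python
# -*- coding: utf-8 -*-
# douban/pipelines.py
import codecs
import json

import MySQLdb
import MySQLdb.cursors
from twisted.enterprise import adbapi


# Store items as JSON, one object per line
class JsonWithEncodingCnblogsPipeline(object):

    def __init__(self):
        self.file = codecs.open('douban.json', 'w', encoding='utf-8')

    def process_item(self, item, spider):
        # ensure_ascii=False keeps Chinese text readable instead of \uXXXX escapes
        line = json.dumps(dict(item), ensure_ascii=False) + "\n"
        self.file.write(line)
        return item

    # Scrapy calls close_spider() on every pipeline when the crawl ends
    # (a method named spider_closed would never be called here)
    def close_spider(self, spider):
        self.file.close()


# Store items in a MySQL database
class MySQLStorePipeline(object):

    def __init__(self, dbargs):
        # adbapi runs the blocking MySQLdb calls in a thread pool
        self.dbpool = adbapi.ConnectionPool('MySQLdb', **dbargs)

    @classmethod
    def from_crawler(cls, crawler):
        # Read the connection parameters defined in settings.py
        settings = crawler.settings
        dbargs = dict(
            host=settings.get('MYSQL_HOST'),
            db=settings.get('MYSQL_DBNAME'),
            user=settings.get('MYSQL_USER'),
            passwd=settings.get('MYSQL_PASSWD'),
            cursorclass=MySQLdb.cursors.DictCursor,
            charset='utf8',
            use_unicode=True,
        )
        return cls(dbargs)

    # Default hook that Scrapy calls for every item
    def process_item(self, item, spider):
        d = self.dbpool.runInteraction(self.insert_into_table, item)
        # Log database errors instead of silently dropping them
        d.addErrback(self.handle_error, item, spider)
        return item

    def handle_error(self, failure, item, spider):
        spider.logger.error(failure)

    # The douban table must be created beforehand; column names must match it
    def insert_into_table(self, conn, item):
        def first(field):
            # extract() returns a list; some movies have no quote, so default to ''
            values = item.get(field) or ['']
            return values[0]

        # `rank` is a reserved word in MySQL 8.0+, so it is quoted with backticks
        conn.execute(
            'insert into douban(`rank`,title,rate,quote,link) values(%s,%s,%s,%s,%s)',
            (first('rank'), first('title'), first('rate'), first('quote'), first('link'))
        )

    def close_spider(self, spider):
        self.dbpool.close()
```

6-Writing settings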
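In the settings, ITEM_PIPELINES maps each pipeline's import path to an integer, and Scrapy passes every item through the pipelines from the lowest value to the highest (conventionally in the 0 to 1000 range); each process_item() must return the item so the next pipeline receives it. Giving the two pipelines distinct values makes the order explicit. A small sketch of how those numbers translate into a run order (the 400 is an arbitrary choice):

```python
# Same keys as ITEM_PIPELINES in settings.py; 400 is an arbitrary value chosen
# so that the JSON pipeline runs before the MySQL pipeline
ITEM_PIPELINES = {
    'douban.pipelines.MySQLStorePipeline': 400,
    'douban.pipelines.JsonWithEncodingCnblogsPipeline': 300,
}

# Lower value = earlier in the chain, regardless of the order in the dict
run_order = sorted(ITEM_PIPELINES, key=ITEM_PIPELINES.get)
for position, path in enumerate(run_order, start=1):
    print(position, path)
```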

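```python
# douban/settings.py (only the lines added or changed)

# Browser User-Agent; part of the original string is masked as XXXXXXX,
# so paste your own browser's full User-Agent here
USER_AGENT = 'Mozilla/5.0 (Windows NT 6.1) XXXXXXX) Chrome/70.0.3538.67 Safari/537.36'

# start MySQL database configure setting
MYSQL_HOST = '127.0.0.1'
MYSQL_DBNAME = 'database_name'  # replace with your database name
MYSQL_USER = 'root'
MYSQL_PASSWD = 'root'
# end of MySQL database configure setting

# Items pass through lower-numbered pipelines first: JSON file, then MySQL
ITEM_PIPELINES = {
    'douban.pipelines.JsonWithEncodingCnblogsPipeline': 300,
    'douban.pipelines.MySQLStorePipeline': 400,
}
```

7-Writing main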

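```python
# main.py: run it from inside the project directory (where scrapy.cfg lives)
from scrapy import cmdline

# Same as typing `scrapy crawl doubanmovie` in a terminal
cmdline.execute("scrapy crawl doubanmovie".split())
```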