Copyright notice: This is an original article by the blogger and may not be reproduced without permission. https://blog.csdn.net/bingdianone/article/details/85602880

Official documentation:

http://storm.apache.org/releases/1.2.2/Distributed-RPC.html

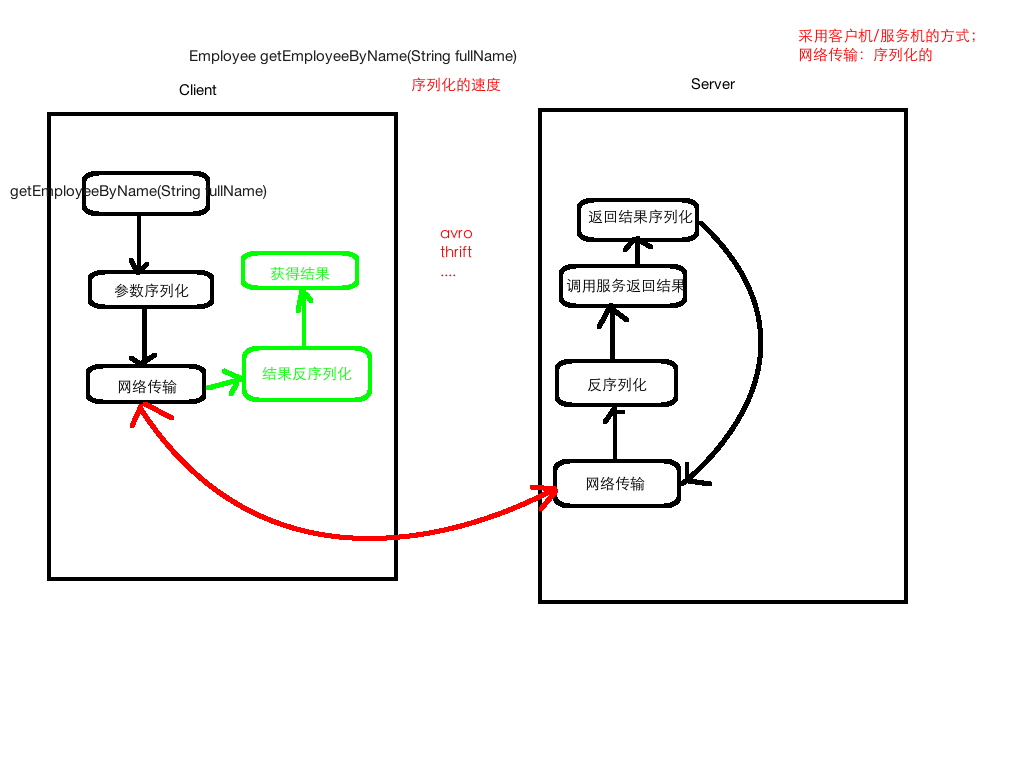

How RPC works (diagram)

Implementing RPC with Hadoop

Add the dependencies

```xml
<!-- Add the Cloudera repository -->
<repositories>
    <repository>
        <id>cloudera</id>
        <url>https://repository.cloudera.com/artifactory/cloudera-repos</url>
    </repository>
</repositories>

<dependency>
    <groupId>org.apache.hadoop</groupId>
    <artifactId>hadoop-client</artifactId>
    <version>2.6.0-cdh5.7.0</version>
    <exclusions>
        <exclusion>
            <groupId>com.google.guava</groupId>
            <artifactId>guava</artifactId>
        </exclusion>
    </exclusions>
</dependency>
```

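```java
/**
 * User service interface (the RPC protocol).
 */
public interface UserService {

    // Protocol version: Hadoop RPC looks this field up by name, and the client must pass the same value
    public static final long versionID = 88888888;

    /**
     * Adds a user.
     *
     * @param name the user's name
     * @param age  the user's age
     */
    public void addUser(String name, int age);
}
```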

```java
/**
 * Implementation of the user service interface.
 */
public class UserServiceImpl implements UserService {

    @Override
    public void addUser(String name, int age) {
        System.out.println("From Server Invoked: add user success... , name is :" + name);
    }
}
```

```java
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.ipc.RPC;

/**
 * RPC server.
 */
public class RPCServer {

    public static void main(String[] args) throws Exception {
        Configuration configuration = new Configuration();
        RPC.Builder builder = new RPC.Builder(configuration);

        // Java Builder pattern
        RPC.Server server = builder.setProtocol(UserService.class)
                .setInstance(new UserServiceImpl())
                .setBindAddress("localhost")
                .setPort(9999)
                .build();

        server.start();
    }
}
```

```java
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.ipc.RPC;

import java.net.InetSocketAddress;

/**
 * RPC client.
 */
public class RPCClient {

    public static void main(String[] args) throws Exception {
        Configuration configuration = new Configuration();

        // Must match UserService.versionID on the server side
        long clientVersion = UserService.versionID;

        UserService userService = RPC.getProxy(UserService.class, clientVersion,
                new InetSocketAddress("localhost", 9999),
                configuration);

        userService.addUser("lisi", 30);
        System.out.println("From client... invoked");

        RPC.stopProxy(userService);
    }
}
```

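Test: start the server first, then start the client. The server console should print "From Server Invoked: add user success... , name is :lisi", and the client prints "From client... invoked".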
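What `RPC.getProxy` returns is a local object that implements `UserService` but forwards every call to the server. The sketch below models that idea with the JDK alone: a `java.lang.reflect.Proxy` turns each call into a method name plus arguments, and a reflective "server" checks the protocol version and invokes the implementation in the same process. All names here (`ProxyRpcSketch`, `Greeter`, `dispatch`) are hypothetical, and reporting a version mismatch as an `IllegalStateException` is an assumption of the sketch; Hadoop's real client also serializes the call, sends it over a socket, and uses its own exception type.

```java
import java.lang.reflect.InvocationHandler;
import java.lang.reflect.Method;
import java.lang.reflect.Proxy;
import java.util.ArrayList;
import java.util.List;

public class ProxyRpcSketch {

    /** Hypothetical protocol, shaped like UserService but returning a value so the call is observable. */
    public interface Greeter {
        long versionID = 88888888L;
        String greet(String name, int age);
    }

    /** Server-side implementation (plays the role of UserServiceImpl). */
    public static class GreeterImpl implements Greeter {
        public String greet(String name, int age) {
            return "hello " + name + ", age " + age;
        }
    }

    /** "Server": checks the protocol version, then invokes the named method via reflection. */
    static Object dispatch(Class<?> protocol, Object instance, long clientVersion,
                           String methodName, Class<?>[] paramTypes, Object[] args) throws Exception {
        long serverVersion = protocol.getField("versionID").getLong(null);
        if (clientVersion != serverVersion) {
            throw new IllegalStateException("version mismatch: client " + clientVersion
                    + ", server " + serverVersion);
        }
        Method m = protocol.getMethod(methodName, paramTypes);
        return m.invoke(instance, args);
    }

    /** Client stub: every call on the returned object becomes a dispatch() "request". */
    @SuppressWarnings("unchecked")
    public static <T> T getProxy(Class<T> protocol, long clientVersion, Object server, List<String> wireLog) {
        InvocationHandler handler = (proxy, method, args) -> {
            // A real RPC client would serialize these fields and send them over the network.
            wireLog.add(method.getName());
            return dispatch(protocol, server, clientVersion,
                    method.getName(), method.getParameterTypes(), args);
        };
        return (T) Proxy.newProxyInstance(protocol.getClassLoader(), new Class<?>[]{protocol}, handler);
    }

    public static void main(String[] args) {
        List<String> log = new ArrayList<>();
        Greeter client = getProxy(Greeter.class, Greeter.versionID, new GreeterImpl(), log);
        System.out.println(client.greet("lisi", 30)); // prints: hello lisi, age 30
        System.out.println(log);                      // prints: [greet]
    }
}
```

Passing any other value than `Greeter.versionID` as the client version makes the call fail, which mirrors why `RPCClient` must use the same `versionID` as the server.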

Storm DRPC overview

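The idea behind distributed RPC (DRPC) is to use Storm to parallelize the computation of really intense functions on the fly. The Storm topology takes a stream of function arguments as input and emits an output stream with the result of each of those function calls.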

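DRPC is not so much a feature of Storm as a pattern expressed with Storm's primitives: streams, spouts, bolts, and topologies. DRPC could have been packaged as a library separate from Storm, but it is so useful that it is bundled with Storm.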

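Distributed RPC is coordinated by a "DRPC server" (Storm ships with an implementation). The DRPC server receives an RPC request, sends it to the Storm topology, receives the result from the topology, and sends that result back to the waiting client. From the client's point of view, a distributed RPC call looks just like a regular RPC call. For example, here is how a client computes the result of the "reach" function with the argument "http://twitter.com":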

```java
Config conf = new Config();
conf.put("storm.thrift.transport", "org.apache.storm.security.auth.plain.PlainSaslTransportPlugin");
conf.put(Config.STORM_NIMBUS_RETRY_TIMES, 3);
conf.put(Config.STORM_NIMBUS_RETRY_INTERVAL, 10);
conf.put(Config.STORM_NIMBUS_RETRY_INTERVAL_CEILING, 20);
DRPCClient client = new DRPCClient(conf, "drpc-host", 3772);
String result = client.execute("reach", "http://twitter.com");
```

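The client sends the DRPC server the name of the function to execute and its arguments. The topology that implements the function uses a DRPCSpout to receive a stream of function invocations from the DRPC server. The DRPC server tags each invocation with a unique id. The topology computes the result, and at the end of the topology a bolt called ReturnResults connects to the DRPC server and hands it the result for that invocation id. The DRPC server then uses the id to match the result to the client that is waiting for it, unblocks that client, and sends it the result.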
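The id-matching step described above can be illustrated in a single process. The hypothetical `MiniDrpcServer` below is a rough sketch, not Storm's implementation: it tags each call with a unique id, queues it for a worker thread that stands in for the whole topology, and parks the caller on a `CompletableFuture` keyed by that id until a result for the same id comes back. For brevity it assumes a single function and handles nothing but a timeout.

```java
import java.util.Map;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicLong;
import java.util.function.Function;

public class MiniDrpcServer {

    /** One pending invocation as the "topology" sees it: [id, args]. */
    public static final class Request {
        public final String id;
        public final String args;
        Request(String id, String args) { this.id = id; this.args = args; }
    }

    private final AtomicLong nextId = new AtomicLong();
    private final BlockingQueue<Request> requests = new LinkedBlockingQueue<>();
    private final Map<String, CompletableFuture<String>> waiting = new ConcurrentHashMap<>();

    /** Client side: tag the call with a unique id, enqueue it, and block until its result arrives. */
    public String execute(String args, long timeoutMs) throws Exception {
        String id = Long.toString(nextId.incrementAndGet());
        CompletableFuture<String> future = new CompletableFuture<>();
        waiting.put(id, future);              // register before enqueueing so no result is missed
        requests.put(new Request(id, args));
        try {
            return future.get(timeoutMs, TimeUnit.MILLISECONDS);
        } finally {
            waiting.remove(id);
        }
    }

    /** Topology side, spout role: take the next invocation. */
    public Request nextRequest() throws InterruptedException {
        return requests.take();
    }

    /** Topology side, ReturnResults role: deliver a result and unblock the matching client. */
    public void returnResult(String id, String result) {
        CompletableFuture<String> f = waiting.get(id);
        if (f != null) {
            f.complete(result);
        }
    }

    /** Starts one daemon thread that plays the part of the whole topology. */
    public void startWorker(Function<String, String> function) {
        Thread t = new Thread(() -> {
            try {
                while (true) {
                    Request r = nextRequest();
                    returnResult(r.id, function.apply(r.args));
                }
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        t.setDaemon(true);
        t.start();
    }

    public static void main(String[] args) throws Exception {
        MiniDrpcServer server = new MiniDrpcServer();
        server.startWorker(name -> "add user: " + name);
        System.out.println(server.execute("zhangsan", 5000)); // prints: add user: zhangsan
    }
}
```

The client only ever sees `execute` blocking and returning, which is why a DRPC call looks like an ordinary RPC call from the outside.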

Local DRPC

```java
import org.apache.storm.Config;
import org.apache.storm.LocalCluster;
import org.apache.storm.LocalDRPC;
import org.apache.storm.drpc.LinearDRPCTopologyBuilder;
import org.apache.storm.task.OutputCollector;
import org.apache.storm.task.TopologyContext;
import org.apache.storm.topology.OutputFieldsDeclarer;
import org.apache.storm.topology.base.BaseRichBolt;
import org.apache.storm.tuple.Fields;
import org.apache.storm.tuple.Tuple;
import org.apache.storm.tuple.Values;

import java.util.Map;

/**
 * Local DRPC.
 */
public class LocalDRPCTopology {

    public static class MyBolt extends BaseRichBolt {

        private OutputCollector outputCollector;

        @Override
        public void prepare(Map stormConf, TopologyContext context,
                            OutputCollector collector) {
            this.outputCollector = collector;
        }

        @Override
        public void execute(Tuple input) {
            Object requestId = input.getValue(0); // request id
            String name = input.getString(1);     // request argument

            /*
             * TODO... business logic
             */
            String result = "add user: " + name;

            // BaseRichBolt does not ack automatically: anchor the output to the input, then ack it
            this.outputCollector.emit(input, new Values(requestId, result));
            this.outputCollector.ack(input);
        }

        @Override
        public void declareOutputFields(OutputFieldsDeclarer declarer) {
            declarer.declare(new Fields("id", "result"));
        }
    }

    public static void main(String[] args) {
        LinearDRPCTopologyBuilder builder = new LinearDRPCTopologyBuilder("addUser");
        builder.addBolt(new MyBolt());

        LocalCluster localCluster = new LocalCluster();
        LocalDRPC drpc = new LocalDRPC();
        localCluster.submitTopology("local-drpc", new Config(),
                builder.createLocalTopology(drpc));

        String result = drpc.execute("addUser", "zhangsan");
        System.err.println("From client: " + result);

        localCluster.shutdown();
        drpc.shutdown();
    }
}
```
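With `LinearDRPCTopologyBuilder`, the first bolt receives `[id, args]` and the last bolt is expected to emit `[id, result]`, which is why `MyBolt` copies `requestId` into its output. The stdlib-only sketch below (hypothetical names, no Storm classes) runs one tuple through a chain of stage functions and checks that the request id is still in field 0 at the end, which is what lets the result be routed back to the right caller.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;
import java.util.function.Function;

public class LinearChainSketch {

    /** Hypothetical stand-in for a bolt: one input tuple in, zero or more output tuples out. */
    public interface Stage extends Function<List<Object>, List<List<Object>>> {}

    /** Feeds [id, args] through the stages in order, like a linear DRPC chain. */
    public static List<List<Object>> run(Object requestId, String args, List<Stage> stages) {
        List<List<Object>> current = Collections.singletonList(Arrays.<Object>asList(requestId, args));
        for (Stage stage : stages) {
            List<List<Object>> next = new ArrayList<>();
            for (List<Object> tuple : current) {
                next.addAll(stage.apply(tuple));
            }
            current = next;
        }
        // Every final tuple must still carry the request id in field 0, or the result cannot be routed back.
        for (List<Object> tuple : current) {
            if (!requestId.equals(tuple.get(0))) {
                throw new IllegalStateException("stage dropped the request id: " + tuple);
            }
        }
        return current;
    }

    public static void main(String[] args) {
        // Mirrors MyBolt: [id, name] -> [id, "add user: " + name]
        Stage addUser = t -> Collections.singletonList(Arrays.<Object>asList(t.get(0), "add user: " + t.get(1)));
        // A second, hypothetical stage that upper-cases the result but keeps the id.
        Stage upper = t -> Collections.singletonList(Arrays.<Object>asList(t.get(0), t.get(1).toString().toUpperCase()));
        System.out.println(run(42L, "zhangsan", Arrays.asList(addUser, upper))); // prints: [[42, ADD USER: ZHANGSAN]]
    }
}
```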

Remote DRPC

Edit the YAML configuration file (`storm.yaml`):

```yaml
drpc.servers:
  - "hadoop000"
```

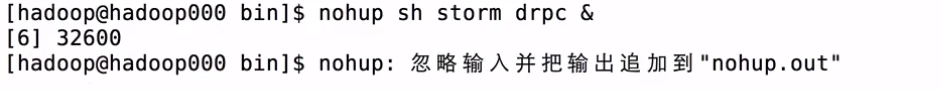

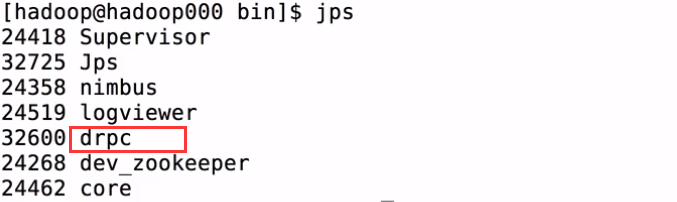

Start the services

```java
import org.apache.storm.Config;
import org.apache.storm.StormSubmitter;
import org.apache.storm.drpc.LinearDRPCTopologyBuilder;
import org.apache.storm.task.OutputCollector;
import org.apache.storm.task.TopologyContext;
import org.apache.storm.topology.OutputFieldsDeclarer;
import org.apache.storm.topology.base.BaseRichBolt;
import org.apache.storm.tuple.Fields;
import org.apache.storm.tuple.Tuple;
import org.apache.storm.tuple.Values;

import java.util.Map;

/**
 * Remote DRPC.
 */
public class RemoteDRPCTopology {

    public static class MyBolt extends BaseRichBolt {

        private OutputCollector outputCollector;

        @Override
        public void prepare(Map stormConf, TopologyContext context,
                            OutputCollector collector) {
            this.outputCollector = collector;
        }

        @Override
        public void execute(Tuple input) {
            Object requestId = input.getValue(0); // request id
            String name = input.getString(1);     // request argument

            /*
             * TODO... business logic
             */
            String result = "add user: " + name;

            // BaseRichBolt does not ack automatically: anchor the output to the input, then ack it
            this.outputCollector.emit(input, new Values(requestId, result));
            this.outputCollector.ack(input);
        }

        @Override
        public void declareOutputFields(OutputFieldsDeclarer declarer) {
            declarer.declare(new Fields("id", "result"));
        }
    }

    public static void main(String[] args) {
        LinearDRPCTopologyBuilder builder = new LinearDRPCTopologyBuilder("addUser");
        builder.addBolt(new MyBolt());

        try {
            StormSubmitter.submitTopology("drpc-topology",
                    new Config(),
                    builder.createRemoteTopology());
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}
```

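Package the server-side code into a jar, upload it to the server, and submit it there (for example with `storm jar`).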

```java
import org.apache.storm.Config;
import org.apache.storm.utils.DRPCClient;

/**
 * Test client for remote DRPC.
 */
public class RemoteDRPCClient {

    public static void main(String[] args) throws Exception {
        Config config = new Config();

        // This group of settings: Thrift transport, connection retry policy, and max buffer size
        config.put("storm.thrift.transport", "org.apache.storm.security.auth.SimpleTransportPlugin");
        config.put(Config.STORM_NIMBUS_RETRY_TIMES, 3);
        config.put(Config.STORM_NIMBUS_RETRY_INTERVAL, 10);
        config.put(Config.STORM_NIMBUS_RETRY_INTERVAL_CEILING, 20);
        config.put(Config.DRPC_MAX_BUFFER_SIZE, 1048576);

        // 3772 is the default DRPC server port
        DRPCClient client = new DRPCClient(config, "hadoop000", 3772);
        try {
            String result = client.execute("addUser", "wangwu");
            System.out.println("Client invoked: " + result);
        } finally {
            client.close();
        }
    }
}
```
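Both client examples set `STORM_NIMBUS_RETRY_TIMES`, `STORM_NIMBUS_RETRY_INTERVAL` and `STORM_NIMBUS_RETRY_INTERVAL_CEILING` (3 retries, 10 ms, 20 ms). As the names suggest, they bound how many times and how long the client waits between connection retries. The sketch below shows a generic bounded exponential backoff driven by those three numbers; the class and method names are hypothetical, and the delay formula is an assumption for illustration, not Storm's exact retry logic.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Supplier;

public class BoundedBackoffSketch {

    /** Delay before retry number `attempt` (0-based): base * 2^attempt, capped at the ceiling. */
    public static long delayMs(int attempt, long baseMs, long ceilingMs) {
        long d = baseMs << Math.min(attempt, 30); // cap the shift so large attempt counts cannot overflow
        return Math.min(d, ceilingMs);
    }

    /** Runs `action` once, then up to maxRetries more times, sleeping between failed attempts. */
    public static <T> T withRetries(Supplier<T> action, int maxRetries, long baseMs, long ceilingMs,
                                    List<Long> sleptMs) throws InterruptedException {
        RuntimeException last = null;
        for (int attempt = 0; attempt <= maxRetries; attempt++) {
            try {
                return action.get();
            } catch (RuntimeException e) {
                last = e;
                if (attempt == maxRetries) {
                    break; // out of retries
                }
                long d = delayMs(attempt, baseMs, ceilingMs);
                sleptMs.add(d);
                Thread.sleep(d);
            }
        }
        throw last;
    }

    public static void main(String[] args) throws InterruptedException {
        int[] calls = {0};
        List<Long> slept = new ArrayList<>();
        // Simulated connection that fails twice, then succeeds.
        String r = withRetries(() -> {
            if (++calls[0] < 3) throw new IllegalStateException("connection refused");
            return "connected";
        }, 3, 10, 20, slept);
        System.out.println(r + " after " + calls[0] + " attempts, slept " + slept);
        // prints: connected after 3 attempts, slept [10, 20]
    }
}
```

Capping the delay keeps the total wait predictable: with 3 retries, a 10 ms base and a 20 ms ceiling, the client gives up after at most 10 + 20 + 20 = 50 ms of sleeping.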