Problem link: click to open the link

First, my notes.

Code:

costFunctionReg.m (computes the cost and the gradient in each direction; note: the unregularized theta_0 term is computed separately):

function [J, grad] = costFunctionReg(theta, X, y, lambda)

%COSTFUNCTIONREG Compute cost and gradient for logistic regression with regularization

% J = COSTFUNCTIONREG(theta, X, y, lambda) computes the cost of using

% theta as the parameter for regularized logistic regression and the

% gradient of the cost w.r.t. the parameters.

% Initialize some useful values

m = length(y); % number of training examples

% You need to return the following variables correctly

J = 0;

grad = zeros(size(theta));

% ====================== YOUR CODE HERE ======================

% Instructions: Compute the cost of a particular choice of theta.

% You should set J to the cost.

% Compute the partial derivatives and set grad to the partial

% derivatives of the cost w.r.t. each parameter in theta

[~, n] = size(X);

% In the calculations below, remember NOT to regularize theta_0 (theta(1) in Octave)

J = (-y'*log(sigmoid(X*theta))-(1-y')*log(1-sigmoid(X*theta)))/m + ...
    lambda/(2.0*m)*(theta(2:n)'*theta(2:n));

grad(1) = X(:,1)'*(sigmoid(X*theta)-y)./m;

grad(2:n) = X(:,2:n)'*(sigmoid(X*theta)-y)./m + lambda/m*theta(2:n);

% =============================================================

end
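For reference, the vectorized code above corresponds to the standard regularized logistic regression cost and gradient. This is a sketch of the formulas as I read them off the code, assuming $h_\theta(x) = \mathrm{sigmoid}(\theta^T x)$, that $x_0^{(i)} = 1$ is the intercept column, and that the mathematical $\theta_0$ is `theta(1)` in Octave's 1-based indexing (so `theta(2:n)` covers $\theta_1, \dots, \theta_{n-1}$):

$$
J(\theta) = -\frac{1}{m}\sum_{i=1}^{m}\Big[y^{(i)}\log h_\theta(x^{(i)}) + (1-y^{(i)})\log\big(1-h_\theta(x^{(i)})\big)\Big] + \frac{\lambda}{2m}\sum_{j=1}^{n-1}\theta_j^2
$$

$$
\frac{\partial J}{\partial \theta_0} = \frac{1}{m}\sum_{i=1}^{m}\big(h_\theta(x^{(i)}) - y^{(i)}\big)x_0^{(i)},
\qquad
\frac{\partial J}{\partial \theta_j} = \frac{1}{m}\sum_{i=1}^{m}\big(h_\theta(x^{(i)}) - y^{(i)}\big)x_j^{(i)} + \frac{\lambda}{m}\theta_j \quad (j \ge 1)
$$

The regularization sum starts at $j = 1$, which is why `grad(1)` is computed on its own line without the `lambda/m` term.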

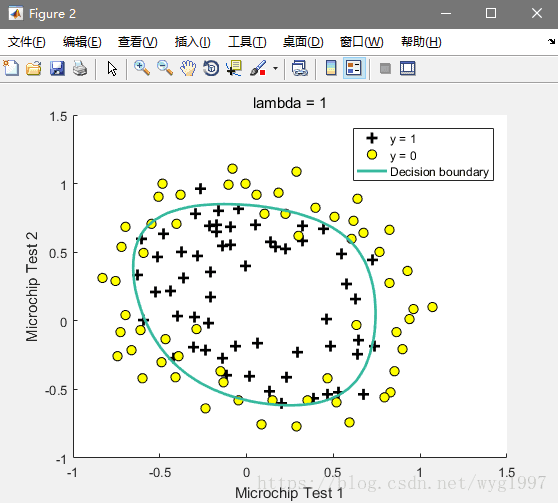

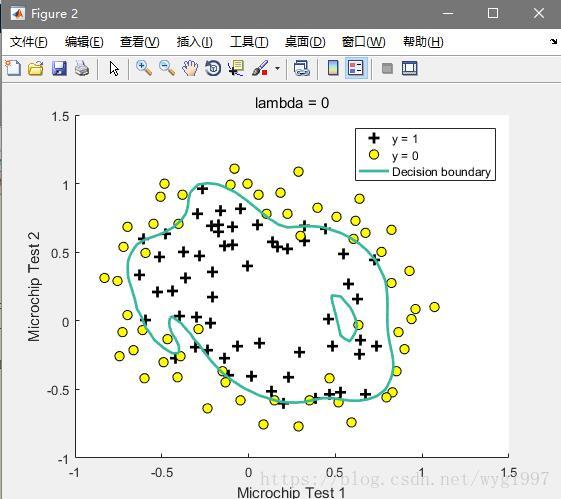

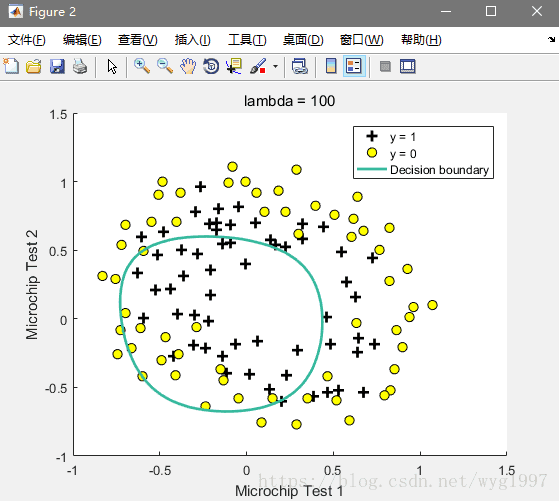

Next, here are the different plots produced by different values of λ.