Continuing from the previous post:

Deep Residual Network + Adaptively Parametric ReLU Activation Function (Tuning Log 2)

https://www.cnblogs.com/shisuzanian/p/12906984.html

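This post continues testing a deep residual network with the adaptively parametric ReLU (APReLU) activation function on the CIFAR-10 image dataset. The network still has 27 residual blocks, the number of convolution kernels is increased to 16, 32, and 64 across the three stages, and training is cut from 1000 epochs to 500 epochs (mainly to save time).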
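
As a quick sanity check on the layout described above, the following sketch tallies the residual blocks and tracks the feature-map size per stage. The stage tuples are read off the `residual_block(...)` calls in the Keras listing in this post; the helper name `summarize_stages` is hypothetical, and the halving rule assumes each downsampling block uses a stride-2 convolution with `'same'` padding, as in that listing.

```python
# Each tuple mirrors one residual_block(...) call in the Keras listing:
# (number of blocks, output channels, whether the stage downsamples).
STAGES = [
    (9, 16, False),
    (1, 32, True),
    (8, 32, False),
    (1, 64, True),
    (8, 64, False),
]


def summarize_stages(stages, input_size=32):
    """Return (total block count, [(blocks, channels, feature-map size), ...])."""
    size = input_size
    total_blocks = 0
    rows = []
    for n_blocks, channels, downsample in stages:
        if downsample:
            # A stride-2 convolution with 'same' padding halves height and width.
            size //= 2
        total_blocks += n_blocks
        rows.append((n_blocks, channels, size))
    return total_blocks, rows


total_blocks, rows = summarize_stages(STAGES)
print(total_blocks)  # 27
for n_blocks, channels, size in rows:
    print(n_blocks, "block(s):", channels, "channels at", size, "x", size)
```

Under these assumptions the tally comes to 9 + 1 + 8 + 1 + 8 = 27 blocks, ending with 64 channels on 8 x 8 feature maps before global average pooling.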

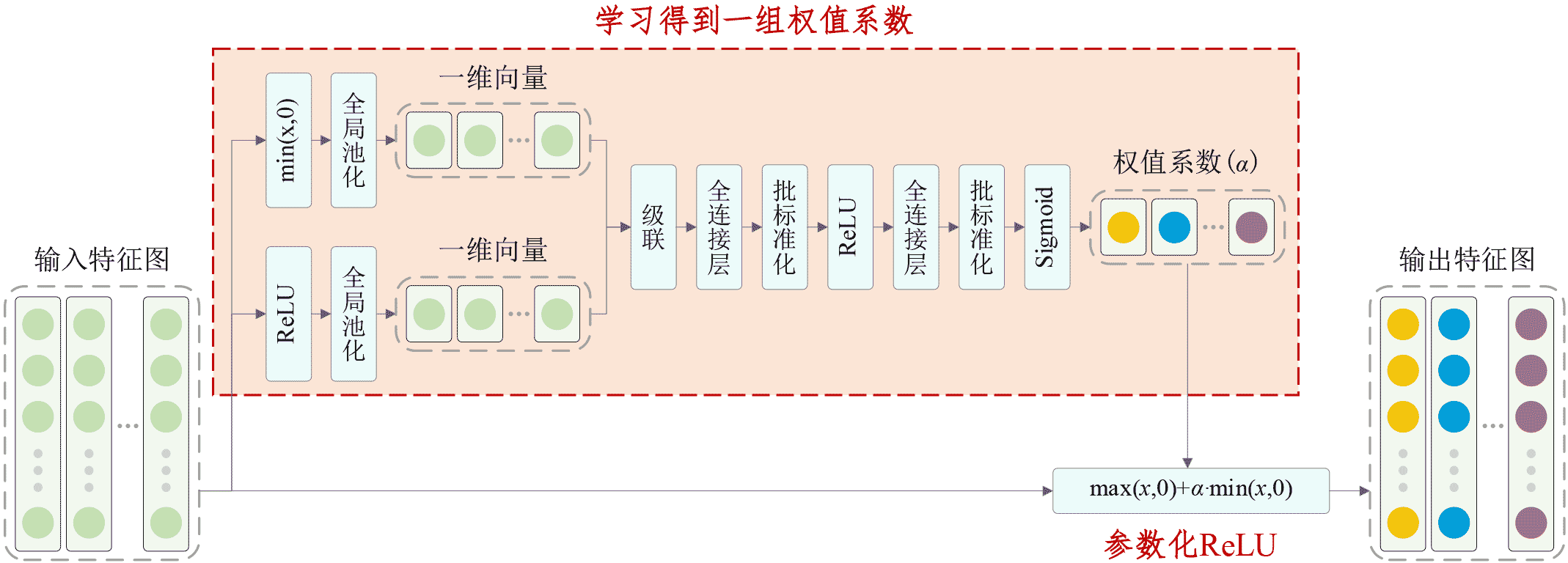

Adaptively parametric ReLU (APReLU) is an upgraded version of Parametric ReLU (PReLU), as shown in the figure.
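
To make the idea concrete, here is a minimal NumPy sketch of the APReLU forward pass. It follows the same steps as the `aprelu` function in the Keras listing in this post (split into positive and negative parts, global average pooling, two dense layers, a sigmoid, then scaling the negative part), but it is a simplification: the two batch-normalization layers are omitted, and the function name `aprelu_forward` and the weight arguments `w1`, `b1`, `w2`, `b2` are hypothetical names chosen for illustration.

```python
import numpy as np


def aprelu_forward(x, w1, b1, w2, b2):
    """Simplified APReLU forward pass (batch normalization omitted).

    x  : array of shape (batch, height, width, channels)
    w1 : (2 * channels, channels // 4), b1 : (channels // 4,)
    w2 : (channels // 4, channels),     b2 : (channels,)
    """
    pos = np.maximum(x, 0.0)  # positive features only
    neg = np.minimum(x, 0.0)  # negative features only

    # Global average pooling over the spatial axes -> (batch, channels) each.
    gap_pos = pos.mean(axis=(1, 2))
    gap_neg = neg.mean(axis=(1, 2))
    stats = np.concatenate([gap_neg, gap_pos], axis=-1)  # (batch, 2 * channels)

    # Small two-layer network producing one coefficient per sample and channel.
    hidden = np.maximum(stats @ w1 + b1, 0.0)            # ReLU
    alpha = 1.0 / (1.0 + np.exp(-(hidden @ w2 + b2)))    # sigmoid, in (0, 1)

    # PReLU-style output, but with input-dependent slopes for the negative part.
    return pos + alpha[:, None, None, :] * neg


rng = np.random.default_rng(0)
x = rng.standard_normal((2, 4, 4, 8))
channels = x.shape[-1]
w1 = 0.1 * rng.standard_normal((2 * channels, channels // 4))
b1 = np.zeros(channels // 4)
w2 = 0.1 * rng.standard_normal((channels // 4, channels))
b2 = np.zeros(channels)

y = aprelu_forward(x, w1, b1, w2, b2)
print(y.shape)  # (2, 4, 4, 8)
```

The difference from plain PReLU is that the negative-side slope is not a single learned constant: it is recomputed for every input sample and channel from that sample's own statistics, and the sigmoid keeps it between 0 and 1.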

The Keras code is as follows:

    #!/usr/bin/env python3
    # -*- coding: utf-8 -*-
    """
    Created on Tue Apr 14 04:17:45 2020
    Implemented using TensorFlow 1.0.1 and Keras 2.2.1

    Minghang Zhao, Shisheng Zhong, Xuyun Fu, Baoping Tang, Shaojiang Dong, Michael Pecht,
    Deep Residual Networks with Adaptively Parametric Rectifier Linear Units for Fault Diagnosis,
    IEEE Transactions on Industrial Electronics, 2020, DOI: 10.1109/TIE.2020.2972458

    @author: Minghang Zhao
    """

    from __future__ import print_function
    import keras
    import numpy as np
    from keras.datasets import cifar10
    from keras.layers import Dense, Conv2D, BatchNormalization, Activation, Minimum
    from keras.layers import AveragePooling2D, Input, GlobalAveragePooling2D, Concatenate, Reshape
    from keras.regularizers import l2
    from keras import backend as K
    from keras.models import Model
    from keras import optimizers
    from keras.preprocessing.image import ImageDataGenerator
    from keras.callbacks import LearningRateScheduler
    K.set_learning_phase(1)

    # The data, split between train and test sets
    (x_train, y_train), (x_test, y_test) = cifar10.load_data()

    # Scale pixels to [0, 1] and subtract the training-set mean
    x_train = x_train.astype('float32') / 255.
    x_test = x_test.astype('float32') / 255.
    x_test = x_test-np.mean(x_train)
    x_train = x_train-np.mean(x_train)
    print('x_train shape:', x_train.shape)
    print(x_train.shape[0], 'train samples')
    print(x_test.shape[0], 'test samples')

    # convert class vectors to binary class matrices
    y_train = keras.utils.to_categorical(y_train, 10)
    y_test = keras.utils.to_categorical(y_test, 10)

    # Schedule the learning rate: multiply by 0.1 every 200 epochs
    def scheduler(epoch):
        if epoch % 200 == 0 and epoch != 0:
            lr = K.get_value(model.optimizer.lr)
            K.set_value(model.optimizer.lr, lr * 0.1)
            print("lr changed to {}".format(lr * 0.1))
        return K.get_value(model.optimizer.lr)

    # An adaptively parametric rectifier linear unit (APReLU)
    def aprelu(inputs):
        # get the number of channels
        channels = inputs.get_shape().as_list()[-1]
        # get a zero feature map
        zeros_input = keras.layers.subtract([inputs, inputs])
        # get a feature map with only positive features
        pos_input = Activation('relu')(inputs)
        # get a feature map with only negative features
        neg_input = Minimum()([inputs,zeros_input])
        # define a network to obtain the scaling coefficients
        scales_p = GlobalAveragePooling2D()(pos_input)
        scales_n = GlobalAveragePooling2D()(neg_input)
        scales = Concatenate()([scales_n, scales_p])
        scales = Dense(channels//4, activation='linear', kernel_initializer='he_normal', kernel_regularizer=l2(1e-4))(scales)
        scales = BatchNormalization()(scales)
        scales = Activation('relu')(scales)
        scales = Dense(channels, activation='linear', kernel_initializer='he_normal', kernel_regularizer=l2(1e-4))(scales)
        scales = BatchNormalization()(scales)
        scales = Activation('sigmoid')(scales)
        scales = Reshape((1,1,channels))(scales)
        # apply a parametric relu
        neg_part = keras.layers.multiply([scales, neg_input])
        return keras.layers.add([pos_input, neg_part])

    # Residual Block
    def residual_block(incoming, nb_blocks, out_channels, downsample=False,
                       downsample_strides=2):

        residual = incoming
        in_channels = incoming.get_shape().as_list()[-1]

        for i in range(nb_blocks):

            identity = residual

            if not downsample:
                downsample_strides = 1

            residual = BatchNormalization()(residual)
            residual = aprelu(residual)
            residual = Conv2D(out_channels, 3, strides=(downsample_strides, downsample_strides),
                              padding='same', kernel_initializer='he_normal',
                              kernel_regularizer=l2(1e-4))(residual)

            residual = BatchNormalization()(residual)
            residual = aprelu(residual)
            residual = Conv2D(out_channels, 3, padding='same', kernel_initializer='he_normal',
                              kernel_regularizer=l2(1e-4))(residual)

            # Downsampling
            if downsample_strides > 1:
                identity = AveragePooling2D(pool_size=(1,1), strides=(2,2))(identity)

            # Zero_padding to match channels
            if in_channels != out_channels:
                zeros_identity = keras.layers.subtract([identity, identity])
                identity = keras.layers.concatenate([identity, zeros_identity])
                in_channels = out_channels

            residual = keras.layers.add([residual, identity])

        return residual


    # define and train a model
    inputs = Input(shape=(32, 32, 3))
    net = Conv2D(16, 3, padding='same', kernel_initializer='he_normal', kernel_regularizer=l2(1e-4))(inputs)
    net = residual_block(net, 9, 16, downsample=False)
    net = residual_block(net, 1, 32, downsample=True)
    net = residual_block(net, 8, 32, downsample=False)
    net = residual_block(net, 1, 64, downsample=True)
    net = residual_block(net, 8, 64, downsample=False)
    net = BatchNormalization()(net)
    net = aprelu(net)
    net = GlobalAveragePooling2D()(net)
    outputs = Dense(10, activation='softmax', kernel_initializer='he_normal', kernel_regularizer=l2(1e-4))(net)
    model = Model(inputs=inputs, outputs=outputs)
    sgd = optimizers.SGD(lr=0.1, decay=0., momentum=0.9, nesterov=True)
    model.compile(loss='categorical_crossentropy', optimizer=sgd, metrics=['accuracy'])

    # data augmentation
    datagen = ImageDataGenerator(
        # randomly rotate images by up to 30 degrees
        rotation_range=30,
        # randomly flip images
        horizontal_flip=True,
        # randomly shift images horizontally
        width_shift_range=0.125,
        # randomly shift images vertically
        height_shift_range=0.125)

    reduce_lr = LearningRateScheduler(scheduler)
    # fit the model on the batches generated by datagen.flow().
    model.fit_generator(datagen.flow(x_train, y_train, batch_size=100),
                        validation_data=(x_test, y_test), epochs=500,
                        verbose=1, callbacks=[reduce_lr], workers=4)

    # get results
    K.set_learning_phase(0)
    DRSN_train_score1 = model.evaluate(x_train, y_train, batch_size=100, verbose=0)
    print('Train loss:', DRSN_train_score1[0])
    print('Train accuracy:', DRSN_train_score1[1])
    DRSN_test_score1 = model.evaluate(x_test, y_test, batch_size=100, verbose=0)
    print('Test loss:', DRSN_test_score1[0])
    print('Test accuracy:', DRSN_test_score1[1])
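
The `scheduler` callback in the listing changes the optimizer state in place, multiplying the learning rate by 0.1 whenever the 0-based epoch index reaches a non-zero multiple of 200. The sketch below expresses what should be the same step decay as a stateless closed form; the function name `step_decay_lr` and its keyword arguments are hypothetical, and it is meant as an illustration rather than a replacement for the author's code.

```python
def step_decay_lr(epoch, base_lr=0.1, drop=0.1, epochs_per_drop=200):
    """Learning rate for a 0-based epoch index under step decay."""
    return base_lr * drop ** (epoch // epochs_per_drop)


# Learning rate at the start, just before and at each drop, and in the last epoch.
for epoch in (0, 199, 200, 399, 400, 499):
    print(epoch, step_decay_lr(epoch))
```

Because the result depends only on the epoch index, a function of this shape could also be handed to `LearningRateScheduler`, which would make it easier to resume an interrupted run at the right learning rate. The value `0.010000000149011612` printed in the training log is the same 0.01, shown after rounding to 32-bit floating point.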

The experimental results are as follows:

    Using TensorFlow backend.
    x_train shape: (50000, 32, 32, 3)
    50000 train samples
    10000 test samples
    Epoch 1/500
    91s 181ms/step - loss: 2.4539 - acc: 0.4100 - val_loss: 2.0730 - val_acc: 0.5339
    Epoch 2/500
    63s 126ms/step - loss: 1.9860 - acc: 0.5463 - val_loss: 1.7375 - val_acc: 0.6207
    Epoch 3/500
    63s 126ms/step - loss: 1.7263 - acc: 0.6070 - val_loss: 1.5633 - val_acc: 0.6542
    Epoch 4/500
    63s 126ms/step - loss: 1.5410 - acc: 0.6480 - val_loss: 1.4049 - val_acc: 0.6839
    Epoch 5/500
    63s 126ms/step - loss: 1.4072 - acc: 0.6701 - val_loss: 1.3024 - val_acc: 0.7038
    Epoch 6/500
    63s 126ms/step - loss: 1.2918 - acc: 0.6950 - val_loss: 1.1935 - val_acc: 0.7256
    Epoch 7/500
    63s 126ms/step - loss: 1.1959 - acc: 0.7151 - val_loss: 1.0884 - val_acc: 0.7488
    Epoch 8/500
    63s 126ms/step - loss: 1.1186 - acc: 0.7316 - val_loss: 1.0709 - val_acc: 0.7462
    Epoch 9/500
    63s 126ms/step - loss: 1.0602 - acc: 0.7459 - val_loss: 0.9674 - val_acc: 0.7760
    Epoch 10/500
    63s 126ms/step - loss: 1.0074 - acc: 0.7569 - val_loss: 0.9300 - val_acc: 0.7801
    Epoch 11/500
    63s 126ms/step - loss: 0.9667 - acc: 0.7662 - val_loss: 0.9094 - val_acc: 0.7894
    Epoch 12/500
    64s 127ms/step - loss: 0.9406 - acc: 0.7689 - val_loss: 0.8765 - val_acc: 0.7899
    Epoch 13/500
    63s 127ms/step - loss: 0.9083 - acc: 0.7775 - val_loss: 0.8589 - val_acc: 0.7949
    Epoch 14/500
    63s 127ms/step - loss: 0.8872 - acc: 0.7832 - val_loss: 0.8389 - val_acc: 0.7997
    Epoch 15/500
    63s 127ms/step - loss: 0.8653 - acc: 0.7877 - val_loss: 0.8390 - val_acc: 0.7990
    Epoch 16/500
    63s 126ms/step - loss: 0.8529 - acc: 0.7901 - val_loss: 0.8052 - val_acc: 0.8061
    Epoch 17/500
    63s 126ms/step - loss: 0.8347 - acc: 0.7964 - val_loss: 0.8033 - val_acc: 0.8101
    Epoch 18/500
    63s 126ms/step - loss: 0.8186 - acc: 0.8014 - val_loss: 0.7835 - val_acc: 0.8171
    Epoch 19/500
    63s 126ms/step - loss: 0.8080 - acc: 0.8026 - val_loss: 0.7852 - val_acc: 0.8172
    Epoch 20/500
    63s 126ms/step - loss: 0.7982 - acc: 0.8070 - val_loss: 0.7596 - val_acc: 0.8249
    Epoch 21/500
    63s 126ms/step - loss: 0.7932 - acc: 0.8079 - val_loss: 0.7477 - val_acc: 0.8266
    Epoch 22/500
    63s 126ms/step - loss: 0.7862 - acc: 0.8106 - val_loss: 0.7489 - val_acc: 0.8285
    Epoch 23/500
    63s 126ms/step - loss: 0.7762 - acc: 0.8145 - val_loss: 0.7451 - val_acc: 0.8301
    Epoch 24/500
    63s 126ms/step - loss: 0.7691 - acc: 0.8174 - val_loss: 0.7402 - val_acc: 0.8271
    Epoch 25/500
    63s 126ms/step - loss: 0.7651 - acc: 0.8207 - val_loss: 0.7442 - val_acc: 0.8316
    Epoch 26/500
    63s 126ms/step - loss: 0.7562 - acc: 0.8218 - val_loss: 0.7177 - val_acc: 0.8392
    Epoch 27/500
    63s 126ms/step - loss: 0.7521 - acc: 0.8241 - val_loss: 0.7243 - val_acc: 0.8356
    Epoch 28/500
    63s 126ms/step - loss: 0.7436 - acc: 0.8254 - val_loss: 0.7505 - val_acc: 0.8289
    Epoch 29/500
    63s 126ms/step - loss: 0.7429 - acc: 0.8265 - val_loss: 0.7424 - val_acc: 0.8292
    Epoch 30/500
    63s 126ms/step - loss: 0.7391 - acc: 0.8313 - val_loss: 0.7185 - val_acc: 0.8392
    Epoch 31/500
    63s 126ms/step - loss: 0.7361 - acc: 0.8323 - val_loss: 0.7276 - val_acc: 0.8406
    Epoch 32/500
    63s 126ms/step - loss: 0.7311 - acc: 0.8343 - val_loss: 0.7167 - val_acc: 0.8405
    Epoch 33/500
    63s 126ms/step - loss: 0.7247 - acc: 0.8346 - val_loss: 0.7345 - val_acc: 0.8382
    Epoch 34/500
    63s 126ms/step - loss: 0.7196 - acc: 0.8378 - val_loss: 0.7058 - val_acc: 0.8481
    Epoch 35/500
    63s 126ms/step - loss: 0.7132 - acc: 0.8400 - val_loss: 0.7212 - val_acc: 0.8457
    Epoch 36/500
    63s 126ms/step - loss: 0.7112 - acc: 0.8436 - val_loss: 0.7031 - val_acc: 0.8496
    Epoch 37/500
    63s 126ms/step - loss: 0.7101 - acc: 0.8429 - val_loss: 0.7199 - val_acc: 0.8421
    Epoch 38/500
    63s 126ms/step - loss: 0.7093 - acc: 0.8439 - val_loss: 0.6786 - val_acc: 0.8550
    Epoch 39/500
    63s 126ms/step - loss: 0.7026 - acc: 0.8453 - val_loss: 0.7023 - val_acc: 0.8474
    Epoch 40/500
    63s 126ms/step - loss: 0.6992 - acc: 0.8470 - val_loss: 0.6993 - val_acc: 0.8491
    Epoch 41/500
    63s 126ms/step - loss: 0.6955 - acc: 0.8485 - val_loss: 0.7176 - val_acc: 0.8447
    Epoch 42/500
    63s 127ms/step - loss: 0.6987 - acc: 0.8471 - val_loss: 0.7265 - val_acc: 0.8433
    Epoch 43/500
    63s 126ms/step - loss: 0.6953 - acc: 0.8504 - val_loss: 0.6921 - val_acc: 0.8523
    Epoch 44/500
    63s 126ms/step - loss: 0.6875 - acc: 0.8522 - val_loss: 0.6824 - val_acc: 0.8584
    Epoch 45/500
    63s 126ms/step - loss: 0.6888 - acc: 0.8518 - val_loss: 0.6953 - val_acc: 0.8534
    Epoch 46/500
    63s 126ms/step - loss: 0.6816 - acc: 0.8538 - val_loss: 0.7102 - val_acc: 0.8492
    Epoch 47/500
    63s 126ms/step - loss: 0.6857 - acc: 0.8545 - val_loss: 0.6985 - val_acc: 0.8504
    Epoch 48/500
    63s 126ms/step - loss: 0.6835 - acc: 0.8533 - val_loss: 0.6992 - val_acc: 0.8540
    Epoch 49/500
    63s 126ms/step - loss: 0.6775 - acc: 0.8568 - val_loss: 0.6907 - val_acc: 0.8543
    Epoch 50/500
    63s 126ms/step - loss: 0.6782 - acc: 0.8554 - val_loss: 0.7010 - val_acc: 0.8504
    Epoch 51/500
    63s 126ms/step - loss: 0.6756 - acc: 0.8561 - val_loss: 0.6905 - val_acc: 0.8544
    Epoch 52/500
    63s 126ms/step - loss: 0.6730 - acc: 0.8581 - val_loss: 0.6838 - val_acc: 0.8568
    Epoch 53/500
    63s 126ms/step - loss: 0.6681 - acc: 0.8595 - val_loss: 0.6835 - val_acc: 0.8578
    Epoch 54/500
    63s 126ms/step - loss: 0.6691 - acc: 0.8593 - val_loss: 0.6691 - val_acc: 0.8647
    Epoch 55/500
    63s 126ms/step - loss: 0.6637 - acc: 0.8627 - val_loss: 0.6778 - val_acc: 0.8580
    Epoch 56/500
    63s 126ms/step - loss: 0.6661 - acc: 0.8620 - val_loss: 0.6654 - val_acc: 0.8639
    Epoch 57/500
    63s 126ms/step - loss: 0.6623 - acc: 0.8618 - val_loss: 0.6829 - val_acc: 0.8580
    Epoch 58/500
    64s 127ms/step - loss: 0.6626 - acc: 0.8636 - val_loss: 0.6701 - val_acc: 0.8610
    Epoch 59/500
    64s 127ms/step - loss: 0.6584 - acc: 0.8625 - val_loss: 0.6879 - val_acc: 0.8538
    Epoch 60/500
    63s 127ms/step - loss: 0.6530 - acc: 0.8653 - val_loss: 0.6670 - val_acc: 0.8641
    Epoch 61/500
    64s 127ms/step - loss: 0.6563 - acc: 0.8655 - val_loss: 0.6671 - val_acc: 0.8639
    Epoch 62/500
    64s 127ms/step - loss: 0.6543 - acc: 0.8656 - val_loss: 0.6792 - val_acc: 0.8620
    Epoch 63/500
    63s 127ms/step - loss: 0.6549 - acc: 0.8653 - val_loss: 0.6826 - val_acc: 0.8581
    Epoch 64/500
    63s 127ms/step - loss: 0.6477 - acc: 0.8696 - val_loss: 0.6842 - val_acc: 0.8599
    Epoch 65/500
    64s 127ms/step - loss: 0.6556 - acc: 0.8649 - val_loss: 0.6681 - val_acc: 0.8625
    Epoch 66/500
    63s 127ms/step - loss: 0.6463 - acc: 0.8690 - val_loss: 0.6611 - val_acc: 0.8673
    Epoch 67/500
    64s 127ms/step - loss: 0.6462 - acc: 0.8703 - val_loss: 0.6766 - val_acc: 0.8605
    Epoch 68/500
    64s 127ms/step - loss: 0.6420 - acc: 0.8705 - val_loss: 0.6551 - val_acc: 0.8687
    Epoch 69/500
    63s 127ms/step - loss: 0.6353 - acc: 0.8737 - val_loss: 0.6761 - val_acc: 0.8635
    Epoch 70/500
    64s 127ms/step - loss: 0.6473 - acc: 0.8699 - val_loss: 0.6616 - val_acc: 0.8684
    Epoch 71/500
    63s 127ms/step - loss: 0.6335 - acc: 0.8743 - val_loss: 0.6712 - val_acc: 0.8656
    Epoch 72/500
    63s 127ms/step - loss: 0.6325 - acc: 0.8738 - val_loss: 0.6801 - val_acc: 0.8604
    Epoch 73/500
    64s 127ms/step - loss: 0.6378 - acc: 0.8719 - val_loss: 0.6607 - val_acc: 0.8678
    Epoch 74/500
    64s 127ms/step - loss: 0.6355 - acc: 0.8743 - val_loss: 0.6568 - val_acc: 0.8671
    Epoch 75/500
    63s 127ms/step - loss: 0.6344 - acc: 0.8744 - val_loss: 0.6646 - val_acc: 0.8646
    Epoch 76/500
    64s 127ms/step - loss: 0.6283 - acc: 0.8745 - val_loss: 0.6571 - val_acc: 0.8703
    Epoch 77/500
    64s 127ms/step - loss: 0.6291 - acc: 0.8763 - val_loss: 0.6789 - val_acc: 0.8638
    Epoch 78/500
    63s 127ms/step - loss: 0.6291 - acc: 0.8781 - val_loss: 0.6485 - val_acc: 0.8708
    Epoch 79/500
    64s 127ms/step - loss: 0.6285 - acc: 0.8779 - val_loss: 0.6366 - val_acc: 0.8758
    Epoch 80/500
    64s 127ms/step - loss: 0.6310 - acc: 0.8755 - val_loss: 0.6587 - val_acc: 0.8710
    Epoch 81/500
    63s 127ms/step - loss: 0.6265 - acc: 0.8770 - val_loss: 0.6511 - val_acc: 0.8685
    Epoch 82/500
    63s 126ms/step - loss: 0.6246 - acc: 0.8784 - val_loss: 0.6405 - val_acc: 0.8742
    Epoch 83/500
    63s 126ms/step - loss: 0.6283 - acc: 0.8772 - val_loss: 0.6565 - val_acc: 0.8701
    Epoch 84/500
    63s 126ms/step - loss: 0.6225 - acc: 0.8778 - val_loss: 0.6565 - val_acc: 0.8731
    Epoch 85/500
    63s 126ms/step - loss: 0.6185 - acc: 0.8810 - val_loss: 0.6819 - val_acc: 0.8586
    Epoch 86/500
    63s 126ms/step - loss: 0.6241 - acc: 0.8792 - val_loss: 0.6703 - val_acc: 0.8685
    Epoch 87/500
    63s 127ms/step - loss: 0.6194 - acc: 0.8811 - val_loss: 0.6514 - val_acc: 0.8705
    Epoch 88/500
    64s 127ms/step - loss: 0.6159 - acc: 0.8798 - val_loss: 0.6401 - val_acc: 0.8764
    Epoch 89/500
    64s 127ms/step - loss: 0.6196 - acc: 0.8794 - val_loss: 0.6436 - val_acc: 0.8739
    Epoch 90/500
    64s 127ms/step - loss: 0.6144 - acc: 0.8817 - val_loss: 0.6491 - val_acc: 0.8718
    Epoch 91/500
    63s 127ms/step - loss: 0.6180 - acc: 0.8813 - val_loss: 0.6449 - val_acc: 0.8758
    Epoch 92/500
    63s 127ms/step - loss: 0.6091 - acc: 0.8822 - val_loss: 0.6465 - val_acc: 0.8758
    Epoch 93/500
    64s 127ms/step - loss: 0.6172 - acc: 0.8825 - val_loss: 0.6414 - val_acc: 0.8754
    Epoch 94/500
    63s 127ms/step - loss: 0.6110 - acc: 0.8822 - val_loss: 0.6582 - val_acc: 0.8710
    Epoch 95/500
    63s 126ms/step - loss: 0.6170 - acc: 0.8820 - val_loss: 0.6572 - val_acc: 0.8704
    Epoch 96/500
    63s 126ms/step - loss: 0.6132 - acc: 0.8843 - val_loss: 0.6744 - val_acc: 0.8656
    Epoch 97/500
    63s 126ms/step - loss: 0.6127 - acc: 0.8824 - val_loss: 0.6296 - val_acc: 0.8795
    Epoch 98/500
    63s 126ms/step - loss: 0.6056 - acc: 0.8857 - val_loss: 0.6586 - val_acc: 0.8738
    Epoch 99/500
    63s 127ms/step - loss: 0.6131 - acc: 0.8831 - val_loss: 0.6579 - val_acc: 0.8719
    Epoch 100/500
    63s 127ms/step - loss: 0.6076 - acc: 0.8846 - val_loss: 0.6507 - val_acc: 0.8716
    Epoch 101/500
    63s 127ms/step - loss: 0.6082 - acc: 0.8849 - val_loss: 0.6661 - val_acc: 0.8717
    Epoch 102/500
    64s 127ms/step - loss: 0.6117 - acc: 0.8836 - val_loss: 0.6860 - val_acc: 0.8612
    Epoch 103/500
    63s 127ms/step - loss: 0.6068 - acc: 0.8861 - val_loss: 0.6470 - val_acc: 0.8776
    Epoch 104/500
    64s 127ms/step - loss: 0.6063 - acc: 0.8872 - val_loss: 0.6613 - val_acc: 0.8679
    Epoch 105/500
    64s 127ms/step - loss: 0.6042 - acc: 0.8844 - val_loss: 0.6494 - val_acc: 0.8781
    Epoch 106/500
    64s 127ms/step - loss: 0.6036 - acc: 0.8871 - val_loss: 0.6507 - val_acc: 0.8717
    Epoch 107/500
    63s 127ms/step - loss: 0.6039 - acc: 0.8859 - val_loss: 0.6332 - val_acc: 0.8822
    Epoch 108/500
    63s 127ms/step - loss: 0.6054 - acc: 0.8865 - val_loss: 0.6511 - val_acc: 0.8737
    Epoch 109/500
    63s 127ms/step - loss: 0.6038 - acc: 0.8864 - val_loss: 0.6591 - val_acc: 0.8708
    Epoch 110/500
    63s 127ms/step - loss: 0.5994 - acc: 0.8888 - val_loss: 0.6289 - val_acc: 0.8843
    Epoch 111/500
    63s 127ms/step - loss: 0.5970 - acc: 0.8882 - val_loss: 0.6455 - val_acc: 0.8778
    Epoch 112/500
    63s 127ms/step - loss: 0.5990 - acc: 0.8878 - val_loss: 0.6369 - val_acc: 0.8788
    Epoch 113/500
    64s 127ms/step - loss: 0.6001 - acc: 0.8880 - val_loss: 0.6324 - val_acc: 0.8834
    Epoch 114/500
    63s 127ms/step - loss: 0.5944 - acc: 0.8893 - val_loss: 0.6233 - val_acc: 0.8844
    Epoch 115/500
    63s 127ms/step - loss: 0.5906 - acc: 0.8915 - val_loss: 0.6327 - val_acc: 0.8781
    Epoch 116/500
    63s 127ms/step - loss: 0.6013 - acc: 0.8870 - val_loss: 0.6265 - val_acc: 0.8827
    Epoch 117/500
    63s 127ms/step - loss: 0.5928 - acc: 0.8915 - val_loss: 0.6423 - val_acc: 0.8766
    Epoch 118/500
    63s 127ms/step - loss: 0.5988 - acc: 0.8878 - val_loss: 0.6609 - val_acc: 0.8695
    Epoch 119/500
    64s 127ms/step - loss: 0.5920 - acc: 0.8909 - val_loss: 0.6242 - val_acc: 0.8846
    Epoch 120/500
    64s 127ms/step - loss: 0.5941 - acc: 0.8894 - val_loss: 0.6528 - val_acc: 0.8716
    Epoch 121/500
    63s 126ms/step - loss: 0.5939 - acc: 0.8895 - val_loss: 0.6338 - val_acc: 0.8806
    Epoch 122/500
    63s 126ms/step - loss: 0.5936 - acc: 0.8900 - val_loss: 0.6290 - val_acc: 0.8827
    Epoch 123/500
    63s 126ms/step - loss: 0.5937 - acc: 0.8891 - val_loss: 0.6471 - val_acc: 0.8693
    Epoch 124/500
    63s 126ms/step - loss: 0.5900 - acc: 0.8902 - val_loss: 0.6098 - val_acc: 0.8911
    Epoch 125/500
    63s 126ms/step - loss: 0.5854 - acc: 0.8933 - val_loss: 0.6445 - val_acc: 0.8757
    Epoch 126/500
    63s 126ms/step - loss: 0.5913 - acc: 0.8898 - val_loss: 0.6354 - val_acc: 0.8824
    Epoch 127/500
    63s 126ms/step - loss: 0.5927 - acc: 0.8893 - val_loss: 0.6420 - val_acc: 0.8843
    Epoch 128/500
    63s 126ms/step - loss: 0.5926 - acc: 0.8901 - val_loss: 0.6244 - val_acc: 0.8825
    Epoch 129/500
    63s 127ms/step - loss: 0.5879 - acc: 0.8906 - val_loss: 0.6230 - val_acc: 0.8849
    Epoch 130/500
    63s 126ms/step - loss: 0.5917 - acc: 0.8908 - val_loss: 0.6428 - val_acc: 0.8771
    Epoch 131/500
    63s 126ms/step - loss: 0.5861 - acc: 0.8920 - val_loss: 0.6582 - val_acc: 0.8761
    Epoch 132/500
    63s 126ms/step - loss: 0.5857 - acc: 0.8934 - val_loss: 0.6353 - val_acc: 0.8792
    Epoch 133/500
    64s 127ms/step - loss: 0.5868 - acc: 0.8926 - val_loss: 0.6154 - val_acc: 0.8878
    Epoch 134/500
    63s 126ms/step - loss: 0.5869 - acc: 0.8932 - val_loss: 0.6369 - val_acc: 0.8805
    Epoch 135/500
    63s 126ms/step - loss: 0.5853 - acc: 0.8934 - val_loss: 0.6133 - val_acc: 0.8832
    Epoch 136/500
    63s 126ms/step - loss: 0.5818 - acc: 0.8944 - val_loss: 0.6538 - val_acc: 0.8751
    Epoch 137/500
    63s 126ms/step - loss: 0.5801 - acc: 0.8937 - val_loss: 0.6478 - val_acc: 0.8733
    Epoch 138/500
    63s 127ms/step - loss: 0.5788 - acc: 0.8955 - val_loss: 0.6310 - val_acc: 0.8805
    Epoch 139/500
    63s 126ms/step - loss: 0.5828 - acc: 0.8926 - val_loss: 0.6172 - val_acc: 0.8869
    Epoch 140/500
    63s 126ms/step - loss: 0.5828 - acc: 0.8944 - val_loss: 0.6508 - val_acc: 0.8762
    Epoch 141/500
    63s 126ms/step - loss: 0.5856 - acc: 0.8934 - val_loss: 0.6242 - val_acc: 0.8797
    Epoch 142/500
    63s 126ms/step - loss: 0.5815 - acc: 0.8944 - val_loss: 0.6483 - val_acc: 0.8749
    Epoch 143/500
    63s 127ms/step - loss: 0.5807 - acc: 0.8964 - val_loss: 0.6374 - val_acc: 0.8789
    Epoch 144/500
    63s 126ms/step - loss: 0.5810 - acc: 0.8943 - val_loss: 0.6414 - val_acc: 0.8782
    Epoch 145/500
    63s 127ms/step - loss: 0.5807 - acc: 0.8959 - val_loss: 0.6279 - val_acc: 0.8783
    Epoch 146/500
    63s 126ms/step - loss: 0.5784 - acc: 0.8967 - val_loss: 0.6179 - val_acc: 0.8827
    Epoch 147/500
    63s 126ms/step - loss: 0.5754 - acc: 0.8948 - val_loss: 0.6358 - val_acc: 0.8791
    Epoch 148/500
    63s 126ms/step - loss: 0.5764 - acc: 0.8960 - val_loss: 0.6279 - val_acc: 0.8828
    Epoch 149/500
    63s 126ms/step - loss: 0.5749 - acc: 0.8965 - val_loss: 0.6513 - val_acc: 0.8770
    Epoch 150/500
    63s 126ms/step - loss: 0.5791 - acc: 0.8964 - val_loss: 0.6436 - val_acc: 0.8795
    Epoch 151/500
    63s 126ms/step - loss: 0.5786 - acc: 0.8959 - val_loss: 0.6276 - val_acc: 0.8807
    Epoch 152/500
    63s 127ms/step - loss: 0.5761 - acc: 0.8952 - val_loss: 0.6359 - val_acc: 0.8821
    Epoch 153/500
    63s 126ms/step - loss: 0.5729 - acc: 0.8967 - val_loss: 0.6416 - val_acc: 0.8779
    Epoch 154/500
    63s 126ms/step - loss: 0.5742 - acc: 0.8982 - val_loss: 0.6312 - val_acc: 0.8819
    Epoch 155/500
    63s 126ms/step - loss: 0.5750 - acc: 0.8973 - val_loss: 0.6173 - val_acc: 0.8856
    Epoch 156/500
    63s 126ms/step - loss: 0.5722 - acc: 0.8972 - val_loss: 0.6239 - val_acc: 0.8850
    Epoch 157/500
    63s 126ms/step - loss: 0.5760 - acc: 0.8963 - val_loss: 0.6322 - val_acc: 0.8807
    Epoch 158/500
    63s 126ms/step - loss: 0.5759 - acc: 0.8967 - val_loss: 0.6482 - val_acc: 0.8718
    Epoch 159/500
    63s 126ms/step - loss: 0.5696 - acc: 0.8991 - val_loss: 0.6134 - val_acc: 0.8857
    Epoch 160/500
    63s 127ms/step - loss: 0.5722 - acc: 0.8986 - val_loss: 0.6347 - val_acc: 0.8787
    Epoch 161/500
    64s 127ms/step - loss: 0.5712 - acc: 0.8986 - val_loss: 0.6508 - val_acc: 0.8753
    Epoch 162/500
    64s 127ms/step - loss: 0.5757 - acc: 0.8968 - val_loss: 0.6117 - val_acc: 0.8860
    Epoch 163/500
    64s 127ms/step - loss: 0.5679 - acc: 0.8992 - val_loss: 0.6201 - val_acc: 0.8843
    Epoch 164/500
    64s 127ms/step - loss: 0.5672 - acc: 0.9005 - val_loss: 0.6270 - val_acc: 0.8822
    Epoch 165/500
    63s 127ms/step - loss: 0.5703 - acc: 0.8994 - val_loss: 0.6234 - val_acc: 0.8832
    Epoch 166/500
    63s 127ms/step - loss: 0.5704 - acc: 0.8982 - val_loss: 0.6396 - val_acc: 0.8781
    Epoch 167/500
    63s 127ms/step - loss: 0.5731 - acc: 0.8973 - val_loss: 0.6287 - val_acc: 0.8836
    Epoch 168/500
    64s 127ms/step - loss: 0.5674 - acc: 0.8997 - val_loss: 0.6274 - val_acc: 0.8840
    Epoch 169/500
    63s 127ms/step - loss: 0.5710 - acc: 0.8963 - val_loss: 0.6319 - val_acc: 0.8833
    Epoch 170/500
    64s 127ms/step - loss: 0.5677 - acc: 0.8996 - val_loss: 0.6248 - val_acc: 0.8873
    Epoch 171/500
    63s 127ms/step - loss: 0.5713 - acc: 0.8987 - val_loss: 0.6324 - val_acc: 0.8819
    Epoch 172/500
    64s 127ms/step - loss: 0.5674 - acc: 0.9004 - val_loss: 0.6259 - val_acc: 0.8849
    Epoch 173/500
    63s 127ms/step - loss: 0.5743 - acc: 0.8967 - val_loss: 0.6394 - val_acc: 0.8796
    Epoch 174/500
    63s 127ms/step - loss: 0.5656 - acc: 0.8995 - val_loss: 0.6117 - val_acc: 0.8833
    Epoch 175/500
    63s 127ms/step - loss: 0.5643 - acc: 0.9009 - val_loss: 0.6178 - val_acc: 0.8855
    Epoch 176/500
    64s 127ms/step - loss: 0.5660 - acc: 0.9002 - val_loss: 0.6457 - val_acc: 0.8772
    Epoch 177/500
    63s 127ms/step - loss: 0.5715 - acc: 0.8991 - val_loss: 0.6284 - val_acc: 0.8854
    Epoch 178/500
    63s 126ms/step - loss: 0.5704 - acc: 0.9005 - val_loss: 0.6210 - val_acc: 0.8829
    Epoch 179/500
    63s 126ms/step - loss: 0.5669 - acc: 0.9010 - val_loss: 0.6091 - val_acc: 0.8868
    Epoch 180/500
    63s 126ms/step - loss: 0.5695 - acc: 0.8991 - val_loss: 0.6315 - val_acc: 0.8817
    Epoch 181/500
    63s 127ms/step - loss: 0.5679 - acc: 0.8981 - val_loss: 0.5973 - val_acc: 0.8885
    Epoch 182/500
    63s 127ms/step - loss: 0.5633 - acc: 0.9011 - val_loss: 0.6239 - val_acc: 0.8797
    Epoch 183/500
    64s 127ms/step - loss: 0.5621 - acc: 0.9014 - val_loss: 0.6133 - val_acc: 0.8911
    Epoch 184/500
    64s 127ms/step - loss: 0.5660 - acc: 0.9004 - val_loss: 0.6123 - val_acc: 0.8871
    Epoch 185/500
    63s 127ms/step - loss: 0.5676 - acc: 0.8983 - val_loss: 0.6330 - val_acc: 0.8801
    Epoch 186/500
    63s 127ms/step - loss: 0.5647 - acc: 0.9008 - val_loss: 0.6295 - val_acc: 0.8816
    Epoch 187/500
    63s 126ms/step - loss: 0.5637 - acc: 0.9005 - val_loss: 0.6291 - val_acc: 0.8801
    Epoch 188/500
    63s 127ms/step - loss: 0.5629 - acc: 0.9009 - val_loss: 0.6170 - val_acc: 0.8846
    Epoch 189/500
    64s 127ms/step - loss: 0.5616 - acc: 0.9013 - val_loss: 0.6206 - val_acc: 0.8827
    Epoch 190/500
    64s 127ms/step - loss: 0.5678 - acc: 0.8990 - val_loss: 0.6226 - val_acc: 0.8805
    Epoch 191/500
    63s 126ms/step - loss: 0.5613 - acc: 0.9008 - val_loss: 0.6092 - val_acc: 0.8865
    Epoch 192/500
    63s 127ms/step - loss: 0.5601 - acc: 0.9025 - val_loss: 0.6156 - val_acc: 0.8890
    Epoch 193/500
    63s 127ms/step - loss: 0.5608 - acc: 0.9018 - val_loss: 0.6255 - val_acc: 0.8846
    Epoch 194/500
    63s 126ms/step - loss: 0.5668 - acc: 0.8993 - val_loss: 0.6239 - val_acc: 0.8812
    Epoch 195/500
    63s 126ms/step - loss: 0.5576 - acc: 0.9034 - val_loss: 0.6230 - val_acc: 0.8844
    Epoch 196/500
    63s 126ms/step - loss: 0.5642 - acc: 0.9002 - val_loss: 0.6197 - val_acc: 0.8853
    Epoch 197/500
    63s 126ms/step - loss: 0.5651 - acc: 0.8991 - val_loss: 0.6171 - val_acc: 0.8885
    Epoch 198/500
    63s 126ms/step - loss: 0.5602 - acc: 0.9028 - val_loss: 0.6147 - val_acc: 0.8872
    Epoch 199/500
    63s 126ms/step - loss: 0.5635 - acc: 0.9023 - val_loss: 0.6115 - val_acc: 0.8878
    Epoch 200/500
    63s 126ms/step - loss: 0.5618 - acc: 0.9015 - val_loss: 0.6213 - val_acc: 0.8853
    Epoch 201/500
    lr changed to 0.010000000149011612
    63s 127ms/step - loss: 0.4599 - acc: 0.9378 - val_loss: 0.5280 - val_acc: 0.9159
    Epoch 202/500
    63s 126ms/step - loss: 0.4110 - acc: 0.9526 - val_loss: 0.5197 - val_acc: 0.9206
    Epoch 203/500
    63s 127ms/step - loss: 0.3926 - acc: 0.9573 - val_loss: 0.5123 - val_acc: 0.9200
    Epoch 204/500
    63s 127ms/step - loss: 0.3759 - acc: 0.9617 - val_loss: 0.5096 - val_acc: 0.9201
    Epoch 205/500
    63s 126ms/step - loss: 0.3625 - acc: 0.9633 - val_loss: 0.5113 - val_acc: 0.9201
    Epoch 206/500
    63s 126ms/step - loss: 0.3524 - acc: 0.9660 - val_loss: 0.5002 - val_acc: 0.9227
    Epoch 207/500
    63s 126ms/step - loss: 0.3444 - acc: 0.9675 - val_loss: 0.5007 - val_acc: 0.9229
    Epoch 208/500
    63s 126ms/step - loss: 0.3388 - acc: 0.9678 - val_loss: 0.4948 - val_acc: 0.9221
    Epoch 209/500
    63s 127ms/step - loss: 0.3282 - acc: 0.9700 - val_loss: 0.4957 - val_acc: 0.9231
    Epoch 210/500
    63s 126ms/step - loss: 0.3192 - acc: 0.9722 - val_loss: 0.4946 - val_acc: 0.9216
    Epoch 211/500
    63s 126ms/step - loss: 0.3153 - acc: 0.9713 - val_loss: 0.4878 - val_acc: 0.9205
    Epoch 212/500
    63s 126ms/step - loss: 0.3066 - acc: 0.9731 - val_loss: 0.4880 - val_acc: 0.9222
    Epoch 213/500
    63s 126ms/step - loss: 0.2996 - acc: 0.9739 - val_loss: 0.4867 - val_acc: 0.9219
    Epoch 214/500
    63s 126ms/step - loss: 0.2968 - acc: 0.9750 - val_loss: 0.4878 - val_acc: 0.9208
    Epoch 215/500
    63s 126ms/step - loss: 0.2880 - acc: 0.9757 - val_loss: 0.4854 - val_acc: 0.9226
    Epoch 216/500
    64s 127ms/step - loss: 0.2832 - acc: 0.9755 - val_loss: 0.4865 - val_acc: 0.9207
    Epoch 217/500
    63s 127ms/step - loss: 0.2759 - acc: 0.9780 - val_loss: 0.4830 - val_acc: 0.9209
    Epoch 218/500
    63s 127ms/step - loss: 0.2751 - acc: 0.9766 - val_loss: 0.4798 - val_acc: 0.9231
    Epoch 219/500
    63s 127ms/step - loss: 0.2701 - acc: 0.9775 - val_loss: 0.4781 - val_acc: 0.9228
    Epoch 220/500
    64s 127ms/step - loss: 0.2676 - acc: 0.9767 - val_loss: 0.4748 - val_acc: 0.9217
    Epoch 221/500
    64s 127ms/step - loss: 0.2580 - acc: 0.9790 - val_loss: 0.4820 - val_acc: 0.9205
    Epoch 222/500
    64s 127ms/step - loss: 0.2552 - acc: 0.9793 - val_loss: 0.4761 - val_acc: 0.9210
    Epoch 223/500
    63s 127ms/step - loss: 0.2510 - acc: 0.9797 - val_loss: 0.4766 - val_acc: 0.9215
    Epoch 224/500
    63s 126ms/step - loss: 0.2500 - acc: 0.9791 - val_loss: 0.4754 - val_acc: 0.9184
    Epoch 225/500
    63s 127ms/step - loss: 0.2453 - acc: 0.9793 - val_loss: 0.4659 - val_acc: 0.9233
    Epoch 226/500
    63s 126ms/step - loss: 0.2424 - acc: 0.9795 - val_loss: 0.4714 - val_acc: 0.9227
    Epoch 227/500
    63s 127ms/step - loss: 0.2367 - acc: 0.9804 - val_loss: 0.4790 - val_acc: 0.9169
    Epoch 228/500
    63s 126ms/step - loss: 0.2381 - acc: 0.9791 - val_loss: 0.4642 - val_acc: 0.9222
    Epoch 229/500
    63s 126ms/step - loss: 0.2304 - acc: 0.9814 - val_loss: 0.4627 - val_acc: 0.9201
    Epoch 230/500
    63s 126ms/step - loss: 0.2281 - acc: 0.9812 - val_loss: 0.4662 - val_acc: 0.9167
    Epoch 231/500
    63s 126ms/step - loss: 0.2260 - acc: 0.9810 - val_loss: 0.4733 - val_acc: 0.9164
    Epoch 232/500
    63s 127ms/step - loss: 0.2270 - acc: 0.9799 - val_loss: 0.4643 - val_acc: 0.9190
    Epoch 233/500
    63s 126ms/step - loss: 0.2190 - acc: 0.9817 - val_loss: 0.4691 - val_acc: 0.9160
    Epoch 234/500
    63s 126ms/step - loss: 0.2189 - acc: 0.9815 - val_loss: 0.4615 - val_acc: 0.9196
    Epoch 235/500
    63s 126ms/step - loss: 0.2155 - acc: 0.9821 - val_loss: 0.4510 - val_acc: 0.9188
    Epoch 236/500
    63s 126ms/step - loss: 0.2123 - acc: 0.9816 - val_loss: 0.4546 - val_acc: 0.9175
    Epoch 237/500
    63s 126ms/step - loss: 0.2138 - acc: 0.9810 - val_loss: 0.4443 - val_acc: 0.9185
    Epoch 238/500
    63s 127ms/step - loss: 0.2122 - acc: 0.9809 - val_loss: 0.4674 - val_acc: 0.9143
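
With a log this long it is easier to extract the numbers programmatically than to read them off by eye. The sketch below parses console output in the format shown above and reports the epoch with the highest validation accuracy. The names `parse_keras_log`, `EPOCH_RE`, and `METRICS_RE` are hypothetical, the regular expressions assume exactly the `loss` / `acc` / `val_loss` / `val_acc` layout of this log, and the sample string contains only three epochs copied from it.

```python
import re

EPOCH_RE = re.compile(r"Epoch (\d+)/(\d+)")
METRICS_RE = re.compile(
    r"loss: ([\d.]+) - acc: ([\d.]+) - val_loss: ([\d.]+) - val_acc: ([\d.]+)"
)


def parse_keras_log(text):
    """Return one dict per epoch found in Keras-style console output."""
    records = []
    epoch = None
    for line in text.splitlines():
        epoch_match = EPOCH_RE.search(line)
        if epoch_match:
            epoch = int(epoch_match.group(1))
            continue
        metrics_match = METRICS_RE.search(line)
        if metrics_match and epoch is not None:
            loss, acc, val_loss, val_acc = map(float, metrics_match.groups())
            records.append({"epoch": epoch, "loss": loss, "acc": acc,
                            "val_loss": val_loss, "val_acc": val_acc})
    return records


# Three epochs copied from the log above.
sample = """\
Epoch 224/500
63s 126ms/step - loss: 0.2500 - acc: 0.9791 - val_loss: 0.4754 - val_acc: 0.9184
Epoch 225/500
63s 127ms/step - loss: 0.2453 - acc: 0.9793 - val_loss: 0.4659 - val_acc: 0.9233
Epoch 238/500
63s 127ms/step - loss: 0.2122 - acc: 0.9809 - val_loss: 0.4674 - val_acc: 0.9143
"""

records = parse_keras_log(sample)
best = max(records, key=lambda r: r["val_acc"])
print(best["epoch"], best["val_acc"])  # 225 0.9233
```

Lines that carry neither an epoch header nor metrics, such as `lr changed to ...`, are simply skipped, so the full log can be passed in unchanged.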

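At epoch 238 I accidentally pressed Ctrl+C again and interrupted the program, so once more the run did not finish. The test accuracy had already reached 91.43%, and it would probably have risen a little further had training completed. I then left the computer running overnight and went home, only to find that a teacher who shares the office had "helpfully" shut it down.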

Minghang Zhao, Shisheng Zhong, Xuyun Fu, Baoping Tang, Shaojiang Dong, Michael Pecht, Deep Residual Networks with Adaptively Parametric Rectifier Linear Units for Fault Diagnosis, IEEE Transactions on Industrial Electronics, 2020, DOI: 10.1109/TIE.2020.2972458