1 Overview

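This article builds a business data warehouse locally, using the business data from "Local Data Warehouse Project (1): Detailed Walkthrough of Building a Local Data Warehouse".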

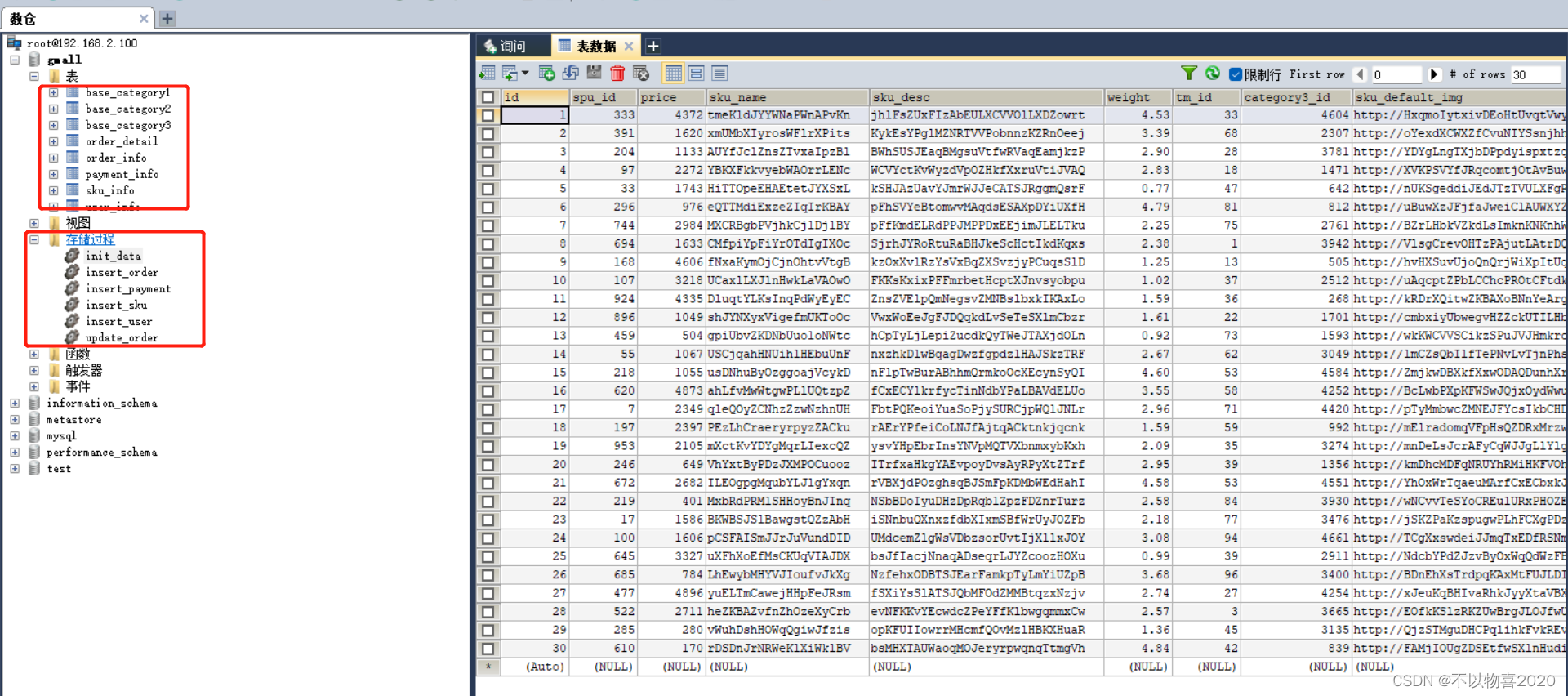

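The business data is produced by mock SQL scripts; run the scripts in order to generate it.

The SQL scripts are available here:

Link: https://pan.baidu.com/s/1AhLIuTNIyJ_GBD7M0b2RoA

Extraction code: 1lm8

The generated data looks like this: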

2 Importing business data into the warehouse

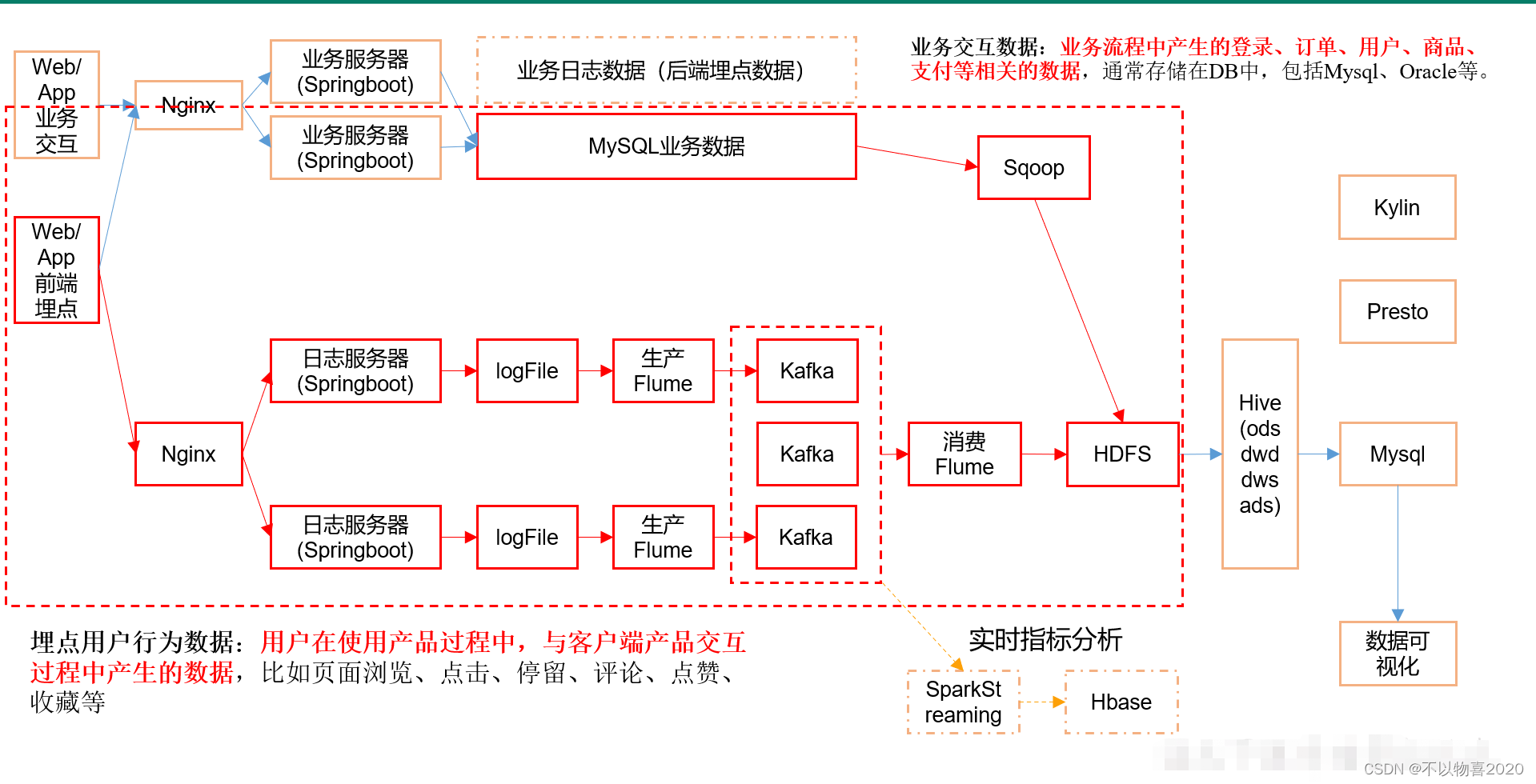

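The figure above shows the overall architecture of the warehouse. The previous article, "Local Data Warehouse Project (1): Detailed Walkthrough of Building a Local Data Warehouse", already covered the end-to-end flow of data collection and analysis. For the business data warehouse, sqoop is used to import data from MySQL into HDFS.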

2.1 Installing sqoop

2.1.1 Extract and rename

tar -zxvf sqoop-1.4.6.bin__hadoop-2.0.4-alpha.tar.gz

mv sqoop-1.4.6.bin__hadoop-2.0.4-alpha sqoop-1.4.6

2.1.2 Configure the SQOOP_HOME environment variable

SQOOP_HOME=/root/soft/sqoop-1.4.6

PATH=$PATH:$JAVA_HOME/bin:$SHELL_HOME:$FLUME_HOME/bin:$HIVE_HOME/bin:$KAFKA_HOME/bin:$ZOOKEEPER_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$SQOOP_HOME/bin
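Once these two lines are in your shell profile and the profile has been reloaded, a quick check can confirm that `$SQOOP_HOME/bin` really is on `PATH`. The sketch below is one way to do that; the helper name `path_has_dir` is made up for illustration, and the article does not say which profile file holds the variables.

```shell
#!/bin/bash
# Hypothetical helper: succeeds if directory $1 is an entry of the
# colon-separated list $2 (defaults to the current PATH).
path_has_dir() {
  local dir="$1" path_list="${2:-$PATH}"
  case ":$path_list:" in
    *":$dir:"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Install location taken from the article; adjust if yours differs.
SQOOP_HOME="${SQOOP_HOME:-/root/soft/sqoop-1.4.6}"

if path_has_dir "$SQOOP_HOME/bin"; then
  echo "sqoop bin directory is on PATH"
else
  echo "sqoop bin directory is NOT on PATH; reload the profile or check the PATH line"
fi
```

Wrapping the list in colons before matching avoids false positives where one directory name is only a prefix of another entry.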

2.1.3 Configure sqoop-env.sh

mv sqoop-env-template.sh sqoop-env.sh

export HADOOP_COMMON_HOME=/root/soft/hadoop-2.7.2

export HADOOP_MAPRED_HOME=/root/soft/hadoop-2.7.2

export HIVE_HOME=/root/soft/hive

export ZOOKEEPER_HOME=/root/soft/zookeeper-3.4.10

export ZOOCFGDIR=/root/soft/zookeeper-3.4.10

2.1.4 Copy the MySQL JDBC driver into sqoop's lib directory

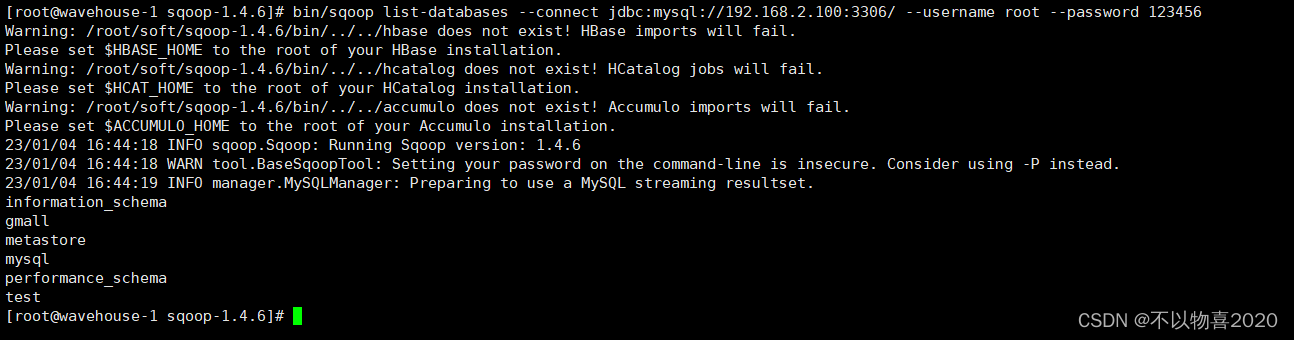

2.1.5 Test the connection

bin/sqoop list-databases --connect jdbc:mysql://192.168.2.100:3306/ --username root --password 123456

If the command lists the databases on the MySQL server, sqoop has been installed successfully.

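2.2 Importing data into HDFS with sqoop

The following sqoop script imports the data into HDFS and can be scheduled to run automatically. It takes the table name (or all) as its first argument and the date as its second.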

#!/bin/bash

db_date=$2

echo $db_date

db_name=gmall

import_data() {
  /root/soft/sqoop-1.4.6/bin/sqoop import \
    --connect jdbc:mysql://192.168.2.100:3306/$db_name \
    --username root \
    --password 123456 \
    --target-dir /origin_data/$db_name/db/$1/$db_date \
    --delete-target-dir \
    --num-mappers 1 \
    --fields-terminated-by "\t" \
    --query "$2"' and $CONDITIONS;' \
    --null-string '\\N' \
    --null-non-string '\\N'
}

import_sku_info(){

import_data "sku_info" "select

id, spu_id, price, sku_name, sku_desc, weight, tm_id,

category3_id, create_time

from sku_info where 1=1"

}

import_user_info(){

import_data "user_info" "select

id, name, birthday, gender, email, user_level,

create_time

from user_info where 1=1"

}

import_base_category1(){

import_data "base_category1" "select

id, name from base_category1 where 1=1"

}

import_base_category2(){

import_data "base_category2" "select

id, name, category1_id from base_category2 where 1=1"

}

import_base_category3(){

import_data "base_category3" "select id, name, category2_id from base_category3 where 1=1"

}

import_order_detail(){

import_data "order_detail" "select

od.id,

order_id,

user_id,

sku_id,

sku_name,

order_price,

sku_num,

o.create_time

from order_info o, order_detail od

where o.id=od.order_id

and DATE_FORMAT(create_time,'%Y-%m-%d')='$db_date'"

}

import_payment_info(){

import_data "payment_info" "select

id,

out_trade_no,

order_id,

user_id,

alipay_trade_no,

total_amount,

subject,

payment_type,

payment_time

from payment_info

where DATE_FORMAT(payment_time,'%Y-%m-%d')='$db_date'"

}

import_order_info(){

import_data "order_info" "select

id,

total_amount,

order_status,

user_id,

payment_way,

out_trade_no,

create_time,

operate_time

from order_info

where (DATE_FORMAT(create_time,'%Y-%m-%d')='$db_date' or DATE_FORMAT(operate_time,'%Y-%m-%d')='$db_date')"

}

case $1 in

"base_category1")

import_base_category1

;;

"base_category2")

import_base_category2

;;

"base_category3")

import_base_category3

;;

"order_info")

import_order_info

;;

"order_detail")

import_order_detail

;;

"sku_info")

import_sku_info

;;

"user_info")

import_user_info

;;

"payment_info")

import_payment_info

;;

"all")

import_base_category1

import_base_category2

import_base_category3

import_order_info

import_order_detail

import_sku_info

import_user_info

import_payment_info

;;

esac

Notes:

① By default, when sqoop imports data it writes MySQL NULL values as the string 'null'.

② Hive uses \N to represent NULL.

③ To have MySQL NULL values written as a value of your choice during import, use --null-string and --null-non-string.

--null-string a: if a column of a string type (varchar, char) is NULL in MySQL, a is written in its place when the data is imported into Hive.

--null-non-string b: if a column of a non-string type is NULL in MySQL, b is written in its place when the data is imported into Hive.

④ When exporting, to have a particular value written to MySQL as NULL, use --input-null-string and --input-null-non-string.

--input-null-string a: when exporting from Hive to MySQL, a string-typed column whose value is a is written to MySQL as NULL.

--input-null-non-string b: when exporting from Hive to MySQL, a non-string column whose value is b is written to MySQL as NULL.
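One detail of the import script is easy to miss: the value is written as `'\\N'`, with two backslashes inside single quotes. Bash passes both backslashes through unchanged, and my understanding is that Sqoop then treats the value as an escaped string, so `\N` is what ends up in the files. The sketch below only demonstrates the shell side, which is the part that can be checked locally; `show_arg` is a made-up helper.

```shell
#!/bin/bash
# Print a single argument exactly as the called program receives it,
# without any escape processing.
show_arg() {
  printf '%s\n' "$1"
}

show_arg '\\N'   # single quotes keep both backslashes:        \\N
show_arg "\\N"   # double quotes collapse \\ to one backslash: \N
show_arg '\N'    # single quotes, one backslash:               \N
```

If the imported files contain the literal text `\\N` or `N` instead of `\N`, the quoting of these two options is the first thing to check.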

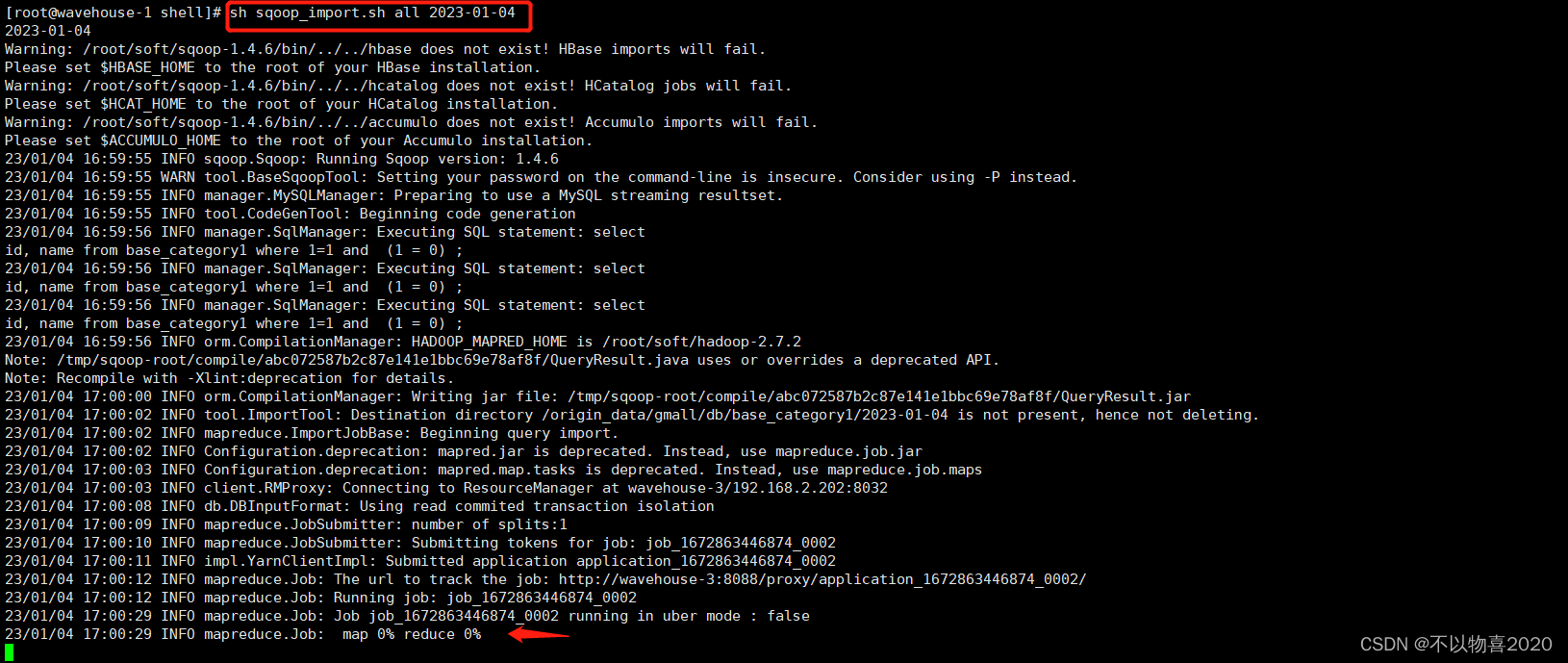

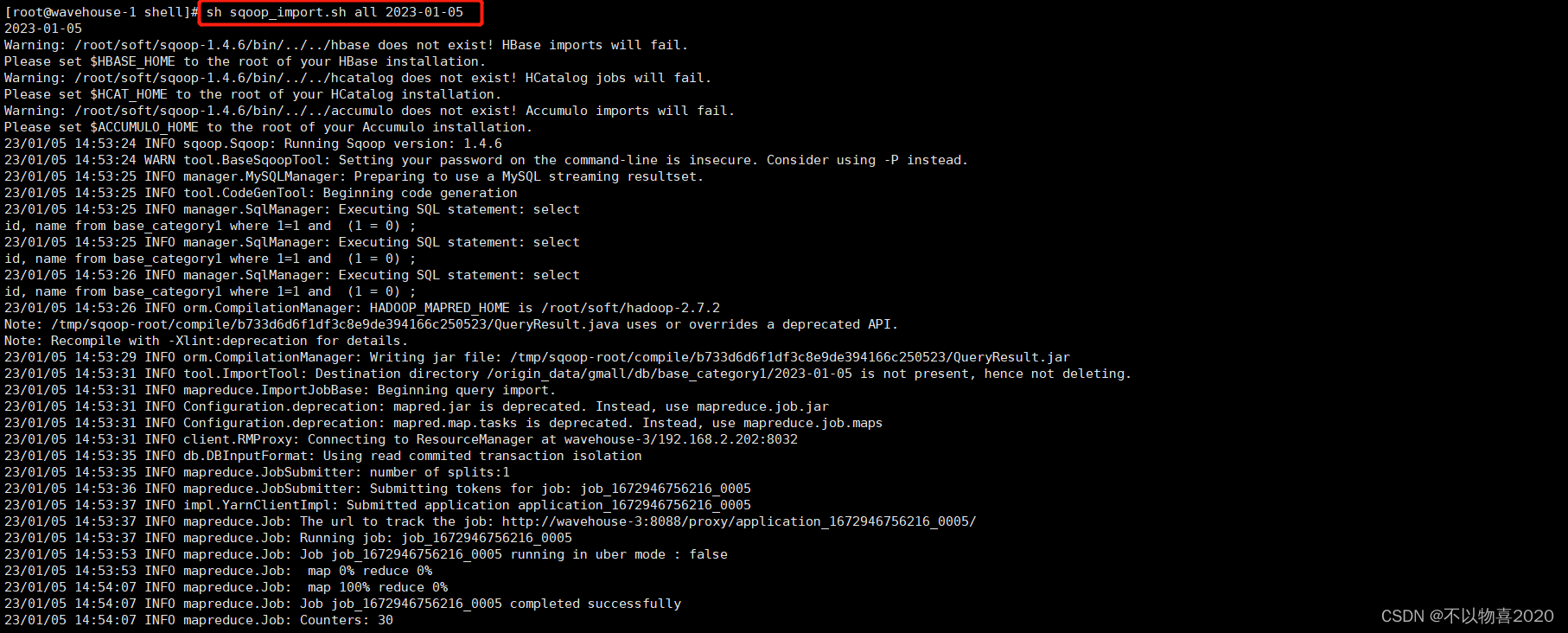

Run the script to import the data.
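The import script expects the table name (or `all`) as its first argument and the date as its second. To backfill several days, a small loop can generate one invocation per date. This is a sketch under assumptions: the article does not say what the script file is called, so `./sqoop_import.sh` is a placeholder, and the date arithmetic relies on GNU `date`.

```shell
#!/bin/bash
# Hypothetical file name; the article does not name the import script.
IMPORT_SCRIPT="${IMPORT_SCRIPT:-./sqoop_import.sh}"

# Print one import command per day from $1 to $2 inclusive (YYYY-MM-DD).
# ISO dates sort lexicographically, so a string comparison is enough.
print_import_commands() {
  local day="$1" end="$2"
  while [[ ! "$day" > "$end" ]]; do
    echo "$IMPORT_SCRIPT all $day"
    day=$(date -d "$day + 1 day" +%F)
  done
}

# Dry run: review the commands first, then pipe them to bash to execute.
print_import_commands 2023-01-04 2023-01-05
```

Printing the commands instead of running them directly keeps the sketch safe to try on a machine without sqoop.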

3 ODS layer

3.1 Creating the ODS tables

3.1.1 Create the order table

drop table if exists ods_order_info;

create external table ods_order_info (

`id` string COMMENT 'order id',

`total_amount` decimal(10,2) COMMENT 'order amount',

`order_status` string COMMENT 'order status',

`user_id` string COMMENT 'user id',

`payment_way` string COMMENT 'payment method',

`out_trade_no` string COMMENT 'payment transaction number',

`create_time` string COMMENT 'creation time',

`operate_time` string COMMENT 'operation time'

) COMMENT 'order table'

PARTITIONED BY (`dt` string)

row format delimited fields terminated by '\t'

location '/wavehouse/gmall/ods/ods_order_info/';

3.1.2 Create the order detail table

drop table if exists ods_order_detail;

create external table ods_order_detail(

`id` string COMMENT 'order detail id',

`order_id` string COMMENT 'order id',

`user_id` string COMMENT 'user id',

`sku_id` string COMMENT 'product id',

`sku_name` string COMMENT 'product name',

`order_price` string COMMENT 'unit price',

`sku_num` string COMMENT 'quantity',

`create_time` string COMMENT 'creation time'

) COMMENT 'order detail table'

PARTITIONED BY (`dt` string)

row format delimited fields terminated by '\t'

location '/wavehouse/gmall/ods/ods_order_detail/';

3.1.3 Create the product table

drop table if exists ods_sku_info;

create external table ods_sku_info(

`id` string COMMENT 'skuId',

`spu_id` string COMMENT 'spuid',

`price` decimal(10,2) COMMENT 'price',

`sku_name` string COMMENT 'product name',

`sku_desc` string COMMENT 'product description',

`weight` string COMMENT 'weight',

`tm_id` string COMMENT 'brand id',

`category3_id` string COMMENT 'category id',

`create_time` string COMMENT 'creation time'

) COMMENT 'product table'

PARTITIONED BY (`dt` string)

row format delimited fields terminated by '\t'

location '/wavehouse/gmall/ods/ods_sku_info/';

3.1.4 Create the user table

drop table if exists ods_user_info;

create external table ods_user_info(

`id` string COMMENT 'user id',

`name` string COMMENT 'name',

`birthday` string COMMENT 'birthday',

`gender` string COMMENT 'gender',

`email` string COMMENT 'email',

`user_level` string COMMENT 'user level',

`create_time` string COMMENT 'creation time'

) COMMENT 'user information'

PARTITIONED BY (`dt` string)

row format delimited fields terminated by '\t'

location '/wavehouse/gmall/ods/ods_user_info/';

3.1.5 Create the level-1 product category table

drop table if exists ods_base_category1;

create external table ods_base_category1(

`id` string COMMENT 'id',

`name` string COMMENT 'name'

) COMMENT 'level-1 product category'

PARTITIONED BY (`dt` string)

row format delimited fields terminated by '\t'

location '/wavehouse/gmall/ods/ods_base_category1/';

3.1.6 Create the level-2 product category table

drop table if exists ods_base_category2;

create external table ods_base_category2(

`id` string COMMENT 'id',

`name` string COMMENT 'name',

`category1_id` string COMMENT 'level-1 category id'

) COMMENT 'level-2 product category'

PARTITIONED BY (`dt` string)

row format delimited fields terminated by '\t'

location '/wavehouse/gmall/ods/ods_base_category2/';

3.1.7 Create the level-3 product category table

drop table if exists ods_base_category3;

create external table ods_base_category3(

`id` string COMMENT 'id',

`name` string COMMENT 'name',

`category2_id` string COMMENT 'level-2 category id'

) COMMENT 'level-3 product category'

PARTITIONED BY (`dt` string)

row format delimited fields terminated by '\t'

location '/wavehouse/gmall/ods/ods_base_category3/';

3.1.8 Create the payment table

drop table if exists ods_payment_info;

create external table ods_payment_info(

`id` bigint COMMENT 'id',

`out_trade_no` string COMMENT 'external trade number',

`order_id` string COMMENT 'order id',

`user_id` string COMMENT 'user id',

`alipay_trade_no` string COMMENT 'Alipay transaction number',

`total_amount` decimal(16,2) COMMENT 'payment amount',

`subject` string COMMENT 'transaction subject',

`payment_type` string COMMENT 'payment type',

`payment_time` string COMMENT 'payment time'

) COMMENT 'payment table'

PARTITIONED BY (`dt` string)

row format delimited fields terminated by '\t'

location '/wavehouse/gmall/ods/ods_payment_info/';

3.2 Loading the data

load data inpath '/origin_data/gmall/db/order_info/2023-01-04' OVERWRITE into table gmall.ods_order_info partition(dt='2023-01-04');

load data inpath '/origin_data/gmall/db/order_info/2023-01-05' OVERWRITE into table gmall.ods_order_info partition(dt='2023-01-05');

load data inpath '/origin_data/gmall/db/order_detail/2023-01-04' OVERWRITE into table gmall.ods_order_detail partition(dt='2023-01-04');

load data inpath '/origin_data/gmall/db/order_detail/2023-01-05' OVERWRITE into table gmall.ods_order_detail partition(dt='2023-01-05');

load data inpath '/origin_data/gmall/db/sku_info/2023-01-04' OVERWRITE into table gmall.ods_sku_info partition(dt='2023-01-04');

load data inpath '/origin_data/gmall/db/sku_info/2023-01-05' OVERWRITE into table gmall.ods_sku_info partition(dt='2023-01-05');

load data inpath '/origin_data/gmall/db/user_info/2023-01-04' OVERWRITE into table gmall.ods_user_info partition(dt='2023-01-04');

load data inpath '/origin_data/gmall/db/user_info/2023-01-05' OVERWRITE into table gmall.ods_user_info partition(dt='2023-01-05');

load data inpath '/origin_data/gmall/db/payment_info/2023-01-04' OVERWRITE into table gmall.ods_payment_info partition(dt='2023-01-04');

load data inpath '/origin_data/gmall/db/payment_info/2023-01-05' OVERWRITE into table gmall.ods_payment_info partition(dt='2023-01-05');

load data inpath '/origin_data/gmall/db/base_category1/2023-01-04' OVERWRITE into table gmall.ods_base_category1 partition(dt='2023-01-04');

load data inpath '/origin_data/gmall/db/base_category1/2023-01-05' OVERWRITE into table gmall.ods_base_category1 partition(dt='2023-01-05');

load data inpath '/origin_data/gmall/db/base_category2/2023-01-04' OVERWRITE into table gmall.ods_base_category2 partition(dt='2023-01-04');

load data inpath '/origin_data/gmall/db/base_category2/2023-01-05' OVERWRITE into table gmall.ods_base_category2 partition(dt='2023-01-05');

load data inpath '/origin_data/gmall/db/base_category3/2023-01-04' OVERWRITE into table gmall.ods_base_category3 partition(dt='2023-01-04');

load data inpath '/origin_data/gmall/db/base_category3/2023-01-05' OVERWRITE into table gmall.ods_base_category3 partition(dt='2023-01-05');

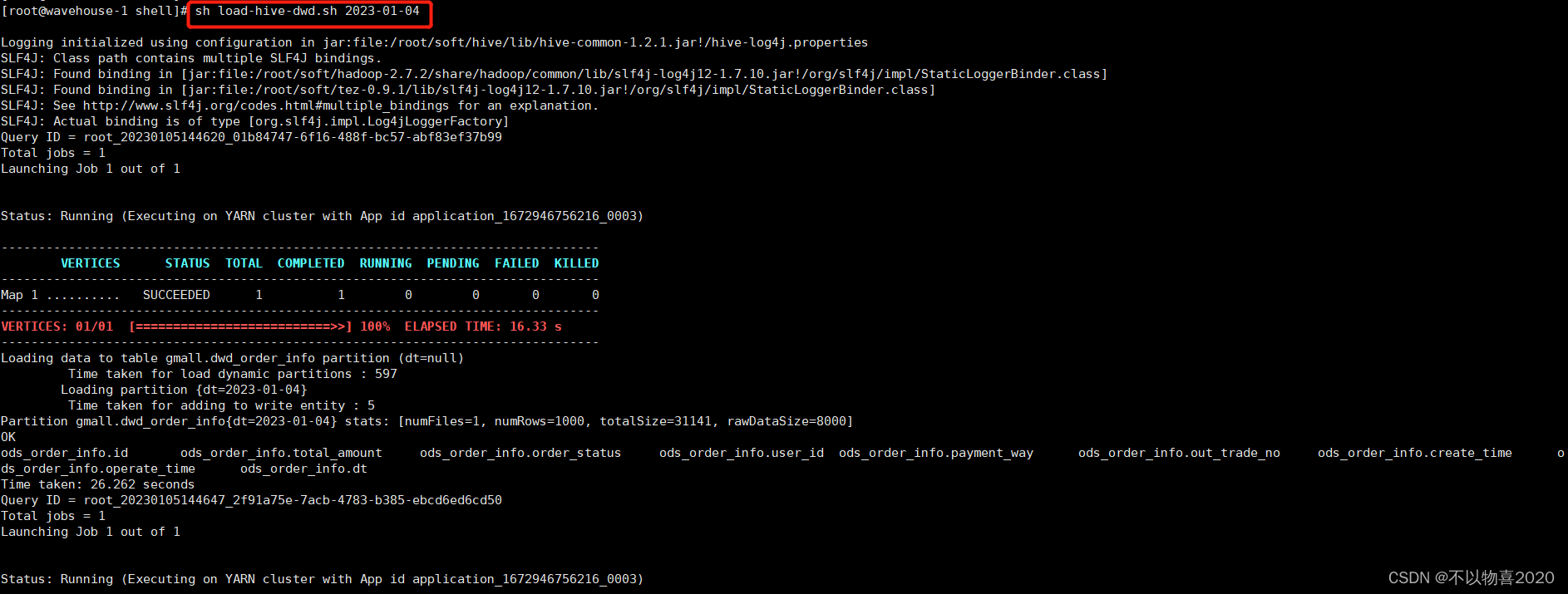

The statements above can be put into a script that takes the date as a parameter and is scheduled to run once a day.
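A minimal sketch of such a script follows. It generates the same `load data` statements for whatever date is passed in and falls back to yesterday when no date is given, mirroring the convention used by the DWD script later in this article. The function name `build_load_sql` is an illustrative choice, and the `hive` call is left commented out so the sketch can be tried without a cluster.

```shell
#!/bin/bash
# Sketch: build the ODS load statements for one day instead of hard-coding dates.
APP=gmall
TABLES="order_info order_detail sku_info user_info payment_info base_category1 base_category2 base_category3"

# Emit one "load data" statement per table for the date given as $1.
build_load_sql() {
  local do_date="$1" t
  for t in $TABLES; do
    echo "load data inpath '/origin_data/$APP/db/$t/$do_date' OVERWRITE into table $APP.ods_$t partition(dt='$do_date');"
  done
}

# Use the date passed as the first argument, or yesterday if none is given (GNU date).
do_date="${1:-$(date -d '-1 day' +%F)}"
sql="$(build_load_sql "$do_date")"
echo "$sql"

# To actually run it (hive path as used in the DWD script of this article):
# /root/soft/hive/bin/hive -e "$sql"
```

Keep in mind that `load data inpath` moves the files out of `/origin_data`, so the sqoop import for that date has to run before this script.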

4 DWD layer

4.1 Creating the DWD detail tables

4.1.1 Create the order table

drop table if exists dwd_order_info;

create external table dwd_order_info (

`id` string COMMENT '',

`total_amount` decimal(10,2) COMMENT '',

`order_status` string COMMENT ' 1 2 3 4 5',

`user_id` string COMMENT 'id',

`payment_way` string COMMENT '',

`out_trade_no` string COMMENT '',

`create_time` string COMMENT '',

`operate_time` string COMMENT ''

)

PARTITIONED BY (`dt` string)

stored as parquet

location '/wavehouse/gmall/dwd/dwd_order_info/'

tblproperties ("parquet.compression"="snappy");

4.1.2 Create the order detail table

drop table if exists dwd_order_detail;

create external table dwd_order_detail(

`id` string COMMENT '',

`order_id` string COMMENT '',

`user_id` string COMMENT 'id',

`sku_id` string COMMENT 'id',

`sku_name` string COMMENT '',

`order_price` string COMMENT '',

`sku_num` string COMMENT '',

`create_time` string COMMENT ''

)

PARTITIONED BY (`dt` string)

stored as parquet

location '/wavehouse/gmall/dwd/dwd_order_detail/'

tblproperties ("parquet.compression"="snappy");

4.1.3 Create the user table

drop table if exists dwd_user_info;

create external table dwd_user_info(

`id` string COMMENT 'id',

`name` string COMMENT '',

`birthday` string COMMENT '',

`gender` string COMMENT '',

`email` string COMMENT '',

`user_level` string COMMENT '',

`create_time` string COMMENT ''

)

PARTITIONED BY (`dt` string)

stored as parquet

location '/wavehouse/gmall/dwd/dwd_user_info/'

tblproperties ("parquet.compression"="snappy");

4.1.4 Create the payment table

drop table if exists dwd_payment_info;

create external table dwd_payment_info(

`id` bigint COMMENT '',

`out_trade_no` string COMMENT '',

`order_id` string COMMENT '',

`user_id` string COMMENT '',

`alipay_trade_no` string COMMENT '',

`total_amount` decimal(16,2) COMMENT '',

`subject` string COMMENT '',

`payment_type` string COMMENT '',

`payment_time` string COMMENT ''

)

PARTITIONED BY (`dt` string)

stored as parquet

location '/wavehouse/gmall/dwd/dwd_payment_info/'

tblproperties ("parquet.compression"="snappy");

4.1.5 Create the product table (with category dimensions)

drop table if exists dwd_sku_info;

create external table dwd_sku_info(

`id` string COMMENT 'skuId',

`spu_id` string COMMENT 'spuid',

`price` decimal(10,2) COMMENT '',

`sku_name` string COMMENT '',

`sku_desc` string COMMENT '',

`weight` string COMMENT '',

`tm_id` string COMMENT 'id',

`category3_id` string COMMENT '3id',

`category2_id` string COMMENT '2id',

`category1_id` string COMMENT '1id',

`category3_name` string COMMENT '3',

`category2_name` string COMMENT '2',

`category1_name` string COMMENT '1',

`create_time` string COMMENT ''

)

PARTITIONED BY (`dt` string)

stored as parquet

location '/wavehouse/gmall/dwd/dwd_sku_info/'

tblproperties ("parquet.compression"="snappy");

4.2 Loading the data

#!/bin/bash

# Define variables up front so they are easy to change

APP=gmall

hive=/root/soft/hive/bin/hive

# Use the date passed in if there is one; otherwise use yesterday's date

if [ -n "$1" ] ;then

do_date=$1

else

do_date=`date -d "-1 day" +%F`

fi

sql="

set hive.exec.dynamic.partition.mode=nonstrict;

insert overwrite table "$APP".dwd_order_info partition(dt)

select * from "$APP".ods_order_info

where dt='$do_date' and id is not null;

insert overwrite table "$APP".dwd_order_detail partition(dt)

select * from "$APP".ods_order_detail

where dt='$do_date' and id is not null;

insert overwrite table "$APP".dwd_user_info partition(dt)

select * from "$APP".ods_user_info

where dt='$do_date' and id is not null;

insert overwrite table "$APP".dwd_payment_info partition(dt)

select * from "$APP".ods_payment_info

where dt='$do_date' and id is not null;

insert overwrite table "$APP".dwd_sku_info partition(dt)

select

sku.id,

sku.spu_id,

sku.price,

sku.sku_name,

sku.sku_desc,

sku.weight,

sku.tm_id,

sku.category3_id,

c2.id category2_id,

c1.id category1_id,

c3.name category3_name,

c2.name category2_name,

c1.name category1_name,

sku.create_time,

sku.dt

from

"$APP".ods_sku_info sku

join "$APP".ods_base_category3 c3 on sku.category3_id=c3.id

join "$APP".ods_base_category2 c2 on c3.category2_id=c2.id

join "$APP".ods_base_category1 c1 on c2.category1_id=c1.id

where sku.dt='$do_date' and c2.dt='$do_date'

and c3.dt='$do_date' and c1.dt='$do_date'

and sku.id is not null;

"

$hive -e "$sql"
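The script accepts any string as its date argument, so a typo such as `2023/01/04` would silently select an empty partition. A small guard placed before the SQL is built could reject malformed dates. The helper below is a sketch with a made-up name, `is_valid_date`, and it relies on GNU `date`, which the script already uses.

```shell
#!/bin/bash
# Hypothetical guard: succeeds only for real calendar dates written as YYYY-MM-DD.
is_valid_date() {
  local d="$1"
  # Check the shape first.
  [[ "$d" =~ ^[0-9]{4}-[0-9]{2}-[0-9]{2}$ ]] || return 1
  # GNU date rejects impossible dates such as 2023-02-30.
  [ "$(date -d "$d" +%F 2>/dev/null)" = "$d" ]
}

for candidate in 2023-01-04 2023/01/04 2023-02-30; do
  if is_valid_date "$candidate"; then
    echo "$candidate: ok"
  else
    echo "$candidate: rejected"
  fi
done
```

In the DWD script this check would sit right after `do_date` is assigned, stopping the run before any `insert overwrite` is issued.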

5 DWS layer

5.1 User behavior wide table

Goal: aggregate each user's behavior for a single day into one multi-column wide table, so that it can later be joined with user dimension data and analyzed from different angles.

5.1.1 Create the user behavior wide table

drop table if exists dws_user_action;

create external table dws_user_action

(

user_id string comment 'user id',

order_count bigint comment 'number of orders',

order_amount decimal(16,2) comment 'order amount',

payment_count bigint comment 'number of payments',

payment_amount decimal(16,2) comment 'payment amount',

comment_count bigint comment 'number of comments'

) COMMENT 'daily user behavior wide table'

PARTITIONED BY (`dt` string)

stored as parquet

location '/wavehouse/gmall/dws/dws_user_action/';

5.1.2 Loading the data

with

tmp_order as

(

select

user_id,

count(*) order_count,

sum(oi.total_amount) order_amount

from dwd_order_info oi

where date_format(oi.create_time,'yyyy-MM-dd')='2023-01-04'

group by user_id

) ,

tmp_payment as

(

select

user_id,

sum(pi.total_amount) payment_amount,

count(*) payment_count

from dwd_payment_info pi

where date_format(pi.payment_time,'yyyy-MM-dd')='2023-01-04'

group by user_id

),

tmp_comment as

(

select

user_id,

count(*) comment_count

from dwd_comment_log c

where date_format(c.dt,'yyyy-MM-dd')='2023-01-04'

group by user_id

)

insert overwrite table dws_user_action partition(dt='2023-01-04')

select

user_actions.user_id,

sum(user_actions.order_count),

sum(user_actions.order_amount),

sum(user_actions.payment_count),

sum(user_actions.payment_amount),

sum(user_actions.comment_count)

from

(

select

user_id,

order_count,

order_amount,

0 payment_count,

0 payment_amount,

0 comment_count

from tmp_order

union all

select

user_id,

0,

0,

payment_count,

payment_amount,

0

from tmp_payment

union all

select

user_id,

0,

0,

0,

0,

comment_count

from tmp_comment

) user_actions

group by user_id;
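The query above merges three per-user result sets by padding the columns each one lacks with 0, stacking them with `union all`, and summing per `user_id`. The sketch below mimics that pattern on a few made-up rows with awk, only to show why a user who ordered but never paid (or only commented) still ends up with exactly one complete row. The users and numbers are invented.

```shell
#!/bin/bash
# Input rows: user_id order_count order_amount payment_count payment_amount comment_count
# Rows are already zero-padded, the way the three union all branches produce them.
merge_user_actions() {
  awk '{
         oc[$1] += $2; oa[$1] += $3; pc[$1] += $4; pa[$1] += $5; cc[$1] += $6
       }
       END {
         for (u in oc)
           printf "%s\t%d\t%.2f\t%d\t%.2f\t%d\n", u, oc[u], oa[u], pc[u], pa[u], cc[u]
       }' | sort
}

merge_user_actions <<'EOF'
u1 2 300.00 0 0 0
u2 1 50.00 0 0 0
u1 0 0 1 120.00 0
u3 0 0 0 0 4
EOF
```

User `u1` appears in two branches and collapses into a single row, which is the same effect the `group by user_id` has in the Hive query.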

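6 Requirements

6.1 Requirement 1

Compute the GMV (gross merchandise volume). GMV is the total transaction amount within a given period (for example a day, a week, or a month).

Create the table

drop table if exists ads_gmv_sum_day;

create external table ads_gmv_sum_day(

`dt` string COMMENT 'statistics date',

`gmv_count` bigint COMMENT 'number of GMV orders that day',

`gmv_amount` decimal(16,2) COMMENT 'total GMV order amount that day',

`gmv_payment` decimal(16,2) COMMENT 'amount paid that day'

) COMMENT 'GMV'

row format delimited fields terminated by '\t'

location '/wavehouse/gmall/ads/ads_gmv_sum_day/';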

Insert the data

INSERT INTO TABLE ads_gmv_sum_day

SELECT

'2023-01-04' dt,

sum(order_count) gmv_count,

sum(order_amount) gmv_amount,

sum(payment_amount) gmv_payment

FROM

dws_user_action

WHERE dt='2023-01-04'

GROUP BY dt;

6.2 Requirement 2

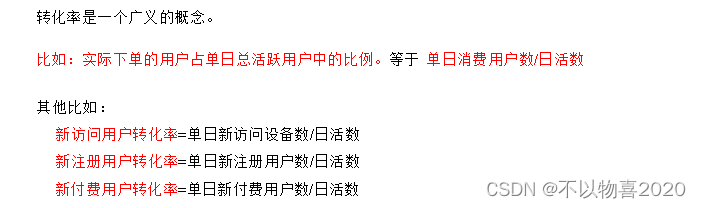

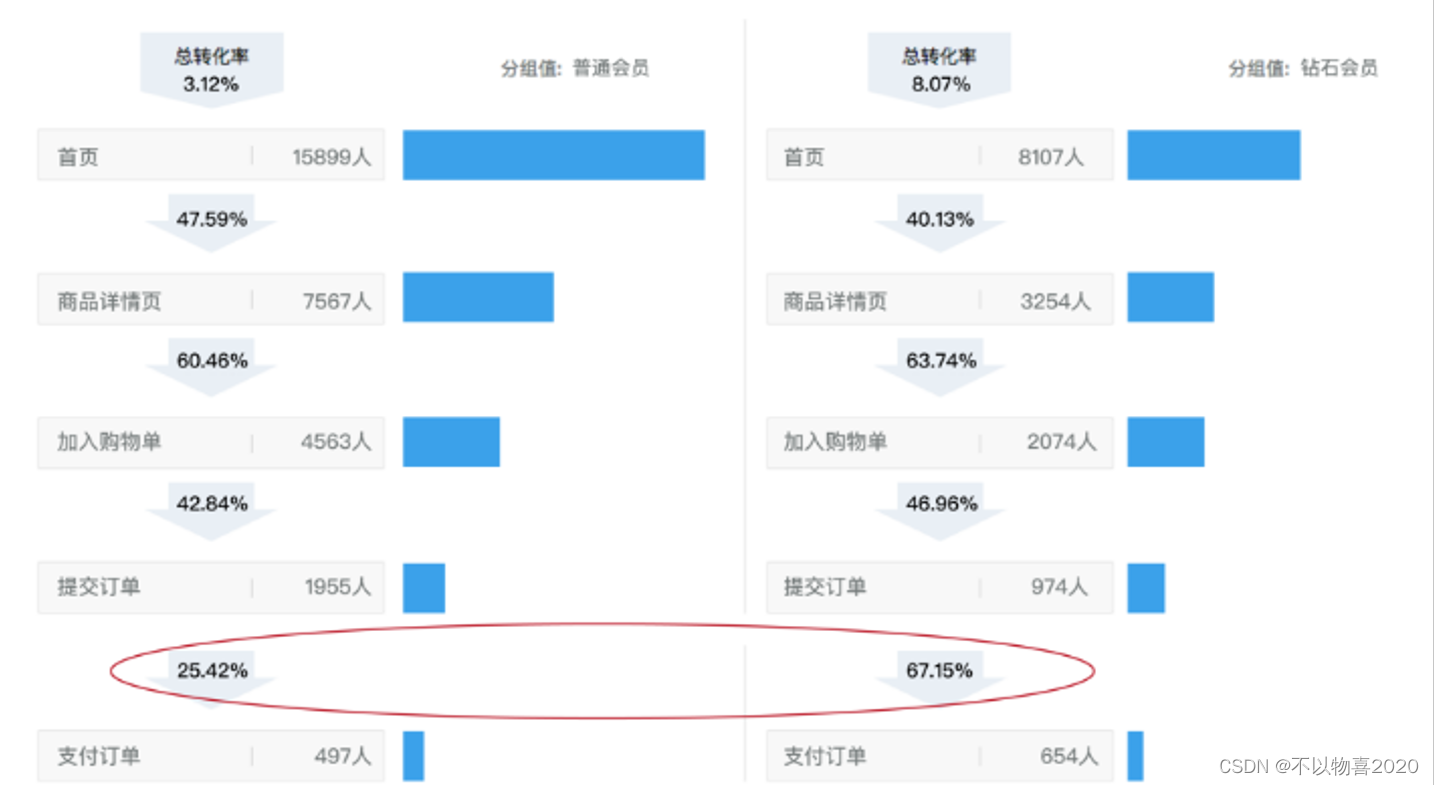

Compute conversion rates: user freshness and funnel analysis.

6.2.1 ADS layer: ratio of new users to daily active users (user freshness)

Create the table

drop table if exists ads_user_convert_day;

create external table ads_user_convert_day(

`dt` string COMMENT 'statistics date',

`uv_m_count` bigint COMMENT 'active devices that day',

`new_m_count` bigint COMMENT 'new devices that day',

`new_m_ratio` decimal(10,2) COMMENT 'ratio of new devices to daily active devices'

) COMMENT 'conversion rate'

row format delimited fields terminated by '\t'

location '/wavehouse/gmall/ads/ads_user_convert_day/';

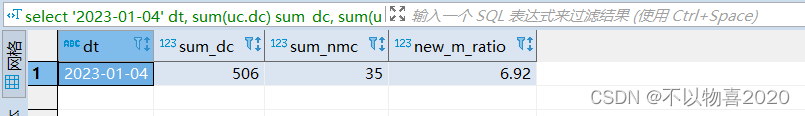

Load the data

insert into table ads_user_convert_day

select

'2023-01-04' dt,

sum(uc.dc) sum_dc,

sum(uc.nmc) sum_nmc,

cast(sum( uc.nmc)/sum( uc.dc)*100 as decimal(10,2)) new_m_ratio

from

(

select

day_count dc,

0 nmc

from ads_uv_count

where dt='2023-01-04'

union all

select

0 dc,

new_mid_count nmc

from ads_new_mid_count

where create_date='2023-01-04'

)uc;

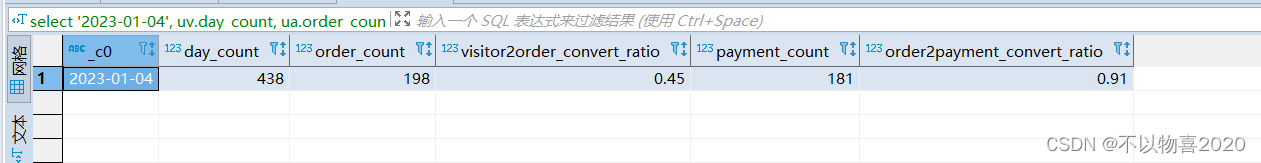

6.2.2 ADS layer: user behavior funnel analysis

Create the table

drop table if exists ads_user_action_convert_day;

create external table ads_user_action_convert_day(

`dt` string COMMENT 'statistics date',

`total_visitor_m_count` bigint COMMENT 'total visitors',

`order_u_count` bigint COMMENT 'users who placed orders',

`visitor2order_convert_ratio` decimal(10,2) COMMENT 'visit-to-order conversion rate',

`payment_u_count` bigint COMMENT 'users who paid',

`order2payment_convert_ratio` decimal(10,2) COMMENT 'order-to-payment conversion rate'

) COMMENT 'user behavior funnel analysis'

row format delimited fields terminated by '\t'

location '/wavehouse/gmall/ads/ads_user_action_convert_day/';

Insert the data

insert into table ads_user_action_convert_day

select

'2023-01-04',

uv.day_count,

ua.order_count,

cast(ua.order_count/uv.day_count as decimal(10,2)) visitor2order_convert_ratio,

ua.payment_count,

cast(ua.payment_count/ua.order_count as decimal(10,2)) order2payment_convert_ratio

from

(

select

dt,

sum(if(order_count>0,1,0)) order_count,

sum(if(payment_count>0,1,0)) payment_count

from dws_user_action

where dt='2023-01-04'

group by dt

)ua join ads_uv_count uv on uv.dt=ua.dt;
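To sanity-check the two funnel ratios, the arithmetic can be reproduced by hand. Note that this query stores plain fractions (for example 0.15), whereas the user freshness query in 6.2.1 multiplies by 100 and stores a percentage. The sketch below uses invented counts; `ratio` is a made-up helper, and printing NULL for a zero denominator reflects my understanding of how Hive handles division by zero.

```shell
#!/bin/bash
# ratio NUM DEN: NUM/DEN rounded to two decimals, or NULL when DEN is 0.
ratio() {
  awk -v n="$1" -v d="$2" 'BEGIN { if (d == 0) print "NULL"; else printf "%.2f\n", n / d }'
}

# Hypothetical counts for one day.
visitors=1000
order_users=150
payment_users=120

echo "visitor2order_convert_ratio: $(ratio "$order_users" "$visitors")"
echo "order2payment_convert_ratio: $(ratio "$payment_users" "$order_users")"
```

With these numbers, 15% of visitors placed an order and 80% of those who ordered went on to pay, stored as 0.15 and 0.80.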

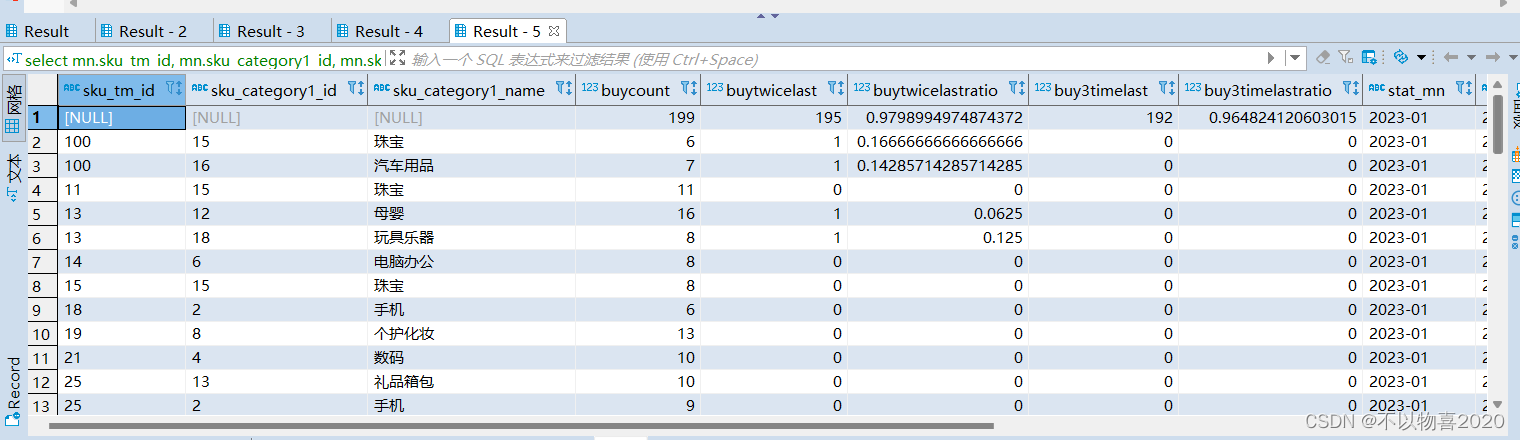

6.3 Requirement 3

Brand repurchase rate

Requirement: on a monthly basis, count the users who purchased two or more times.

6.3.1 Create the DWS table

drop table if exists dws_sale_detail_daycount;

create external table dws_sale_detail_daycount

(

user_id string comment 'user id',

sku_id string comment 'product id',

user_gender string comment 'user gender',

user_age string comment 'user age',

user_level string comment 'user level',

order_price decimal(10,2) comment 'product price',

sku_name string comment 'product name',

sku_tm_id string comment 'brand id',

sku_category3_id string comment 'level-3 category id',

sku_category2_id string comment 'level-2 category id',

sku_category1_id string comment 'level-1 category id',

sku_category3_name string comment 'level-3 category name',

sku_category2_name string comment 'level-2 category name',

sku_category1_name string comment 'level-1 category name',

spu_id string comment 'product spu',

sku_num int comment 'quantity purchased',

order_count string comment 'number of orders that day',

order_amount string comment 'order amount that day'

) COMMENT 'user purchase detail table'

PARTITIONED BY (`dt` string)

stored as parquet

location '/wavehouse/gmall/dws/dws_user_sale_detail_daycount/'

tblproperties ("parquet.compression"="snappy");

Load the data

with

tmp_detail as

(

select

user_id,

sku_id,

sum(sku_num) sku_num,

count(*) order_count,

sum(od.order_price*sku_num) order_amount

from dwd_order_detail od

where od.dt='2023-01-05'

group by user_id, sku_id

)

insert overwrite table dws_sale_detail_daycount partition(dt='2023-01-05')

select

tmp_detail.user_id,

tmp_detail.sku_id,

u.gender,

months_between('2023-01-05', u.birthday)/12 age,

u.user_level,

price,

sku_name,

tm_id,

category3_id,

category2_id,

category1_id,

category3_name,

category2_name,

category1_name,

spu_id,

tmp_detail.sku_num,

tmp_detail.order_count,

tmp_detail.order_amount

from tmp_detail

left join dwd_user_info u on tmp_detail.user_id =u.id and u.dt='2023-01-05'

left join dwd_sku_info s on tmp_detail.sku_id =s.id and s.dt='2023-01-05';

6.3.2 ADS layer

Create the table

drop table if exists ads_sale_tm_category1_stat_mn;

create external table ads_sale_tm_category1_stat_mn

(

tm_id string comment 'brand id',

category1_id string comment 'level-1 category id',

category1_name string comment 'level-1 category name',

buycount bigint comment 'number of buyers',

buy_twice_last bigint comment 'buyers with two or more purchases',

buy_twice_last_ratio decimal(10,2) comment 'single repurchase rate',

buy_3times_last bigint comment 'buyers with three or more purchases',

buy_3times_last_ratio decimal(10,2) comment 'multiple repurchase rate',

stat_mn string comment 'statistics month',

stat_date string comment 'statistics date'

) COMMENT 'repurchase rate statistics'

row format delimited fields terminated by '\t'

location '/wavehouse/gmall/ads/ads_sale_tm_category1_stat_mn/';

Insert the data

insert into table ads_sale_tm_category1_stat_mn

select

mn.sku_tm_id,

mn.sku_category1_id,

mn.sku_category1_name,

sum(if(mn.order_count>=1,1,0)) buycount,

sum(if(mn.order_count>=2,1,0)) buyTwiceLast,

sum(if(mn.order_count>=2,1,0))/sum( if(mn.order_count>=1,1,0)) buyTwiceLastRatio,

sum(if(mn.order_count>=3,1,0)) buy3timeLast ,

sum(if(mn.order_count>=3,1,0))/sum( if(mn.order_count>=1,1,0)) buy3timeLastRatio ,

date_format('2023-01-04' ,'yyyy-MM') stat_mn,

'2023-01-04' stat_date

from

(

select

user_id,

sd.sku_tm_id,

sd.sku_category1_id,

sd.sku_category1_name,

sum(order_count) order_count

from dws_sale_detail_daycount sd

where date_format(dt,'yyyy-MM')=date_format('2023-01-04' ,'yyyy-MM')

group by user_id, sd.sku_tm_id, sd.sku_category1_id, sd.sku_category1_name

) mn

group by mn.sku_tm_id, mn.sku_category1_id, mn.sku_category1_name;
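The outer query counts, per brand, how many users reached at least one, two, and three orders in the month, then divides to get the repurchase rates. The sketch below reproduces that counting logic with awk on invented rows; it groups by brand only (the Hive query also groups by level-1 category), and `repurchase_stats` is a made-up name.

```shell
#!/bin/bash
# Input rows: user_id brand_id monthly_order_count
# Output per brand: buyers, buyers with >=2 orders, rate, buyers with >=3 orders, rate
repurchase_stats() {
  awk '{
         if ($3 >= 1) buy[$2]++
         if ($3 >= 2) twice[$2]++
         if ($3 >= 3) thrice[$2]++
       }
       END {
         for (tm in buy)
           printf "%s\t%d\t%d\t%.2f\t%d\t%.2f\n", tm, buy[tm], twice[tm],
                  twice[tm] / buy[tm], thrice[tm], thrice[tm] / buy[tm]
       }' | sort
}

repurchase_stats <<'EOF'
u1 tmA 3
u2 tmA 1
u3 tmA 2
u4 tmA 1
u1 tmB 1
EOF
```

For brand `tmA`, four users bought, two of them at least twice and one at least three times, giving rates of 0.50 and 0.25. As in the Hive query, the rates are fractions rather than percentages.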

6.4 Requirement 4

Top ten products by repurchase rate for each user level

Create the table

drop table if exists ads_ul_rep_ratio;

create table ads_ul_rep_ratio(

user_level string comment 'user level' ,

sku_id string comment 'product id',

buy_count bigint comment 'total number of buyers',

buy_twice_count bigint comment 'number of buyers with two or more purchases',

buy_twice_rate decimal(10,2) comment 'repeat purchase rate',

rank string comment 'rank' ,

state_date string comment 'statistics date'

) COMMENT 'repurchase rate statistics'

row format delimited fields terminated by '\t'

location '/wavehouse/gmall/ads/ads_ul_rep_ratio/';

Insert the data

with

tmp_count as(

select -- number of orders per product per user within each level

user_level,

user_id,

sku_id,

sum(order_count) order_count

from dws_sale_detail_daycount

where dt<='2023-01-04'

group by user_level, user_id, sku_id

)

insert overwrite table ads_ul_rep_ratio

select

*

from(

select

user_level,

sku_id,

sum(if(order_count >=1, 1, 0)) buy_count,

sum(if(order_count >=2, 1, 0)) buy_twice_count,

sum(if(order_count >=2, 1, 0)) / sum(if(order_count >=1, 1, 0)) * 100 buy_twice_rate,

row_number() over(partition by user_level order by sum(if(order_count >=2, 1, 0)) / sum(if(order_count >=1, 1, 0)) desc) rn,

'2023-01-04'

from tmp_count

group by user_level, sku_id

) t1

where rn<=10;
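The `row_number() over(partition by user_level order by ... desc)` step numbers the products within each user level from the highest rate down, and the outer filter on `rn` keeps the first ten. The sketch below imitates that "top N per group" logic with `sort` and awk on invented rows, so the behavior can be checked without Hive. `top_n_per_level` is a made-up name.

```shell
#!/bin/bash
# Input rows: user_level sku_id buy_twice_rate
# Output rows: user_level sku_id buy_twice_rate rn, keeping only rn <= N.
top_n_per_level() {
  local n="$1"
  # Sort by level, then by rate descending; awk restarts the counter per level.
  LC_ALL=C sort -k1,1 -k3,3nr |
    awk -v n="$n" '{
      if ($1 != prev) { rn = 0; prev = $1 }
      rn++
      if (rn <= n) print $1, $2, $3, rn
    }'
}

top_n_per_level 2 <<'EOF'
1 sku1 50.00
1 sku2 75.00
1 sku3 20.00
2 sku1 10.00
2 sku4 40.00
EOF
```

Level 1 keeps `sku2` and `sku1` and drops `sku3`, because the counter restarts for every level, just as the window's partition does.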

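The next part covers data visualization and task scheduling for the local data warehouse project; see "Local Data Warehouse Project (3): Data Visualization and Task Scheduling".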