Copyright notice: this is an original article by the blogger and may not be reposted without the blogger's permission. https://blog.csdn.net/fragrant_no1/article/details/86088776

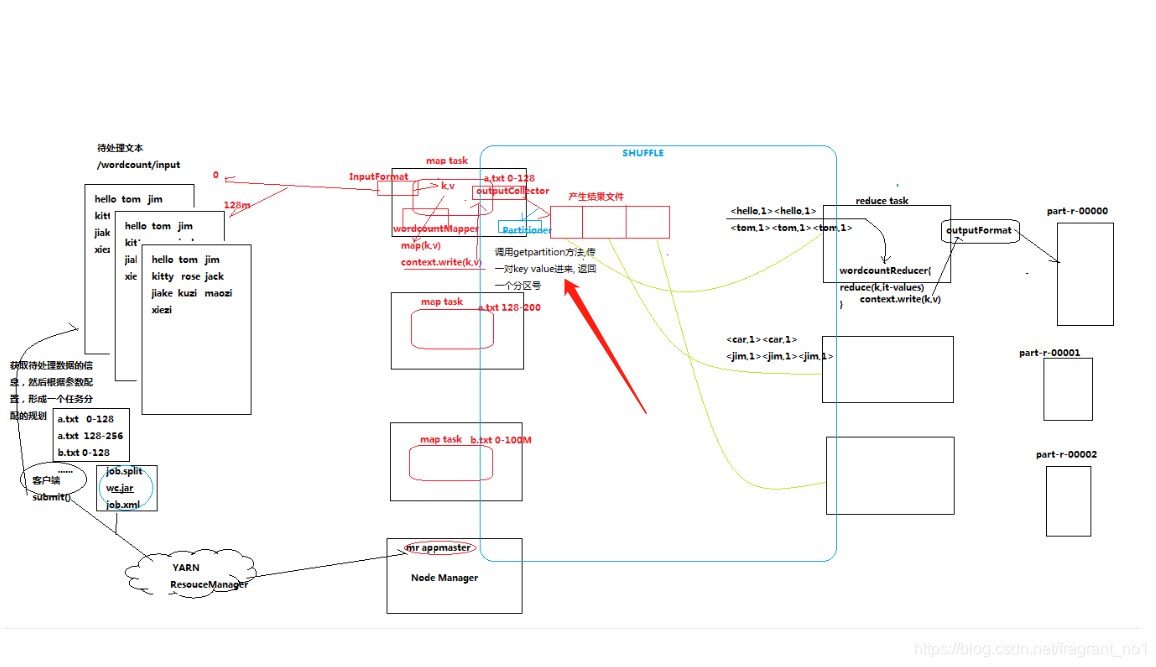

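As a map task emits its output, each record is first written to an in-memory buffer, where it is assigned to a partition. The Partitioner component decides which partition, and therefore which reduce task, each record goes to.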
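For comparison, when no custom partitioner is configured, Hadoop falls back to `HashPartitioner`, which derives the partition from the key's hash code. The following is a minimal plain-Java sketch of that formula. The class name `HashPartitionSketch` and the use of `String` keys instead of `Text` are assumptions made so the snippet runs without Hadoop on the classpath.

```java
// Illustrative sketch only: mirrors the formula used by Hadoop's default
// HashPartitioner, with plain String keys (assumption) instead of Text.
class HashPartitionSketch {

    // Clear the sign bit so the result is never negative, then take the
    // remainder by the number of reduce tasks.
    static int partitionFor(String key, int numReduceTasks) {
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }

    public static void main(String[] args) {
        String[] phones = {"13612345678", "13712345678", "18312345678"};
        for (String phone : phones) {
            System.out.println(phone + " -> partition " + partitionFor(phone, 5));
        }
    }
}
```

Hash partitioning spreads keys evenly but gives no control over which keys end up together, which is why grouping by province needs a custom partitioner.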

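Example: sum the traffic per phone number and, based on the home province of each phone number, write the results to a separate output file per province.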

package cn.itcast.bigdata.mr.provinceflow;

import java.util.HashMap;

import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Partitioner;

/**
 * The type parameters (K2, V2) are the key and value types of the map output.
 */
public class ProvincePartitioner extends Partitioner<Text, FlowBean> {

    // Phone-number prefix -> partition number (one partition per province)
    public static HashMap<String, Integer> proviceDict = new HashMap<String, Integer>();

    static {
        proviceDict.put("136", 0);
        proviceDict.put("137", 1);
        proviceDict.put("138", 2);
        proviceDict.put("139", 3);
    }

    @Override
    public int getPartition(Text key, FlowBean value, int numPartitions) {
        // The first three digits of the phone number identify the province
        String prefix = key.toString().substring(0, 3);
        Integer provinceId = proviceDict.get(prefix);
        // Every unknown prefix goes to partition 4
        return provinceId == null ? 4 : provinceId;
    }
}
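To try the lookup logic outside a cluster, the same prefix mapping can be sketched in plain Java. This is only an illustration: the class `PrefixLookupSketch`, the constant `OTHER_PARTITION`, and the use of `String` keys are assumptions, and the length guard is an addition that the partitioner above does not have.

```java
import java.util.HashMap;
import java.util.Map;

// Illustrative sketch only: the same prefix lookup as ProvincePartitioner,
// written against plain String keys (assumption) so it runs without Hadoop.
class PrefixLookupSketch {

    private static final Map<String, Integer> PROVINCE_DICT = new HashMap<>();
    static {
        PROVINCE_DICT.put("136", 0);
        PROVINCE_DICT.put("137", 1);
        PROVINCE_DICT.put("138", 2);
        PROVINCE_DICT.put("139", 3);
    }

    // Partition 4 collects every phone number whose prefix is not in the map.
    static final int OTHER_PARTITION = 4;

    static int partitionFor(String phone) {
        // Guard added for this sketch: substring(0, 3) would throw on keys
        // shorter than three characters, so those go to the catch-all.
        if (phone == null || phone.length() < 3) {
            return OTHER_PARTITION;
        }
        return PROVINCE_DICT.getOrDefault(phone.substring(0, 3), OTHER_PARTITION);
    }

    public static void main(String[] args) {
        System.out.println(partitionFor("13612345678")); // 0
        System.out.println(partitionFor("13912345678")); // 3
        System.out.println(partitionFor("18312345678")); // 4
        System.out.println(partitionFor("13"));          // 4
    }
}
```

The mapping yields five distinct partition numbers (0 to 4), which is the number the job has to provide reduce tasks for.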

package cn.itcast.bigdata.mr.provinceflow;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class FlowCount {

    static class FlowCountMapper extends Mapper<LongWritable, Text, Text, FlowBean> {

        @Override
        protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
            String line = value.toString();      // convert the input line to a String
            String[] fields = line.split("\t");  // split it into fields
            String phoneNbr = fields[1];         // extract the phone number
            // extract the upstream and downstream traffic
            long upFlow = Long.parseLong(fields[fields.length - 3]);
            long dFlow = Long.parseLong(fields[fields.length - 2]);
            context.write(new Text(phoneNbr), new FlowBean(upFlow, dFlow));
        }
    }

    static class FlowCountReducer extends Reducer<Text, FlowBean, Text, FlowBean> {

        //<183323,bean1><183323,bean2><183323,bean3><183323,bean4>.......
        @Override
        protected void reduce(Text key, Iterable<FlowBean> values, Context context) throws IOException, InterruptedException {
            long sum_upFlow = 0;
            long sum_dFlow = 0;
            // iterate over all beans and accumulate upstream and downstream traffic separately
            for (FlowBean bean : values) {
                sum_upFlow += bean.getUpFlow();
                sum_dFlow += bean.getdFlow();
            }
            FlowBean resultBean = new FlowBean(sum_upFlow, sum_dFlow);
            context.write(key, resultBean);
        }
    }

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        /*conf.set("mapreduce.framework.name", "yarn");
        conf.set("yarn.resourcemanager.hostname", "mini1");*/
        Job job = Job.getInstance(conf);

        /*job.setJar("/home/hadoop/wc.jar");*/
        // specify the local jar that contains this program
        job.setJarByClass(FlowCount.class);

        // specify the Mapper and Reducer classes this job uses
        job.setMapperClass(FlowCountMapper.class);
        job.setReducerClass(FlowCountReducer.class);

        // specify our custom partitioner
        job.setPartitionerClass(ProvincePartitioner.class);
        // with a custom partitioner, the number of reduce tasks must match
        // the number of partitions the partitioner can return
        job.setNumReduceTasks(5);

        // specify the key/value types of the mapper output
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(FlowBean.class);

        // specify the key/value types of the final output
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(FlowBean.class);

        // specify the directory that holds the job's input files
        FileInputFormat.setInputPaths(job, new Path(args[0]));
        // specify the directory for the job's output
        FileOutputFormat.setOutputPath(job, new Path(args[1]));

        // submit the job configuration and the jar containing the job's classes to YARN
        /*job.submit();*/
        boolean res = job.waitForCompletion(true);
        System.exit(res ? 0 : 1);
    }
}

package cn.itcast.bigdata.mr.provinceflow;

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;

import org.apache.hadoop.io.Writable;

public class FlowBean implements Writable {

    private long upFlow;
    private long dFlow;
    private long sumFlow;

    // Deserialization calls the no-arg constructor via reflection, so it must be defined explicitly
    public FlowBean() {}

    public FlowBean(long upFlow, long dFlow) {
        this.upFlow = upFlow;
        this.dFlow = dFlow;
        this.sumFlow = upFlow + dFlow;
    }

    public long getUpFlow() {
        return upFlow;
    }

    public void setUpFlow(long upFlow) {
        this.upFlow = upFlow;
    }

    public long getdFlow() {
        return dFlow;
    }

    public void setdFlow(long dFlow) {
        this.dFlow = dFlow;
    }

    public long getSumFlow() {
        return sumFlow;
    }

    public void setSumFlow(long sumFlow) {
        this.sumFlow = sumFlow;
    }

    /**
     * Serialization method
     */
    @Override
    public void write(DataOutput out) throws IOException {
        out.writeLong(upFlow);
        out.writeLong(dFlow);
        out.writeLong(sumFlow);
    }

    /**
     * Deserialization method.
     * Note: fields must be read in exactly the order they were written.
     */
    @Override
    public void readFields(DataInput in) throws IOException {
        upFlow = in.readLong();
        dFlow = in.readLong();
        sumFlow = in.readLong();
    }

    @Override
    public String toString() {
        return upFlow + "\t" + dFlow + "\t" + sumFlow;
    }
}
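The requirement that fields are read in the order they were written can be checked with a small round trip. The sketch below reproduces the field order of `FlowBean` using only `java.io` streams. The class `FlowRoundTripSketch` and its methods are hypothetical helpers, not part of the job, and they wrap `IOException` in an unchecked exception purely for brevity.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

// Illustrative sketch only: reproduces FlowBean's write/readFields field order
// with plain java.io streams, without the Hadoop Writable interface.
class FlowRoundTripSketch {

    // Serialize in the same order as FlowBean.write: upFlow, dFlow, sumFlow.
    static byte[] serialize(long upFlow, long dFlow) {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(bytes)) {
            out.writeLong(upFlow);
            out.writeLong(dFlow);
            out.writeLong(upFlow + dFlow);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return bytes.toByteArray();
    }

    // Deserialize in the same order as FlowBean.readFields.
    static long[] deserialize(byte[] data) {
        try (DataInputStream in = new DataInputStream(new ByteArrayInputStream(data))) {
            long upFlow = in.readLong();
            long dFlow = in.readLong();
            long sumFlow = in.readLong();
            return new long[] {upFlow, dFlow, sumFlow};
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        byte[] data = serialize(100L, 200L);
        long[] fields = deserialize(data);
        System.out.println(data.length); // 24: three 8-byte longs
        System.out.println(fields[0] + "\t" + fields[1] + "\t" + fields[2]);
    }
}
```

The byte stream carries no field names, only three consecutive 8-byte values, so swapping two `readLong` calls would silently swap the corresponding fields.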

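Remarks:

1. The partitioner is implemented independently of the map task and reduce task logic: adding it requires no changes to the Mapper or the Reducer.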

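Note:

If the number of reduce tasks is greater than the number of partitions that getPartition can return, the extra reduce tasks receive no data and produce empty output files part-r-000xx.

If 1 < number of reduce tasks < number of partitions that getPartition can return, some records are assigned to a partition that has no reduce task, and the job fails with an exception (Hadoop reports an "Illegal partition" IOException).

If the number of reduce tasks is 1, every record goes to that single reduce task no matter how many partitions the custom partitioner defines (with one reduce task Hadoop does not call the custom partitioner at all), so only one result file, part-r-00000, is produced.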
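The three cases above can be sketched as a small plain-Java simulation of the range check applied to the value returned by getPartition. The class `PartitionRangeSketch` and its methods are hypothetical, and the sketch throws an unchecked `IllegalStateException` where Hadoop itself raises an `IOException`.

```java
// Illustrative sketch only: mimics the range check Hadoop applies to the value
// returned by getPartition. Class and method names are hypothetical.
class PartitionRangeSketch {

    // Same mapping as ProvincePartitioner: four known prefixes plus a catch-all.
    static int provincePartition(String phone) {
        switch (phone.substring(0, 3)) {
            case "136": return 0;
            case "137": return 1;
            case "138": return 2;
            case "139": return 3;
            default:    return 4;
        }
    }

    // Returns the index of the reduce task that receives the record, or throws
    // when the partition does not map to an existing reduce task.
    static int route(String phone, int numReduceTasks) {
        if (numReduceTasks == 1) {
            return 0; // single reducer: every record lands in part-r-00000
        }
        int partition = provincePartition(phone);
        if (partition < 0 || partition >= numReduceTasks) {
            throw new IllegalStateException(
                "Illegal partition for " + phone + " (" + partition + ")");
        }
        return partition;
    }

    public static void main(String[] args) {
        System.out.println(route("18312345678", 5)); // 4
        System.out.println(route("18312345678", 6)); // 4, reduce task 5 stays empty
        System.out.println(route("18312345678", 1)); // 0
        try {
            route("18312345678", 3); // partition 4 has no reduce task
        } catch (IllegalStateException e) {
            System.out.println(e.getMessage());
        }
    }
}
```

With the partitioner in this article, `job.setNumReduceTasks(5)` is the smallest setting above 1 that keeps every partition valid.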