Task: Hand-Written KNN for a Classification Problem

1. Import the dataset

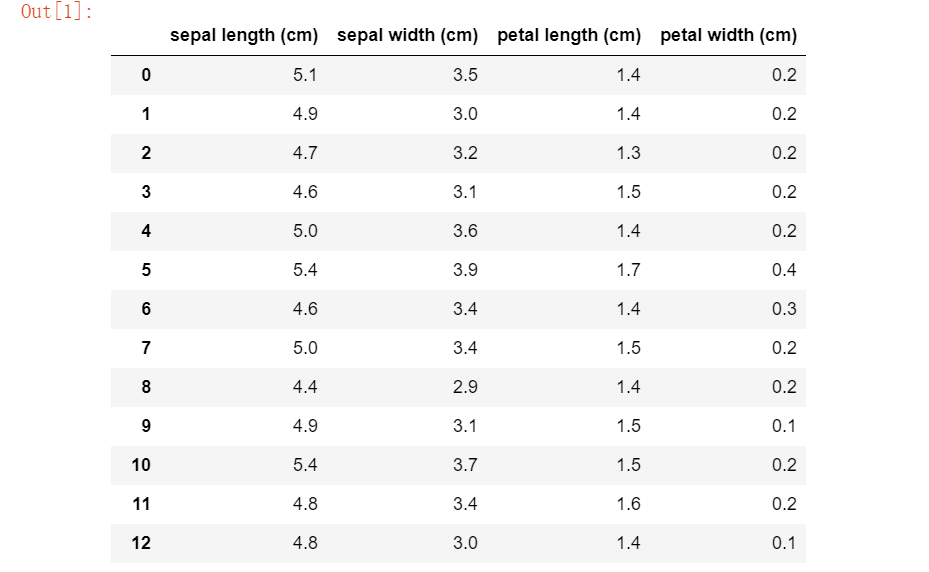

While looking things up, I found that the iris dataset can also be loaded directly through scikit-learn, Python's machine learning package.

    from sklearn.datasets import load_iris
    import pandas as pd

    data = load_iris()
    print(dir(data))   # list the attributes and methods that data provides
    print(data.DESCR)  # print the dataset description

    # Read the features straight into a pandas DataFrame
    pd.DataFrame(data=data.data, columns=data.feature_names)

Output:

    ['DESCR', 'data', 'feature_names', 'filename', 'target', 'target_names']
    .. _iris_dataset:

    Iris plants dataset
    --------------------

    **Data Set Characteristics:**

        :Number of Instances: 150 (50 in each of three classes)
        :Number of Attributes: 4 numeric, predictive attributes and the class
        :Attribute Information:
            - sepal length in cm
            - sepal width in cm
            - petal length in cm
            - petal width in cm
            - class:
                    - Iris-Setosa
                    - Iris-Versicolour
                    - Iris-Virginica

        :Summary Statistics:

        ============== ==== ==== ======= ===== ====================
                        Min  Max   Mean    SD   Class Correlation
        ============== ==== ==== ======= ===== ====================
        sepal length:   4.3  7.9   5.84   0.83    0.7826
        sepal width:    2.0  4.4   3.05   0.43   -0.4194
        petal length:   1.0  6.9   3.76   1.76    0.9490  (high!)
        petal width:    0.1  2.5   1.20   0.76    0.9565  (high!)
        ============== ==== ==== ======= ===== ====================

        :Missing Attribute Values: None
        :Class Distribution: 33.3% for each of 3 classes.
        :Creator: R.A. Fisher
        :Donor: Michael Marshall (MARSHALL%[email protected])
        :Date: July, 1988

    The famous Iris database, first used by Sir R.A. Fisher. The dataset is taken
    from Fisher's paper. Note that it's the same as in R, but not as in the UCI
    Machine Learning Repository, which has two wrong data points.

    This is perhaps the best known database to be found in the
    pattern recognition literature. Fisher's paper is a classic in the field and
    is referenced frequently to this day. (See Duda & Hart, for example.) The
    data set contains 3 classes of 50 instances each, where each class refers to a
    type of iris plant. One class is linearly separable from the other 2; the
    latter are NOT linearly separable from each other.

    .. topic:: References

       - Fisher, R.A. "The use of multiple measurements in taxonomic problems"
         Annual Eugenics, 7, Part II, 179-188 (1936); also in "Contributions to
         Mathematical Statistics" (John Wiley, NY, 1950).
       - Duda, R.O., & Hart, P.E. (1973) Pattern Classification and Scene Analysis.
         (Q327.D83) John Wiley & Sons. ISBN 0-471-22361-1. See page 218.
       - Dasarathy, B.V. (1980) "Nosing Around the Neighborhood: A New System
         Structure and Classification Rule for Recognition in Partially Exposed
         Environments". IEEE Transactions on Pattern Analysis and Machine
         Intelligence, Vol. PAMI-2, No. 1, 67-71.
       - Gates, G.W. (1972) "The Reduced Nearest Neighbor Rule". IEEE Transactions
         on Information Theory, May 1972, 431-433.
       - See also: 1988 MLC Proceedings, 54-64. Cheeseman et al"s AUTOCLASS II
         conceptual clustering system finds 3 classes in the data.
       - Many, many more ...

Reference: https://scikit-learn.org/stable/datasets/index.html#iris-plants-database
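The later steps use `X_train`, `y_train`, `X_test` and `y_test`, so the data has to be split into a training part and a test part somewhere. Below is a minimal sketch of one way to do that with NumPy alone. The helper name `split_train_test`, the 25% test ratio and the seed are my own assumptions for illustration, and the stand-in arrays only mimic the shape of `data.data` and `data.target`.

```python
import numpy as np

def split_train_test(X, y, test_ratio=0.25, seed=0):
    """Shuffle the rows and hold out a fraction as the test set (hypothetical helper)."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(X))      # random ordering of the row indices
    n_test = int(len(X) * test_ratio)    # number of held-out rows
    test_idx, train_idx = order[:n_test], order[n_test:]
    return X[train_idx], X[test_idx], y[train_idx], y[test_idx]

# Stand-in arrays shaped like the iris data: 150 samples, 4 features, 3 classes
X = np.arange(600, dtype=float).reshape(150, 4)
y = np.repeat([0, 1, 2], 50)

X_train, X_test, y_train, y_test = split_train_test(X, y)
print(X_train.shape, X_test.shape)
```

With the real dataset, `data.data` and `data.target` would be passed in instead. scikit-learn's `train_test_split` does the same job.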

2. Define the distance function

Euclidean distance is easy to compute: following the formula, sum the squared differences and then take the square root.

    def euc_dis(instance1, instance2):
        diff = instance1 - instance2   # element-wise difference (NumPy arrays)
        diff = diff**2                 # squared differences
        dist = sum(diff)**0.5          # square root of their sum
        return dist
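As a quick sanity check, the sketch below compares a hand-written distance with NumPy's built-in norm. The function is repeated in compact form so the block runs on its own, and the two sample vectors are made up for illustration.

```python
import numpy as np

def euc_dis(instance1, instance2):
    # Square root of the sum of squared differences
    return np.sum((instance1 - instance2) ** 2) ** 0.5

a = np.array([0.0, 0.0, 3.0])
b = np.array([4.0, 0.0, 0.0])

d_manual = euc_dis(a, b)
d_numpy = np.linalg.norm(a - b)  # 2-norm of the difference vector
print(d_manual, d_numpy)
```

Both values should agree, since the 2-norm of the difference vector is exactly the Euclidean distance.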

3. Define the KNN classification function

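    import heapq

    def knn_classify(X, y, testInstance, k):
        # Euclidean distance between testInstance and every vector in X
        dis = [euc_dis(i, testInstance) for i in X]
        # Indices of the k smallest distances; selecting indices by key (rather than
        # dis.index on the values) keeps tied distances from mapping to the same index
        minIndex = heapq.nsmallest(k, range(len(dis)), key=dis.__getitem__)
        # Labels of the k nearest samples
        nearestY = [y[i] for i in minIndex]
        # The label that occurs most often among them
        return max(nearestY, key=nearestY.count)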

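This function follows the KNN principle directly: compute the distance from the test instance to every training sample, pick the k samples with the smallest distances, and return the label that appears most often among them.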
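One detail worth checking when turning the k smallest distances back into indices is what happens when two samples are equally far away. The sketch below uses a made-up distance list to compare looking indices up by value with selecting them by key. It is only an illustration, not part of the task code.

```python
import heapq

dis = [2.0, 1.0, 1.0, 3.0]   # two samples tie at distance 1.0
k = 2

# Looking up each of the k smallest values with list.index finds the first match both times
by_value = [dis.index(d) for d in heapq.nsmallest(k, dis)]

# Selecting the indices themselves, ordered by their distance, keeps tied samples distinct
by_key = heapq.nsmallest(k, range(len(dis)), key=dis.__getitem__)

print(by_value)  # [1, 1]
print(by_key)    # [1, 2]
```

With the value lookup, the sample at index 1 would be counted twice in the vote and the one at index 2 never.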

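This uses heap-based selection (it was also my first time using Python's heap functions). heapq.nsmallest(), used in the code for this task, returns the n smallest values; heapq.nlargest() returns the n largest.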

About the heapq library module:

(1) Creating a heap

Start with an empty list and add values to the heap with heapq.heappush(), or convert an existing list into a heap in place with heapq.heapify(list).
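Both ways of building a heap can be tried out in a few lines. The values here are arbitrary.

```python
import heapq

# Option 1: start from an empty list and push values one at a time
heap = []
for value in [5, 1, 4, 2]:
    heapq.heappush(heap, value)

# Option 2: turn an existing list into a heap in place
nums = [5, 1, 4, 2]
heapq.heapify(nums)

# Either way, the smallest value sits at index 0
print(heap[0], nums[0])
```

A heap in Python is just a regular list that satisfies the heap ordering, so it is not fully sorted; only the element at index 0 is guaranteed to be the minimum.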

(2) Functions

Besides heapq.nsmallest() and heapq.nlargest(), there are several others.

heapq.heappop(): pops the smallest value off the heap.

heapq.heapreplace(): removes the smallest element from the heap and adds a new one.

And so on...

(It would be good to find time to type all of these out, and then look at other sorting methods as well.)
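A short sketch that exercises the functions listed above on a small made-up heap:

```python
import heapq

heap = [7, 3, 9, 1]
heapq.heapify(heap)

smallest = heapq.heappop(heap)          # removes and returns the smallest value
replaced = heapq.heapreplace(heap, 5)   # pops the current smallest, then pushes 5

two_smallest = heapq.nsmallest(2, heap)
two_largest = heapq.nlargest(2, heap)
print(smallest, replaced, two_smallest, two_largest)
```

Note that heapq.heapreplace() returns the element it removed, which is the smallest value before the new one is added.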

4. Predict the results

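    import numpy as np

    # X_train, y_train, X_test, y_test are assumed to come from a train/test split of the iris data
    predictions = [knn_classify(X_train, y_train, data, 3) for data in X_test]
    # Count how many predictions match the true labels
    correct = np.count_nonzero(np.array(predictions) == y_test)
    print("Accuracy is %.3f" % (correct / len(X_test)))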
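The accuracy calculation can be tried in isolation on a few made-up labels. The values below are invented for illustration and are not results from the iris data.

```python
import numpy as np

predictions = [0, 1, 2, 2, 1]          # made-up predicted labels
y_test = np.array([0, 1, 1, 2, 1])     # made-up true labels

matches = np.array(predictions) == y_test   # element-wise comparison
correct = np.count_nonzero(matches)         # number of correct predictions
accuracy = correct / len(y_test)

print("Accuracy is %.3f" % accuracy)
```

Taking `np.mean(matches)` gives the same number in one step, because True counts as 1 and False as 0.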

5. Takeaways

KNN is a relatively simple algorithm: the procedure is easy to understand and easy to implement.

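Each task fills in, bit by bit, things I had not come across before or only understood vaguely. I should still practice more: type out every new thing I look up to understand it, and then build on it step by step.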