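1. Preface

For an internet company, nginx request logs are a gold mine, and leaving them unused is a real waste.

At first the logs usually just go to files, and whatever you need is pulled out with awk, grep, uniq, sort and so on. That covers a good share of statistics needs, but the big drawbacks are that the results are not visual and are awkward to monitor. (Even with ELK in place I still compute some figures with shell; the two complement each other.)

The minimum requirements an ELK rollout should meet:

- Query conditions (exact match): level-1 domain, level-2 domain, real client IP, HTTP status code, HTTP method, request_time, response_time, proxy IP, body_bytes_sent

- Query conditions (fuzzy match): url (e.g. searching for SQL injection keywords), referer (traffic source), agent (e.g. finding search engines)

- The overall request volume trend over the recent past (1 week, 1 month);

- When the overall trend looks abnormal, it must be easy to find which domain is responsible;

- The composition of all requests over a past period (1 day, 1 week, 1 month), as a pie chart by domain;

- Real-time monitoring of crawler IPs that request too frequently (e.g. alert when a single IP exceeds 100 requests in 1 minute);

- Real-time monitoring of 500-status requests (e.g. alert when a single domain returns 30 500s in 1 minute);

- ……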

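2. Topology

nginx is configured to send its access log over the syslog protocol;

logstash acts as the syslog server, collects the logs, and writes them to two destinations: Elasticsearch for indexing, and local files;

elasticsearch needs a well-designed mapping, with two goals: support the search needs of each field (exact or fuzzy; a field can even support both at once, though I did not need that) and avoid wasting space;

kibana handles visualization, mainly by configuring Discover and Visualize;

elastalert holds the alert rules that implement the real-time monitoring requirements.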

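3. nginx configuration

nginx.conf

Logs are written as JSON so that logstash can parse them easily;

A syslog message is limited to 2K here, so some variables are truncated (the variables with the _short suffix);

level1domain and level2domain are the level-1 and level-2 domain. As the location.conf rules show, for www.example.com level1domain is example and level2domain is www;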
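To make the variable rules easier to check offline, here is a minimal Python sketch that mirrors the regular expressions used in location.conf for the domain split, the real client IP, and the truncation. The function names (`split_domain`, `real_ip`, `shorten`) and the sample host names are assumptions for illustration only; nginx itself evaluates these rules with `if` and `set`, and matches bytes rather than characters.

```python
import re


def split_domain(server_name):
    """Return (level1domain, level2domain) following the location.conf regexes."""
    m = re.match(r"^(.+)\.([0-9a-zA-Z]+)\.(com|cn)$", server_name)
    if m:
        return m.group(2), m.group(1)
    m = re.match(r"^([0-9a-zA-Z]+)\.(com|cn)$", server_name)
    if m:
        return m.group(1), "none"
    # Neither pattern matched: keep the defaults set in location.conf
    return "unparse", "unparse"


def real_ip(x_forwarded_for, remote_addr):
    """First IPv4 address of X-Forwarded-For, falling back to remote_addr."""
    m = re.match(r"^(\d+\.\d+\.\d+\.\d+)", x_forwarded_for or "")
    return m.group(1) if m else remote_addr


def shorten(value, limit):
    """Keep at most `limit` leading characters, like "^(.{0,N})" in the config."""
    return re.match(r"^(.{0,%d})" % limit, value).group(1)


if __name__ == "__main__":
    for name in ("www.example.com", "a.b.example.cn", "example.com", "localhost"):
        level1, level2 = split_domain(name)
        # The "domain" log field is built as level1_level2
        print(name, "->", level1 + "_" + level2)
```

One thing the sketch makes visible: under these rules a name such as www.example.com.cn resolves to level1domain com, so hosts under .com.cn would need an extra pattern.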

log_format main_json '{"project":"${level1domain}","domain":"${level1domain}_${level2domain}","real_ip":"$real_ip","http_x_forwarded_for":"$http_x_forwarded_for","time_local":"$time_iso8601","request":"$request_short","request_body":"$request_body_short","status":$status,"body_bytes_sent":"$body_bytes_sent","http_referer":"$http_referer_short","upstream_response_time":"$upstream_response_time","request_time":"$request_time","http_user_agent":"$http_user_agent"}';

location.conf

# Keep the first 750 bytes of the request line

if ( $request ~ "^(.{0,750})" ) {

set $request_short $1;

}

# Keep the first 750 bytes of the request body

if ( $request_body ~ "^(.{0,750})" ) {

set $request_body_short $1;

}

# Keep the first 100 bytes of the referer ("-" when it is empty)

set $http_referer_short "-";

if ( $http_referer ~ "^(.{1,100})" ) {

set $http_referer_short $1;

}

# Take the first IP in $http_x_forwarded_for as the real client IP

set $real_ip $remote_addr;

if ( $http_x_forwarded_for ~ "^(\d+\.\d+\.\d+\.\d+)" ) {

set $real_ip $1;

}

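# server_name has the form <level-N>. ... .<level-3>.<level-2>.<level-1>.com (or .cn), or just <level-1>.com (or .cn);

# the level-1 part is parsed into $level1domain

# everything in front of the level-1 part is parsed into $level2domain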

set $level1domain unparse;

set $level2domain unparse;

if ( $server_name ~ "^(.+)\.([0-9a-zA-Z]+)\.(com|cn)$" ) {

set $level1domain $2;

set $level2domain $1;

}

if ( $server_name ~ "^([0-9a-zA-Z]+)\.(com|cn)$" ) {

set $level1domain $1;

set $level2domain none;

}

# syslog output

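# Stock nginx (1.7.1+) does not accept this colon-separated form; there the equivalent would be:

# access_log syslog:server=logstash_ip:515,facility=local7,tag=nginx,severity=info main_json;

access_log syslog:local7:info:logstash_ip:515:nginx main_json;

4. logstash configuration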

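Installation (commands are run from the logstash install directory):

Install JDK 8

Unpack logstash-6.2.1.tar.gz

List the installed plugins:

./bin/logstash-plugin list | grep syslog

Install plugins that are not bundled by default:

./bin/logstash-plugin install logstash-filter-alter

Test:

./bin/logstash -e 'input { stdin { } } output { stdout {} }'

Start:

nohup ./bin/logstash -f mylogstash.conf & disown

Configuration:

mylogstash.conf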

input {

syslog {

type => "system-syslog"

port => 515

}

}

filter {

# Before JSON parsing, use mutate to rewrite \x sequences; this prevents the error: ParserError: Unrecognized character escape 'x' (code 120)

mutate {

gsub => ["message", "\\x", "\\\x"]

}

json {

source => "message"

# Drop fields that are not needed, to save space

remove_field => ["message", "severity", "pid", "logsource", "timestamp", "facility_label", "type", "facility", "@version", "priority", "severity_label"]

}

date {

# Replace the timestamp generated by logstash with the nginx request time

match => ["time_local", "ISO8601"]

target => "@timestamp"

}

grok {

# Take the day (first 10 characters, YYYY-MM-DD) from the request time

match => { "time_local" => "(?<day>.{10})" }

}

grok {

# Split request into 2 fields: method and url

match => { "request" => "%{WORD:method} (?<url>.* )" }

}

grok {

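# Keep only the scheme and host part of http_referer; the rest (path and the query string after the question mark) has no value here and wastes space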

match => { "http_referer" => "(?<referer>-|%{URIPROTO}://(?:%{USER}(?::[^@]*)?@)?(?:%{URIHOST})?)" }

}

mutate {

# Once the new fields have been extracted, discard the original ones

remove_field => "request"

remove_field => "http_referer"

rename => { "http_user_agent" => "agent" }

rename => { "upstream_response_time" => "response_time" }

rename => { "host" => "log_source" }

rename => { "http_x_forwarded_for" => "x_forwarded_for" }

# Split the following 2 fields on commas and index them as arrays

split => { "x_forwarded_for" => ", " }

split => { "response_time" => ", " }

}

alter {

# Values that do not fit the elasticsearch mapping make indexing fail, so convert them here

condrewrite => [

"x_forwarded_for", "-", "0.0.0.0",

"x_forwarded_for", "unknown", "0.0.0.0",

"response_time", "-", "0",

"real_ip", "", "0.0.0.0"

]

}

}

output {

# Index into elasticsearch, using the mapping defined in the template file

elasticsearch {

hosts => ["elasticsearch_ip:9200"]

index => "nginx-%{day}"

manage_template => true

template_overwrite => true

template_name => "mynginx"

template => "/root/logstash/mynginxtemplate.json"

codec => json

}

# Keep a copy in local files as a complement to ELK

file {

flush_interval => 600

path => '/nginxlog/%{day}/%{domain}.log'

codec => line { format => '<%{time_local}> <%{real_ip}> <%{method}> <%{url}> <%{status}> <%{request_time}> <%{response_time}> <%{body_bytes_sent}> <%{request_body}> <%{referer}> <%{x_forwarded_for}> <%{log_source}> <%{agent}>'}

}

}
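To see what the filter chain does to a single log line, the following Python sketch walks one message through roughly the same steps: escape `\x`, parse the JSON, derive `day`, split `request`, trim the referer, split the comma-separated fields, and rewrite placeholder values. It is only an approximation under stated assumptions: `parse_event` and `REFERER_RE` are hypothetical names, the referer regex is a simplified stand-in for the grok pattern, the rewrite is assumed to apply to each array element, and the syslog-derived `log_source` field is left out.

```python
import json
import re

# Simplified stand-in for the grok referer pattern: "-" or scheme://authority
REFERER_RE = re.compile(r"-|[A-Za-z][A-Za-z0-9+.\-]+://[^/?#]*")


def parse_event(message):
    # Double the backslash of \x sequences so the JSON parser accepts them
    fixed = message.replace("\\x", "\\\\x")
    event = json.loads(fixed)

    # day = first 10 characters of the ISO 8601 time, used in the index name
    event["day"] = event["time_local"][:10]

    # "GET /path HTTP/1.1" -> method and url; the grok capture keeps the
    # trailing space, which is stripped here
    m = re.search(r"(\w+) (.* )", event.pop("request", ""))
    if m:
        event["method"] = m.group(1)
        event["url"] = m.group(2).rstrip()

    m = REFERER_RE.search(event.pop("http_referer", ""))
    if m:
        event["referer"] = m.group(0)

    event["agent"] = event.pop("http_user_agent", "")

    # Comma-separated fields become arrays, then placeholders are rewritten
    xff = event.pop("http_x_forwarded_for", "-").split(", ")
    rtimes = event.pop("upstream_response_time", "-").split(", ")
    event["x_forwarded_for"] = ["0.0.0.0" if v in ("-", "unknown") else v for v in xff]
    event["response_time"] = ["0" if v == "-" else v for v in rtimes]
    if event.get("real_ip", "") == "":
        event["real_ip"] = "0.0.0.0"
    return event


if __name__ == "__main__":
    sample = (r'{"time_local":"2018-03-01T10:00:00+08:00",'
              r'"request":"GET /a?b=1 HTTP/1.1","status":200,'
              r'"http_referer":"https://www.example.com/x?y=1",'
              r'"http_x_forwarded_for":"-","upstream_response_time":"-",'
              r'"http_user_agent":"bad\x22agent","real_ip":"1.2.3.4"}')
    print(json.dumps(parse_event(sample), indent=2))
```

Running a few captured lines through a sketch like this before indexing is a cheap way to spot values that would not fit the `ip` or `half_float` fields of the mapping.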

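mynginxtemplate.json

Note: the lines starting with # are annotations for this article. JSON has no comment syntax, so remove them from the file that logstash actually loads.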

{

"template": "nginx-*",

"settings": {

"index.number_of_shards": 8,

"number_of_replicas": 0,

"analysis": {

"analyzer": {

# Custom stop words: tokens such as http are not indexed

"stop_url": {

"type": "stop",

"stopwords": ["http","https","www","com","cn","net"]

}

}

}

},

"mappings" : {

"doc" : {

"properties" : {

# index: true  analyze the field and build a search index for it

# analyzer: the analyzer to use when indexing

"referer": {

"type": "text",

"norms": false,

"index": true,

"analyzer": "stop_url"

},

"agent": {

"type": "text",

"norms": false,

"index": true

},

# ip field type

"real_ip": {

"type": "ip"

},

"x_forwarded_for": {

"type": "ip"

},

# keyword: indexed as one whole value, not analyzed into tokens

"status": {

"type": "keyword"

},

"method": {

"type": "keyword"

},

"url": {

"type": "text",

"norms": false,

"index": true,

"analyzer": "stop_url"

},

"response_time": {

"type": "half_float"

},

"request_time": {

"type": "half_float"

},

"domain": {

"type": "keyword"

},

"project": {

"type": "keyword"

},

"request_body": {

"type": "text",

"norms": false,

"index": true

},

"body_bytes_sent": {

"type": "long"

},

"log_source": {

"type": "ip"

},

"@timestamp" : {

"type" : "date",

"format" : "dateOptionalTime",

"doc_values" : true

},

"time_local": {

"enabled": false

},

"day": {

"enabled": false

}

}

}

}

}

5. elasticsearch configuration

elasticsearch.yml

cluster.name: nginxelastic

# Node name, different on every node

node.name: node1

bootstrap.system_call_filter: false

bootstrap.memory_lock: true

# IP of this node

network.host: 10.10.10.1

http.port: 9200

transport.tcp.port: 9300

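# Unicast discovery: list the IP and port of the other nodes in the cluster (host1:port1,host2:port2). This example has only 2 nodes, so only the other node's IP and port are listed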

discovery.zen.ping.unicast.hosts: ["other_node_ip:9300"]

# Minimum number of master-eligible nodes a node must be able to see; (N/2)+1 is recommended

discovery.zen.minimum_master_nodes: 2

http.cors.enabled: true

http.cors.allow-origin: /.*/

path.data: /elastic/data

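path.logs: /elastic/logs

# JVM initial and maximum heap size (these two flags go in jvm.options, not elasticsearch.yml); half of the server's memory is the usual recommendation

-Xms8g

-Xmx8g

Delete historical data automatically from crontab with del_index.sh

#!/bin/bash

# Name of the index created 30 days ago, in the form nginx-YYYY-MM-DD (needs GNU date)

DELINDEX="nginx-$(date -d "-30 day" +%Y-%m-%d)"

curl -H "Content-Type: application/json" -XDELETE "http://10.10.10.1:9200/${DELINDEX}"

6. kibana configuration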

kibana.yml

server.port: 80

server.host: 10.10.10.3

elasticsearch.url: "http://10.10.10.1:9200"

elasticsearch.username: "kibana"

elasticsearch.password: "mypwd"

UI settings:

Management -> Advanced Settings:

dateFormat (date format): YYYYMMDD HH:mm:ss

defaultColumns (default columns): method, url, status, request_time, real_ip

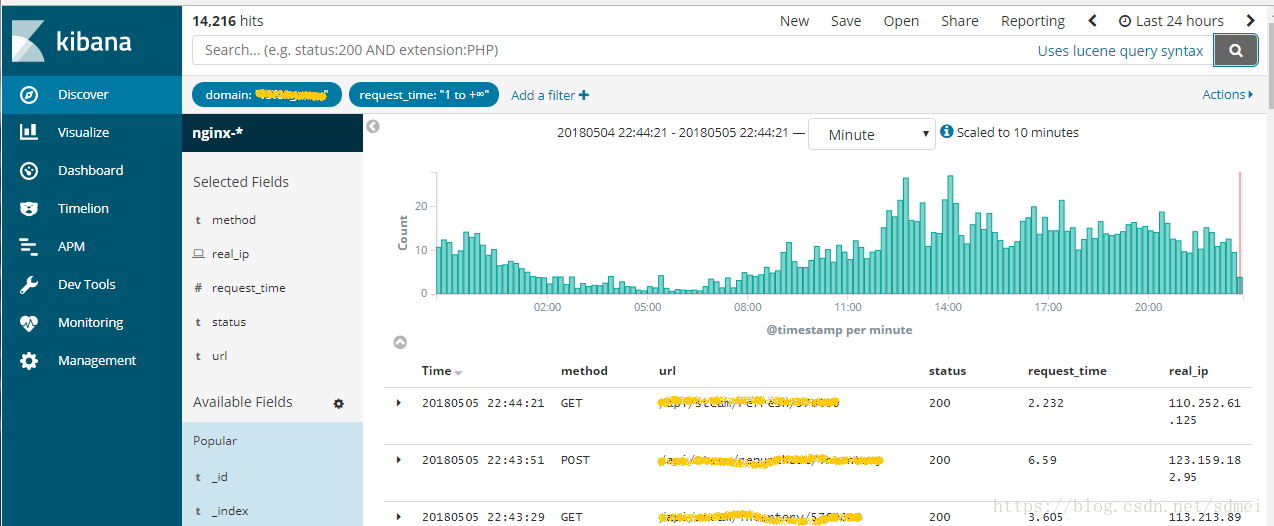

查询某域名下耗时超过1秒的请求

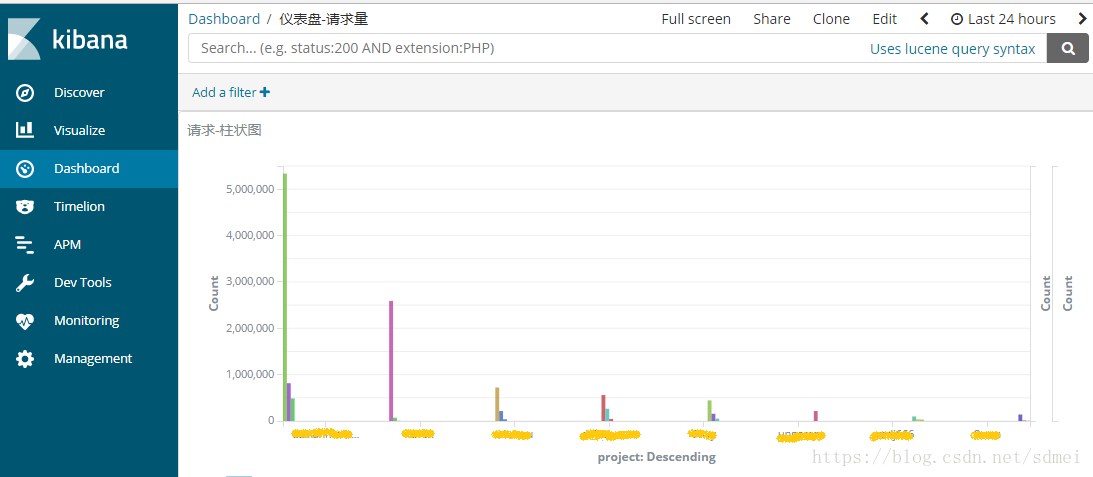

查询过去24小时各域名请流量柱状图
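The two Kibana views above can also be expressed directly as Elasticsearch request bodies, which is handy for scripting. The sketch below only builds the JSON; the function names, the sample domain value `example_www`, and the 1-hour bucket size are assumptions, and the `interval` key follows the 6.x form of `date_histogram`.

```python
import json


def slow_requests_query(domain, min_seconds=1.0):
    """Requests for one domain whose request_time exceeds min_seconds."""
    return {
        "query": {
            "bool": {
                "filter": [
                    # domain is a keyword field, so an exact term match works
                    {"term": {"domain": domain}},
                    {"range": {"request_time": {"gt": min_seconds}}},
                ]
            }
        },
        "sort": [{"@timestamp": {"order": "desc"}}],
    }


def requests_per_domain_last_24h(top_n=10):
    """Hourly request counts per domain over the past 24 hours."""
    return {
        "size": 0,  # only the aggregation buckets are needed, not the hits
        "query": {"range": {"@timestamp": {"gte": "now-24h"}}},
        "aggs": {
            "per_domain": {
                "terms": {"field": "domain", "size": top_n},
                "aggs": {
                    "per_hour": {
                        "date_histogram": {"field": "@timestamp", "interval": "1h"}
                    }
                },
            }
        },
    }


if __name__ == "__main__":
    # The domain field is logged as level1_level2, e.g. example_www
    print(json.dumps(slow_requests_query("example_www"), indent=2))
    print(json.dumps(requests_per_domain_last_24h(), indent=2))
```

Either body could be sent to the `_search` endpoint of the `nginx-*` indices. In the Kibana search bar, the first query corresponds roughly to `domain:example_www AND request_time:>1`.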

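7. elastalert configuration

Elastic's own Watcher can monitor the logs collected by ELK in real time, but it is a commercial feature. If you want something free, elastalert is a good option.

https://github.com/Yelp/elastalert

Commonly used elastalert rule types include frequency and spike (http://elastalert.readthedocs.io/en/latest/ruletypes.html#rule-types)

- frequency: monitors how often a given event occurs, e.g. one IP making more than 600 requests per minute, a domain producing more than 30 requests per minute that take longer than 3 seconds, or a domain returning more than 10 500-status responses per minute.

- spike: monitors how much the event rate changes, e.g. requests in the last hour doubled compared with the hour before, or requests in the last day dropped by 50% compared with the previous day.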
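The idea behind the two rule types can be sketched in a few lines of Python. This is not elastalert's implementation, only an illustration of the logic described above: `FrequencyRule` and `is_spike` are hypothetical names, timestamps are plain seconds, and the spike check covers only the "increase" direction.

```python
from collections import defaultdict, deque


class FrequencyRule:
    """Fire when one key sees num_events events within timeframe seconds."""

    def __init__(self, num_events, timeframe_seconds):
        self.num_events = num_events
        self.timeframe = timeframe_seconds
        self.windows = defaultdict(deque)  # one sliding window per key

    def add_event(self, key, timestamp):
        window = self.windows[key]
        window.append(timestamp)
        # Drop events that have fallen out of the sliding window
        while window and timestamp - window[0] > self.timeframe:
            window.popleft()
        if len(window) >= self.num_events:
            window.clear()  # start counting afresh after an alert
            return True
        return False


def is_spike(current_count, reference_count, spike_height):
    """True when the current window is at least spike_height times the reference window."""
    return reference_count > 0 and current_count >= spike_height * reference_count


if __name__ == "__main__":
    # 10 events per domain within 60 seconds, like the 500-status rule in this section
    rule = FrequencyRule(num_events=10, timeframe_seconds=60)
    for second in range(12):
        if rule.add_event("example_www", second):
            print("alert for example_www at t =", second)
    print("spike:", is_spike(current_count=200, reference_count=100, spike_height=2))
```

Keeping one window per key is what lets a setting such as `query_key: domain` raise alerts for each domain independently instead of for the total.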

The example here uses a frequency rule to monitor 500-status errors in real time.

config.yaml

# Elasticsearch address

es_host: 10.10.10.1

es_port: 9200

freq-500.yaml

# The file name is up to you; pick something easy to tell apart

es_host: 10.10.10.1

es_port: 9200

name: elk-nginx-freq-500

type: frequency

index: nginx-*

# Alert when 10 or more events occur within the timeframe

num_events: 10

# Timeframe: 1 minute

timeframe:

  minutes: 1

# Query conditions

# status in (500,501,502,503,504)

# domain does not contain "test", i.e. events on test domains are ignored

filter:

- bool:

    must:

    - terms:

        status: ["500","501","502","503","504"]

    must_not:

    - wildcard:

        domain: "*test*"

# Count num_events separately for each domain, for at most 10 domains; an alert fires only when a single domain reaches 10 events

use_terms_query: true

doc_type: doc

terms_size: 10

query_key: domain

# Collect the top 5 values of domain and of status; the alert email then lists the top 5 domains and top 5 statuses

top_count_keys:

- domain

- status

top_count_number: 5

raw_count_keys: false

# Do not repeat the same alert within 10 minutes

realert:

  minutes: 10

# Alert through both command (SMS) and email

alert:

- command

- email

# A home-grown command that calls the SMS gateway to send a text message; the message is simple and just says which domain triggered the 500 alert

new_style_string_format: true

command: ["/root/elastalert-0.1.29/myalert/sms.sh", "15800000000", "elk nginx warning - freq 500 exceed, domain: {match[domain]}"]

# Email alerting as provided by elastalert

# The mail user name and password are configured in smtp_auth_file.yaml

smtp_host: smtp.exmail.qq.com

smtp_port: 465

smtp_ssl: true

from_addr: "[email protected]"

smtp_auth_file: "/root/elastalert-0.1.29/myalert/smtp_auth_file.yaml"

email:

- "[email protected]"

alert_subject: "elk nginx warning - freq 500 exceed, domain: {0}"

alert_subject_args:

- domain

Run elastalert with this rule:

python -m elastalert.elastalert --verbose --rule freq-500.yaml >> freq-500.log 2>&1 & disown

Alert email