Implementing word frequency counting, exporting it as a jar, and running it in the terminal

Create a folder for the word files:

```shell
mkdir wordcount
```

Enter the folder (`cd wordcount`):

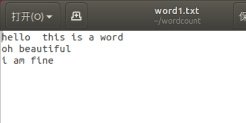

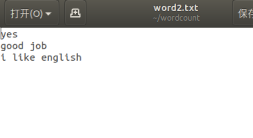

Create two word files:

```shell
vim word1.txt
vim word2.txt
```
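The post creates word1.txt and word2.txt in vim but does not show what goes into them. If you would rather generate the inputs from code, here is a minimal Java sketch. `CreateWordFiles` is a hypothetical name, the sample lines are made-up placeholders, and running it overwrites existing files with the same names.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.Arrays;
import java.util.List;

public class CreateWordFiles {

    // Hypothetical sample contents: the post does not show what was typed into vim
    static final List<String> WORD1 = Arrays.asList("hello hadoop", "hello world");
    static final List<String> WORD2 = Arrays.asList("hadoop mapreduce", "hello mapreduce");

    /** Creates {@code dir} if needed and writes word1.txt and word2.txt into it (UTF-8). */
    public static void createSampleFiles(Path dir) {
        try {
            Files.createDirectories(dir);
            Files.write(dir.resolve("word1.txt"), WORD1);
            Files.write(dir.resolve("word2.txt"), WORD2);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        // ~/wordcount, i.e. the folder created by `mkdir wordcount` in the home directory
        Path dir = Paths.get(System.getProperty("user.home"), "wordcount");
        createSampleFiles(dir);
        System.out.println("Wrote word1.txt and word2.txt to " + dir);
    }
}
```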

Open Eclipse and write the program:

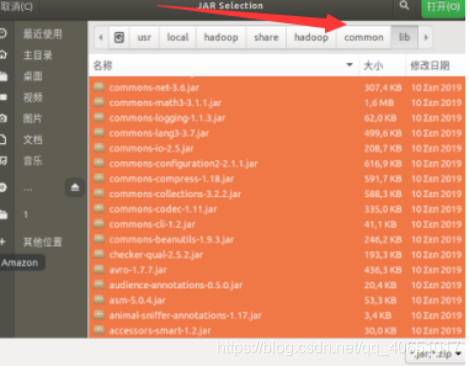

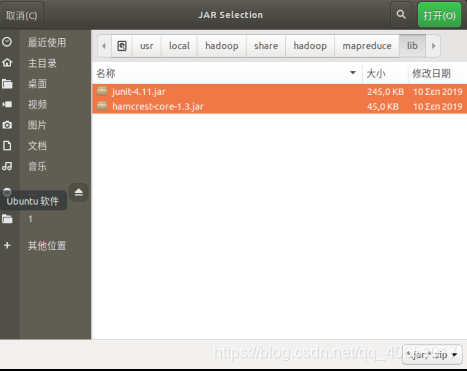

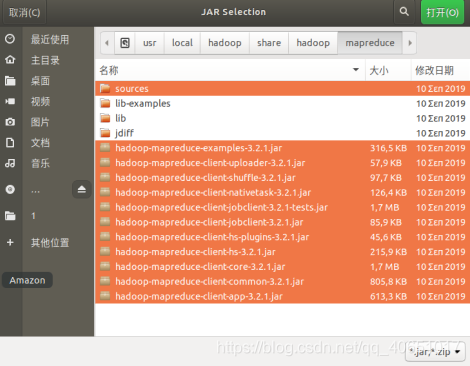

1. Import the required jars

Add all jars under this path to the build path (the same applies to the other paths below):

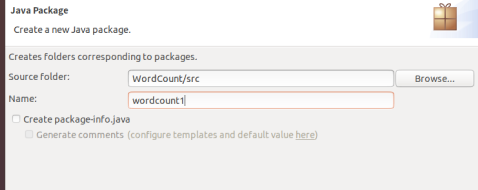
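The jar directories themselves are only visible in the screenshot. One way to confirm that the build path is complete is to try loading the Hadoop classes that WordCount1 imports. The Java sketch below (`ClasspathCheck` is a hypothetical name) does this with `Class.forName` without initializing the classes; run it inside the same Eclipse project.

```java
import java.util.ArrayList;
import java.util.List;

public class ClasspathCheck {

    // Hadoop classes imported by WordCount1; a missing one means a jar was not added
    static final String[] REQUIRED = {
        "org.apache.hadoop.conf.Configuration",
        "org.apache.hadoop.fs.Path",
        "org.apache.hadoop.io.IntWritable",
        "org.apache.hadoop.io.Text",
        "org.apache.hadoop.mapreduce.Job",
        "org.apache.hadoop.mapreduce.Mapper",
        "org.apache.hadoop.mapreduce.Reducer",
        "org.apache.hadoop.mapreduce.lib.input.FileInputFormat",
        "org.apache.hadoop.mapreduce.lib.output.FileOutputFormat",
        "org.apache.hadoop.util.GenericOptionsParser"
    };

    /** Returns the names from {@code classNames} that cannot be found on the classpath. */
    public static List<String> missingClasses(String... classNames) {
        List<String> missing = new ArrayList<>();
        for (String name : classNames) {
            try {
                // initialize=false: only check that the class exists, skip static initializers
                Class.forName(name, false, ClasspathCheck.class.getClassLoader());
            } catch (ClassNotFoundException e) {
                missing.add(name);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        List<String> missing = missingClasses(REQUIRED);
        if (missing.isEmpty()) {
            System.out.println("All required Hadoop classes are on the build path.");
        } else {
            System.out.println("Missing classes (add the corresponding jars): " + missing);
        }
    }
}
```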

2. Create a package

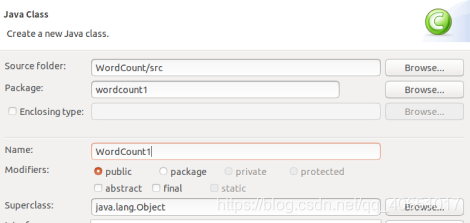

3. Create a class and write the following code:

```java
package wordcount1;

import java.io.IOException;
import java.util.StringTokenizer;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.util.GenericOptionsParser;

public class WordCount1 {

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        // Strip generic Hadoop options (e.g. -D key=value) and keep the application arguments
        String[] otherArgs = new GenericOptionsParser(conf, args).getRemainingArgs();
        if (otherArgs.length < 2) {
            System.err.println("Usage: wordcount <in> [<in>...] <out>");
            System.exit(2);
        }

        Job job = Job.getInstance(conf, "word count");
        job.setJarByClass(WordCount1.class);
        job.setMapperClass(TokenizerMapper.class);
        // Summing is associative and commutative, so the reducer can also serve as the combiner
        job.setCombinerClass(IntSumReducer.class);
        job.setReducerClass(IntSumReducer.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);

        // Every argument except the last is an input path; the last is the output directory
        for (int i = 0; i < otherArgs.length - 1; ++i) {
            FileInputFormat.addInputPath(job, new Path(otherArgs[i]));
        }
        FileOutputFormat.setOutputPath(job, new Path(otherArgs[otherArgs.length - 1]));

        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }

    /** Emits (word, 1) for every whitespace-separated token in a line of input. */
    public static class TokenizerMapper extends Mapper<Object, Text, Text, IntWritable> {
        private static final IntWritable ONE = new IntWritable(1);
        private final Text word = new Text();

        @Override
        public void map(Object key, Text value, Context context)
                throws IOException, InterruptedException {
            StringTokenizer itr = new StringTokenizer(value.toString());
            while (itr.hasMoreTokens()) {
                word.set(itr.nextToken());
                context.write(word, ONE);
            }
        }
    }

    /** Sums all counts received for the same word. */
    public static class IntSumReducer extends Reducer<Text, IntWritable, Text, IntWritable> {
        private final IntWritable result = new IntWritable();

        @Override
        public void reduce(Text key, Iterable<IntWritable> values, Context context)
                throws IOException, InterruptedException {
            int sum = 0;
            for (IntWritable val : values) {
                sum += val.get();
            }
            result.set(sum);
            context.write(key, result);
        }
    }
}
```

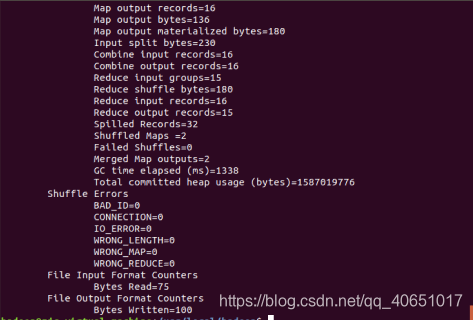
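To see what the job computes without a cluster, the plain-Java sketch below (no Hadoop dependency; `LocalWordCountSimulation` is a hypothetical name) imitates the three phases in memory: `map` splits lines with `StringTokenizer` just like TokenizerMapper, `shuffle` groups the values by word, and `reduce` sums them like IntSumReducer. It is a simplified model, not how Hadoop actually schedules tasks, and it leaves out the combiner: because addition is associative and commutative, running IntSumReducer on each map task's output only shrinks the data sent to the reducers and does not change the final counts.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

public class LocalWordCountSimulation {

    /** Map phase: like TokenizerMapper, emit (token, 1) for each whitespace-separated token. */
    static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> out = new ArrayList<>();
        StringTokenizer itr = new StringTokenizer(line);
        while (itr.hasMoreTokens()) {
            out.add(new SimpleEntry<>(itr.nextToken(), 1));
        }
        return out;
    }

    /** Shuffle/sort phase: group values by key; the TreeMap keeps keys in sorted order. */
    static TreeMap<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        TreeMap<String, List<Integer>> groups = new TreeMap<>();
        for (Map.Entry<String, Integer> p : pairs) {
            groups.computeIfAbsent(p.getKey(), k -> new ArrayList<>()).add(p.getValue());
        }
        return groups;
    }

    /** Reduce phase: like IntSumReducer, sum all values of one key. */
    static int reduce(List<Integer> values) {
        int sum = 0;
        for (int v : values) {
            sum += v;
        }
        return sum;
    }

    /** Runs map, shuffle and reduce over the given lines and returns word -> count. */
    public static TreeMap<String, Integer> wordCount(List<String> lines) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String line : lines) {
            pairs.addAll(map(line));
        }
        TreeMap<String, Integer> result = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> group : shuffle(pairs).entrySet()) {
            result.put(group.getKey(), reduce(group.getValue()));
        }
        return result;
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList("hello hadoop", "hello world");
        // Same "word<TAB>count" layout as the job's output files
        wordCount(lines).forEach((word, count) -> System.out.println(word + "\t" + count));
    }
}
```

For the two sample lines in `main` it prints `hadoop 1`, `hello 2` and `world 1` (tab-separated), one per line. For ASCII words the TreeMap order matches the byte order Hadoop uses to sort Text keys.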

Run result:

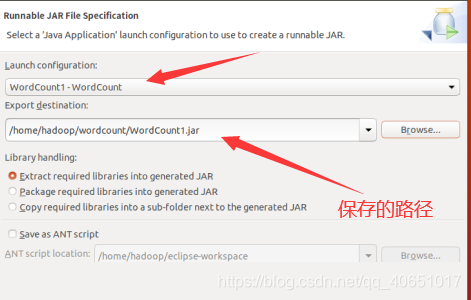

Export the project as a jar (the commands below expect it at ~/wordcount/WordCount1.jar)
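`hadoop jar` reads the Main-Class entry from the jar's manifest. If the entry is missing, it treats the first argument after the jar as the main class name, so with the command used later in this post `/user/hadoop/input1` would be taken as a class name and the run would fail. When exporting from Eclipse, choose wordcount1.WordCount1 as the main class in the manifest settings of the JAR export wizard. The Java sketch below (`JarMainClassCheck` is a hypothetical name) reads the manifest with `java.util.jar.JarFile` so you can check the exported jar before running it.

```java
import java.io.File;
import java.io.IOException;
import java.util.jar.Attributes;
import java.util.jar.JarFile;
import java.util.jar.Manifest;

public class JarMainClassCheck {

    /** Returns the Main-Class manifest attribute of {@code jar}, or null if it is missing. */
    public static String mainClassOf(File jar) throws IOException {
        try (JarFile jarFile = new JarFile(jar)) {
            Manifest manifest = jarFile.getManifest();
            return manifest == null ? null
                    : manifest.getMainAttributes().getValue(Attributes.Name.MAIN_CLASS);
        }
    }

    public static void main(String[] args) throws IOException {
        // Defaults to the location used by the `hadoop jar` command in this post
        File jar = args.length > 0 ? new File(args[0])
                : new File(System.getProperty("user.home"), "wordcount/WordCount1.jar");
        String mainClass = mainClassOf(jar);
        if (mainClass == null) {
            System.out.println("No Main-Class entry: re-export and choose wordcount1.WordCount1");
        } else {
            System.out.println("Main-Class: " + mainClass);
        }
    }
}
```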

Start Hadoop (HDFS):

```shell
cd /usr/local/hadoop
./sbin/start-dfs.sh
```

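Create the input1 directory on HDFS (`-p` also creates the parent /user/hadoop if it does not exist yet):

```shell
hdfs dfs -mkdir -p /user/hadoop/input1
```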

Upload word1.txt and word2.txt into input1:

```shell
hdfs dfs -put ~/wordcount/word1.txt /user/hadoop/input1
hdfs dfs -put ~/wordcount/word2.txt /user/hadoop/input1
```

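Run the word-count jar. The output directory /user/hadoop/output1 must not exist yet; if it does, the job fails because FileOutputFormat refuses to overwrite an existing output directory.

```shell
hadoop jar ~/wordcount/WordCount1.jar /user/hadoop/input1 /user/hadoop/output1
```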

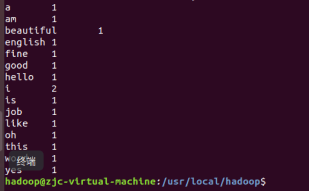
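WordCount1.main first lets GenericOptionsParser remove generic Hadoop options such as `-D key=value`, then treats every remaining argument except the last as an input path and the last one as the output directory. The pure-Java sketch below (`WordCountArgs` is a hypothetical name) restates that rule to show how the command above maps onto the code: one input directory, whose files word1.txt and word2.txt are both read, and one output directory. Passing several input paths would work the same way.

```java
import java.util.Arrays;
import java.util.List;

public class WordCountArgs {
    final List<String> inputs;
    final String output;

    private WordCountArgs(List<String> inputs, String output) {
        this.inputs = inputs;
        this.output = output;
    }

    /** Mirrors WordCount1.main: all arguments but the last are inputs, the last is the output. */
    static WordCountArgs parse(String... remainingArgs) {
        if (remainingArgs.length < 2) {
            // WordCount1 prints this message and exits with status 2 instead of throwing
            throw new IllegalArgumentException("Usage: wordcount <in> [<in>...] <out>");
        }
        int last = remainingArgs.length - 1;
        List<String> inputs = Arrays.asList(Arrays.copyOfRange(remainingArgs, 0, last));
        return new WordCountArgs(inputs, remainingArgs[last]);
    }

    public static void main(String[] args) {
        WordCountArgs parsed = parse("/user/hadoop/input1", "/user/hadoop/output1");
        System.out.println("inputs=" + parsed.inputs + " output=" + parsed.output);
    }
}
```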

List the word counts in output1 from the terminal:

```shell
hdfs dfs -cat /user/hadoop/output1/*
```
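With the default single reducer, the results land in output1/part-r-00000 next to an empty _SUCCESS marker, one `word<TAB>count` line per word, sorted by word. To rank words by frequency instead, the printed lines can be post-processed. A minimal Java sketch follows; `WordCountOutputParser` is a hypothetical name and the lines in `main` are made-up examples.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;

public class WordCountOutputParser {

    /** Parses "word<TAB>count" lines and sorts them by count (descending), then by word. */
    public static List<Map.Entry<String, Integer>> parseAndRank(List<String> lines) {
        List<Map.Entry<String, Integer>> entries = new ArrayList<>();
        for (String line : lines) {
            int tab = line.lastIndexOf('\t');
            if (tab < 0) {
                continue; // skip blank or malformed lines
            }
            String word = line.substring(0, tab);
            int count = Integer.parseInt(line.substring(tab + 1).trim());
            entries.add(new SimpleEntry<>(word, count));
        }
        entries.sort((a, b) -> {
            int byCount = Integer.compare(b.getValue(), a.getValue()); // higher counts first
            return byCount != 0 ? byCount : a.getKey().compareTo(b.getKey());
        });
        return entries;
    }

    public static void main(String[] args) {
        // Made-up lines in the format printed by `hdfs dfs -cat /user/hadoop/output1/*`
        List<String> lines = Arrays.asList("hadoop\t1", "hello\t2", "world\t1");
        for (Map.Entry<String, Integer> e : parseAndRank(lines)) {
            System.out.println(e.getKey() + " " + e.getValue());
        }
    }
}
```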

That completes the task. Stop Hadoop (run from /usr/local/hadoop):

```shell
./sbin/stop-dfs.sh
```

That's all for this post.