Copyright notice: this is the blogger's original article and may not be reproduced without the blogger's permission. https://blog.csdn.net/weijianpeng2013_2015/article/details/71511893

Given a child-parent table, output the corresponding grandchild-grandparent table. The input is:

child parent

Tom Lucy

Tom Jack

Jone Lucy

Lucy Mary

Lucy Ben

Jack Alice

Jack Jesse

Terry Alice

Terry Jesse

Philip Terry

Philip Alma

Mark Terry

Mark Alma
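Before turning to MapReduce, it may help to see the same self-join done in memory. The following is a minimal sketch, not the post's method: the class name `SelfJoinSketch` and the method `grandPairs` are hypothetical, and it assumes the whole table fits in a `HashMap`. It indexes the table by child and then, for every (child, parent) row, looks up the parents of that parent.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper class for illustration; not part of the original post.
class SelfJoinSketch {

    // Each row is {child, parent}. Returns "grandchild grandparent" strings.
    static List<String> grandPairs(String[][] rows) {
        // Index the table by child: child -> list of that child's parents.
        Map<String, List<String>> parentsOf = new HashMap<>();
        for (String[] row : rows) {
            parentsOf.computeIfAbsent(row[0], k -> new ArrayList<>()).add(row[1]);
        }
        List<String> result = new ArrayList<>();
        // Join the table with itself: the parent of one row is the child of another.
        for (String[] row : rows) {
            List<String> grandparents = parentsOf.get(row[1]);
            if (grandparents == null) {
                continue; // this parent has no recorded parents
            }
            for (String gp : grandparents) {
                result.add(row[0] + " " + gp);
            }
        }
        return result;
    }

    public static void main(String[] args) {
        // The first rows of the sample table that involve Tom and Lucy.
        String[][] rows = {
            {"Tom", "Lucy"}, {"Tom", "Jack"}, {"Lucy", "Mary"}, {"Lucy", "Ben"}
        };
        for (String pair : grandPairs(rows)) {
            System.out.println(pair);
        }
    }
}
```

On the four rows in `main`, this prints `Tom Mary` and `Tom Ben`. The MapReduce version has to reach the same result without a shared in-memory index, which is why each row is sent to the reducers under two different keys.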

Code:

import java.io.IOException;

import java.util.Iterator;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class STjoin {

public static int time=0;

public static class Map extends Mapper<LongWritable, Text, Text, Text>{

@Override

protected void map(LongWritable key, Text value,Context context)

throws IOException, InterruptedException {

String childname=new String();

String parentname=new String();

String relationtype=new String();

String line=value.toString();

// Split at the first space; skip blank or malformed lines instead of throwing.

int i=line.indexOf(' ');

if(i<0){

return;

}

String[] values={line.substring(0, i),line.substring(i+1)};

if(values[0].compareTo("child")!=0){

childname=values[0];

parentname=values[1];

relationtype="1";// flag that tells the left table from the right table; "1" marks the left table (keyed by parent)

context.write(new Text(values[1]), new Text(relationtype+"+"+childname+"+"+parentname));

// right table (keyed by child)

relationtype="2";

context.write(new Text(values[0]), new Text(relationtype+"+"+childname+"+"+parentname));

}

}

}

public static class Reduce extends Reducer<Text, Text, Text, Text>{

@Override

protected void reduce(Text key, Iterable<Text> values,Context context)

throws IOException, InterruptedException {

if(time==0){

// write the header row once

context.write(new Text("grandchild"), new Text("grandparent"));

time++;

}

// Lists grow as needed; fixed arrays of length 10 would overflow on larger inputs.

java.util.List<String> grandchild=new java.util.ArrayList<String>();

java.util.List<String> grandparent=new java.util.ArrayList<String>();

for(Text value:values){

String record=value.toString();

// Each record has the form relationtype+childname+parentname.

String[] fields=record.split("\\+",3);

if(fields.length!=3)

continue;

String childname=fields[1];

String parentname=fields[2];

System.out.println("childname="+childname+" parentname="+parentname);

// Left table: take the child and store it as a grandchild.

if(fields[0].equals("1")){

grandchild.add(childname);

}else{// Right table: take the parent and store it as a grandparent.

grandparent.add(parentname);

}

}

// Cartesian product of the grandchild and grandparent lists.

if(!grandparent.isEmpty()&&!grandchild.isEmpty()){

System.out.println("******Join succeeded************");

for(String gc:grandchild){

for(String gp:grandparent){

context.write(new Text(gc), new Text(gp));

}

}

}

}

}

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

Configuration conf=new Configuration();

Job job=Job.getInstance(conf, "STJOIN");

job.setJarByClass(STjoin.class);

job.setMapperClass(Map.class);

job.setReducerClass(Reduce.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(Text.class);

FileInputFormat.addInputPath(job, new Path("/input/st"));

FileOutputFormat.setOutputPath(job, new Path("/output/st"));

System.exit(job.waitForCompletion(true) ? 0:1);

}

}
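The data flow of the job above can be traced without a cluster. The following is a rough sketch in plain Java, under some assumptions: the class `LocalStJoinSketch` and its methods are hypothetical names, a `TreeMap` stands in for Hadoop's shuffle and sort, and the header row is left out of the output. It tags each row as `1+child+parent` under the parent key and `2+child+parent` under the child key, groups by key, and crosses the two sides inside each group.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical stand-alone simulation; names are not from the original post.
class LocalStJoinSketch {

    // Map step: emit each child-parent row twice, as {key, taggedValue} pairs.
    static List<String[]> mapLine(String line) {
        List<String[]> out = new ArrayList<>();
        String[] f = line.trim().split("\\s+");
        if (f.length != 2 || f[0].equals("child")) {
            return out; // skip blank lines, malformed rows, and the header
        }
        String child = f[0];
        String parent = f[1];
        out.add(new String[] {parent, "1+" + child + "+" + parent}); // left table
        out.add(new String[] {child, "2+" + child + "+" + parent});  // right table
        return out;
    }

    // Reduce step for one key: cross the key's children with the key's parents.
    static List<String> reduceKey(List<String> taggedValues) {
        List<String> grandchildren = new ArrayList<>();
        List<String> grandparents = new ArrayList<>();
        for (String record : taggedValues) {
            String[] p = record.split("\\+", 3); // {tag, child, parent}
            if (p.length != 3) {
                continue;
            }
            if (p[0].equals("1")) {
                grandchildren.add(p[1]);
            } else {
                grandparents.add(p[2]);
            }
        }
        List<String> out = new ArrayList<>();
        for (String gc : grandchildren) {
            for (String gp : grandparents) {
                out.add(gc + "\t" + gp);
            }
        }
        return out;
    }

    // Shuffle step: group the mapper output by key, with keys in sorted order.
    static List<String> run(List<String> lines) {
        Map<String, List<String>> groups = new TreeMap<>();
        for (String line : lines) {
            for (String[] kv : mapLine(line)) {
                groups.computeIfAbsent(kv[0], k -> new ArrayList<>()).add(kv[1]);
            }
        }
        List<String> out = new ArrayList<>();
        for (List<String> values : groups.values()) {
            out.addAll(reduceKey(values));
        }
        return out;
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList(
            "child parent", "Tom Lucy", "Jone Lucy", "Lucy Mary", "Lucy Ben");
        for (String pair : run(lines)) {
            System.out.println(pair);
        }
    }
}
```

With the five lines in `main`, only the key `Lucy` receives records from both sides (Tom and Jone as children, Mary and Ben as parents), so the sketch prints four pairs: Tom Mary, Tom Ben, Jone Mary, Jone Ben. In a real job the order of values within a key is not guaranteed, so the order of the output pairs may differ.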

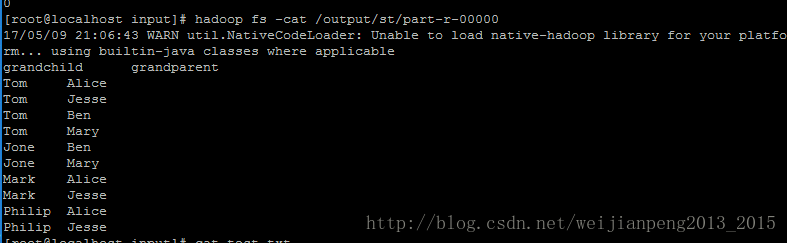

Result: