1. Generating the dataset

generator.py

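# Import modules and generate a simulated dataset
import numpy as np
import matplotlib.pyplot as plt

seed = 2

def generator():
    # Create a random number generator from the seed
    rdm = np.random.RandomState(seed)
    # Draw a 300x2 matrix of standard-normal values: 300 points (x0, x1) used as the input dataset
    X = rdm.randn(300, 2)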

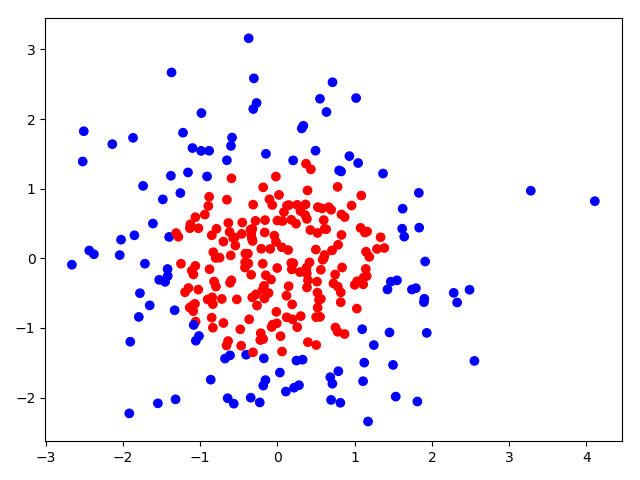

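    # For each row of X, set the label to 1 if the sum of the squared coordinates is less than 2, otherwise 0
    # Y_ holds the labels (ground truth) for the input dataset
    Y_ = [int(x0*x0+x1*x1 < 2) for (x0, x1) in X]
    # Map each label in Y_ to a color ('red' for 1, 'blue' for 0) to make the plot easier to read
    Y_c = [['red' if y else 'blue'] for y in Y_]
    # Reshape X and Y_: -1 lets NumPy infer the number of rows from the number of columns,
    # and the second argument is the number of columns (2 for X, 1 for Y_)
    X = np.vstack(X).reshape(-1, 2)
    Y_ = np.vstack(Y_).reshape(-1, 1)
    return X, Y_, Y_c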

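if __name__ == '__main__':
    X, Y, Y_c = generator()
    X = np.array(X)
    Y = np.array(Y)
    Y_c = np.array(Y_c)
    print("X:", X.shape)
    print("Y:", Y.shape)
    print("Y_c:", Y_c.shape)
    print("show_over")
    # np.squeeze removes length-1 axes from an array's shape
    plt.scatter(X[:, 0], X[:, 1], c=np.squeeze(Y_c))
    plt.show()

Running the script prints the shapes of X, Y, and Y_c, then show_over, and finally displays a scatter plot of the points colored by label.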
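The shape handling in generator() (the labeling rule, reshape(-1, 1), and np.squeeze) can be checked on a handful of points. The following is a minimal sketch, assuming four hand-picked points (hypothetical values, not taken from the original dataset):

```python
import numpy as np

# Hypothetical toy data: four points instead of the 300 used in generator.py.
points = np.array([[0.5, 0.5], [2.0, 0.0], [-1.0, 0.9], [1.5, -1.5]])

# Same labeling rule as generator(): 1 if x0^2 + x1^2 < 2, otherwise 0.
labels = [int(x0 * x0 + x1 * x1 < 2) for (x0, x1) in points]

# reshape(-1, 1): one column, with the row count inferred from the data.
label_column = np.vstack(labels).reshape(-1, 1)

# One single-element list per point, as in generator().
colors = np.array([['red' if y else 'blue'] for y in labels])

# squeeze() drops the length-1 axis, giving a flat array of color names.
flat_colors = np.squeeze(colors)

print(labels)              # [1, 0, 1, 0]
print(label_column.shape)  # (4, 1)
print(colors.shape)        # (4, 1)
print(flat_colors.shape)   # (4,)
```

The flat array is what gets passed as the c argument of plt.scatter in the script above.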

2. Forward propagation

Forward propagation builds the network and defines its structure (forward.py).

# coding:utf-8
# Import modules (this script uses the TensorFlow 1.x API)
import tensorflow as tf
from tensorflowuse.formalsteps.generator import generator
import numpy as np

# Define the network's input, parameters, and output, and the forward pass

# Create a weight W and add its L2 regularization loss to the 'losses' collection
def get_weight(shape, regularizer):
    W = tf.Variable(tf.random_normal(shape), dtype=tf.float32)
    tf.add_to_collection('losses', tf.contrib.layers.l2_regularizer(regularizer)(W))
    return W

# Create a bias b, initialized to 0.01
def get_bias(shape):
    b = tf.Variable(tf.constant(0.01, shape=shape))
    return b

def forward(X, regularizer):
    W1 = get_weight([2, 11], regularizer)
    b1 = get_bias([11])
    y1 = tf.nn.relu(tf.matmul(X, W1) + b1)
    W2 = get_weight([11, 1], regularizer)
    b2 = get_bias([1])
    y = tf.matmul(y1, W2) + b2  # no activation on the output layer
    return y

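if __name__ == '__main__':
    X, Y, Y_c = generator()
    X = np.array(X)
    Y = np.array(Y)
    Y_c = np.array(Y_c)
    print("X:", X.shape)
    print("Y:", Y.shape)
    print("Y_c:", Y_c.shape)

Output:

X: (300, 2)
Y: (300, 1)
Y_c: (300, 1)

The function forward() defines the network structure: it builds the complete network from input to output and so implements the forward pass. Its parameter X is the input, regularizer is the regularization weight, and the return value y is the prediction or classification result.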
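To make the layer shapes concrete, the same two-layer computation can be written in plain NumPy. This is only a sketch under stated assumptions: forward_numpy is a hypothetical helper that is not part of forward.py, the weights are drawn with an arbitrary seed, and nothing here is trained.

```python
import numpy as np

def forward_numpy(X, W1, b1, W2, b2):
    """NumPy version of the computation in forward(): ReLU hidden layer, linear output."""
    hidden = np.maximum(0.0, X @ W1 + b1)  # shape (n, 11)
    return hidden @ W2 + b2                # shape (n, 1), no activation

rng = np.random.RandomState(0)  # seed chosen arbitrarily for this sketch
W1 = rng.randn(2, 11)           # same shape as get_weight([2, 11], ...)
b1 = np.full(11, 0.01)          # same initial value as get_bias([11])
W2 = rng.randn(11, 1)           # same shape as get_weight([11, 1], ...)
b2 = np.full(1, 0.01)           # same initial value as get_bias([1])

X = rng.randn(5, 2)             # five hypothetical input points
y = forward_numpy(X, W1, b1, W2, b2)
print(y.shape)                  # (5, 1)
```

Each input row of two coordinates is mapped to 11 hidden values and then to a single output, which is why the labels are reshaped to one column.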

3. Backpropagation

Backpropagation trains the network and optimizes its parameters (backward.py).
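backward.py builds its learning rate with tf.train.exponential_decay and staircase=True. As a rough illustration, the schedule that call describes is base_lr * decay_rate ** floor(global_step / decay_steps), which can be sketched in plain Python. The function below is a hypothetical stand-in written for this note, not the TensorFlow implementation:

```python
import math

def exponential_decay(base_lr, global_step, decay_steps, decay_rate, staircase=True):
    """Sketch of the exponential decay formula with an optional staircase."""
    exponent = global_step / decay_steps
    if staircase:
        exponent = math.floor(exponent)  # the rate drops in discrete steps
    return base_lr * decay_rate ** exponent

decay_steps = 300 / 32  # 9.375 steps, roughly one pass over the 300 samples
for step in (0, 9, 10, 19):
    print(step, exponential_decay(0.01, step, decay_steps, 0.99))
```

The schedule only advances when global_step is incremented during training, which in TensorFlow 1.x happens when global_step is passed to the optimizer's minimize() call.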

import tensorflow as tf
import numpy as np
import matplotlib.pyplot as plt
from tensorflowuse.formalsteps import generator, forward

STEPS = 40000
BATCH_SIZE = 32
# LEARNING_RATE_BASE = 0.001
# LEARNING_RATE_BASE = 1.0
LEARNING_RATE_BASE = 0.01
LEARNING_RATE_DECAY = 0.99
REGULARIZER = 0.01
# After 39800 steps, loss_v is 0.089410:

# Backpropagation trains the network and optimizes its parameters
def backward():
    x = tf.placeholder(tf.float32, shape=(None, 2))
    y_ = tf.placeholder(tf.float32, shape=(None, 1))
    X, Y_, Y_c = generator.generator()
    pred_y = forward.forward(x, REGULARIZER)

    global_step = tf.Variable(0, trainable=False)
    learning_rate = tf.train.exponential_decay(
        LEARNING_RATE_BASE,
        global_step,
        300/BATCH_SIZE,
        LEARNING_RATE_DECAY,
        staircase=True)

    # Define the loss function (mean squared error)
    loss_mse = tf.reduce_mean(tf.square(pred_y-y_))
    # Loss function with regularization
    loss_total = loss_mse + tf.add_n(tf.get_collection('losses'))

    # Define the backpropagation method, including regularization.
    # Note: global_step is not passed to minimize(), so it is never incremented and the
    # learning rate stays at LEARNING_RATE_BASE; pass global_step=global_step to enable the decay.
    train_step = tf.train.AdamOptimizer(learning_rate).minimize(loss_total)

    with tf.Session() as sess:
        init_op = tf.global_variables_initializer()
        sess.run(init_op)
        for i in range(STEPS):
            start = (i*BATCH_SIZE) % 300
            end = start + BATCH_SIZE
            sess.run(train_step, feed_dict={x: X[start:end], y_: Y_[start:end]})
            if i % 200 == 0:
                loss_v = sess.run(loss_total, feed_dict={x: X, y_: Y_})
                print("After %d steps, loss_v is %f:" % (i, loss_v))

        # Build a grid over the plotting region, using the step size as the resolution
        # xx, yy = np.mgrid[start:stop:step, start:stop:step]
        # xx, yy = np.mgrid[-3:3:0.1, -3:3:0.1]
        # Collect all grid points in the region
        # grid = np.c_[xx.ravel(), yy.ravel()]
        # probs = sess.run(pred_y, feed_dict={x: grid})
        # probs = probs.reshape(xx.shape)
        # plt.scatter(X[:, 0], X[:, 1], c=np.squeeze(Y_c))
        # # plt.contour(xx, yy, probs, levels=[.5])
        # plt.show()

# After 39800 steps, loss_v is 0.064519:

if __name__ == '__main__':
    backward()

get_weight() sets up the parameter w. Its shape argument gives the shape of w, regularizer is the regularization weight, and the return value is w. Inside the function, tf.Variable() gives w its initial value, and tf.add_to_collection() adds the regularization loss of w to the collection named losses, which is later summed into the total loss.

Note: the magnitude of the regularization parameter has a large effect on the training process.
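To see why the magnitude matters, it helps to compute the penalty by hand. In TensorFlow 1.x, tf.contrib.layers.l2_regularizer(scale)(W) evaluates to scale * sum(W ** 2) / 2. The NumPy sketch below assumes randomly drawn weights and made-up predictions (all values are hypothetical) and compares the penalty with the data loss for three regularization weights:

```python
import numpy as np

def l2_penalty(W, scale):
    """L2 penalty for one weight matrix: scale * sum(W ** 2) / 2."""
    return scale * np.sum(np.square(W)) / 2.0

rng = np.random.RandomState(0)  # arbitrary seed for this sketch
weights = [rng.randn(2, 11), rng.randn(11, 1)]  # same shapes as W1 and W2

# Hypothetical predictions and labels, used only to get a data loss to compare with.
pred_y = np.array([[0.9], [0.2], [0.7]])
y_true = np.array([[1.0], [0.0], [1.0]])
loss_mse = np.mean(np.square(pred_y - y_true))

for scale in (0.001, 0.01, 0.1):
    penalty = sum(l2_penalty(W, scale) for W in weights)  # mirrors tf.add_n over 'losses'
    print(scale, loss_mse, penalty, loss_mse + penalty)
```

The penalty grows linearly with the regularization weight, so a large value can dominate the total loss and push the weights toward zero, while a very small value leaves the data loss almost unregularized.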